The Mellanox SX1036 is a 1U managed Ethernet switch that provides up to 2.88Tb/s of non-blocking throughput via 36 40Gb/s QSFP ports, which can be broken out to provide up to 64 10Gb/s ports or a mixture of 40Gb/s and 10Gb/s connectivity. As the backbone of the StorageReview Enterprise Lab networking infrastructure, the SX1036 provides the connectivity required to test the latest high-speed, Ethernet-based network storage appliances. On the compute side, we’re pairing the SX1036 with Mellanox’s dual-port 10GbE and 40GbE ConnectX-3 EN PCIe 3.0 Ethernet adapters, connected with Mellanox QSFP and SFP+ cables, giving our lab a complete end-to-end Mellanox interconnect solution.
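The 2.88Tb/s figure follows directly from the port count. The short sketch below reproduces it, assuming (as is the usual convention for switch capacity specs) that the quoted number counts both directions of each full-duplex link; the function name is ours, purely for illustration.

```python
# Sketch: reproduce the SX1036 switching-capacity figure from its port count.
# Assumption: the quoted capacity counts both directions of each
# full-duplex link, the usual convention for switch specs.

FULL_DUPLEX = 2  # transmit + receive


def switching_capacity_tbps(ports: int, speed_gbps: int, directions: int = FULL_DUPLEX) -> float:
    """Aggregate capacity in Tb/s for `ports` links running at `speed_gbps`."""
    return ports * speed_gbps * directions / 1000


if __name__ == "__main__":
    # All 36 QSFP ports in 40GbE mode
    print(f"36 x 40GbE: {switching_capacity_tbps(36, 40):.2f} Tb/s")
    # The maximum 64 breakout ports at 10Gb/s
    print(f"64 x 10GbE: {switching_capacity_tbps(64, 10):.2f} Tb/s")
```

Running it prints 2.88 Tb/s for 36 x 40GbE, matching the quoted figure, and 1.28 Tb/s for the 64 x 10GbE breakout ports on their own.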

The Mellanox SX1036 is a top-of-rack access switch that can provide 10Gb/s connectivity to servers and 40Gb/s for uplinks. It is offered in two form factors: the standard-depth MSX1036B-1SFR, with back-to-front airflow, and the short-depth MSX1036B-1BRR, with front-to-back airflow. The two models are otherwise functionally identical; our lab is equipped with the standard-depth model.

The SX1036 provides 36 40GigE ports, or up to 64 10GigE ports with the use of breakout cables. Mellanox specifies 230ns latency when operating at 40GigE and 250ns at 10GigE.

Mellanox SX1036 Layer 2 Features:

- 48K L2 Forwarding Entries

- 802.1w Rapid Spanning Tree Protocol

- 802.1Qbb Priority Flow Control (PFC)

- Static MAC

- 802.3x Flow Control

- VLAN 802.1Q (4K)

- IGMP v1, v2

- Access Control Lists (L2-L4)

- 802.3ad Link Aggregation/LACP (16 ports/channel, 32 groups per system)

- 802.1Qaz Enhanced Transmission Selection (ETS)

- Jumbo Frames (9216 Bytes)
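Before cabling up a lab, it can help to check a planned configuration against the limits listed above. The sketch below is hypothetical: `SwitchPlan` and `check_plan` are illustrative names, not part of MLNX-OS, and it encodes only the LAG, VLAN and jumbo-frame limits from the feature list plus two general rules we are assuming (802.1Q VLAN IDs 0 and 4095 are reserved, and a physical port can belong to only one LAG).

```python
# Hypothetical pre-deployment check of a lab L2 plan against the SX1036
# limits listed above. SwitchPlan and check_plan are illustrative names,
# not part of MLNX-OS.
from dataclasses import dataclass, field

MAX_LAG_GROUPS = 32       # LAG groups per system
MAX_PORTS_PER_LAG = 16    # member ports per channel
MAX_MTU_BYTES = 9216      # jumbo frame limit
VALID_VLAN_IDS = range(1, 4095)  # 12-bit 802.1Q ID; 0 and 4095 are reserved


@dataclass
class SwitchPlan:
    lags: dict[str, list[int]] = field(default_factory=dict)  # LAG name -> member ports
    vlans: list[int] = field(default_factory=list)
    mtu: int = 1500


def check_plan(plan: SwitchPlan) -> list[str]:
    """Return human-readable limit violations (empty list if none)."""
    problems: list[str] = []
    if len(plan.lags) > MAX_LAG_GROUPS:
        problems.append(f"{len(plan.lags)} LAG groups exceeds {MAX_LAG_GROUPS}")
    seen: dict[int, str] = {}  # port -> first LAG that claimed it
    for name, members in plan.lags.items():
        if len(members) > MAX_PORTS_PER_LAG:
            problems.append(f"LAG {name}: {len(members)} members exceeds {MAX_PORTS_PER_LAG}")
        for port in members:
            owner = seen.setdefault(port, name)
            if owner != name:
                problems.append(f"port {port} is in both {owner} and {name}")
    for vid in plan.vlans:
        if vid not in VALID_VLAN_IDS:
            problems.append(f"VLAN {vid} is outside 1-4094")
    if plan.mtu > MAX_MTU_BYTES:
        problems.append(f"MTU {plan.mtu} exceeds {MAX_MTU_BYTES}")
    return problems


if __name__ == "__main__":
    plan = SwitchPlan(lags={"storage": [1, 2], "uplink": list(range(3, 21))},
                      vlans=[10, 4095], mtu=9000)
    for problem in check_plan(plan):
        print(problem)
```

For the example plan, the script reports the 18-member "uplink" LAG and the reserved VLAN 4095, while the 9000-byte MTU passes.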

Supported Modules and Cables

- QSFP 40GbE module

- QSA (QSFP to SFP+ adapter)

- QSFP to QSFP cable (1m or 3m)

- QSFP splitter cables 40GbE to 4x10GbE

ConnectX-3 EN Ethernet Adapters and QSFP/SFP+ Cabling

We’ve paired the SX1036 with Mellanox’s latest ConnectX-3 EN PCIe 3.0 Ethernet adapters, which can be deployed with the SX1036 as part of an end-to-end 40Gb/s solution to maximize throughput, or to offer mixed 10Gb/s connectivity, for example to servers with 10Gb/s NICs. We have both dual-port 10Gb/s and dual-port 40Gb/s cards to test with the SX1036.

The ConnectX-3 EN platform offers a variety of technologies to optimize network performance in virtualized environments, including Single Root I/O Virtualization (SR-IOV), address translation and protection, multiple queues per virtual machine, and VMware NetQueue support. It also provides CPU offloads for RDMA over Converged Ethernet (RoCE) and stateless TCP/UDP/IP processing, as well as intelligent interrupt coalescence.

To round out the end-to-end Mellanox networking configuration, we use a variety of Mellanox QSFP and SFP+ cabling in our lab. To connect 10GbE compute and networked storage systems to the SX1036, we have several ports configured in fan-out mode, which converts a single 40GbE port into as many as four 10GbE connections. With Mellanox QSFP splitter cables, a single cable lets us connect one switch port to two or four devices.

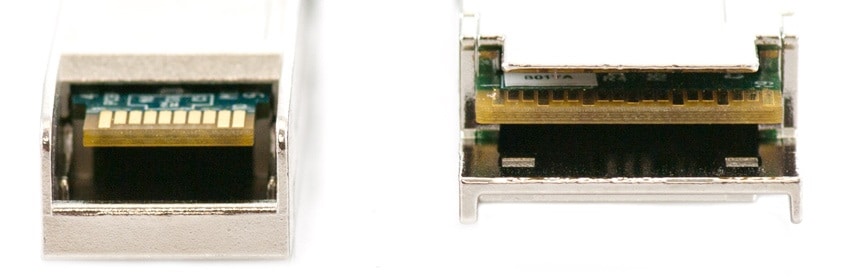

Shown above are SFP+ (Enhanced Small Form-Factor Pluggable) and QSFP (Quad Small Form-Factor Pluggable) connectors, which we use to connect to 10GbE and 40GbE equipment, respectively.

Design and Build

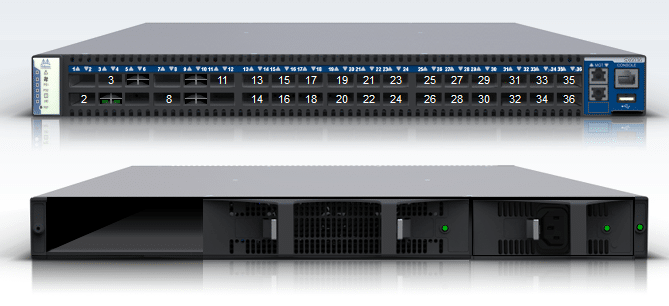

The connector side of the switch has 36 QSFP ports arranged in two rows of 18, with a corresponding status LED above each port. The same front panel carries the system status LEDs and management connections: the RJ-45 ports labeled “MGT” provide access for remote management, and a USB interface is available for updating MLNX-OS.

The power side of the switch includes one factory-installed hot-swap PSU on the left, a blank cover on the right for an optional second PSU for redundancy, and a hot-swap fan tray. Each PSU has a two-color status LED. An I2C connector on the left of the rear panel is provided for debugging and troubleshooting; it can also be used to install firmware should the switch’s firmware become damaged or otherwise fail to upgrade normally.

Installation and Initial Setup

This switch can be installed in any standard 19-inch rack with a depth of 40cm to 80cm. Mellanox offers two installation kits for the SX1036: a long kit and a short kit. Both the standard-depth (MSX1036B-1SFR) and short-depth (MSX1036B-1BRR) switches can be mounted using the long rail kit, while the short kit works only with the short-depth switch. At about 21 pounds, the SX1036 is far from the heaviest piece of gear in the rack, but installing it single-handedly still takes careful coordination.

Each of the 36 QSFP ports is capable of 40Gb/s, or 10Gb/s with a QSA (QSFP to SFP+) adapter or Mellanox QSFP to SFP+ cables. Certain ports can be split with a QSFP 1x4 breakout cable into four 10Gb/s lanes (four SFP+ connectors); other ports can be split into two 10Gb/s ports using lanes 1 and 2 only. With breakout cables, you can achieve a total of 64 10Gb/s ports.
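Tracking which ports are fanned out gets fiddly in a busy lab, so a small planner can tally a proposed layout. This is a hypothetical sketch: the mode names and `summarize()` are ours, it assumes the 64-port ceiling applies to all 10Gb/s interfaces (including QSA ports), and it does not model which physical ports support each split mode or any port-pairing restrictions, so Mellanox's documentation remains the authority on the real port map.

```python
# Hypothetical breakout planner for the SX1036's 36 QSFP ports. The mode
# names and summarize() are illustrative. It does NOT model which physical
# ports support each split mode or any port-pairing restrictions.
from collections import Counter

TOTAL_QSFP_PORTS = 36
MAX_10G_LINKS = 64  # stated maximum number of 10Gb/s ports (assumed to cover QSA too)

# 10Gb/s links produced by one QSFP port in each mode
LINKS_10G = {
    "40g": 0,     # native 40Gb/s
    "qsa": 1,     # QSA adapter or QSFP-to-SFP+ cable
    "2x10g": 2,   # split using lanes 1 and 2 only
    "4x10g": 4,   # QSFP 1x4 breakout cable
}


def summarize(plan: dict[int, str]) -> dict[str, int]:
    """Validate a {port: mode} plan and count the resulting links."""
    for port, mode in plan.items():
        if not 1 <= port <= TOTAL_QSFP_PORTS:
            raise ValueError(f"port {port} does not exist")
        if mode not in LINKS_10G:
            raise ValueError(f"unknown mode {mode!r} on port {port}")
    modes = Counter(plan.values())
    links_10g = sum(LINKS_10G[mode] * count for mode, count in modes.items())
    if links_10g > MAX_10G_LINKS:
        raise ValueError(f"{links_10g} 10Gb/s links exceeds {MAX_10G_LINKS}")
    return {
        "40g_ports": modes["40g"],
        "10g_links": links_10g,
        "unused_ports": TOTAL_QSFP_PORTS - len(plan),
    }


if __name__ == "__main__":
    # Example plan: four ports fanned out to 10GbE storage, two 40GbE uplinks
    lab = {1: "4x10g", 2: "4x10g", 3: "4x10g", 4: "4x10g", 35: "40g", 36: "40g"}
    print(summarize(lab))
```

The example prints `{'40g_ports': 2, '10g_links': 16, 'unused_ports': 30}`.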

The first time you configure the SX1036, you must connect the host PC’s DB9 serial port to the switch’s RJ-45 console port using the supplied harness, then access the console with terminal emulation software. Once the administrator establishes basic settings via the console, MLNX-OS remote and web management functions can be enabled.

Cooling

There are two possible airflow directions, depending on the SX1036 model: the standard-depth SX1036 flows from back to front, while the short-depth version uses front-to-back airflow. The SX1036 can continue operating with one of the three fans in its fan module inoperable, as long as the ambient temperature remains below 45°C (113°F).

To extract the fan module, push both latches toward each other while pulling the module up and out of the switch. If you remove the fan module while the switch is powered on, its fan status indicator turns off once the module is unseated.

To install a fan module, slide it into the opening until you feel slight resistance, then continue pressing until it is fully seated. With the switch powered on, the corresponding fan status indicator should then display green.

Power

According to Mellanox, typical power consumption for the SX1036 is 86W, including 1.3W per port, and maximum power consumption is 100W. The switch accepts input voltages from 100 to 240 VAC. It ships with one power supply, but a second PSU can be added to provide hot-swap redundant power.
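For lab power planning, the quoted figures can be turned into a rough budget. This is back-of-the-envelope arithmetic under our own assumptions, not Mellanox's breakdown: we assume the 1.3W-per-port figure applies to all 36 ports within the 86W typical number, and that the switch runs around the clock for a year.

```python
# Back-of-the-envelope power arithmetic from the Mellanox figures above.
# Assumptions (not from Mellanox): the 1.3W-per-port figure applies to all
# 36 ports in the typical number, and the switch runs 24x7 for a year.

TYPICAL_W = 86.0   # typical draw
MAX_W = 100.0      # maximum draw
PER_PORT_W = 1.3   # per-port share included in the typical figure
PORTS = 36
HOURS_PER_YEAR = 24 * 365


def annual_kwh(watts: float, hours: float = HOURS_PER_YEAR) -> float:
    """Energy in kWh for a constant draw of `watts` over `hours`."""
    return watts * hours / 1000


port_share_w = PORTS * PER_PORT_W        # portion attributed to ports
remaining_w = TYPICAL_W - port_share_w   # remainder not attributed to ports

if __name__ == "__main__":
    print(f"Ports: {port_share_w:.1f} W, remainder: {remaining_w:.1f} W")
    print(f"Per year: {annual_kwh(TYPICAL_W):.0f} kWh typical, {annual_kwh(MAX_W):.0f} kWh max")
```

Under those assumptions, the ports account for about 46.8W of the typical draw, leaving roughly 39.2W for the rest of the switch, and a year of continuous operation comes to about 753kWh at typical draw (876kWh at maximum).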

With two power supplies installed, either PSU may be extracted while the switch is operational. To extract a PSU, you remove the power cord, and then push the latch release while pulling outward on the PSU handle. To install a PSU, slide the unit into the opening until you begin to feel slight resistance, and then continue pressing until the PSU is completely seated. When the PSU is properly installed, the latch will snap into place and you can attach the power supply cord.

Management

The SX1036 supports management through Mellanox’s Unified Fabric Manager (UFM) and MLNX-OS. MLNX-OS, Mellanox’s standard management software for its SwitchX-based managed switch systems and ConnectX-3 adapter family, is included with the SX1036.

MLNX-OS provides CLI, WebUI, SNMP, and XML Gateway interfaces. The XML Gateway offers an XML protocol for getting and setting management information over HTTP/HTTPS or SSH. MLNX-OS also lets the user define and manage logging, e-mail alerts, and security capabilities including RADIUS, TACACS+, AAA, and LDAP. The web interface displays port status with event and error logs, graphical CPU load, graphical fan speed over time, power supply voltage alarms, and graphical internal temperature with alarm notification.

Conclusion

The Mellanox SX1036 10/40Gb Ethernet Switch is a core component of the StorageReview Enterprise Lab, pairing with Mellanox interface cards and cabling to give us a complete high-speed Ethernet fabric. With most enterprise storage arrays integrating multiple 10GbE interconnects, the Mellanox products give us the backbone we need to properly review and stress both hard-drive and flash-based arrays. For higher-end array testing, we also have a complete Mellanox InfiniBand solution in the lab. As interconnects get faster, storage becomes the new performance bottleneck. Stay tuned as we try to balance the equation with an expanding set of arrays and servers that aim to stress the fabric.
