While many consumers are just becoming aware of solid state drives, the origins of SSDs go back nearly 60 years. The SSD was born in the 1950s as engineers were working to advance storage systems. Two technologies, Charged Capacitor Read Only Storage (CCROS) and Core memory, were developed around the same time and served as the foundation for the SSDs that we know today.

IBM began in the 1920s with a focus on “Business Machines.” In those days this meant mechanical machines, and more precisely, machines with motor assist. This is how electric typewriters, repeating calculators, printers, and sorting machines came about. These advances revolutionized business in America and throughout the world, especially in banking.

It's important to understand this legacy as it relates to the origins of Solid State Drives (SSDs). IBM took a very mechanical engineering approach to solving problems. They first looked at how to solve the problem with a mechanical “machine,” and then considered how to make that machine work better and faster by adding motors derived from factory assembly line systems and electronic tubes from the radio and television industries.

They took their hybrid electro/mechanical machine solutions and created a business machine market that allowed the company to grow swiftly through the 1940s.

Before long, however, they found that their machines needed to be more flexible. They could do certain things well, like a long series of single additions or single subtractions, but that was it. They could not do adds, subtracts, multiplies, divides, or compares in any particular desired combination. It was then that IBM realized they needed to make their machines programmable, and that this would be the next big breakthrough.

Hand-in-hand with this was a requirement for memory – both temporary and permanent. The easiest solution was to leverage what they had been using all along – paper. They quickly devised ways to use punched paper cards and punched paper tape for input and output storage, along with using ink printers to output results.

For temporary storage they developed memory arrays from rows and columns of discrete capacitors soldered to boards and coupled to the tube-based repeating calculator. These paper-memory methods were used on many early digital systems from the late 1940s up until the early 1980s.

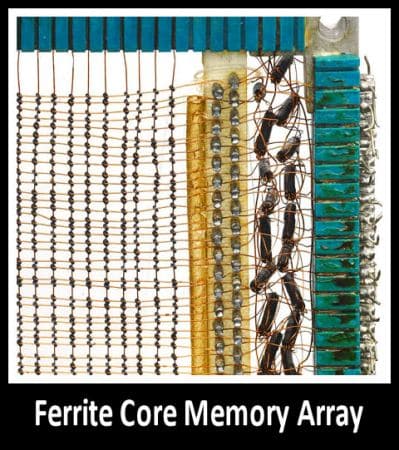

Because of these shortcomings, IBM needed to develop new memory alternatives. The main methods that took root were based on magnetism. It had been known since the mid-1800s that certain earth ferrite materials could be magnetized and demagnetized using an electromagnet. After much work, IBM exploited this method and developed a clever series of “paper-equivalent” systems: magnetic strip cards, magnetic tape, and magnetic disk. Interestingly, these were still mechanical, motor-assisted, tube machines used “on the periphery” of the main business machine. Initially, the electromagnet was a fixed head for all of these devices. Later on, the heads on disks were made movable, as they are today.

With this advancement, computing systems could for the first time retain their software programs when the machine was completely powered off, and could permanently store program results without using paper at all. Soon paper was phased out for everything other than reports. The focus could now be on enhancing the computing system and, in particular, its local nonvolatile memory.

In the mid-1950s, as the transistor was emerging from IBM research, IBM developed their first bulk solid state nonvolatile memory, called Charged Capacitor Read Only Storage (CCROS). It was the first true SSD and was the predecessor to today’s EPROMs, EEPROMs, UVPROMs, NVPROMs, and flash memory devices.

Core memory technology advanced considerably through extensive use by NASA in early space programs, owing to its superior static and environmental stability. It is immune to radiation, which was a major concern for use in space. All of the memory used in the Apollo mission computers was based on IBM core memory.

Core memory was also used up until 1990 in most mission-critical defense systems because of its reliability and data durability. After 1990 or so, capacitive-based nonvolatile memory technology had advanced to the point where it could retain its stored data virtually indefinitely, matching the advantage long provided by Core memory.

Today's SSDs offer vastly more storage and speed, and they continue to evolve as advancements in memory, controllers, and other core components come to market.
