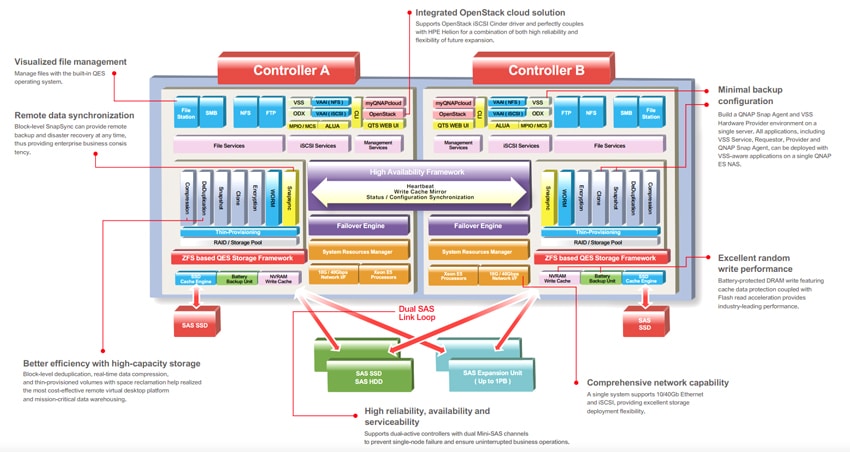

The QNAP ES1640dc is a dual-controller NAS positioned as what the company calls “real business-class” cloud computing data storage. To up its game, QNAP is going HA, bringing better hardware and software to this device along with deduplication and data compression. Each node inside the ES1640dc comes equipped with an Intel Xeon 6-core E5-2420 v2 processor, 32GB of DDR3 RAM, and an mSATA SSD for NVRAM cache, and comes preloaded with the FreeBSD-backed QNAP QES operating system. The new QES OS supports ZFS, which can lead to better data protection and higher storage capacities. The ES1640dc offers 16 3.5″ drive bays with SAS 12Gb/s capabilities, supporting the latest in high-performance SAS SSDs and high-capacity HDDs.

The new ES1640dc centers around high availability. As mentioned above, the new unit has dual active controllers with failover, so if one is lost, the other takes over immediately, resulting in zero downtime. Storage can also be configured to operate in an active/active configuration, where both controllers are put to use hosting their own allocated storage. Because the controllers are field replaceable, a failed controller can be swapped quickly without shutting down the NAS. Following the high availability theme, the QNAP ES1640dc also offers battery-backed NVRAM cache that writes data to an mSATA SSD in the event of a power failure. The NVRAM cache is also mirrored between both controllers to avoid data loss if one node goes offline.

QNAP took QTS, the NAS OS it has used for all of its units, and made changes to it for this new enterprise system. The new operating system, QES, keeps the same architecture as QTS, shortening the learning curve. QES builds off of FreeBSD, offering support for ZFS for better data protection. Along with ZFS come deduplication and in-line data compression, which QNAP states will maximize VDI storage potential. QES caters more to the needs of the enterprise, and we take a more in-depth look in our management section below.

The QNAP ES1640dc NAS is available now and has a street price of roughly $9,699 USD. For this review we will be looking at the ES1640dc v1. A v2 is available now, but it was not at the time of the review. The v2 supports SAS 12Gb/s drives, has up to four 10GbE SFP+ ports per controller, and its expansion slots come preinstalled with Mini-SAS and one LAN-10G2T-X550.

QNAP ES1640dc NAS specifications:

- Form Factor: 3U

- CPU: Intel Xeon 6-core Processor E5-2420 v2 (15M Cache, 2.20GHz)

- Memory: 32GB/64GB (DDR3) for main memory DIMM and 16GB write cache DIMM per controller

- Cache: mSATA SSD dedicated to NVRAM, SATA signaling

- Drive bays: 16

- Max Capacity: 160TB (10TB x 16)

- Compatible Drive Types:

- 3.5” SAS 6Gb/s HDD

- 2.5” SAS 6Gb/s HDD

- 2.5” SAS 6Gb/s SSD

- External Ports:

- 2 x USB 3.0/2.0 port

- 1 x 10/100/1000 Mbps LAN Port/controller

- 2 x RJ45 (LAN-10G2T-X550)/controller

- PCIe slots

- PCIe Slot x8 (Gen2 x8): reserved for 40GbE or 10GbE LAN card

- PCIe Slot x4 (Gen2 x4): reserved for dual path Mini-SAS

- Power

- Consumption:

- Sleep mode: 314.86W

- Operation: 501.40W

- Supply: 770W

- Fan: Hot-swappable fan module (60x60x38mm; 16000RPM/12v/2.8A x 3)

- Dimensions

- Depth: 618mm

- Width: 446.2mm

- Height: 132mm

- Weight: 26.75kg / 58.97lb

Design and Build

The QNAP ES1640dc is a front-loading 3U unit. It accommodates up to 16 3.5” drives, with the bays taking up most of the front of the device. On the left-hand side are the power button and status indicators, and each drive tray has an indicator light that shows its status when the unit is powered on.

In keeping with the high availability theme, the ES1640dc is designed around field replaceable units (FRUs). The two controllers are at the bottom of the device and can be removed and replaced without opening the chassis. On the left-hand side of both controllers is a removable BBU, and above both is a removable PSU. The fan module is also easy to remove and replace; however, the top of the chassis has to come off to do so.

Management

QNAP has traditionally offered the same operating system across all of its devices, keeping the look and feel consistent no matter what hardware a user is interacting with. While the new QES OS forks to a new backend OS, it still has the same look and feel as existing systems running QTS. The interface is pretty easy to use, but it lacks some of the refinement offered by the large-enterprise vendors that are pushing down into the sub-$20k market segment.

While there are some overarching similarities between the old and new OS, there are also some striking differences. Aside from having different OS kernels (the old was Linux with the new being FreeBSD), the new OS uses ZFS for its file system versus Ext4. With QES there is no App Station, Virtualization Station, Container Station, or Intel Quick Assist. However, the new OS has increased its upper Snapshot and single LUN snapshot limit from 1,024 to 65,536. The move away from Virtualization Station shows a level of seriousness from QNAP for larger businesses that are more likely to favor hypervisors such as VMware or Hyper-V. For remote backup and DR, QES uses SnapSync as opposed to Snapshot Replica used by QTS.

Application Workload Analysis

In order to understand the performance characteristics of enterprise storage devices, it is essential to model the infrastructure and the application workloads found in live production environments. Our benchmarks for the QNAP ES1640dc are therefore the Microsoft SQL Server OLTP performance, MySQL OLTP performance via SysBench, and VMmark. For our database application workloads, each drive will be running 4 or more identically configured VMs.

QNAP ES1640dc Configuration as tested:

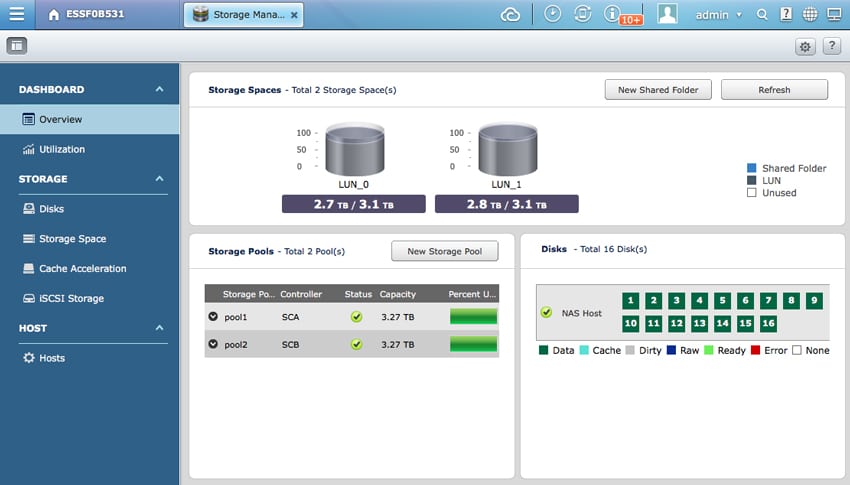

- 16 x 960GB Toshiba PX04S SAS3 SSD (2 RAID10 Pools, one per controller)

- 2 x Intel X520 SFP (one per controller)

- 2 x 3.1TB instant-allocated LUNs (one per controller, compression and deduplication disabled)

- Mellanox SX1036 as ToR switch for storage and compute on same fabric
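
The pool and LUN sizes in the configuration above can be sanity-checked with some quick arithmetic. The sketch below assumes the 16 SSDs are split evenly between the two RAID10 pools, that RAID10 leaves half of the raw capacity usable, and that the 3.1TB LUN size is in decimal terabytes; it ignores ZFS metadata and reserved space, so real usable capacity will be somewhat lower.

```python
# Rough capacity sketch for the tested layout (figures from the list above).
# Assumptions: even drive split across pools, decimal units, no ZFS overhead.
DRIVES_TOTAL = 16
DRIVE_GB = 960   # Toshiba PX04S capacity in decimal gigabytes
POOLS = 2        # one RAID10 pool per controller
LUN_TB = 3.1     # one LUN per controller


def raid10_usable_gb(drive_count: int, drive_gb: float) -> float:
    """Mirrored pairs: half of the raw capacity is usable."""
    if drive_count % 2:
        raise ValueError("RAID10 needs an even number of drives")
    return drive_count / 2 * drive_gb


drives_per_pool = DRIVES_TOTAL // POOLS
usable_gb = raid10_usable_gb(drives_per_pool, DRIVE_GB)
lun_fill = LUN_TB * 1000 / usable_gb  # fraction of the pool taken by the LUN

print(f"{drives_per_pool} drives per pool, {usable_gb:.0f}GB usable before overhead")
print(f"Each {LUN_TB}TB LUN occupies about {lun_fill:.0%} of its pool")
```

Under these assumptions each pool offers roughly 3.84TB before overhead, so a 3.1TB LUN per controller takes up most of it.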

StorageReview’s Microsoft SQL Server OLTP testing protocol employs the current draft of the Transaction Processing Performance Council’s Benchmark C (TPC-C), an online transaction processing benchmark that simulates the activities found in complex application environments. The TPC-C benchmark comes closer than synthetic performance benchmarks to gauging the performance strengths and bottlenecks of storage infrastructure in database environments. Each instance of our SQL Server VM for this review uses a 333GB (1,500 scale) SQL Server database and measures the transactional performance and latency under a load of 15,000 virtual users.

SQL Server Performance

Each SQL Server VM is configured with two vDisks: 100GB volume for boot and a 500GB volume for the database and log files. From a system resource perspective, we configured each VM with 16 vCPUs, 64GB of DRAM and leveraged the LSI Logic SAS SCSI controller. While our Sysbench workloads tested previously saturated the platform in both storage I/O and capacity, the SQL test is looking for latency performance.

This test uses SQL Server 2014 running on Windows Server 2012 R2 guest VMs, being stressed by Dell’s Benchmark Factory for Databases. While our traditional usage of this benchmark has been to test large 3,000-scale databases on local or shared storage, in this iteration we focus on spreading out four 1,500-scale databases evenly across the ES1640dc (two VMs per controller).

SQL Server Testing Configuration (per VM)

- Windows Server 2012 R2

- Storage Footprint: 600GB allocated, 500GB used

- SQL Server 2014

- Database Size: 1,500 scale

- Virtual Client Load: 15,000

- RAM Buffer: 48GB

- Test Length: 3 hours

- 2.5 hours preconditioning

- 30 minutes sample period

SQL Server OLTP Benchmark Factory LoadGen Equipment

- Dell PowerEdge R730 Virtualized SQL 4-node Cluster

- Eight Intel E5-2690 v3 CPUs for 249GHz in cluster (Two per node, 2.6GHz, 12-cores, 30MB Cache)

- 1TB RAM (256GB per node, 16GB x 16 DDR4, 128GB per CPU)

- 4 x Mellanox ConnectX-3 InfiniBand Adapter (vSwitch for vMotion and VM network)

- 4 x Emulex 16Gb dual-port FC HBA

- 4 x Emulex 10GbE dual-port NIC

- VMware ESXi vSphere 6.0 / Enterprise Plus 8-CPU
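
The cluster totals in the list above follow directly from the per-node figures. As a quick check, the sketch below multiplies them out; it assumes the aggregate clock figure is simply cores times base clock, which is how the 249GHz number appears to be derived.

```python
# Load-gen cluster totals, derived from the per-node figures in the list above.
NODES = 4
CPUS_PER_NODE = 2
CORES_PER_CPU = 12
BASE_GHZ = 2.6          # E5-2690 v3 base clock
RAM_PER_NODE_GB = 256

total_cpus = NODES * CPUS_PER_NODE
total_cores = total_cpus * CORES_PER_CPU
aggregate_ghz = total_cores * BASE_GHZ   # assumed: cores x base clock
total_ram_gb = NODES * RAM_PER_NODE_GB

print(f"{total_cpus} CPUs, {total_cores} cores, {aggregate_ghz:.1f}GHz aggregate")
print(f"{total_ram_gb}GB RAM across the cluster")
```

The product comes to 249.6GHz, which the list rounds down to 249GHz, and the RAM total is 1,024GB, or 1TB.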

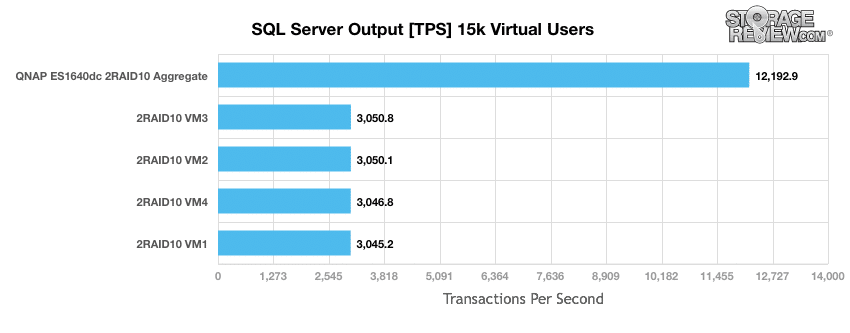

When looking at SQL Server Output, we were testing four identical VMs that gave us TPS scores ranging from 3,045.2 to 3,050.8 with an aggregate of 12,192.9 TPS.
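
To put the spread between VMs in perspective, the aggregate can be broken back down into a per-VM mean. The sketch below uses only the three figures quoted above (the low, the high, and the aggregate); the individual results for the two middle VMs are not listed in the text, so they are not reconstructed here.

```python
# Per-VM mean and spread from the SQL Server TPS figures quoted above.
VM_COUNT = 4
AGGREGATE_TPS = 12_192.9
LOW_TPS, HIGH_TPS = 3_045.2, 3_050.8

mean_tps = AGGREGATE_TPS / VM_COUNT
# Gap between the slowest and fastest VM, as a percentage of the mean.
spread_pct = (HIGH_TPS - LOW_TPS) / mean_tps * 100

print(f"Mean per VM: {mean_tps:.1f} TPS")
print(f"Spread between slowest and fastest VM: {spread_pct:.2f}% of the mean")
```

A spread of well under one percent suggests the load was balanced evenly across the two controllers.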

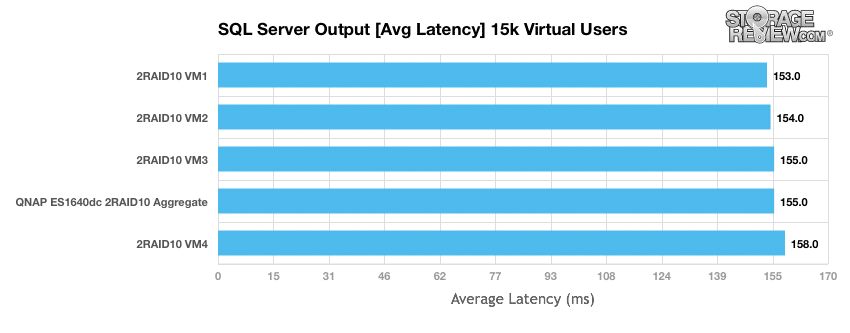

Looking at average latency, the four VMs ranged from 153ms to 158ms with an average of 155ms. While latency was consistent across all four VMs spread across the two controllers, the average was higher than that of other all-flash enterprise solutions we’ve tested, albeit lower than that of solutions running in-line data reduction.

Sysbench Performance

Each Sysbench VM is configured with three vDisks, one for boot (~92GB), one with the pre-built database (~447GB) and the third for the database under test (270GB). In previous tests, we allocated 400GB to the database volume (253GB database size); however, in order to pack additional VMs onto the ES1640dc, we shrunk that allocation down to make more room. From a system resource perspective, we configured each VM with 16 vCPUs, 60GB of DRAM and leveraged the LSI Logic SAS SCSI controller. Load gen systems are four Dell R730 servers with VMs evenly spread across the cluster.

Dell PowerEdge R730 Virtualized MySQL 4 node Cluster

- Eight Intel E5-2690 v3 CPUs for 249GHz in cluster (Two per node, 2.6GHz, 12-cores, 30MB Cache)

- 1TB RAM (256GB per node, 16GB x 16 DDR4, 128GB per CPU)

- 4 x Mellanox ConnectX-3 InfiniBand Adapter (vSwitch for vMotion and VM network)

- 4 x Emulex 16Gb dual-port FC HBA

- 4 x Emulex 10GbE dual-port NIC

- VMware ESXi vSphere 6.0 / Enterprise Plus 8-CPU

Sysbench Testing Configuration (per VM)

- CentOS 6.3 64-bit

- Storage Footprint: 1TB, 800GB used

- Percona XtraDB 5.5.30-rel30.1

- Database Tables: 100

- Database Size: 10,000,000

- Database Threads: 32

- RAM Buffer: 24GB

- Test Length: 3 hours

- 2 hours preconditioning 32 threads

- 1 hour 32 threads

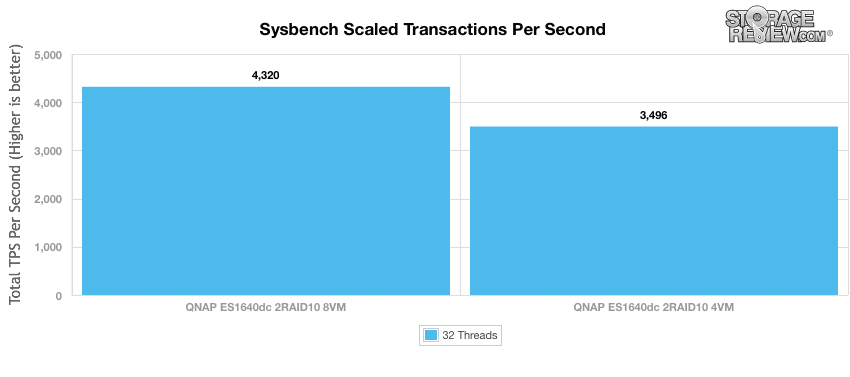

At 4 VMs we saw an aggregate score of 3,496 TPS. Doubling that to 8 VMs, we saw the aggregate score climb to 4,320 TPS. While this performance range isn’t the fastest we’ve measured recently, it stacks up quite well for the price segment the ES1640dc competes in.

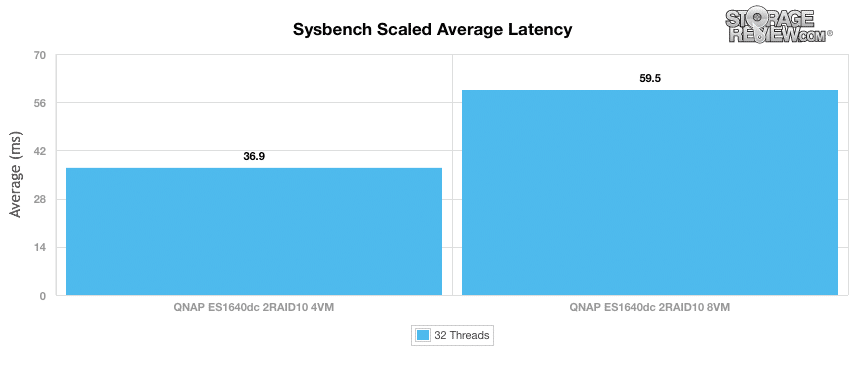

With average latency we once again looked at 4 and 8 VMs. The 4VM setup gave us an average latency of 36.9ms and doubling that to 8VMs only raised the latency to an average of 59.5ms.
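
The scaling from 4 to 8 VMs is easier to judge when throughput and latency are put side by side. The sketch below works only from the aggregate TPS and average latency figures reported in this section; the per-VM numbers are simple divisions of the aggregate, not separately measured values.

```python
# Sysbench scaling from 4 to 8 VMs, using the aggregate figures reported above.
RESULTS = {
    4: {"tps": 3_496, "latency_ms": 36.9},
    8: {"tps": 4_320, "latency_ms": 59.5},
}

# Derived per-VM throughput (aggregate divided by VM count).
per_vm_tps = {vms: r["tps"] / vms for vms, r in RESULTS.items()}
tps_gain = RESULTS[8]["tps"] / RESULTS[4]["tps"] - 1
latency_gain = RESULTS[8]["latency_ms"] / RESULTS[4]["latency_ms"] - 1

for vms, tps in per_vm_tps.items():
    print(f"{vms} VMs: {tps:.0f} TPS per VM")
print(f"Aggregate TPS gain from doubling the VM count: {tps_gain:.1%}")
print(f"Average latency increase: {latency_gain:.1%}")
```

Doubling the VM count adds roughly a quarter more aggregate throughput while average latency rises by about 60 percent, which indicates the array is approaching saturation at 8 VMs.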

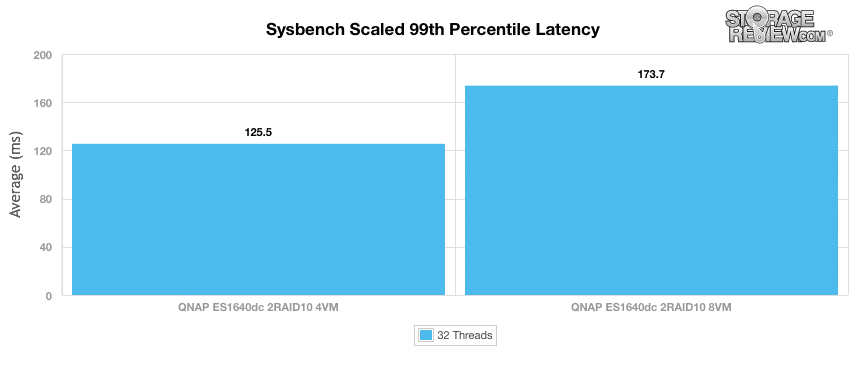

In our worst-case MySQL latency scenario (99th percentile latency), the ES1640dc came in slightly higher at the 4VM level than other all-flash systems we have seen recently, but not excessively so.

VMmark Performance Analysis

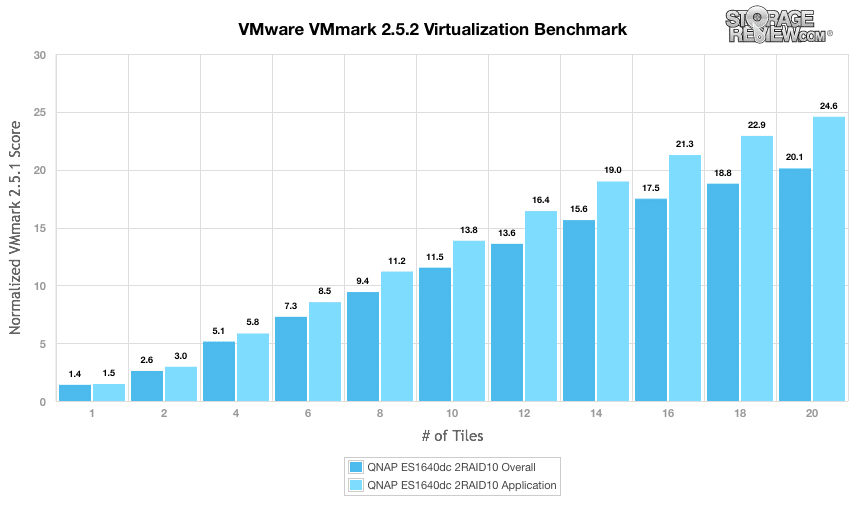

As with all of our Application Performance Analysis, we attempt to show how products perform in a live production environment compared to the company’s claims on performance. We understand the importance of evaluating storage as a component of larger systems, most importantly how responsive storage is when interacting with key enterprise applications. In this test we use the VMmark virtualization benchmark by VMware in a multi-server environment.

VMmark by its very design is a highly resource-intensive benchmark, with a broad mix of VM-based application workloads stressing storage, network and compute activity. When it comes to testing virtualization performance, there is almost no better benchmark for it, since VMmark looks at so many facets, covering storage I/O, CPU, and even network performance in VMware environments.

The QNAP ES1640dc performed quite well for an SMB-geared NAS in our VMmark 2.5.2 benchmark, hitting 20 tiles. Considering that many $100k enterprise platforms had trouble hitting 10 tiles a few years ago, an $11k NAS equipped with read-centric flash hitting 20 tiles is impressive.
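
The price-to-tile comparison in the paragraph above can be made explicit with a rough calculation. The sketch below assumes the "100k" and "11k" figures are US dollars and treats 10 tiles as the best case for the older platforms; both are readings of the text rather than measured data, so the result is only an order-of-magnitude illustration.

```python
# Rough cost-per-tile comparison using the round figures from the text above.
# Assumptions: prices are in USD; 10 tiles is a best case for the older platforms.
PLATFORMS = {
    "older enterprise platform": {"price_usd": 100_000, "tiles": 10},
    "QNAP ES1640dc": {"price_usd": 11_000, "tiles": 20},
}

cost_per_tile = {
    name: p["price_usd"] / p["tiles"] for name, p in PLATFORMS.items()
}
ratio = cost_per_tile["older enterprise platform"] / cost_per_tile["QNAP ES1640dc"]

for name, cost in cost_per_tile.items():
    print(f"{name}: ${cost:,.0f} per tile")
print(f"Roughly {ratio:.0f}x lower cost per tile")
```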

Conclusion

QNAP has its eye on the SMB/SME markets as it introduces the ES1640dc NAS. The NAS has 16 bays (160TB max capacity before expansion units are added) and comes with dual controllers, battery-backed NVRAM controller cache that flushes to an mSATA SSD, and deduplication and compression capabilities. Two of the main focuses of this NAS are its high availability through its active/active controllers and its new enterprise operating system, QES, which brings with it rich enterprise features such as data reduction, snapshots, and end-to-end data integrity.

Looking at performance, we tested the ES1640dc with our application workload analysis of SQL Server, SysBench, and VMmark. In our SQL Server TPC-C benchmark, the ES1640dc had an aggregate score of 12,192.9 TPS and an average latency of 155ms. In our SysBench OLTP benchmark, the NAS hit an aggregate of 4,320 TPS with 8 VMs at an average latency of 59.5ms. In a virtualization-rich environment tested with VMmark, the QNAP was able to run a 20-tile load, which is very impressive given the price point of the system. Overall, for the $11k starting point, the QNAP ES1640dc has a lot to offer in the entry-enterprise segment, where budgets are tight but demands for rich feature sets are high.

Pros

- Dual controllers that can run in active/passive or active/active configurations

- Features data-reduction and snapshot capabilities

- Strong virtualization performance as measured in our VMmark benchmark

Cons

- Lacks some OS refinement compared to other enterprise offerings

The Bottom Line

The QNAP ES1640dc is a dual-controller high-performance NAS aimed at the SMB/SME market for buyers that require broad feature-sets on a more limited budget.
