The Dell EMC PowerVault ME4 Series is a family of storage arrays designed to meet the needs of the entry storage market, which is generally thought of as the sub-$25K price band. However, the PowerVault ME4 Series is exceedingly flexible. The system can be deployed in an HDD configuration to meet the needs of the entry market or edge with starting pricing below $10K, or it can be configured as a hybrid or all-flash array to meet the more demanding needs of a growing business. Regardless of how it’s deployed, the PowerVault ME4 provides organizations with a storage solution that is easy to deploy and manage and that offers a depth of features common in enterprise storage products.

The PowerVault ME4 is delivered in a few different configurations but they are all designed to be able to start small and scale by adding drives to meet business demands over time. The ME4 also comes with an all-inclusive licensing program, making ownership easier for small businesses to understand and budget for. In terms of deployment, the ME4 can be configured in DAS and SAN, meeting a variety of different use cases. Management is handled through an HTML5 web interface, making management and initial rollout intuitive; Dell EMC expects new systems to be operational in under 15 minutes.

While many will start their PowerVault ME4 out in an HDD configuration, the system can be turned into a hybrid later by adding a little bit of flash. With SSDs on board, the ME4 can deliver quite a bit of performance; in fact, Dell EMC says up to 320,000 IOPS. The ME4 is built for reliability as well, with five-nines (99.999%) availability. If scale is a concern, the ME4 delivers here as well, scaling up to 4PB in raw capacity via the 12Gb SAS backend. All of the systems have a deep set of features including caching/tiering, asynchronous replication, snapshots, support for self-encrypting drives (SEDs), integration with VMware vCenter and SRM, distributed RAID, thin provisioning and so on.

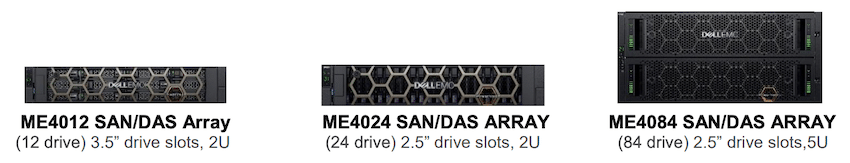

Depending on the business need, the ME4 comes in three chassis options to get started: the ME4012, ME4024, and the ME4084. The ME4012 and ME4024 are both 2U chassis that can come with single or dual controllers and can scale to roughly 3PB. The core difference between the two is that the ME4012 supports 12 3.5″ drives, whereas the ME4024 supports 24 2.5″ drives. Dell EMC also offers a larger chassis as part of the PowerVault ME4 line; the ME4084 is a 5U chassis that comes in a dual-controller-only configuration and supports 84 3.5″ drives on board. All of the systems can be expanded with the ME412 (2U, 12 3.5″ drives), ME424 (2U, 24 2.5″ drives) or ME484 (5U, 84 3.5″ drives) JBOD enclosures.

It’s worth noting that Dell EMC is using their own chassis based on PowerEdge designs, with Seagate (Dot Hill) controllers. While these controllers are available from other vendors, Dell EMC believes they have an advantage when it comes to integration and scalability in VMware, Microsoft and even HPC environments, amongst others. They also offer the ME4 in their “Future Proof Loyalty Program,” which covers a whole host of services and guarantees. Dell EMC further differentiates their offering with an overall 4PB maximum scale, connected with 12Gb SAS backend. Additionally, Dell EMC offers a full range of JBOD options.

In this review, we tested the PowerVault ME4024 in a fully populated hybrid configuration with twelve 1.8TB 10K HDDs and twelve 960GB read-intensive SSDs. Our array was also supplied with eight 16Gb FC optics. Full specifications and features are available here.

Dell EMC PowerVault ME4024 Design and Build

The Dell EMC PowerVault ME4024 is a 2U storage array that is consistent in design with the rest of the current generation Dell EMC data center products. The unit has a stylish bezel with both Dell EMC and PowerVault branding on the front. Removing the bezel on our ME4024 reveals the twenty-four 2.5″ drive bays that can fit either HDDs or SSDs.

Flipping around to the rear of the device, we can see the connectivity of the platform. Each controller offers a mirrored connection assortment. Looking at the top controller, the blue port is for JBOD expansion, black is management (LAN, USB, and serial) while the purple ports are SFP+ connections that can be used for 10G networking or 8/16Gb FC. In our setup we have 16Gb optics installed and all ports allocated to FC. The array supports all ports for FC, all ports for Ethernet, or half for each in a hybrid configuration. While the system does offer certain PowerEdge design elements, it does not include iDRAC management that you might find with other Dell EMC storage offerings based on PowerEdge hardware.

The Dell EMC PowerVault ME4024 is highly serviceable, with redundant user-replaceable power supplies as well as dual active-active controllers. Users are able to pull and swap controllers online, as well as manage firmware mirroring across controllers. This makes it easy to keep the system up to date and synchronized as firmware is pushed. The same on-board firmware tools can also be leveraged for updating attached JBODs and disks (anything the controllers can see).

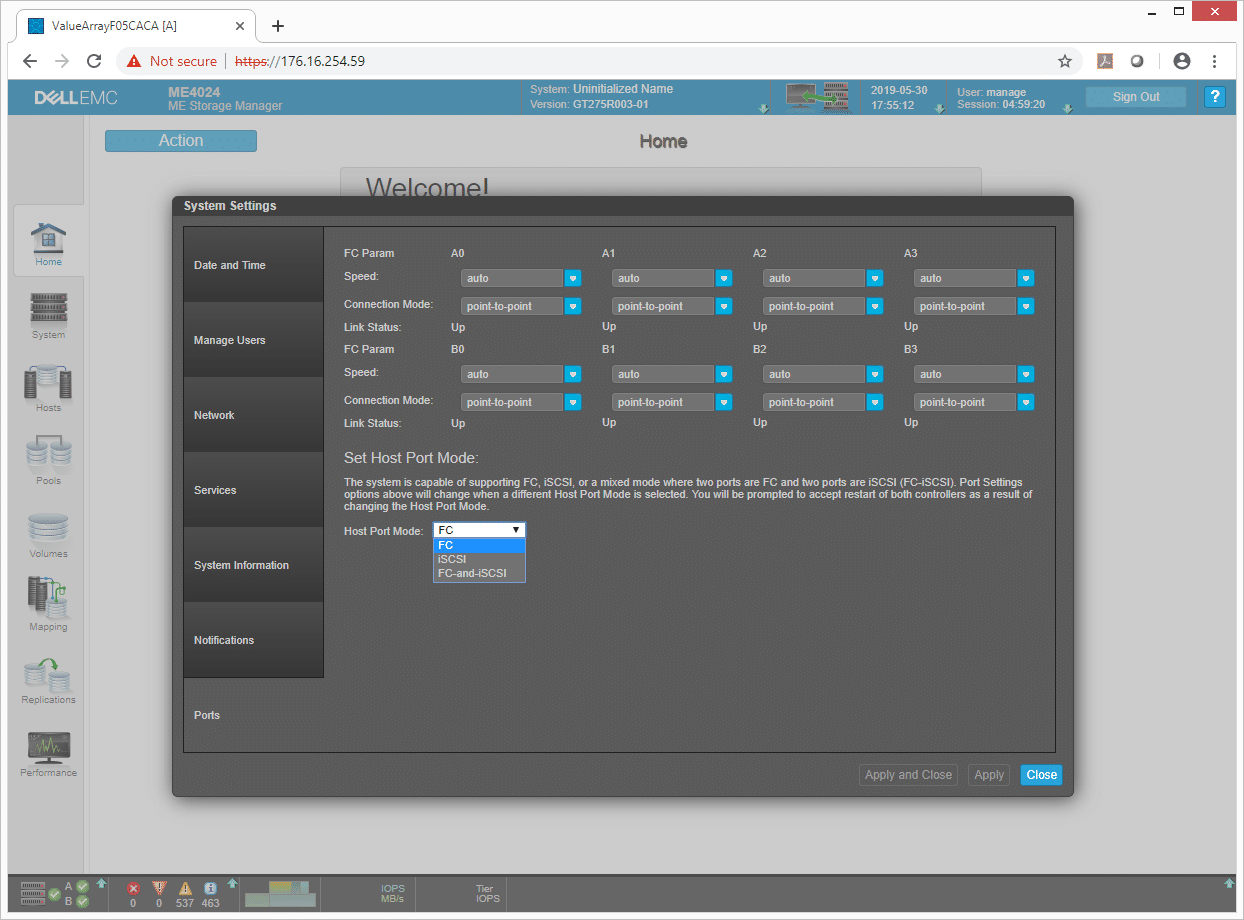

Dell EMC PowerVault ME4024 Management

For management, the Dell EMC PowerVault ME4 leverages ME Storage Manager. Opening up to the home screen, users can go through the system settings and set up actions such as the host port mode. In the screen shown below you can see the configuration on a per-port basis for each Fibre Channel connection (all ports configured for FC) and the option to reconfigure the array to iSCSI or Hybrid. You can also note the port connection mode for each FC port, which can be adjusted depending on whether servers will connect to the array through a switch or be direct attached. Certain smaller environments can leverage the direct-attached topology to reduce cost and complexity.

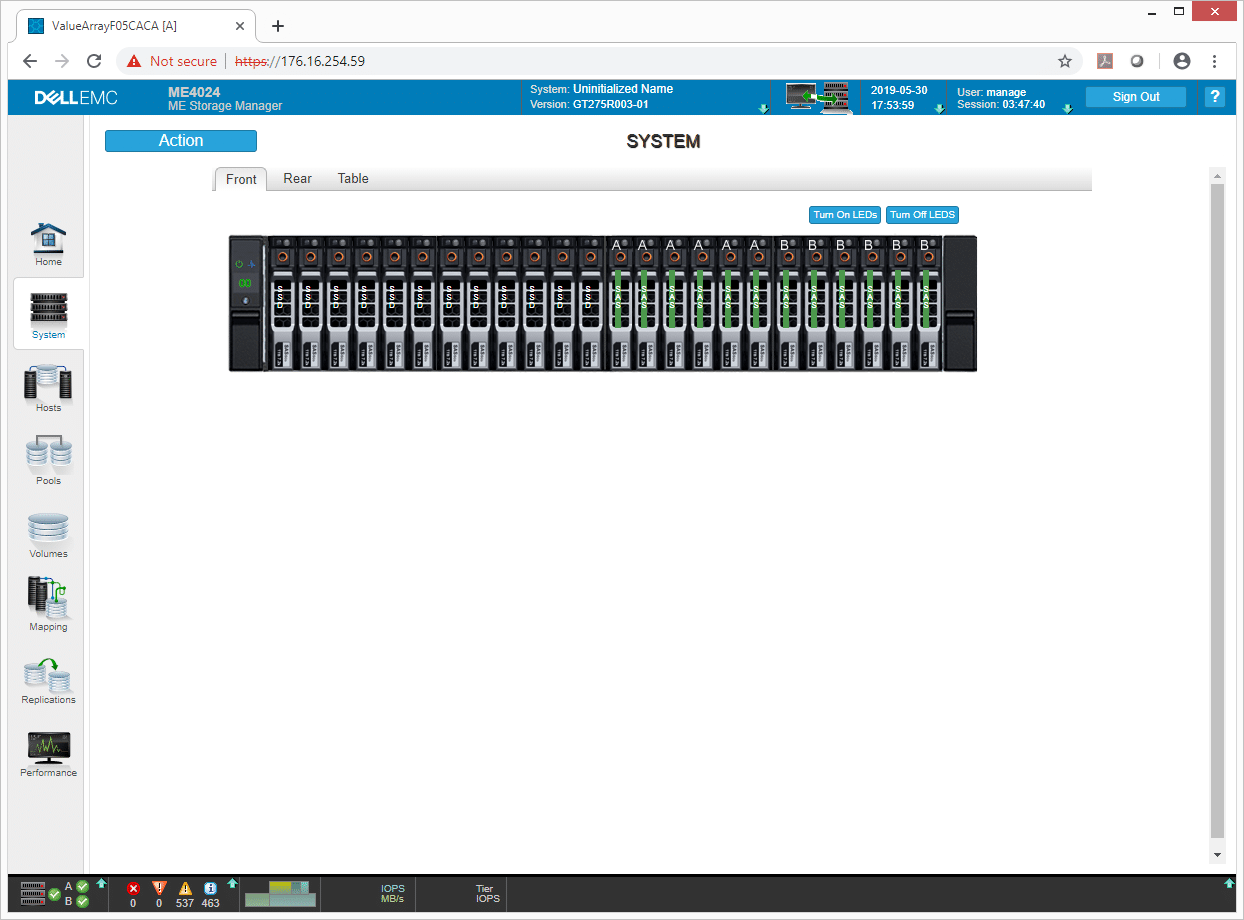

The next tab is the System tab, which allows users to get a quick overview of various parts of the system. Hovering over elements expands the information for that item. On the System tab you can look at the front or rear in graphical form, or look at all information presented in a text table. This area is useful for drilling into system specifics such as drive-wear levels, firmware revisions or other non-day-to-day stats.

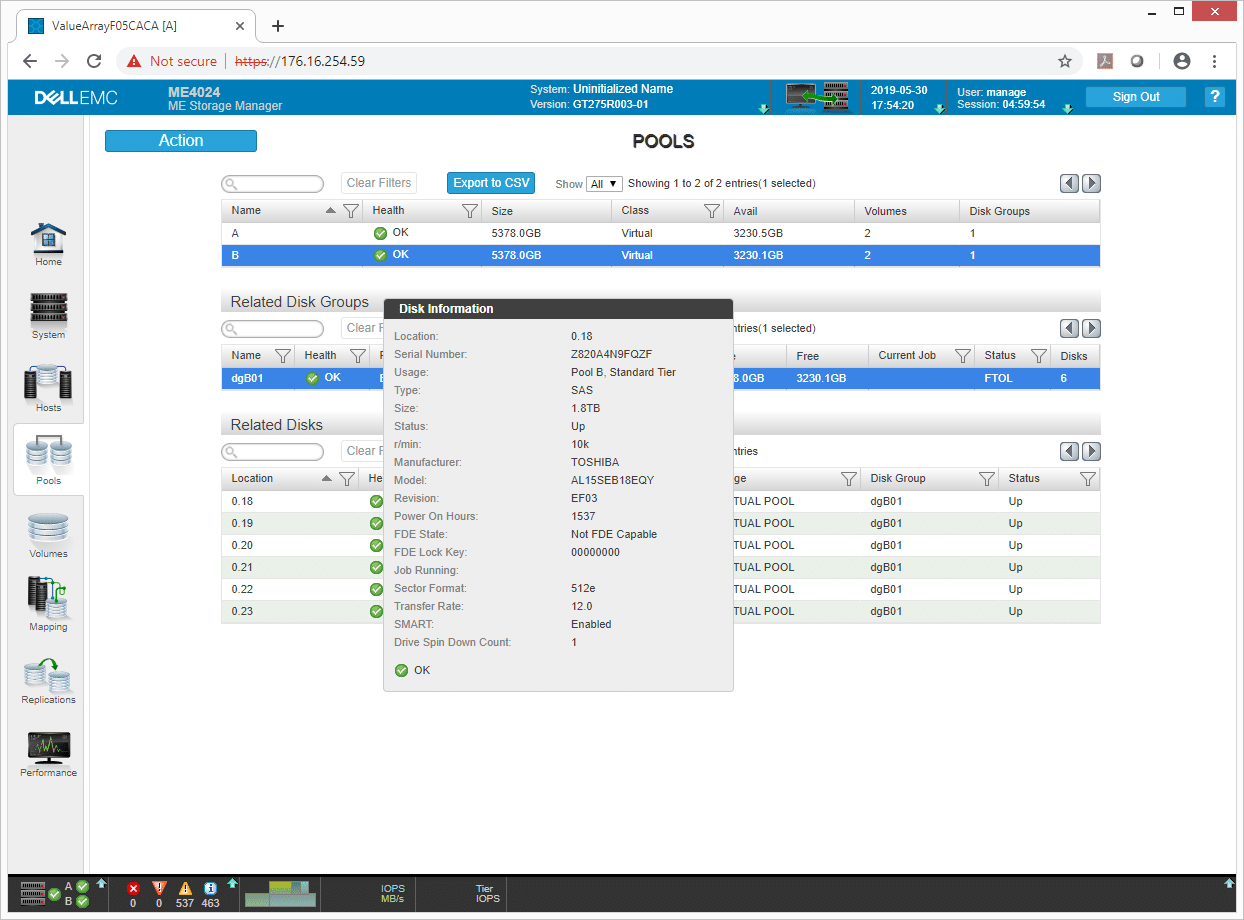

The Pools tab allows users to set up, get information on, or delete storage pools. They will also get basic information such as capacity, class, and available capacity. If users are adding disks into the array, they can use an action to add in a disk group to expand storage capacity.

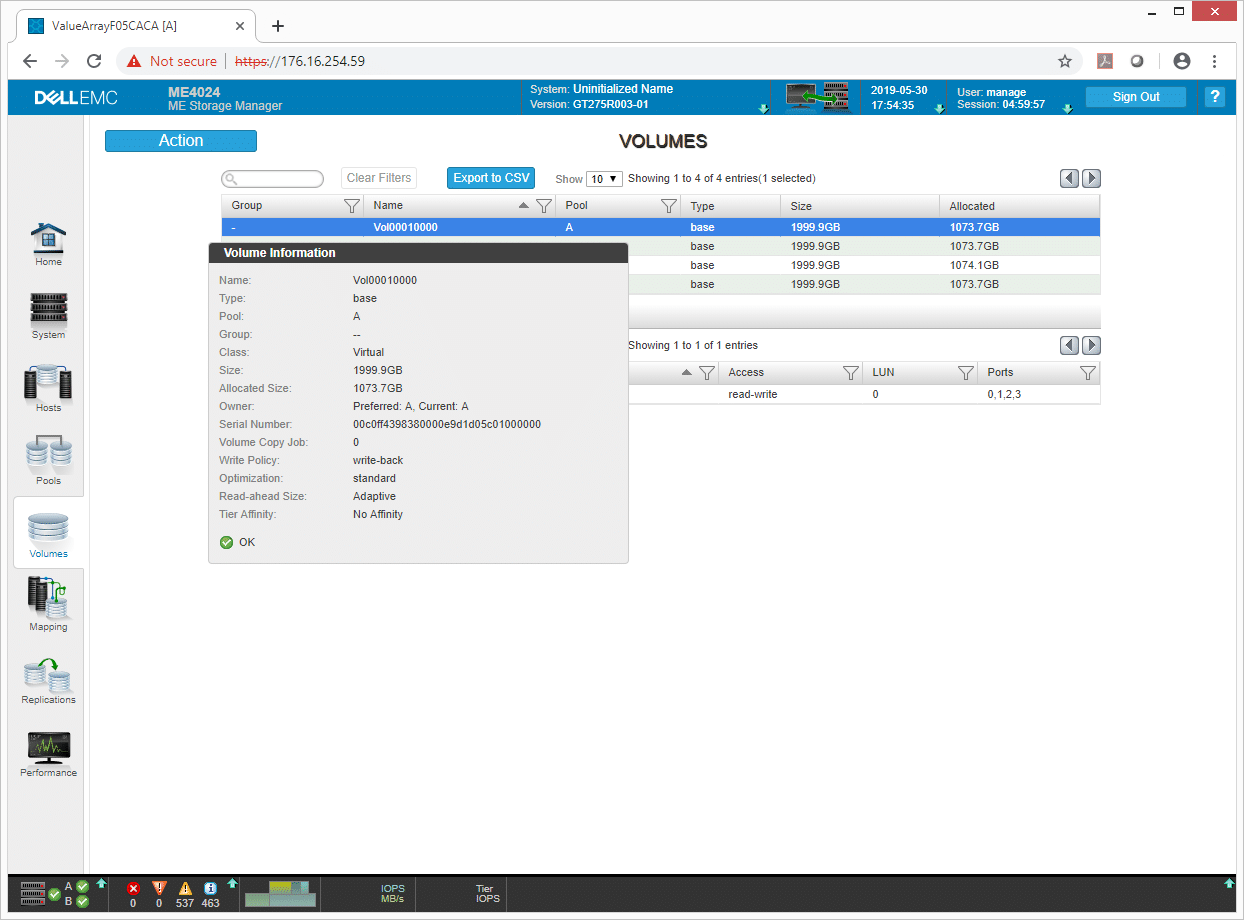

Similar to the Pools tab, the Volumes tab gives users a quick rundown of current volumes created with pertinent information and allows new volumes to be created or existing ones to be expanded or deleted. This area also allows volumes to be mapped to hosts and includes volume options such as setting tier priority.

Dell EMC PowerVault ME4024 Configuration

The Dell EMC PowerVault ME4024 was supplied with 24 disks, half of which are Read-Intensive 960GB SSDs and the other half 1.8TB 10K SAS HDDs. The ME4024 supports both caching and tiering, the latter of which offers the full performance advantage of flash when data is pushed up into that tier. To speed up our testing process and show the performance characteristics of both storage types, we created storage pools of just flash or just spinning media. In a production system, users would have both storage types in the same pool, with the array managing data moving up and down based on access patterns.

For storage specifically, we leveraged RAID10, which is commonly used by customers purchasing this storage array. With 12 drives of each type and a two-controller layout, we split them into two groups of six drives. This allowed us to create a six-drive RAID10 pool on each controller, with three disk groups. This configuration was used for both SSDs and HDDs separately.
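As a rough illustration of what that layout means for capacity, the sketch below estimates the space left in each six-drive pool after mirroring. It assumes a plain RAID10 set of mirrored pairs and uses the drives' marketed capacities; the function name is ours, and the result ignores formatting overhead, spares and any space the array reserves for itself.

```python
def raid10_usable_tb(drives: int, drive_tb: float) -> float:
    """Estimate RAID10 capacity: half the drives hold mirror copies."""
    if drives < 2 or drives % 2:
        raise ValueError("RAID10 needs an even number of drives (at least two)")
    return (drives // 2) * drive_tb


# One six-drive pool per controller, built separately for each media type.
ssd_pool_tb = raid10_usable_tb(6, 0.96)  # 960GB read-intensive SSDs
hdd_pool_tb = raid10_usable_tb(6, 1.8)   # 1.8TB 10K HDDs

print(f"SSD pool per controller: ~{ssd_pool_tb:.2f}TB")
print(f"HDD pool per controller: ~{hdd_pool_tb:.2f}TB")
```

With two pools per media type, this works out to roughly 5.76TB of mirrored flash and 10.8TB of mirrored spinning capacity across the array, before any overhead.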

For host-side connectivity, the ME4024 supports three modes: iSCSI, FC or Hybrid FC/iSCSI. The system was supplied with all 16Gb FC optics, so we went with a pure FC configuration. This leveraged all eight ports (four per controller) attached to our dual-switch FC fabric. In aggregate, this allowed for a theoretical 128Gb/s of bandwidth from the storage array (16GB/s), while our dual-port, 8-host cluster supports a peak of 256Gb/s, or 32GB/s.
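The aggregate figures above are simple port arithmetic, which the short sketch below reproduces. It uses nominal 16Gb line rates and a helper name of our own choosing; real-world FC throughput lands lower once encoding and protocol overhead are accounted for.

```python
def fc_bandwidth(ports: int, gbit_per_port: float = 16.0):
    """Return (aggregate Gb/s, aggregate GB/s) at nominal line rate."""
    total_gbit = ports * gbit_per_port
    return total_gbit, total_gbit / 8  # 8 bits per byte


# Array side: four FC ports on each of the two controllers.
array_gbit, array_gbyte = fc_bandwidth(ports=4 * 2)

# Host side: eight servers, each with a dual-port HBA.
hosts_gbit, hosts_gbyte = fc_bandwidth(ports=8 * 2)

print(f"Array: {array_gbit:.0f}Gb/s ({array_gbyte:.0f}GB/s)")
print(f"Hosts: {hosts_gbit:.0f}Gb/s ({hosts_gbyte:.0f}GB/s)")
```

Because the host side has twice the nominal bandwidth of the array, the compute cluster should not be the limiting factor on the fabric in these tests.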

Dell EMC PowerVault ME4024 Performance Review

SQL Server Performance

StorageReview’s Microsoft SQL Server OLTP testing protocol employs the current draft of the Transaction Processing Performance Council’s Benchmark C (TPC-C), an online transaction processing benchmark that simulates the activities found in complex application environments. The TPC-C benchmark comes closer than synthetic performance benchmarks to gauging the performance strengths and bottlenecks of storage infrastructure in database environments.

Each SQL Server VM is configured with two vDisks: a 100GB volume for boot and a 500GB volume for the database and log files. From a system resource perspective, we configured each VM with 16 vCPUs, 64GB of DRAM and leveraged the LSI Logic SAS SCSI controller. While our Sysbench workloads tested previously saturated the platform in both storage I/O and capacity, the SQL test looks for latency performance.

This test uses SQL Server 2014 running on Windows Server 2012 R2 guest VMs, and is stressed by Dell’s Benchmark Factory for Databases. While our traditional usage of this benchmark has been to test large 3,000-scale databases on local or shared storage, in this iteration we focus on spreading out four 1,500-scale databases evenly across our servers.

SQL Server Testing Configuration (per VM)

- Windows Server 2012 R2

- Storage Footprint: 600GB allocated, 500GB used

- SQL Server 2014

- Database Size: 1,500 scale

- Virtual Client Load: 15,000

- RAM Buffer: 48GB

- Test Length: 3 hours

- 2.5 hours preconditioning

- 30 minutes sample period

For our SQL Server transactional performance, the Dell EMC PowerVault ME4 saw an aggregate of 12,622.2 TPS with individual VMs ranging from 3,152.5 TPS to 3,158.6 TPS.

Looking at SQL Server average latency, the ME4 gave us an aggregate of 10.5ms with individual VMs ranging from 6ms to 15ms.

Sysbench MySQL Performance

Our next application benchmark consists of a Percona MySQL OLTP database measured via SysBench. This test measures average TPS (Transactions Per Second), average latency, and average 99th percentile latency as well.

Each Sysbench VM is configured with three vDisks: one for boot (~92GB), one with the pre-built database (~447GB), and the third for the database under test (270GB). From a system resource perspective, we configured each VM with 16 vCPUs, 60GB of DRAM and leveraged the LSI Logic SAS SCSI controller.

Sysbench Testing Configuration (per VM)

- CentOS 6.3 64-bit

- Percona XtraDB 5.5.30-rel30.1

- Database Tables: 100

- Database Size: 10,000,000

- Database Threads: 32

- RAM Buffer: 24GB

- Test Length: 3 hours

- 2 hours preconditioning 32 threads

- 1 hour 32 threads

For Sysbench, we tested sets of VMs that include both 4VM and 8VM. For the average transactional testing, the ME4 was able to hit 9,330.1 TPS for 4VM and 13,606.8 TPS for 8VM.

With Sysbench average latency, the ME4 had latency of 13.7ms for 4VM and 18.9ms for 8VM.

In our worst-case scenario (99th percentile) latency, the ME4 was able to hit 33.5ms for 4VM and 39.5ms for 8VM.
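As a sanity check on the average figures, Little's Law (requests in flight = throughput × latency) can be applied to the TPS and average latency reported above. The sketch below is our own illustration, not part of the test harness; it assumes each of the 32 Sysbench threads per VM keeps exactly one transaction in flight at a time.

```python
THREADS_PER_VM = 32  # from the Sysbench testing configuration


def implied_concurrency(tps: float, avg_latency_ms: float) -> float:
    """Little's Law: average requests in flight = throughput * latency."""
    return tps * avg_latency_ms / 1000.0


# (aggregate TPS, average latency in ms) as reported for each VM count
results = {4: (9330.1, 13.7), 8: (13606.8, 18.9)}

for vms, (tps, latency_ms) in results.items():
    in_flight = implied_concurrency(tps, latency_ms)
    configured = vms * THREADS_PER_VM
    print(f"{vms}VM: ~{in_flight:.1f} in flight vs {configured} configured threads")
```

Both results land within about one transaction of the configured thread counts (128 and 256), which suggests the reported throughput and latency figures are internally consistent.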

VDBench Workload Analysis

When it comes to benchmarking storage arrays, application testing is best, and synthetic testing comes in second place. While not a perfect representation of actual workloads, synthetic tests do help to baseline storage devices with a repeatability factor that makes it easy to do apples-to-apples comparison between competing solutions. These workloads offer a range of different testing profiles ranging from “four corners” tests, common database transfer size tests, as well as trace captures from different VDI environments. All of these tests leverage the common vdBench workload generator, with a scripting engine to automate and capture results over a large compute testing cluster. This allows us to repeat the same workloads across a wide range of storage devices, including flash arrays and individual storage devices.

Profiles:

- 4K Random Read: 100% Read, 128 threads, 0-120% iorate

- 4K Random Write: 100% Write, 64 threads, 0-120% iorate

- 64K Sequential Read: 100% Read, 16 threads, 0-120% iorate

- 64K Sequential Write: 100% Write, 8 threads, 0-120% iorate

- Synthetic Database: SQL and Oracle

- VDI Full Clone and Linked Clone Traces

The Dell EMC PowerVault ME4 will most likely be used as a hybrid; however, it is capable of being filled with all spinning disks or all flash. We tested both SSDs and HDDs individually to give readers an overall picture of how the unit would react to data sitting inside those two tiers. When operated with spinning media and flash inside the same pool, the system will automatically handle data tiering, with licenses included with the ME4 purchase.

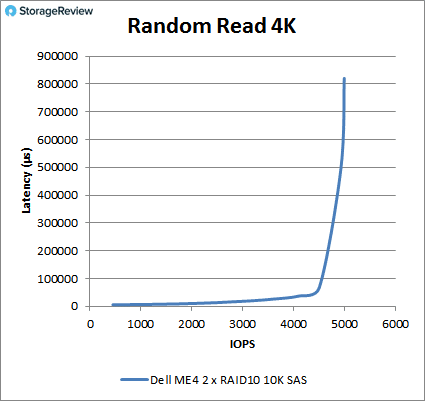

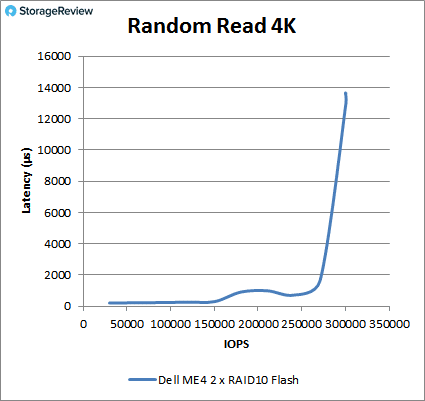

For random 4K read performance, the HDDs had a peak performance of 4,986 IOPS at a latency of 820ms. The SSDs were able to stay under 1ms until about 260K IOPS and peaked at 299,962 IOPS with a latency of 13.6ms.

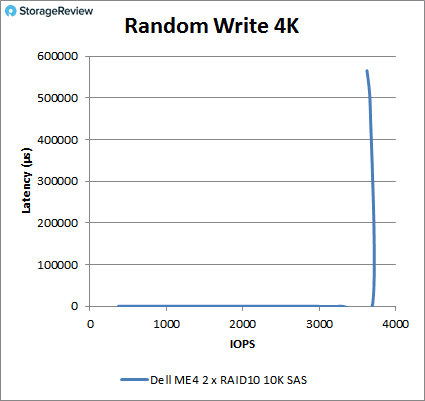

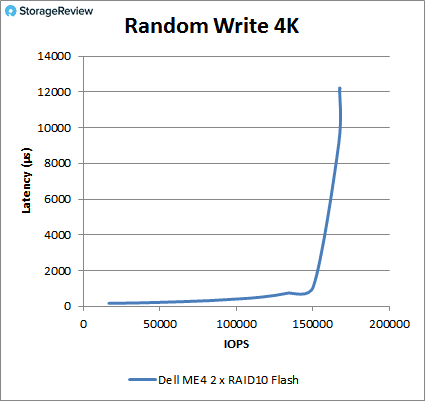

With random 4K write performance, the HDDs peaked at 3,621 IOPS and a latency of 566ms. The SSDs stayed under 1ms until about 150K IOPS and peaked at 167,569 IOPS with a latency of 12.2ms.

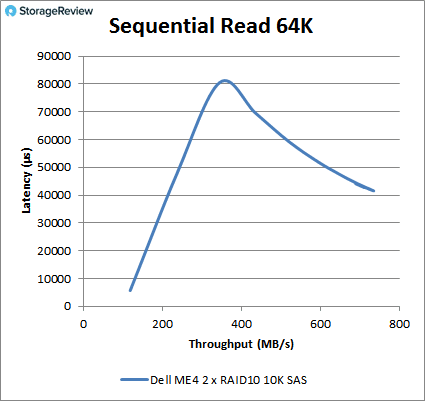

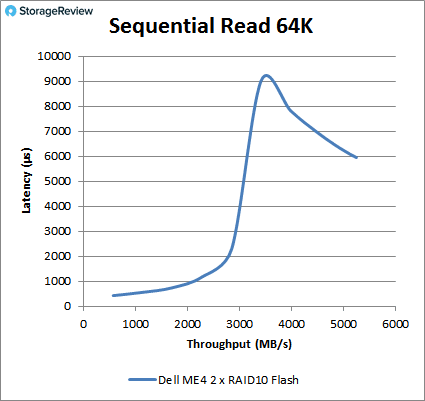

Next, we switch over to sequential testing with our 64K benchmarks. With read, the HDDs peaked at 11,764 IOPS or 735MB/s with a latency of 41.5ms (though the latency spiked up to around 80ms near the middle of the benchmark). The SSDs had sub-millisecond latency until about 34K IOPS or about 2.2GB/s and peaked at 83,860 IOPS or 5.2GB/s at a latency of 6ms, again with a larger spike near the middle (near 9ms).

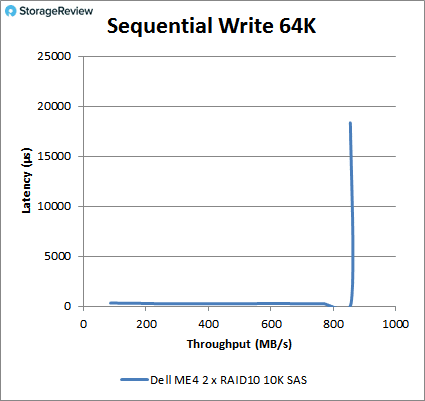

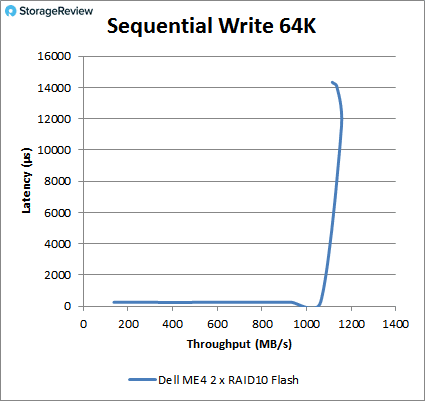

For sequential 64K write, the HDDs had a pretty consistent run until peaking at 13,664 IOPS or 854MB/s and a latency of 18.3ms. The SSDs also had a long run of consistent performance and stayed under 1ms until about 17.5K IOPS, going on to peak at roughly 18,200 IOPS or 1.15GB/s at a latency of roughly 12ms before performance dropped off and latency trailed upward.

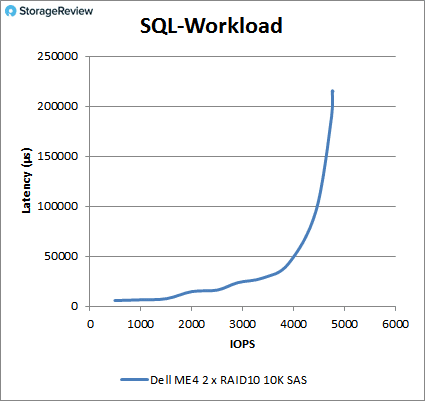

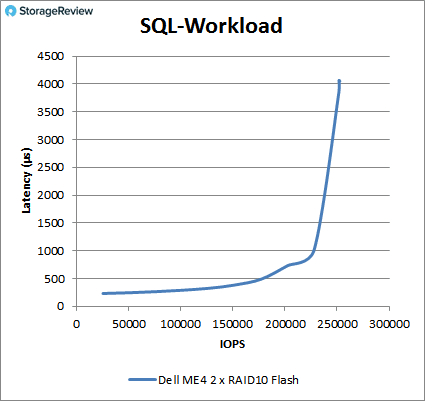
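For readers who want to relate the 64K IOPS and bandwidth figures to each other, the conversion is just IOPS multiplied by the transfer size. The sketch below is our own helper and assumes a 64KiB transfer with results expressed in MiB/s; the rounded GB/s values quoted in the text can differ slightly depending on whether decimal or binary units are used.

```python
def throughput_mib_s(iops: float, block_kib: int = 64) -> float:
    """Convert an IOPS figure at a fixed transfer size into MiB/s."""
    return iops * block_kib / 1024


# Peak 64K sequential IOPS reported above
peaks = {
    "HDD read": 11_764,
    "SSD read": 83_860,
    "HDD write": 13_664,
    "SSD write": 18_200,  # the text gives this figure as approximate
}

for name, iops in peaks.items():
    print(f"{name}: {iops:,} IOPS -> {throughput_mib_s(iops):,.1f} MiB/s")
```

The HDD results line up with the 735MB/s and 854MB/s quoted in the text, and the SSD results fall in the same range as the quoted 5.2GB/s and 1.15GB/s.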

Our next batch of benchmarks are our SQL tests. In SQL, the ME4 with HDDs peaked at 4,752 IOPS with a latency of 215ms. The SSDs had sub-millisecond latency performance until about 227K IOPS and peaked at 251,860 IOPS with a latency of 4ms.

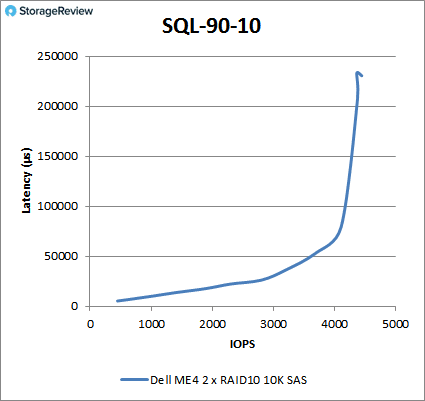

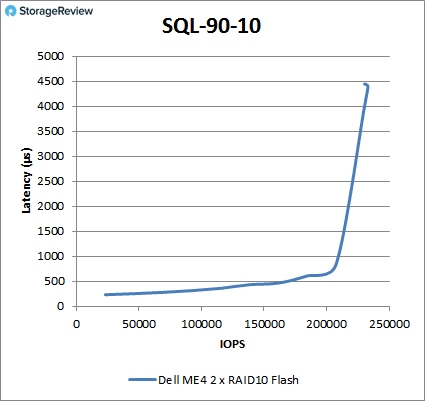

For SQL 90-10, the HDDs peaked at 4,441 IOPS and a latency of 230ms. The SSDs stayed under 1ms until about 210K IOPS and peaked at 233,040 IOPS with a latency of 4.4ms.

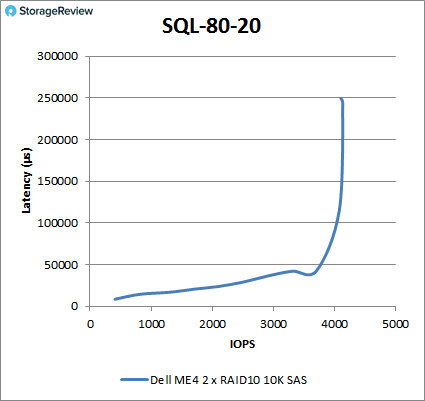

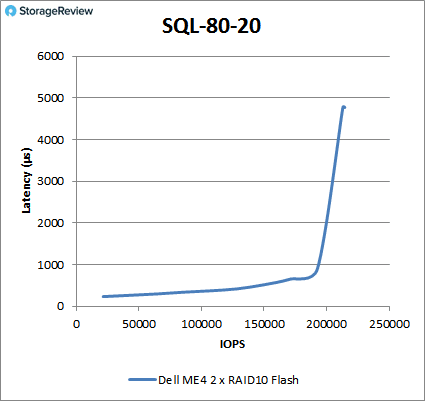

With SQL 80-20, the HDDs had a peak performance of 4,131 IOPS at a latency of 244ms. The SSDs stayed under 1ms until about 195K IOPS and peaked at 214,547 IOPS with a latency of 4.8ms.

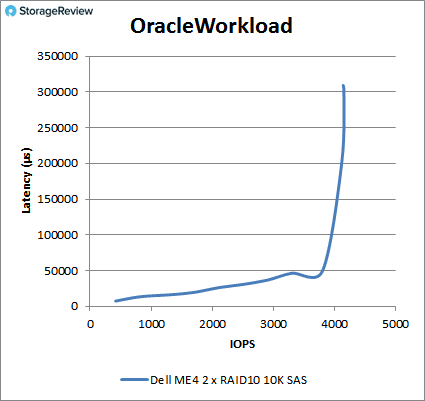

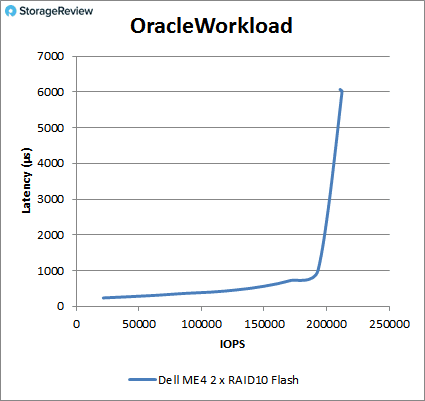

Moving on to our Oracle workloads, we saw the HDDs peak at 4,138 IOPS and a latency of 302ms. The SSDs maintained sub-millisecond latency until about 193K IOPS and peaked at 212,272 IOPS with a latency of 5.98ms.

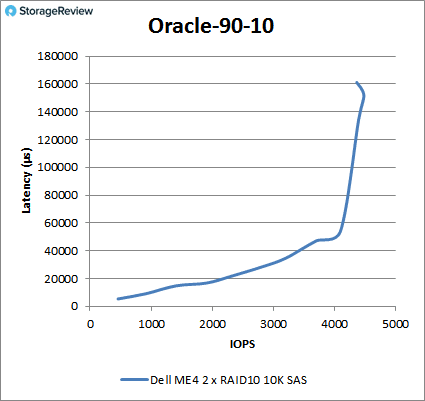

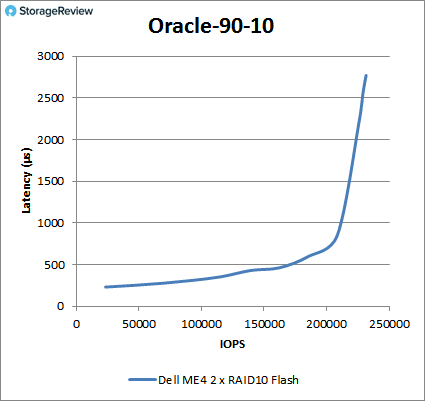

For Oracle 90-10, the HDDs peaked at 4,477 IOPS with a latency of 152ms before dropping off some. The SSDs ran under 1ms for most of the test and peaked at 231,521 IOPS with a latency of 2.8ms.

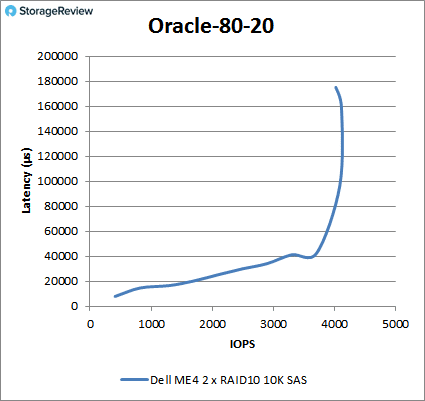

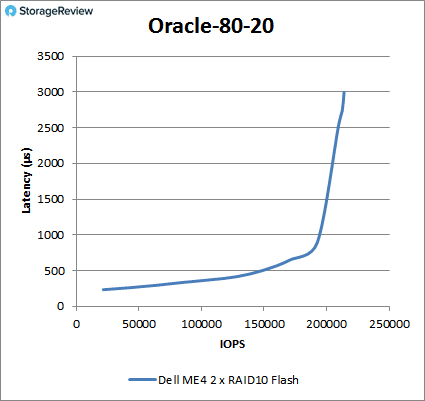

Oracle 80-20 had the HDDs peak at 4,017 IOPS with a latency of 175ms. The SSDs ran to 193K IOPS under 1ms and peaked at 213,965 IOPS with 3ms of latency.

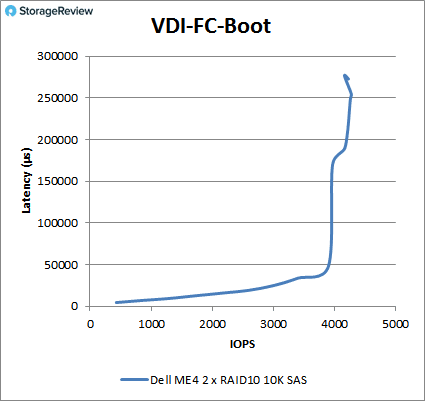

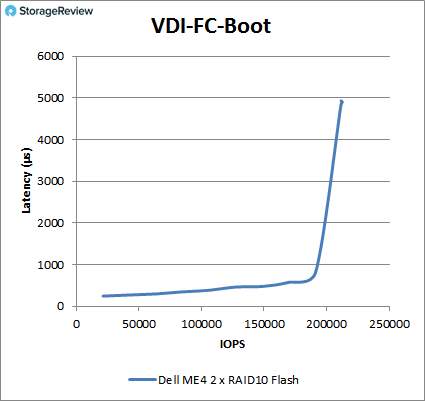

Next, we switched over to our VDI Clone Test, Full and Linked. For VDI Full Clone Boot, the HDDs peaked at 4,257 IOPS at a latency of 249ms. The SSDs peaked at 212,565 IOPS and a latency of 4.8ms.

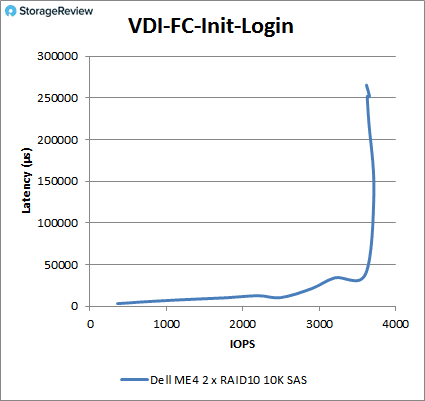

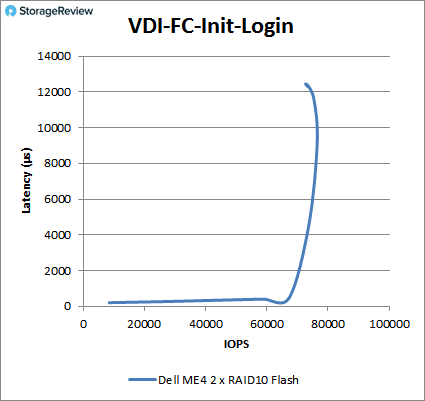

With VDI FC Initial Log In, the HDDs peaked at 3,614 IOPS and a latency of 265ms. The SSDs maintained sub-millisecond latency until about 70K IOPS and peaked at about 76K IOPS at 10ms before falling off some.

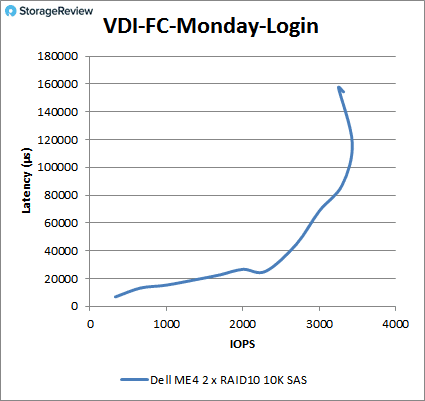

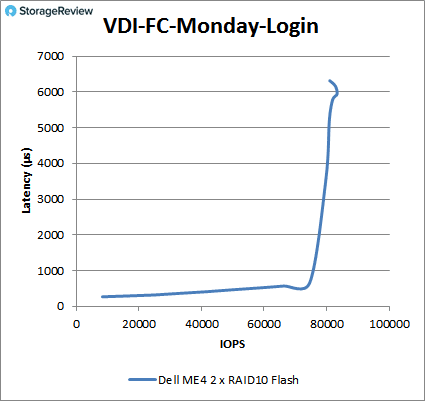

VDI FC Monday Login saw the HDDs peak at about 3,400 IOPS at 120ms latency. The SSDs stayed under 1ms until about 75K IOPS and peaked at 83,371 IOPS with 5.9ms latency.

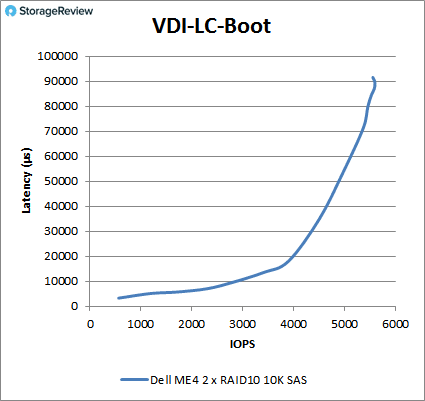

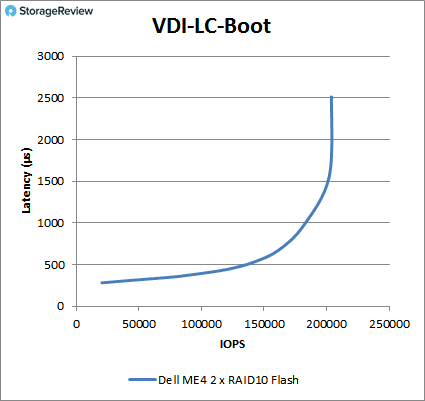

Switching over to the VDI Linked Clone (LC), in the boot test, the HDDs peaked at 5,588 IOPS with a latency of 870ms. The SSDs had sub-millisecond performance until about 181K IOPS and went on to peak at 203,898 IOPS with a latency of 2.5ms.

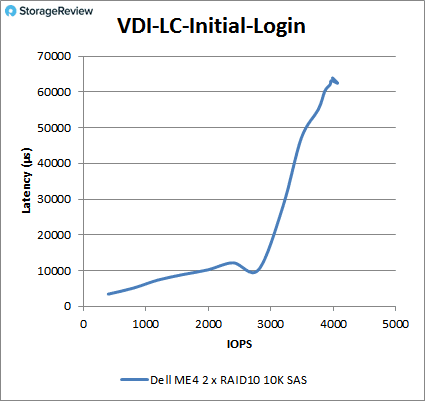

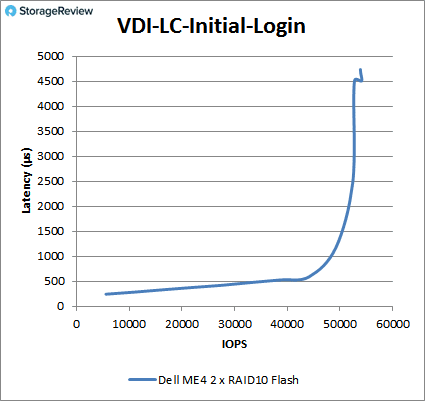

For VDI LC Initial Log In, the HDDs peaked at 4,067 IOPS with a latency of 63.2ms. The flash storage stayed under 1ms until just shy of 50K IOPS and peaked at 54,120 IOPS with a latency of 4.5ms.

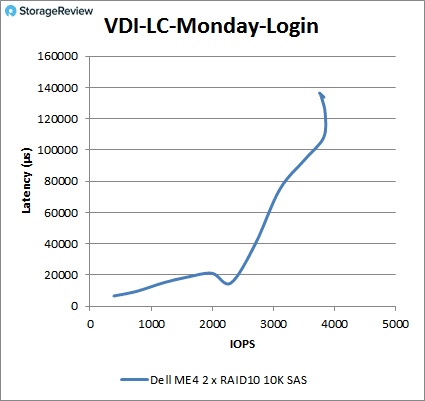

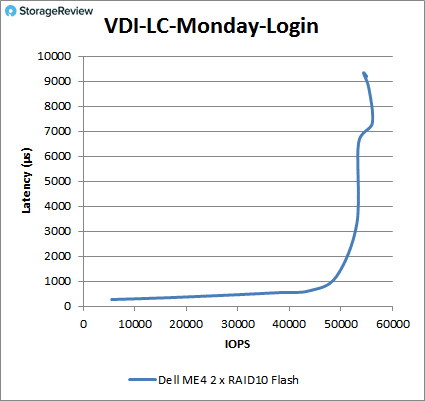

Finally, for VDI LC Monday Login, the HDDs peaked at about 3,900 IOPS at roughly 120ms before falling off a bit. The SSDs stayed under 1ms until about 49K IOPS and peaked at about 57K IOPS with a latency of 7ms.

Conclusion

When it comes to storage arrays, not every business is looking for the highest performing box with a slew of features. Many businesses are looking for strong performing, reliable and affordable storage arrays. This is the spot where platforms such as the Dell EMC PowerVault ME4 come in, as an entry offering, and with the speed and price point geared for most SMB/edge use cases. The ME4 knocks it out of the park on this front, with a sub-$10K starting price and a build-it-as-required pricing model. PowerVault ME4 buyers also get an all-inclusive licensing package, enabling flash caching/tiering with support for iSCSI or FC deployments amongst other features.

In terms of performance, the PowerVault ME4 is rated for up to 320k IOPS. In our tests with a configuration half-populated with read-intensive flash, we were able to get just shy of 300k IOPS in 4K random read with two RAID10 pools (one per controller) in our virtualized environment. Random 4K write performance was 167k IOPS, which is also very respectable for a storage platform in this market segment. Bandwidth measured was also strong, peaking out above 5GB/s read and 1.15GB/s write.

The eye opener, though, is just how well it performs in our application tests focusing on SQL Server and MySQL performance. In these areas, we saw an average latency of 10.5ms in SQL Server, which is very good even when compared to much more expensive all-flash arrays. Sysbench performance scaled from 9.3K TPS with 4VMs to 13.6K TPS with 8VMs, which again is very strong. The only downside to this platform is that performance will falter if it is used in a larger environment than it was intended for. That’s the domain of midrange all-flash products, though, which can handle increased loads better. In Dell EMC parlance, that’s either the Unity or SC Storage lines.

Overall though, the ME4 knows what it’s trying to be. Our results looked at both HDD and all-flash pools. Most use cases will sit in between as a hybrid ME4 configuration. A few SSDs go a long way in accelerating HDD volumes. Should there be an application that can benefit from all-flash, organizations can easily pin volumes to a flash tier. While the performance story is strong for the entry market, the rest of the package is there as well. The GUI is easy to understand and configure, and expansion options can take our review model up to 3PB, with the ME4084 topping out at 4PB. Moreover, there’s a deep set of enterprise features. This price band has a lot of options in the marketplace, but not a lot of “very good” options. The PowerVault ME4 is a clear leader in the entry-storage market.
