The Lenovo ThinkSystem SR570 is a 2-socket 1U server aimed at organizations ranging from SMBs to large enterprises. The server offers a balance of performance, memory, and storage. This balance makes the SR570 versatile enough for a wide range of workloads, such as virtualization and cloud computing, infrastructure security, web serving, and application development. The SR570 is also a popular option for software-defined solutions that rely more heavily on compute density than storage footprint, many of which Lenovo supports through its ThinkAgile platforms.

Under the hood, the SR570 can sport up to two Intel Xeon Scalable CPUs (up to 26 cores), up to 1TB of 2666MHz TruDDR4 RAM, and up to ten 2.5-inch drives (or up to four 3.5-inch drives) with the user's choice of NVMe, SAS, or SATA SSDs, along with three PCIe slots and one optional LOM slot. The Lenovo ThinkSystem SR570 fits all of the above in a compact 1U frame.

The SR570 has the potential for great performance and is highly versatile. There are multiple storage options (including the addition of Lenovo’s AnyBays, which accept multiple interfaces in the same bay) that give users either the capacity or the media type they need in a 1U footprint. The server also supports several networking and PCIe card options depending on customer needs. To top everything off, the server is affordable, starting at just $2,300 USD.

Our particular build consists of two Intel Xeon Gold 5118 processors, 384GB of RAM, and four 4TB Memblaze PBlaze5 Mixed Use NVMe SSDs.

Lenovo ThinkSystem SR570 Server Specifications

| Specification | Details |
|---|---|
| Form factor | 1U |
| Processor | Up to two Intel Xeon Bronze, Silver, Gold, or Platinum processors of up to 150 W TDP: Up to 26 cores (2.0 GHz core speeds) |
| Chipset | Intel C622 |
| Memory | Up to 16 DIMM sockets |
| Storage | |
| Drive bays | 4 LFF SATA Simple Swap drive bays; 4 LFF SAS/SATA hot-swap drive bays; 8 SFF SAS/SATA hot-swap drive bays; 10 SFF hot-swap drive bays: 6x 2.5" SAS/SATA & 4x 2.5" AnyBay |
| Internal Storage capacity | 2.5-inch models: Up to 76.8 TB with 10x 7.68 TB 2.5" SAS/SATA SSDs; 3.5-inch models: Up to 56 TB with 4x 14 TB 3.5" NL SAS/SATA HDDs |
| Storage controller | 6 Gbps SATA |
| Network interfaces | 2x Integrated 1 GbE RJ-45 ports (no 10/100 Mb support) |
| I/O expansion slots | Up to three slots depending on the riser cards installed. The slots are as follows: Slot 1: PCIe 3.0 x8; low profile |
| Ports | |
| Front | 1x USB 2.0 port with XClarity Controller access; 1x USB 3.0 port; 1x VGA port (optional) |
| Rear | 2x USB 3.0 ports; 1x VGA port; optional 1x DB-9 serial port |
| Cooling | 4x LFF or 8x SFF drive bay models: Four (one processor) or six (two processors) hot-swap single-rotor system fans with N+1 redundancy. |
| Power Supply | Up to two redundant hot-swap 550 W or 750 W (100 – 240 V) High Efficiency Platinum or 750 W (200 – 240 V) High Efficiency Titanium AC power supplies. HVDC support (China only). |
| OS support | Microsoft Windows Server 2012 R2, 2016, and 2019; Red Hat Enterprise Linux 6 (x64) and 7; SUSE Linux Enterprise Server 11 (x64), 12, and 15; VMware vSphere (ESXi) 6.0, 6.5, and 6.7 |
| Warranty | 1- or 3-year |
| Dimensions | H: 43 mm (1.7 in), W: 434 mm (17.1 in), D: 715 mm (28.1 in) |
| Weight | Min configuration: 10.2 kg (22.5 lb), max: 16.0 kg (35.3 lb) |

Design and Build

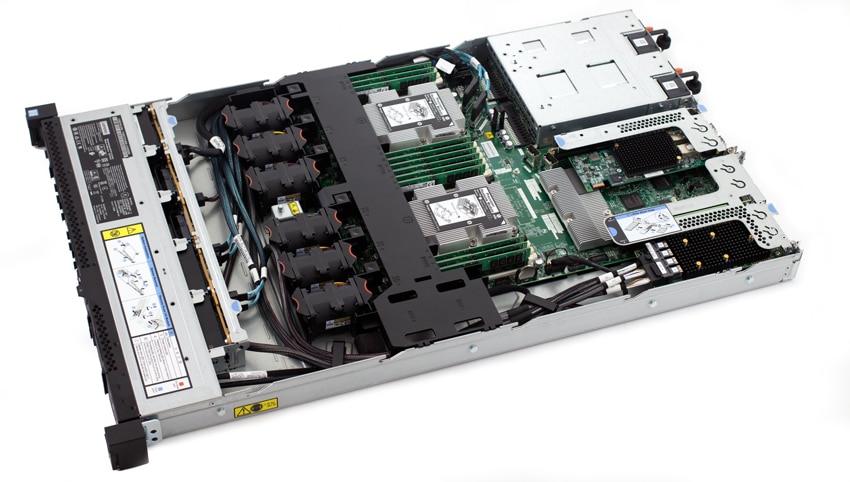

The Lenovo SR570 is a 1U server that comes in two configurations: either four large form factor (3.5”) bays across the front, or up to 10 small form factor (2.5”) bays. Our review unit offers six SATA/SAS bays in addition to four 2.5" NVMe-enabled bays. The drive bays take up most of the front of the server, starting from the left side. On the right side are the power button, USB 3.0 port, status LEDs, USB 2.0 port, and optional VGA port (the LFF layout is slightly different, with some of these running across the top).

Flipping the server around to the rear, we see two hot-swappable PSUs on the right, two USB 3.0 ports to the left of the PSUs, followed by a VGA port, two RJ-45 GbE ports, a 10/100/1000 Mb Ethernet port for XCC, an optional LOM card slot, and up to three PCIe slots on top.

The top view shot shows the clean airflow pathways across the internal components with minimal obstructions. Cooling is provided through six fans, split evenly on the left and right side for each CPU.

The NVMe bays in this server offer direct attachment to the motherboard, which helps out by not consuming a PCIe slot. We've seen a number of servers that require sacrificing a precious (in a 1U server) slot to add NVMe, which makes additional expansion more difficult.

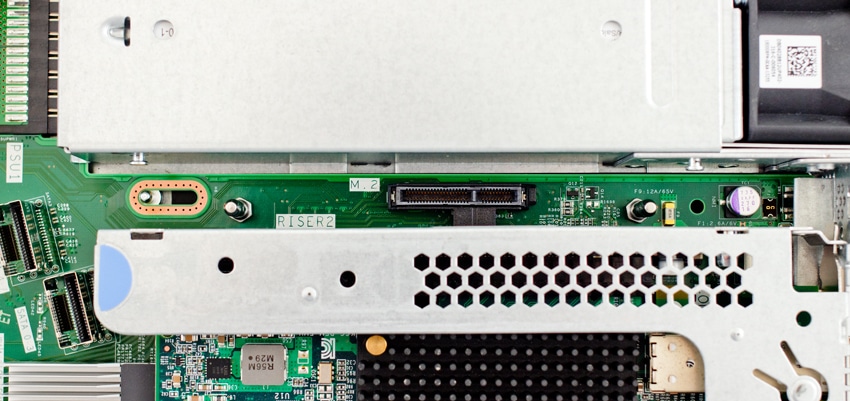

In addition, the ThinkSystem SR570 includes an onboard M.2 connector for boot storage. This lets users tailor the configuration to the use case itself rather than tying up resources that could be put to better use.

Management

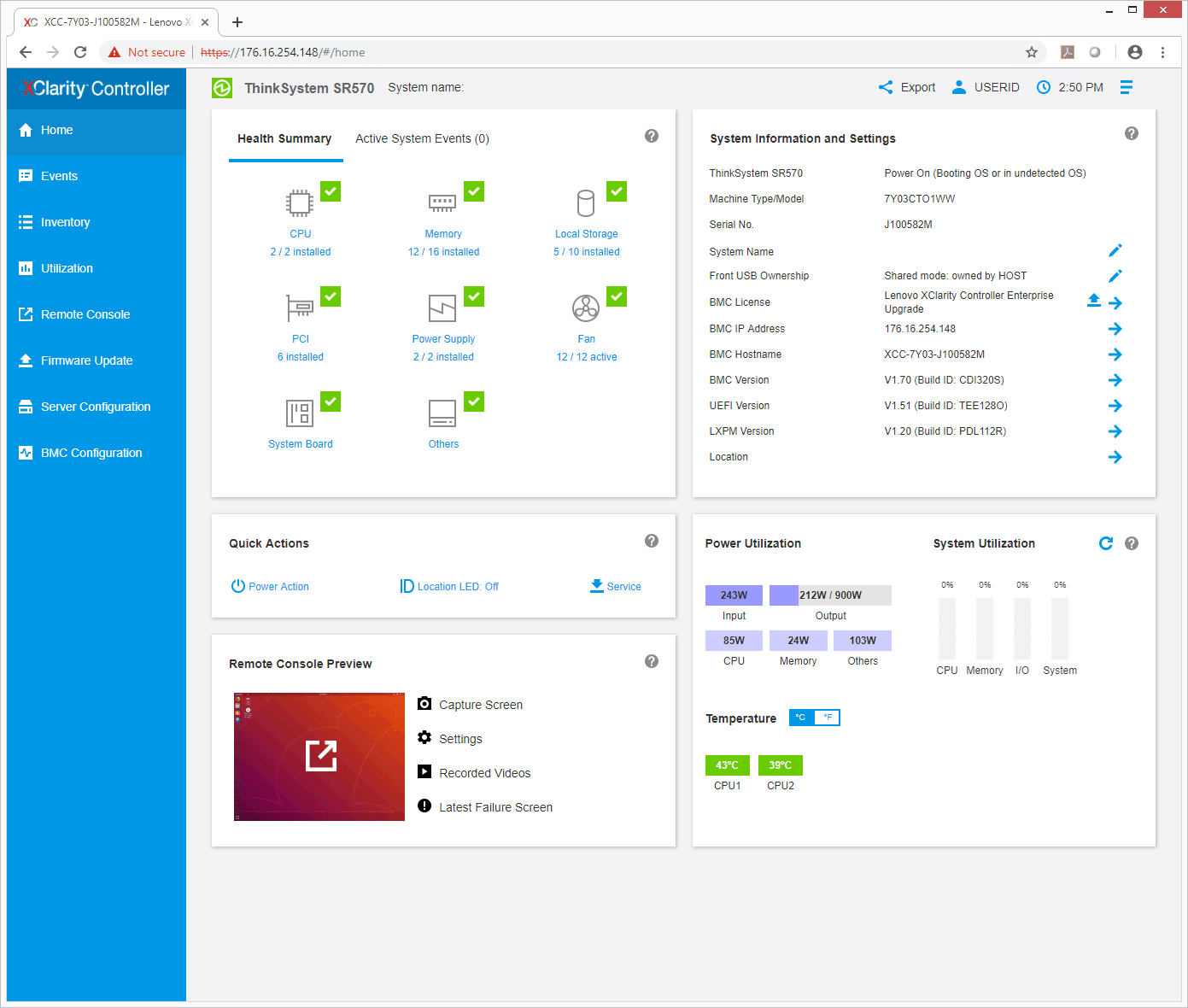

For ThinkSystem and ThinkAgile systems, Lenovo offers XClarity for management. XClarity centralizes and streamlines hardware resource management, speeds cloud as well as traditional infrastructure deployment, and enables visibility and control of physical resources from external, higher level management software tools.

On the main screen XClarity lays everything out for users to see quickly and easily. There are five main windows that show a Health Summary (that breaks down various hardware components), Quick access (for actions such as turning the system on or off), a Remote Console Preview, System Information and Setting, and Power Utilization. Down the right side of the screen are main tabs including: Home, Events, Inventory, Utilization, Remote Console, Firmware Update, Server Configuration, and BMC Configuration.

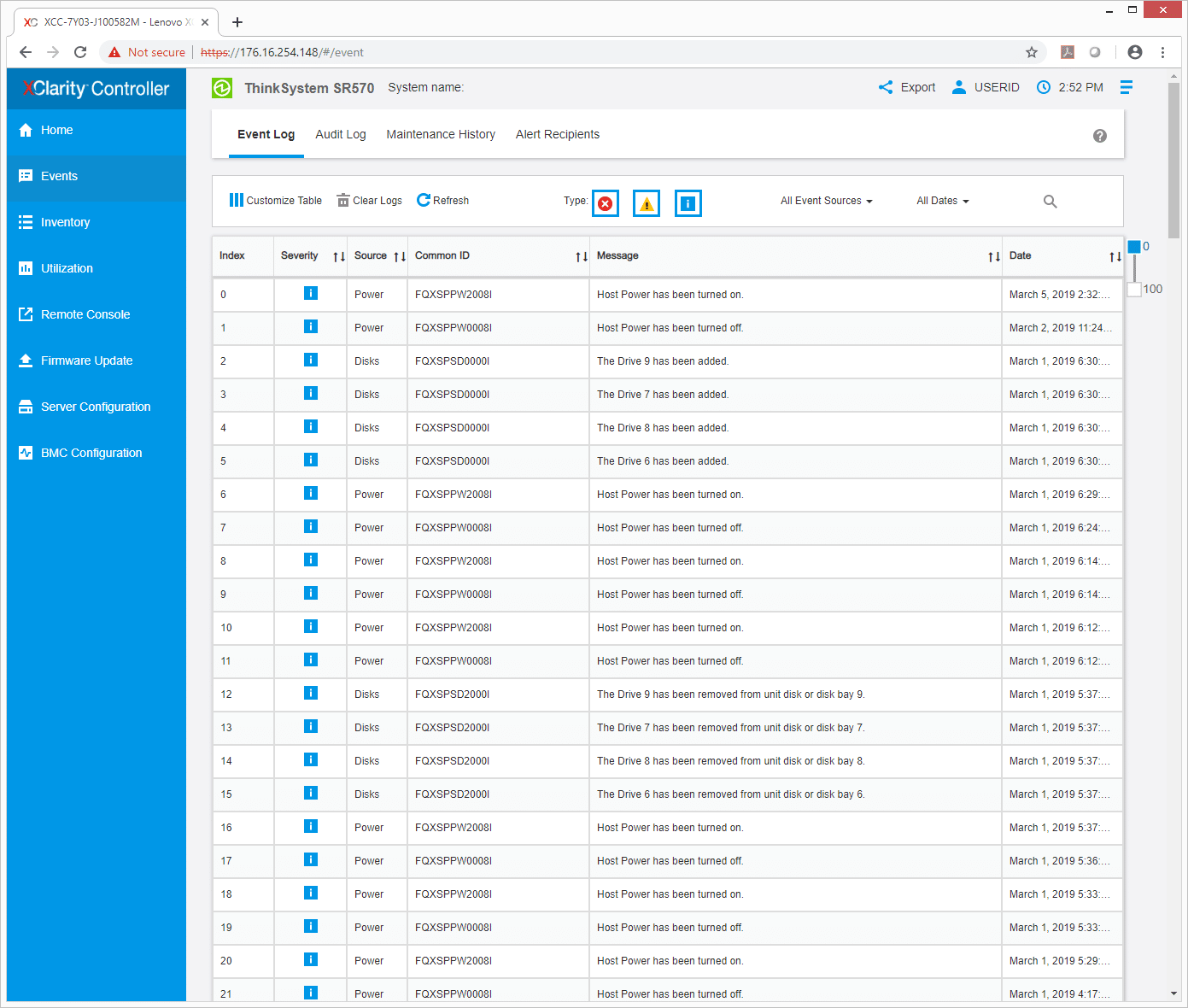

The Events tab is exactly what it sounds like. It lists events with an ID, a message describing what happened, and the time and date it occurred. Users can use this screen to go through audit logs, maintenance history, and alert recipients.

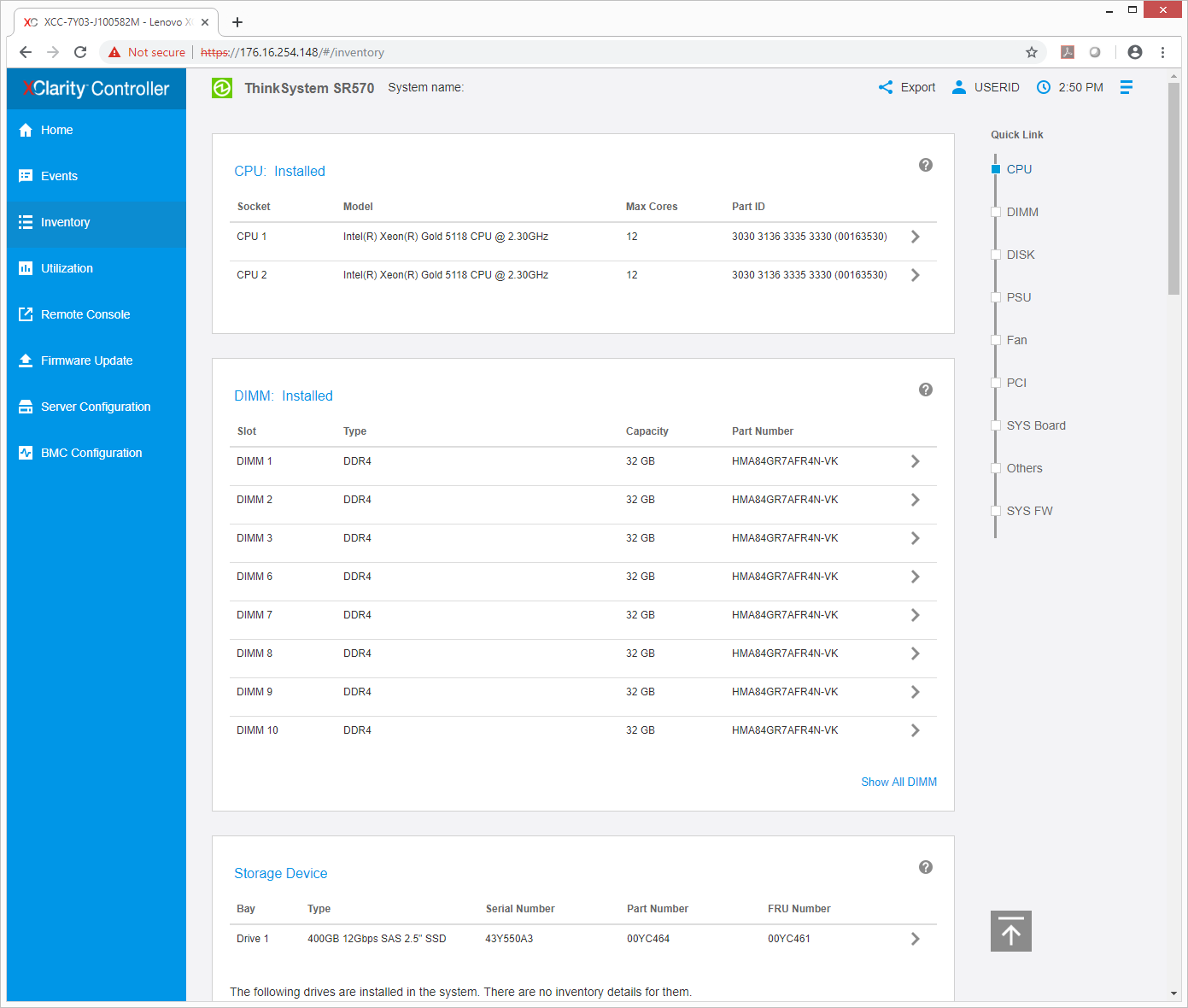

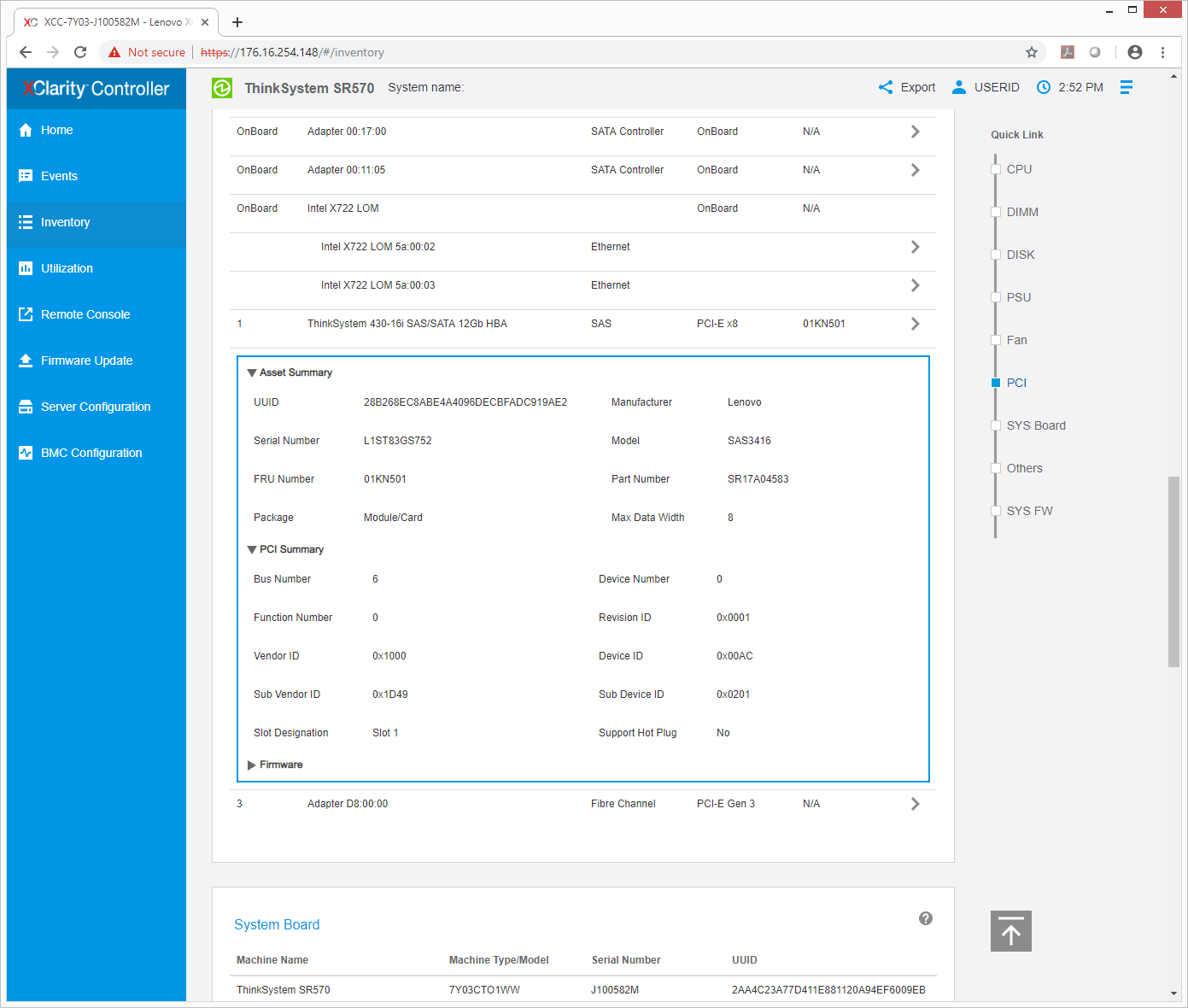

The Inventory tab lists the various hardware components of the server and gives a basic description of each, e.g., how many cores per CPU or the capacity of RAM.

Users can drill down deeper into individual parts and gather more specific information. This is good if there is an issue or a replacement needs to be ordered.

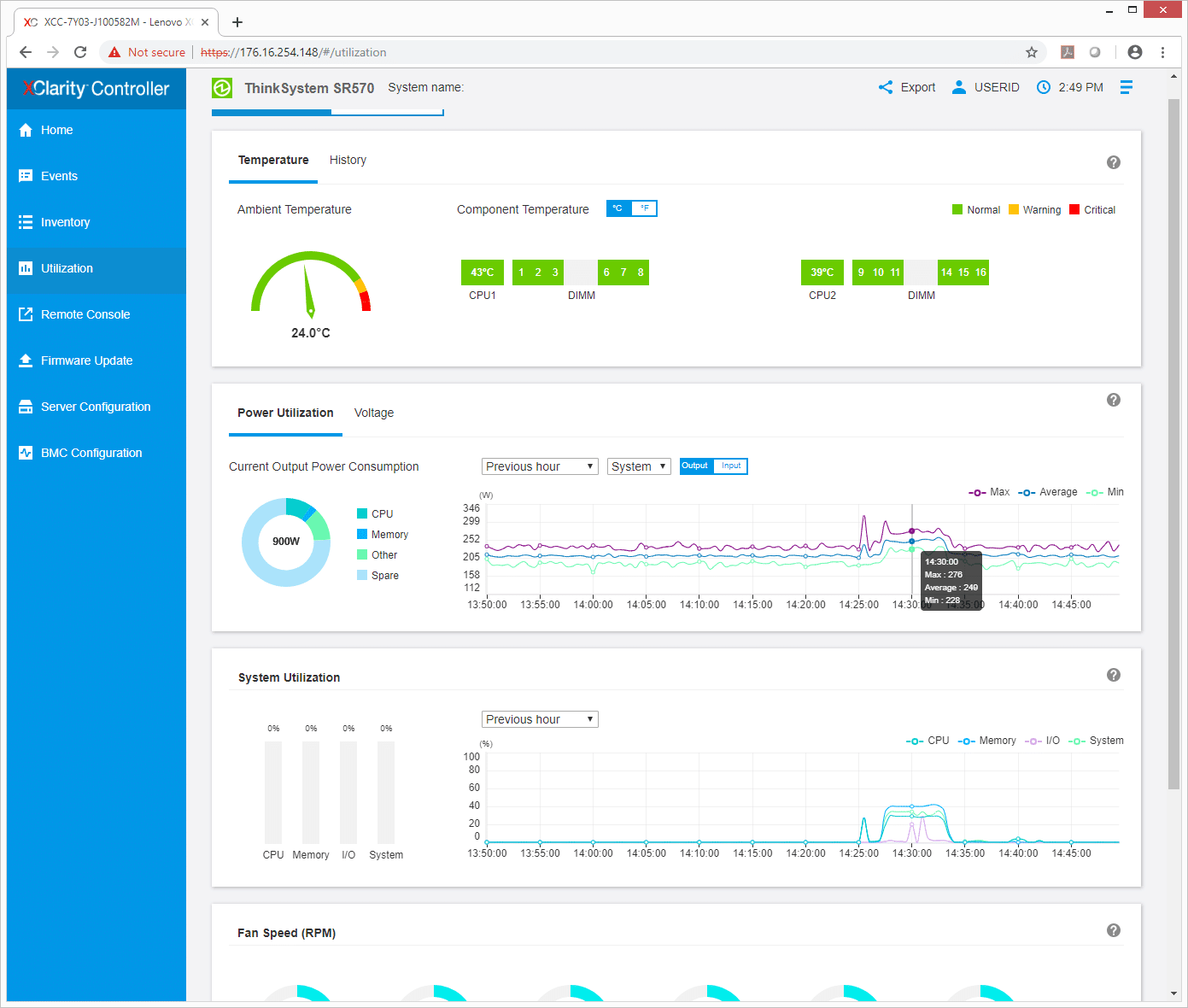

The Utilization tab shows which resources the server is using and how much of each, in either a graphical or a table view.

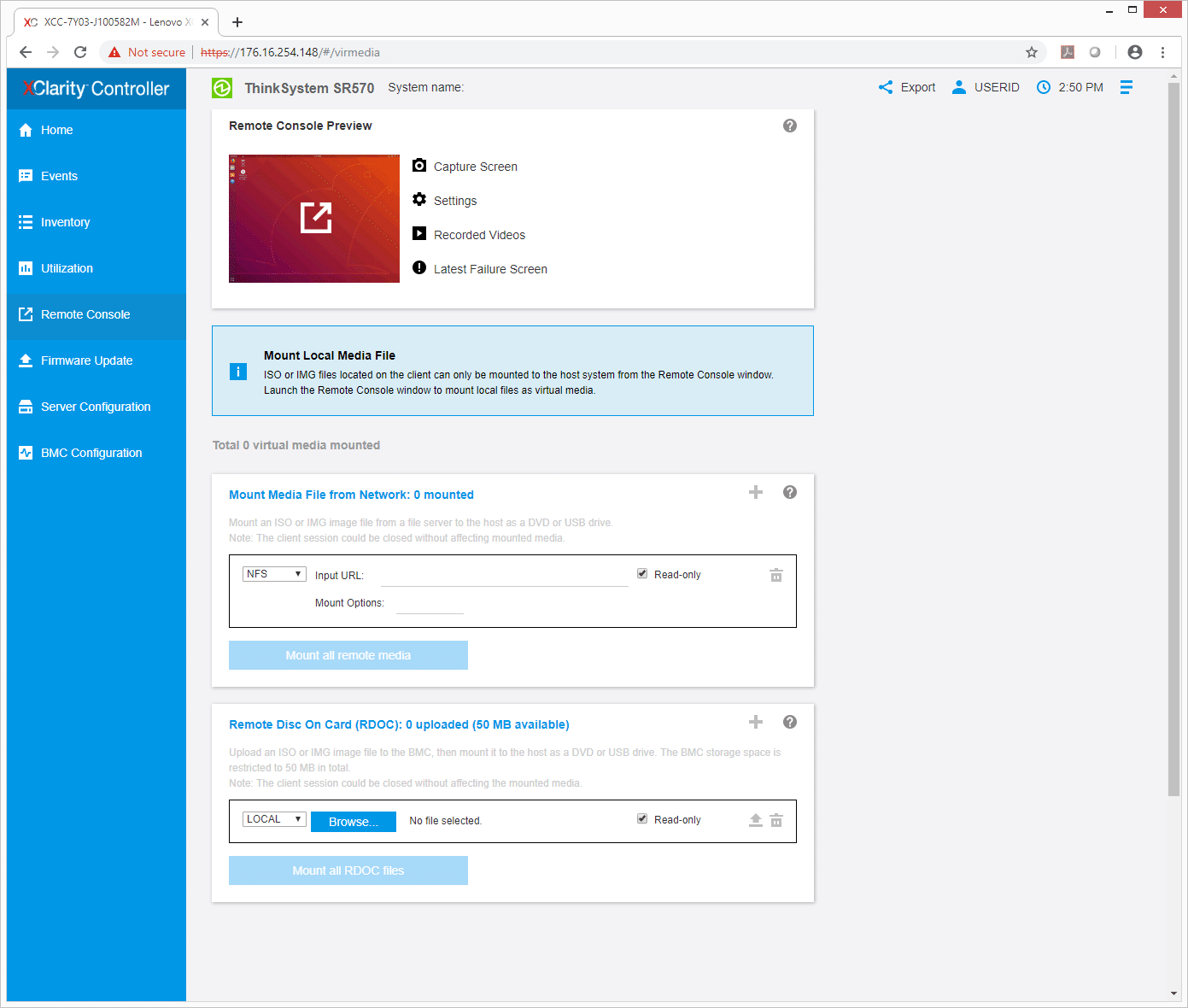

The Remote Console tab shows a preview of what a remote console would look like and allows users to configure their remote consoles.

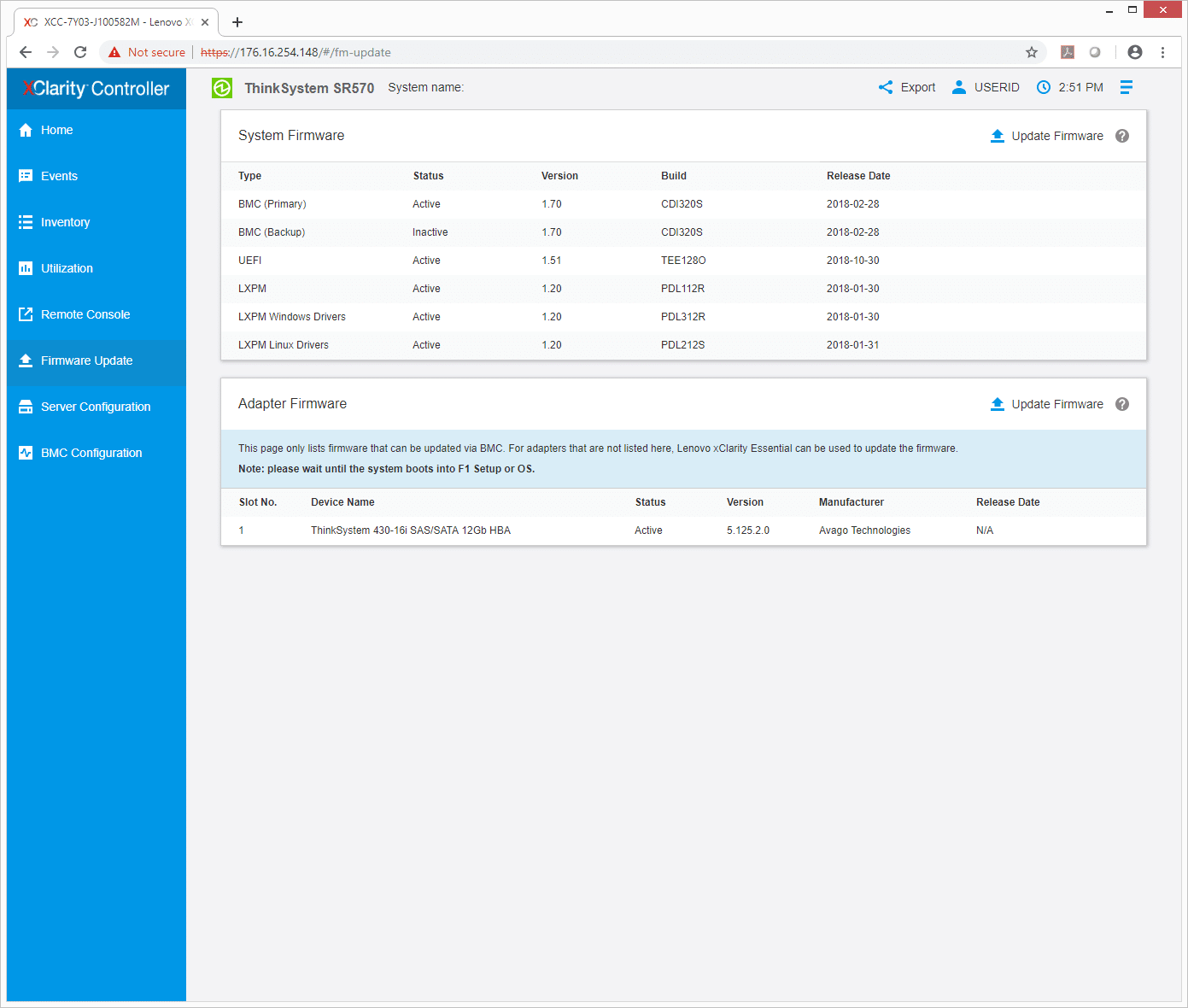

With the Firmware Update tab, admins can see which system and adapter firmware updates are available and apply them manually.

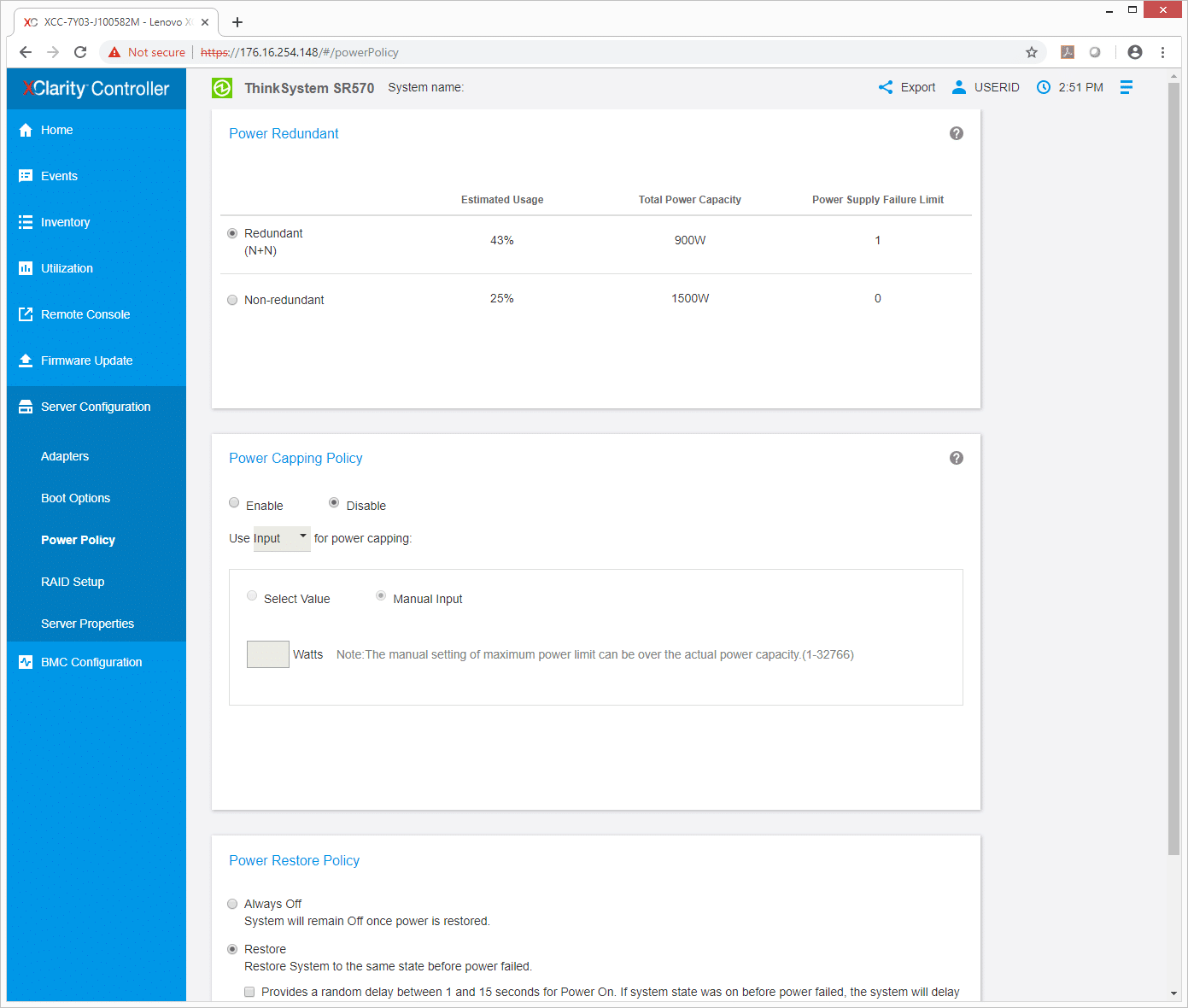

The next main tab is Server Configuration, with several sub-tabs including Adapters, Boot Options, Power Policy, RAID Setup, and Server Properties. The Power Policy sub-tab allows admins to choose either redundant or non-redundant power, as well as set the power restore policy: stay off, turn on, or revert to the previous state after power is restored.

Performance

SQL Server Performance

StorageReview’s Microsoft SQL Server OLTP testing protocol employs the current draft of the Transaction Processing Performance Council’s Benchmark C (TPC-C), an online transaction processing benchmark that simulates the activities found in complex application environments. The TPC-C benchmark comes closer than synthetic performance benchmarks to gauging the performance strengths and bottlenecks of storage infrastructure in database environments.

Each SQL Server VM is configured with two vDisks: 100GB volume for boot and a 500GB volume for the database and log files. From a system resource perspective, we configured each VM with 16 vCPUs, 64GB of DRAM and leveraged the LSI Logic SAS SCSI controller. While our Sysbench workloads tested previously saturated the platform in both storage I/O and capacity, the SQL test looks for latency performance.

This test uses SQL Server 2014 running on Windows Server 2012 R2 guest VMs, and is stressed by Dell's Benchmark Factory for Databases. While our traditional usage of this benchmark has been to test large 3,000-scale databases on local or shared storage, in this iteration we focus on spreading out four 1,500-scale databases evenly across our servers.
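As a rough sanity check on how much CPU this layout asks for, the sketch below totals the vCPUs and DRAM of the VMs and compares them with the host described earlier. The 12-core, 24-thread figure for the Xeon Gold 5118 comes from Intel's published specifications rather than from this review, and the count of four VMs is inferred from the four databases, so treat both as assumptions.

```python
# Host as built for this review: two Xeon Gold 5118 CPUs and 384GB of RAM.
# Assumption (not stated in the review): each Gold 5118 has 12 cores / 24 threads.
SOCKETS = 2
CORES_PER_SOCKET = 12
THREADS_PER_CORE = 2
HOST_RAM_GB = 384

# Per-VM sizing from the SQL Server test. Four VMs is an inference from the
# four 1,500-scale databases.
VM_COUNT = 4
VCPUS_PER_VM = 16
RAM_PER_VM_GB = 64


def oversubscription(total_vcpus: int, hardware_threads: int) -> float:
    """Return the ratio of allocated vCPUs to hardware threads."""
    return total_vcpus / hardware_threads


total_vcpus = VM_COUNT * VCPUS_PER_VM
hardware_threads = SOCKETS * CORES_PER_SOCKET * THREADS_PER_CORE
total_vm_ram_gb = VM_COUNT * RAM_PER_VM_GB
ratio = oversubscription(total_vcpus, hardware_threads)

print(f"vCPUs allocated:   {total_vcpus}")
print(f"Hardware threads:  {hardware_threads}")
print(f"vCPU:thread ratio: {ratio:.2f}")
print(f"VM RAM allocated:  {total_vm_ram_gb}GB of {HOST_RAM_GB}GB")
```

Under these assumptions the VMs request more vCPUs than the host has hardware threads, while the memory allocation fits with room to spare.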

SQL Server Testing Configuration (per VM)

- Windows Server 2012 R2

- Storage Footprint: 600GB allocated, 500GB used

- SQL Server 2014

- Database Size: 1,500 scale

- Virtual Client Load: 15,000

- RAM Buffer: 48GB

- Test Length: 3 hours

  - 2.5 hours preconditioning

  - 30 minutes sample period

For our transactional SQL Server benchmark, the SR570 had an aggregate score of 12,631.39 TPS, with individual VMs ranging from 3,156.91 TPS to 3,160.53 TPS.
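The aggregate figure is the sum of the per-VM results, so it has to fall between the VM count times the slowest VM and the VM count times the fastest. The short sketch below checks the reported numbers against those bounds. It assumes four VMs (one per database); the individual per-VM scores between the two extremes are not given in the review, so only the range is used.

```python
VM_COUNT = 4                      # assumed: one VM per 1,500-scale database
VM_TPS_MIN = 3156.91              # slowest VM, from the review
VM_TPS_MAX = 3160.53              # fastest VM, from the review
REPORTED_AGGREGATE_TPS = 12631.39


def aggregate_bounds(vm_count: int, low: float, high: float) -> tuple[float, float]:
    """Return the lowest and highest possible sum of vm_count per-VM scores."""
    return vm_count * low, vm_count * high


lower, upper = aggregate_bounds(VM_COUNT, VM_TPS_MIN, VM_TPS_MAX)
is_consistent = lower <= REPORTED_AGGREGATE_TPS <= upper

print(f"Aggregate must lie between {lower:.2f} and {upper:.2f} TPS")
print(f"Reported aggregate within bounds: {is_consistent}")
```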

For SQL Server average latency, the SR570 gave us an average of 6.5ms across the VMs, with individual VMs ranging from 2ms to 8ms.

Sysbench MySQL Performance

Our first local-storage application benchmark consists of a Percona MySQL OLTP database measured via SysBench. This test measures average TPS (Transactions Per Second), average latency, and average 99th percentile latency as well.

Each Sysbench VM is configured with three vDisks: one for boot (~92GB), one with the pre-built database (~447GB), and the third for the database under test (270GB). From a system resource perspective, we configured each VM with 16 vCPUs, 60GB of DRAM and leveraged the LSI Logic SAS SCSI controller.

Sysbench Testing Configuration (per VM)

- CentOS 6.3 64-bit

- Percona XtraDB 5.5.30-rel30.1

- Database Tables: 100

- Database Size: 10,000,000

- Database Threads: 32

- RAM Buffer: 24GB

- Test Length: 3 hours

  - 2 hours preconditioning, 32 threads

  - 1 hour, 32 threads

With the Sysbench OLTP, we tested four VMs, and the SR570 had an aggregate score of 4,247.9 TPS.

With Sysbench latency the server had an average of 22.6ms.

For worst-case scenario (99th percentile) latency, the SR570 gave us 46.53ms.
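To show why the 99th percentile figure sits well above the average, here is a minimal sketch that computes both from a list of latency samples using the nearest-rank method. The sample values are invented purely for illustration and are not measurements from this review; how Sysbench itself derives its percentile is not covered here.

```python
import math
from statistics import mean


def percentile_nearest_rank(samples: list[float], pct: int) -> float:
    """Return the pct-th percentile of samples using the nearest-rank method."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[max(rank, 1) - 1]


# Hypothetical latencies in ms (illustration only): 98 fast transactions
# and 2 slow ones.
latencies_ms = [20.0] * 98 + [45.0, 50.0]

avg_ms = mean(latencies_ms)
p99_ms = percentile_nearest_rank(latencies_ms, 99)

print(f"Average latency:         {avg_ms:.2f}ms")
print(f"99th percentile latency: {p99_ms:.2f}ms")
```

A couple of slow transactions barely move the average but set the 99th percentile, which is why the two numbers are reported side by side.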

VDBench Workload Analysis

When it comes to benchmarking storage arrays, application testing is best, and synthetic testing comes in second place. While not a perfect representation of actual workloads, synthetic tests do help to baseline storage devices with a repeatability factor that makes it easy to do apples-to-apples comparisons between competing solutions. These workloads offer a range of testing profiles, from "four corners" tests and common database transfer size tests to trace captures from different VDI environments. All of these tests leverage the common vdBench workload generator, with a scripting engine to automate and capture results over a large compute testing cluster. This allows us to repeat the same workloads across a wide range of storage devices, including flash arrays and individual storage devices.

Profiles:

- 4K Random Read: 100% Read, 128 threads, 0-120% iorate

- 4K Random Write: 100% Write, 64 threads, 0-120% iorate

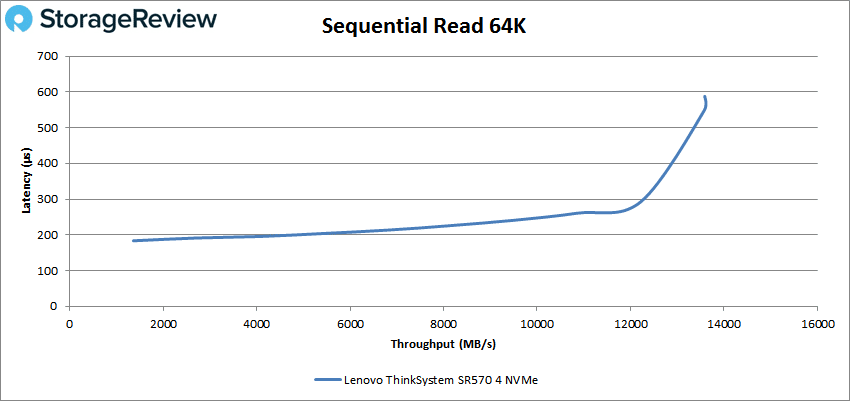

- 64K Sequential Read: 100% Read, 16 threads, 0-120% iorate

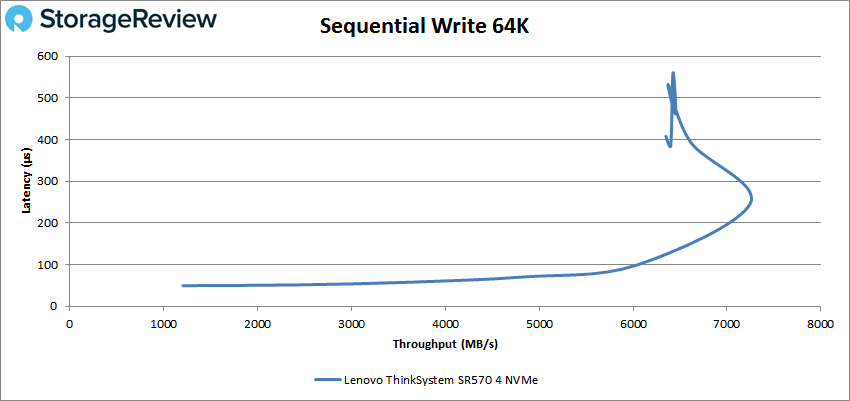

- 64K Sequential Write: 100% Write, 8 threads, 0-120% iorate

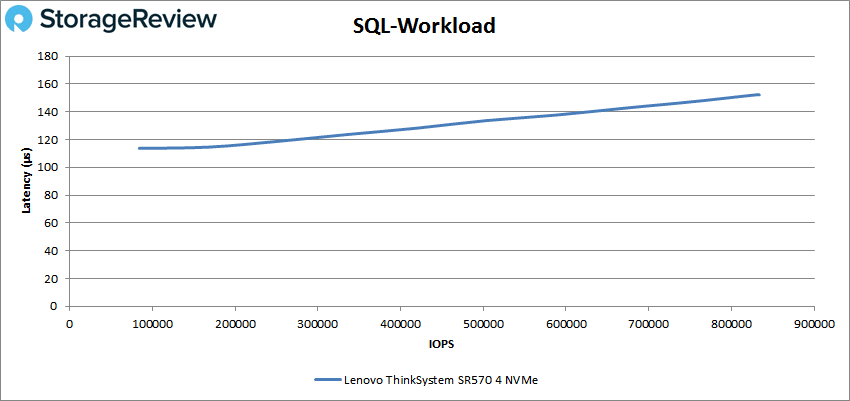

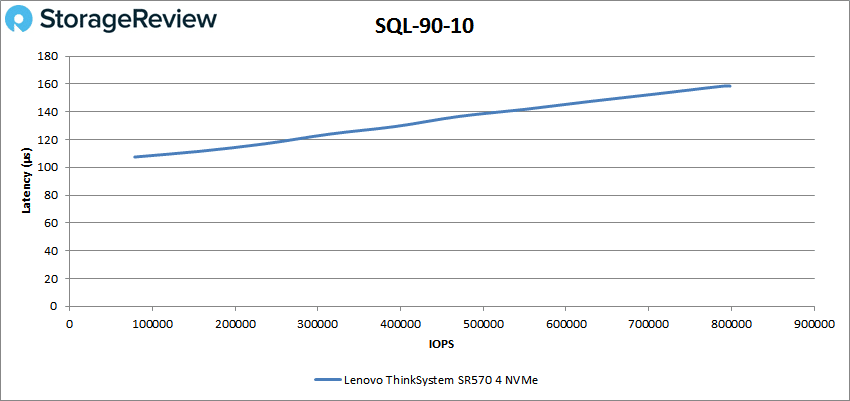

- Synthetic Database: SQL and Oracle

- VDI Full Clone and Linked Clone Traces

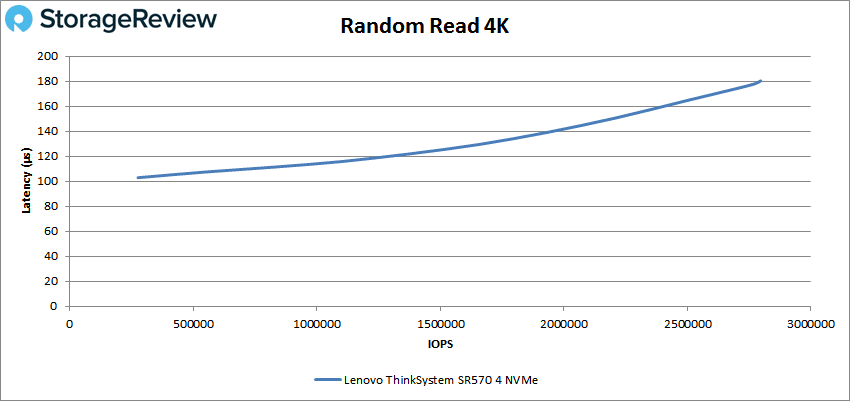

With random 4K read, the Lenovo ThinkSystem SR570 delivered sub-millisecond latency throughout (something it continued to do through most of our tests), starting at 276,949 IOPS at 102.9μs and peaking at 2,797,268 IOPS with only 180.3μs latency.
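Throughput and latency at a given load point are tied together by Little's Law: the average number of I/Os in flight equals IOPS multiplied by average latency. The sketch below applies it to the two 4K random read data points above. How that concurrency maps onto vdBench threads and drives is not stated in the review, so the result is only an estimate of outstanding I/Os.

```python
def outstanding_ios(iops: float, latency_us: float) -> float:
    """Little's Law: average I/Os in flight = arrival rate x time in system."""
    return iops * latency_us / 1_000_000  # convert microseconds to seconds


# 4K random read data points from the review.
start_in_flight = outstanding_ios(276_949, 102.9)
peak_in_flight = outstanding_ios(2_797_268, 180.3)

print(f"Start of run: about {start_in_flight:.1f} I/Os in flight")
print(f"Peak:         about {peak_in_flight:.1f} I/Os in flight")
```

The same function works for any of the IOPS and latency pairs quoted in this section.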

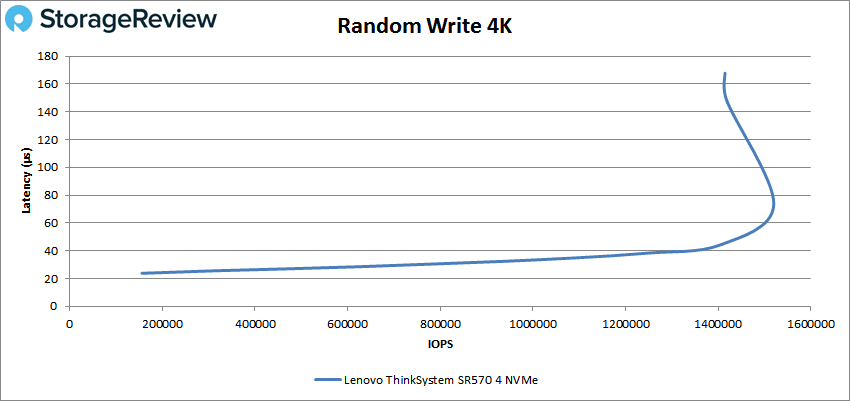

For random 4K write, the server started at 312,036 IOPS with only 23.8μs latency. It was able to maintain very low latency until about 1.5 million IOPS, where it peaked at roughly 1.52 million IOPS and about 73.3μs latency before dropping off some.

Switching over to sequential work, in 64K sequential read the SR570 started at 21,804 IOPS or 1.36GB/s with a latency of 183.4μs before going on to peak at about 218K IOPS or 13.6GB/s with a latency of 548.4μs.
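For fixed-size transfers, IOPS and bandwidth are two views of the same measurement: bandwidth is IOPS multiplied by the block size. The sketch below converts the 64K sequential read figures, assuming "64K" means 64 KiB. Dividing the MiB/s result by 1,000 lines up with the 1.36GB/s and 13.6GB/s quoted above; the review does not state its unit convention, so that mapping is an observation rather than a documented rule.

```python
KIB_PER_MIB = 1024


def throughput_mib_s(iops: float, block_kib: int) -> float:
    """Convert IOPS at a fixed block size (in KiB) to MiB/s."""
    return iops * block_kib / KIB_PER_MIB


# 64K sequential read data points from the review (peak is "about 218K").
start_mib_s = throughput_mib_s(21_804, 64)
peak_mib_s = throughput_mib_s(218_000, 64)

print(f"Start: {start_mib_s:.2f} MiB/s")
print(f"Peak:  {peak_mib_s:.2f} MiB/s")
```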

For 64K sequential write the server started off at 38,607 IOPS or 1.21GB/s at only 49.6μs latency. The server maintained very low latency until about 100K IOPS or 6GB/s and went on to peak at roughly 116K IOPS or 7.3GB/s at 247.9μs latency before dropping off.

Our next set of tests are our SQL workloads: SQL, SQL 90-10, and SQL 80-20. For SQL, the SR570 peaked at 832,170 IOPS with a latency of only 152.4μs, less than 40μs higher than where it started.

For SQL 90-10 the server started at 78,698 IOPS with a latency of 107.5μs and peaked at 796,731 IOPS at a latency of 158.7μs.

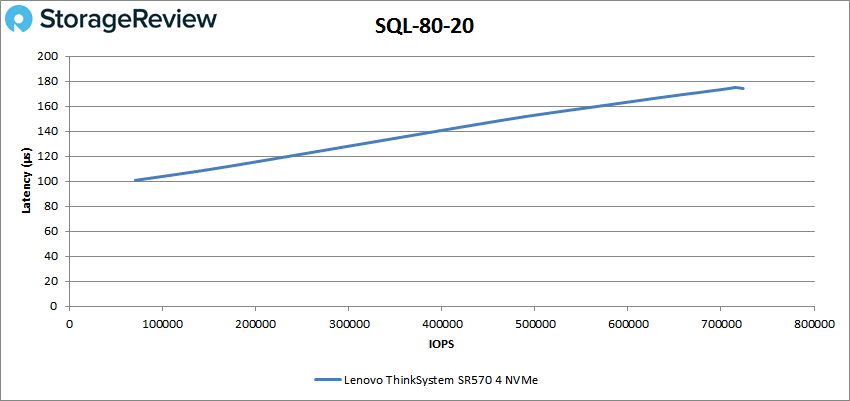

SQL 80-20 had the SR570 start at 70,569 IOPS at 100.8μs latency and a peak of 723,716 IOPS with 174.2μs for latency.

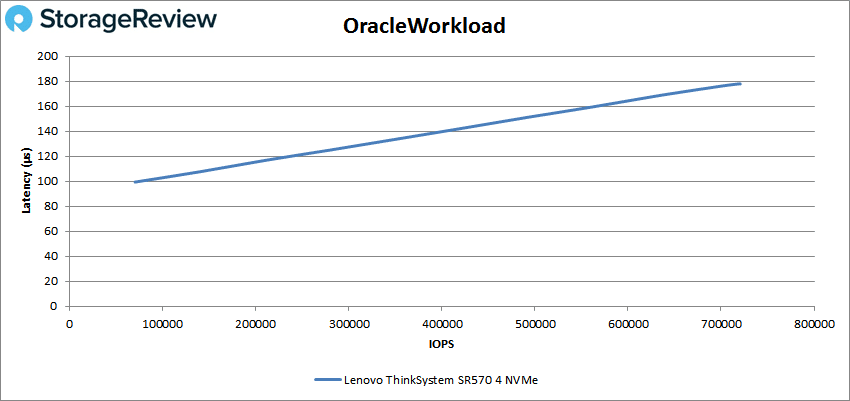

Next up are our Oracle workloads: Oracle, Oracle 90-10, and Oracle 80-20. With Oracle the SR570 started under 100μs (99.4μs) latency and peaked at 720,323 IOPS with a latency of only 178μs.

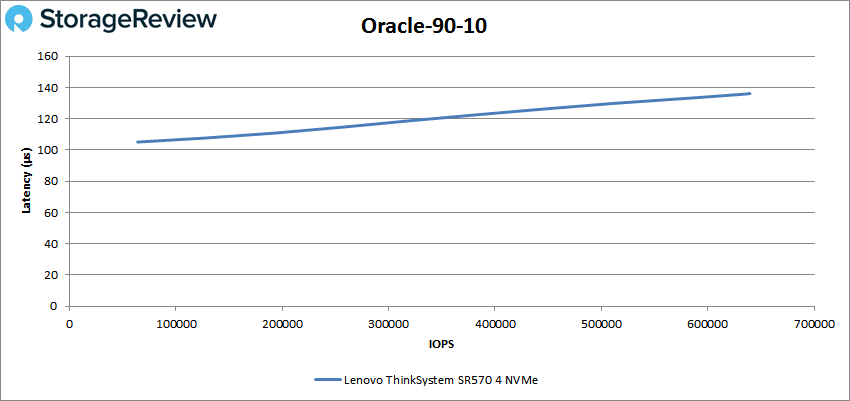

Oracle 90-10 saw the server start at 63,884 IOPS with 105.1μs latency and a peak of 638,417 IOPS and a latency of 136μs.

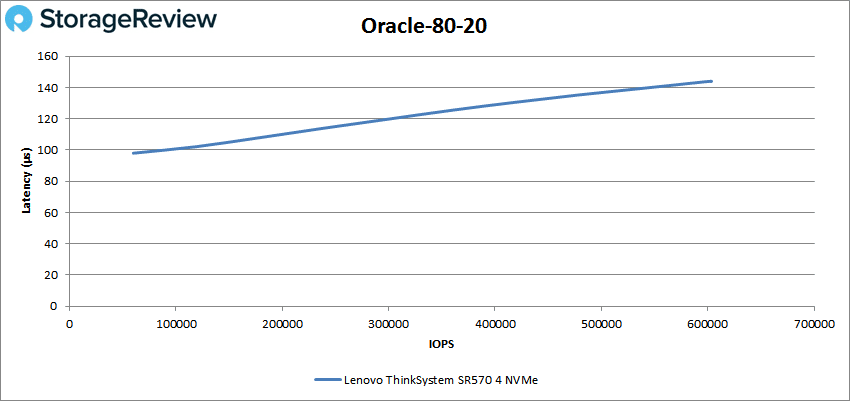

With Oracle 80-20, the SR570 started at 59,830 IOPS and a latency of 98μs, going on to peak at 603,487 IOPS and a latency of 144μs.

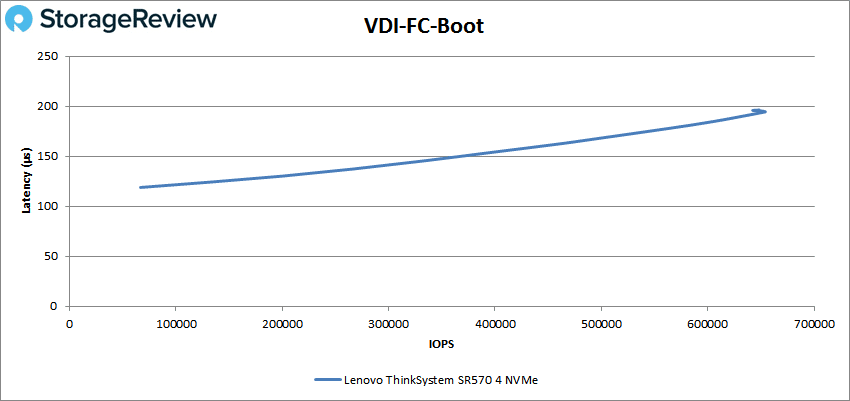

Next, we switched over to our VDI clone tests, Full and Linked. For VDI Full Clone (FC) Boot, the Lenovo ThinkSystem SR570 started at 66,617 IOPS and a latency of 118.9μs and peaked at 654,079 IOPS at a latency of 194.4μs.

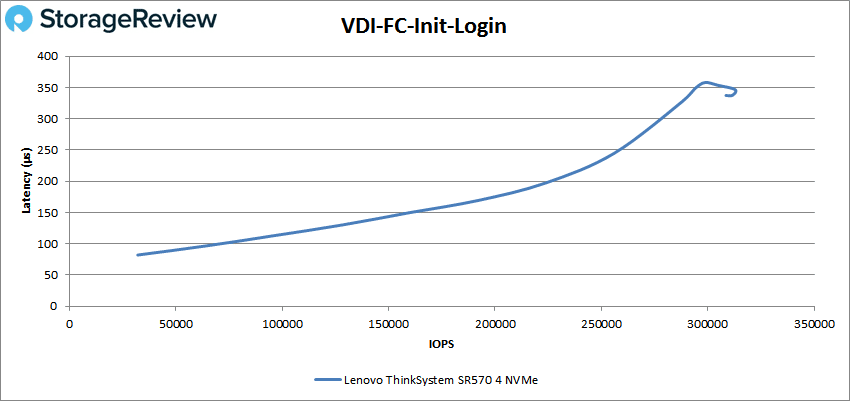

For VDI FC Initial Login the server started at 32,008 IOPS and 82.2μs latency before going on to peak at 312,794 IOPS at 346.7μs before a slight drop off.

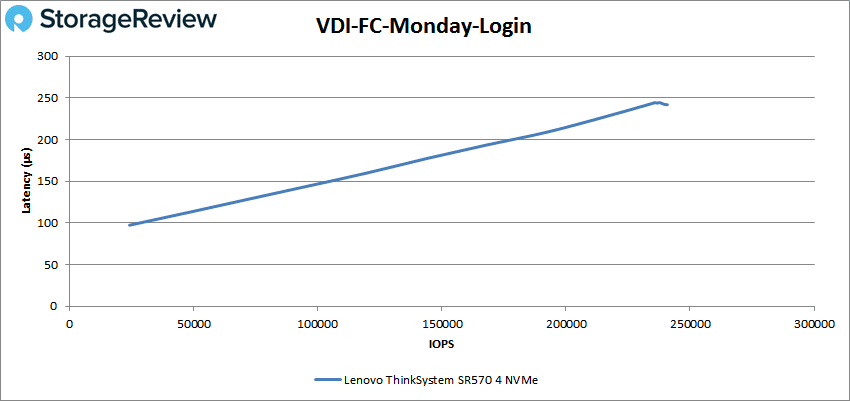

VDI FC Monday Login saw the server start at 24,114 IOPS and 97.4μs latency with a peak of 240,852 IOPS and a latency of 241.9μs.

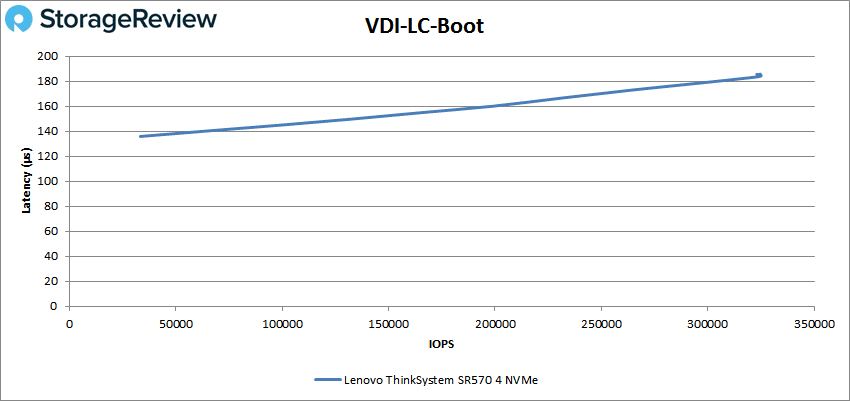

For VDI LC Boot the SR570 started at 33,129 IOPS with 135.9μs latency and peaked at 325,125 IOPS at 184.4μs.

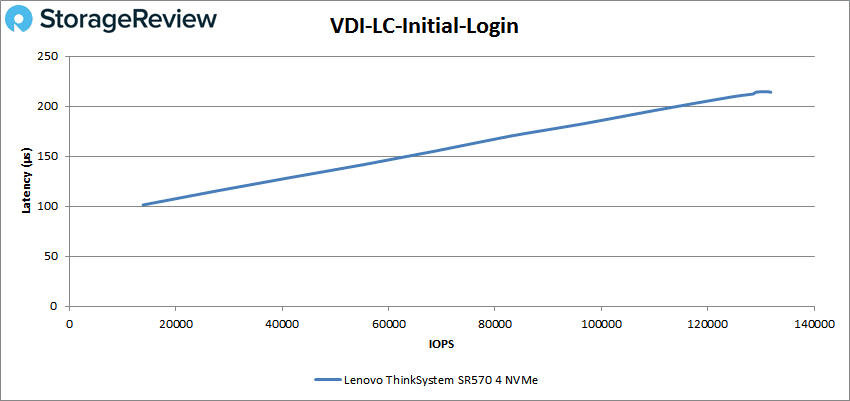

VDI LC Initial Login saw the server start at 13,797 IOPS at 101.4μs latency and peak at 131,463 IOPS with a latency of 214.6μs.

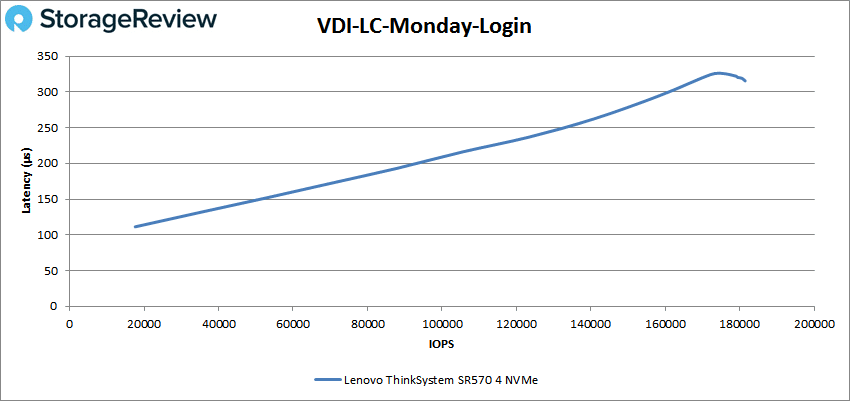

Finally, VDI LC Monday Login had the SR570 start at 17,606 IOPS and 111.3μs latency before going on to peak at 181,479 IOPS at 319.4μs.

Conclusion

The Lenovo ThinkSystem SR570 is a compact 1U, 2-socket server aimed at virtualization and cloud computing, infrastructure security, web serving, and application development. These use cases run the gamut from small offices to larger enterprises. This small server can be outfitted with up to 1TB of RAM, two Platinum Intel Xeon CPUs, and up to 76.8TB of capacity, depending on the drive configuration chosen. In addition to standard server use cases, the SR570 can find itself in a variety of software-defined solutions via Lenovo's ThinkAgile suite of offerings.

In our application workload analysis, the ThinkSystem SR570 was limited more by the processors in our configuration than by the potential of its four NVMe SSDs. We hit an aggregate score of 12,631.39 TPS with an average latency of 6.5ms in SQL Server. In Sysbench the server had an aggregate score of 4,247.9 TPS with an average latency of 22.6ms and a worst-case scenario latency of 46.53ms. With a stouter CPU configuration, you can easily double the compute resources available and net better overall system performance.

For VDBench, the SR570 had sub-millisecond latency performance in every test. Peak performance highlights include 2.8 million IOPS in random 4K read, 1.52 million IOPS in random 4K write, 13.6GB/s in 64K sequential read, and 7.3GB/s in 64K sequential write. The SR570 showed some impressive numbers in our SQL and Oracle tests, with a SQL score of 832K IOPS, a 90-10 score of 797K IOPS, and an 80-20 score of 723K IOPS. For Oracle it hit 720K IOPS, 638K IOPS for 90-10, and 603K IOPS for 80-20. Latency is interesting to note here, as the highest latency at peak was 548.4μs and the lowest was only 73.3μs.

The Lenovo ThinkSystem SR570 is a versatile server that can fit many needs with a wide range of configuration options. The server is capable of offering high-end compute performance with NVMe SSDs, or it can be configured for less demanding needs at a much lower cost. In either case, it's a great offering for instances where compute needs and server density outweigh the need for massive storage and add-in card flexibility.
