The Mangstor NX-Series is a family of all-flash arrays (AFAs) designed to bring the performance and low-latency benefits of NVMe to a shared storage environment. Shared storage, of course, isn't new, but being able to leverage the benefits of NVMe in a shared environment is. Conceptually, NVMe over Fabrics takes best-of-breed SSDs, which have so far been confined to in-server use, and shares them over a high-speed network (Ethernet or InfiniBand). Specifically, the Mangstor NX6320 uses NVMe over Fabrics with RDMA network access to deliver performance benefits to latency-sensitive applications. This scalable storage suits several use cases, including critical applications, databases, and HPC.

The main benefit of the Mangstor NX6320 is its ability to share NVMe storage devices across a network, presenting them to many servers as if they were direct-attached block storage. The servers gain the speed and low latency of local storage without the cost of NVMe SSDs in each server, and consolidating the flash in one array gives administrators centralized management and serviceability.

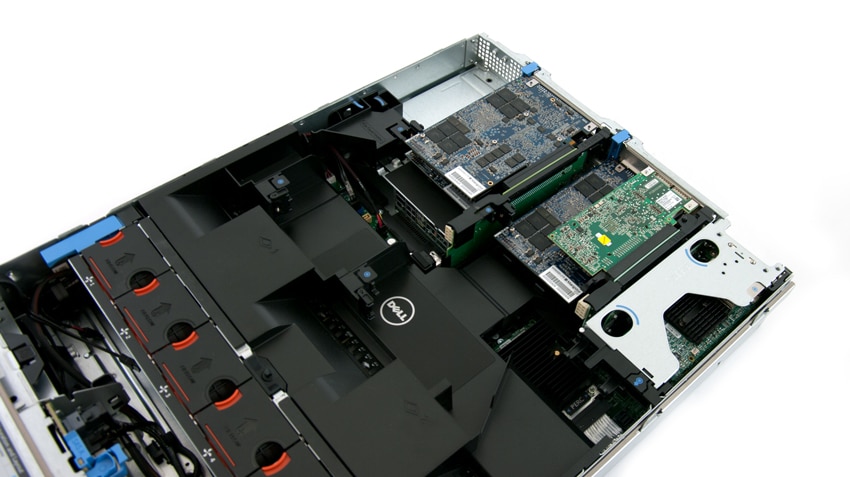

The NX6320 is based on Mangstor's software-configurable MX6300 NVMe SSD combined with its TITAN storage stack. The MX6300, which we've previously reviewed, differs notably from other SSDs in that it lets the user configure its controller to optimize NAND usage, which can lead to lower power consumption. TITAN storage software transforms industry-standard servers into all-flash storage arrays using MX6300 NVMe SSDs. TITAN also combines NVMe, RDMA, and multi-core technologies to deliver what Mangstor describes as unparalleled block storage access bandwidth and latency. To do this, TITAN optimizes the data path from the network to the MX6300, reducing CPU overhead.

Mangstor NX6320 Specifications

- Form Factor: 2U

- Capacity: 8TB | 12TB | 16TB | 32TB

- Bandwidth Rd/Wr (GB/s): 6.0 / 4.5 | 9.0 / 6.75 | 12.0 / 9.0 | 12.0 / 9.0

- Throughput Rd/Wr (4K) (IOPS): 1.5 M / 1.1 M | 2.25 M / 1.67 M | 3.0 M / 2.25 M | 3.0 M / 2.25 M

- Read/Write Latency: 110 µs / 30 µs

- I/O Connectivity
  - 2×40/56Gb/s QSFP Ethernet, 2×40Gb/s QSFP InfiniBand | 4×40/56Gb/s QSFP Ethernet, 4×40Gb/s QSFP InfiniBand

- Fabric Protocol Support
  - RDMA over Converged Ethernet (RoCE)
  - InfiniBand
  - iWARP

- Client OS Driver Support
  - RHEL
  - SLES
  - CentOS
  - Ubuntu
  - Windows
  - VMware ESXi 5.5/6.0 (VMDirectPath)

- Environmental
  - Inlet temperature: 10 to 35°C (50 to 95°F)
  - Altitude: 0 to 7,500 feet
  - Humidity: 5 to 95% (non-condensing)

- Warranty: Hardware 5 years; Base software 90 days

- Power: 350 W | 400 W | 450 W | 450 W
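As a quick sanity check on the 4K figures above, multiplying IOPS by a 4K transfer size should land close to the quoted bandwidth. The sketch below is our own back-of-envelope arithmetic, not a Mangstor tool, and it assumes every I/O is exactly 4,096 bytes and that "GB/s" means 10^9 bytes per second.

```python
# Back-of-envelope check: 4K IOPS x 4,096 bytes vs. the quoted bandwidth.
# Assumptions (ours, not Mangstor's): each I/O is exactly 4 KiB and
# GB/s means 1e9 bytes per second.
BLOCK_BYTES = 4096

# (capacity, read IOPS, write IOPS, quoted read GB/s, quoted write GB/s)
SPECS = [
    ("8TB", 1.5e6, 1.1e6, 6.0, 4.5),
    ("12TB", 2.25e6, 1.67e6, 9.0, 6.75),
    ("16TB", 3.0e6, 2.25e6, 12.0, 9.0),
    ("32TB", 3.0e6, 2.25e6, 12.0, 9.0),
]


def iops_to_gbps(iops: float, block_bytes: int = BLOCK_BYTES) -> float:
    """Convert an IOPS figure at a fixed block size into decimal GB/s."""
    return iops * block_bytes / 1e9


for cap, rd_iops, wr_iops, rd_quoted, wr_quoted in SPECS:
    rd = iops_to_gbps(rd_iops)
    wr = iops_to_gbps(wr_iops)
    print(f"{cap}: read {rd:.2f} GB/s (quoted {rd_quoted}), "
          f"write {wr:.2f} GB/s (quoted {wr_quoted})")
```

Every computed figure lands within about 3% of the corresponding quoted bandwidth, so the bandwidth and 4K IOPS rows appear consistent with each other at a 4K block size.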

Build and Design

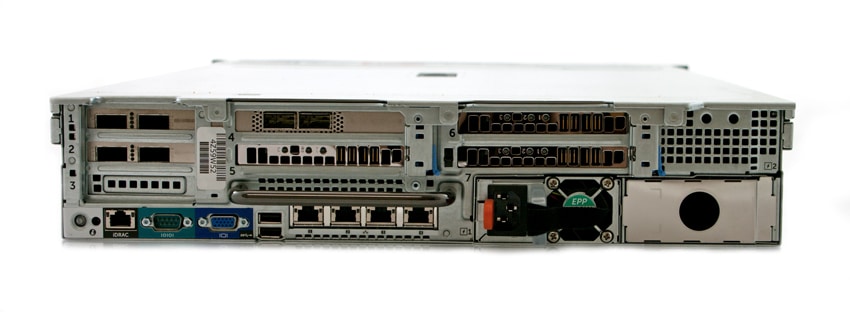

Beneath its bright blue bezel, the NX6320 is built on the Dell PowerEdge 13G R730. Using a Tier 1 server has its benefits, of course, including strong hardware compatibility and driver qualification, as well as management options such as iDRAC for mass deployment.

Behind the customized bezel is what one would expect from a PowerEdge R730. The front of the chassis has a video connector, information tag, vFlash media card slot, USB connector, and a USB management port/iDRAC Direct. The power button (with power-on indicator) and an NMI button are also present; the latter is used to troubleshoot software and device driver errors when running certain operating systems. The drive bays take up most of the front panel, and Mangstor will be able to use them for added capacity in future product releases.

From left to right, the back panel includes a system identification button, system identification connector, and an iDRAC8 Enterprise port. The PCIe slots are also visible; in our configuration they hold a variety of Mellanox Ethernet NIC options (40G and 100G), as well as three MX6300-series NVMe SSDs. Serial, video (VGA), and two USB connectors are also present, while the four Ethernet connectors offer 10/100/1000 Mbps NIC connectivity.

Sysbench Performance

To measure the performance of the 12TB version of Mangstor's NX6320 NVMe over Fabrics all-flash array, we used our Dell PowerEdge 13G R730 compute cluster. Each server had four Mellanox ConnectX-3 Pro NICs configured in pass-through mode in ESXi 6.0 and connected to specific VMs in our Sysbench benchmarking environment. This configuration offered strong driver support, so we focused on it for performance testing.

In our testing layout, we tested one static configuration of 8 Sysbench VMs. While the NX6320 would easily support more in both capacity and performance terms, the Mellanox ConnectX-3 Pro OFED driver for ESXi 6.0 only supports linking one physical NIC to one VM in pass-through mode. With just 8 ConnectX-3 Pro NICs in the lab, our largest supported configuration was 8 VMs. Mellanox and Mangstor are working on ConnectX-4 OFED driver support for ESXi 6.0, in which one card can support multiple virtual NICs in pass-through mode, further increasing VM density. At the time of this review, however, those drivers were not yet finalized.
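The 8-VM ceiling comes from the NIC count rather than from the array. A minimal sketch of that arithmetic follows; the function name and parameters are hypothetical, chosen for illustration, and the one-VM-per-NIC default reflects the ConnectX-3 Pro driver limitation described above.

```python
# Hypothetical helper: how many pass-through VMs the test bed can host.
# With the ConnectX-3 Pro OFED ESXi 6.0 driver, one physical NIC maps to
# exactly one VM, so vms_per_nic defaults to 1.


def max_passthrough_vms(servers: int, nics_per_server: int,
                        vms_per_nic: int = 1) -> int:
    """Upper bound on VMs when each VM needs its own pass-through NIC."""
    return servers * nics_per_server * vms_per_nic


# Our lab: two R730 nodes, four ConnectX-3 Pro cards each, one VM per card.
print(max_passthrough_vms(servers=2, nics_per_server=4))  # 8
```

The vms_per_nic parameter is the lever a multi-virtual-NIC driver such as the pending ConnectX-4 support would change; we don't know how many virtual NICs per card it will expose, so no value is assumed here.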

Dell PowerEdge R730 2-node Cluster Specifications

- Dell PowerEdge R730 Servers (x2)

- CPUs: Eight Intel Xeon E5-2690 v3 2.6GHz (12C/24T)

- Memory: 32 x 16GB DDR4 RDIMM

- Mellanox ConnectX-3 Pro NICs (four per server)

- VMware ESXi 6.0

For this testing we configured 8 VMs identically and looked at individual scores as well as the aggregate score. Each Sysbench VM is configured with three vDisks: one for boot (~92GB), one with the pre-built database (~447GB), and a third for the database under test (400GB). The third vDisk is the shared NVMe block storage device.

Sysbench Testing Configuration (per VM)

- CentOS 6.3 64-bit

- Storage Footprint: 1TB, 800GB used

- Percona XtraDB 5.5.30-rel30.1

- Database Tables: 100

- Database Size: 10,000,000

- Database Threads: 32

- RAM Buffer: 24GB

- Test Length: 3 hours
  - 2 hours preconditioning at 32 threads
  - 1 hour test run at 32 threads

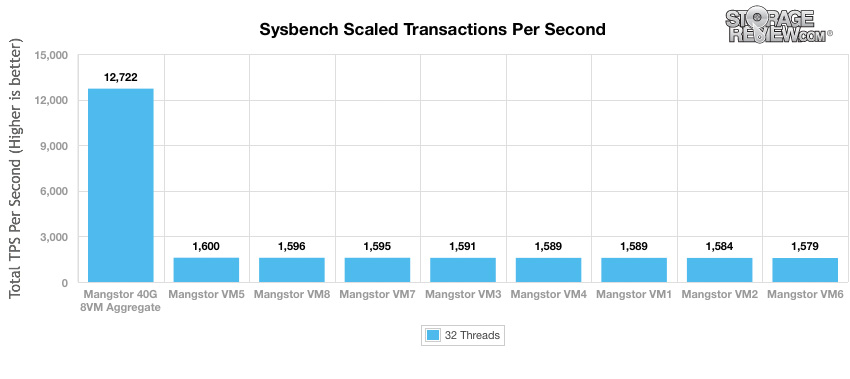

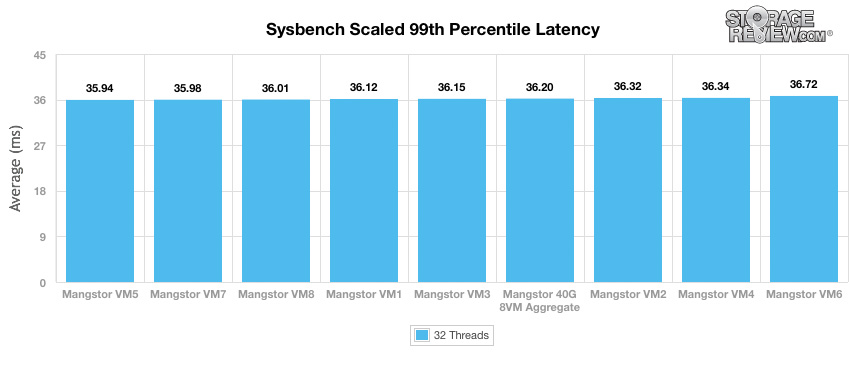

Our Sysbench test measures average TPS (transactions per second), average latency, and average 99th percentile latency at a peak load of 32 threads. Looking at scaled transactions per second, the individual VMs on the Mangstor NX6320 ran at around 1,600 TPS (ranging from 1,579 to 1,600 TPS). The NX6320 had an aggregate score of 12,722 TPS.
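The aggregate is simply the sum of the eight per-VM scores, so it should sit between 8 times the slowest VM and 8 times the fastest. The short check below uses only the figures reported above; the variable names are ours.

```python
# Sanity check on the reported aggregate: with 8 VMs each running between
# 1,579 and 1,600 TPS, the sum must fall between 8 x 1,579 and 8 x 1,600.
VM_COUNT = 8
MIN_VM_TPS, MAX_VM_TPS = 1579, 1600
AGGREGATE_TPS = 12722

lower = VM_COUNT * MIN_VM_TPS
upper = VM_COUNT * MAX_VM_TPS
mean_tps = AGGREGATE_TPS / VM_COUNT

print(f"bounds: {lower:,} to {upper:,} TPS")  # bounds: 12,632 to 12,800 TPS
print(f"mean per VM: {mean_tps:,.2f} TPS")    # mean per VM: 1,590.25 TPS
```

The 12,722 TPS aggregate falls inside those bounds and works out to roughly 1,590 TPS per VM.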

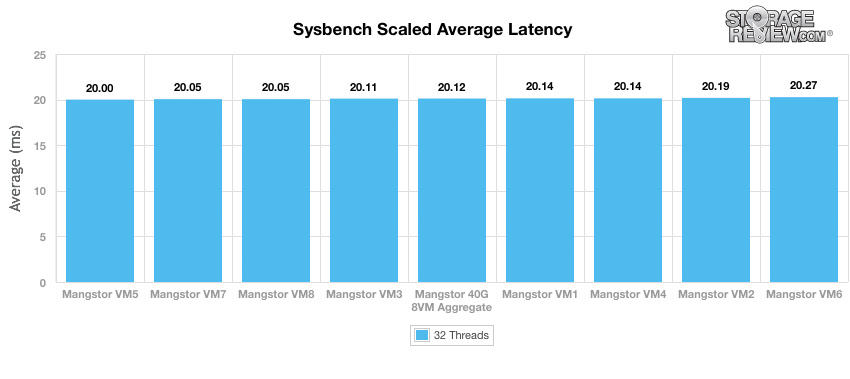

Looking at average latency, the NX6320 was very consistent at around 20ms throughout (ranging from 20.00ms to 20.27ms). Unsurprisingly, the aggregate score was also low, at 20.12ms.

In our worst-case MySQL latency scenario (99th percentile latency), the NX6320 once again delivered strong and consistent performance, this time falling between 35ms and 37ms (ranging from 35.94ms to 36.72ms). The aggregate score was 36.20ms.
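One way to quantify "consistent" is the spread between the fastest and slowest VM, both in milliseconds and relative to the fastest VM. The relative figure is our own addition for illustration; the min/max values are the per-VM extremes reported above.

```python
from typing import Tuple

# Spread (max - min) of per-VM latency, in absolute and relative terms.
# Relative spread is computed against the lower (fastest) value.


def spread(low_ms: float, high_ms: float) -> Tuple[float, float]:
    """Return (absolute spread in ms, spread as % of the lower value)."""
    delta = high_ms - low_ms
    return delta, 100 * delta / low_ms


for label, low, high in [("average", 20.00, 20.27),
                         ("99th percentile", 35.94, 36.72)]:
    delta, pct = spread(low, high)
    print(f"{label}: {delta:.2f} ms spread ({pct:.1f}% of fastest VM)")
```

In both cases the gap between the fastest and slowest VM is roughly 1 to 2% of the latency itself (0.27ms on average latency, 0.78ms at the 99th percentile).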

Conclusion

The Mangstor NX6320 is a 2U all-flash array that brings the performance and latency benefits of local NVMe to a shared-storage environment. To deliver these gains, Mangstor pairs its own MX6300 NVMe SSDs with its TITAN software, which lets it optimize the whole system for higher performance and lower latency. Mangstor claims the 16TB version of the NX6320 delivers higher single-array performance and that performance continues to scale as additional arrays are added. This isn't without compromise, however. Currently, the main downside of NVMe over Fabrics solutions such as the Mangstor is limited driver support compared to traditional storage solutions. While support is growing by the day, there's more work to be done. This implementation of NVMe over Fabrics also requires a little more effort to integrate into a production environment.

Looking at performance, we ran our Sysbench application test on the 12TB version of the NX6320, with storage provisioned to eight identical VMs. Throughout this test the NX6320 surpassed our expectations in terms of individual VM performance, as well as consistency across the VM group. The NX6320 delivered industry-leading performance at 8 VMs, with a 2x advantage over the nearest flash array we have tested to date. In throughput, each VM ran at around 1,600 TPS, with an aggregate score of 12,722 TPS. To put that in perspective, we've generally seen the upper limit in our 8-VM virtualized Sysbench test come in under 1,000 TPS per VM. Until now, the only way to exceed that has been local NVMe or SAS3 SSDs, which of course can't easily be shared without taking a large performance hit. In our scaled average latency test, the NX6320 varied by only 0.27ms across all VMs. In our worst-case scenario (99th percentile latency), the NX6320 once again delivered consistent scores, this time varying only 0.78ms from lowest to highest.

Ultimately, these are still early days for NVMe over Fabrics. This testing shows its early potential, but there's plenty more to come. Driver development continues at a steady pace, and vendors like Mellanox are invested in seeing a positive outcome and broader acceptance of faster interconnects. The NX-Series paired with Mellanox 100GbE ConnectX-4 will be available soon, which should allow higher VM counts and better overall scalability.

Pros

- Best performance we've seen thus far from shared storage

- Consistent low latency in Sysbench test

Cons

- Limited driver support, but that is improving as time goes on

The Bottom Line

The Mangstor NX6320 delivers NVMe over Fabrics in a 2U form factor, bringing high performance and low latency to a wide variety of highly latency-sensitive applications and use cases.
