In 2018, QSAN rolled out its XCubeNAS XN8000R series of next-generation, high-efficiency NAS products. The XN8000R series is aimed at SMBs that leverage enterprise applications. There are two models in this series, the QSAN XCubeNAS XN8008R and the QSAN XCubeNAS XN8012R. The last two digits, -08 and -12, reflect how many LFF (3.5″) bays the NAS has. In this review we will be looking at the QSAN XCubeNAS XN8012R, with 12 front-facing bays and 6 rear-facing bays.

On the hardware side, QSAN is pushing a NAS with a lot of performance. The XN8012R comes with two Intel Kaby Lake CPUs and up to 64GB of DDR4 ECC RAM. For storage, the NAS has twelve front-loading 3.5″ bays for maximum-capacity HDDs. For those who want to benefit from tiering but don’t want to give up space in the front, the NAS also has six rear-facing 2.5″ bays where SATA or NVMe (up to two) drives can be added for even better storage performance. Of course, if cost and density aren’t issues and performance is the priority, SSDs can be loaded into the front-facing bays as well. The NAS also has PCIe slots so users can add a Thunderbolt 3 or 10GbE network adapter card for more performance and access options. Alternatively, users can add dual-port SAS expansion cards to attach QSAN’s expansion units; when leveraging 10TB HDDs, customers can scale all the way up to 2PB.

The QSAN XCubeNAS XN8012R uses yet another management OS, the third we’ve seen from the company: QSAN Storage Management 3, or QSM 3. According to the company, the core of QSM is the Linux kernel and an in-house fine-tuned 128-bit ZFS (Petabyte File System) file system. QSAN claims several benefits for users of the new OS, including persistent, reliable storage management, protection against data corruption, seamless capacity expansion, several data integrity mechanisms, pool and disk encryption protection, unlimited snapshots, and unlimited clones.

QSAN XCubeNAS XN8012R Specifications

| Specification | Detail |
| --- | --- |
| Form Factor | 2U |
| CPU | Intel Xeon 3.3GHz Quad-Core Processor |
| RAM | Up to 64GB DDR4 ECC U-DIMM |
| Storage | |
| Internal Hard Drives | 18 |
| Drive Bays | 12 LFF with lock; 4 SFF (SATA SSD); 2 SFF (NVMe SSD) |
| Max Raw Capacity | 178TB |
| Hard Drive Interface | SATA 6Gb/s |
| Flash | 8GB USB DOM |
| Ports | |
| Front | USB 2.0 |
| Rear | 4x USB 3.0; 1x HDMI; 4x GbE LAN (RJ45) |
| Expansion Slots | PCIe Gen3 x8 for Thunderbolt 3 / SAS adapter cards; PCIe Gen3 x4 for 10GbE adapter cards |
| Dimensions (HxWxD) | 88.5 x 438 x 510 mm |
| Power | 250W 1+1 redundant |
| Temperature | Operating temperature: 0 to 40°C; Shipping temperature: 10 to 50°C |
| Relative Humidity | Operating relative humidity: 20% to 80% non-condensing; Non-operating relative humidity: 10% to 90% |
| Warranty | 3-year |

Design and Build

The QSAN XCubeNAS XN8012R is a 2U rackmount NAS. Since we are using the -12R model for this review, there are twelve 3.5″ bays arranged in three rows across the front. Each bay is black with green highlights, QSAN’s signature color. On the left are the power button, UID button, the system status LED, the system access LED, and a USB 2.0 port.

Flipping around to the rear, we see the two PSUs on the left side. The right side has an HDMI port and two PCIe slots (one Gen3 x4 and one Gen3 x8). Next over are the four LAN ports, four USB 3.0 ports, a mute button, a reset button, and a console port. Near the PSUs are the SFF bays, or what we commonly refer to as 2.5″ bays. These six bays are a combination of SATA, SAS, PCIe, or NVMe depending on the configuration chosen.

Management

In our previous reviews we looked at QSAN’s SAN units and the two OSes for them, SANOS and XEVO. With its NAS units the company has yet another OS, QSM 3. QSM 3 has the feel of other familiar NAS OSes in the industry. We are greeted with the quick install screen, which gives users the option of either a quick setup or a customized setup depending on their needs.

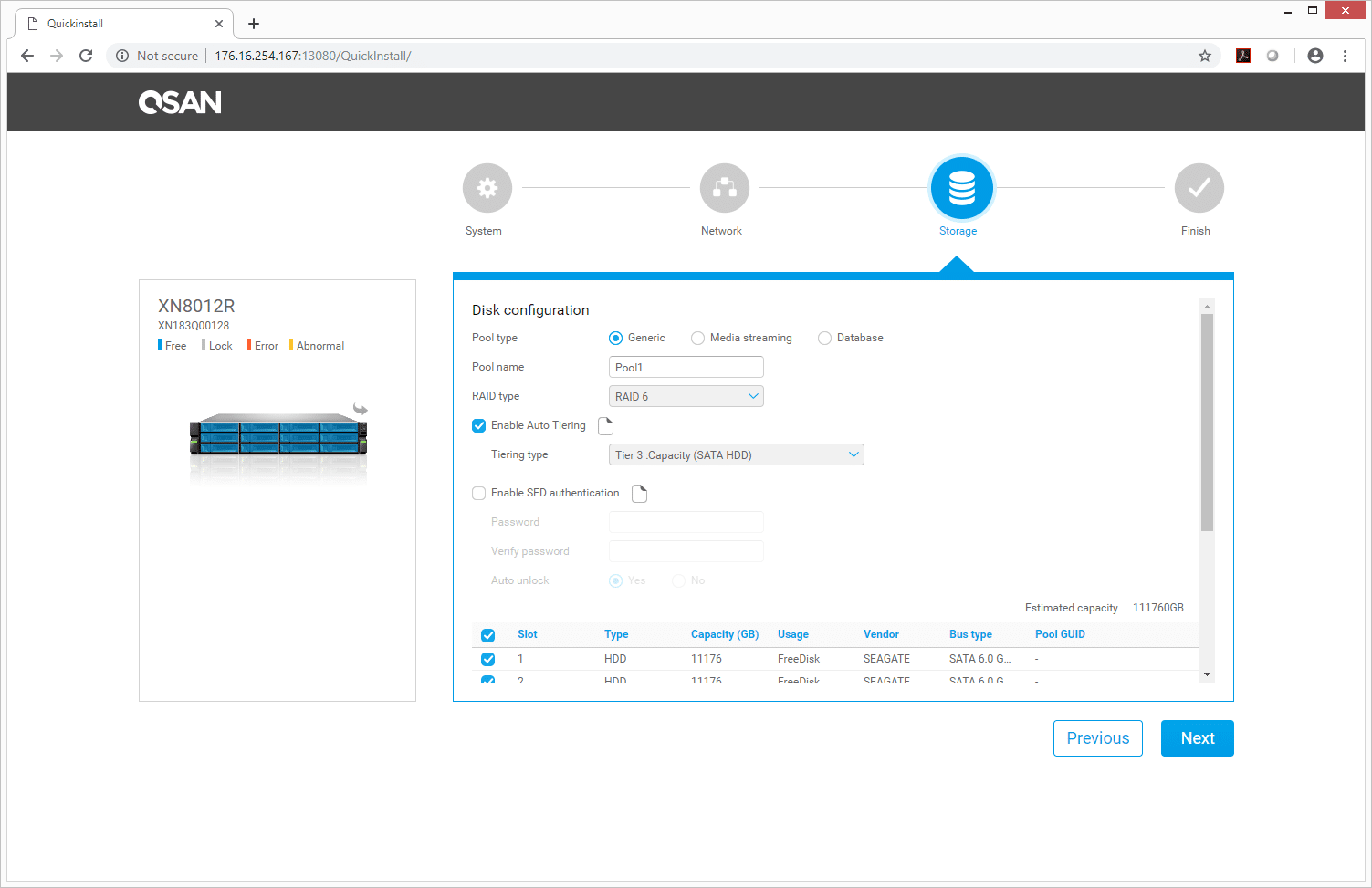

For a custom setup, users will have to go through and set up the system, network, and storage. In storage we set up our storage pool and RAID configuration. We also enabled auto tiering.

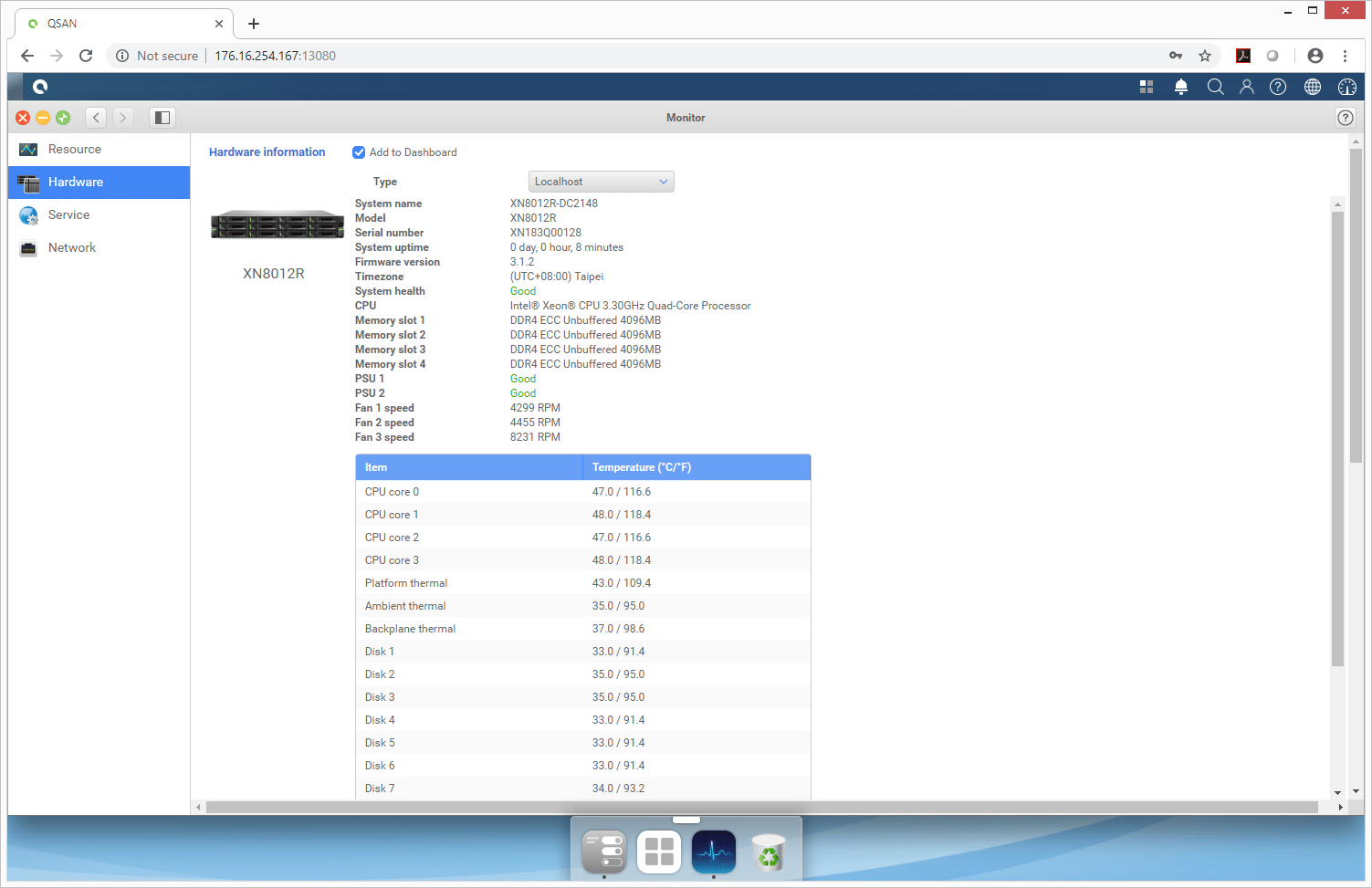

The GUI offers many of the basic features we see on other NAS devices on the market. One such feature is Monitor, which, as it sounds, allows users to monitor the system. One can look at things like resource utilization, hardware (and drill down into specific parts), service, and network.

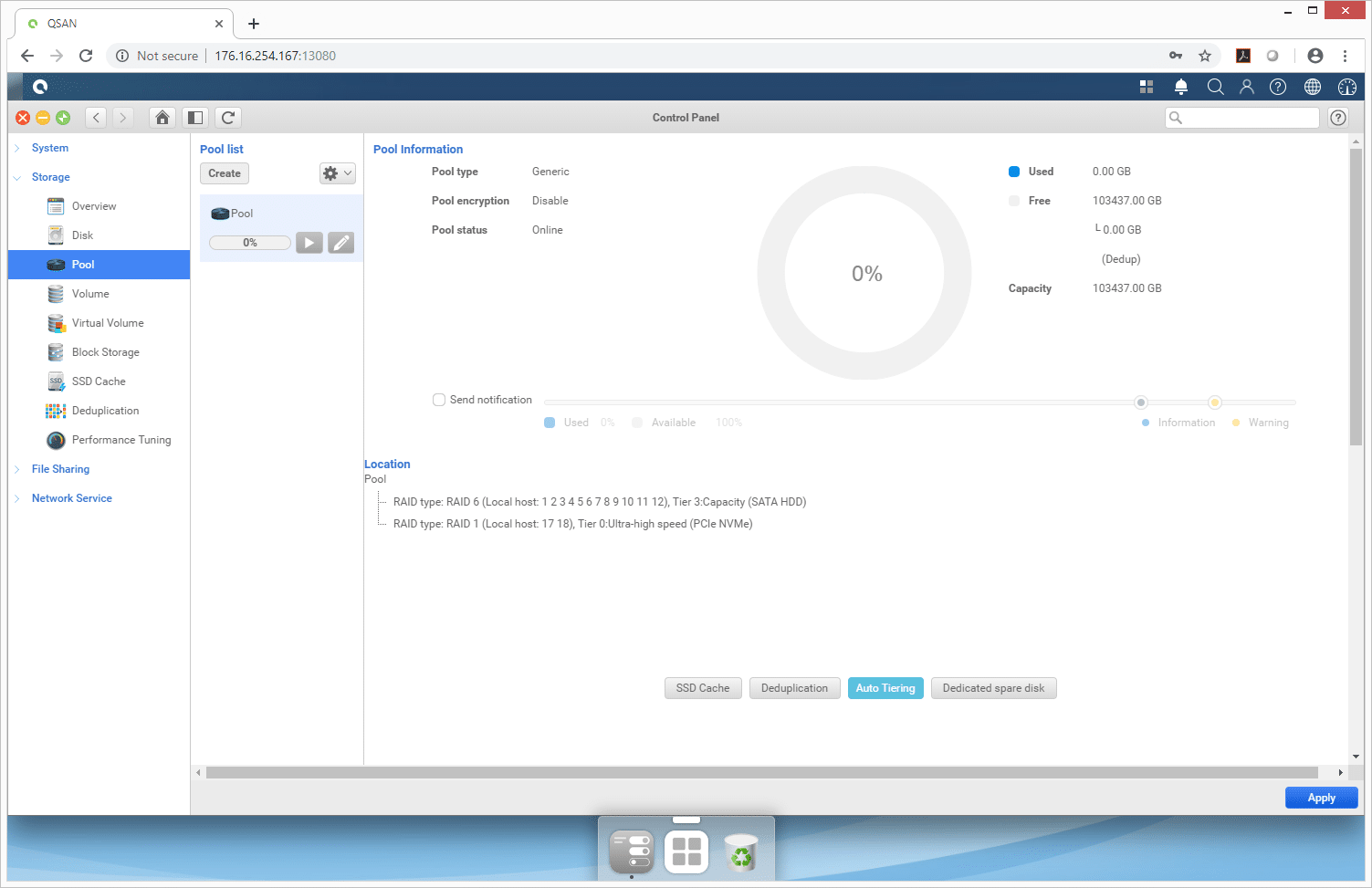

The Control Panel enables several adjustments to be made through Overview, Disk, Pool, Volume, Virtual Volume, Block Storage, SSD Cache, Deduplication, and Performance Tuning. Drilling down to Pool, we can set up SSD Cache, Deduplication, Auto Tiering, and a dedicated spare disk. It is also a quick way to see the amount of capacity used versus free.

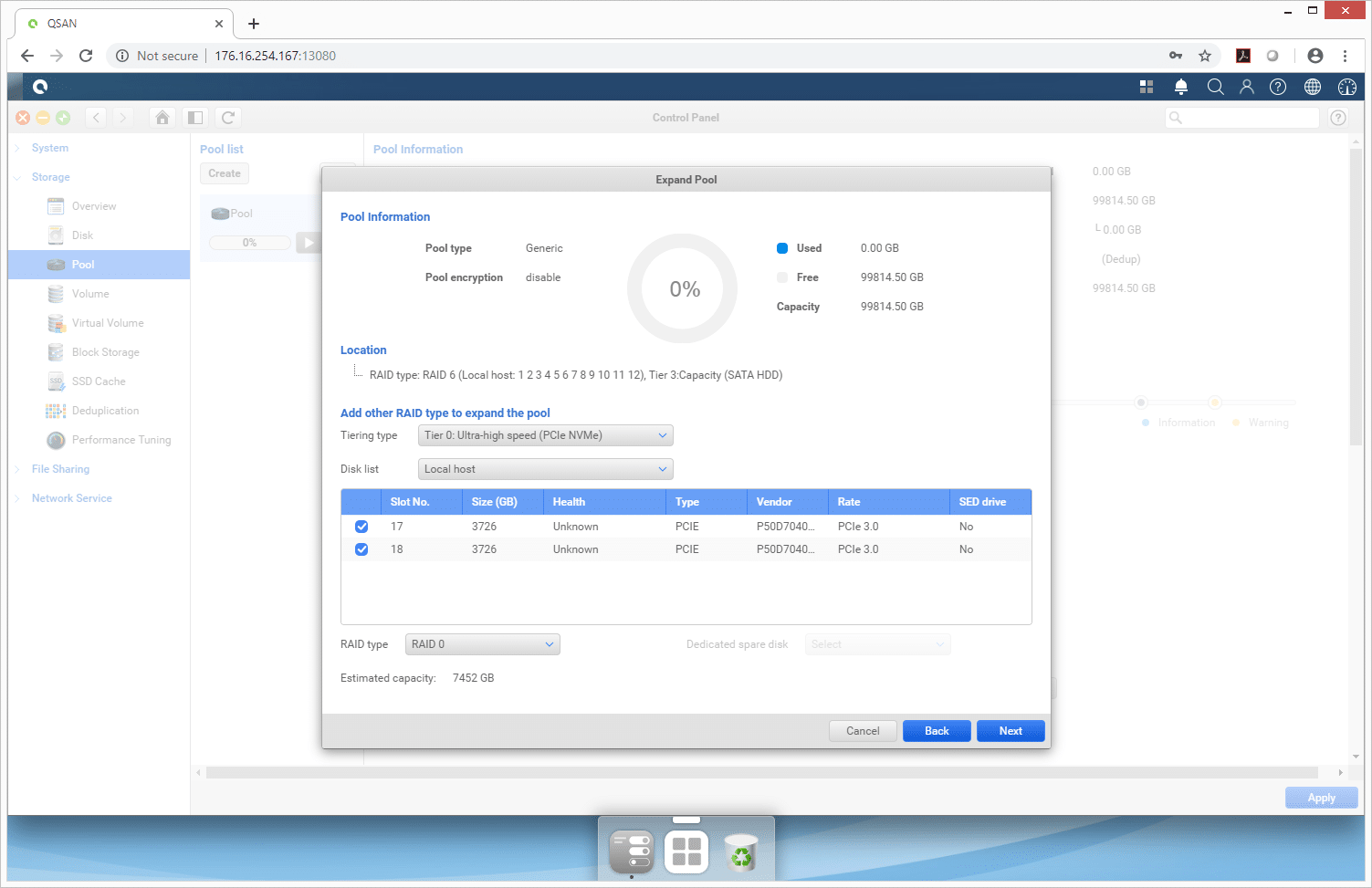

Clicking on Tiering, one can select the type of media for tiering as well as the slot to be used with the drive(s) information and RAID type.

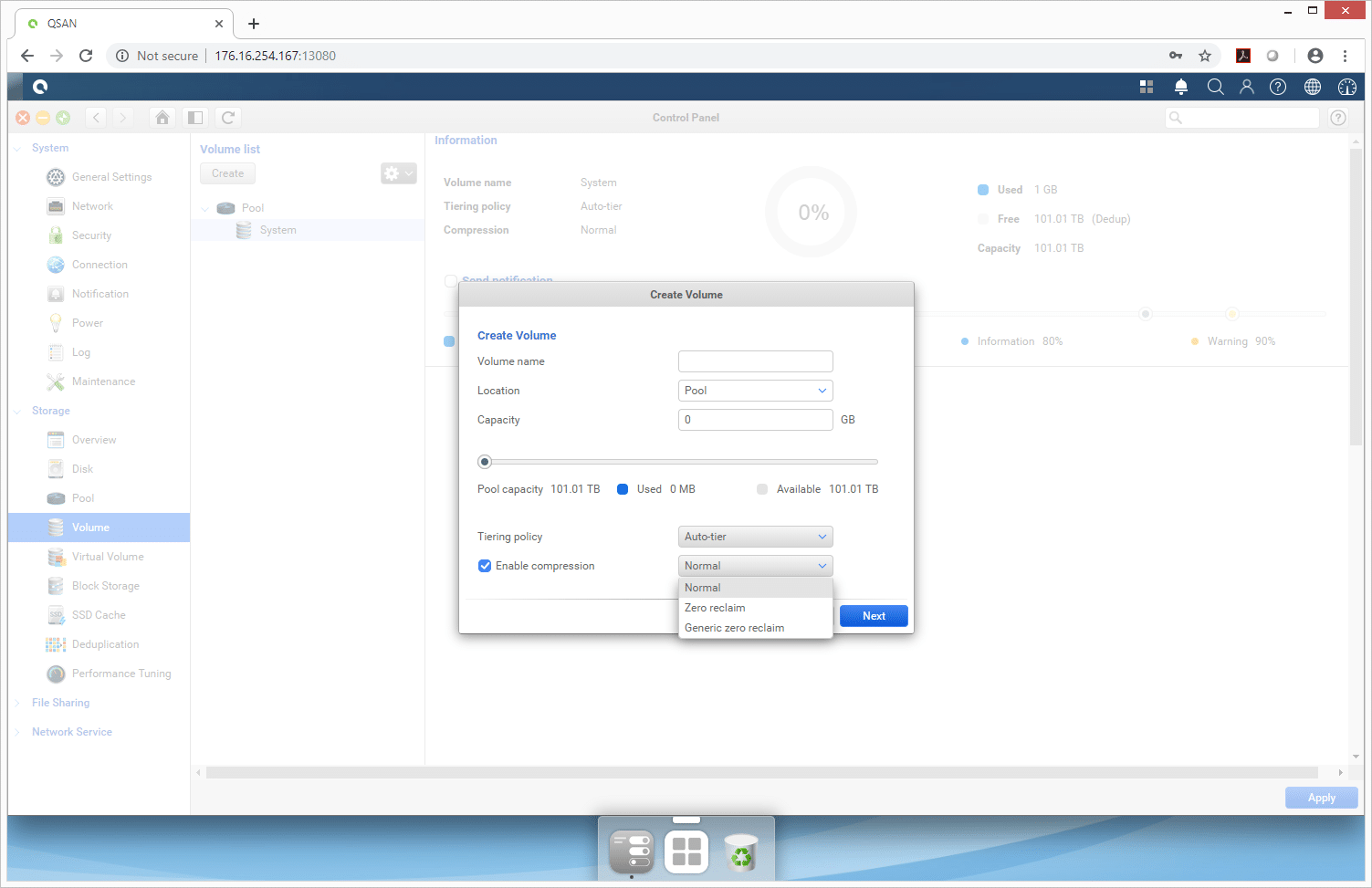

Through the Volumes tab, one can create a new volume, decide its location and capacity as well as enable auto-tiering and compression.

Testing Background and Comparables

We publish an inventory of our lab environment, an overview of the lab’s networking capabilities, and other details about our testing protocols so that administrators and those responsible for equipment acquisition can fairly gauge the conditions under which we have achieved the published results. None of our reviews are paid for or overseen by the manufacturer of equipment we are testing.

We tested both CIFS and iSCSI performance using a RAID6 configuration of twelve Seagate Exos X12 12TB HDDs and two 4TB Memblaze PBlaze5 Mixed Use NVMe SSDs in RAID1 for Tier0. Our particular system is loaded with 16GB of RAM. For testing we left compression enabled at its default level, with deduplication turned off.
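
To put that configuration in perspective, here is a rough sketch of the usable capacity it implies. The `raid_usable_tb` helper is our own illustrative name, not part of QSM, and it assumes the textbook parity math (RAID6 gives up two drives to parity, RAID1 mirrors a pair). It ignores ZFS metadata, compression, and TB versus TiB differences, so the figures QSM reports will differ.

```python
def raid_usable_tb(drive_count: int, drive_tb: float, level: str) -> float:
    """Approximate usable capacity in TB, ignoring filesystem overhead.

    Hypothetical helper for illustration only; not a QSM function.
    """
    if level == "raid6":
        if drive_count < 4:
            raise ValueError("RAID6 needs at least four drives")
        # Two drives' worth of capacity is consumed by parity.
        return (drive_count - 2) * drive_tb
    if level == "raid1":
        if drive_count != 2:
            raise ValueError("this sketch only models a two-drive mirror")
        # A mirror stores one drive's worth of data.
        return drive_tb
    raise ValueError(f"unsupported RAID level: {level}")


# The review configuration: twelve 12TB HDDs in RAID6, two 4TB NVMe SSDs in RAID1.
hdd_raw_tb = 12 * 12
hdd_usable_tb = raid_usable_tb(12, 12, "raid6")
tier0_usable_tb = raid_usable_tb(2, 4, "raid1")

print(f"HDD pool: {hdd_raw_tb} TB raw, about {hdd_usable_tb} TB usable")
print(f"Tier0:    about {tier0_usable_tb} TB usable")
```

Under these assumptions the HDD pool works out to 144TB raw and roughly 120TB usable, with about 4TB of mirrored NVMe in front of it.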

Our standard StorageReview Enterprise Test Lab regimen runs the device through its paces with a battery of varying performance levels and throughput activity workloads. For the NAS, the following profiles were utilized to compare performance between different RAID configurations and different networking standard protocols (CIFS and iSCSI):

- 4K 100% Read / 100% Write throughput

- 8K 100% Read / 100% Write throughput

- 8K 70% Read / 30% Write throughput

- 128K 100% Read / 100% Write throughput

In the first of our enterprise workloads, we measured a long sample of random 4K performance with 100% write and 100% read activity using the CIFS and iSCSI protocols in RAID6. Here, the QSAN XCubeNAS XN8012R gave the best read performance in CIFS with 57,763 IOPS compared to 56,977 IOPS for iSCSI. For writes, the best performance came from iSCSI with 8,096 IOPS compared to 7,430 IOPS for CIFS.
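
For readers who think in bandwidth rather than IOPS, the 4K figures above can be converted with simple arithmetic. This is a minimal sketch that assumes "4K" means 4 KiB (4,096 bytes) per operation; the function name is ours.

```python
def iops_to_mib_per_s(iops: float, block_kib: float) -> float:
    """Convert an IOPS figure to MiB/s for a fixed block size in KiB."""
    return iops * block_kib / 1024


# 4K random results reported in this review (IOPS).
results_4k = {
    ("CIFS", "read"): 57_763,
    ("iSCSI", "read"): 56_977,
    ("CIFS", "write"): 7_430,
    ("iSCSI", "write"): 8_096,
}

for (protocol, op), iops in results_4k.items():
    mib_s = iops_to_mib_per_s(iops, 4)
    print(f"{protocol:5s} 4K {op:5s}: {iops:>6,} IOPS is about {mib_s:6.1f} MiB/s")
```

By this math, the best 4K read result is in the neighborhood of 225 MiB/s, while the best 4K write result is a little over 31 MiB/s.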

Next up is 4K average latency. Here we see the same placing, with CIFS having the best read latency at 4.43ms (iSCSI had 4.49ms) and iSCSI having the best write latency at 31.6ms (CIFS had 37.8ms).

For 4K max latency the XN8012R had the best reads in iSCSI with 423.7ms (CIFS had 643.3ms). For writes iSCSI again showed the best performance with 1,723ms (CIFS had 52,448ms).

With 4K standard deviation CIFS took the top read spot with 2.5ms (iSCSI had 3.7ms) and iSCSI took the top write spot with 77ms (CIFS had 781ms).

In our next benchmark, we doubled the transfer size to 8K. Here, CIFS had the best read throughput with 171,767 IOPS (iSCSI had 123,345 IOPS) and iSCSI had the best write throughput with 106,582 IOPS (CIFS had 79,125 IOPS).

In our next four charts, we will be showing results based on a protocol consisting of 70% read operations and 30% write operations with an 8K transfer size. The workload is varied from 2 threads and a queue depth of 2 up to 16 threads and a queue depth of 16. In throughput, iSCSI started off stronger and stayed in the lead until about halfway through, when CIFS came out on top. iSCSI ranged from 9,450 IOPS to 20,050 IOPS, while CIFS started at 8,004 IOPS and ended at 21,603 IOPS.

For 8K 70/30 average latency, we see the two configurations very close to one another throughout with CIFS finishing just better than iSCSI with 11.84ms to 12.76ms.
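
The throughput and average latency figures can be cross-checked against each other with Little's Law (average latency is roughly outstanding I/Os divided by IOPS). The sketch below assumes the final data point keeps 16 threads x 16 queue = 256 I/Os in flight at all times, which is our assumption about how the load generator behaves rather than something measured here.

```python
def expected_latency_ms(outstanding_ios: int, iops: float) -> float:
    """Little's Law: average latency (ms) = outstanding I/Os / IOPS * 1000."""
    return outstanding_ios / iops * 1000


# Assumed peak load for the 8K 70/30 test: 16 threads, queue depth 16 each.
outstanding = 16 * 16

# Final 8K 70/30 throughput figures from this review (IOPS).
cifs_ms = expected_latency_ms(outstanding, 21_603)
iscsi_ms = expected_latency_ms(outstanding, 20_050)

print(f"CIFS  predicted: {cifs_ms:.2f} ms (measured 11.84 ms)")
print(f"iSCSI predicted: {iscsi_ms:.2f} ms (measured 12.76 ms)")
```

The predictions land at roughly 11.85ms and 12.77ms, within a few hundredths of a millisecond of the measured averages, which suggests the throughput and latency numbers are consistent with one another.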

In 8K 70/30 max latency iSCSI was much lower throughout with CIFS making large swings and finishing much higher. We’ve included two charts to show the scale difference.

Standard deviation was similar to the above with iSCSI much lower from beginning to end.

The final synthetic benchmark utilizes much larger 128k transfer sizes with 100% read and 100% write operations. In this scenario, CIFS gave us 1.58GB/s read and 2.04GB/s write while iSCSI had 1.29GB/s read and 1.34GB/s write.

Conclusion

QSAN’s new XCubeNAS XN8000R series are 2U NAS devices that are geared around performance. For this review, we looked at the 12-bay (front loading) XCubeNAS XN8012R. The NAS can scale up to 2PB of capacity with expansion units and up to 178TB raw in a 2U unit. For those looking to take advantage of SSD caching and tiering, the XCubeNAS XN8012R has six rear loading bays for SATA and NVMe SSDs. The NAS supports dual Intel Kaby Lake CPUs and up to 64GB of RAM.

With performance, the QSAN XCubeNAS XN8012R was tested with both CIFS and iSCSI storage in RAID6 with the NVMe Tier0 engaged. The NAS was able to put up some decent numbers. Highlights include 58K IOPS read in CIFS, 8,096 IOPS write in iSCSI in 4K with average latencies of 4.43ms read (CIFS) and 31.6ms write (iSCSI). In 8K the NAS hit 172K IOPS read (CIFS) and 107K IOPS write (iSCSI). And in our large block sequential 128K the NAS had bandwidth speeds of 1.6GB/s read (CIFS) and 2GB/s write (also CIFS).

The QSAN XCubeNAS XN8012R would make an excellent NAS for SMBs that need plenty of performance but may need to scale up in the future. The rear flash expansion bays make it a nice choice for tiering and caching without giving up capacity bays in the front, and the interface is easy to operate. While the NAS makes for a strong file host and lighter applications platform, users with database and other latency-sensitive workloads may want to step up to a more robust system. The ZFS file system, while offering many data integrity and data-reduction benefits, isn’t as performance-friendly for these types of workloads. In total though, the XN8012R is a nice, complete offering from QSAN that should do well in SMB, ROBO, and edge use cases.
