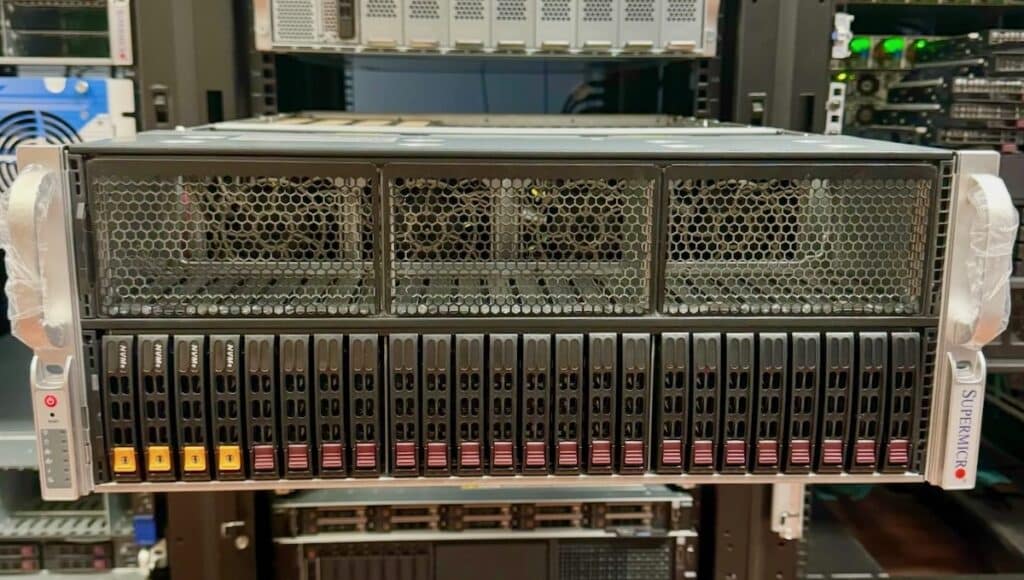

Supermicro has long offered GPU servers in more shapes and sizes than we have time to discuss in this review. Today, we’re looking at their relatively new 4U air-cooled GPU server that supports two AMD EPYC 9004 Series CPUs, PCIe Gen5, and a choice of eight double-width or 12 single-width add-in GPU cards. While Supermicro also offers Intel-based variants of these servers, the AMD-based AS-4125GS-TNRT family are the only servers in this class supporting NVIDIA H100 and AMD Instinct Mi210 GPUs.

The Supermicro AS-4125GS-TNRT GPU server has a few other hardware highlights like 10GbE networking onboard, out-of-band management, 9 FHFL PCIe Gen5 slots, 24 2.5″ bays, with four being NVMe, and the rest SATA/SAS. There are also 4x redundant titanium-level 2000W power supplies. On the motherboard, there’s a single M.2 NVMe slot for boot.

Before we get too far down this road, it’s also worth mentioning that Supermicro offers two other variants of the AS-4125GS-TNRT server config. While they use the same motherboard, the AS-4125GS-TNRT1 is a single-socket configuration with a PCIe switch that supports up to 10 double-width GPUs and 8 NVMe SSD bays. The AS -4125GS-TNRT2 is a dual-processor configuration that’s more or less the same thing, again with the PCIe switch.

No matter the configuration, the Supermicro AS-4125GS-TNRT is incredibly flexible thanks to its design and ability to select models with the PCIe switch. This style of GPU server is popular because it allows organizations to start small and expand, mix and match GPUs for different needs, or do anything they like. The socketed GPU systems bring the ability to better aggregate GPUs for big AI workloads, but the add-in card systems can’t be beaten for workload flexibility.

Supermicro AS-4125GS-TNRT with AMD and NVIDIA GPUs from SC23

Further, while this may come as blasphemy to some, the Supermicro add-in card GPU servers can even be used with cards from AMD and NVIDIA in the same box! Gasp, if you will, but plenty of customers have figured out that some workloads prefer an Instinct, while other workloads like the NVIDIA GPU. Lastly, while less popular than GPU servers stuffed to the gills, it is worth mentioning that these slots are just PCIe slots; it’s not unreasonable to imagine scenarios where customers may prefer FPGAs, DPUs, or some other form of accelerator in this rig. Again, flexibility is the core resounding benefit of this design.

For our review purposes, the Supermicro AS-4125GS-TNRT came in barebones, ready for us to add CPU, DRAM, storage, and, of course, GPUs. We worked with Supermicro to borrow 4x NVIDIA H100 GPUs for this review.

Supermicro AS-4125GS-TNRT Specifications

| Specifications | |

| CPU | Dual Socket SP5 CPUs up to 128C / 256T Each |

| Memory | Up to 24x 256GB 4800MHz ECC DDR5 RDIMM/LRDIMM (Total 6TB Memory) |

| GPU |

|

| Expansion Slots | 9x PCIE 5.0 x16 FHFL Slots |

| Power Supplies | 4x 2000W Redundant Power Supplies |

| Networking | 2x 10GbE |

| Storage |

|

| Motherboard | Super H13DSG-O-CPU |

| Management |

|

| Security |

|

| Chassis Size | 4U |

Supermicro AS-4125GS-TNRT Review Configuration

We configured our system from Supermicro as barebones, though they largely sell this as a configured system. When it got to the lab, the first thing we did was populate it with a pair of AMD EPYC 9374F 32c 64t CPUs. These were selected for their high clock speed and respectable multi-core performance.

For accelerators, we had quite the shelf to pick from, ranging from old Intel Phi Coprocessors to the latest H100 PCIe cards to high-end RTX 6000 ada workstation GPUs. We aimed to balance raw computational power with efficiency and versatility. Ultimately, we decided to start with four NVIDIA RTX A6000 GPUs and then move on to four NVIDIA H100 PCIe cards for our initial tests. This combination demonstrates the Supermicro platform’s flexibility and the NVIDIA accelerator cards.

The RTX A6000, primarily designed for performance in graphics-intensive workloads, also excels in AI and HPC applications with its Ampere architecture. It offers 48GB of GDDR6 memory, making it ideal for handling large datasets and complex simulations. Its 10,752 CUDA and 336 Tensor cores enable accelerated computing, which is crucial for our AI and deep learning tests.

On the other hand, the NVIDIA H100 PCIe cards are the latest shipping cards in the Hopper architecture lineup, designed primarily for AI workloads. Each card features an impressive 80 billion transistors, 80GB of HBM3 memory, and the groundbreaking Transformer Engine, tailored for AI models like GPT-4. The H100’s 4th generation Tensor Cores and DPX instructions significantly boost AI inference and training tasks.

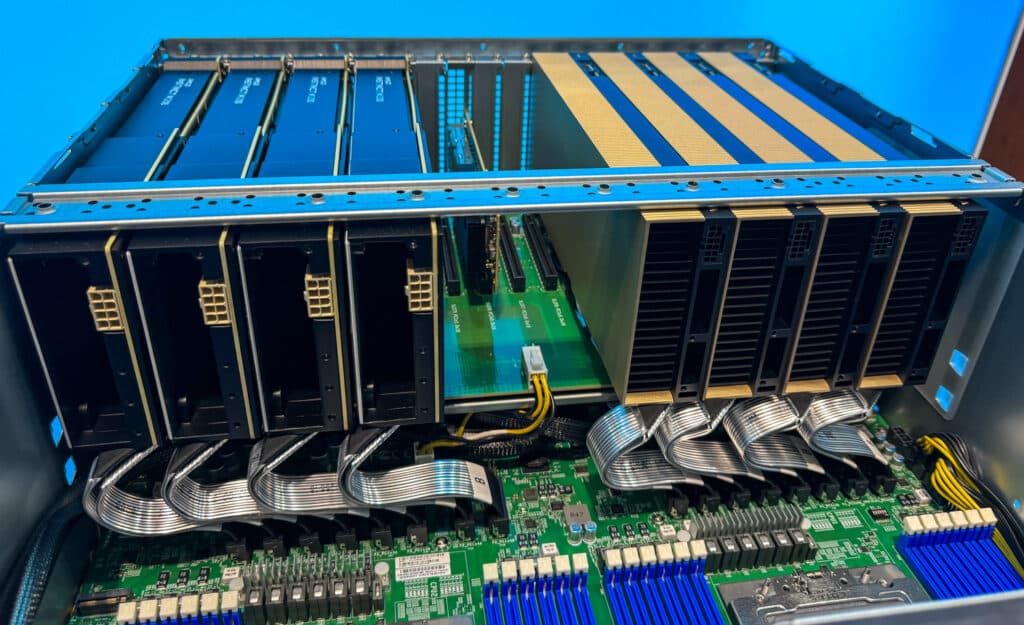

Integrating these GPUs into our Supermicro barebones system, we focused on ensuring optimal thermal management and power distribution, given the substantial power draw and heat generation from these high-end components. The Supermicro chassis, though not officially supporting such a configuration, proved to be versatile enough to accommodate our setup. To keep the thermals of the A6000s in check, we had to space them out by a card-width due to the squirrel-cage fan design, but the H100s can be packed in with their passthrough, passive cooling fins.

Our benchmarking suite included a mix of HPC and AI-specific use cases. These ranged from traditional benchmarking workloads to AI training and inference tasks using convolutional neural network models. We aimed to push these accelerators to their limits, evaluating their raw performance and efficiency, scalability, and ease of integration with our Supermicro A+ server.

Supermicro AS-4125GS-TNRT GPU Testing

As we move through the flagship GPUs from NVIDIA while working on a CNN foundational model in the lab, we started with some workstation-level training on a pair of older but highly capable RTX8000 GPUs.

During our AI performance analysis, we observed a remarkable yet expected progression in capabilities, moving from the NVIDIA RTX 8000 to four RTX A6000 GPUs and finally to four NVIDIA H100 PCIe cards. This progression showcased the raw power of these accelerators and the evolution of the NVIDIA accelerators through the last few years as more and more focus is put on AI workloads.

Starting with the RTX 8000, we noted decent performance levels. With this setup, our AI model training on a 6.36GB image dataset took approximately 45 minutes per epoch. However, the limitations of the RTX 8000 were apparent in terms of batch size and the complexity of tasks it could handle. We were constrained to smaller batch sizes and limited in the complexity of the neural network models we could effectively train.

The shift to four RTX A6000 GPUs marked a significant leap in performance. The A6000’s superior memory bandwidth and larger GDDR6 memory allowed us to quadruple the batch size while maintaining the same epoch duration and model complexity. This improvement improved the training process and enabled us to experiment with more sophisticated models without extending the training time.

However, the most striking advancement came with the introduction of four NVIDIA H100 PCIe cards. Leveraging the Hopper architecture’s enhanced AI capabilities, these cards allowed us to double the batch size again. More impressively, we could significantly increase the complexity of our AI models without any notable change in epoch duration. This capability is a testament to the H100’s advanced AI-specific features, such as the Transformer Engine and 4th generation Tensor Cores, which are optimized for handling complex AI operations efficiently.

Throughout these tests, the 6.36GB image dataset and model parameters served as a consistent benchmark, allowing us to directly compare the performance across different GPU configurations. The progression from the RTX 8000 to the A6000s, and then to the H100s, highlighted improvements in raw processing power and the GPUs’ ability to handle larger, more complex AI workloads without compromising on speed or efficiency. This makes these GPUs particularly suitable for cutting-edge AI research and large-scale deep learning applications.

The Supermicro server employed in our testing features a direct PCIe connection to the CPUs, bypassing the need for a PCIe switch. This direct attachment ensures that each GPU has a dedicated pathway to the CPU, facilitating fast and efficient data transfer. This architecture is crucial in some workloads in AI and HPC for minimizing latency and maximizing bandwidth utilization, particularly beneficial when dealing with high-throughput tasks such as AI model training or complex VDI environments when all work is local to the server.

Conclusion

The scalability and flexibility of the Supermicro GPU A+ Server AS-4125GS-TNRT server are the killer features here. It is particularly beneficial for customers needing to adapt to evolving workload demands, whether in AI, VDI, or other high-performance tasks. Starting with a modest configuration, users can effectively handle entry-level AI or VDI tasks, offering a cost-effective solution for smaller workloads or those just beginning to venture into AI and virtual desktop infrastructure. This initial setup provides a solid and scalable foundation, allowing users to engage with basic yet essential AI and VDI applications.

Additionally, while we know a lot of enterprises want to take advantage of the socketed H100 GPUs, the wait times for these platforms is excessive, we’ve been told by many sources the wait is nearly a year. The supply chain logistics underscore the great thing about this server, it can handle anything. L40S GPUs are available “now” so customers can at least get their AI workloads moving sooner rather than later along with this combo. And as needs change, customers can easily swap the cards. This ensures that the Supermicro GPU A+ Server AS-4125GS-TNRT server is not just for immediate needs but is future-proof, catering to the evolving technological landscape.

Amazon

Amazon