Earlier this year Fujitsu announced updates to its ETERNUS all-flash (AF) line with the introduction of the AF250 S2 and AF650 S2. We previously looked at the ETERNUS AF250 and found it to be a strong performer, especially given its price, earning it our Editor's Choice award. Now we are taking a close look at the updated version to see what has improved and how it stacks up.

The new AF250 S2 is also a 2U unit; in fact, physically it looks nearly identical to the non-S2 version. The AF250 S2 is aimed at the midrange in terms of pricing and capacity, with a maximum capacity of 737TB (with expansion). For performance, the array has dual controllers with up to 64GB of RAM, and Fujitsu quotes sequential performance of 760K IOPS and random performance of 430K IOPS, with read latency as low as 170μs and write latency as low as 60μs. This makes it an ideal choice for tier-1 applications. Interestingly, the performance claims have not changed from one model to the next, which raises the obvious question of what is actually different. According to Fujitsu, it has added Intel Skylake CPUs, optimized the flash software, and made enhancements on both the controller and OS side of things.

The AF250 S2 comes with automated QoS that allocates the appropriate level of performance to each application. While the array offers a fair amount of capacity with expansion, it also provides flexible deduplication and compression that can be turned off when not needed. For disaster recovery, the array offers mirroring and automated transparent failover.

For our review, the Fujitsu ETERNUS AF250 S2 is configured with 46TB of raw system capacity, using more cost-effective 1.92TB SSDs.

Fujitsu Storage ETERNUS AF250 specifications

| Specification | Value |
|---|---|
| Form factor | 2U |
| Number of controllers | 2 |
| Number of host interfaces | 4/8 ports [FC (16Gbit/s), iSCSI (10Gbit/s)] |
| Max system memory | 64GB |
| Supported RAID levels | 0, 1, 1+0, 5, 5+0, 6 |
| Host interfaces | Fibre Channel (16 Gb/s); iSCSI (10 Gb/s, 10GBASE-T); iSCSI (10 Gb/s, 10GBASE-SR) |
| Max number of hosts | 1,024 |
| Storage | |
| Max capacity | 737TB |
| Total drive bays | 48 (with expansion) |
| Drive types supported | 2.5-inch SSD (15.36TB / 7.68TB / 3.84TB / 1.92TB / 960GB / 400GB); 2.5-inch self-encrypting SSD (1.92TB) |
| Drive interface | SAS (12Gb/s) |
| Performance | |
| Latency (minimum) | Write 60μs, Read 160μs |
| Sequential access performance | 760K IOPS (100% read, 4KB blocks) |
| Random access performance | 430K IOPS (100% read, 4KB blocks) |
| Physical | |
| Dimensions (W x D x H) | 482 x 645 x 88 mm (19 x 25.4 x 3.5 inch) |
| Weight | 35 kg (77 lb) |
| Environmental | |
| Temperature (not operating) | 0 – 50 °C |
| Humidity (operating) | 20 – 80% (relative humidity, non-condensing) |
| Humidity (not operating) | 8 – 80% (relative humidity, non-condensing) |
| Altitude | 3,000 m (10,000 ft) |
| Sound pressure (LpAm) | 47dB(A) |
| Sound power (LWAd; 1B = 10dB) | 6.5B |
| Power | |
| Power voltage | AC 100 – 120 V / AC 200 – 240 V |
| Power frequency | 50 / 60 Hz |
| Power supply efficiency | 92% (80 PLUS Gold) |
| Maximum power consumption (AC 100 – 120 V) | 1,240 W (1,260 VA) |
| Maximum power consumption (AC 200 – 240 V) | 1,240 W (1,260 VA) |
| Power phase | Single |

Management

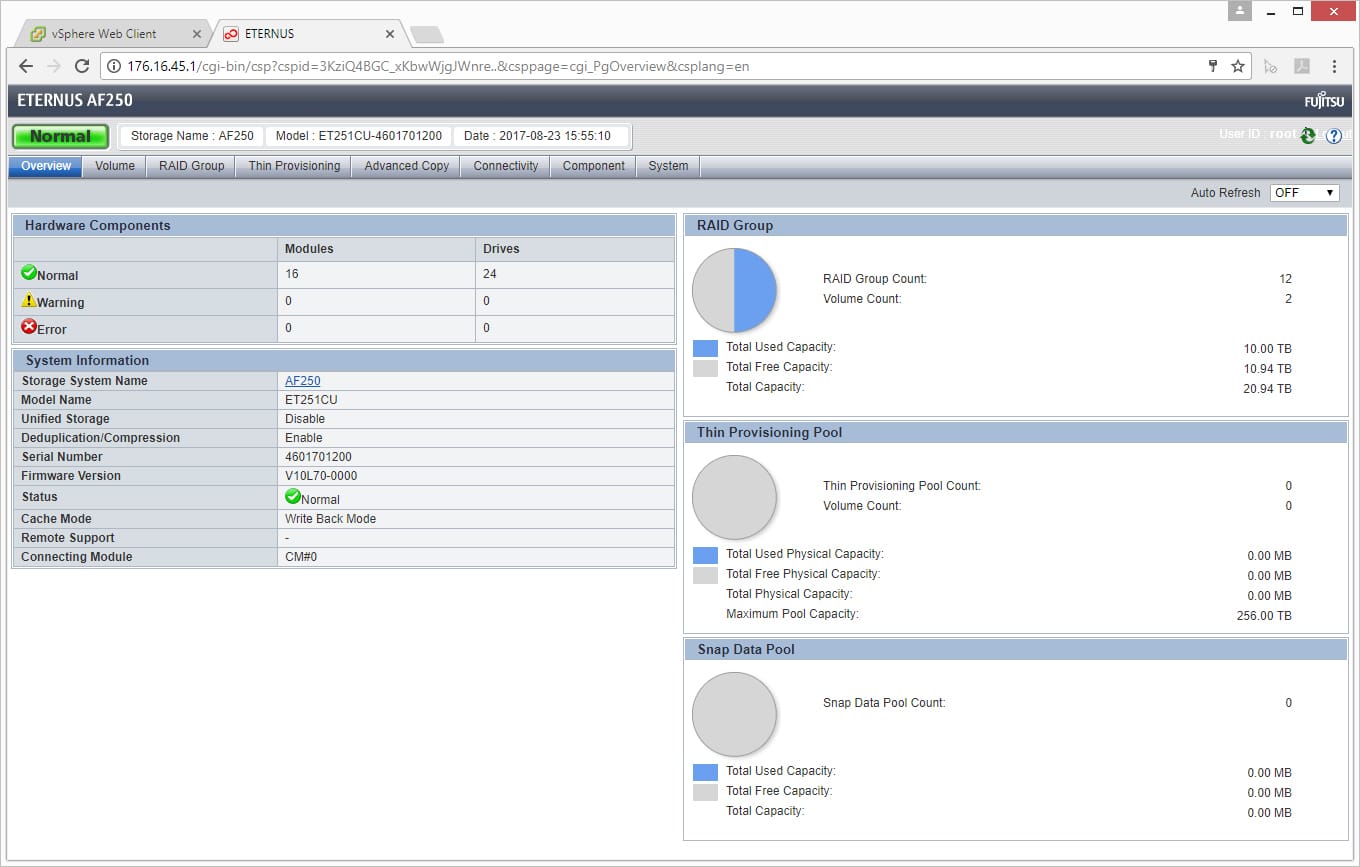

The S2 uses the same management interface as the AF250; for a more in-depth look at managing the array, readers can check out our previous review.

Performance

Application Workload Analysis

The application workload benchmarks for the Fujitsu Storage ETERNUS AF250 S2 consist of MySQL OLTP performance via SysBench and Microsoft SQL Server OLTP performance with a simulated TPC-C workload. In each scenario, we configured the array with 26 Toshiba PX04SV SAS 3.0 SSDs in two 12-drive RAID10 disk groups, one pinned to each controller, leaving 2 SSDs as spares. We then created two 5TB volumes, one per disk group. In our testing environment, this created a balanced load for our SQL and Sysbench workloads.
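As a rough check on this layout, the arithmetic below estimates the usable space in each RAID10 disk group. It is a minimal sketch that assumes decimal terabytes and ignores formatting and metadata overhead; the constant names are illustrative, not anything from Fujitsu's tooling.

```python
# Sketch: usable capacity of the RAID10 disk groups used for application testing.
# Assumptions (not from Fujitsu): decimal TB, no formatting/metadata overhead,
# and RAID10 mirroring halves the usable space of each disk group.

DRIVE_TB = 1.92          # per-SSD capacity from the review configuration
DRIVES_PER_GROUP = 12    # each RAID10 disk group
GROUPS = 2               # one group pinned to each controller
VOLUME_TB = 5.0          # one 5TB volume created per disk group


def raid10_usable_tb(drives: int, drive_tb: float) -> float:
    """RAID10 stripes across mirrored pairs, so half the raw space is usable."""
    if drives % 2:
        raise ValueError("RAID10 needs an even number of drives")
    return drives / 2 * drive_tb


per_group = raid10_usable_tb(DRIVES_PER_GROUP, DRIVE_TB)
print(f"Usable per group: {per_group:.2f} TB")
print(f"Headroom per group after one {VOLUME_TB:.0f}TB volume: {per_group - VOLUME_TB:.2f} TB")
print(f"Usable across {GROUPS} groups: {per_group * GROUPS:.2f} TB")
```

Under these assumptions each group offers roughly 11.5TB, so a single 5TB volume per group uses well under half of the mirrored space.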

SQL Server Performance

Each SQL Server VM is configured with two vDisks: a 100GB volume for boot and a 500GB volume for the database and log files. From a system resource perspective, we configured each VM with 16 vCPUs, 64GB of DRAM and leveraged the LSI Logic SAS SCSI controller. While our Sysbench workloads saturate the platform in both storage I/O and capacity, the SQL test focuses on latency.

This test uses SQL Server 2014 running on Windows Server 2012 R2 guest VMs, and is stressed by Quest’s Benchmark Factory for Databases. While our traditional usage of this benchmark has been to test large 3,000-scale databases on local or shared storage, in this iteration we focus on spreading out four 1,500-scale databases evenly across the Fujitsu AF250 (two VMs per controller).

SQL Server Testing Configuration (per VM)

- Windows Server 2012 R2

- Storage Footprint: 600GB allocated, 500GB used

- SQL Server 2014

- Database Size: 1,500 scale

- Virtual Client Load: 15,000

- RAM Buffer: 48GB

- Test Length: 3 hours

- 2.5 hours preconditioning

- 30 minutes sample period

SQL Server OLTP Benchmark Factory LoadGen Equipment

- Dell EMC PowerEdge R740xd Virtualized SQL 4-node Cluster

- 8 Intel Xeon Gold 6130 CPUs for 269GHz in cluster (Two per node, 2.1GHz, 16-cores, 22MB Cache)

- 1TB RAM (256GB per node, 16GB x 16 DDR4, 128GB per CPU)

- 4 x Emulex 16Gb dual-port FC HBA

- 4 x Mellanox ConnectX-4 rNDC 25GbE dual-port NIC

- VMware ESXi vSphere 6.5 / Enterprise Plus 8-CPU
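The 269GHz cluster figure is the sum of base clock speeds across every core. Taking the 2.1GHz base clock and 16 cores per CPU listed above, the arithmetic works out as follows:

```latex
8~\text{CPUs} \times 16~\tfrac{\text{cores}}{\text{CPU}} \times 2.1~\text{GHz} = 268.8~\text{GHz} \approx 269~\text{GHz}
```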

For our testing, we compare the AF250 S2 to the older version of the Fujitsu Storage ETERNUS AF250.

For SQL Server, the AF250 S2 was able to pull off an aggregate transactional score of 12,638.3 TPS, with individual VMs ranging from 3,159.2 to 3,159.8 TPS. This is a slight improvement over the older version of the array, which posted an aggregate score of 12,622.1 TPS.

In SQL Server average latency, the S2 pulled much further ahead, with an aggregate of 4.2ms compared to the older version's 9.8ms.
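To put the two SQL Server results in proportion, the snippet below computes the percentage change for each metric. It is a simple illustration that uses only the numbers reported in this review.

```python
# Sketch: relative change between the original AF250 and the AF250 S2
# for the SQL Server aggregate results reported in this review.


def pct_change(old: float, new: float) -> float:
    """Percentage change from old to new (negative means a decrease)."""
    return (new - old) / old * 100.0


results = {
    # metric: (original AF250, AF250 S2)
    "aggregate TPS": (12_622.1, 12_638.3),
    "average latency (ms)": (9.8, 4.2),
}

for metric, (old, new) in results.items():
    print(f"{metric}: {old} -> {new} ({pct_change(old, new):+.1f}%)")
```

Throughput is essentially flat (about +0.1%), while average latency drops by roughly 57%, which is where the S2's gains show up in this test.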

Sysbench Performance

Each Sysbench VM is configured with three vDisks, one for boot (~92GB), one with the pre-built database (~447GB), and the third for the database under test (270GB). From a system-resource perspective, we configured each VM with 16 vCPUs, 60GB of DRAM and leveraged the LSI Logic SAS SCSI controller. Load gen systems are Dell R740xd servers.

Dell PowerEdge R740xd Virtualized MySQL 4-node Cluster

- 8 Intel Xeon Gold 6130 CPUs for 269GHz in cluster (two per node, 2.1GHz, 16-cores, 22MB Cache)

- 1TB RAM (256GB per node, 16GB x 16 DDR4, 128GB per CPU)

- 4 x Emulex 16Gb dual-port FC HBA

- 4 x Mellanox ConnectX-4 rNDC 25GbE dual-port NIC

- VMware ESXi vSphere 6.5 / Enterprise Plus 8-CPU

Sysbench Testing Configuration (per VM)

- CentOS 6.3 64-bit

- Storage Footprint: 1TB, 800GB used

- Percona XtraDB 5.5.30-rel30.1

- Database Tables: 100

- Database Size: 10,000,000

- Database Threads: 32

- RAM Buffer: 24GB

- Test Length: 3 hours

- 2 hours preconditioning 32 threads

- 1 hour 32 threads

For Sysbench, we tested several sets of VMs, including 4VM, 8VM, 16VM, and 24VM. Unlike SQL Server, here we only looked at raw performance. In transactional performance, the AF250 S2 outperformed the older model across the board, starting at 8,336 TPS for 4VM and jumping to 12,447 TPS for 8VM (higher than the previous array's 16VM performance), then reaching 15,595 TPS for 16VM and 17,237 TPS for 24VM.

With average latency, the AF250 S2 had 15.36ms for 4VM, 20.57ms for 8VM, 32.87ms for 16VM, and only 44.59ms for 24VM. Again, this is an improvement over the older model at every VM count.
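The throughput and latency results are tied together by Little's law (outstanding requests = throughput × average latency). The sketch below assumes a closed-loop test in which each VM's 32 database threads always keep exactly one transaction in flight; that assumption is ours, not a documented property of the test harness.

```python
# Sketch: Little's law (L = lambda * W) applied to the Sysbench results.
# Assumption: a closed-loop test where each VM's 32 threads always keep
# exactly one transaction in flight, so concurrency = 32 * number of VMs.

THREADS_PER_VM = 32

# VM count -> measured TPS for the AF250 S2, from the results in this review
measured_tps = {4: 8_336, 8: 12_447, 16: 15_595, 24: 17_237}


def expected_latency_ms(vms: int, tps: float) -> float:
    """Average latency W = L / lambda, converted from seconds to milliseconds."""
    in_flight = THREADS_PER_VM * vms
    return in_flight / tps * 1000.0


for vms, tps in measured_tps.items():
    print(f"{vms:>2}VM: {expected_latency_ms(vms, tps):.2f} ms")
```

Under this assumption, the predicted averages match the measured 4VM and 8VM latencies and land within a few hundredths of a millisecond of the 16VM and 24VM figures, so the latency growth is largely what you would expect from queuing more threads against a throughput ceiling.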

In our worst-case scenario latency benchmark, the AF250 S2 again showed very consistent performance with a 99th percentile latency of 28.16ms for 4VM, 38.87ms for 8VM, 67.19ms for 16VM, and 93.52ms for 24VM.

VDBench Workload Analysis

When it comes to benchmarking storage arrays, application testing is best, and synthetic testing comes in second place. While not a perfect representation of actual workloads, synthetic tests do help to baseline storage devices with a repeatability factor that makes it easy to do apples-to-apples comparisons between competing solutions. These workloads offer a range of testing profiles, including "four corners" tests, common database transfer size tests, and trace captures from different VDI environments. All of these tests leverage the common vdBench workload generator, with a scripting engine to automate and capture results over a large compute testing cluster. This allows us to repeat the same workloads across a wide range of storage devices, including flash arrays and individual storage devices. On the array side, we use our cluster of Dell PowerEdge R740xd servers:

Profiles:

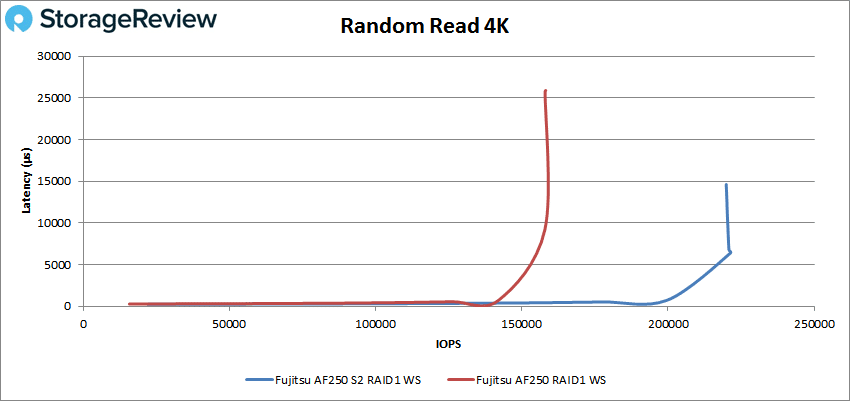

- 4K Random Read: 100% Read, 128 threads, 0-120% iorate

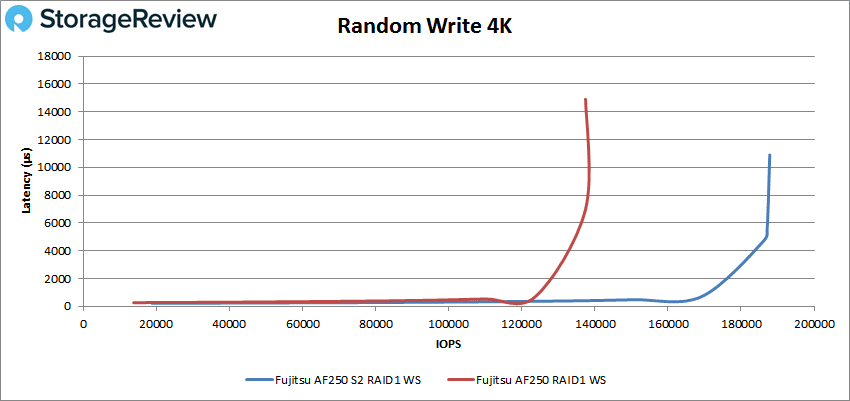

- 4K Random Write: 100% Write, 64 threads, 0-120% iorate

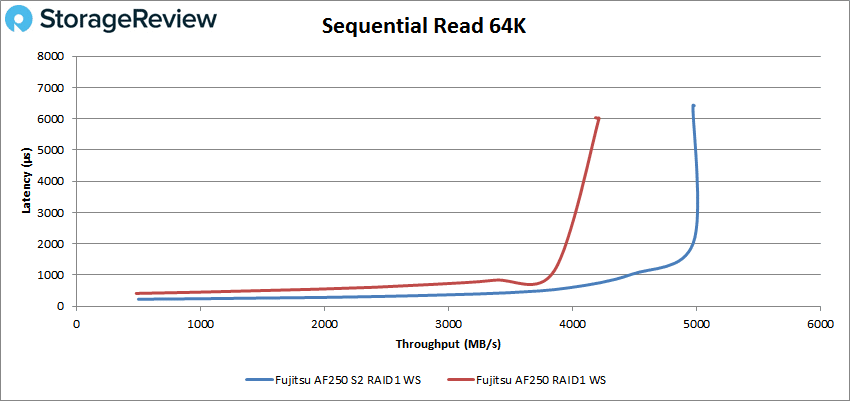

- 64K Sequential Read: 100% Read, 16 threads, 0-120% iorate

- 64K Sequential Write: 100% Write, 8 threads, 0-120% iorate

- Synthetic Database: SQL and Oracle

- VDI Full Clone and Linked Clone Traces

In 4K peak read performance, the AF250 S2 had sub-millisecond latency performance up until nearly 200K IOPS. The array peaked at 221,327 IOPS with a latency of 6.38ms before dropping slightly and spiking in latency. This is a massive improvement in performance versus the older model that had peak performance of 158K IOPS at a latency of 25.77ms.

In 4K peak write performance, the AF250 S2 had performance under 1ms until it hit roughly 170K IOPS. The S2 peaked at 187,167 IOPS with latency popping up to 10.9ms. The previous model had a peak of 137K IOPS with a latency of 14.89ms.

Switching to 64K sequential peak read, the AF250 S2 had sub-millisecond latency up to about 71K IOPS or about 4.4GB/s, surpassing the previous model's peak performance of 4.21GB/s. The S2 peaked at 79,495 IOPS or 4.97GB/s with a latency of 6.43ms.

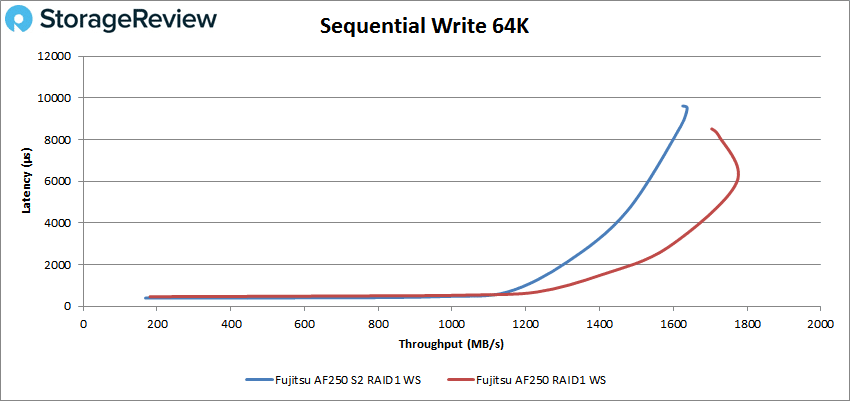

For 64K sequential peak write, the AF250 S2 actually fell behind the previous model. It had sub-millisecond latency until just under 20K IOPS or 1.1GB/s, peaking at 26,201 IOPS or 1.64GB/s with a latency of 9.6ms. In contrast, the originally tested model had peak performance of 27,623 IOPS at 8.5ms latency and 1.77GB/s bandwidth.
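Bandwidth for a fixed-block workload is simply IOPS multiplied by transfer size. The sketch below assumes 64KiB transfers and reports thousands of MiB/s; we inferred that unit convention because it reproduces the S2's published 64K read and write peaks, so treat it as an illustration rather than a description of how the benchmark tooling computes throughput.

```python
# Sketch: converting 64K IOPS into the bandwidth figures quoted in this review.
# Assumption: "64K" means 64 KiB transfers, and the review's GB/s values
# correspond to thousands of MiB/s (this reproduces the S2's published peaks).

BLOCK_KIB = 64


def iops_to_gbps(iops: float, block_kib: int = BLOCK_KIB) -> float:
    """Bandwidth in thousands of MiB per second for a given IOPS rate."""
    mib_per_s = iops * block_kib / 1024  # KiB/s -> MiB/s
    return mib_per_s / 1000


for label, iops in [("64K read peak", 79_495), ("64K write peak", 26_201)]:
    print(f"{label}: {iops:,} IOPS = {iops_to_gbps(iops):.2f} GB/s")
```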

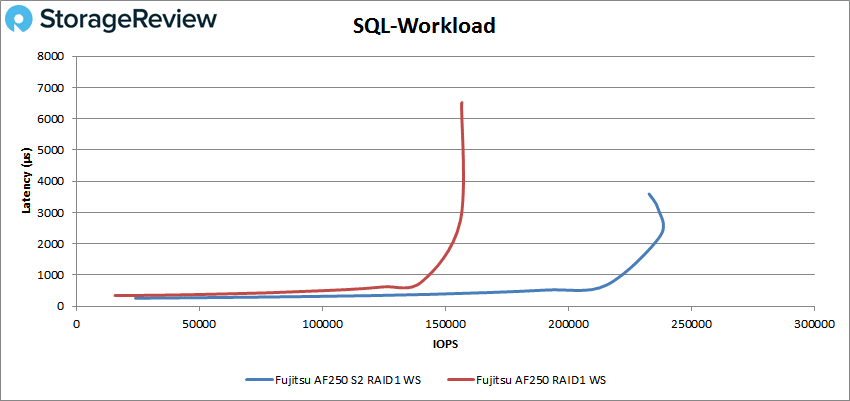

In our SQL workload, the AF250 S2 made it to nearly 217K IOPS before going over 1ms and peaked at about 238K IOPS with a latency of 2.26ms before dropping off some in performance and going up in latency. The previous model peaked at 156,638 IOPS at 6.48ms latency.

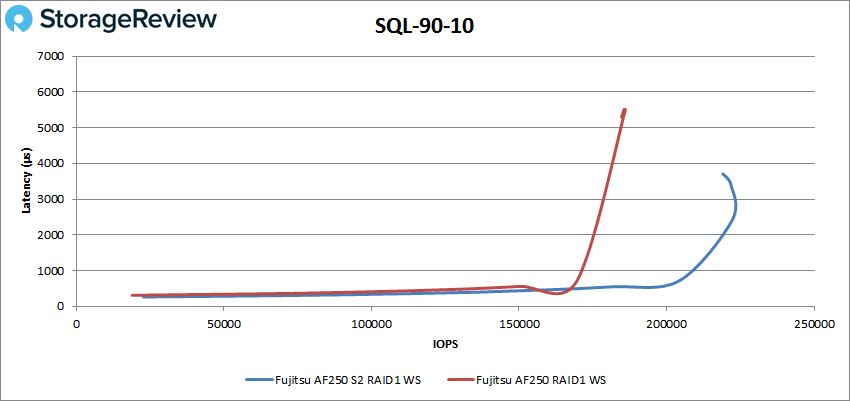

In the SQL 90-10 benchmark, the AF250 S2 stayed under 1ms until about 206K IOPS and peaked at just over 222K IOPS with 2.41ms latency before dropping off some. The previous model peaked at 186,021 IOPS at 5.5ms latency.

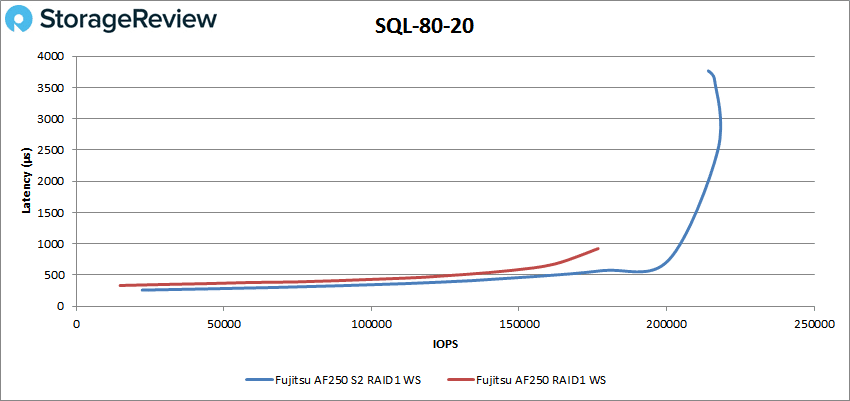

The SQL 80-20 benchmark showed the older model once again outperforming the S2, this time on latency. Here the AF250 S2 had sub-millisecond latency until about 201K IOPS and peaked at about 218K IOPS with a latency of 2.5ms. The previous model did not reach as high a peak (176,789 IOPS) but maintained sub-millisecond latency throughout.

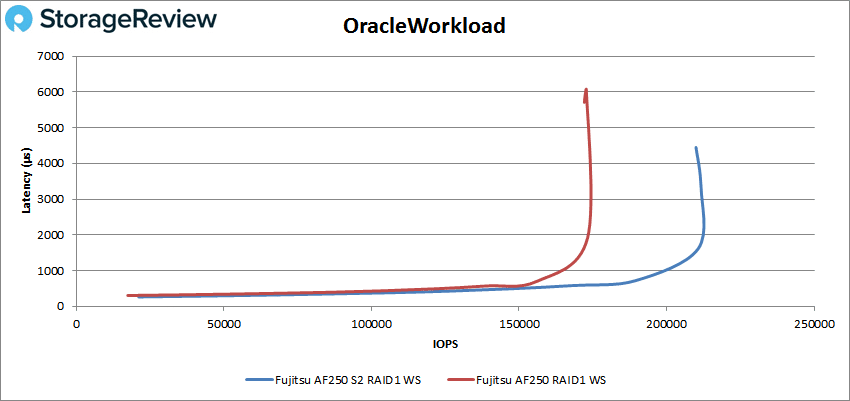

With the Oracle Workload, the AF250 S2 stayed under 1ms until just shy of 200K IOPS with a peak performance of about 212K IOPS with a latency of 3.3ms compared to the older model’s 172,811 IOPS with a latency of 6.05ms.

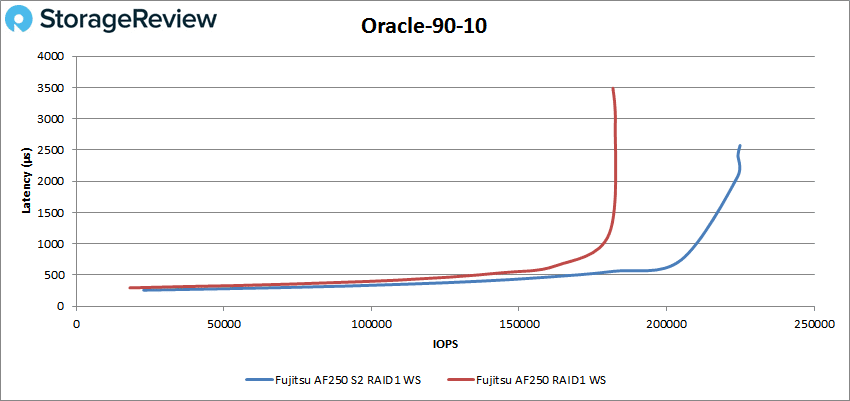

The Oracle 90-10 showed the AF250 S2 with sub-millisecond latency until about 206K IOPS, peaking at nearly 225K IOPS with a latency of 2.1ms. The previous model peaked at 181,813 IOPS at 3.5ms latency.

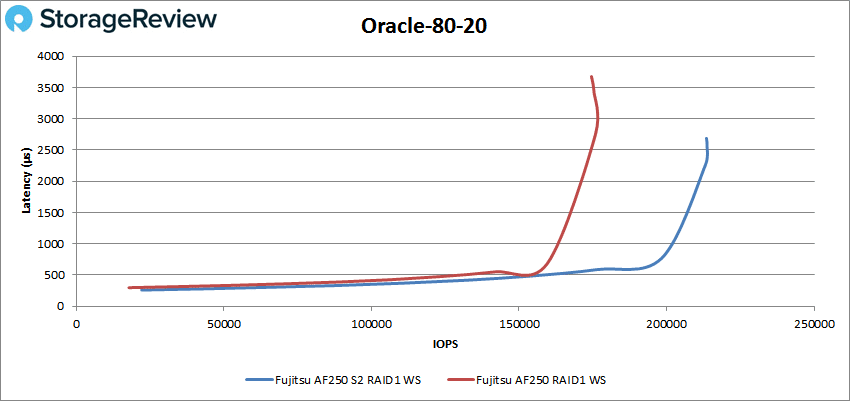

The AF250 S2 was able to reach just shy of 200K IOPS under 1ms in the Oracle 80-20 benchmark. The array peaked at about 214K IOPS with a latency of 2.3ms, compared to the earlier array's peak of 174,519 IOPS with a latency of 3.68ms.

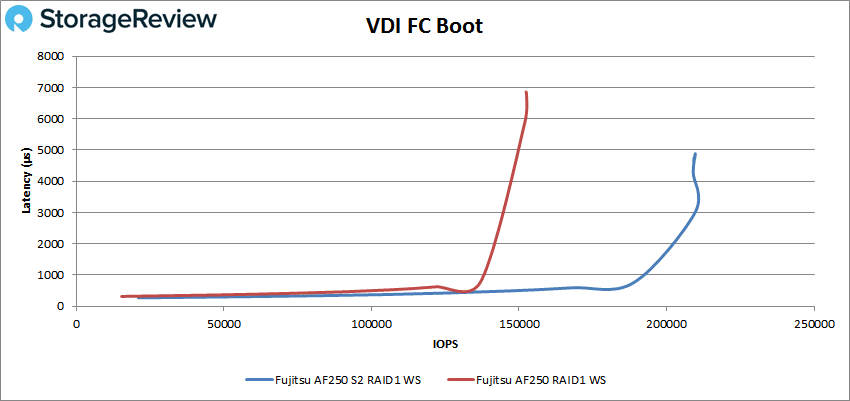

Next, we switched over to our VDI clone tests, Full and Linked. For VDI Full Clone Boot, the AF250 S2 had latency under 1ms until it hit about 190K IOPS and peaked at about 210K IOPS with a latency of 3ms. The previous model peaked at 152,469 IOPS with a latency of 6.84ms.

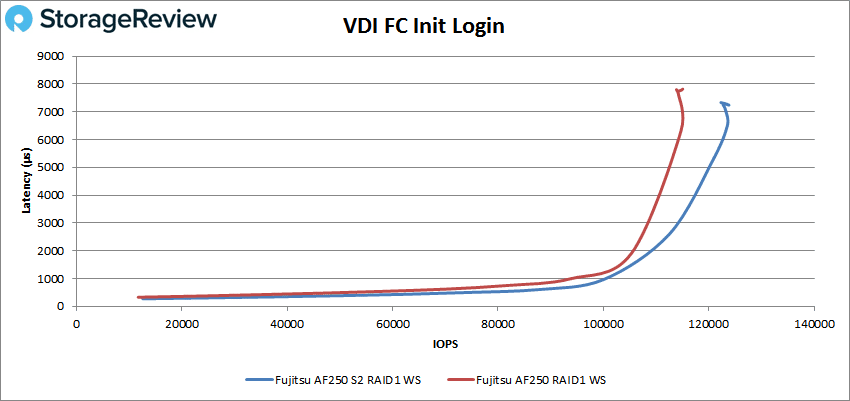

The VDI Full Clone initial login saw the AF250 S2 make it to just over 100K IOPS before breaking 1ms. The array peaked at 123,835 IOPS with a latency of 7.24ms compared to the older version’s 113,865 IOPS at 7.8ms latency.

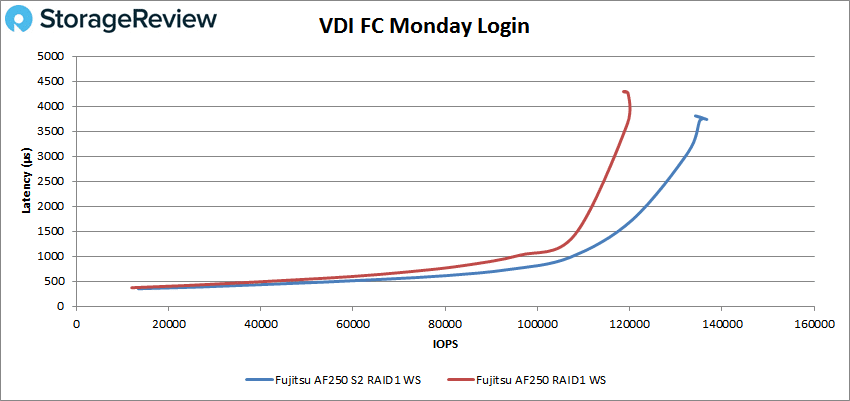

The VDI Full Clone Monday login had the AF250 S2 run sub-millisecond latency until about 108K IOPS and peak at 135,978 IOPS with a latency of 3.76ms. The previous model topped out at 118,884 IOPS at 4.3ms latency.

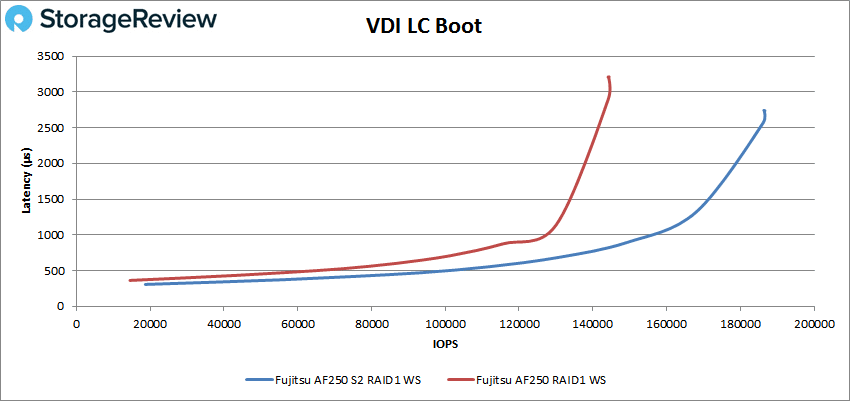

Moving over to VDI Linked Clone, the boot test showed sub-millisecond latency performance until the AF250 S2 hit over 150K IOPS and a peak of 186,477 IOPS with a latency of 2.74ms. The previous model hit 144,317 IOPS with a latency of 3.2ms.

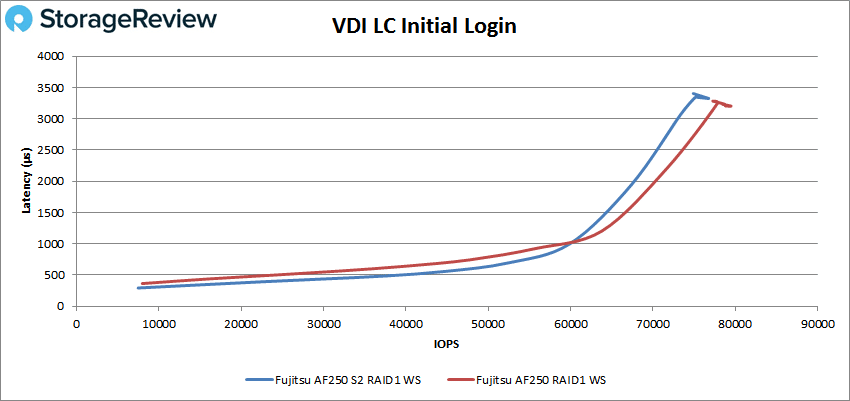

In the Linked Clone VDI profile measuring Initial Login performance, the AF250 S2 broke above 1ms at about 60K IOPS along with the older model. There the S2 peaked at a lower number than the original with 77,278 IOPS versus 79,496 IOPS and 3.3ms latency versus 3.2ms.

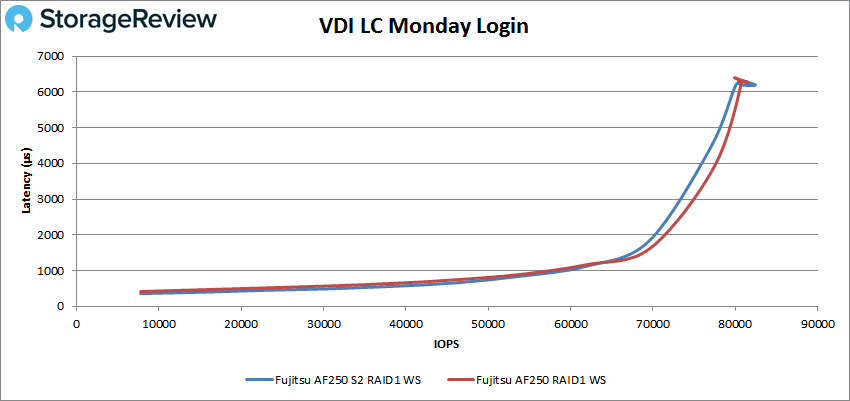

In our last profile looking at VDI Linked Clone Monday Login performance, both AF250 arrays ran neck and neck, breaking 1ms latency at about 55K IOPS. The S2 was able to just edge out the older version with 82,460 IOPS at 6.19ms latency versus 80,703 IOPS at 6.27ms latency.

Conclusion

Fujitsu has made a handful of changes under the hood to its ETERNUS AF250 array with the new AF250 S2. It still comes in the same 2U form factor and supports the same total number of drives (48 with expansion, equaling 737TB total when using 15.36TB drives). The design and management are also the same; however, Fujitsu added Intel Skylake CPUs, optimized its flash storage software, and made enhancements on both the controller and OS side.

Looking at the performance of our Application Workload Analysis, we saw a big jump in performance with the S2. In our SQL Server benchmarks, the new array had slightly better transactional performance (12,638.3 versus 12,622.1 TPS) but less than half the latency (4.2ms versus 9.8ms). In Sysbench we also saw improvements across the board, with the S2 outperforming the older model at every VM count and posting TPS scores ranging from 8,336 for 4VM up to 17,237 for 24VM. For average latency, the S2 had 15.36ms for 4VM, 20.57ms for 8VM, 32.87ms for 16VM, and only 44.59ms for 24VM. In our worst-case scenario latency, the S2 posted 28.16ms for 4VM, 38.87ms for 8VM, 67.19ms for 16VM, and 93.52ms for 24VM.

Looking at VDBench tests of its raw storage in RAID1 wide-striped, for the most part we saw significant improvements in performance for the AF250 S2 over the older version of the array; in several instances, the new version's sub-millisecond performance exceeded the older version's peak performance. In our 4K read test we saw a performance improvement of over 63K IOPS, with peak performance of 221,327 IOPS. In 4K write we saw a 50K IOPS peak improvement, with the S2 hitting 187K IOPS. For 64K sequential read, the AF250 S2 peaked at 4.97GB/s. In the SQL workloads, the AF250 S2 hit 238K IOPS, 222K IOPS for the 90-10, and 218K IOPS for the 80-20. Oracle showed strong numbers with 212K IOPS, 225K IOPS for the 90-10, and 214K IOPS for the 80-20. For our VDI Clone benchmarks (Boot, Initial Login, and Monday Login), the AF250 S2 hit 210K IOPS, 124K IOPS, and 136K IOPS for full clone and 186K IOPS, 77K IOPS, and 82K IOPS for linked clone. There were a handful of tests where the previous model came out ahead: it posted higher peaks in 64K write and VDI LC Initial Login, and in SQL 80-20 its latency was much better, though its peak performance was lower.
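The snippet below tabulates the peak-IOPS differences behind these observations. It is a simple illustration that uses only profiles for which this review reports an exact S2 peak; the original model's 4K peaks are the rounded values (158K and 137K) given earlier, so those two deltas are approximate.

```python
# Sketch: peak-IOPS deltas between the AF250 S2 and the original AF250,
# using only profiles where this review gives an exact S2 peak.
# The 4K figures for the original model are rounded (158K and 137K) in the text.

peaks = {
    # profile: (original AF250, AF250 S2)
    "4K random read": (158_000, 221_327),
    "4K random write": (137_000, 187_167),
    "64K sequential write": (27_623, 26_201),
    "VDI FC initial login": (113_865, 123_835),
    "VDI FC Monday login": (118_884, 135_978),
    "VDI LC boot": (144_317, 186_477),
    "VDI LC initial login": (79_496, 77_278),
    "VDI LC Monday login": (80_703, 82_460),
}

for profile, (old, new) in peaks.items():
    delta = new - old
    # A negative delta marks a profile where the original model peaked higher
    print(f"{profile:<22} {delta:+8,} IOPS ({delta / old * 100:+.1f}%)")
```

Only the 64K sequential write and VDI LC initial login rows come out negative, matching the exceptions noted above.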

With just a few minor tweaks under the hood, Fujitsu was able to get an appreciable performance bump out of its ETERNUS AF250 all-flash storage array. While not record-setting, the AF250 S2 packs ample performance for small to medium business users.
