CTERA has introduced Fusion Direct, a federated data architecture designed to collapse the long-standing gap between enterprise file systems and object storage. The new offering extends the CTERA Fusion family, which already includes CTERA Fusion Gateway, and is positioned as a core component of the CTERA Intelligent Data Platform. The goal is to present a single, high-performance data fabric that can serve both human-centric file workloads and machine-driven AI pipelines without duplicating data or refactoring applications.

Unifying File and Object Under One Namespace

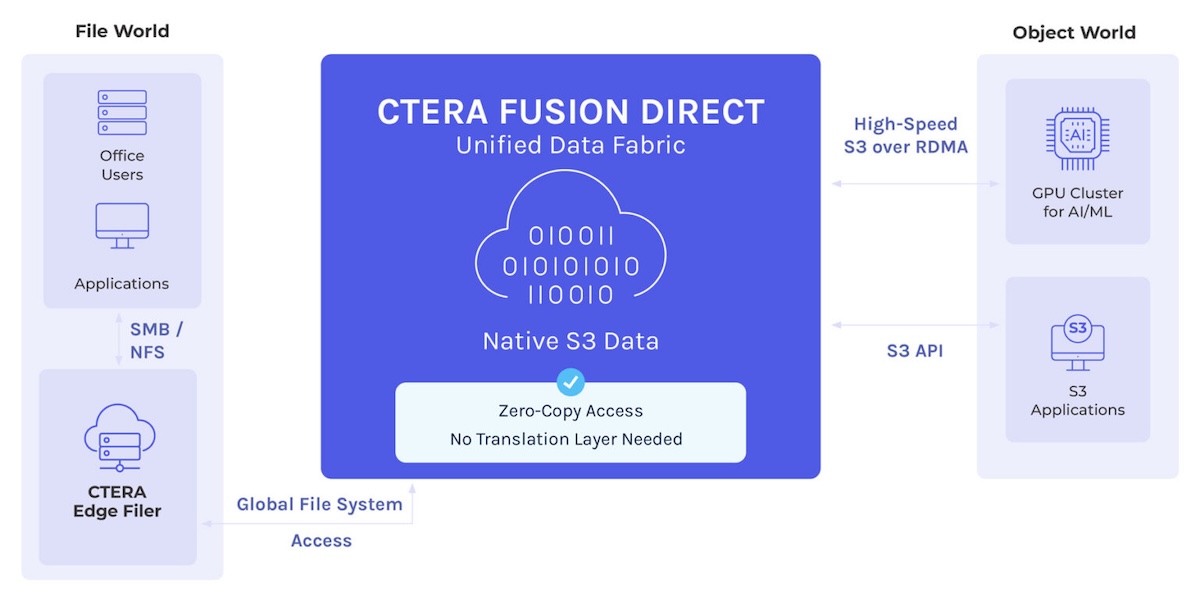

Enterprise IT teams have traditionally been forced to operate two separate storage domains. NAS systems provide SMB and NFS access for user collaboration and legacy or line-of-business applications. Object storage, typically accessed via S3, is used for large-scale, cloud-native, and analytics workloads. Bridging these environments has often meant standing up parallel infrastructures, copying data between them, or relying on gateways that translate file calls to object APIs. Those translation layers can add latency, complexity, and operational risk, especially at scale.

Fusion Direct targets that problem by exposing a single federated global namespace in which files and objects coexist natively. Data can be written to the platform as files and read back as objects, or written as objects and read as files. The system supports full bidirectional read and write behavior without converting files into proprietary object chunks or routing access through a separate translation gateway. CTERA is explicit that there is no file-to-object conversion bottleneck and no proprietary encapsulation scheme in play.

From an access perspective, existing enterprise applications and users continue to operate over SMB and NFS as they do today. At the same time, AI training clusters, HPC environments, and cloud-native services can access the same datasets via S3 and S3 over RDMA, which is designed to deliver line-rate throughput to GPU clusters and other high-performance compute environments.
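To make the dual-access idea concrete, here is a minimal Python sketch of how one dataset might be addressed both as a file path and as an S3 URI. The mount point `/mnt/fusion`, the convention that the first folder under the mount corresponds to the bucket name, and both helper functions are assumptions made purely for illustration. CTERA has not published this mapping, and the actual layout may differ.

```python
from pathlib import PurePosixPath

# Hypothetical NFS mount point for the global namespace (an assumption,
# not a documented CTERA default).
MOUNT_ROOT = PurePosixPath("/mnt/fusion")


def file_path_to_s3_uri(path: str) -> str:
    """Map a file path under the mount to the S3 URI of the same data.

    Assumes the first folder under the mount is the bucket name and the
    rest of the path is the object key.
    """
    # relative_to raises ValueError if the path is outside the mount.
    relative = PurePosixPath(path).relative_to(MOUNT_ROOT)
    if len(relative.parts) < 2:
        raise ValueError("path must include a bucket folder and an object key")
    bucket, *key_parts = relative.parts
    return f"s3://{bucket}/{'/'.join(key_parts)}"


def s3_uri_to_file_path(uri: str) -> str:
    """Inverse mapping: an S3 URI back to the file path under the mount."""
    prefix = "s3://"
    if not uri.startswith(prefix):
        raise ValueError("expected an s3:// URI")
    bucket, _, key = uri[len(prefix):].partition("/")
    if not bucket or not key:
        raise ValueError("URI must include both a bucket and an object key")
    return str(MOUNT_ROOT / bucket / key)
```

Under these assumptions, a user saving `/mnt/fusion/training-data/images/cat.jpg` over NFS and a training job reading `s3://training-data/images/cat.jpg` would be touching the same data, with no copy step in between.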

Leveraging Existing Object Stores and Distributed Edge

An important design point is the ability to attach existing S3 buckets directly to the Fusion Direct data fabric. Rather than migrating or rehydrating object data into a new system, organizations can present their current object storage namespaces as part of the global file/object space. Once attached, the objects in those buckets can be accessed as standard files across edge locations and multi-cloud deployments.

This approach allows IT teams to expose object data as files to users and applications globally, while still presenting it natively as S3 to AI and analytics workloads. It also reduces the need for duplicate infrastructure that would otherwise be deployed in multiple regions or edge sites to stage or reformat data. The net effect is a simpler storage footprint for distributed datasets spanning multiple geographies.

Architecture for AI-Era Performance

CTERA Fusion Direct builds on CTERA’s existing Intelligent Data Platform stack and is backed by U.S. Patent 12,007,952. The architecture emphasizes simultaneous support for collaborative file workloads and high-throughput, machine-scale data consumption.

One core capability is native zero-copy access. Data written to the CTERA platform as files is immediately available as standard S3 objects, without any secondary copies or background conversions. Conversely, S3 buckets can be connected in place, and their contents become immediately addressable as files in the global namespace. This is intended to avoid both latency and storage overhead associated with duplicate copies or intermediate caches.

High-speed file streaming is another focus area. Large media assets, training datasets, and other capacity-heavy content can be streamed directly from object storage to file-based applications. This approach eliminates the need for bulk local downloads or staging steps, which can slow workflows and consume additional storage at the edge or in compute clusters.

On the performance side, Fusion Direct exposes native objects in ways that support S3-over-RDMA and GPU-direct access patterns. For AI clusters, that means GPUs can read and write data at or near wire speed from object-backed datasets without additional protocol translation in the data path. This is particularly relevant for training and inference jobs constrained by I/O throughput rather than raw compute.

CTERA also calls out data sovereignty considerations. Because data resides in standard S3 buckets without proprietary wrapping or gateways that own the metadata, organizations retain control of their information across on-premises deployments and public clouds. The architecture is intended to minimize data-layer lock-in and preserve flexibility as infrastructure strategies evolve.

Collapsing Human and Machine Data Silos

CTERA CEO Oded Nagel says the main barrier to enterprise AI adoption is not a shortage of data but the difficulty of using it effectively. He argues that separating data for human use from data for machine analytics creates friction, and that maintaining separate environments and datasets slows AI deployment. Nagel proposes combining the two in a single platform that supports SMB/NFS and S3 over RDMA, giving enterprises a direct path from raw data to AI-ready datasets. In his view, a unified platform can help organizations make better use of their data and stay competitive in a market driven by machine learning and automation.

Availability

CTERA Fusion Direct is available now as part of the CTERA Intelligent Data Platform and is positioned as a core component of the broader CTERA Fusion family for file and object unification. For more information, please visit CTERA.
