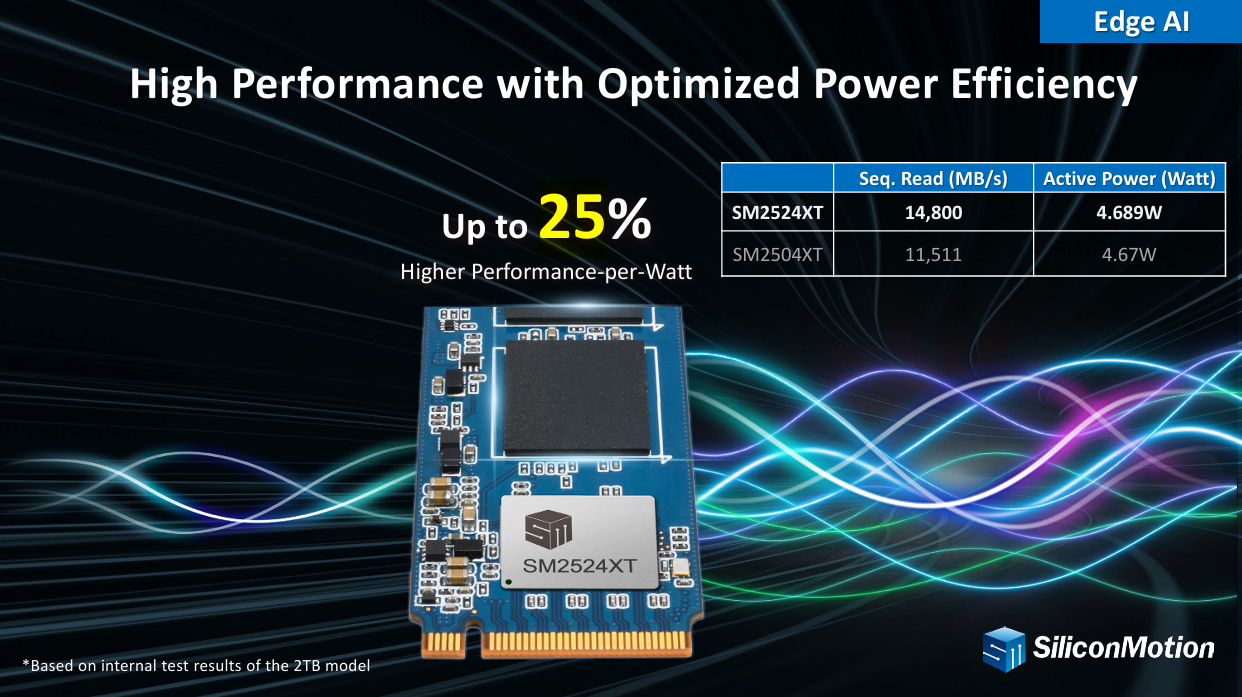

Silicon Motion has introduced the SM2524XT, a PCIe Gen5 DRAM-less SSD controller targeting AI PCs, edge AI systems, and workloads centered on local AI inference. The controller is engineered to meet the storage demands of KV-cache-intensive workloads, where sustained random I/O performance and low-latency access are increasingly critical for continuous inference. Silicon Motion says
