At MWC 2026 in Barcelona, AMD highlighted how its portfolio is helping telecom operators move from AI pilots to production deployments as they transition from traditional RAN to open, virtualized architectures. The company is positioning a combination of open software stacks, GPUs, CPUs, networking, and adaptive computing to support distributed, telco-grade AI across core, edge, and RAN environments.

The underlying challenge for operators is not just model development but also running AI reliably at scale across live networks. That requires an open ecosystem, support for industry collaboration, and infrastructure tuned for constrained, often rugged edge sites.

Open Telco AI: Collaborative Approach to Telco-Grade Models

Telco networks are complex, mission-critical systems that increasingly rely on AI for dynamic optimization, automation, and resilience. Despite rapid advances in AI, GSMA data shows that only a small fraction of generative AI deployments are currently used in networks, underscoring a gap between general-purpose AI progress and telco-specific adoption.

To address this, AMD is participating in Open Telco AI, a new global initiative led by the GSMA and announced at MWC. Founding collaborators include AT&T, TensorWave, AMD, and other industry stakeholders. The initiative aims to accelerate the development of telco-grade AI models and systems through open collaboration.

A central component is the launch of the open-telco.ai portal, which is intended to serve as a shared hub for operators, vendors, researchers, and developers. The portal aggregates datasets, tools, benchmarks, and other shared resources to build and validate models that address telco-specific requirements such as network optimization, anomaly detection, and operational automation.

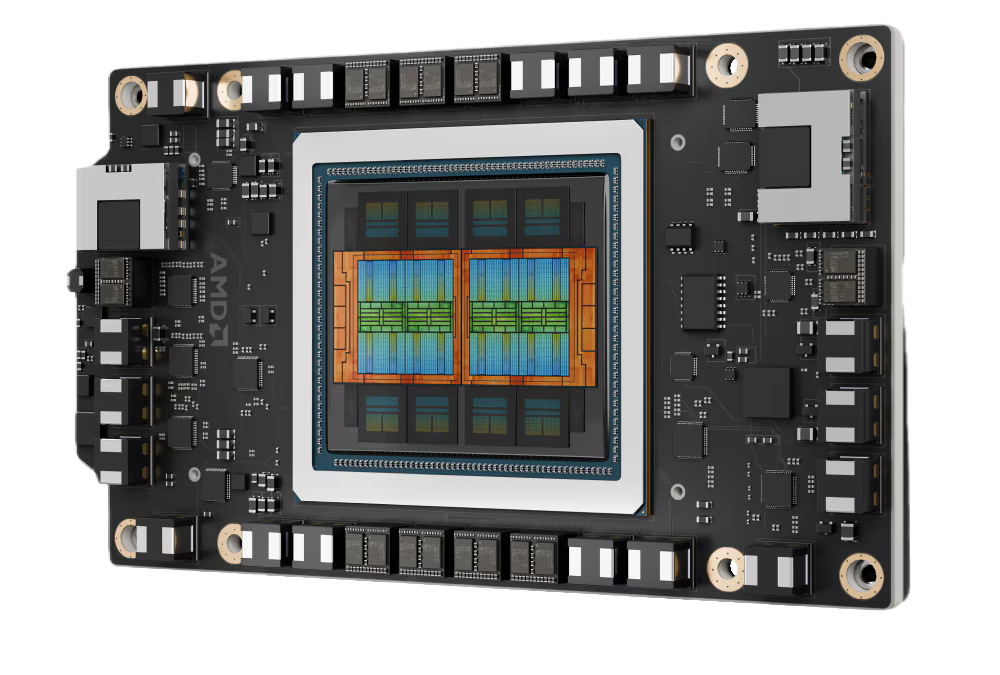

As part of the collaboration, AT&T is contributing open-telco models, AMD is providing compute, and TensorWave is offering hosting infrastructure. The Open Telco AI models are trained on AMD Instinct GPUs, turning shared datasets and benchmarks into practical, telco-focused models that others in the ecosystem can reuse and extend. These GPUs are paired with the AMD ROCm software stack, an open platform for training and inference that supports rapid iteration from experimentation through validation.

Operationalizing Telco AI with AMD Enterprise AI Suite

Developing domain-specific models is only one step in deploying AI in live networks. Operators also need a production software layer that can turn models into governed, observable, and repeatable services, integrated with existing operational processes.

The AMD Enterprise AI Suite is designed to serve as that production layer. It connects open-source AI frameworks and generative AI models with an enterprise-ready platform tuned for AMD compute, particularly GPU-based infrastructure. The suite integrates components for model serving, validated workflows, governance capabilities, and developer environments, all intended for organizations operating GPU clusters at scale.

Architecturally, the Enterprise AI Suite takes a Kubernetes-native, container-based approach. This aligns with the DevOps and MLOps practices already in place at large operators and supports multi-team use, security controls, and lifecycle management. For telco environments, this combination provides a path from domain-trained Open Telco AI models to operational services such as network automation, capacity planning, and operational intelligence, without locking operators into a proprietary AI platform.
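
To make the Kubernetes-native, container-based idea concrete, the following is a minimal sketch of how a containerized model server might be described as a standard Kubernetes Deployment that requests AMD GPUs. It is not taken from the AMD Enterprise AI Suite, and it does not represent the suite's own configuration format. The function name, the deployment name, and the container image are hypothetical placeholders. The `amd.com/gpu` resource name assumes the cluster runs the AMD GPU device plugin for Kubernetes.

```python
import json


def build_inference_deployment(name, image, gpus=1, replicas=2):
    """Return a Kubernetes Deployment manifest (as a dict) for a GPU-backed model server.

    All argument values are illustrative; nothing here is specific to any AMD product.
    """
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "Deployment",
        "metadata": {"name": name, "labels": labels},
        "spec": {
            "replicas": replicas,
            # The selector must match the pod template labels below.
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [
                        {
                            "name": "model-server",
                            "image": image,
                            # Assumption: GPUs are exposed as "amd.com/gpu"
                            # by the AMD GPU device plugin on the cluster.
                            "resources": {"limits": {"amd.com/gpu": gpus}},
                        }
                    ]
                },
            },
        },
    }


# Hypothetical deployment name and image, used only for illustration.
manifest = build_inference_deployment(
    name="telco-anomaly-detector",
    image="registry.example.com/telco/anomaly-detector:0.1",
)
print(json.dumps(manifest, indent=2))
```

Because the output is plain JSON, it could be saved to a file and applied with `kubectl apply -f`, or checked into version control alongside other manifests, which is the kind of workflow that existing DevOps and MLOps practices already rely on.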

Edge and vRAN Foundation with AMD EPYC 8005 Server CPUs

As operators scale open and virtualized RAN deployments, they face tight constraints on power, space, and cooling at distributed edge sites, along with the need for deterministic performance and long-term platform stability. These factors place specific requirements on the CPU platforms that support vRAN and other edge workloads.

The recently announced AMD EPYC 8005 Server CPUs are designed for these challenging edge environments. They deliver high compute density to support virtual RAN workloads, including compute-intensive Layer 1 processing. This focus on L1 is critical for meeting real-time and low-latency requirements in 5G and future RAN implementations.

The EPYC 8005 family also supports wide thermal operating ranges, which is important for outdoor and non-traditional locations where environmental conditions cannot always be tightly controlled. This enables OEMs to design and certify NEBS-compliant platforms for ruggedized and outdoor telco deployments, as well as compact, small-form-factor systems for space-constrained sites.

By combining these characteristics, the EPYC 8005 platform is intended to provide operators with a CPU foundation that addresses real-world deployment constraints for commercial vRAN, while still delivering the performance required for higher-layer network functions and edge workloads.

End-to-End View: From Open Models to Distributed Infrastructure

Across Open Telco AI, the Enterprise AI Suite, and the EPYC 8005 edge platform, AMD is presenting an end-to-end approach for telco AI at MWC 2026 that includes:

- Open collaboration on telco-specific models via Open Telco AI and the open-telco.ai portal, powered by AMD Instinct GPUs and ROCm.

- A production software stack in the AMD Enterprise AI Suite that operationalizes those models on Kubernetes-based infrastructure aligned with telco DevOps and MLOps practices.

- Edge-optimized compute in the form of EPYC 8005 CPUs designed for vRAN and distributed telco deployments with strict power, space, and environmental requirements.

For operators working to move AI and open RAN initiatives from proof of concept to large-scale production, the focus is on enabling telco-grade performance and reliability while leveraging open models, open software, and scalable, efficient infrastructure. At MWC 2026, AMD is using these announcements and collaborations to position its hardware and software stack as a foundation for that transition.
