The TYAN Transport CX GC68A-B8036 barebone server is designed to excel in most high-performance cloud-computing environments, supporting the newest generation of AMD processors (AMD EPYC 7002 and 7003, up to 240W). Because this compact, single-socket 1U server leverages the new AMD technology, TYAN indicates that the GC68A-B8036 offers scalable 32 and 64-bit computing, a high-bandwidth memory design, and a speedy PCI-E bus implementation.

The TYAN Transport CX GC68A-B8036 also supports up to two PCIe 4.0 add-in cards via preinstalled riser cards at the back panel, for a potentially significant increase in performance compared to the last-gen model. It also offers two GbE ports and a management GbE port dedicated to IPMI.

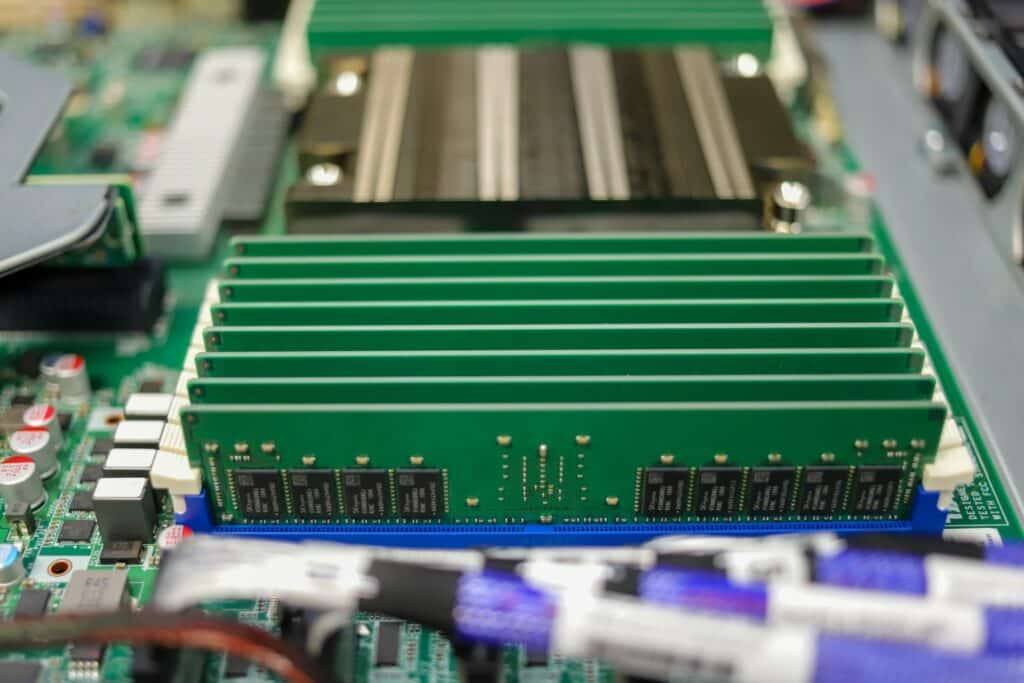

Moreover, support for the new EPYC CPUs means TYAN was able to add sixteen DIMM slots to the server for a total of up to 4,096GB (4TB) of LRDIMM or 3DS DDR4 RAM (up to 2,048GB when using RDIMM). Our review build includes 256GB of DDR4 3200MHz DRAM, populating all 16 DIMM slots with 16GB modules.
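
The capacity figures above are simply slot count multiplied by module size. A minimal Python sketch of that arithmetic follows; the function name `max_capacity_gb` is our own and purely illustrative, while the slot count and module sizes come from the spec sheet.

```python
DIMM_SLOTS = 16  # DIMM slots listed in the spec sheet


def max_capacity_gb(module_gb: int, slots: int = DIMM_SLOTS) -> int:
    """Total memory in GB when every slot holds a module of the given size."""
    return module_gb * slots


# Module sizes taken from the spec sheet and the review build.
for label, module_gb in [("LRDIMM / 3DS max", 256), ("RDIMM max", 128), ("Review build", 16)]:
    print(f"{label}: {max_capacity_gb(module_gb):,} GB")
```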

For storage, the server can house up to twelve NVMe U.2 SSDs (or a blend of NVMe and SATA drives) via its hot-swappable tool-less drive bays. The CX GC68A-B8036 is equipped with two internal NVMe/SATA M.2 slots for boot drives. For input, it comes equipped with five USB 3.1 Gen1 ports, including one with Type-A connectivity, and the usual VGA and COM ports.

We were shipped the B8036G68AE12HR model, which handles both SATA and NVMe drives. Our test build includes an AMD EPYC Gen3 7763 processor and eight Intel P5510 7.68TB Gen4 SSDs.

TYAN Transport CX GC68A-B8036 Specifications

| Category | Item | Specification |
|---|---|---|
| System | Form Factor | 1U Rackmount |
| | Chassis Model | GC68A |
| | Dimension (D x W x H) | 26.77″ x 17.26″ x 1.69″ (680 x 438.5 x 43mm) |
| | Motherboard Name | S8036GM2NE |
| | Gross Weight | 20 kg (44 lbs) |
| | Net Weight | 10.5 kg (23.5 lbs) |
| Front Panel | Buttons | (1) ID / (1) PWR w/ LED |
| | LEDs | (1) ID / (1) PWR |
| | I/O Ports | (1) USB 3.1 Gen.1 port |
| External Drive Bay | Q’ty / Type | (12) 2.5″ hot-swap NVMe HDD/SSDs with (4) 2.5″ hot-swap SATA 6Gb/s |
| | HDD Backplane Support | SAS 12Gb/s / SATA 6Gb/s / NVMe |
| | Supported HDD Interface | (4) SATA 6Gb/s & SAS* / (12) NVMe |
| | Notification | The SAS/SATA HDD backplane is connected to onboard SATA connection by default. Please contact Tyan technical support if a discrete SAS HBA/RAID adapter is required. |

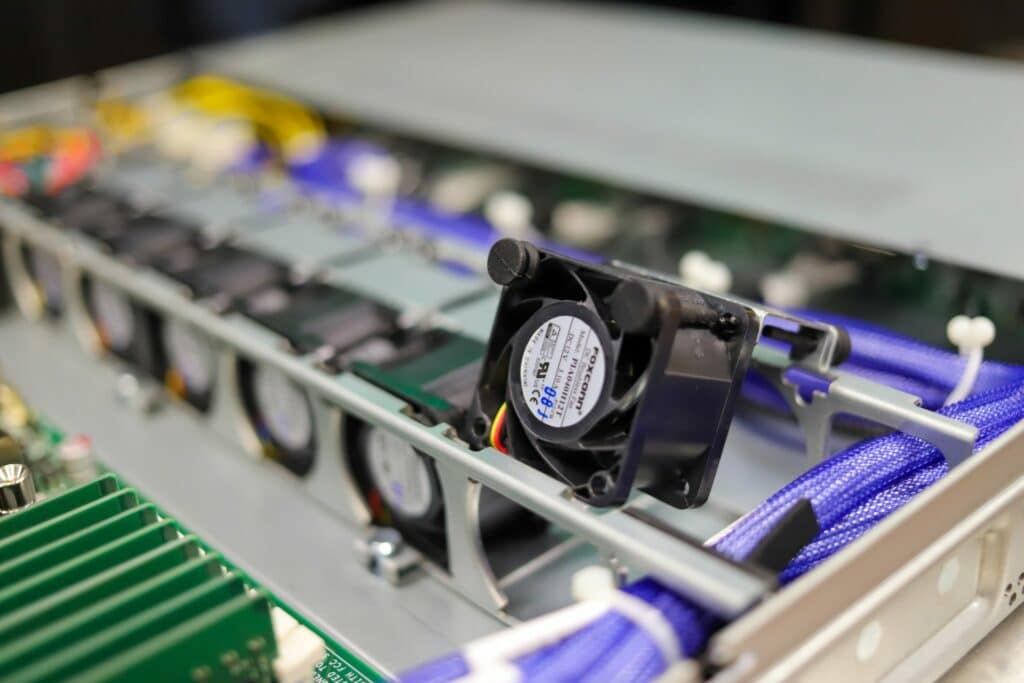

| Category | Item | Specification |
|---|---|---|
| System Cooling Configuration | FAN | (5) 4028 + (1) 4056 fans |
| Power Supply | Type | CRPS |
| | Input Range | AC 100~240V/12~6A |
| | Output Watts | 850 Watts |
| | Efficiency | 80 plus Platinum |
| | Redundancy | 1+1 |
| Processor | Q’ty / Socket Type | (1) AMD Socket SP3 |
| | Supported CPU Series | (1) AMD EPYC™ 7002/7003 Series Processor |
| | Thermal Design Power Wattage | Max up to 240W (cTDP) |
| Memory | Supported DIMM Qty | (16) DIMM slots |
| | DIMM Type / Speed | RDIMM DDR4 3200 w/ ECC up to 2,048GB (128GB*16) / LRDIMM DDR4 3200 w/ ECC up to 4,096GB (256GB*16) / 3DS DDR4 3200 w/ ECC up to 4,096GB (256GB*16) |
| | Capacity | Up to 2,048GB RDIMM / 4,096GB LRDIMM 3DS |
| | Memory channel | 8 Channels per CPU |
| | Memory voltage | 1.2V |
| Expansion Slots | PCIe | (1) PCIe Gen.4 x16 slot (FH/HL) / (1) PCIe Gen.4 x16 slot (HH/HL) |
| | Pre-installed TYAN Riser Card (PCIe Gen.4) | (1) M8036-L16-1F for (1) HH/HL PCIe Gen.4 x16 slot (Left) / (1) M8036-R16-1F for (1) FH/HL PCIe Gen.4 x16 slot (Right) |
| | Others | (1) PCIe Gen.3 x16 OCP v2.0 mezzanine slot |
| | Physical Dimension Abbreviation | HH/HL (Half-height / Half-length): 2.7″ x 6.6″ (68.9 x 167.7mm) / FH/HL (Full-height / Half-length): 4.4″ x 6.6″ (111.2 x 167.7mm) |
| LAN | Q’ty / Port | (2) GbE ports + (1) GbE dedicated for IPMI |
| | Controller | Broadcom BCM5720 |
| | PHY | Realtek RTL8211E |
| Storage SATA | Connector | (4) 7-pin SATA for (4) front SATA ports |
| | Controller | Marvell 9235 |
| | Speed | 6Gb/s |
| | RAID | N/A |
| Storage NVMe | Connector (M.2) | (2) 22110/2280 (by PCIe Gen.3 & SATA interface) |
| | Connector (U.2) | (6) SFF-8654 for (12) NVMe ports |
| Graphic | Connector type | D-Sub 15-pin |
| | Resolution | Up to 1920×1200 |
| | Chipset | Aspeed AST2500 |
| I/O Ports | USB | (1) USB3.1 Gen.1 port (Type-A) / (2) USB3.1 Gen.1 ports (via Cable) / (2) USB3.1 Gen.1 ports (@ rear) |
| | COM | (1) DB-9 port (COM1) + (1) header (COM2) |
| | VGA | (1) D-Sub 15-pin port |
| | RJ-45 | (2) GbE ports + (1) dedicated GbE for IPMI |
| TPM (Optional) | TPM Support | Please refer to our TPM supported list. |
| System Monitoring | Chipset | Aspeed AST2500 |
| | Temperature | Monitors temperature for CPU & memory & system environment |
| | Voltage | Monitors voltage for CPU, memory, chipset & power supply |
| | LED | Over temperature warning indicator / Fan & PSU fail LED indicator |
| | Others | Watchdog timer support |
| Server Management | Onboard Chipset | Onboard Aspeed AST2500 |
| | AST2500 iKVM Feature | 24-bit high quality video compression / Supports storage over IP and remote platform-flash / USB 2.0 virtual hub |
| | AST2500 IPMI Feature | IPMI 2.0 compliant baseboard management controller (BMC) / 10/100/1000 Mb/s MAC interface |
| BIOS | Brand / ROM size | AMI / 32MB |
| | Feature | Hardware Monitor / FAN speed control automatic / Boot from USB device/PXE via LAN/Storage / Console Redirection / SMBIOS 3.0/PnP/Wake on LAN / ACPI 6.1 / ACPI sleeping states S0, S5 |
| Operating System | OS supported list | Please refer to our AVL support lists. |
| Regulation | FCC (SDoC) | Class A |
| | CE (DoC) | Class A |
| | CB/LVD | Yes |
| | RCM | Class A |
| | VCCI | Class A |
| Operating Environment | Operating Temp. | 10° C ~ 35° C (50° F ~ 95° F) |
| | Non-operating Temp. | -40° C ~ 70° C (-40° F ~ 158° F) |
| | In/Non-operating Humidity | 90%, non-condensing at 35° C |
| Package Contains | Barebone | (1) GC68A-B8036 Barebone |
| | Manual | (1) Quick Installation Guide |
| RoHS | RoHS 6/6 Compliant | Yes |

TYAN Transport CX GC68A-B8036 Design and Build

The Transport CX GC68A-B8036 is a 1U server that uses TYAN’s latest chassis model, offering a comprehensive structure and a rugged, quality mechanical enclosure. It’s a fairly standard server at 43mm in height, just over 438mm in width, and 680mm in depth.

On the sides of the front panel are the USB 3.0 port (left), the Power button with green and red LEDs (right), and the ID button with a blue LED (right). The drive trays are located in between.

Our specific model is the B8036G68AE12HR. This is a single-socket AMD EPYC 7002/7003 barebone platform that features twelve tool-less, hot-swappable 2.5” drive trays that support eight NVMe U.2 devices and four NVMe/SATA 6G/SAS12G devices. Each drive tray has a status (red) and activity (green) LED, which indicate whether a drive is present (activity/no activity), or if it’s being identified, rebuilt, or has failed.

As usual, adding drives was a seamless process. Simply press the blue locking tab and pull the lever open to slide the drive tray out, then press the locking tab again to release the side locking mechanism (used to tightly secure the drive in place). After we installed the drive inside the tray via its tool-less design, we were able to easily slide it back into the slot and close the lever to secure the drive tray in place.

Turning to the back panel and starting from the left side, we see the dual 850W (80+ Platinum) PSUs, LAN1 (dedicated to IPMI), two USB 3.0 ports, the VGA and serial ports, and the other two LAN ports. Next to these are the OCP card area and the expansion slots (Half-height / Half-length PCI-E Gen.4 x16 card w/ tall bracket x2).

To remove the server lid, simply slide the cover a bit forward and then lift up; though you will need to use a screwdriver to open up the back panel of the server.

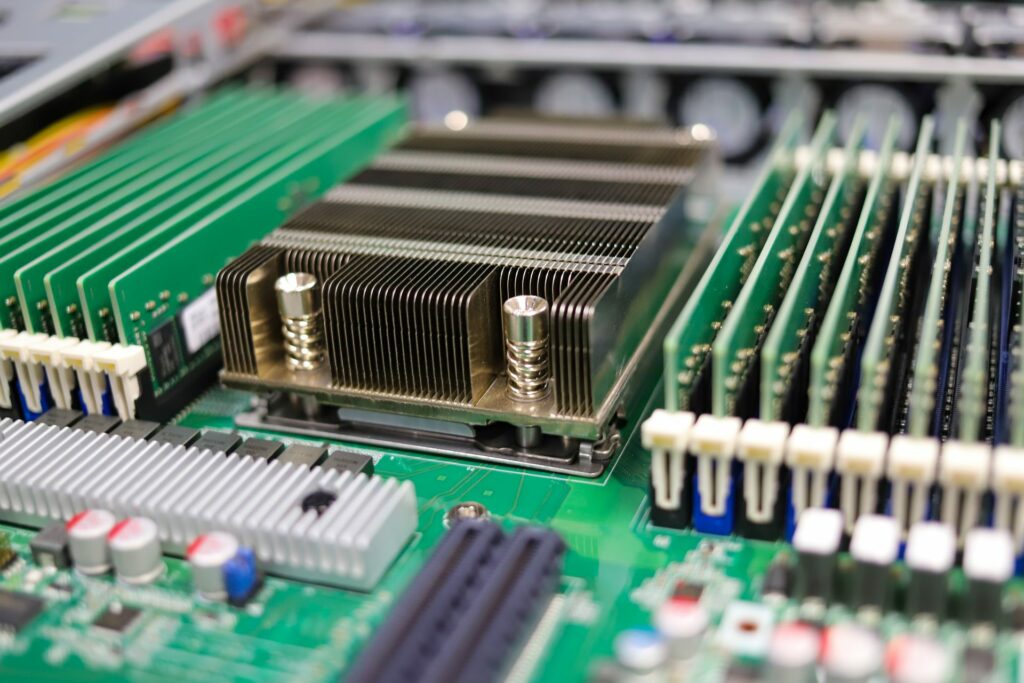

Once the lid is removed, you will see that all the components are set up through the middle of the server, nicely spaced out to allow for effective airflow.

Next to the fans is the M1631G68-D-PDB power distribution board. The fans themselves keep the air circulating around the single-socket AMD processor, which is surrounded by the sixteen DDR4 DIMM slots, and the rest of the S8036 motherboard.

In the back-right corner are the dual PSUs, while the pre-installed riser card bracket (M8036-R16-1F) is located in the left corner. To install an expansion card, we simply had to loosen the two screws of the riser card bracket, insert the expansion card into the bracket, and then secure the card with a screw.

TYAN Transport CX GC68A-B8036 Performance

We configured our TYAN Transport CX GC68A-B8036 with the following components:

- AMD EPYC 7763 CPU

- 256GB DDR4-3200 memory via (16) 16GB DIMMs

- 8 x Intel P5510 7.68TB

SQL Server Performance

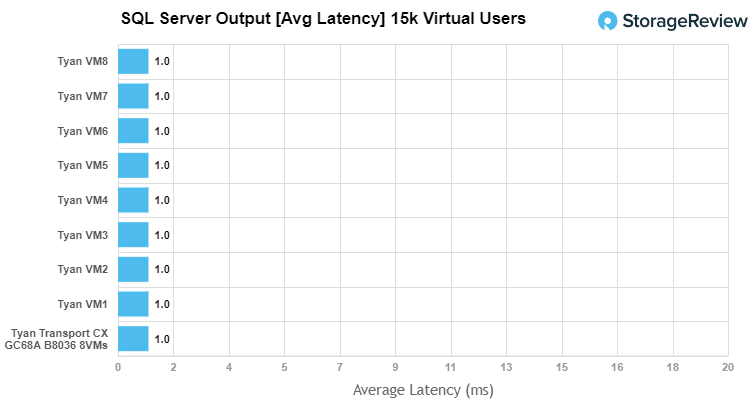

StorageReview’s Microsoft SQL Server OLTP testing protocol employs the current draft of the Transaction Processing Performance Council’s Benchmark C (TPC-C), an online transaction processing benchmark that simulates the activities found in complex application environments. The TPC-C benchmark comes closer than synthetic performance benchmarks to gauging the performance strengths and bottlenecks of storage infrastructure in database environments.

Each SQL Server VM is configured with two vDisks: a 100GB volume for boot and a 500GB volume for the database and log files. From a system resource perspective, we configured each VM with 16 vCPUs and 64GB of DRAM, and leveraged the LSI Logic SAS SCSI controller. While our Sysbench workloads saturate the platform in both storage I/O and capacity, the SQL test looks for latency performance.

SQL Server Testing Configuration (per VM)

- Windows Server 2012 R2

- Storage Footprint: 600GB allocated, 500GB used

- SQL Server 2014

- Database Size: 1,500 scale

- Virtual Client Load: 15,000

- RAM Buffer: 48GB

- Test Length: 3 hours

- 5 hours preconditioning

- 30 minutes sample period

We measured an aggregate latency of just 1ms through eight VMs from the Transport CX GC68A-B8036.

Sysbench MySQL Performance

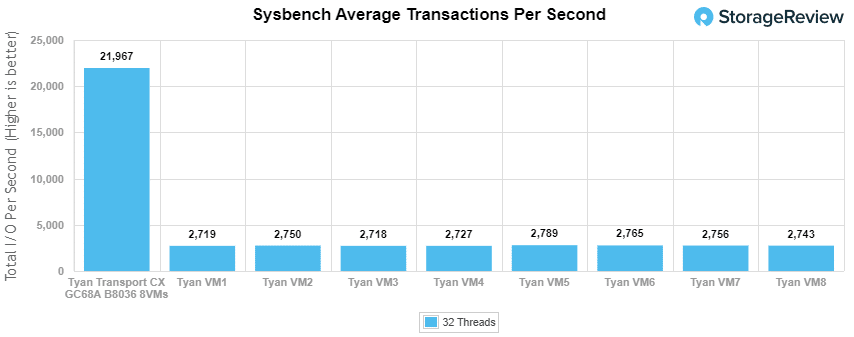

Our next local-storage application benchmark consists of a Percona MySQL OLTP database measured via SysBench. This test measures average TPS (Transactions Per Second), average latency, and average 99th percentile latency.

Each Sysbench VM is configured with three vDisks: one for boot (~92GB), one with the pre-built database (~447GB), and the third for the database under test (270GB). From a system resource perspective, we configured each VM with 16 vCPUs, 60GB of DRAM and leveraged the LSI Logic SAS SCSI controller.

Sysbench Testing Configuration (per VM)

- CentOS 6.3 64-bit

- Percona XtraDB 5.5.30-rel30.1

- Database Tables: 100

- Database Size: 10,000,000

- Database Threads: 32

- RAM Buffer: 24GB

- Test Length: 3 hours

- 2 hours preconditioning 32 threads

- 1 hour 32 threads

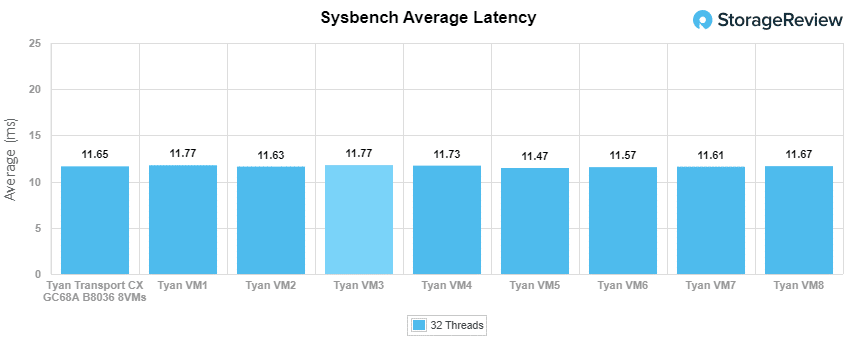

With Sysbench OLTP, we recorded an aggregate score of 21,967.23 TPS, with the eight VMs ranging from 2,718.15 TPS to 2,789.15 TPS.
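
As a quick sanity check on those numbers, the aggregate can be divided across the eight VMs to see how evenly the load was spread. This is a small illustrative sketch; the variable names are our own, and only the aggregate and the min/max values come from the results above.

```python
AGGREGATE_TPS = 21_967.23   # aggregate Sysbench score from the review
VM_COUNT = 8                # number of Sysbench VMs
VM_MIN_TPS = 2_718.15       # slowest VM
VM_MAX_TPS = 2_789.15       # fastest VM

# Average per-VM throughput implied by the aggregate score.
mean_tps = AGGREGATE_TPS / VM_COUNT

# Spread between fastest and slowest VM, relative to that average.
spread_pct = (VM_MAX_TPS - VM_MIN_TPS) / mean_tps * 100

print(f"Mean per VM: {mean_tps:,.2f} TPS")
print(f"Min-to-max spread: {spread_pct:.1f}% of the mean")
```

A small spread like this suggests the VMs were treated fairly evenly by the platform rather than one or two VMs dragging the aggregate down.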

Average latency in Sysbench was also a bit disappointing with an aggregate score of 11.65ms, with the eight VMs ranging from 11.61ms to 11.77ms.

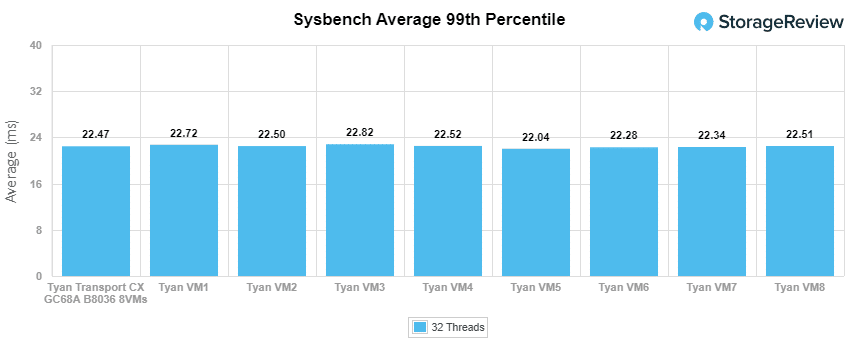

Wrapping up Sysbench, the worst-case Sysbench 99th percentile numbers ranged from 22.04ms to 22.82ms for an aggregate of 22.47ms.

VDBench Workload Analysis

When it comes to benchmarking storage devices, application testing is best, and synthetic testing comes in second place. While not a perfect representation of actual workloads, synthetic tests do help to baseline storage devices with a repeatability factor that makes it easy to do apples-to-apples comparison between competing solutions.

These workloads offer a range of testing profiles, including “four corners” tests, common database transfer size tests, and trace captures from different VDI environments. All of these tests leverage the common vdBench workload generator, with a scripting engine to automate and capture results over a large compute testing cluster. This allows us to repeat the same workloads across a wide range of storage devices, including flash arrays and individual storage devices.

Profiles:

- 4K Random Read: 100% Read, 128 threads, 0-120% iorate

- 4K Random Write: 100% Write, 128 threads, 0-120% iorate

- 64K Sequential Read: 100% Read, 32 threads, 0-120% iorate

- 64K Sequential Write: 100% Write, 16 threads, 0-120% iorate

- Synthetic Database: SQL and Oracle

- VDI Full Clone and Linked Clone Traces
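
For readers who want to track these profiles in their own tooling, the four-corners entries above can be captured as plain data. The sketch below is only an illustration in Python: the `Profile` class and its field names are hypothetical, and this is not vdBench parameter-file syntax.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Profile:
    """One four-corners profile (hypothetical structure, not vdBench syntax)."""
    name: str
    block_kib: int     # transfer size in KiB
    read_pct: int      # 100 = all reads, 0 = all writes
    threads: int
    sequential: bool


# Values transcribed from the profile list in the review.
FOUR_CORNERS = [
    Profile("4K Random Read", 4, 100, 128, False),
    Profile("4K Random Write", 4, 0, 128, False),
    Profile("64K Sequential Read", 64, 100, 32, True),
    Profile("64K Sequential Write", 64, 0, 16, True),
]

for p in FOUR_CORNERS:
    pattern = "sequential" if p.sequential else "random"
    print(f"{p.name}: {p.block_kib}K {pattern}, {p.read_pct}% read, {p.threads} threads")
```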

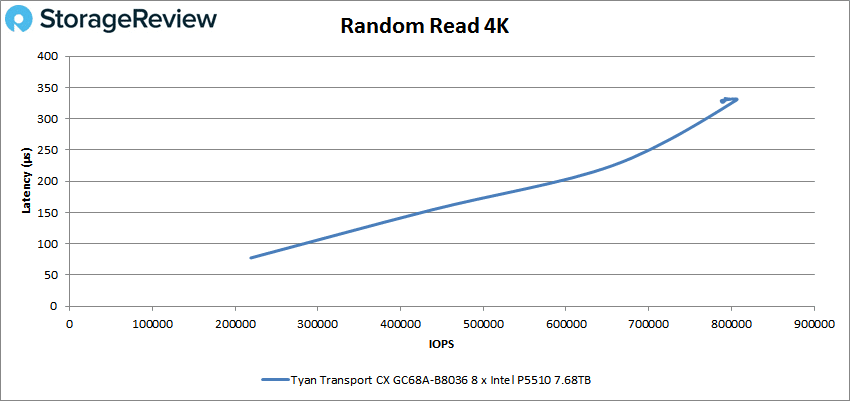

First up is the 4K random read test, where the TYAN Transport CX GC68A-B8036 showed disappointing results. Here, it posted a peak of just 805,037 IOPS with a latency of 329.2µs. Each of the installed drives is capable of reaching 800K IOPS; however, inside this TYAN server, they only saw roughly 100K IOPS each.
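
The per-drive figure is just the peak divided by the drive count. A short Python sketch of that calculation, using the 800K IOPS per-drive capability quoted above as the reference point (variable names are our own):

```python
PEAK_IOPS = 805_037              # measured 4K random read peak
DRIVES = 8                       # SSDs installed in the review build
RATED_IOPS_PER_DRIVE = 800_000   # per-drive capability as quoted in the review

# Share of the measured peak attributable to each drive.
per_drive_iops = PEAK_IOPS / DRIVES

# Fraction of each drive's quoted capability actually reached.
utilization = per_drive_iops / RATED_IOPS_PER_DRIVE

print(f"Per drive: {per_drive_iops:,.0f} IOPS")
print(f"Share of quoted capability: {utilization:.1%}")
```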

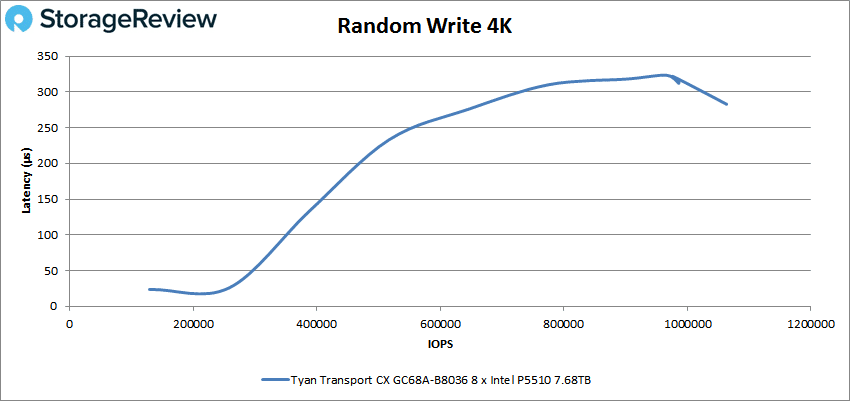

Moving on to 4K random writes, the CX GC68A-B8036 managed to stay under 300µs until about 775K IOPS, after which it maxed out at 1.06 million IOPS with a latency of 282.8µs.

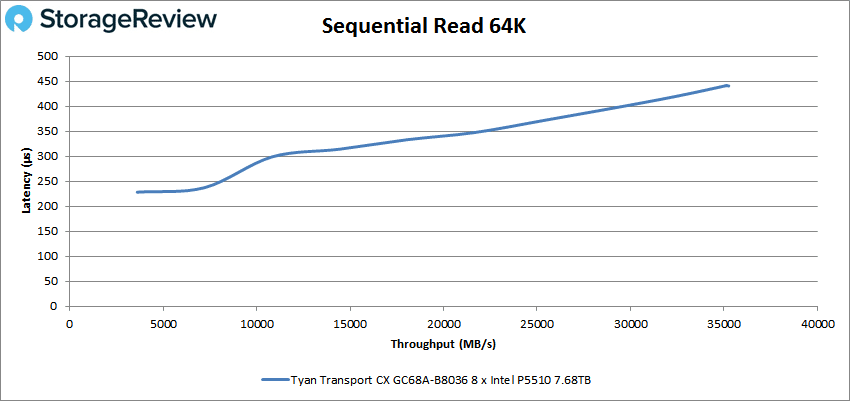

Next up are the 64K sequential tests. In reads, the Transport CX GC68A-B8036 topped out at a solid 35.1GB/s or 562,242 IOPS and 441.6µs latency.
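
The IOPS and bandwidth figures are two views of the same measurement, linked by the block size. Here is a minimal sketch of the conversion, assuming 64KiB transfers; the helper name `throughput_mib_s` is our own, and the unit convention behind the quoted GB/s figure is not stated in the results, so treat the comparison as approximate.

```python
def throughput_mib_s(iops: float, block_kib: int) -> float:
    """Throughput in MiB/s for a given IOPS figure and block size in KiB."""
    return iops * block_kib / 1024


# 64K sequential read peak from the review.
read_mib_s = throughput_mib_s(562_242, 64)
print(f"{read_mib_s:,.0f} MiB/s")
```

The result lands in the same neighborhood as the 35.1GB/s quoted above; the exact match depends on whether GB is read as decimal or binary.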

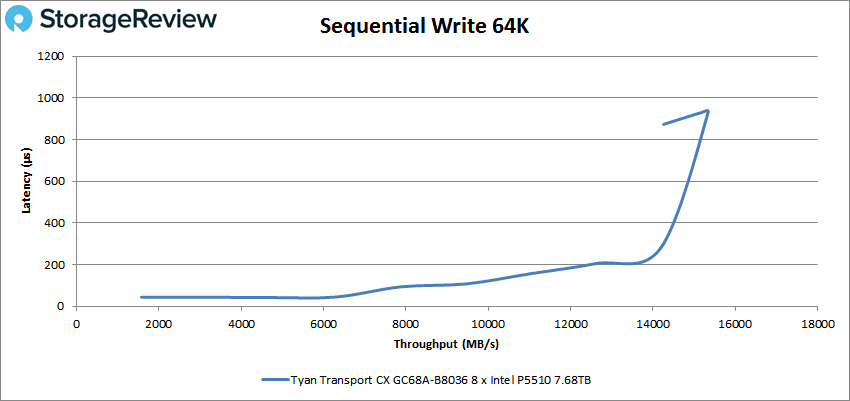

In the 64K sequential write test, the server's highest throughput was 15.4GB/s or 245,529 IOPS with a 932.7µs latency before suffering from a small spike in performance at the very end.

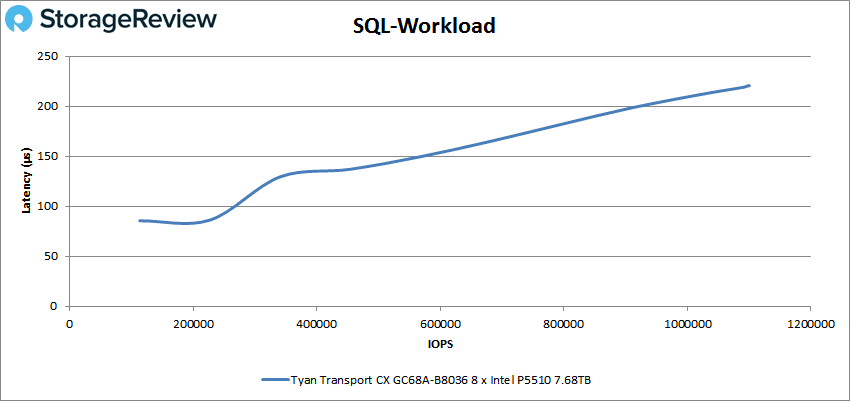

Next up are our SQL workloads: SQL, SQL 90-10, and SQL 80-20. Starting with SQL, the Transport CX GC68A-B8036 showed a noticeable increase in latency throughout, eventually topping out at 1,098,443 IOPS and 220.6µs.

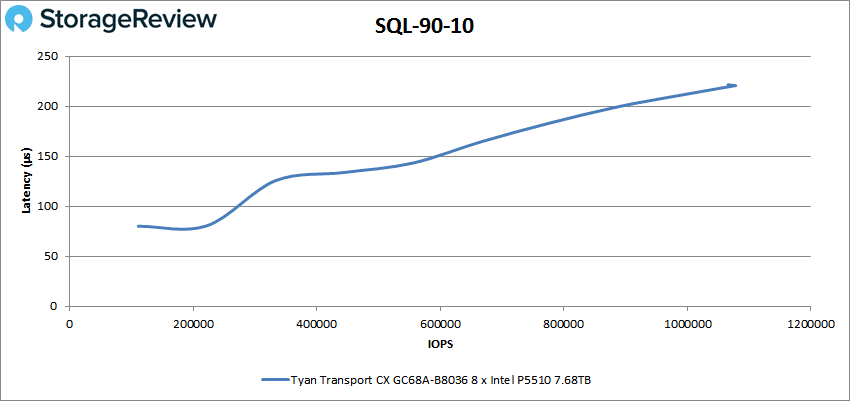

It returned similar results in the SQL 90-10 test where the TYAN Transport CX GC68A-B8036 peaked at 8.4GB/s (1,075,321 IOPS) with 220.4µs latency.

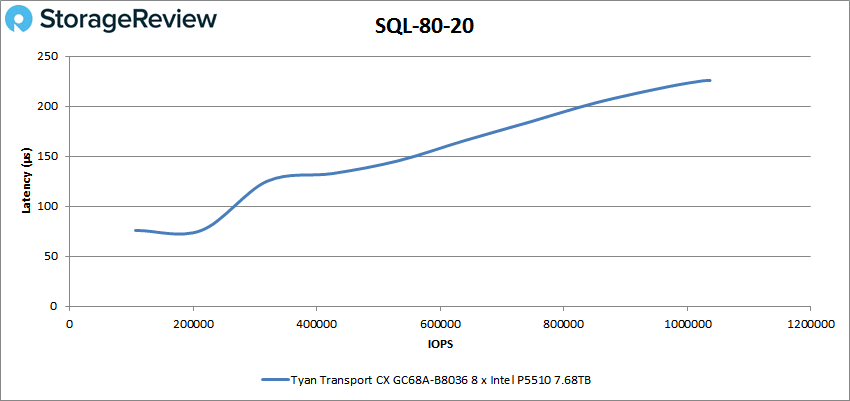

The numbers also stayed consistent in the last test, SQL 80-20, peaking at 8.1GB/s (1,036,700 IOPS) at 225.7µs latency.

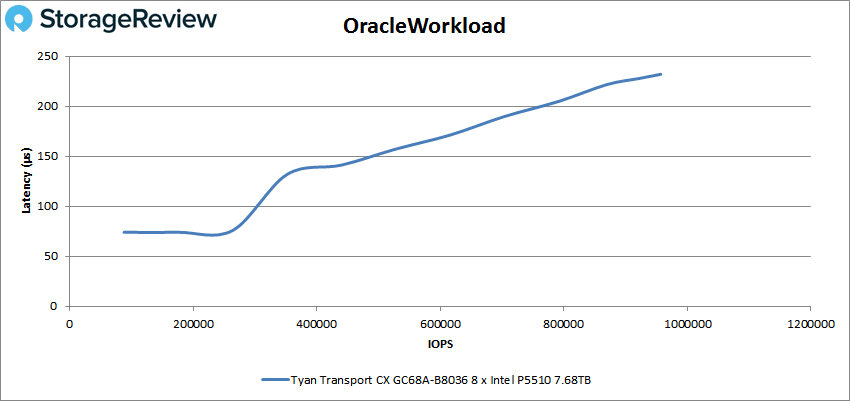

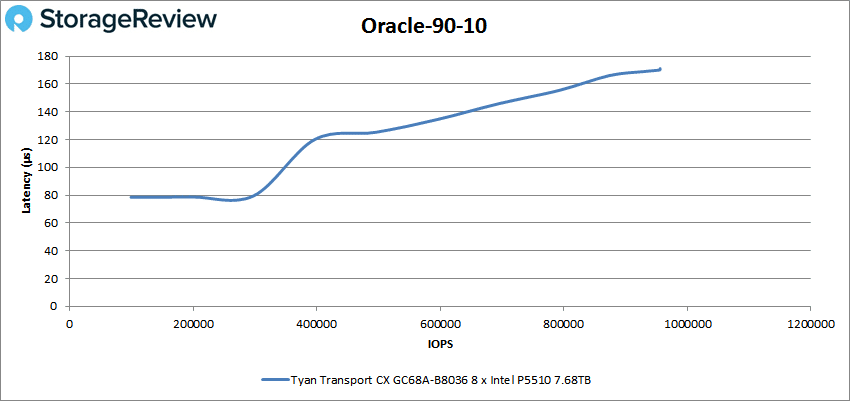

Next up are our Oracle workloads (Oracle, Oracle 90-10, and Oracle 80-20) where it couldn’t quite crack the 1 million IOPS mark. In our first test (Oracle Workload), the Transport CX GC68A-B8036 peaked at 957,174 IOPS (8GB/s) with a 232µs latency.

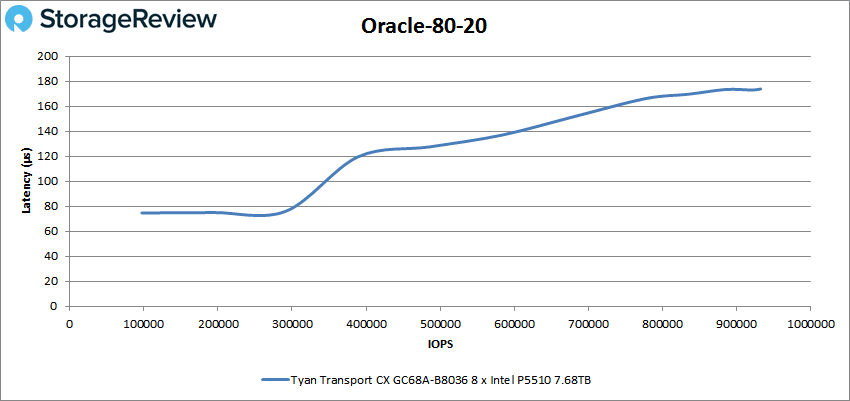

Finally, in the Oracle 80-20 test, the Transport CX GC68A-B8036 still started just below 80µs then went up to 173.8µs at the end, where it posted a peak of 932,328 IOPS.

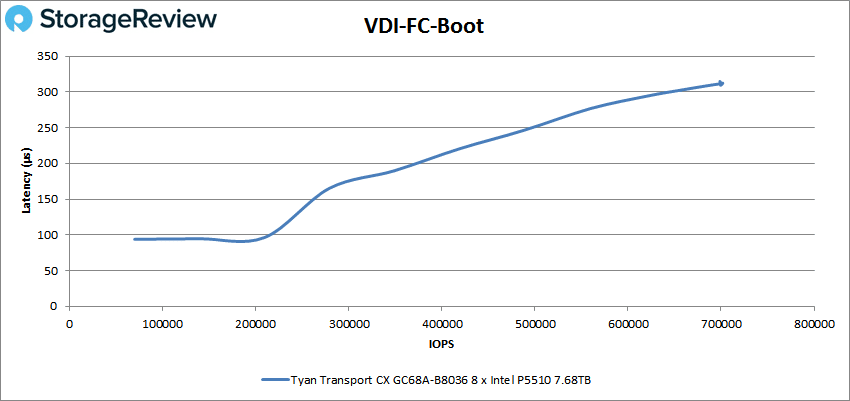

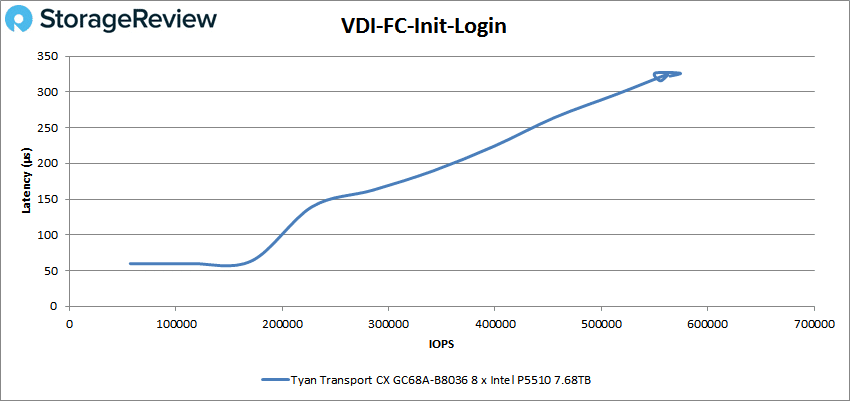

Our last benchmark is the VDI clone test, Full and Linked. In VDI Full Clone (FC) Boot, the Transport CX GC68A-B8036 reached 700,379 IOPS with a 312µs latency.

Moving on to VDI FC Initial Login, the CX GC68A-B8036 was able to top out at 574,091 IOPS with a latency of 326.3µs, showing some spikes in performance at the very end.

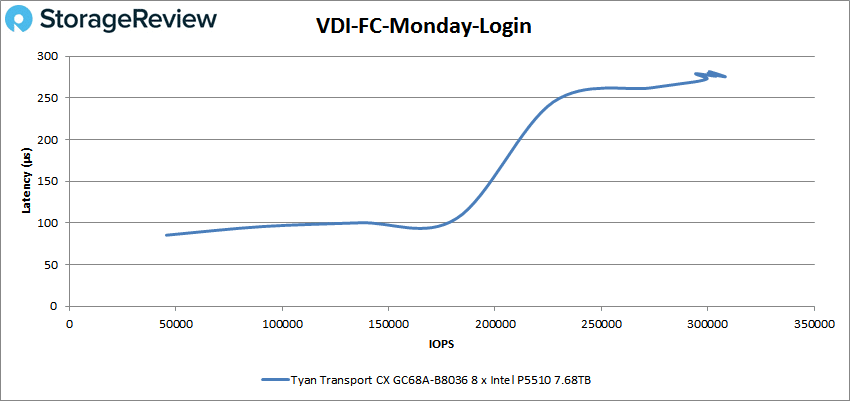

In VDI FC Monday Login, the TYAN server stayed below 100µs until around the 180K IOPS mark, and eventually peaked at 308,245 IOPS with a latency of 275.5µs. The performance showed some spikes at the end of this test as well.

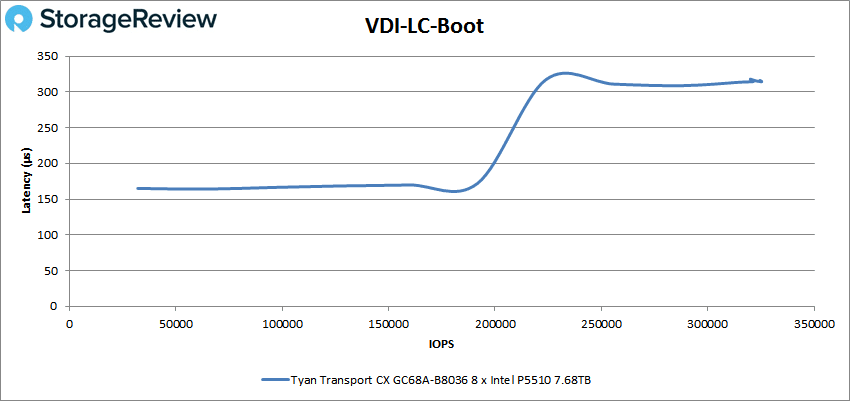

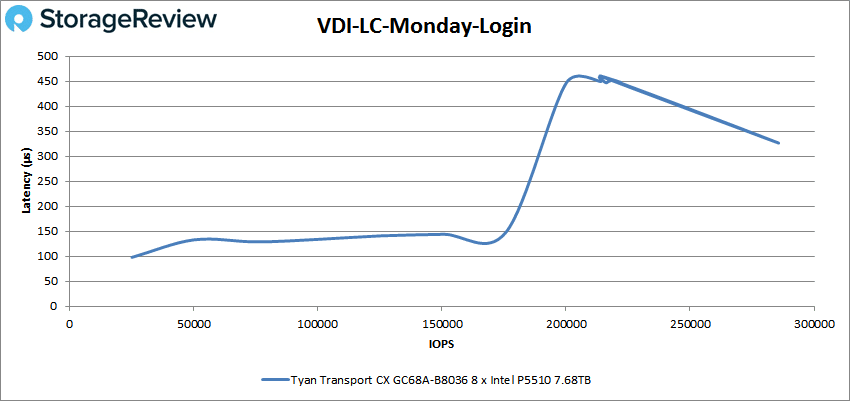

Moving on to the Linked Clone (LC) tests, starting with Boot, the Transport CX GC68A-B8036 showed good latency until around the 200K IOPS mark, where it spiked to roughly 350µs (ending up at 314.4µs). Performance topped out at 325,334 IOPS.

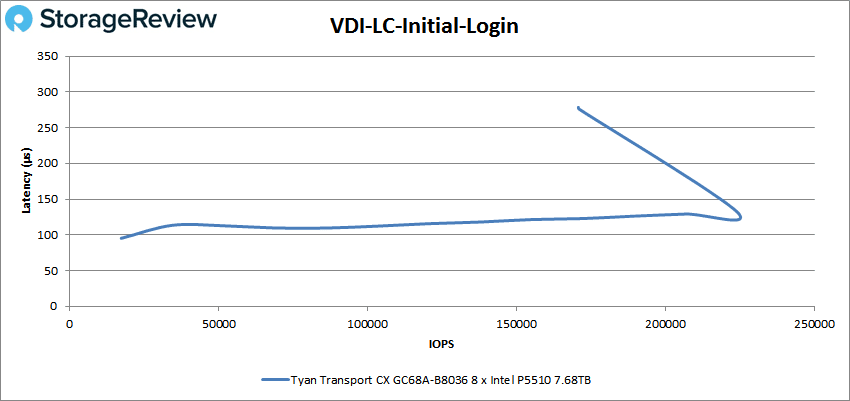

The last test is VDI LC Monday Login, where the Transport CX GC68A-B8036 again started with a lower latency before suffering from a huge spike around the 175K mark. The highest IOPS was 285,692 at 326.5µs.

Conclusion

The TYAN Transport CX GC68A-B8036 is purpose-built for cloud storage server use cases, and at times, it fills this role well. This 1U, single-socket server is based on AMD’s EPYC 7003/7002 processors and supports up to twelve NVMe U.2 SSDs (or a blend of NVMe and SATA drives) via its hot-swappable tool-less drive bays. It uses an efficient and easy-to-service design like other recent TYAN servers and comes with five USB 3.1 Gen.1 ports (one of which is Type-A) as well as the usual buttons and status LEDs.

For performance testing, we ran the Transport CX GC68A-B8036 through our Application Workload Analysis, including SQL Server latency and Sysbench, and VDBench. In SQL Server latency, it returned an aggregate average latency of just 1ms. In Sysbench, we recorded an aggregate 21,967.23 TPS, an 11.65ms average latency, and a 22.47ms latency in the worst-case 99th percentile test.

For our synthetic testing, we saw mixed performance from the eight Gen4 SSDs we installed. Bandwidth figures roughly aligned with Gen4 expectations, but IOPS was drastically lower than expected. We observed a maximum of 1.10 million IOPS in the SQL workload, 1.08 million in SQL 90-10, and 1.036 million in SQL 80-20. Meanwhile, in our Oracle tests we saw 957K IOPS in the Oracle workload, 955K in Oracle 90-10, and 932K in Oracle 80-20.

We recorded 805K IOPS in 4K read, 1.03 million in 4K write, 35.1GB/s in 64K read, and 15.4GB/s in 64K write. Looking at the 4K figures, the results line up with where we’d expect to see a single drive, not a group of eight. The 64K bandwidth figure however aligned with expectations at around 4.4GB/s per SSD. Last, in our VDI Full Clone tests, the Transport CX GC68A-B8036 achieved 700K IOPS in boot, 574K in Initial Login, and 308K in Monday Login; the Linked Clone numbers for those benchmarks were 325K, 171K, and 285K IOPS.

Overall, the synthetic benchmark results for the CX GC68A-B8036 were disappointing in a few spots. Unfortunately, this likely has to do with the platform itself, as we’ve seen similar behavior out of other AMD platforms. Though the high bandwidth in 64K sequential reads was in the Gen4 performance range, its 4K random read was poor at roughly 800K IOPS. This translates to around 100K IOPS per drive; however, each of the Intel P5510 SSDs is capable of 800K IOPS on its own. Thankfully, application workloads in VMware didn’t have the same issue.

Overall, the TYAN Transport CX GC68A-B8036 offers datacenters a very dense platform that’s geared more toward storage density than compute power. Most application workloads should be just fine, and a PoC will shake out any issues ahead of time.
