Samsung has updated its highly popular consumer SSD line again with the 970 EVO, which is being released alongside the 970 PRO. The new 970 EVO is the second generation of Samsung’s 3-bit MLC NVMe SSDs for client PCs and features upgraded Intelligent TurboWrite technology and the all-new, enhanced Phoenix controller. Using the tiny M.2 2280 form factor, the new Samsung drive is targeted specifically at prosumers, gamers and media professionals who require reliable performance under high workloads.

Performance-wise, the 970 EVO is expected to deliver transfer speeds that should satisfy the above-mentioned demographic, with quoted sequential read/write performance of up to 3,500MB/s and 2,500MB/s, respectively, and random performance reaching as high as 500,000 IOPS for read and 480,000 IOPS for write. The updated Intelligent TurboWrite technology also benefits from a large buffer size of up to 78GB for faster sequential write speeds. This performance gain should be most noticeable during large file transfers or when running high-workload applications.

Samsung also claims solid reliability specs, including endurance of up to 1,200 TBW, which is roughly 50% more than that of the previous model. Moreover, Samsung says its thermal control solutions increase performance while reducing heat issues: the Dynamic Thermal Guard technology, coupled with an integrated thin copper-film heat spreader, is designed to “proactively prevent” overheating. Samsung also mentions that its new Phoenix controller has a nickel coating to promote faster heat dissipation.

The Samsung 970 EVO is backed by a 5-year warranty and goes for roughly $129, $230, $450 and $850 for the 250GB, 500GB, 1TB and 2TB models, respectively.
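To put the pricing in perspective, the quoted figures can be turned into a cost per gigabyte. The following is a minimal sketch that assumes the approximate launch prices above and treats 1TB and 2TB as 1,000GB and 2,000GB; the variable and function names are illustrative only.

```python
# Approximate launch prices quoted in the text, in US dollars,
# keyed by capacity in GB (1TB = 1,000GB is an assumption here).
prices_usd = {250: 129, 500: 230, 1000: 450, 2000: 850}


def price_per_gb(prices):
    """Return {capacity_gb: dollars per GB}, rounded to three decimals."""
    return {cap: round(price / cap, 3) for cap, price in prices.items()}


per_gb = price_per_gb(prices_usd)
for cap, cost in per_gb.items():
    print(f"{cap}GB: ${cost:.3f}/GB")
```

By this rough measure the cost per gigabyte falls as capacity rises, from a little over $0.50/GB on the 250GB model to a little over $0.40/GB on the 2TB model.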

Samsung 970 EVO Specifications

| Capacity | 250GB | 500GB | 1TB | 2TB |
| --- | --- | --- | --- | --- |
| Form Factor | M.2 2280 | | | |
| Interface | PCIe Gen 3.0 x4, NVMe 1.3 | | | |
| Controller | Samsung Phoenix Controller | | | |
| NAND Flash Memory | Samsung V-NAND 3-bit MLC | | | |
| Performance | | | | |
| Sequential Read (MB/s) | 3,400 | 3,400 | 3,400 | 3,500 |
| Sequential Write (MB/s) | 1,500 | 2,300 | 2,500 | 2,500 |
| Random Read 4K (IOPS) | 200K | 370K | 500K | 500K |
| Random Write 4K (IOPS) | 350K | 450K | 450K | 500K |
| TBW | 150TB | 300TB | 600TB | 1,200TB |
| Power | | | | |
| Average Active Power (read) | 5.4W | 5.7W | 6W | 6W |
| Average Active Power (write) | 4.2W | 5.8W | 6W | 6W |
| DEVSLP (L1.2 mode) | 5mW | | | |
| Warranty | 5 years | | | |
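The endurance ratings in the specifications above are easier to compare when expressed as drive writes per day (DWPD) over the warranty period. The sketch below assumes a 365-day year and that the full TBW rating is spread evenly across the 5-year warranty; the function name and the `models` mapping are illustrative, not anything Samsung publishes.

```python
def drive_writes_per_day(tbw_tb, capacity_tb, warranty_years=5):
    """Full-drive writes per day that would exhaust the TBW rating
    exactly at the end of the warranty (365-day years assumed)."""
    days = warranty_years * 365
    return tbw_tb / (capacity_tb * days)


# (rated TBW in TB, capacity in TB) taken from the specification table
models = {
    "250GB": (150, 0.25),
    "500GB": (300, 0.5),
    "1TB": (600, 1.0),
    "2TB": (1200, 2.0),
}

dwpd = {name: drive_writes_per_day(tbw, cap) for name, (tbw, cap) in models.items()}
for name, value in dwpd.items():
    print(f"{name}: {value:.2f} DWPD")
```

Because the TBW rating scales linearly with capacity, every model works out to roughly a third of a drive write per day under these assumptions.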

Performance

Testbed

The test platform leveraged in these tests is a Dell PowerEdge R740xd server. We measure SAS and SATA performance through a Dell H730P RAID card inside this server, although we set the card in HBA mode only to disable the impact of RAID card cache. NVMe is tested natively through an M.2 to PCIe adapter card. The methodology used better reflects end-user workflow, with the consistency, scalability and flexibility that testing within virtualized servers offers. A large focus is put on drive latency across the entire load range of the drive, not just at the smallest QD1 (Queue-Depth 1) levels. We do this because many of the common consumer benchmarks don’t adequately capture end-user workload profiles.

Houdini by SideFX

The Houdini test is specifically designed to evaluate storage performance as it relates to CGI rendering. The test bed for this application is a variant of the core Dell PowerEdge R740xd server type we use in the lab with dual Intel 6130 CPUs and 64GB DRAM. In this case we installed Ubuntu Desktop (ubuntu-16.04.3-desktop-amd64) running bare metal. Output of the benchmark is measured in seconds to complete, with fewer being better.

The Maelstrom demo represents a section of the rendering pipeline that highlights the performance capabilities of storage by demonstrating its ability to effectively use the swap file as a form of extended memory. The test does not write out the result data or process the points, in order to isolate the wall-time effect of the latency impact on the underlying storage component. The test itself is composed of five phases, three of which we run as part of the benchmark:

- Loads packed points from disk. This is the time to read from disk. This is single threaded, which may limit overall throughput.

- Unpacks the points into a single flat array in order to allow them to be processed. If the points do not have dependency on other points, the working set could be adjusted to stay in-core. This step is multi-threaded.

- (Not Run) Process the points.

- Repacks them into bucketed blocks suitable for storing back to disk. This step is multi-threaded.

- (Not Run) Write the bucketed blocks back out to disk.
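The phase structure above can be sketched as a small timing harness in which each phase is either run and timed or skipped. This is only an illustration of the idea: the phase functions below are hypothetical stand-ins working on tiny in-memory lists, not the Houdini test itself, and `run_phases` is a name invented for this example.

```python
import time


def run_phases(phases):
    """Run (name, func, enabled) phases in order, passing each result to the
    next enabled phase. Returns {name: seconds} for the phases that ran."""
    timings = {}
    data = None
    for name, func, enabled in phases:
        if not enabled:
            continue  # mirrors the "(Not Run)" phases in the list above
        start = time.perf_counter()
        data = func(data)
        timings[name] = time.perf_counter() - start
    return timings


# Hypothetical stand-ins for the real phases.
def load_points(_):
    return [[i, i + 1, i + 2] for i in range(1000)]  # "packed" blocks


def unpack_points(blocks):
    return [p for block in blocks for p in block]  # single flat array


def repack_points(points, bucket=64):
    return [points[i:i + bucket] for i in range(0, len(points), bucket)]


phases = [
    ("load", load_points, True),
    ("unpack", unpack_points, True),
    ("process", lambda points: points, False),  # not run
    ("repack", repack_points, True),
    ("write", lambda blocks: blocks, False),    # not run
]

timings = run_phases(phases)
for name, seconds in timings.items():
    print(f"{name}: {seconds:.6f}s")
print(f"total: {sum(timings.values()):.6f}s")
```

Summing only the phases that ran corresponds to the single seconds-to-complete figure the benchmark reports.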

Looking at rendering time (where less is better), both 970 EVO capacities landed near the bottom of the pack, with 3,893.9 seconds for the 2TB and 4,195.1 seconds for the 500GB.

SQL Server Performance

We use a lightweight virtualized SQL Server instance to appropriately represent what an application developer would use on a local workstation. The test is similar to what we run on storage arrays and enterprise drives, just scaled back to be a better approximation for behaviors employed by the end user. The workload employs the current draft of the Transaction Processing Performance Council’s Benchmark C (TPC-C), an online transaction processing benchmark that simulates the activities found in complex application environments.

The lightweight SQL Server VM is configured with three vDisks: a 100GB volume for boot, a 350GB volume for the database and log files, and a 150GB volume used for the database backup we recover after each run. From a system resource perspective, we configure each VM with 16 vCPUs and 32GB of DRAM, and leverage the LSI Logic SAS SCSI controller. This test uses SQL Server 2014 running on Windows Server 2012 R2 guest VMs and is stressed by Dell’s Benchmark Factory for Databases.

SQL Server Testing Configuration (per VM)

- Windows Server 2012 R2

- Storage Footprint: 600GB allocated, 500GB used

- SQL Server 2014

- Database Size: 1,500 scale

- Virtual Client Load: 15,000

- RAM Buffer: 24GB

- Test Length: 3 hours

  - 2.5 hours preconditioning

  - 30 minutes sample period
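The per-VM settings can be written down as a small configuration object with a few sanity checks, for example that the three vDisks add up to the allocated footprint and that preconditioning plus the sample period equals the test length. This is a sketch only: the class and field names are invented for illustration, and the values are those quoted in the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SqlVmConfig:
    """Per-VM settings as quoted in the text; field names are illustrative."""
    vdisks_gb: tuple = (100, 350, 150)  # boot, database + logs, backup
    allocated_gb: int = 600
    used_gb: int = 500
    vcpus: int = 16
    dram_gb: int = 32
    ram_buffer_gb: int = 24
    preconditioning_min: int = 150      # 2.5 hours
    sample_min: int = 30
    test_length_min: int = 180          # 3 hours

    def problems(self):
        """Return a list of inconsistencies (empty if the config adds up)."""
        issues = []
        if sum(self.vdisks_gb) != self.allocated_gb:
            issues.append("vDisks do not sum to the allocated footprint")
        if self.used_gb > self.allocated_gb:
            issues.append("used space exceeds allocated space")
        if self.ram_buffer_gb > self.dram_gb:
            issues.append("RAM buffer exceeds VM memory")
        if self.preconditioning_min + self.sample_min != self.test_length_min:
            issues.append("preconditioning + sample period != test length")
        return issues


config = SqlVmConfig()
print(config.problems() or "configuration is internally consistent")
```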

When looking at SQL Server Output, the 970 EVO 2TB recorded 3,158.9 TPS while the 500GB had a more humble 3,148.0 TPS.

In SQL Server average latency, the 2TB 970 EVO had a respectable 5ms, which placed it just behind the 960 PRO and Intel 900P. The 500GB model had a middle-of-the-pack 20ms.

VDBench Workload Analysis

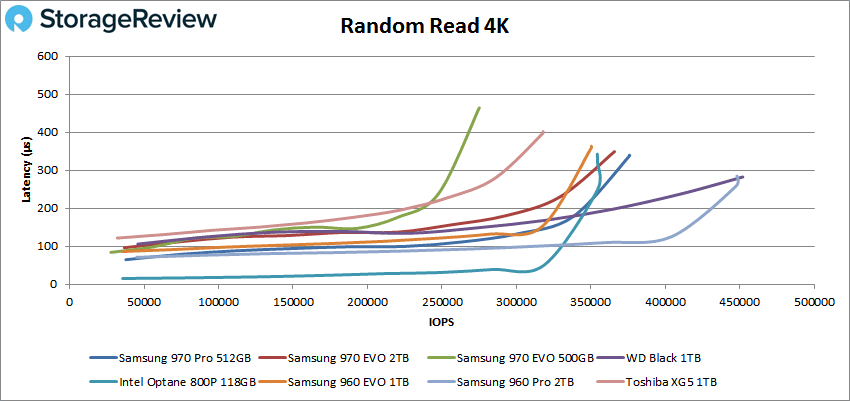

In our first VDBench Workload Analysis, we looked at random 4K read performance. Here, the 2TB Samsung 970 EVO came in fourth with a peak of 365,813 IOPS and 349μs latency, while the 500GB model came in last with a peak of 274,980 IOPS and a latency of 464μs.

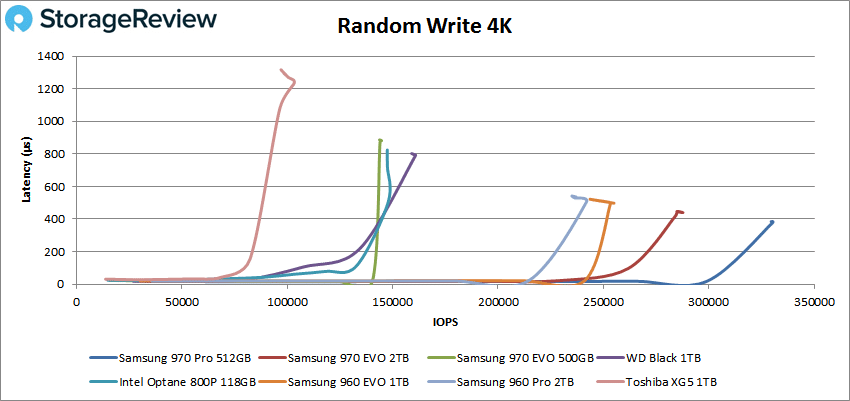

For 4K write the 2TB 970 EVO took second with 287,756 IOPS and 440μs latency and the 500GB came in next to last with 144,051 IOPS and 874μs latency.
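The peak IOPS and latency figures for the 4K tests can be related through Little's law: the average number of I/Os in flight equals throughput multiplied by average latency. The sketch below applies that relation to the numbers reported above. The actual VDBench thread or queue-depth settings are not stated in this review, so the result is an inference from the published figures, not a documented test parameter.

```python
def outstanding_ios(iops, latency_us):
    """Little's law: average I/Os in flight = throughput x latency."""
    return iops * latency_us / 1_000_000


# (peak IOPS, latency in microseconds) as reported in the 4K tests
results = {
    "2TB 4K read": (365_813, 349),
    "500GB 4K read": (274_980, 464),
    "2TB 4K write": (287_756, 440),
    "500GB 4K write": (144_051, 874),
}

in_flight = {name: outstanding_ios(iops, lat) for name, (iops, lat) in results.items()}
for name, value in in_flight.items():
    print(f"{name}: ~{value:.1f} I/Os in flight")
```

All four results work out to roughly 126 to 128 I/Os in flight, which suggests the peak numbers were taken at a similar, heavily loaded point for both capacities. At a fixed load like this, higher latency translates directly into lower IOPS, which is why the 500GB model trails on both measures.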

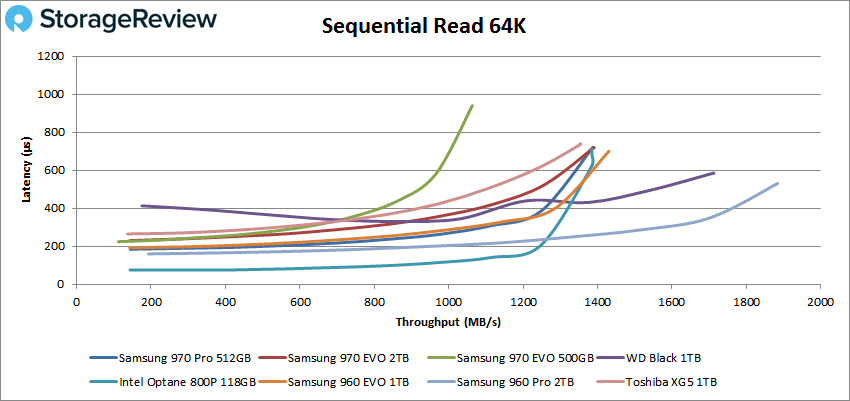

Switching over to sequential workloads in our 64K benchmarks, the 970 EVO 2TB tied for fourth with 22,154 IOPS or 1.4GB/s and a latency of 710μs. The 500GB model came in last with 17,012 IOPS or 1.06GB/s and a latency of 939μs.

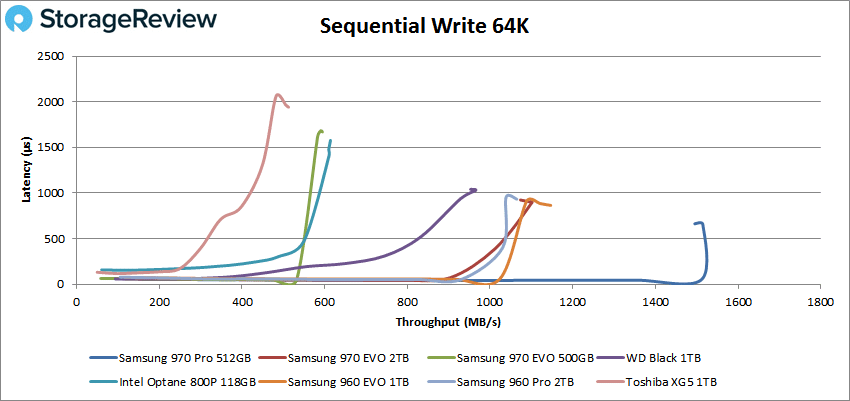

For 64K write, the 2TB 970 EVO landed third with 17,629 IOPS or 1.1GB/s and a latency of 900μs. The 500GB EVO came in second to last with 9,333 IOPS or 583MB/s with a latency of 1.6ms.
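The throughput figures quoted alongside the 64K IOPS numbers follow directly from the transfer size. A minimal sketch of the conversion is shown below; it assumes the MB/s values are computed as IOPS × 64 / 1,024 (binary megabytes), which is the convention that reproduces the figures in this review. The function name is illustrative.

```python
def throughput_mb_s(iops, block_kb=64):
    """Convert IOPS at a fixed block size (in KB) to MB/s,
    using 1MB = 1,024KB."""
    return iops * block_kb / 1024


# 64K sequential results reported above
results_64k = {
    "2TB read": 22_154,
    "500GB read": 17_012,
    "2TB write": 17_629,
    "500GB write": 9_333,
}

throughput = {name: throughput_mb_s(iops) for name, iops in results_64k.items()}
for name, mb_s in throughput.items():
    print(f"{name}: {mb_s:,.0f} MB/s")
```

The results, about 1,385, 1,063, 1,102 and 583 MB/s, line up with the 1.4GB/s, 1.06GB/s, 1.1GB/s and 583MB/s figures quoted in the text.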

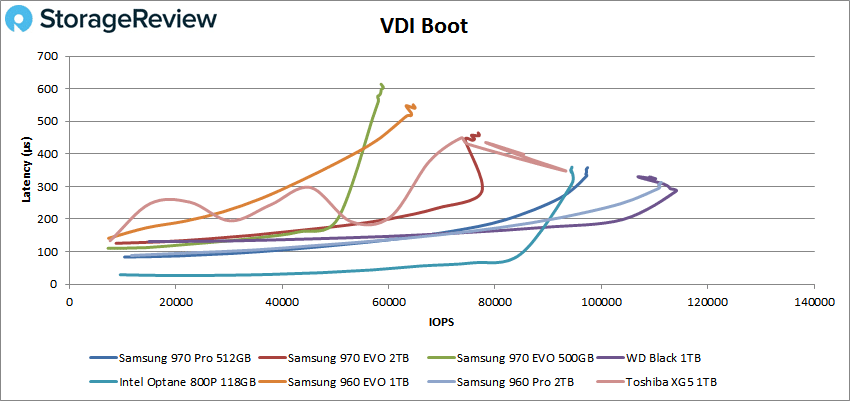

Next, we looked at our VDI benchmarks, which are designed to tax the drives even further. These tests include Boot, Initial Login, and Monday Login. Looking at the Boot test, the 2TB 970 EVO came in sixth with 79,983 IOPS and a latency of 465μs. The 500GB came in last with 58,509 IOPS and a latency of 563μs.

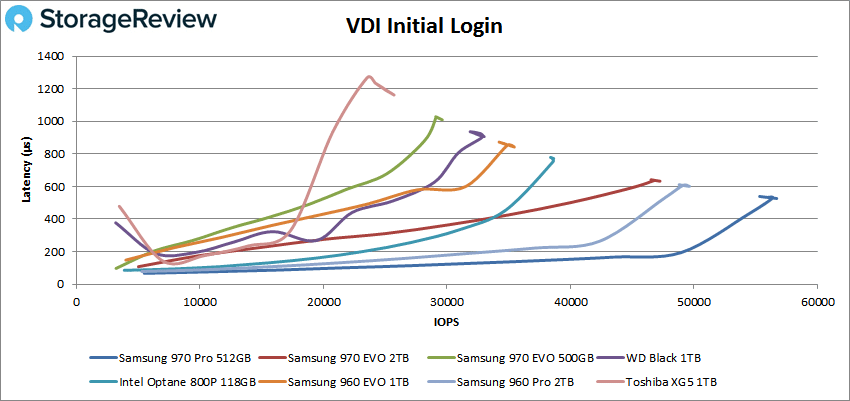

VDI Initial Login saw the 2TB 970 EVO land in third with 46,807 IOPS and a latency of 637μs. The 500GB version was second to last with 29,167 IOPS and a latency of 1.01ms.

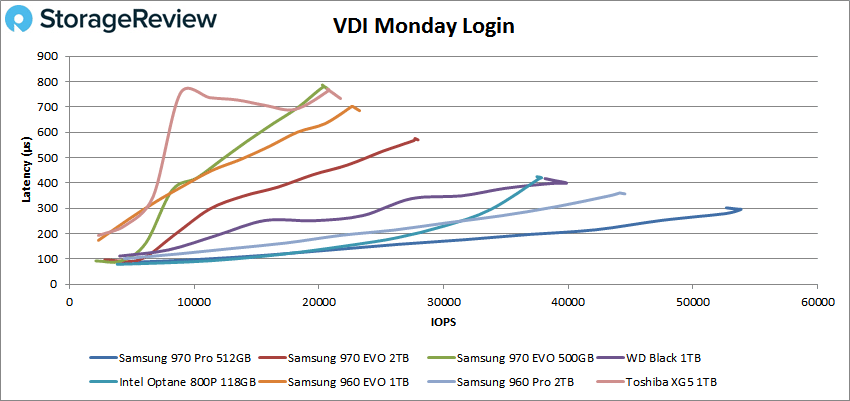

Finally, with VDI Monday Login, the 2TB 970 EVO was in fifth with 27,772 IOPS and a latency of 575μs. The 500GB was last with 20,751 IOPS and a latency of 768μs.

Conclusion

The Samsung 970 EVO is the latest version of the company’s highly popular M.2 NVMe SSD line. The new drive uses Samsung’s 3-bit MLC V-NAND and the new Phoenix controller for better performance, and comes with higher endurance, nearly 50% more, through the latest generation of V-NAND. The drive also comes with updated Intelligent TurboWrite technology for large file transfers, and is available in capacities running from 250GB to 2TB.

For performance the drive was lackluster to poor, a bit of a shock coming from Samsung. In our Houdini test, the two capacities came in second and third to last, with 4,195.1 seconds for the 500GB and 3,893.9 seconds for the 2TB. The 2TB version did well in SQL Server with 3,158.9 TPS and an average latency of 5ms; the 500GB, on the other hand, had 3,148.0 TPS and an average latency of 20ms.

In our VDBench workloads, the poor performance of the 500GB model was more pronounced: it tended to land at or near the bottom of the pack, with latency approaching or exceeding 1ms. The 2TB version performed better, landing second in 4K write (288K IOPS) and third in both 64K write (1.1GB/s) and VDI Initial Login (47K IOPS). In the other benchmarks, the 2TB tended to land in the middle of the pack.

It is a bit of a disappointment to see Samsung release a new EVO M.2 drive only to watch the lower capacity perform poorly and the high capacity turn in average to slightly better results. Samsung drives are typically industry-leading in performance and come with a price premium for it. The price premium is still there this time around, but the performance doesn’t match.

The Bottom Line

The Samsung 970 EVO is an M.2 NVMe SSD aimed at mainstream users, but Samsung’s grip on the industry-leading spots is starting to slip as the 970 EVO delivers an uneven performance profile at a comparatively high price.
