…balancer services. GPUs have become a necessity for many containerized applications. Microsoft recognizes this and supports deploying GPU-enabled node pools on top of NVIDIA Tesla T4 GPUs using Discrete Device…

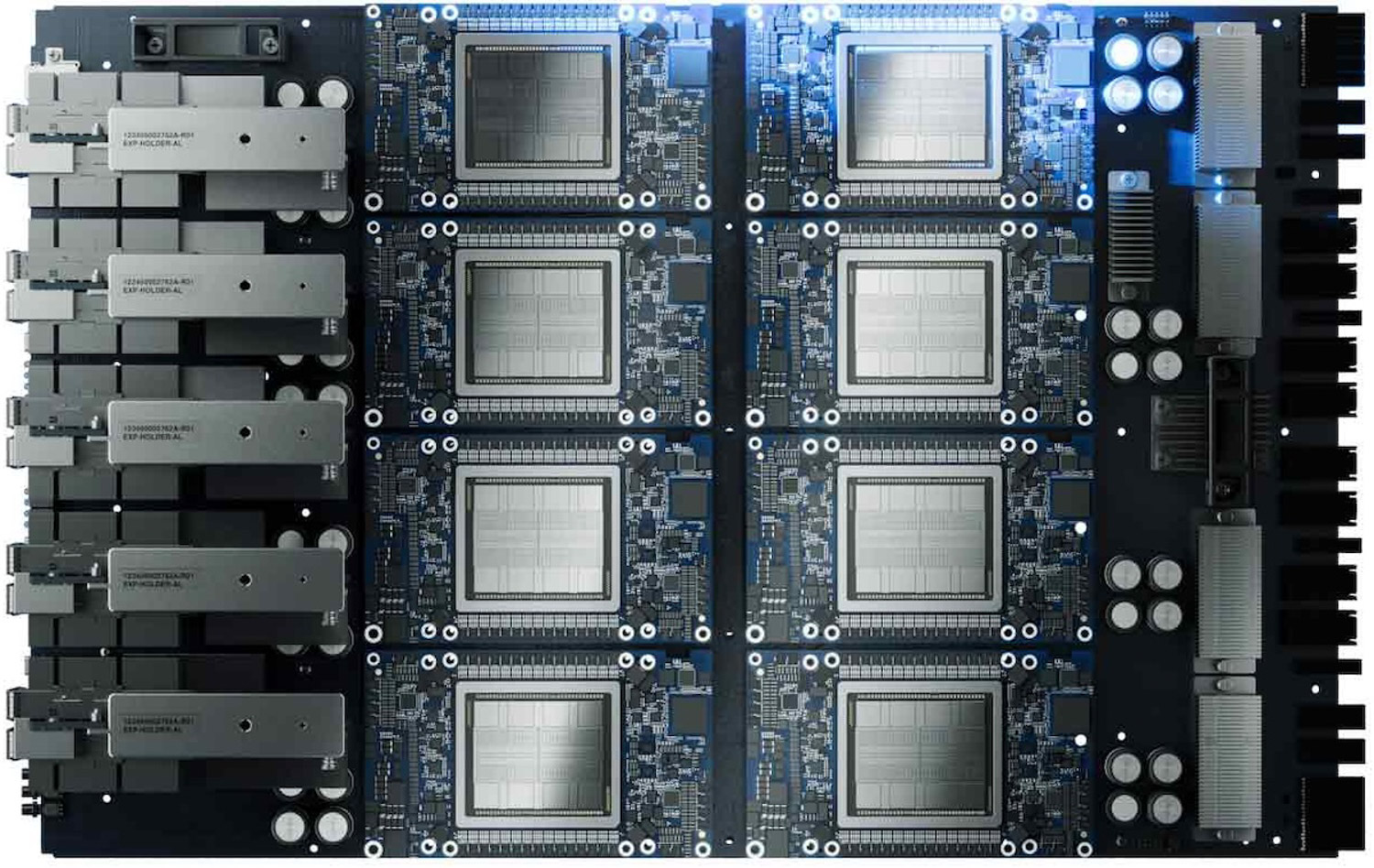

…optimizing for thermals and cabling. After an initial consultation, Supermicro delivers a project proposal aligned with the customer’s power budget, performance targets, and other operational needs. The 256-node AI Factory…

…enterprise workloads. The Xeon 6 series also serves as a foundational central processing unit (CPU) for AI systems, functioning exceptionally well alongside a GPU as a host node CPU. Compared…

…placement follows the E1 SSDs. This size is ideal for dense storage servers, accommodating up to 40 E2 SSDs in a 2U node, which can translate to up to 40…

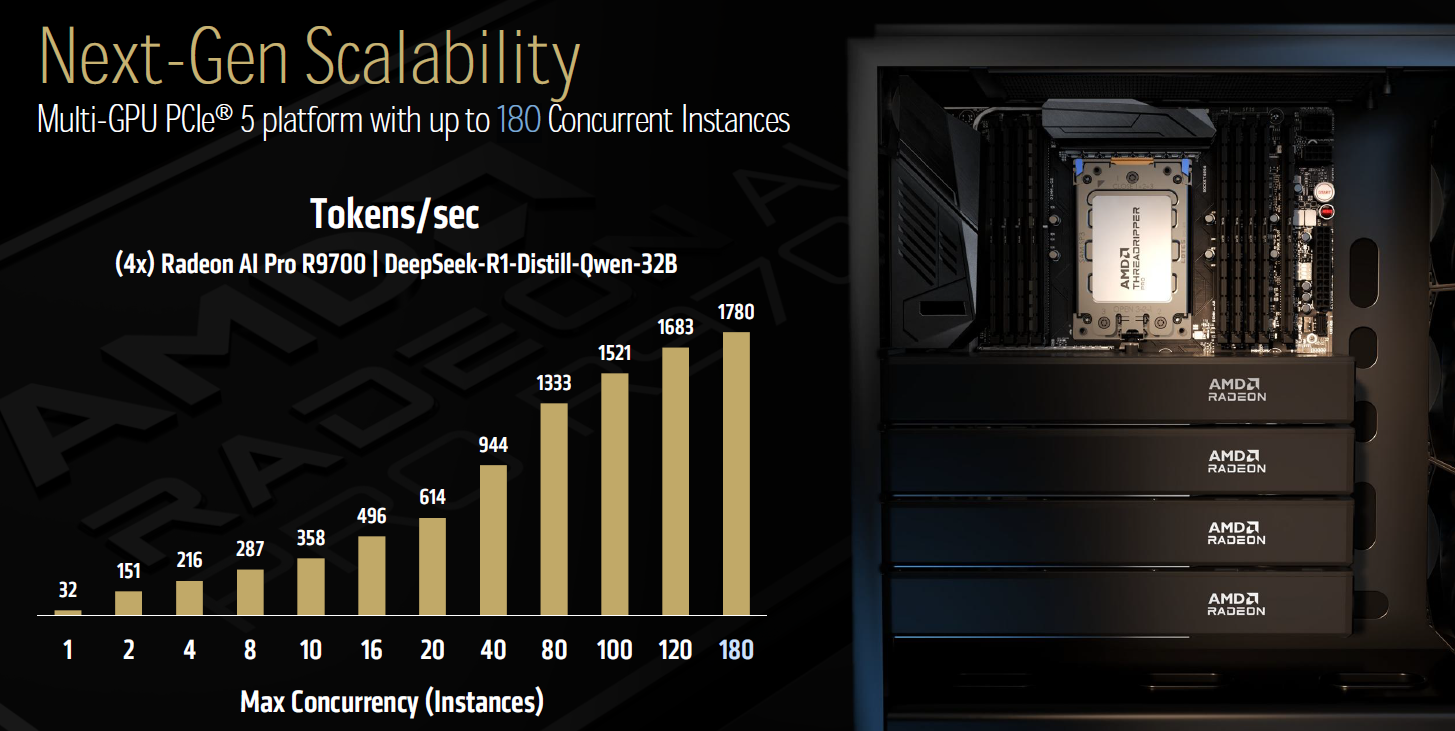

…All this is packed into a chip built on TSMC’s 4nm node, with a die size of 356.5mm² and 53.9 billion transistors. The Threadripper 9000 series and Radeon AI Pro…

…with one device serving as the active node and the other in standby. When the active device fails, failover occurs automatically via the standby device, maintaining service continuity. Availability The…

…NVLink is evident on the traditional throughput-per-watt versus latency Pareto frontier. By transitioning from a PCIe mesh to an NVLink Switch node, both throughput and latency improve, and further advancements…

…hyperscalers but now commercially available through NVIDIA-Intel platforms. Such integrations could provide tangible benefits in AI training throughput, better memory bandwidth alignment, and optimized inter-node scaling, reducing the complexity and…

…SMBs to scale with confidence using industry-standard designs. AI Edge-Ready Node: For organizations moving workloads closer to where data is generated, the Lenovo ThinkEdge SE100 with Scale Computing HyperCore introduces…

…Blackwell Server Edition GPUs, the solution debuts with S3-over-RDMA (Remote Direct Memory Access), providing an eightfold performance boost. The platform achieves 35GB/s per node on reads, scaling linearly to…