The SuperMicro MicroBlade family is made up of two key components: a wide selection of chassis configurations, and a multitude of dense-blade options. The MicroBlade enclosure comes in two primary flavors, one being a 3U unit that supports 14 server blades and the other a 6U unit that supports 28 server blades. Between those sizes there are varying power-configuration options that users can choose from depending on how the ultimate solution will be configured. The servers themselves cover a broad landscape, from single- or dual-processor Intel Xeon systems to ultra-dense blades that each carry four Intel Avoton-powered nodes. This allows Supermicro to reach high core-per-rack counts, upwards of 6,272 cores with 784 Avoton nodes in a 42U footprint. The variety of options provides a ton of flexibility for those who need dense compute for high-intensity applications, or for those who want to start small but know their compute demands will need to scale rapidly. In either case, the MicroBlade enclosures offer a simple deployment model, as well as a chassis management module (CMM) for remote access to blades, power supplies, cooling fans, and networking switches.
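The per-rack figure quoted above can be reproduced with some quick arithmetic. The sketch below assumes the whole 42U rack is filled with 6U enclosures (no space set aside for top-of-rack switches or PDUs) and that every blade is a quad-node Avoton blade with 8-core Atom C2750 processors, as listed in the specifications; the variable names are ours.

```python
# Back-of-the-envelope density check for a rack full of 6U MicroBlade enclosures.
# Assumption: all 42U are used for enclosures, all blades are quad-node C2750 blades.
RACK_UNITS = 42
CHASSIS_UNITS = 6
BLADES_PER_CHASSIS = 28
NODES_PER_BLADE = 4   # quad-node Avoton blade
CORES_PER_NODE = 8    # Atom C2750

chassis_per_rack = RACK_UNITS // CHASSIS_UNITS
nodes_per_chassis = BLADES_PER_CHASSIS * NODES_PER_BLADE
nodes_per_rack = chassis_per_rack * nodes_per_chassis
cores_per_rack = nodes_per_rack * CORES_PER_NODE

print(f"{chassis_per_rack} enclosures, {nodes_per_rack} nodes, {cores_per_rack} cores per rack")
```

Seven enclosures at 112 nodes each gives the 784 nodes and 6,272 cores mentioned above.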

In the StorageReview lab, we were supplied with a 6U chassis configuration (MBE-628E-820) populated with two different styles of single- and dual-node blades. Connecting it all together on one fabric was the Intel 1G/2.5G MBM-GEM-001 switch, with 1G internal access and 10/40Gb external connectivity.

With just four blades, the testing focused on management of the enclosure, as well as how the nodes (as configured) performed in our VMware environment with a virtualized MySQL TPC-C workload. Our four blade servers are also each a little different, highlighting the variety of CPU, SSD and RAM configurations. This comes in handy for pooling compute resources for specific workloads, or simply being flexible to support new technologies as they come to market.

SuperMicro MicroBlade Specifications

- Enclosure MBE-628E-820 (8x PWS):
  - Server Blade: Up to 28 hot-plug server blades
  - GbE Switch / Pass-Through Module: Up to 2 hot-swap MBM-GEM-001/003i/003S or MBM-XEM-001 switches
  - Management Module:
    - Up to 2 hot-swap Chassis Management Modules (CMM) providing remote KVM and IPMI 2.0 functionalities
    - Management module not included in the enclosure
  - Power Supply: Up to 8 hot-swap high-efficiency 2000W, N+1 or N+N redundant power supplies
  - Cooling Design: Up to 8 cooling fans
  - Dimensions (HxWxD): 10.43″ x 17.67″ x 36.10″ (265mm x 449mm x 917mm)
  - Available Models:
    - MBE-628E-820 – Enclosure chassis with eight high-efficiency 2000W power supplies
    - MBE-628E-420 – Enclosure chassis with four high-efficiency 2000W power supplies + four fan modules

- Atom C2750/C2550:
  - MBI-6418A-T7H/T5H
    - Node per 6U/3U: 112/56
    - SSD/HDD per 6U/3U: 112/56x 2.5” SATA3 SSD/HDD
    - Node per Blade: 4
    - Processor:
      - Intel Atom C2750 (T7H) 8-core, 2.4GHz, 20W
      - Intel Atom C2550 (T5H) 4-core, 2.4GHz, 14W
    - Memory Capacity: Up to 32GB DDR3-1600 ECC SO-DIMM in 2 DIMM slots
    - Drive Bay:
      - 1x 2.5” SATA3 HDD/SSD
      - 1 SATADOM
    - Network Connectivity: Dual-port 2.5GbE

- Xeon D UP Broadwell-DE
  - MBI-6118G-T41X
    - Node per 6U/3U: 28/14
    - SSD/HDD per 6U/3U:
      - 112/56x 2.5” SATA3 SSD
      - 56/28x 2.5” SATA3 HDD + 56/28 SSD
    - Node per Blade: 1
    - Processor: Intel Broadwell-DE SoC Xeon D-1541 8-core, 45W
    - Memory Capacity: Up to 128GB DDR4-2400 ECC VLP RDIMM in 4 DIMM slots
    - Drive Bay: 4x 2.5” SATA3 SSD (2 HDD/SSD + 2 SSD), 1 SATADOM
    - Network Connectivity: Dual-port 10GbE
  - MBI-6218G-T41X
    - Node per 6U/3U: 56/28
    - SSD/HDD per 6U/3U: 56/28x 2.5” SATA3 SSD/HDD
    - Node per Blade: 2
    - Processor: Intel Broadwell-DE SoC Xeon D-1541 8-core, 45W
    - Memory Capacity: Up to 128GB DDR4-2400 ECC VLP RDIMM in 4 DIMM slots
    - Drive Bay:
      - 1x 2.5” SATA3 HDD/SSD
      - 1 SATADOM
    - Network Connectivity: Dual-port 10GbE

- Xeon UP E3-1200 v5/v4/v3
  - MBI-6219G-T
    - Node per 6U/3U: 56/28
    - SSD/HDD per 6U/3U:
      - 112/56x 2.5” SATA3 SSD
      - 56/28x 2.5” SATA3 HDD
    - Node per Blade: 2
    - Processor: Intel E3-1200 v5 4-core, 25W-95W
    - Memory Capacity: Up to 64GB DDR4-2400 ECC VLP UDIMM in 4 DIMM slots
    - Drive Bay: 2x 2.5” SATA3 SSD or 1 HDD
    - Network Connectivity: Dual-port 1GbE
  - MBI-6118D-T4H/T2H
    - Node per 6U/3U: 28/14
    - SSD/HDD per 6U/3U:
      - 112/56x 2.5” SATA3 HDD/SSD
      - 56/28x 3.5” SATA3 HDD
    - Node per Blade: 1
    - Processor: Intel E3-1200 v4 w/ Iris Pro Graphics
    - Memory Capacity: Up to 32GB DDR3-1600 ECC VLP UDIMM in 4 DIMM slots
    - Drive Bay:
      - 2x 3.5” SATA3 HDD (T2H)
      - 4x 2.5” SATA3 HDD/SSD (T4H)
    - Network Connectivity: Dual-port 1GbE
  - MBI-6118D-T2/T4
    - Node per 6U/3U: 28/14
    - SSD/HDD per 6U/3U:
      - 112/56x 2.5” SATA3 HDD/SSD
      - 56/28x 3.5” SATA3 HDD
    - Node per Blade: 1
    - Processor: Intel E3-1200 v3
    - Memory Capacity: Up to 32GB DDR3-1600 ECC VLP UDIMM in 4 DIMM slots
    - Drive Bay:
      - 2x 3.5” SATA3 HDD (T2)
      - 4x 2.5” SATA3 HDD/SSD (T4)
    - Network Connectivity: Dual-port 1GbE

- Xeon DP E5-2600 v4/v3
  - MBI-6128R-T2X/T2
    - Node per 6U/3U: 28/14
    - SSD/HDD per 6U/3U: 56/28x 2.5” SATA3 SSD/HDD
    - Node per Blade: 1
    - Processor: Dual Intel E5-2600 v4/v3, up to 18-core, 120W
    - Memory Capacity: Up to 256GB DDR4-2400 ECC VLP RDIMM in 8 DIMM slots
    - Drive Bay: 2x 2.5” SATA3 HDD/SSD, 1 SATADOM
    - Network Connectivity:
      - Dual-port 10GbE (T2X)
      - Quad-port 1GbE (T2)

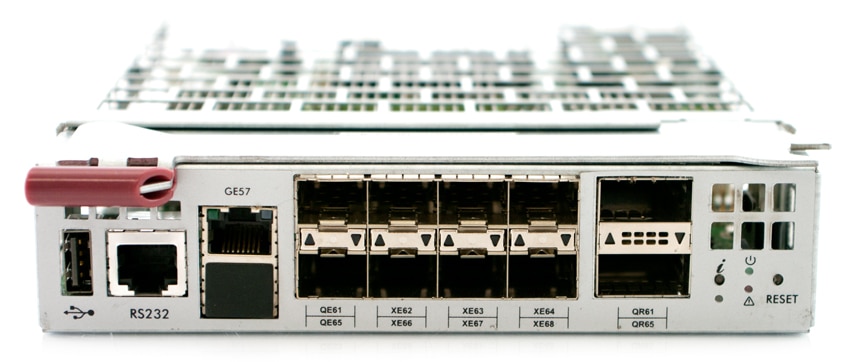

MicroBlade Switch Configurations

- Intel 1G/2.5G MBM-GEM-001
  - External Ports:
    - 2x40Gbps QSFP
    - 8x10Gbps SFP+
    - 1x1Gbps RJ45
  - Internal Ports: 56x2.5G/1G
  - Switch Chipset: Intel FM5224

- Broadcom 1G MBM-GEM-004
  - External Ports:
    - 4x10Gbps SFP+
    - 8x1Gbps RJ45
  - Internal Ports: 42x1G
  - Switch Chipset: Broadcom BCM56151

- Intel 10G MBM-XEM-001
  - External Ports:
    - 4x40Gbps QSFP
  - Internal Ports: 56x10G
  - Switch Chipset: Intel FM6348

- Broadcom 10G MBM-XEM-002
  - External Ports:
    - 2x40Gbps QSFP
    - 4x10Gbps SFP+
  - Internal Ports: 56x10G
  - Switch Chipset: Broadcom BCM56846
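One way to compare the switch modules listed above is to set their total internal bandwidth against their total external bandwidth. The following is a rough sketch built only from the port counts in the list; it assumes every listed external port (including the RJ45 ports) can carry uplink traffic and that all ports run at their highest listed speed, neither of which we have confirmed with Supermicro.

```python
# Rough internal-to-external bandwidth ratio per switch module.
# Port data is (count, Gbps) taken from the spec list above.
# Assumption: every external port is usable as an uplink at its listed speed.
SWITCHES = {
    "MBM-GEM-001": {"internal": [(56, 2.5)], "external": [(2, 40), (8, 10), (1, 1)]},
    "MBM-GEM-004": {"internal": [(42, 1)], "external": [(4, 10), (8, 1)]},
    "MBM-XEM-001": {"internal": [(56, 10)], "external": [(4, 40)]},
    "MBM-XEM-002": {"internal": [(56, 10)], "external": [(2, 40), (4, 10)]},
}


def total_gbps(ports):
    """Sum the bandwidth of a list of (count, Gbps) port groups."""
    return sum(count * speed for count, speed in ports)


def oversubscription(name):
    """Internal bandwidth divided by external bandwidth for one module."""
    switch = SWITCHES[name]
    return total_gbps(switch["internal"]) / total_gbps(switch["external"])


for name in SWITCHES:
    print(f"{name}: {oversubscription(name):.2f}:1")
```

A ratio above 1 means the blades can collectively generate more traffic than the uplinks can carry out of the chassis; under these assumptions, the 10G modules land well above 1 while the 1G/2.5G modules stay just below it.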

Design and build

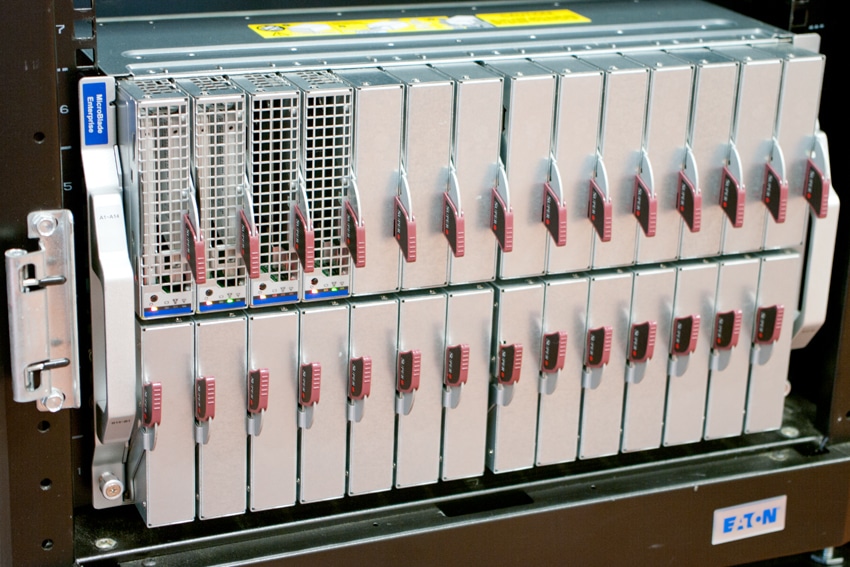

The SuperMicro X11 MicroBlade Solution is a fairly large 6U chassis, which can support up to 28 microblade servers. All along the front of the device are the handles for pulling out the server blades. There are 14 on top and 14 on bottom. To open them up, one simply needs to pull the handle up on the top row or down on the bottom row.

Switching around to the back of the device, power supplies and fans run across both the top and the bottom. The middle of the device is where the Chassis module and the 10G network switch reside, one set on either side.

MicroBlade Chassis Management

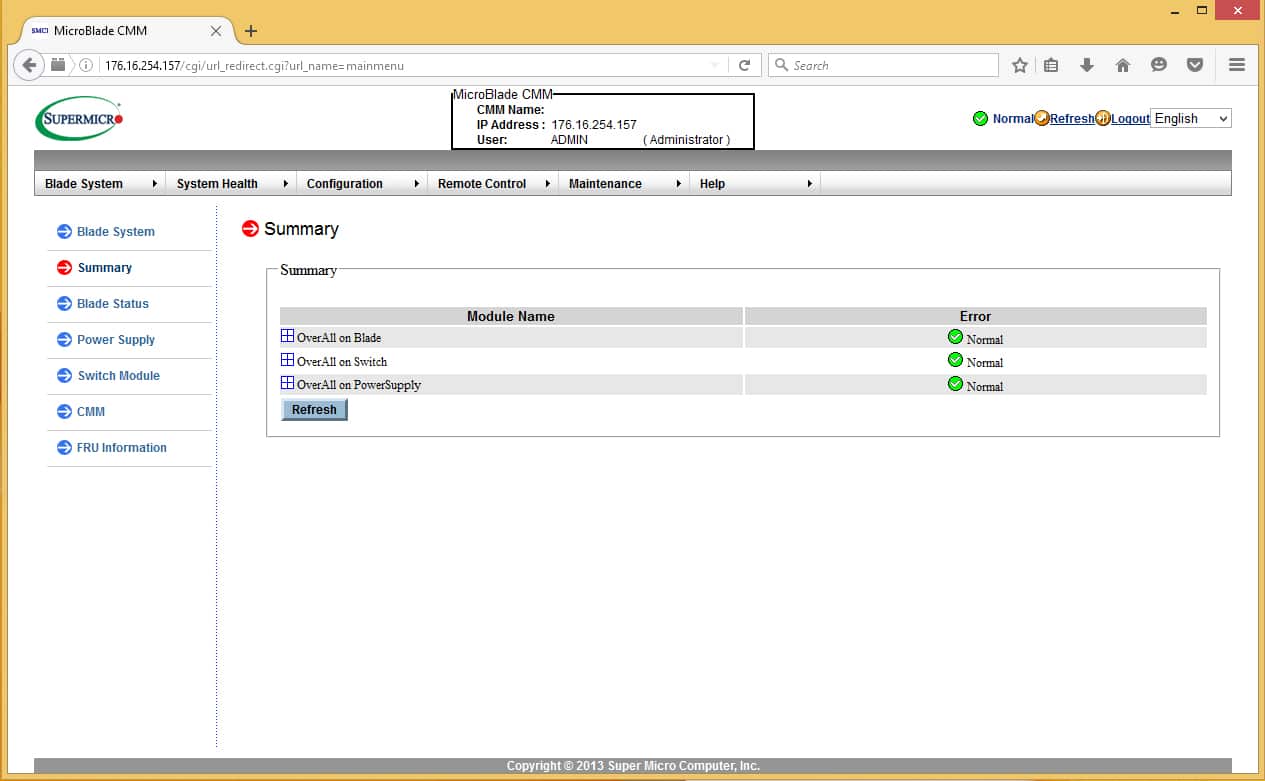

The SuperMicro MicroBlade chassis builds on the standard blade management interface that SuperMicro has used for quite some time. If you’ve used SuperMicro for anything in the last decade, you will no doubt be familiar with how the interface is laid out. The MicroBlade chassis ups the number of systems connected but remains similar in almost every other aspect, resulting in a smooth transition from the older systems. The system allows you to connect via a web-based interface, via a standalone application from SuperMicro called IPMITool, or from their SuperMicro Server Manager (SSM) platform designed to manage multiple systems in an enterprise. Today we will be focusing on the web-based interface as it requires no additional downloads.

The first page shows an overall health status of the system, the user that you are logged in as, and the IP address of the system that you are connected to. You can drill down into the OverAll Blade, Switch, and Power Supply areas to get more information on each component of the system. This is very helpful for figuring out which node or device could be contributing to errors in the system.

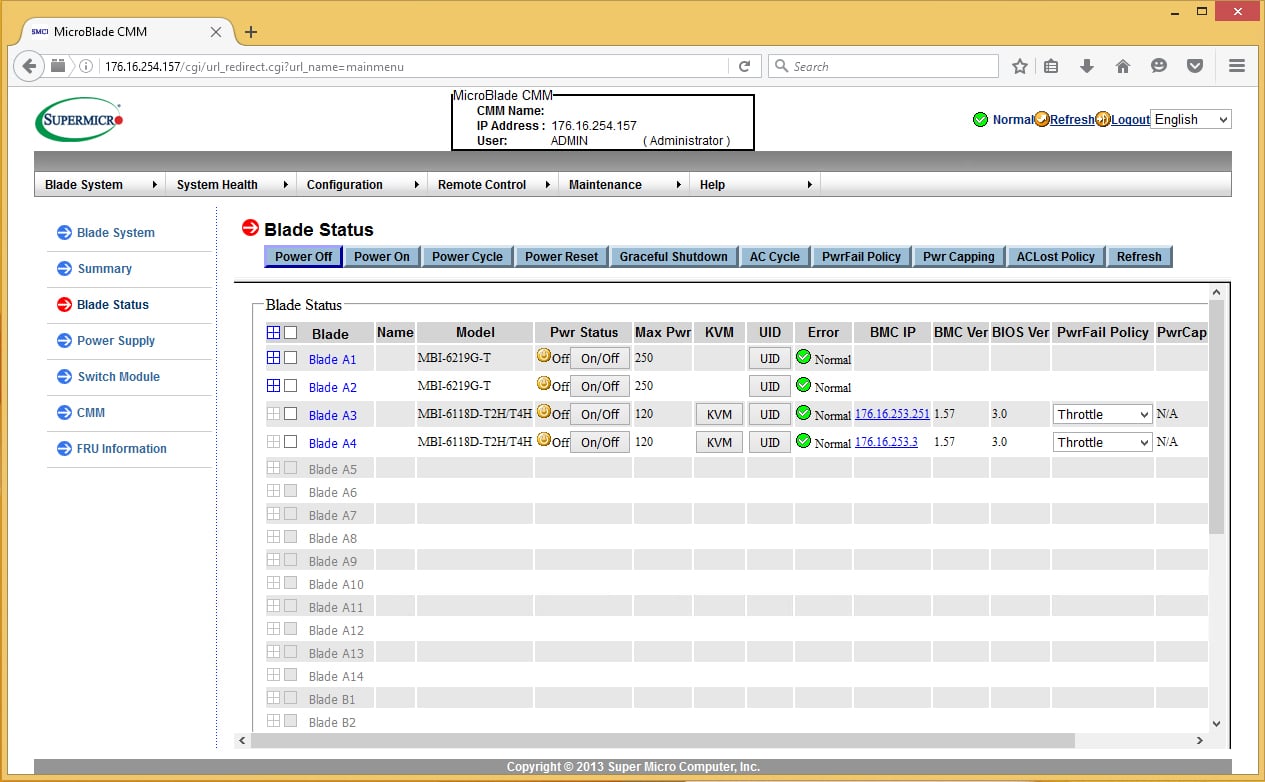

The Blade Status page allows you to modify several policies for a blade/node. You can power on or off a node, access the KVM, illuminate the UID (Unit identifier) LED, or set multiple policies regarding power failure behavior.

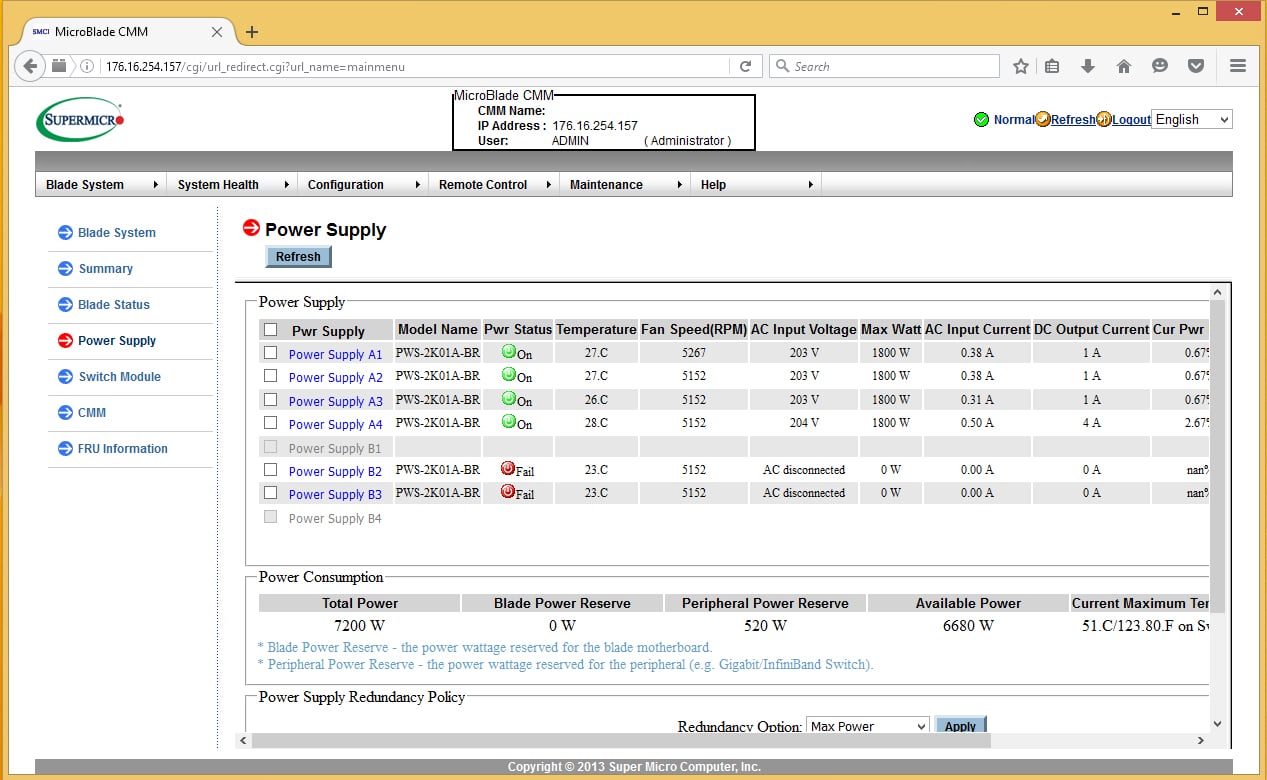

The Power Supply page gives you insight into how the power supplies in the system are performing. It displays temperature, fan speed, input voltage and a number of other power statistics that will help you determine whether the power supplies have sufficient headroom for more growth. You also have options to set redundancy for the power supplies. Available configurations include “Max Power,” N+1 and N+N. The Max Power configuration allows full power from all PSUs combined, allowing greater compute density. N+1 and N+N give significantly greater resiliency at the expense of power capacity. For people who are doing HPC work or distributed-computing work, the Max Power configuration would be great for getting the most density out of a rack. N+1 and N+N would be most useful for the majority of enterprise and service-provider users.
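To put the trade-off between the three modes in numbers, here is a simplified sketch using the fully populated enclosure (eight 2000W supplies). It assumes usable capacity is simply the nameplate rating of the supplies that are not held in reserve; the CMM’s actual power-budgeting logic may differ, and the function name is ours.

```python
# Simplified usable-capacity model for the three redundancy modes.
# Assumption: capacity = nameplate watts x number of non-reserved supplies.
PSU_WATTS = 2000
PSU_COUNT = 8


def usable_watts(mode, count=PSU_COUNT, watts=PSU_WATTS):
    """Return the power budget left after setting aside redundant supplies."""
    if mode == "max":
        active = count          # no supply held in reserve
    elif mode == "n+1":
        active = count - 1      # one spare supply
    elif mode == "n+n":
        active = count // 2     # half the supplies mirror the other half
    else:
        raise ValueError(f"unknown redundancy mode: {mode}")
    return active * watts


for mode in ("max", "n+1", "n+n"):
    print(f"{mode}: {usable_watts(mode)} W")
```

Under this model, N+1 gives up one supply’s worth of capacity while N+N halves the budget, which is why Max Power appeals when density matters more than resiliency.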

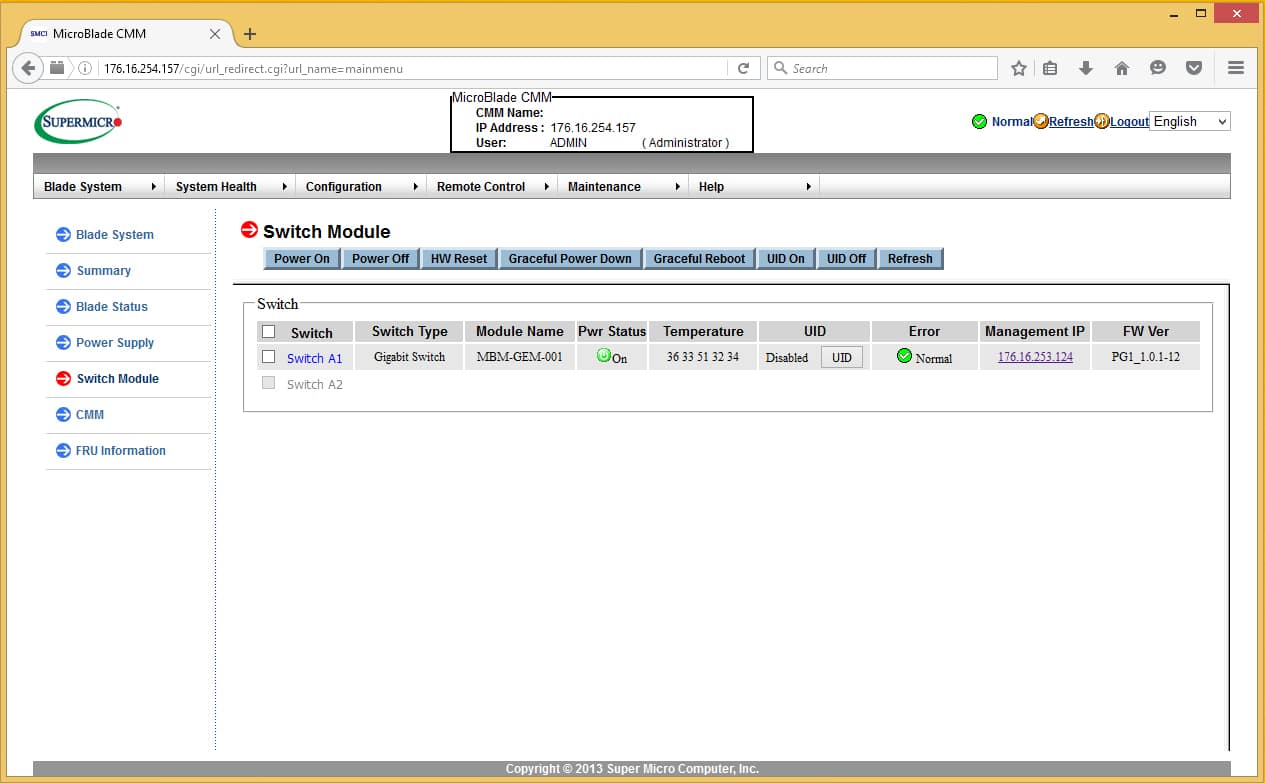

The Switch Module page is fairly barren, showing a little information about the type of switch, the management IP of the switch, and temperature status. There is very little configuration (other than the management IP) available on this page. This page seems like it could be dropped and combined with another page to reduce clutter in the primary interface.

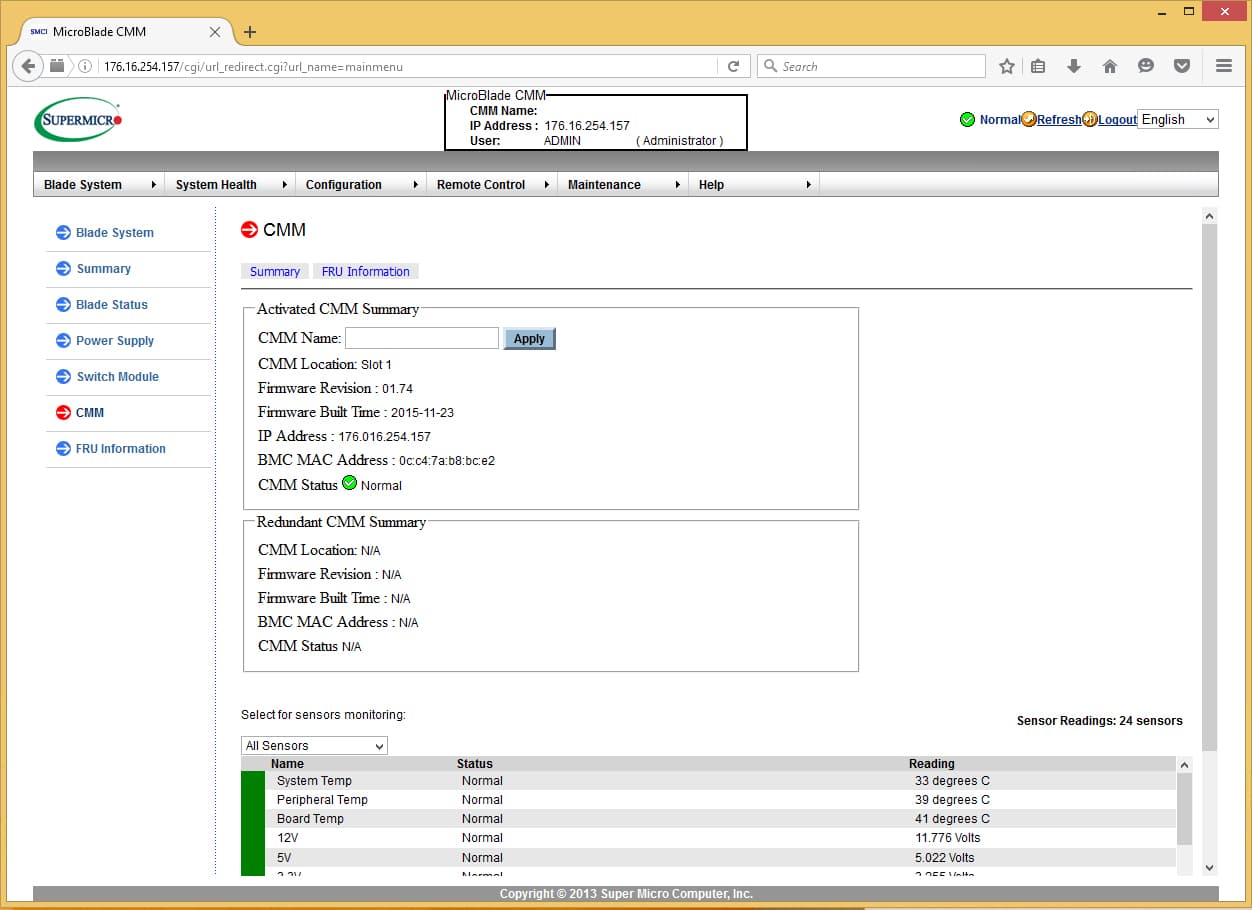

The CMM page, like the Switch Module page, is fairly barren of information. There is very little configuration information on this page other than the CMM name. Everything else is simply telling the status of the module. You cannot even power cycle the CMM from this page. This would be a good candidate for consolidation with the network switch page.

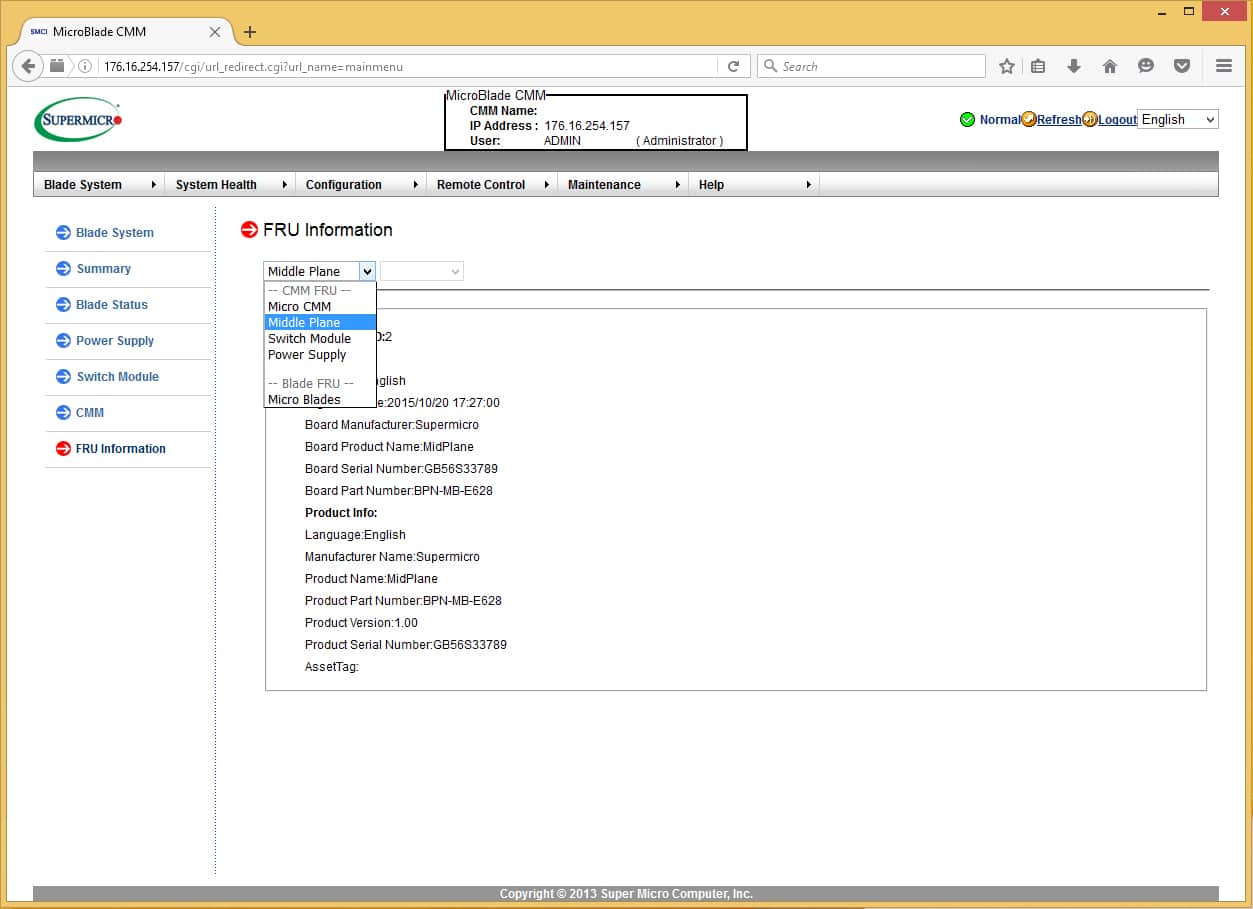

The FRU information lists pretty much every part in the system and the part number for ordering replacements or capacity adds. This is actually a really good idea for sparing. It’s quite practical having a place that tells you exactly what is installed in a system so you can go order identical replacements (or augment capacity) without having to refer to an order sheet, drive to a datacenter, or call someone to read off of the system.

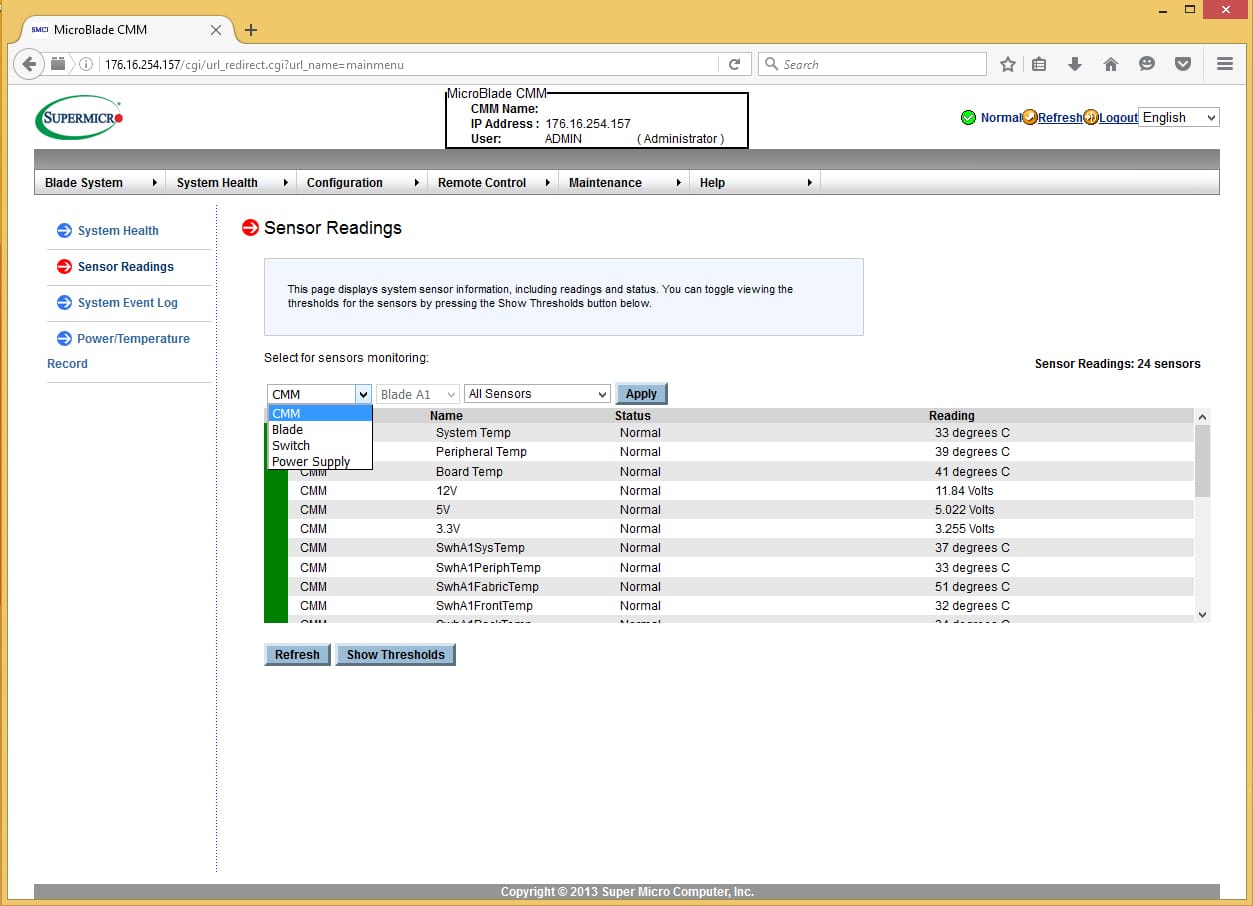

Moving on to System Health you will find a screen dedicated to all sensor readings of any module attached to the system. Power Supply, Blades, Network Modules and Chassis Management Modules are all selectable here for easy review of infrastructure parameters.

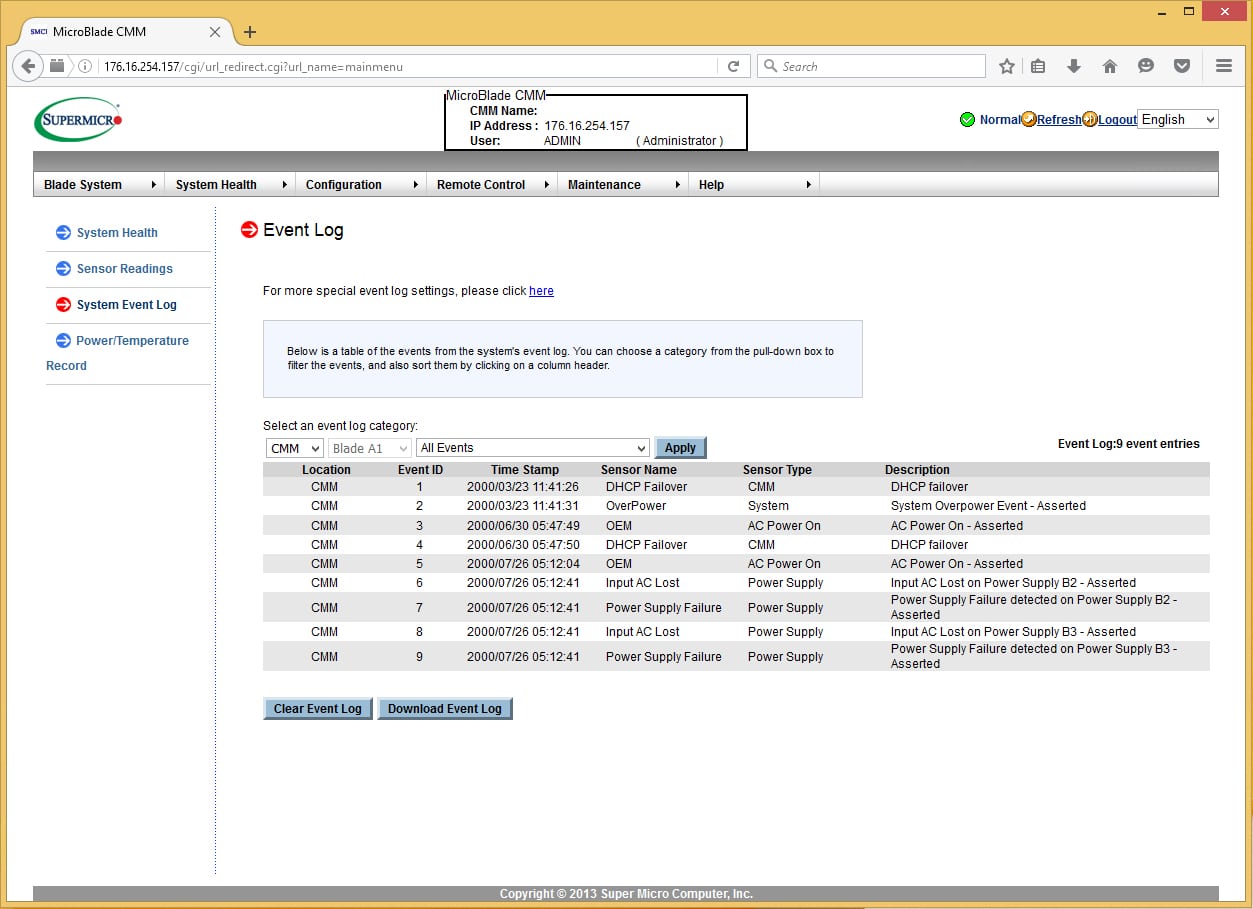

The System Event Log screen shows events logged by Blades, Nodes, and Chassis Management Modules. It appears as though Power Supplies log to the Chassis Management Module. No events from the Network Module were logged in the event log as the Network Module logs all of its events internally.

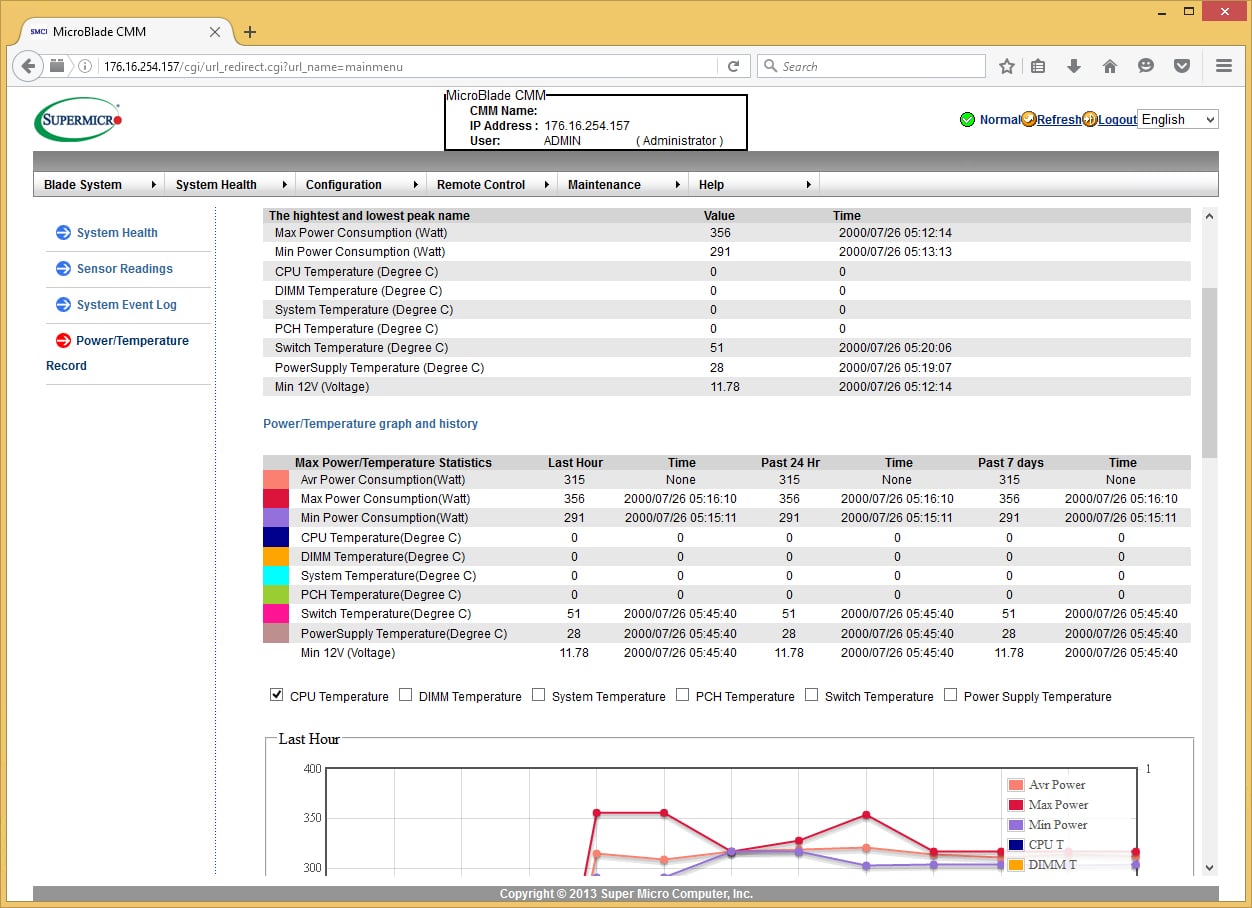

The Power/Temperature page shows exactly what you would expect: historical power and temperature readings from the system. You can select what items to show via check boxes, which are then displayed below. Graphs are presented for the last hour, the last day, and the last week of data points. There is also an option to download the entire record in CSV format that you can import into other systems for analysis.
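Once the record is exported, it can be summarized with a few lines of Python. We did not document the column layout of the CMM’s CSV export, so the header names (`timestamp`, `power_w`, `temp_c`) and the sample rows below are made up purely for illustration; adjust them to match the real file.

```python
# Summarize a power/temperature CSV export.
# Assumption: the column names and sample values are hypothetical placeholders,
# not the actual format produced by the CMM.
import csv
import io
from statistics import mean

SAMPLE = """timestamp,power_w,temp_c
00:00,1180,27
00:05,1225,28
00:10,1310,29
"""


def summarize(csv_text):
    """Return sample count, average/peak power and peak temperature."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    power = [float(row["power_w"]) for row in rows]
    temps = [float(row["temp_c"]) for row in rows]
    return {
        "samples": len(rows),
        "avg_power_w": mean(power),
        "peak_power_w": max(power),
        "peak_temp_c": max(temps),
    }


summary = summarize(SAMPLE)
print(summary)
```

For a real export, read the file with `open(path, newline="")` and pass its contents to `summarize`.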

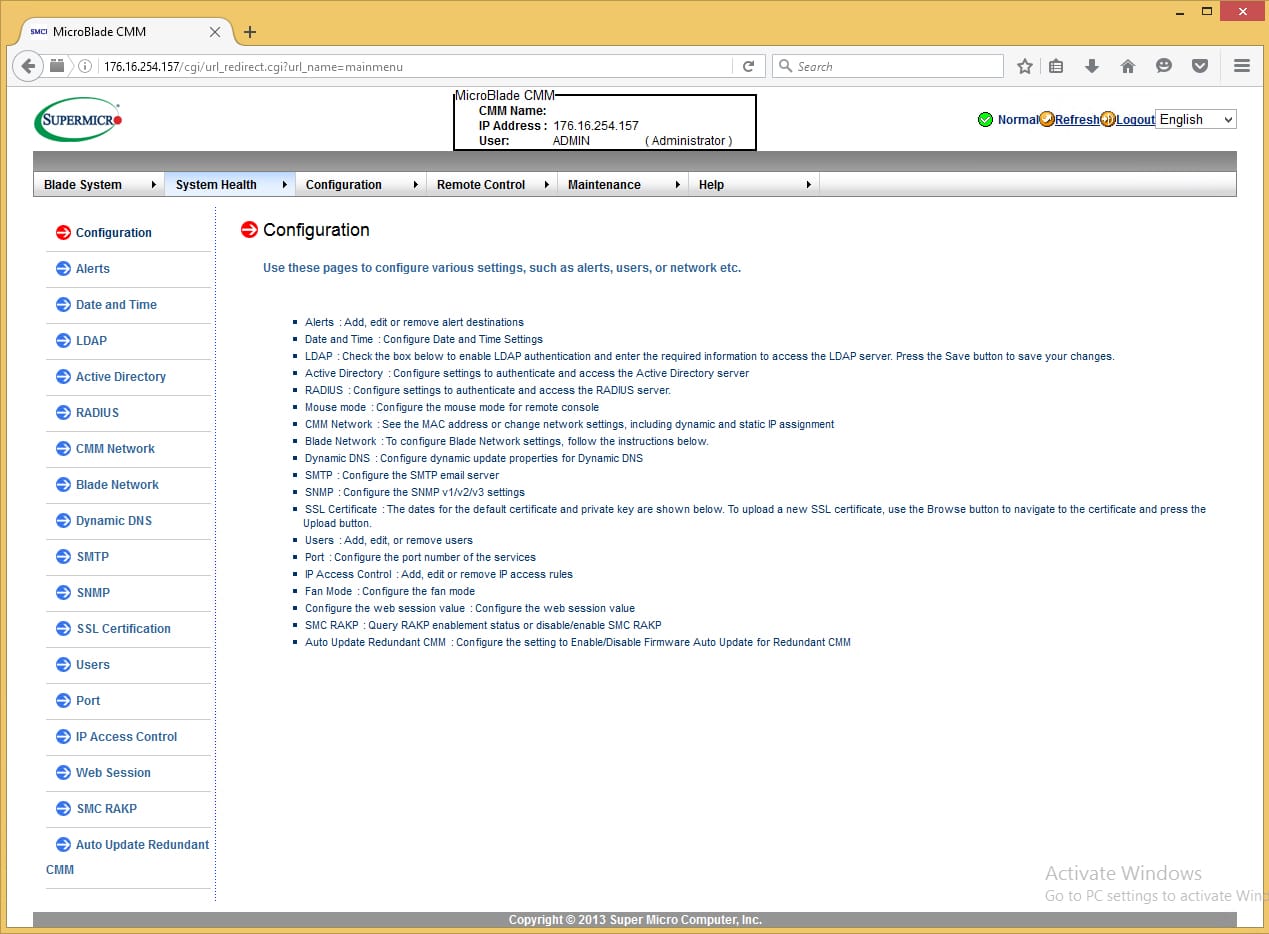

The next area that we will look at is the Configuration page. This page contains nearly all of the configuration variables that you will need to set before you are ready for production. These include Email Alerts, Date and Time, LDAP integration, Active Directory, RADIUS, Network configuration for blades and Chassis Management Modules, Dynamic DNS, SMTP server, SNMP, SSL Certificates, User accounts, Web Service Ports, IP Access control, Session timeouts, SMC RAKP (Remote Authenticated Key-Exchange Protocol), and Chassis Management Module auto-update settings. A specific note about SNMP: the web interface supports SNMP read and write communities, but you cannot access the SNMP Trap configurations. To enable SNMP trapping you need to connect using the IPMITool software or the SSM software. Overall, it appears as though nearly everything that needs to be configured on this system is accessible on this page and its sub-pages.

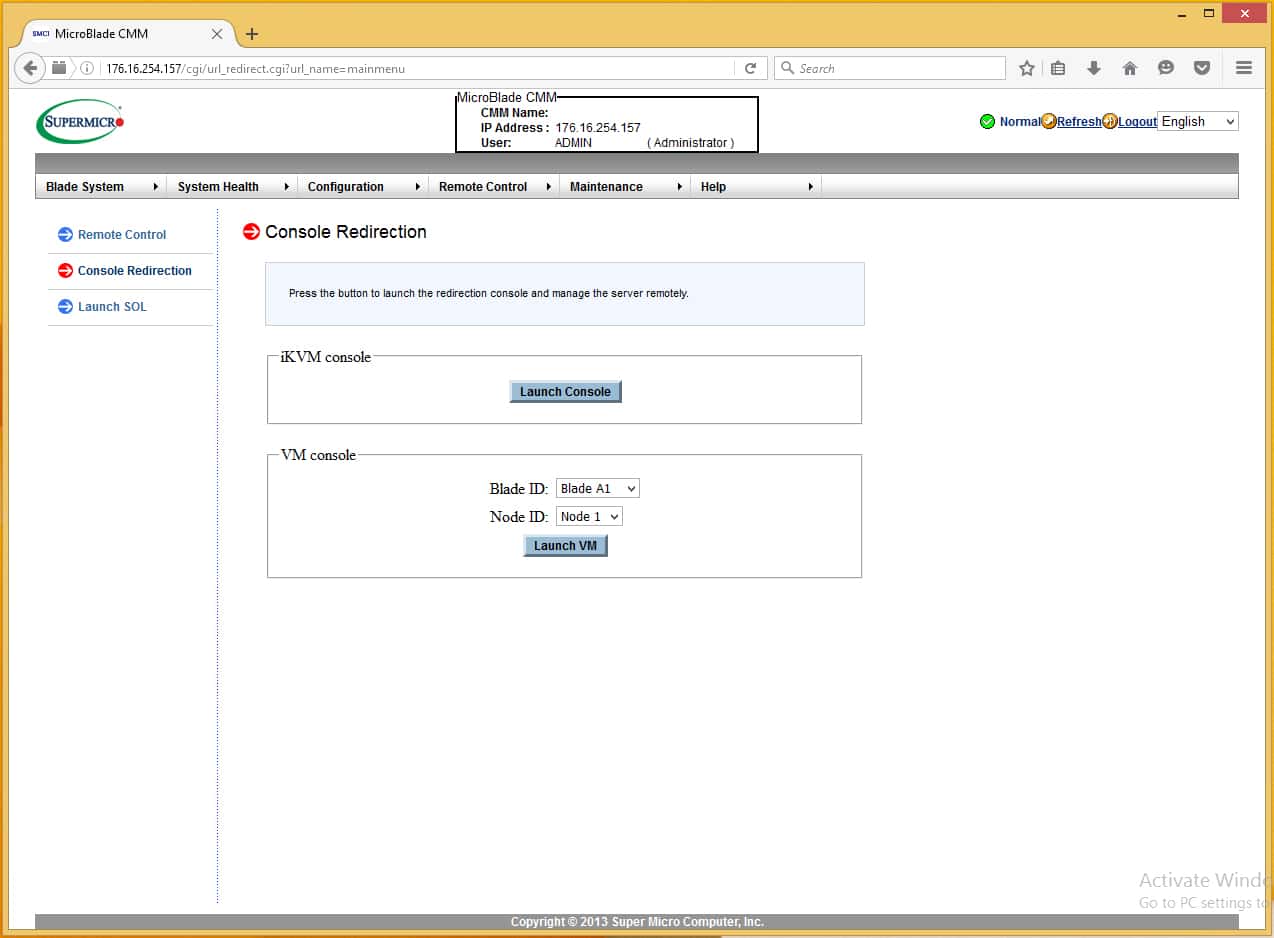

The Remote Control area of the user interface is probably the most used part of the interface for the MicroBlade system. From here you can launch the iKVM Java application to get a remote console to any system in the MicroBlade system. You can also launch the Virtual Media application to remote mount media to any system. The two applications are separate. Users who are familiar with the VMware console are accustomed to having virtual media accessible from the same window as the virtual console. This is not how this application behaves. You are given options to mount local disks (hard drives or optical media) or network images. These mounts (even network images) do not persist after the Java application closes. This is somewhat of a drawback when working on something that may take a while to install (think unattended installs).

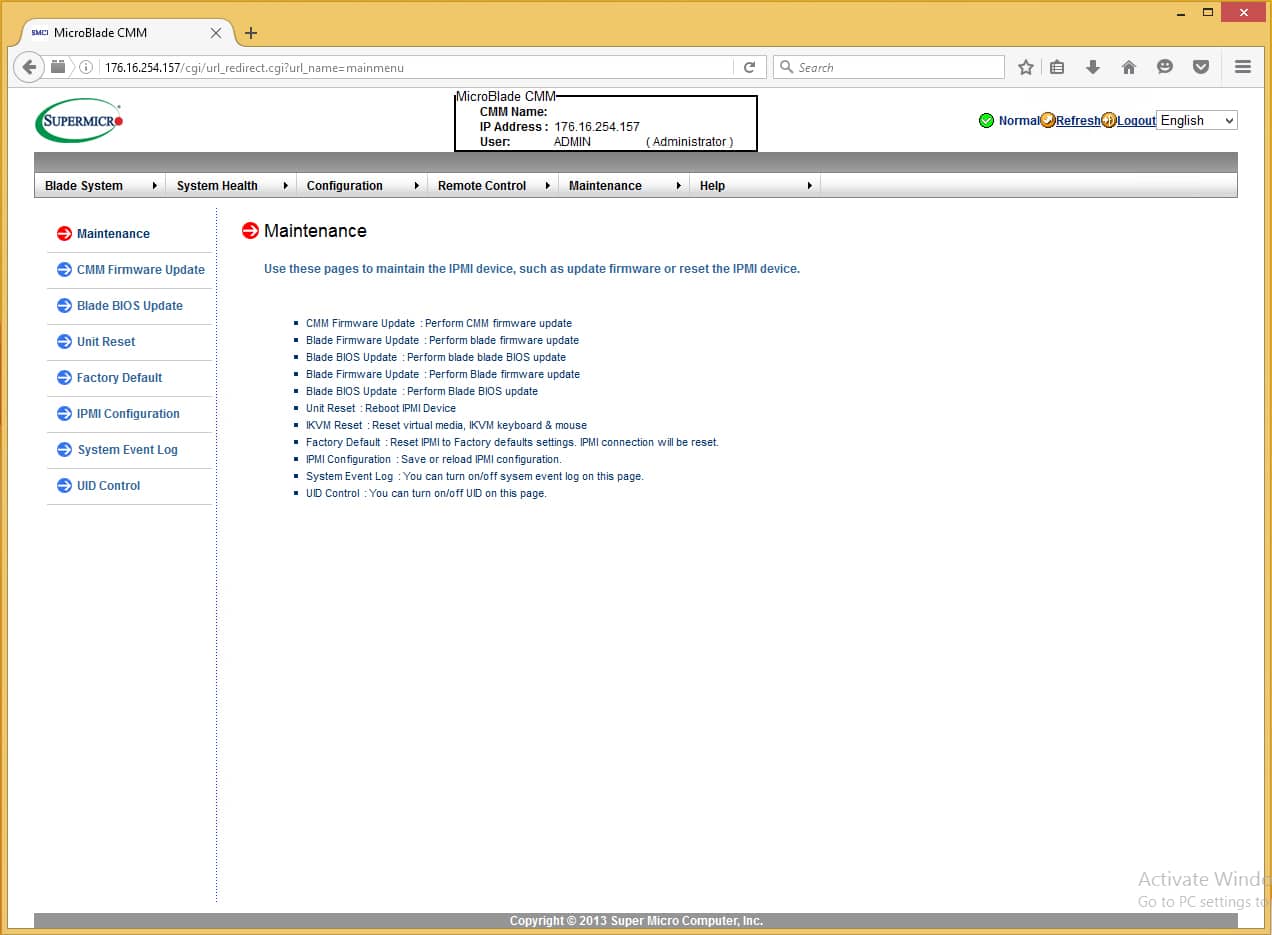

The final screen is the Maintenance screen. This page allows you to make updates to most of the critical elements of the system, including the Chassis Management Module, Blades, System Resets, Config resets, and IPMI configuration reloads.

In closing, the system is primarily updated to provide access to the significantly higher density of blades in the MicroBlade architecture, versus the original SuperBlade systems. In this respect, it succeeds in providing an interface that anyone would be able to familiarize themselves with quickly to manage this extremely dense system. There could be improvements in the consolidation of some configuration items to reduce the number of unique screens that are presented in the system. Another thing that could be improved upon is the use of Java for the interface and the lack of dynamic screens. When making changes to many display selections, you have to click “apply” to view the changes rather than the page updating dynamically when a new selection is made (specifically in the System Health area). Overall usability is still maintained for most of the system, and for many shops, this system will work extremely well for management.

Application Testing

We put the two node configurations we were shipped under our Sysbench workload to see how they stacked up. That said, the two nodes differed in several ways besides CPU that directly impacted performance. The MBI-6118D-T4H MicroBlade came equipped with the E3-1285L v4 CPU, 32GB of DRAM, and Intel S3700 400GB SSDs. The MBI-6219G-T MicroBlade, on the other hand, came with the E3-1275 v5 CPU, 64GB of DRAM, and Intel S3500 480GB SSDs. As we’ve noted differences between these SSDs individually in the past, CPU differences alone won’t be the only drivers of performance in the following benchmark.

With our lowest DRAM setting being 32,000MB per VM for our Sysbench test, we were able to run one VM on the MBI-6118D-T4H MicroBlade, while the MBI-6219G-T with 64GB installed allowed us to run both one and two VMs. In each blade we used the supplied Intel SSDs to act as the database datastore for our tests. In the case of the MBI-6219G-T where we tested two VMs, we used two SSDs, while on the MBI-6118D-T4H where we tested only one VM, we used just one SSD.

Each Sysbench VM is configured with three vDisks, one for boot (~92GB), one with the pre-built database (~447GB) and the third for the database under test (270GB). The boot drive and pre-built-database remained on shared storage in our lab. From a system resource perspective, we configured each VM with 8 vCPUs, 32,000MB of DRAM and leveraged the LSI Logic SAS SCSI controller.
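The VM counts per blade follow directly from the memory footprint. As a rough sketch, assuming 1GB of installed DRAM equals 1,024MB and ignoring any memory the hypervisor keeps for itself (a simplification; the `reserved_mb` parameter is a hypothetical knob for modeling that overhead):

```python
# How many 32,000MB Sysbench VMs fit in a blade's installed DRAM.
# Assumptions: 1GB = 1,024MB; hypervisor overhead is ignored unless
# reserved_mb is set (hypothetical parameter, not a measured value).
VM_MB = 32_000


def max_vms(installed_gb, vm_mb=VM_MB, reserved_mb=0):
    """Whole number of VMs that fit in the installed memory."""
    installed_mb = installed_gb * 1024
    return (installed_mb - reserved_mb) // vm_mb


print("MBI-6118D-T4H (32GB):", max_vms(32))
print("MBI-6219G-T (64GB):", max_vms(64))
```

This lines up with the one-VM and two-VM test cases described above.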

Sysbench Testing Configuration (per VM)

- CentOS 6.3 64-bit

- Percona XtraDB 5.5.30-rel30.1
  - Database Tables: 100
  - Database Size: 10,000,000
  - Database Threads: 32
  - RAM Buffer: 24GB

- Test Length: 3 hours
  - 2 hours preconditioning 32 threads
  - 1 hour 32 threads

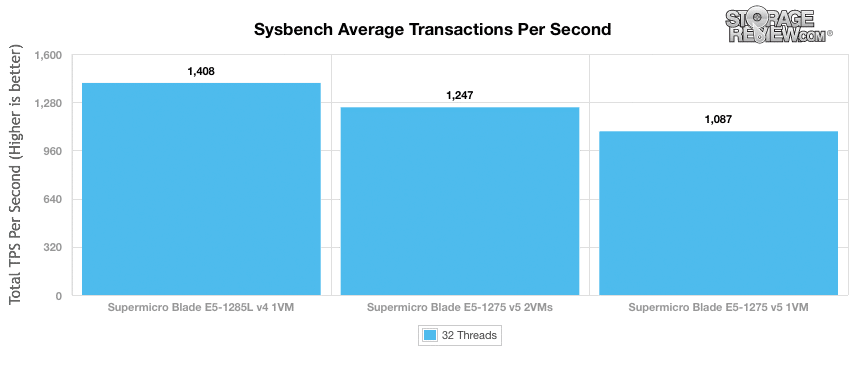

Our Sysbench test measures average TPS (Transactions Per Second), average latency, as well as average 99th percentile latency at a peak load of 32 threads. Looking at average TPS, we found that the Supermicro Blade E3-1285L v4 with one VM was able to hit 1,408 TPS. The Supermicro Blade E3-1275 v5 with one VM was able to hit 1,087 TPS and with two VMs, the same blade hit 1,247 TPS.

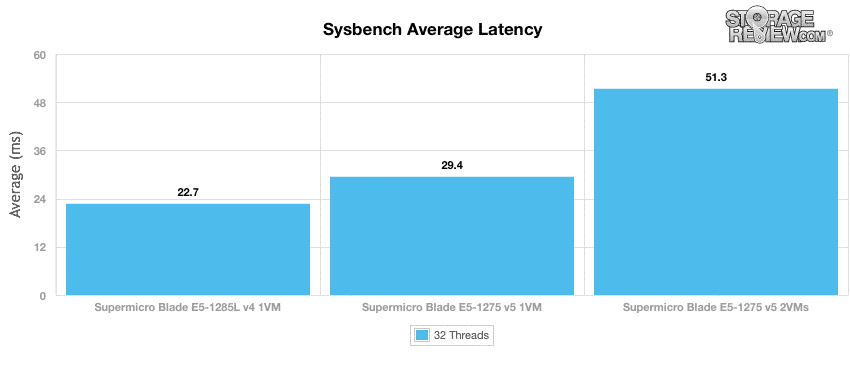

With average latency, the Supermicro Blade E3-1285L v4 with one VM had an average latency of only 22.7ms. Looking at the Supermicro Blade E3-1275 v5 with one VM, we saw an average latency of 29.4ms and with two VMs, the E3-1275 v5 blade gave us an average latency of 51.3ms.

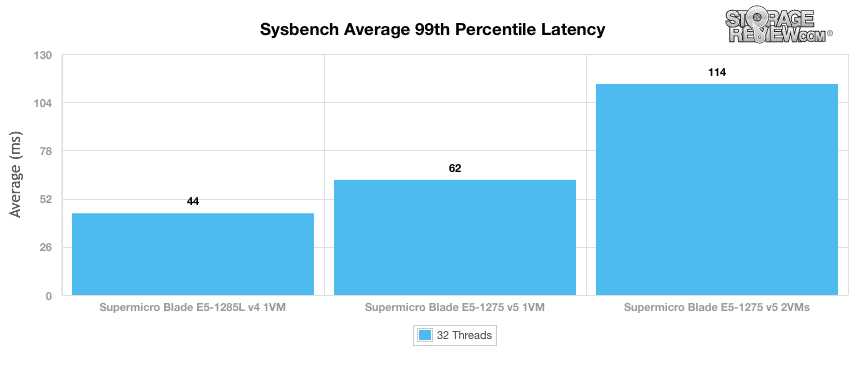

In terms of our worst-case MySQL latency scenario (99th percentile latency), the Supermicro blades again performed well with the E3-1285L v4 with one VM having a latency of 44ms. The Supermicro Blade E3-1275 v5 with one VM had a latency of 62ms, and 114ms with two VMs.
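For readers who prefer relative numbers, the figures above can be turned into percentage differences. The sketch below only restates the measured results; the blades are labeled with the E3 model names from the configuration description, and the dictionary layout and helper name are ours.

```python
# Relative comparison of the Sysbench results reported above.
RESULTS = {
    "E3-1285L v4, 1 VM": {"tps": 1408, "avg_ms": 22.7, "p99_ms": 44},
    "E3-1275 v5, 1 VM": {"tps": 1087, "avg_ms": 29.4, "p99_ms": 62},
    "E3-1275 v5, 2 VMs": {"tps": 1247, "avg_ms": 51.3, "p99_ms": 114},
}


def pct_change(new, base):
    """Percentage change of `new` relative to `base`."""
    return (new - base) / base * 100


base = RESULTS["E3-1275 v5, 1 VM"]
for name, result in RESULTS.items():
    print(
        f"{name}: TPS {pct_change(result['tps'], base['tps']):+.1f}%, "
        f"avg latency {pct_change(result['avg_ms'], base['avg_ms']):+.1f}%, "
        f"99th pct latency {pct_change(result['p99_ms'], base['p99_ms']):+.1f}%"
    )
```

Using the E3-1275 v5 single-VM run as the baseline, the E3-1285L v4 blade delivers roughly 30% more TPS, while adding a second VM on the E3-1275 v5 raises TPS by about 15% at the cost of a roughly 74% increase in average latency. Keep in mind that the blades also differ in DRAM and SSDs, so these gaps are not attributable to the CPUs alone.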

Conclusion

Coming in either a 3U or a more expansive 6U configuration, the Supermicro X11 MicroBlade solution offers a multitude of blade options that, in turn, offer a variety of densities and CPUs. The 3U unit gives customers the ability to support up to 14 blades or 56 nodes, and the 6U unit doubles that. This setup makes it very easy for users to deploy multiple blades. The quick deployment of servers is ideal for customers who need to start off small and anticipate rapid growth at some point. Given the number and variety of server blades that can be used, Supermicro offers varying power-configuration options depending on how the solution will be configured, as well as chassis management for ease of managing the server blades.

The web-based management offered on the SuperMicro X11 MicroBlade Chassis offers a wide range of features for users to effectively manage and control the platform. From a features standpoint Supermicro easily has most users covered, although from an ease of use or visual standpoint the interface appears dated and lacking some refinement. Supermicro also relies on Java, whereas other vendors such as Dell and Cisco have moved on with HTML5 support this year. For many users this won’t be a problem, but broader device compatibility and cleaner interfaces are appreciated by IT administrators.

Depending on the overall performance demands of each customer, there is a wide range of blade options offered. Supermicro shipped us two embedded CPU variants, while customers can configure up to dual-proc E5-2600-series versions if they need to. Supermicro provided us with two different server blades (the E3-1285L v4 and the E3-1275 v5) that we ran through our Sysbench test, with one VM for the E3-1285L v4 and, thanks to its additional DRAM, either one or two VMs for the E3-1275 v5. In our throughput test we saw a score as high as 1,408 TPS with the E3-1285L v4. The E3-1275 v5 blade gave us 1,087 TPS with one VM and 1,247 TPS with two VMs. With average latency, we saw 22.7ms for the E3-1285L v4 and the E3-1275 v5 gave us 29.4ms with one VM and 51.3ms with two VMs. Looking at worst-case latency, both blade servers gave fairly impressive performances, with the E3-1285L v4 having a latency of only 44ms and the E3-1275 v5 having a latency of only 62ms with one VM and 114ms with two VMs.

Pros

- Dense enclosure supports 28 blades or 112 nodes in 6U

- Management software makes it easy to pool and deploy resources

- Wide range of compute and networking options offered

Cons

- Chassis management not single pane of glass and lacks HTML5 support

The Bottom Line

The SuperMicro X11 MicroBlade chassis and blades provide an amazingly dense platform for a variety of use cases, enabling enterprises to start small and grow or deploy up to 112 nodes in a single 6U chassis.
