The Dell EMC VxRail family of appliances is hyper-converged infrastructure (HCI) underpinned by VMware vSAN. VxRail has long been the lead product when VMware talks about vSAN, as the concept of deploying and managing the VxRail appliance is appealing to many. Of course, Dell EMC and others sell vSAN Ready Nodes for those who want a little more control over server configuration. VxRail isn’t new to the lab; in early 2017 we wrote about features such as the streamlined deployment and rigorous compatibility testing that Dell EMC brings to the table. Much has changed since then, most notably that Dell EMC has migrated from whitebox servers to PowerEdge servers. This is a significant change, because PowerEdge servers bring additional management and reliability features that VxRail appliances can benefit from, further strengthening the Dell EMC/VMware value proposition when weighing the VxRail appliance against Ready Nodes or roll-your-own options.

In this review we’re looking at a typical four-node configuration of Dell EMC VxRail P570F appliances. Dell EMC offers quite a few configurations of VxRail, and the nomenclature can get a little cumbersome. The P Series units are typically more performance-oriented and are based on single node 2U PowerEdge R740xd servers. There are dozens of configuration options available including single or dual processor systems, SATA, SAS and NVMe (for cache) drive support, RAM configurations up to 3TB per node and networking up to 25GbE. The VxRail P570 is a hybrid configuration whereas the P570F is the all-flash variant we deployed for this review.

The P570F appliances under review each include dual Intel Xeon Gold 6132 CPUs (14-core, 2.6GHz), 384GB of RAM, six 3.84TB read-intensive SSDs for capacity and two 800GB write-intensive SSDs for cache. Each node has two disk groups, each consisting of one cache drive backed by three of the capacity SSDs. Connectivity between nodes is handled via Intel X710 10GbE cards.
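As a rough illustration of what that drive layout adds up to, the short sketch below totals raw capacity and cache per node and across the four-node cluster. It uses only the drive counts and sizes quoted above; the variable names are our own, and the results are raw figures before any vSAN storage policy or filesystem overhead is taken into account.

```python
# Raw capacity arithmetic for the reviewed configuration (decimal TB/GB, as
# quoted for the drives). Names are illustrative, not from any VxRail tool.
NODES = 4
DISK_GROUPS_PER_NODE = 2
CAPACITY_SSDS_PER_GROUP = 3
CAPACITY_SSD_TB = 3.84
CACHE_SSD_GB = 800  # one cache drive per disk group

capacity_per_node_tb = DISK_GROUPS_PER_NODE * CAPACITY_SSDS_PER_GROUP * CAPACITY_SSD_TB
cache_per_node_gb = DISK_GROUPS_PER_NODE * CACHE_SSD_GB
cluster_capacity_tb = NODES * capacity_per_node_tb

print(f"Raw capacity per node: {capacity_per_node_tb:.2f} TB")
print(f"Cache per node: {cache_per_node_gb} GB")
print(f"Raw cluster capacity: {cluster_capacity_tb:.2f} TB")
```

Usable capacity will be lower than these raw totals and depends on the protection policy chosen in vSAN.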

Dell EMC VxRail P570F Specifications

| Specification | Value |
| --- | --- |
| Form Factor | 2U |
| CPU | Intel Xeon Scalable Processors |
| CPU Sockets | Single or dual |
| CPU cores | 8-56 |
| CPU frequency | 1.7GHz-3.6GHz |
| RAM | 64GB-3,072GB |
| Cache SSD | 400GB-1.6TB SAS; 800GB-1.6TB NVMe |
| Flash Storage | 1.92TB-76.8TB SAS or SATA |
| Drive Bays | 24 x 2.5” |
| Max disk groups | 4 |
| Max nodes per cluster | 64 |
| Min nodes per cluster | 3 |
| Ports | 2×25 GbE SFP28 or 4×10 GbE RJ45 or 4×10 GbE SFP+ or 4×1 GbE RJ45; 1×1 GbE iDRAC9 |
| Optional ports | Up to 16×10 GbE RJ45 or up to 16×10 GbE SFP+ or up to 8×25 GbE SFP28 |
| Power | |
| Dual Redundant PSU | 1100W 100V – 240V AC; 1100W -48V DC; 1600W 200V – 240V AC |
| Cooling fans | 4 or 6 |
| Temperature | |
| Operating | 10°C to 30°C (50°F to 86°F) |
| Non-operating | -40°C to +65°C (-40°F to +149°F) |
| Relative Humidity | 10% to 80% (non-condensing) |
| Physical dimensions | 86.8mm/3.42in H; 434mm/17.09in W; 678.8mm/26.72in D; 28.1kg/61.95lb |

Build and Design

The Dell EMC VxRail P570F is a 2U HCI node based off the PowerEdge R740xd that comes with one of the company’s stylish new honeycomb bezels (ours is the prior generation) as seen in this mock-up.

Beneath the bezel are the 24 2.5” drive bays. On the left are the health, ID, and status LEDs. On the right are the power button, VGA port, iDRAC micro-USB port, and two USB 2.0 ports.

Swinging around to the rear, one can easily see that there is plenty of room for expansion cards. The bottom right has dual PSUs. In the bottom center there are four 10GbE SFP+ ports and, going to the left, two USB 3.0 ports, a VGA port, a serial port and a 1GbE RJ45 iDRAC port.

The top easily pops off for access to the CPUs and RAM, or to add on more network connectivity or storage in the rear of the device.

Management

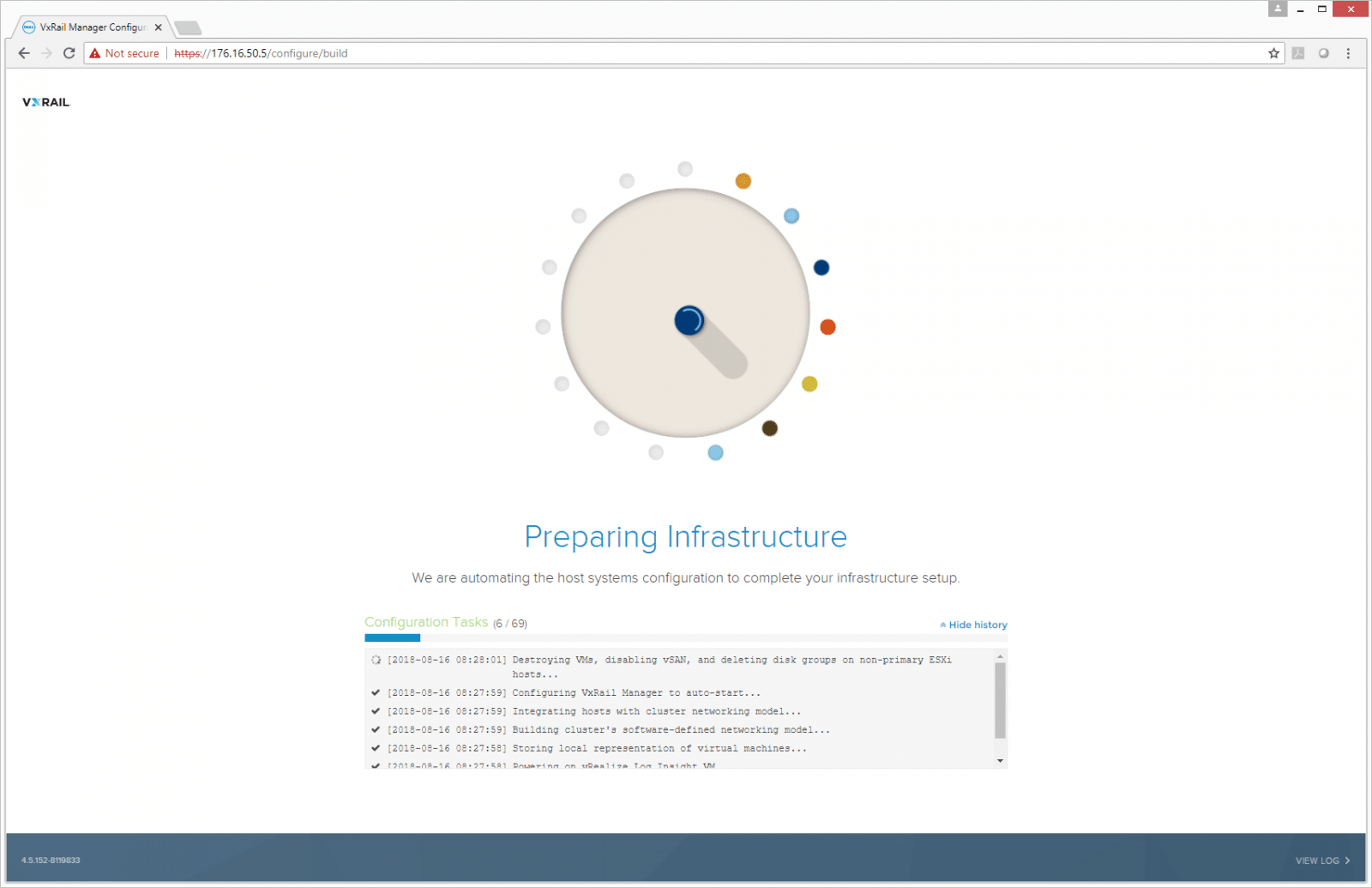

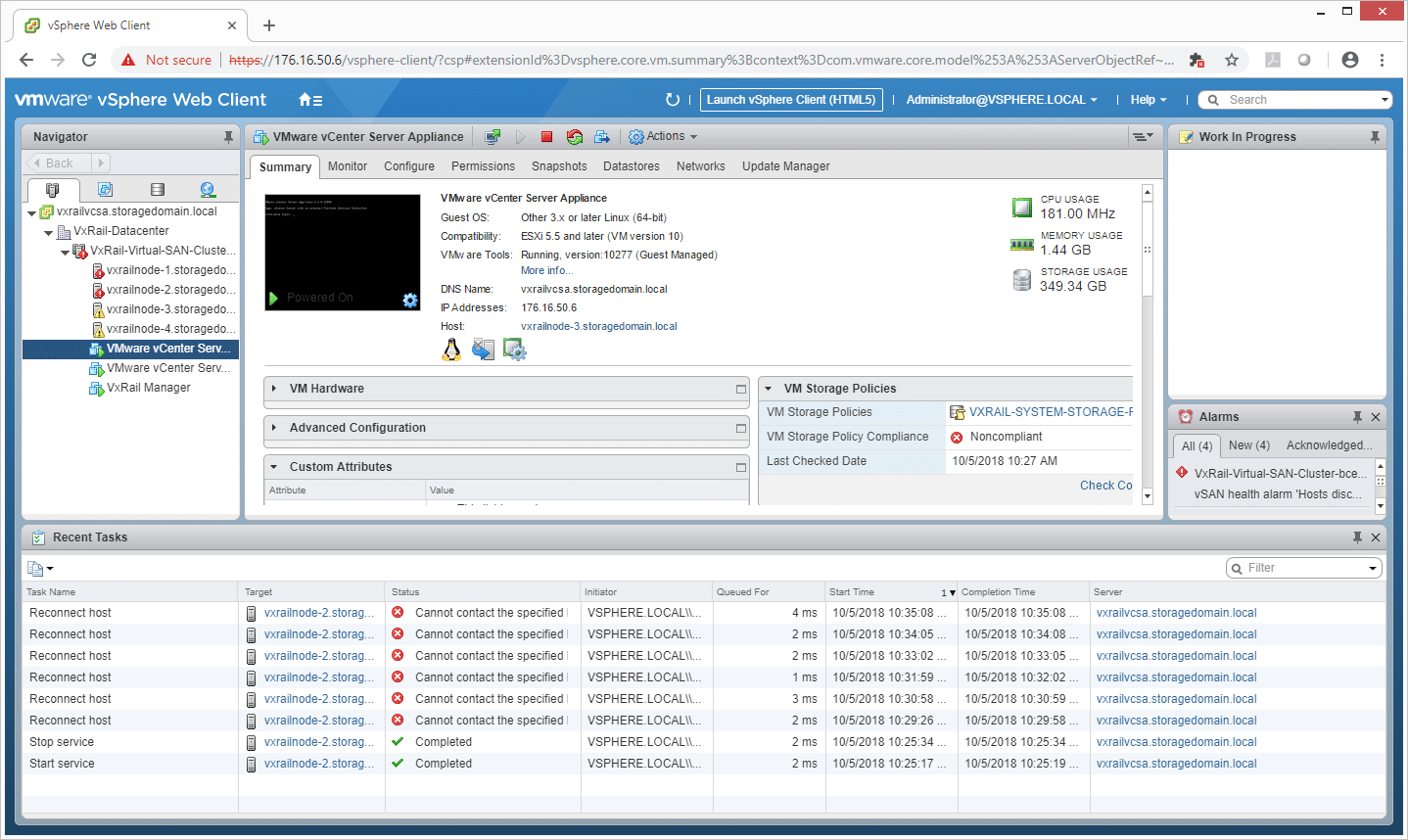

A big selling point for VxRail is ease of HCI deployment and here the Dell EMC VxRail P570F didn’t disappoint. The VxRail quickly prepared the infrastructure, eliminating anything that it didn’t need.

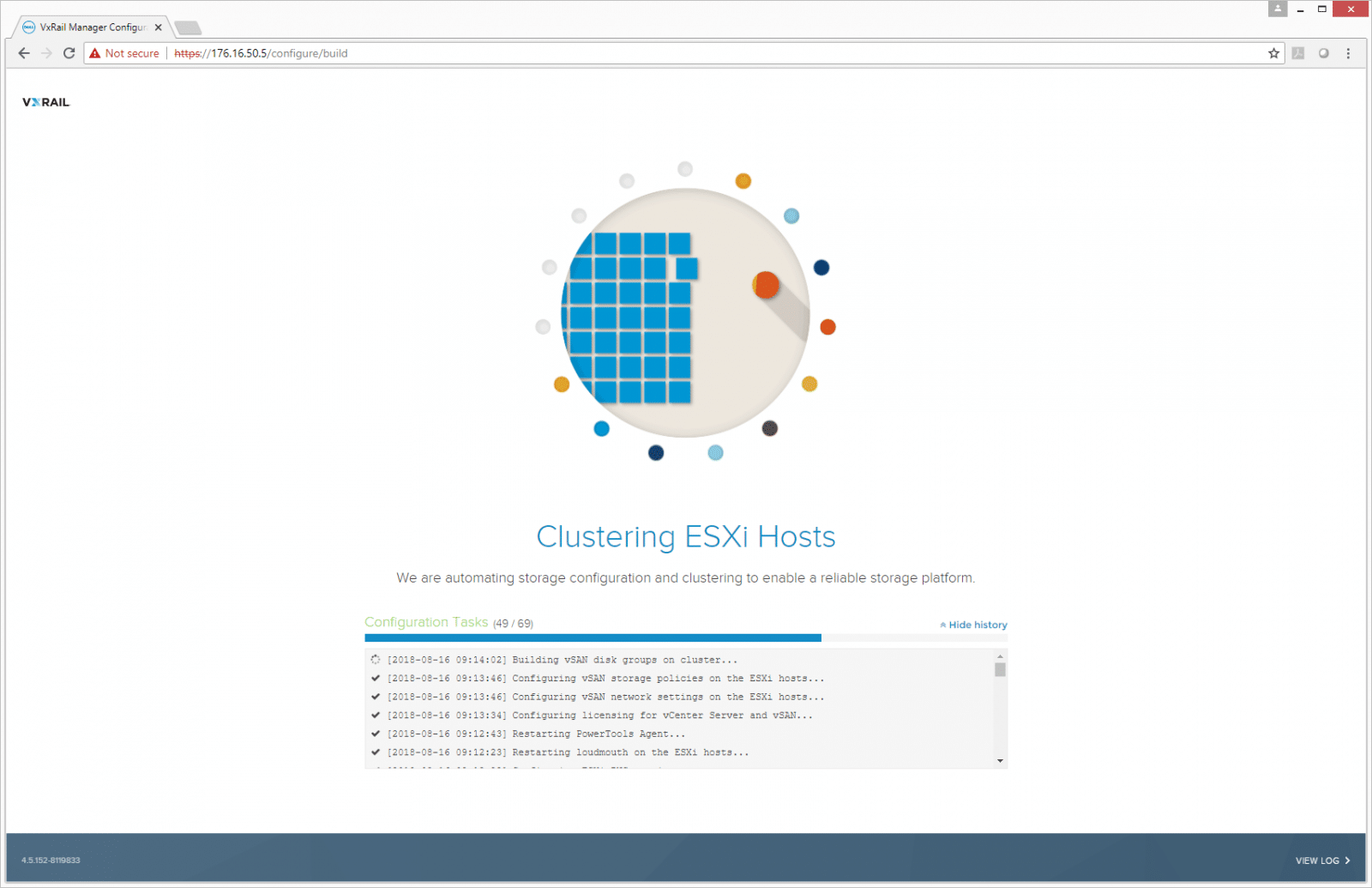

Once the infrastructure is correctly set up, the VxRail will begin clustering ESXi hosts and automating storage configurations.

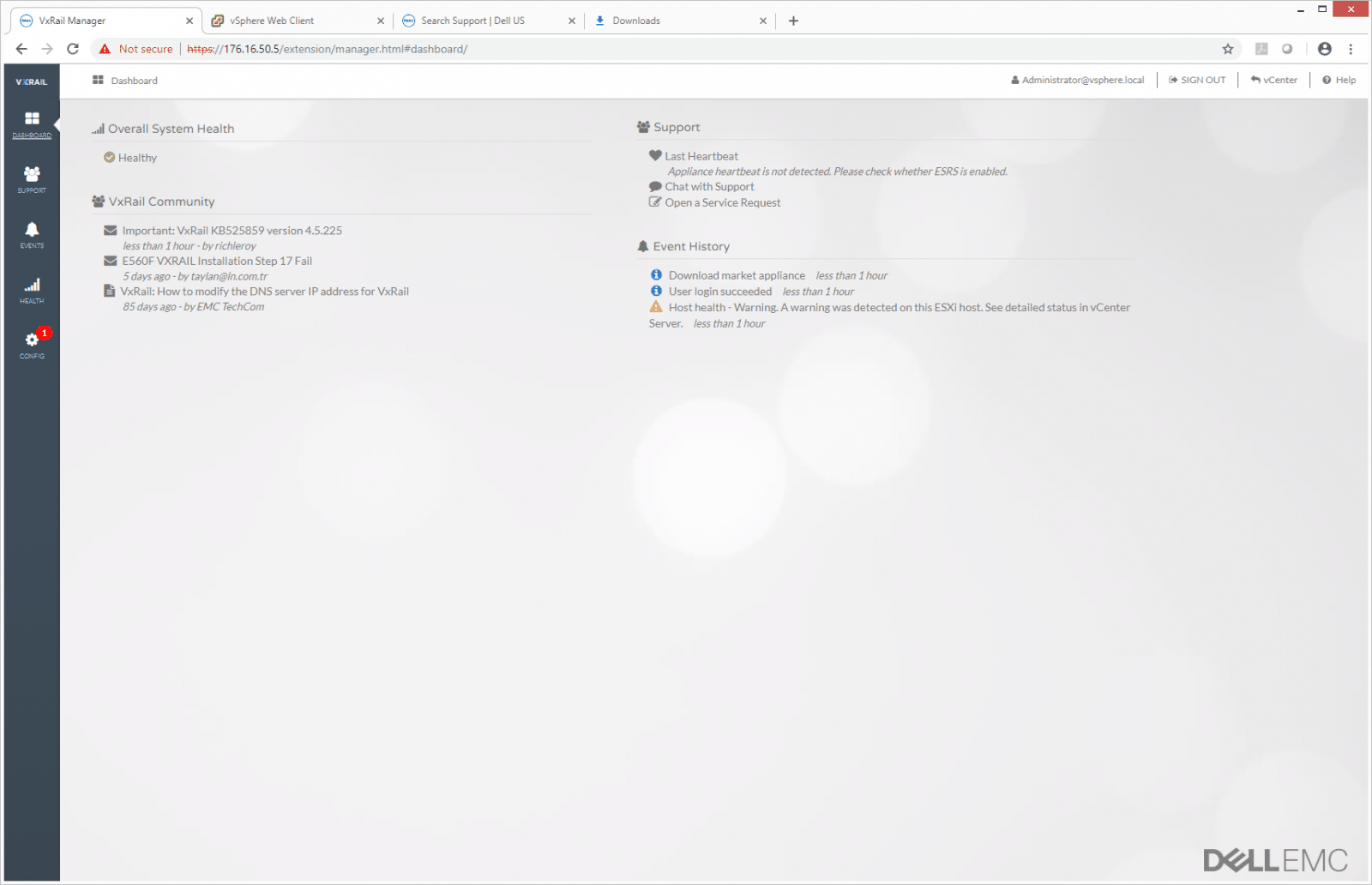

After the unit is deployed, admins are automatically brought to the main screen of VxRail Manager, a dashboard that shows information such as overall system health, support, VxRail community, and event history. Along the left side are several tabs: Dashboard, Support, Events, Health, and Config.

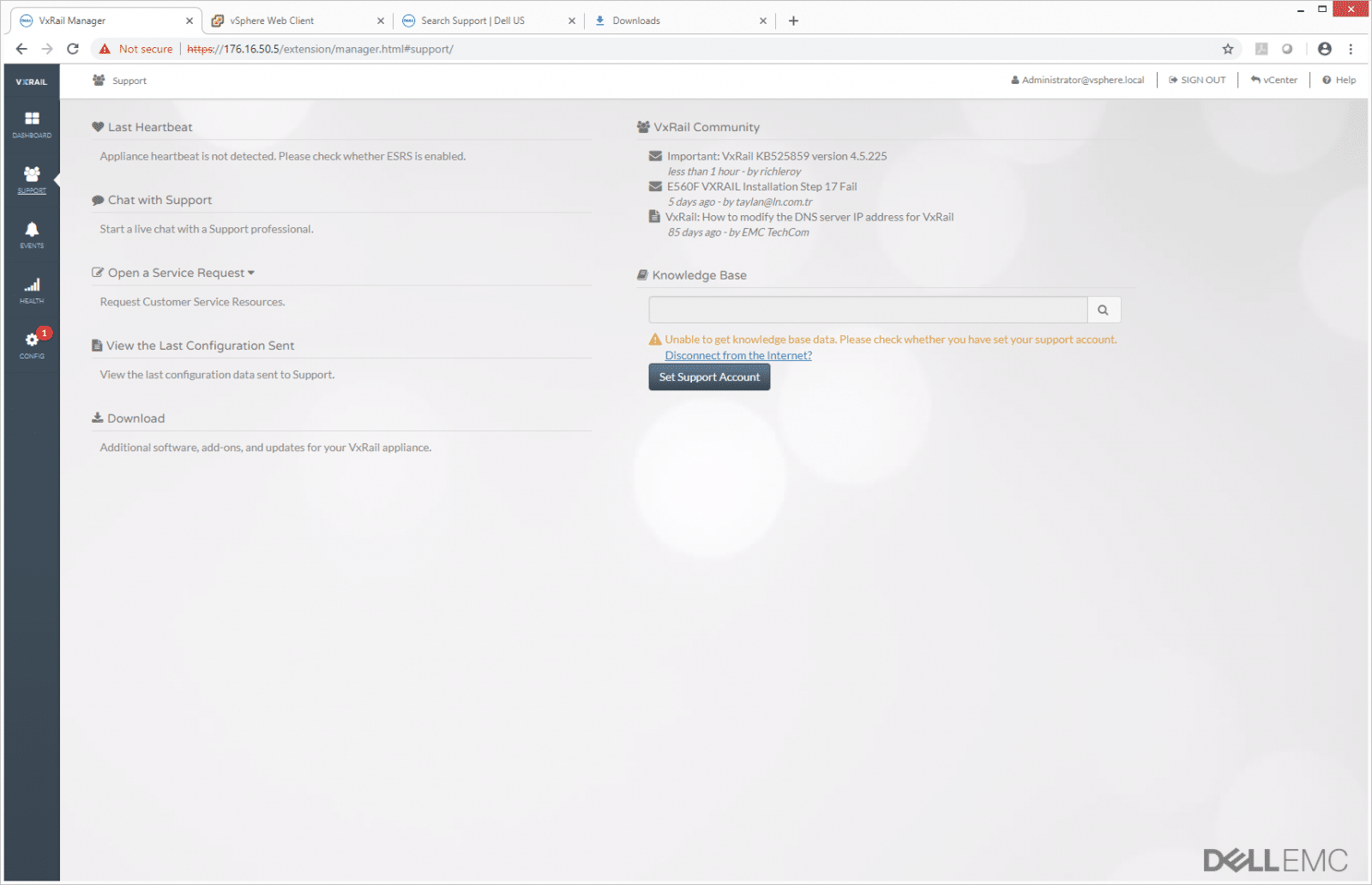

The Support tab is as it sounds. It checks the last “heartbeat” of the appliance to see if there is an issue. It allows for several support options including chatting, opening a service request, viewing the last configuration sent, downloading software such as upgrades, and letting users see what is happening in the VxRail community. There is a Knowledge Base search bar as well to find a particular issue.

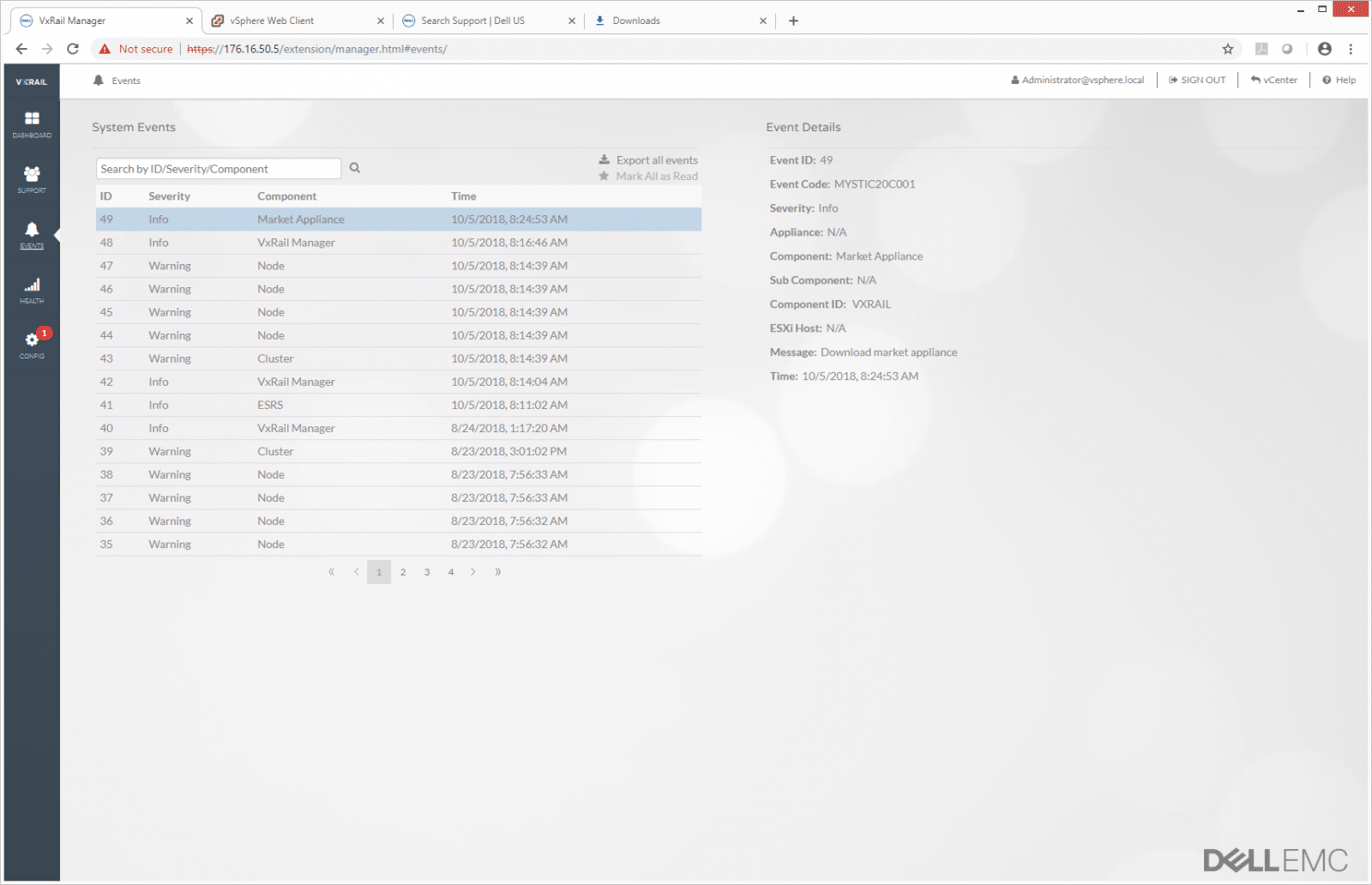

The Events tab is another fairly intuitive tab, as it lists events by ID, severity, component, or time. Clicking on an event allows users to drill down for details that may help resolve issues or prevent them in the future.

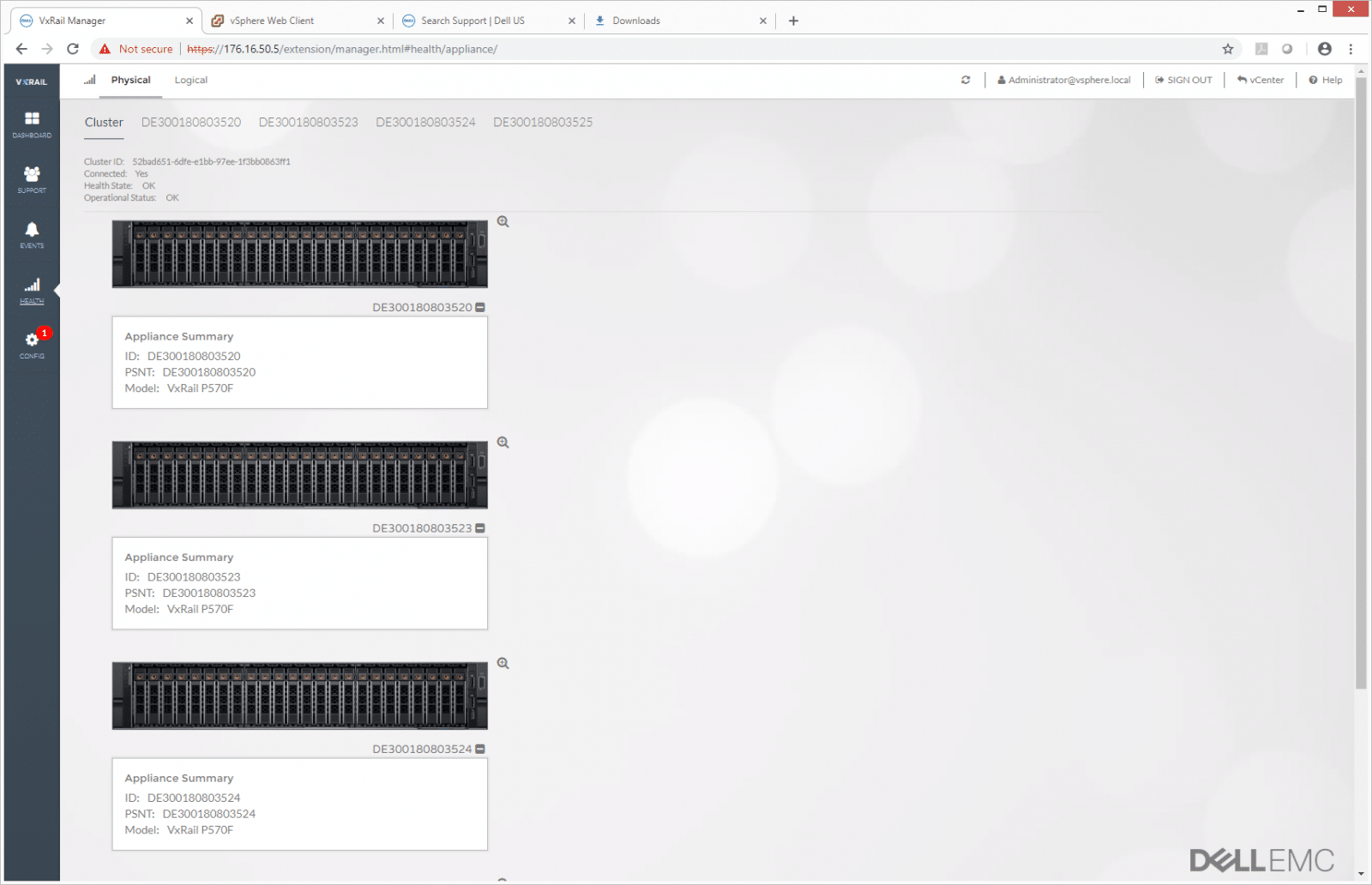

The Health tab lets admins see the health summary of a cluster as a whole or allows them to drill down into each appliance.

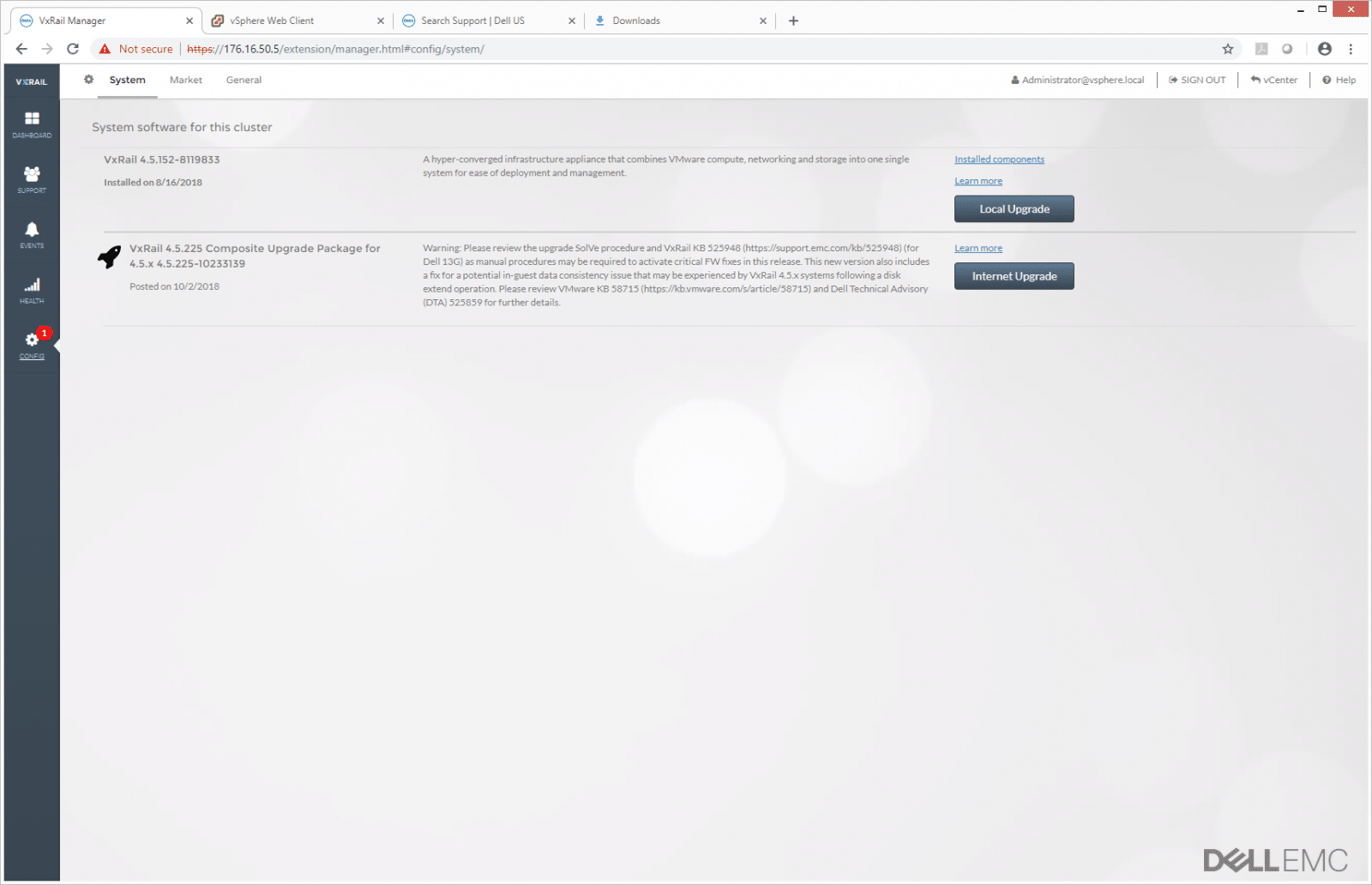

The Config tab lets users see the system software on their cluster and allows for two types of upgrades: local and Internet.

As the name suggests, Local Upgrade allows users to upgrade locally; in this case, from the PC that is being used to monitor VxRail Manager.

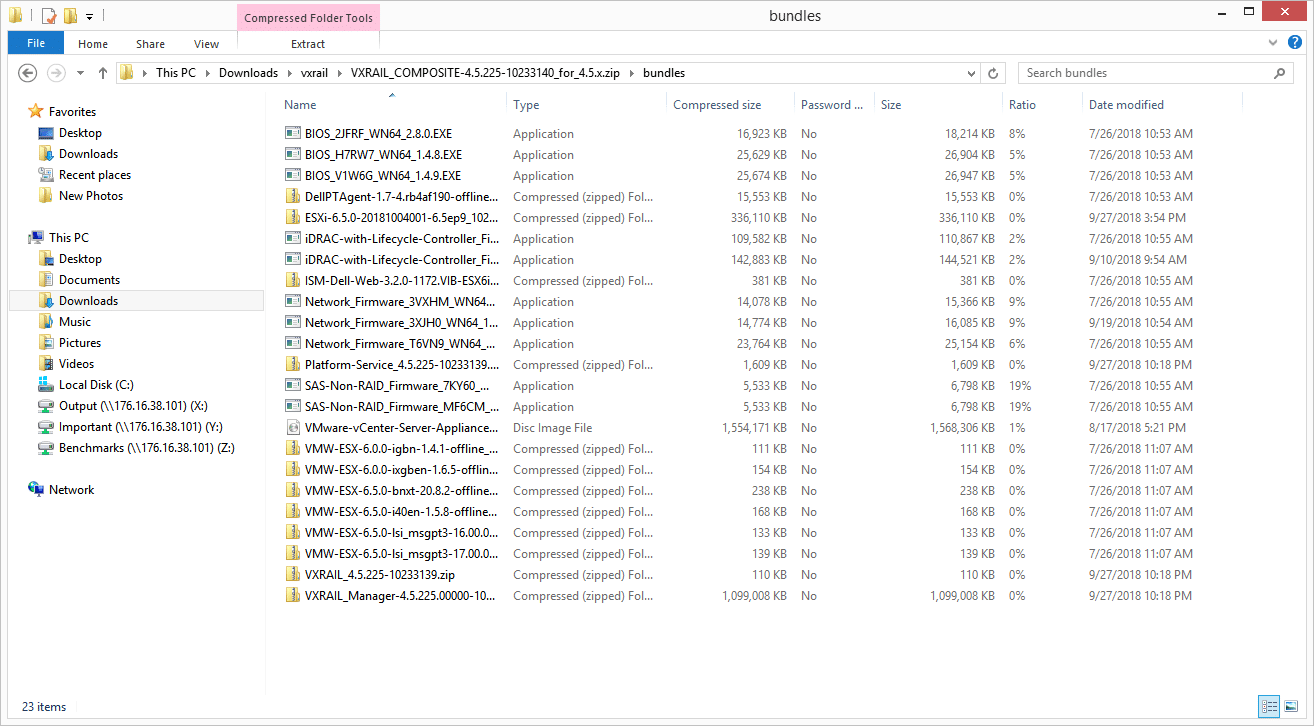

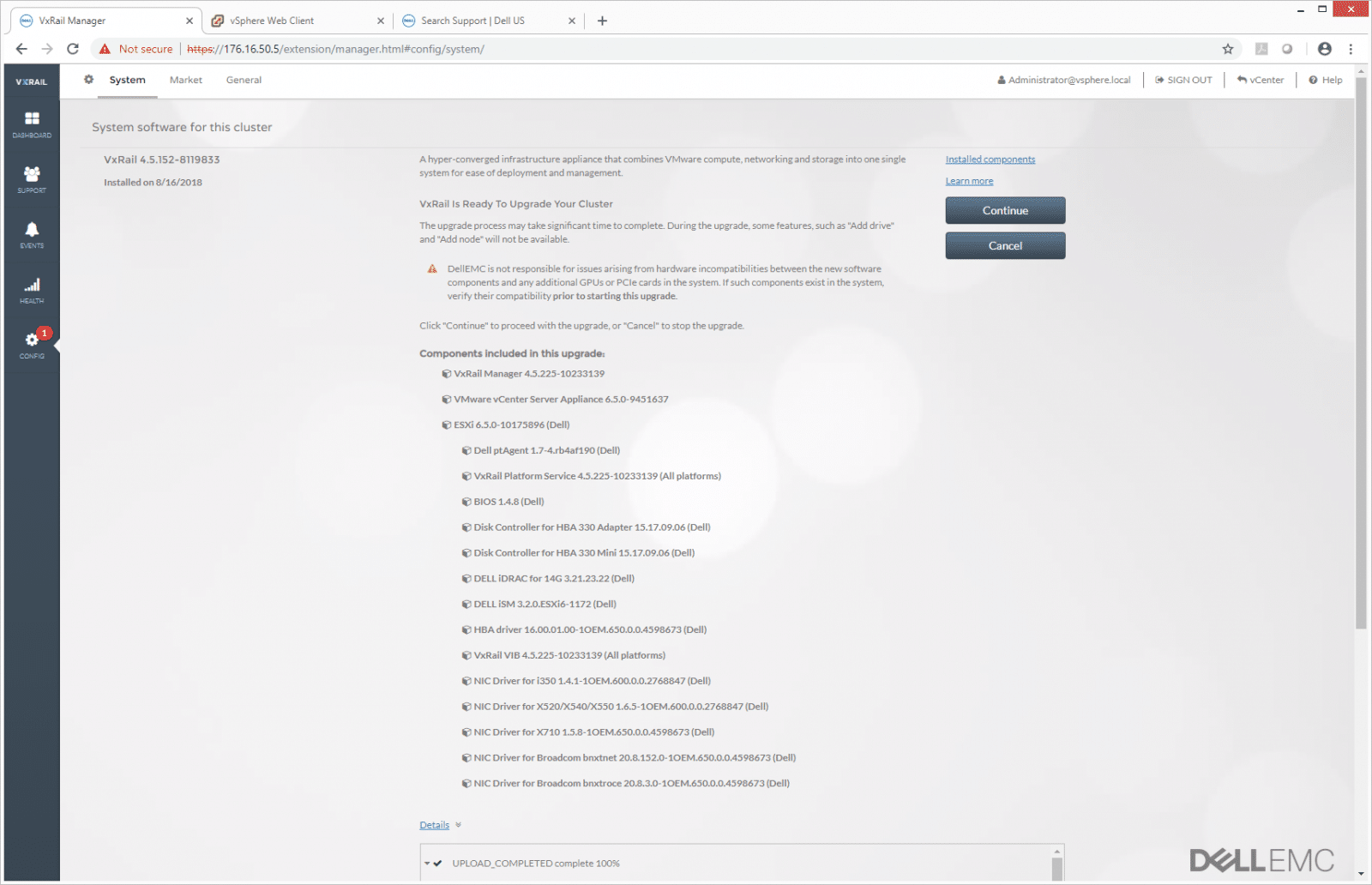

The VxRail upgrade package includes updates for nearly all components in the server, leveraging the benefits from vertical integration with the Dell ecosystem. While some vendors will focus on the software stack, VxRail is able to update everything inside the server down to the power supply firmware, if necessary. These are the same files provided through Dell’s LifeCycle Controller, which has access to all server components. In a world where NIC firmware might be vulnerable to an attack, how many IT administrators are upgrading it as patches come out regularly? VxRail handles this in an automated fashion, making it as easy as a few clicks.

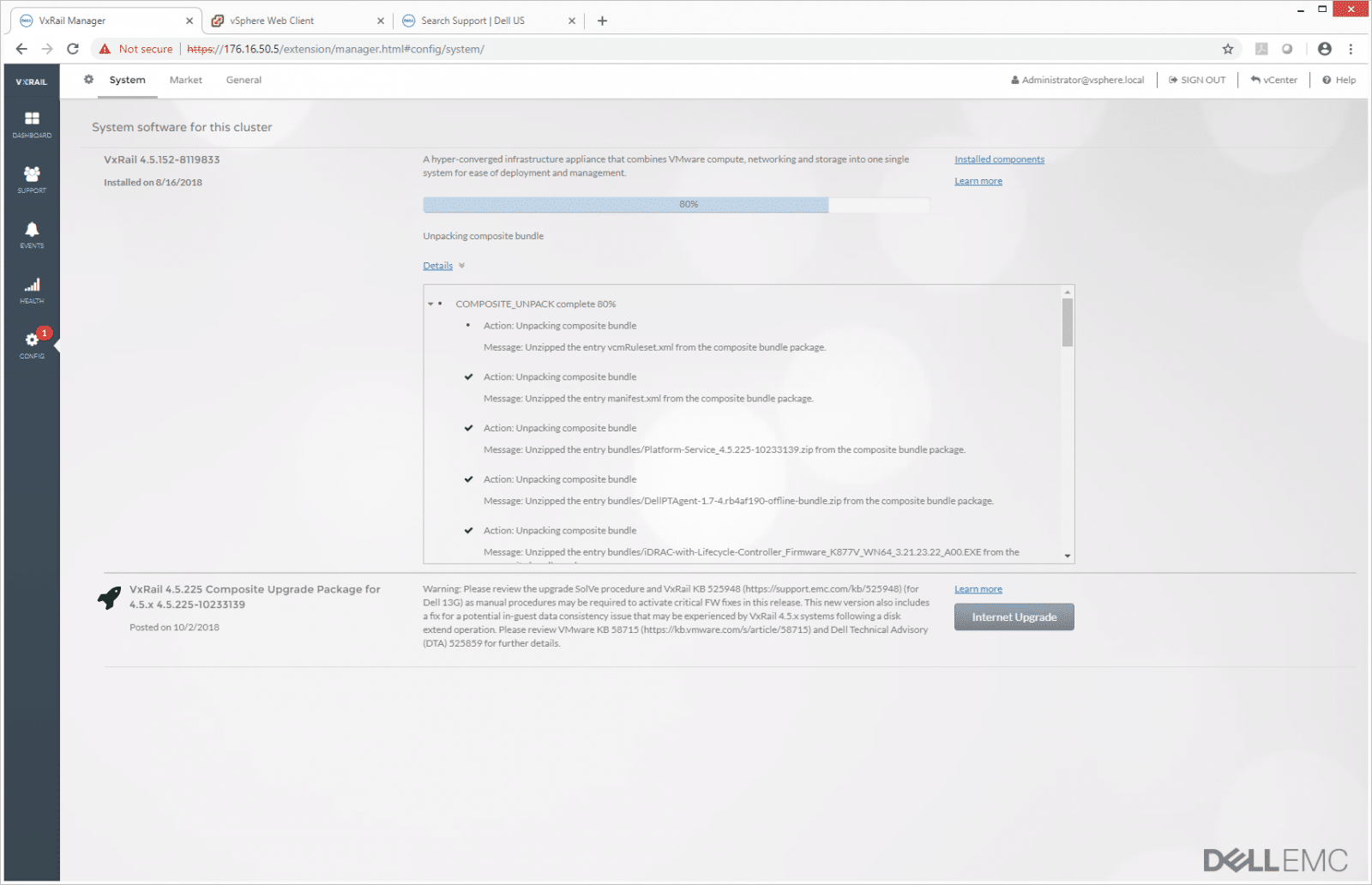

As the cluster goes through the update and upgrade process, users can view the breakdown of what is taking place. The same process repeats for each server in the cluster as needed.

When the upgrade is complete, users will have a list of everything upgraded within VxRail Manager.

While the cluster is being upgraded, you can see some of the activity up at the vCenter level. Most of this action is the individual hosts going into maintenance mode.

In total, the VxRail Manager is a great value add when it comes to hardening vSAN from a compatibility standpoint and ensuring management and maintenance are as easy as possible. The only negative is this hardening comes with a little bit of a cost, as VxRail is slower to adopt new versions of vSphere. This system runs 6.5, while 6.7 has been out for some time. Dell EMC is well aware of this, however, and continues to integrate with VMware where they can to accelerate the adoption of updates.

Performance

Application Workload Analysis

The first benchmarks consist of the MySQL OLTP performance via SysBench and Microsoft SQL Server OLTP performance with a simulated TPC-C workload.

Each SQL Server VM is configured with two vDisks, one 100GB for boot and one 500GB for the database and log files. From a system resource perspective, we configured each VM with 16 vCPUs, 64GB of DRAM and leveraged the LSI Logic SAS SCSI controller. These tests are designed to monitor how a latency-sensitive application performs on the cluster with a moderate, but not overwhelming, compute and storage load.

SQL Server Testing Configuration (per VM)

- Windows Server 2012 R2

- Storage Footprint: 600GB allocated, 500GB used

- SQL Server 2014

- Database Size: 1,500 scale

- Virtual Client Load: 15,000

- RAM Buffer: 48GB

- Test Length: 3 hours

- 2.5 hours preconditioning

- 30 minutes sample period

For our transactional SQL Server benchmark, the Dell EMC VxRail P570F was able to hit an aggregate score of 12,585 TPS with individual VMs running from 3,145.1 TPS to 3,148.5 TPS.

A more telling sign of SQL Server performance is latency. With SQL Server average latency, the P570F was able to hit an aggregate score of 24.4ms with individual VMs running from 21ms to 26ms.

Sysbench Performance

Each Sysbench VM is configured with three vDisks: one for boot (~92GB), one with the pre-built database (~447GB), and the third for the database under test (400GB). From a system resource perspective, we configured each VM with 16 vCPUs, 64GB of DRAM and leveraged the LSI Logic SAS SCSI controller.

Sysbench Testing Configuration (per VM)

- CentOS 6.3 64-bit

- Storage Footprint: 1TB, 800GB used

- Percona XtraDB 5.5.30-rel30.1

- Database Tables: 100

- Database Size: 10,000,000

- Database Threads: 32

- RAM Buffer: 24GB

- Test Length: 12 hours

- 6 hours preconditioning 32 threads

- 1 hour 32 threads

- 1 hour 16 threads

- 1 hour 8 threads

- 1 hour 4 threads

- 1 hour 2 threads

With the Sysbench OLTP, we look at the 8VM configuration. The VxRail had an aggregate score of 8,645.9 TPS with individual VMs ranging from 925.48 TPS to 1,243.1 TPS.

For Sysbench average latency, the VxRail had an aggregate score of 29.9ms with individual VMs ranging from 25.7ms to 34.6ms.

In our worst-case scenario (99th percentile) latency, the VxRail had an aggregate score of 55.1ms with individual VMs ranging from 47ms to 64.4ms.
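The relationship between the aggregate and per-VM Sysbench figures can be sanity-checked with a few lines of arithmetic. The sketch below assumes that the aggregate TPS is the sum across the eight VMs and that the aggregate latency is an average across them; the review does not publish every per-VM result, so only the reported minimum and maximum are used as bounds.

```python
# Sanity check on the published Sysbench figures, assuming the aggregate TPS
# is the sum across VMs and the aggregate latency is a per-VM average.
VM_COUNT = 8
AGGREGATE_TPS = 8645.9
VM_TPS_MIN, VM_TPS_MAX = 925.48, 1243.1
AVG_LATENCY_MS = 29.9
VM_LATENCY_MIN_MS, VM_LATENCY_MAX_MS = 25.7, 34.6

mean_tps_per_vm = AGGREGATE_TPS / VM_COUNT

# A sum of eight values must lie between 8x the minimum and 8x the maximum.
tps_consistent = VM_COUNT * VM_TPS_MIN <= AGGREGATE_TPS <= VM_COUNT * VM_TPS_MAX
# An average must lie between the smallest and largest individual value.
latency_consistent = VM_LATENCY_MIN_MS <= AVG_LATENCY_MS <= VM_LATENCY_MAX_MS

print(f"Mean TPS per VM: {mean_tps_per_vm:.1f}")
print(f"TPS figures consistent: {tps_consistent}")
print(f"Latency figures consistent: {latency_consistent}")
```

Under those assumptions, each VM averages roughly 1,081 TPS, which sits inside the reported per-VM range.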

VDBench Workload Analysis

When it comes to benchmarking storage arrays, application testing is best and synthetic testing comes in second place. While not a perfect representation of actual workloads, synthetic tests do help to baseline storage devices with a repeatability factor that makes it easy to do apples-to-apples comparisons between competing solutions. These workloads offer a range of testing profiles, from “four corners” tests and common database transfer size tests to trace captures from different VDI environments. All of these tests leverage the common vdBench workload generator, with a scripting engine to automate and capture results over a large compute testing cluster. This allows us to repeat the same workloads across a wide range of storage devices.

Profiles:

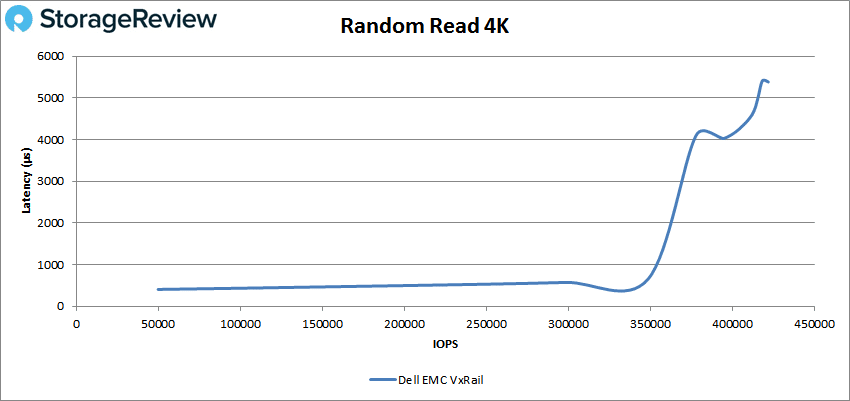

- 4K Random Read: 100% Read, 128 threads, 0-120% iorate

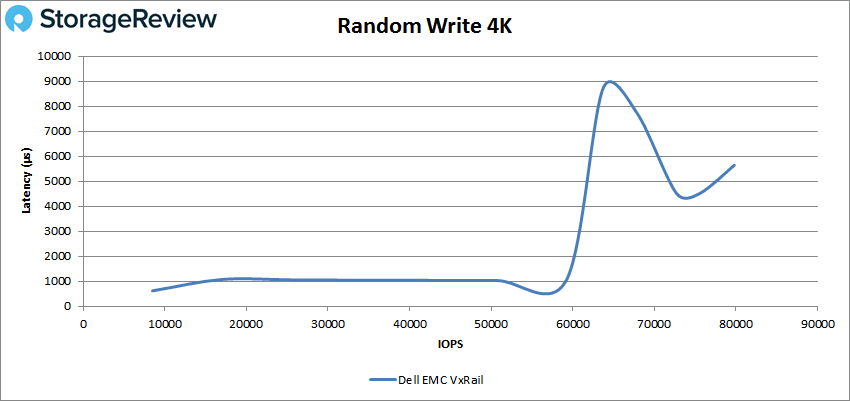

- 4K Random Write: 100% Write, 64 threads, 0-120% iorate

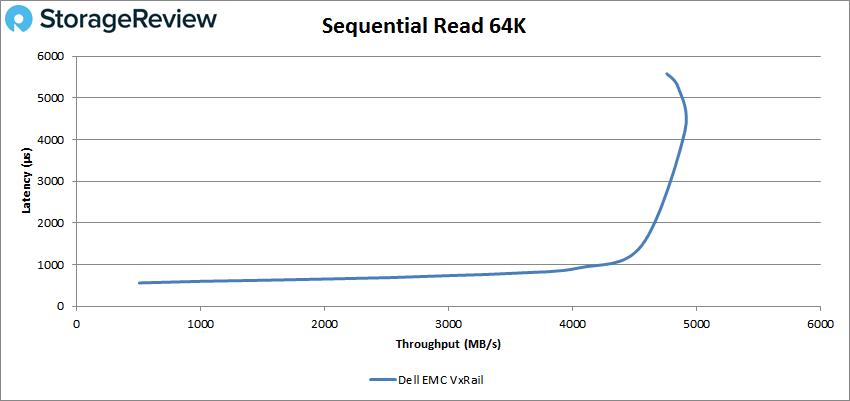

- 64K Sequential Read: 100% Read, 16 threads, 0-120% iorate

- 64K Sequential Write: 100% Write, 8 threads, 0-120% iorate

- Synthetic Database: SQL and Oracle

- VDI Full Clone and Linked Clone Traces

With 4K random read, the VxRail had sub-millisecond latency performance over 350K IOPS and went on to peak at 422,052 IOPS with a latency of 5.38ms.

For 4K random write, the VxRail broke 1ms early, around 17K IOPS, and rode the 1ms line until over 60K IOPS peaking at 79,801 IOPS with a latency of 5.64ms.

Next we look at sequential workloads with 64K. For read, the VxRail had sub-millisecond latency up to about 67K IOPS or 4.1GB/s and peaked at about 80K IOPS or 4.9GB/s with a latency of roughly 4.5ms.

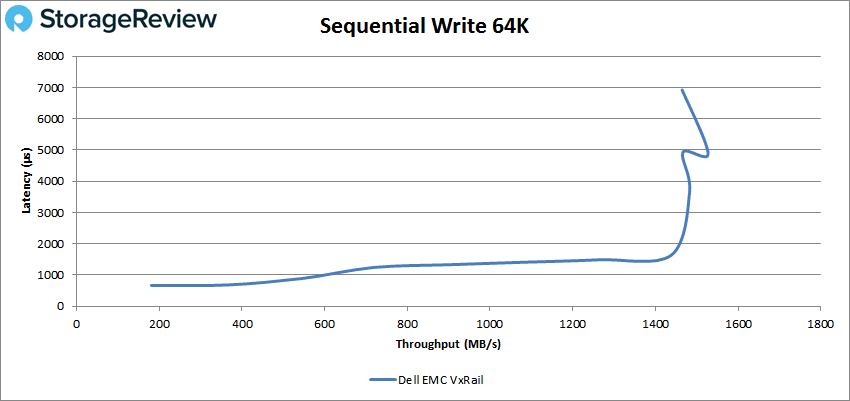

For 64K sequential write, the VxRail ran under 1ms until about 10K IOPS or 600MB/s and went on to peak at about 25K IOPS or 1.53GB/s with a latency of 4.9ms before dropping off some.

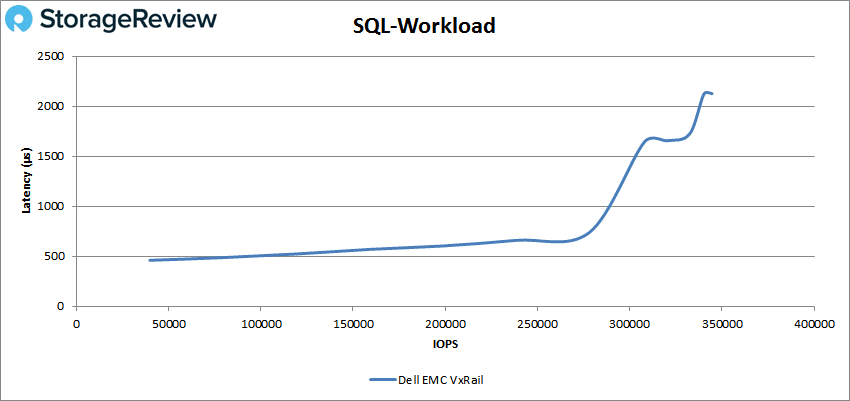
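The IOPS and bandwidth figures quoted for the 64K sequential tests are two views of the same measurement. A minimal sketch of the conversion follows, assuming that 64K means 64KiB and that the GB/s values are expressed in binary units (GiB/s), which is what the published numbers appear to match. The function name is our own, and because the IOPS inputs are the rounded "about" values from the text, the outputs are approximate.

```python
# Convert IOPS at a fixed transfer size into throughput.
# Assumption: 64K means 64 KiB and GB/s is reported in binary units (GiB/s).
KIB = 1024
GIB = 1024 ** 3


def throughput_gib_per_s(iops: float, block_kib: int = 64) -> float:
    """Return throughput in GiB/s for a given IOPS rate and block size."""
    return iops * block_kib * KIB / GIB


for label, iops in [
    ("64K read, sub-ms limit", 67_000),
    ("64K read, peak", 80_000),
    ("64K write, peak", 25_000),
]:
    print(f"{label}: {throughput_gib_per_s(iops):.2f} GiB/s")
```

With these inputs the conversion gives roughly 4.09, 4.88 and 1.53 GiB/s, in line with the 4.1GB/s, 4.9GB/s and 1.53GB/s quoted above.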

Next up are our SQL workloads, with the Dell EMC VxRail P570F having sub-millisecond latency performance up until about 285K IOPS and going on to peak at 344,619 IOPS with a latency of 2.1ms.

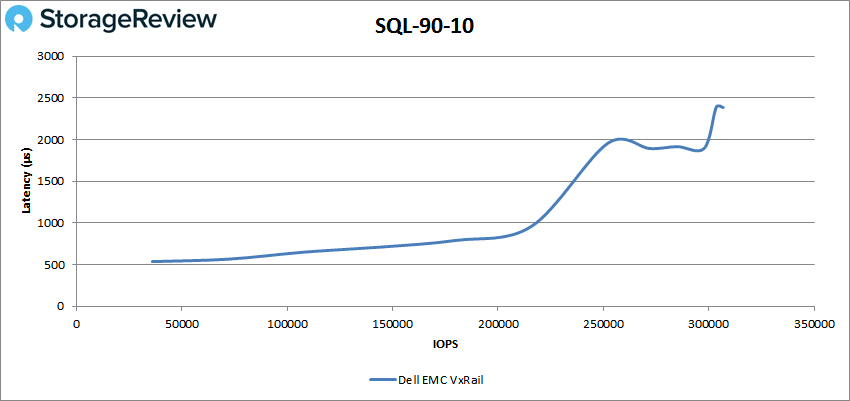

For SQL 90-10, the VxRail ran up to just over 215K IOPS at less than 1ms latency before going on to peak at 306,851 IOPS with a latency of 2.4ms.

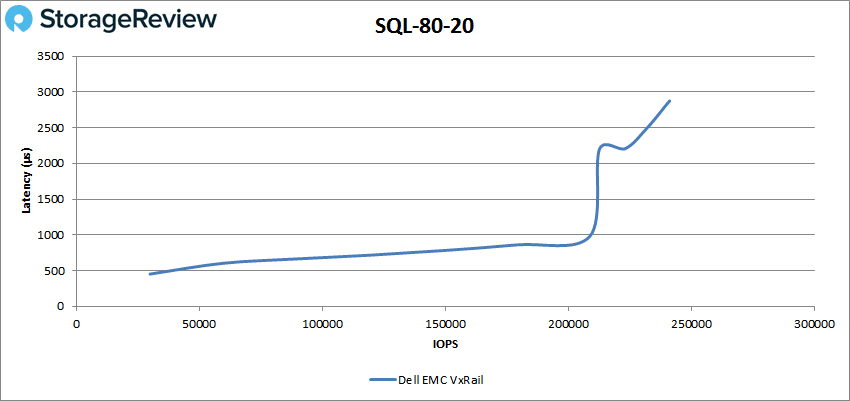

SQL 80-20 saw the VxRail with sub-millisecond latency until about 209K IOPS and a peak performance of 240,468 IOPS with a latency of 2.9ms.

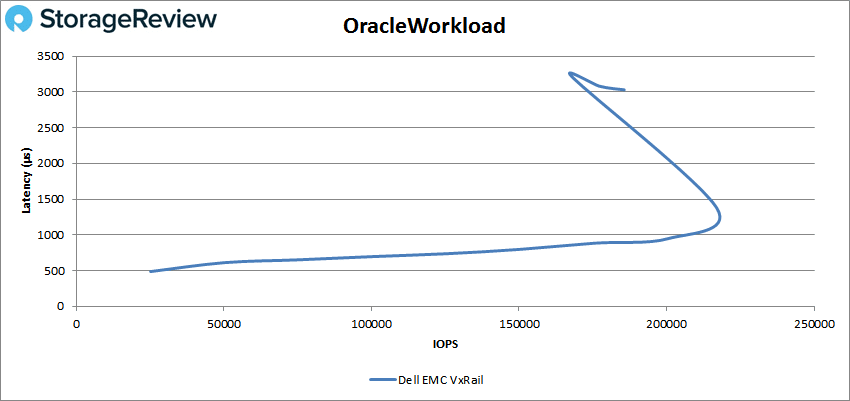

Following our SQL workloads are our Oracle workloads. Here the VxRail had sub-millisecond latency until about 200K IOPS, and quickly peaked at roughly 218K IOPS with 1.1ms latency before dropping off significantly in performance.

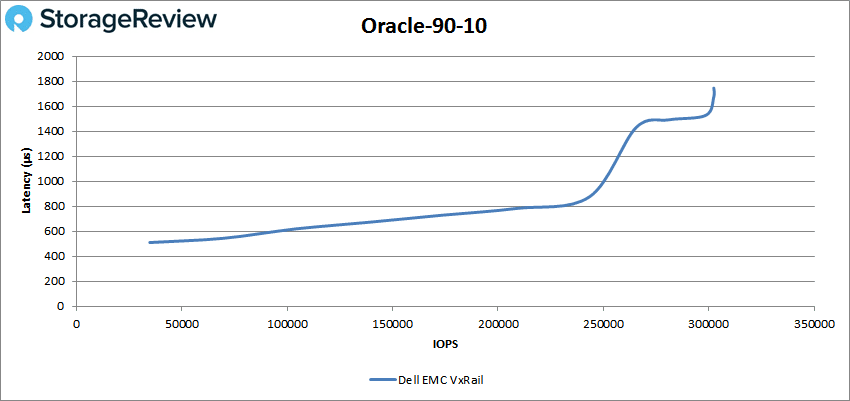

Oracle 90-10 saw the VxRail with performance under 1ms until about 250K IOPS and a peak of 302,381 IOPS with a latency of 1.7ms.

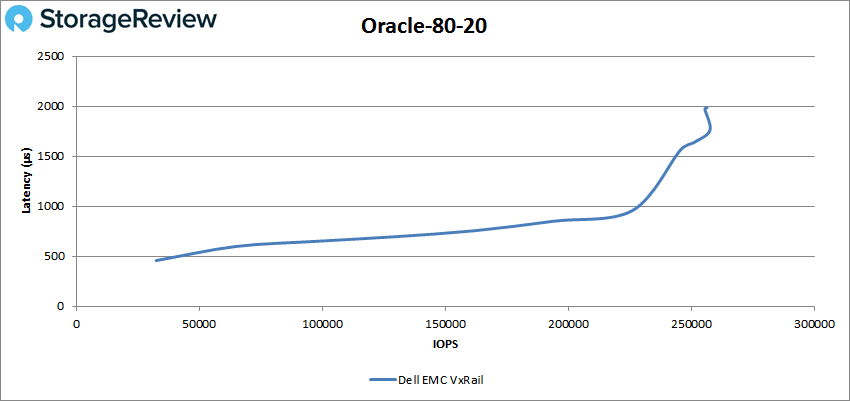

With Oracle 80-20, the VxRail had sub-millisecond performance until over 226K IOPS going on to peak at about 258K IOPS with a latency of 1.76ms.

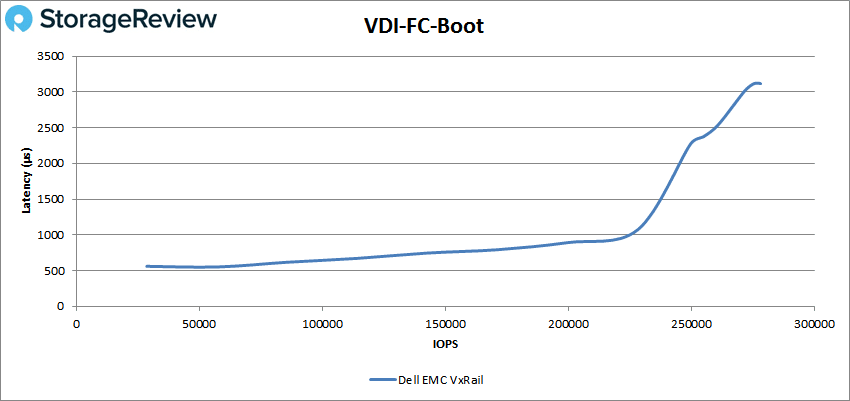

Next, we switched over to our VDI clone test, Full and Linked. For VDI Full Clone Boot, the VxRail ran with latency under 1ms until it reached about 228K IOPS and peaked at 277,332 IOPS with a latency of 3.1ms.

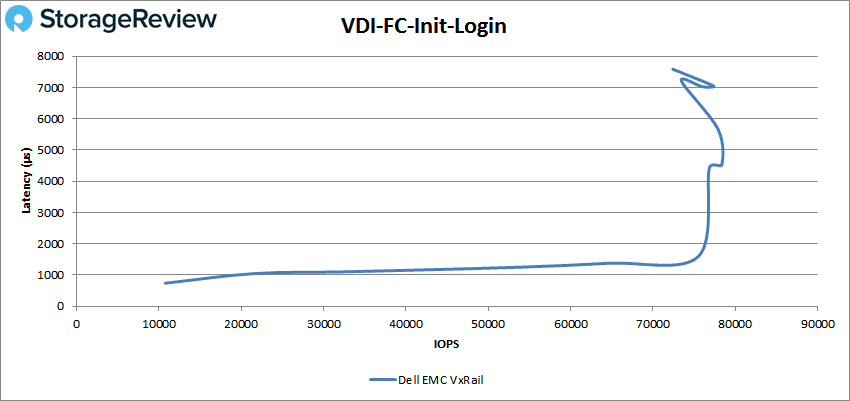

VDI FC Initial Login saw the VxRail shoot up over 1ms early on, going on to peak at about 78K IOPS with 4.5ms latency before dropping off some.

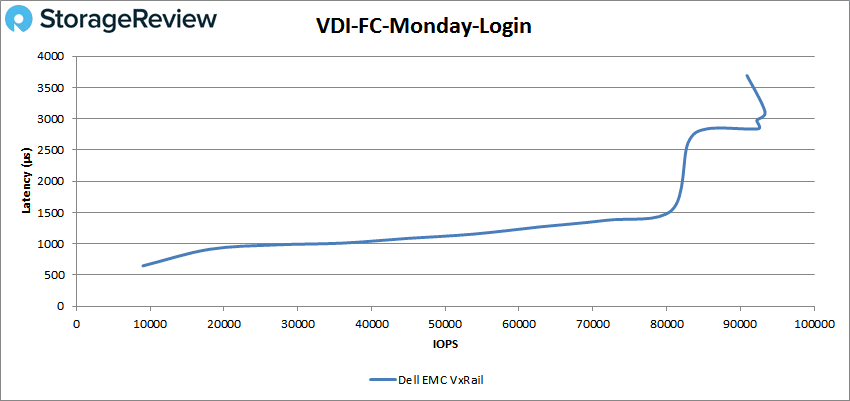

The VxRail had sub-millisecond latency until about 20K IOPS in the VDI FC Monday Login and went on to peak at about 93K IOPS with a latency of 3.1ms before a slight drop off.

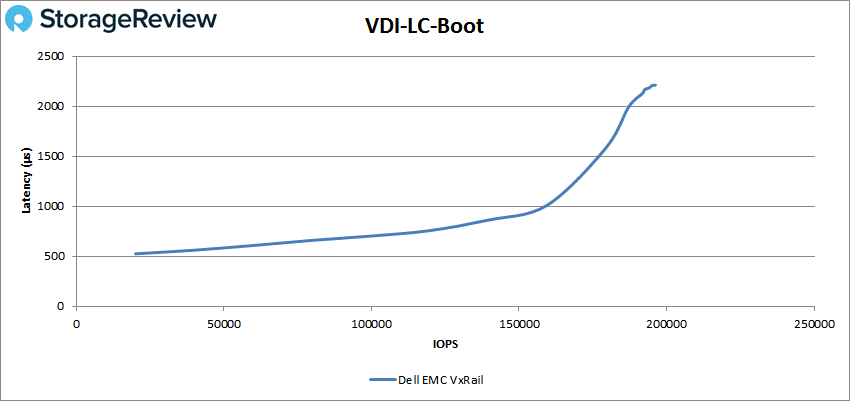

Switching over to VDI Linked Clone (LC) Boot we see the VxRail made it until 159K IOPS before breaking 1ms and going on to peak at 195,062 IOPS with a latency of 2.2ms.

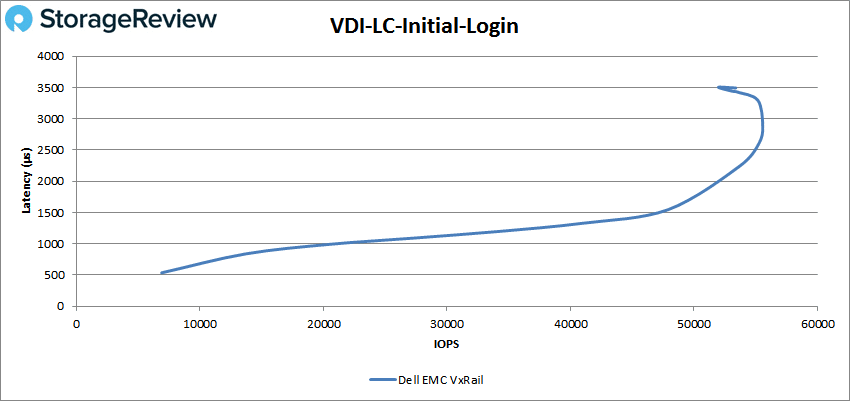

For VDI LC Initial Login, the VxRail made it to roughly 20K IOPS with sub-millisecond latency and peaked at about 56K IOPS with 3ms before dropping off.

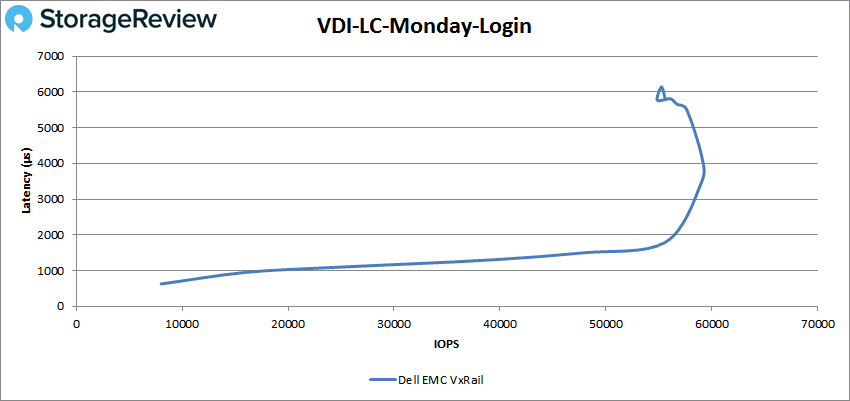

Finally, VDI LC Monday Login saw the VxRail ride the 1ms line a bit and peak around 60K IOPS with 3.9ms latency before a drop off in performance and a jump in latency.

Conclusion

The Dell EMC VxRail P570F is an all-flash HCI appliance that is geared toward performance. These new versions of VxRail bring easy-to-deploy HCI that is now built on a Dell EMC PowerEdge backbone. PowerEdge servers offer a slew of benefits for customers who are looking to use HCI, and the VxRail platform makes it easier than ever for customers to stay updated at the OS or even host level. As with most Dell EMC offerings, there is a massive variety of configurations, giving it the flexibility to hit just about any need. Being aimed at performance, the Dell EMC VxRail P570F can be outfitted with up to 3TB of memory per node, supports NVMe storage, and offers networking up to 25GbE.

During our Application Workload analysis, the P570F was able to hit an aggregate score of 12,585 TPS in SQL Server with an aggregate average latency of only 24.4ms. For Sysbench, the VxRail had aggregate scores of 8,645.9 TPS, average latency of 29.9ms, and worst-case scenario latency of 55.1ms.

For our VDBench performance, the Dell EMC VxRail P570F leveraged SAS storage, so while it started off in every benchmark with sub-millisecond latency, response times did increase above 1ms under intense workloads. This isn’t unexpected given the SAS3 flash media, and the appliance still recorded some fairly strong numbers. Highlights include 422K IOPS for random 4K read, 4.9GB/s for 64K sequential read, 1.53GB/s for 64K sequential write, 345K IOPS for SQL, 307K IOPS for SQL 90-10, 241K IOPS for SQL 80-20, 302K IOPS for Oracle 90-10, 258K IOPS for Oracle 80-20, VDI FC Boot of 277K IOPS, and VDI LC Boot of 195K IOPS. From a latency standpoint, the HCI appliance saw peak latencies ranging from 1.1ms to 5.64ms. While not sub-millisecond, it is still a strong showing for HCI.

Overall, the performance we saw from this appliance was very good, considering the target audience of this configuration. VxRail can certainly go faster, but the point here is to highlight a mainstream flash configuration. Again, we were impressed with the benefits of going the appliance route when it comes to vSAN, and VxRail Manager does the heavy lifting. The HCL is thoroughly vetted by Dell EMC at levels that go beyond what happens with vSAN Ready Nodes. Furthermore, the system itself updates everything down to device firmware, something that VxRail buyers see great value in. The worry of having to manage the nodes themselves goes away, making VxRail easy to own and manage.
