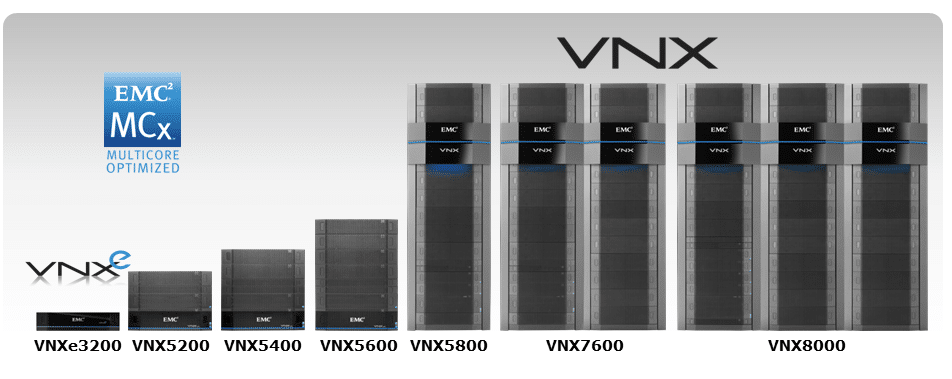

EMC's VNX5200 storage controller is the entry point for the company's VNX2 offerings and features block storage with optional file and unified storage functionality. The VNX5200 can manage up to 125x 2.5-inch or 3.5-inch SAS and NL-SAS hard drives and SSDs and is built with EMC's MCx multicore architecture. Kevin O'Brien, Director of the StorageReview Enterprise Test Lab, recently traveled to EMC's data center in Hopkinton, MA for some hands-on time and benchmarks of the VNX5200.

In September of last year, EMC updated their popular VNX line of unified storage arrays with significant hardware enhancements. The result was the VNX2 lineup, with enhancements like a move from PCIe 2.0 to PCIe 3.0 and the new MCx architecture (encompassing multicore RAID, multicore cache, and multicore FAST Cache) to take better advantage of multiple CPU cores in the storage processors.

Many of these enhancements focus on enabling the VNX platform to make better use of flash at a time when storage administrators continue to move towards hybrid array configurations. According to EMC, nearly 70% of VNX2 systems are now shipping in hybrid flash configurations, a shift that has also placed more importance on the role of EMC's FAST suite for caching and tiering.

While the smaller VNXe3200 that we previously reviewed has also been updated with VNX2 technologies, the VNX5200 is designed for midmarket customers who need a primary storage system at their headquarters and for remote/branch office needs that are more robust than what the VNXe3200 can handle. The VNX5200 can be configured for block, file, or unified storage and utilizes a 3U, 25x 2.5-inch EMC Disk Processor Enclosure (DPE) chassis. The VNX5200’s storage processor units incorporate a 1.2GHz, four-core Xeon E5 processor with 16GB of RAM and can manage a maximum of 125 drives with FC, iSCSI, FCoE and NAS connectivity.

The VNX2 family also currently includes five storage systems designed for larger scales than the VNXe3200 and the VNX5200.

- VNX5400: Up to 1PB of raw capacity across 250 drives, up to 1TB of SSD FAST Cache, and up to 8 UltraFlex I/O modules per array

- VNX5600: Up to 2PB of raw capacity across 500 drives, up to 2TB of SSD FAST Cache, and up to 10 UltraFlex I/O modules per array

- VNX5800: Up to 3PB of raw capacity across 750 drives, up to 3TB of SSD FAST Cache, and up to 10 UltraFlex I/O modules per array

- VNX7600: Up to 4PB of raw capacity across 1,000 drives, up to 4.2TB of SSD FAST Cache, and up to 10 UltraFlex I/O modules per array

- VNX8000: Up to 6PB of raw capacity across 1,500 drives, up to 4.2TB of SSD FAST Cache, and up to 22 UltraFlex I/O modules per array

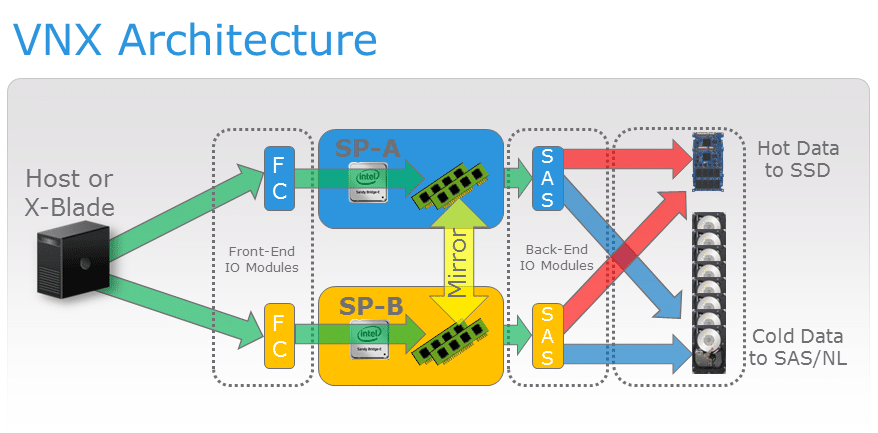

VNX5200 block storage is powered by dual VNX Storage Processors with a 6Gb SAS drive topology. A VNX2 deployment can use one or more Data Movers and a controller unit to offer NAS services. Like other members of the VNX series, the VNX5200 uses UltraFlex I/O modules for both its Data Movers and the block storage processors. The VNX5200 supports up to three Data Movers and a maximum of three UltraFlex modules per Data Mover.

MCx Multi-Core Functionality

VNX predates widespread multicore processor technology, and prior generations of the platform were not built on a foundation that could leverage dynamic CPU scaling. FLARE, the VNX1 and CLARiiON CX operating environment, did allow services including RAID to be run on a specific CPU core, but FLARE’s single-threaded paradigm meant that many core functions were bound to the first CPU core. For example, all incoming I/O processes were handled by Core 0 before being delegated to other cores, leading to bottleneck scenarios.

MCx implements what EMC characterizes as horizontal multicore system scaling, which allows all services to be spread across all cores. Under this new architecture available on both VNX2 and the VNXe3200, incoming I/O processes are less likely to become bottlenecked because, for example, front-end Fibre Channel ports can be evenly distributed among several processor cores. MCx also implements I/O core affinity through its concept of preferred cores. Every port, front-end and back-end, has both a preferred core and an alternate core assignment. The system services host requests with the same front-end core where the requests originated in order to avoid swapping cache and context between cores.
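The preferred-core idea can be pictured with a toy model. The sketch below is only an illustration of spreading ports evenly across cores and falling back to an alternate core. The round-robin assignment, the `fc0`..`fc7` port names, and the "busy" fallback rule are all assumptions made for the example, not EMC's actual scheduling logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PortAffinity:
    port: str
    preferred_core: int
    alternate_core: int


def assign_cores(ports, core_count):
    """Spread ports round-robin over cores (assumed policy, not EMC's)."""
    if core_count < 2:
        raise ValueError("need at least two cores for a distinct alternate")
    return [
        PortAffinity(port, i % core_count, (i + 1) % core_count)
        for i, port in enumerate(ports)
    ]


def pick_core(affinity, busy_cores):
    """Stay on the preferred core unless it is marked busy (assumed rule)."""
    if affinity.preferred_core not in busy_cores:
        return affinity.preferred_core
    return affinity.alternate_core


ports = [f"fc{i}" for i in range(8)]      # hypothetical front-end port names
table = assign_cores(ports, core_count=4)  # four cores per storage processor
for entry in table:
    print(entry)
```

With eight ports and four cores, each core ends up as the preferred core for two ports, which is the even distribution the architecture is aiming for.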

A major advantage of the new MCx architecture is support for symmetric active/active LUN, which enables hosts to access LUNs simultaneously via both Storage Processors in the array. Unlike with FLARE’s asymmetric implementation, symmetric active/active mode allows both SPs to write directly to the LUN without the need to send updates to the primary Storage Processor.

At present, VNX2 systems support symmetric active/active access for classic RAID group LUNs, but not for private pool LUNs, which can instead make use of asymmetric active/active mode. Classic LUNs also cannot currently make use of symmetric active/active mode if they use Data Services other than the VNX RecoverPoint splitter.

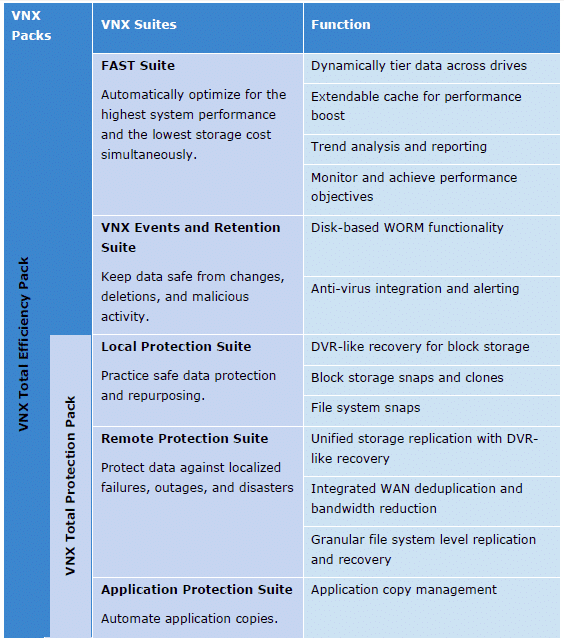

One of the VNX5200’s compelling synergies between the MCx architecture and flash media storage is the system's support for the EMC FAST Suite, which allows administrators to take advantage of flash storage media to increase array performance across heterogeneous drives. The FAST Suite combines two approaches for using flash to accelerate storage performance – caching and tiering – into an integrated solution. VNX2 updates to the FAST Suite include four times better tiering granularity and support for new eMLC flash drives.

VNX FAST Cache allows up to 4.2TB of SSD storage to be utilized in order to serve highly active data and dynamically adjust to workload spikes, although the upper cache size limit of the VNX5200 is 600GB. As data ages and becomes less active over time, FAST VP tiers the data from high-performance to high-capacity drives in 256MB increments based on administrator-defined policies.
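To make the 256MB granularity concrete, here is a minimal sketch of activity-based tiering: slices are ranked by recent I/O and the hottest ones fill the fastest tier first. The tier names, capacities, slice IDs, and activity counts are invented for illustration, and the sketch ignores the administrator-defined policies that FAST VP applies. It is not a description of EMC's relocation algorithm.

```python
SLICE_MB = 256  # FAST VP relocation granularity cited above


def place_slices(activity, tiers):
    """Map slice IDs to tiers, hottest slices first.

    activity: dict of slice_id -> recent I/O count (hypothetical metric)
    tiers:    list of (tier_name, capacity_mb), ordered fastest to slowest
    """
    ranked = sorted(activity, key=activity.get, reverse=True)
    placement, cursor = {}, 0
    for tier_name, capacity_mb in tiers:
        room = capacity_mb // SLICE_MB  # whole slices that fit in this tier
        for slice_id in ranked[cursor:cursor + room]:
            placement[slice_id] = tier_name
        cursor += room
    return placement


# Hypothetical activity counts for six 256MB slices.
activity = {"s0": 900, "s1": 15, "s2": 400, "s3": 2, "s4": 70, "s5": 310}
tiers = [("flash", 512), ("sas", 512), ("nl_sas", 1024)]
print(place_slices(activity, tiers))
```

In this toy run the two busiest slices land on flash, the next two on SAS, and the two coldest on NL-SAS.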

EMC VNX5200 Specifications

- Array Enclosure: 3U Disk Processor Enclosure

- Drive Enclosures:

  - 2.5-inch SAS/Flash (2U), 25 drives

  - 3.5-inch SAS/Flash (3U), 15 drives

- Max SAN Hosts: 1,024

- Min/Max Drives: 4/125

- Max FAST Cache: 600GB

- CPU/Memory per Array: 2x Intel Xeon E5-2600 4-Core 1.2GHz/32GB

- RAID: 0/1/10/3/5/6

- Max Raw Capacity: 500TB

- Protocols: FC, FCoE, NFS, CIFS, iSCSI

- Storage Types: Unified, SAN, NAS, Object

- Drive Types: Flash SSD, SAS, NL-SAS

- Capacity Optimization: Thin Provisioning, Block Deduplication, Block Compression, File-Level Deduplication and Compression

- Performance: MCx, FAST VP, FAST Cache

- Management: EMC Unisphere

- Virtualization Support: VMware vSphere, VMware Horizon View, Microsoft Hyper-V, Citrix XenDesktop

- Max Block UltraFlex I/O Modules per Array: 6

- Max Total Ports per Array: 28

- 2/4/8Gb/s FC Max Ports per Array: 24

- 1GBASE-T iSCSI Max Total Ports per Array: 16

- Max FCoE Ports Per Array: 12

- 10GbE iSCSI Max Total Ports per Array: 12

- Control Stations: 1 or 2

- Max Supported LUNs: 1,000 (Pooled)

- Max LUN Size: 256TB (Virtual Pool LUN)

- Max File System Size: 16TB

- Number of File Data Movers: 1, 2 or 3

- CPU/Memory per Data Mover: Intel Xeon 5600/6GB

- UltraFlex I/O Expansion Modules for Block:

  - 4-Port 2/4/8Gb/s Fibre Channel

  - 4-Port 1Gb/s (copper) iSCSI

  - 2-Port 10Gb/s (optical) iSCSI

  - 2-Port 10GBASE-T (copper) iSCSI

  - 2-Port 10GbE (optical or twinax) FCoE

- UltraFlex I/O Expansion Modules for File:

  - 4-Port 1GBASE-T

  - 4-Port 1GBASE-T and 1GbE (optical)

  - 2-Port 10GbE (optical)

  - 2-Port 10GBASE-T (copper)

Design and Build

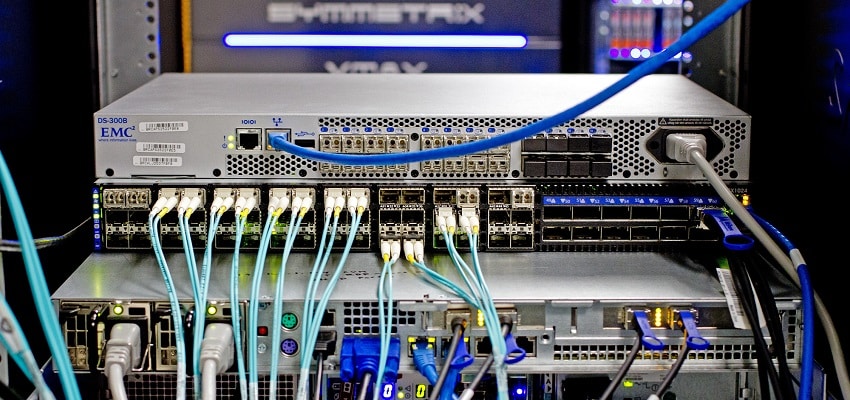

In VNX terminology, a storage deployment consists of several integrated rack components. The controller component is either a Storage-Processor Enclosure (SPE) which does not incorporate drive bays, or as with this VNX5200, a Disk-Processor Enclosure (DPE) which does provide internal storage. VNX Disk Array Enclosures (DAEs) can be used to incorporate additional storage capacity.

An SPE incorporates the VNX storage processors in addition to dual SAS modules, dual power supplies, and fan packs. Disk-Processor Enclosures (DPEs) include an enclosure, disk modules, storage processors, dual power supplies, and four fan packs. Additional storage can be added with DAEs, which are available with 15x 3.5-inch, 25x 2.5-inch, or 60x 3.5-inch drive bays and include dual SAS link control cards and dual power supplies.

Whether they are part of a storage-processor enclosure or a disk-processor enclosure, VNX storage processors provide data access to external hosts and bridge the array’s block storage with optional VNX2 file storage functionality. The VNX5200 can take advantage of a variety of EMC's UltraFlex IO Modules to customize its connectivity.

UltraFlex Block IO Module Options:

- Four-port 8Gb/s Fibre Channel module with optical SFP and OM2/OM3 cabling to connect to an HBA or FC switch.

- Four-port 1Gb/s iSCSI module with a TCP offload engine and four 1GBaseT Cat6 ports.

- Two-port 10Gb/s Opt iSCSI Module with a TCP offload engine, two 10Gb/s Ethernet ports, and an SFP+ optical connection or a twinax copper connection to an Ethernet switch.

- Two-port 10GBaseT iSCSI module with a TCP offload engine and two 10GBaseT Ethernet ports.

- Two-port 10GbE FCoE module with two 10Gb/s Ethernet ports and an SFP+ optical connection or a twinax copper connection.

- Four-port 6Gb/s SAS V2.0 module for backend connectivity to VNX Block Storage Processors. Each SAS port has four lanes at 6Gb/s for 24Gb/s nominal throughput. This module is designed for PCIe 3.0 connectivity and can be configured as 4x4x6 or 2x8x6.
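The SAS module's nominal numbers are simple multiplication. The short sketch below works through them, reading "4x4x6" and "2x8x6" as ports x lanes per port x Gb/s per lane. That reading is our assumption, and these are nominal line rates rather than measured throughput.

```python
LANE_GBPS = 6  # nominal per-lane rate of 6Gb/s SAS


def port_gbps(lanes_per_port):
    """Nominal bandwidth of one wide port."""
    return lanes_per_port * LANE_GBPS


def module_gbps(ports, lanes_per_port):
    """Nominal aggregate bandwidth of the whole module."""
    return ports * port_gbps(lanes_per_port)


print(port_gbps(4))        # 24, the per-port figure quoted above
print(module_gbps(4, 4))   # 96 for the assumed 4x4x6 layout
print(module_gbps(2, 8))   # 96 for the assumed 2x8x6 layout
```

Under that interpretation both layouts expose the same 16 lanes, just grouped into four narrower ports or two wider ones.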

In order to configure the VNX5200 for file storage or unified storage, it must be used in tandem with one or more Data Mover units. The VNX Data Mover Enclosure (DME) is 2U in size and houses the Data Movers. Data Movers use 2.13GHz, four-core Xeon 5600 processors with 6GB RAM per Data Mover and can manage a maximum storage capacity of 256TB per Data Mover. The data mover enclosure can operate with one, two, or three Data Movers.

Control Stations are 1U in size and provide management functions to the Data Movers, including controls for failover. A Control Station can be deployed with a secondary Control Station for redundancy. UltraFlex File IO Module options include:

- Four-port 1GBase-T IP module with four RJ-45 ports for Cat6 cables

- Two-port 10GbE Opt IP module with two 10Gb/s Ethernet ports and a choice of an SFP+ optical connection or a twinax copper connection to an Ethernet switch

- Two-port 10GBase-T IP module with copper connections to an Ethernet switch

- Four-port 8Gb/s Fibre Channel Module with optical SFP and OM2/OM3 cabling to connect directly to a captive array and to provide an NDMP tape connection

Management and Data Services

Unisphere incorporates snapshot functionality that includes configuration options for an auto-delete policy that deletes snapshots either (1) after a specified amount of time or (2) once snapshot storage space exceeds a specified percentage of storage capacity. VNX snapshots can make use of EMC’s thin provisioning and redirect-on-write technologies to improve the speed of, and reduce the storage requirements for, stored snapshots.
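The two auto-delete triggers can be sketched in a few lines. This is a hypothetical model, not Unisphere's implementation: the `Snapshot` type, the oldest-first eviction order, the choice to apply both rules together, and every value in the example are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Snapshot:
    name: str
    created: datetime
    size_gb: float


def snapshots_to_delete(snaps, now, max_age, pool_gb, max_used_pct):
    """Return snapshot names to delete under an age rule and a space rule."""
    # Rule 1: anything older than max_age is deleted.
    doomed = [s for s in snaps if now - s.created > max_age]
    kept = sorted(
        (s for s in snaps if now - s.created <= max_age),
        key=lambda s: s.created,
    )
    # Rule 2: evict oldest-first (assumed order) until under the threshold.
    limit_gb = pool_gb * max_used_pct / 100
    used = sum(s.size_gb for s in kept)
    while kept and used > limit_gb:
        oldest = kept.pop(0)
        used -= oldest.size_gb
        doomed.append(oldest)
    return [s.name for s in doomed]


now = datetime(2014, 1, 31)  # all values below are made up for the example
snaps = [
    Snapshot("mon", datetime(2014, 1, 1), 40),
    Snapshot("tue", datetime(2014, 1, 20), 30),
    Snapshot("wed", datetime(2014, 1, 28), 30),
    Snapshot("thu", datetime(2014, 1, 30), 20),
]
print(snapshots_to_delete(snaps, now, timedelta(days=14), pool_gb=200, max_used_pct=25))
```

Here "mon" is removed for age, and "tue" is removed to bring the remaining snapshots down to the 50GB limit.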

VNX uses block and file compression algorithms designed for relatively inactive LUNs and files, allowing both its compression and deduplication operations to be conducted in the background with reduced performance overhead. VNX’s fixed-block deduplication is built with 8KB granularity for scenarios like virtual machine, virtual desktop, and test/development environments with much to gain from deduplication. Deduplication can be set at the pool LUN level, and thin, thick and deduplicated LUNs can be stored in a single pool.
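Fixed-block deduplication at 8KB granularity can be illustrated with a small sketch: split the data into 8KB blocks, fingerprint each block, and store each unique block once. The use of SHA-256 and the in-memory dictionary are assumptions chosen for the example. The article does not describe how VNX fingerprints or stores blocks.

```python
import hashlib

BLOCK_SIZE = 8 * 1024  # 8KB granularity cited above


def dedupe(data: bytes):
    """Split data into fixed 8KB blocks and keep one copy of each."""
    store = {}    # digest -> block contents (unique blocks only)
    recipe = []   # ordered digests needed to rebuild the original data
    for offset in range(0, len(data), BLOCK_SIZE):
        block = data[offset:offset + BLOCK_SIZE]
        digest = hashlib.sha256(block).digest()  # assumed fingerprint function
        store.setdefault(digest, block)
        recipe.append(digest)
    return store, recipe


def rehydrate(store, recipe):
    """Rebuild the original byte stream from the block store."""
    return b"".join(store[d] for d in recipe)


# Five logical blocks, only two distinct patterns (like cloned VM images).
image = (b"A" * BLOCK_SIZE) * 3 + b"B" * BLOCK_SIZE + b"A" * BLOCK_SIZE
store, recipe = dedupe(image)
print(len(recipe), "logical blocks,", len(store), "stored blocks")
```

This is why workloads such as virtual desktops, where many images share identical blocks, have the most to gain.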

Unisphere Central provides centralized multi-box monitoring for up to thousands of VNX and VNXe systems, for example systems deployed in remote and branch offices. The Unisphere suite also includes VNX Monitoring and Reporting software for storage utilization and workload patterns in order to facilitate problem diagnosis, trend analysis, and capacity planning.

In virtualized environments, the VNX5200 can deploy EMC’s Virtual Storage Integrator for VMware vSphere 5 for provisioning, management, cloning, and deduplication. For VMware, the VNX5200 offers API integrations for VAAI and VASA, and in Hyper-V environments it can be configured for Offloaded Data Transfer and Offload Copy for File. The EMC Storage Integrator offers provisioning functionality for Hyper-V and SharePoint. EMC Site Recovery Manager software can manage failover and failback in disaster recovery situations as well.

VNX2 arrays from the VNX5200 through the VNX8000 offer EMC’s new controller-based data at rest encryption, named D@RE, which encrypts all user data at the drive level. D@RE uses AES-256 encryption and is pending validation for FIPS 140-2 Level 1 compliance. New D@RE-capable VNX arrays ship with the encryption hardware included, and existing VNX2 systems can be field-upgraded to support D@RE and non-disruptively encrypt existing storage as a background task.

D@RE encrypts all data written to the array using a regular data path protocol with a unique key per disk. If drives are removed from the array for any reason, information on the drive is unintelligible. D@RE also incorporates crypto-erase functionality because its encryption keys are deleted when a RAID group or storage pool is deleted.

VNX2 offers mirrored write cache functionality, where each storage processor contains both primary cached data for its LUNs and a secondary copy of the cache for the peer storage processor. RAID levels 0, 1, 1/0, 5, and 6 can coexist in the same array and the system’s proactive hot sparing function further increases data protection. The system uses integrated battery backup units to provide cache de-staging and other considerations for an orderly shutdown during power failures.

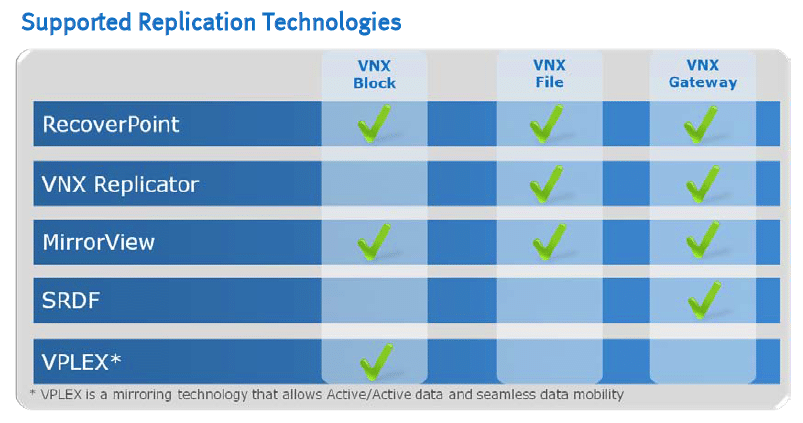

Local protection is available through Unisphere’s point-in-time snapshot feature, and continuous data protection is available via RecoverPoint local replication. EMC’s VPLEX can be used to extend continuous availability within and across data centers. According to EMC, it used television DVRs as an inspiration for its RecoverPoint Continuous Remote Replication software.

Replication Manager and AppSync provide application-consistent protection with EMC Backup and Recovery solutions including Data Domain, Avamar, and NetWorker to shorten backup windows and recovery times.

Testing Background and Storage Media

We publish an inventory of our lab environment, an overview of the lab's networking capabilities, and other details about our testing protocols so that administrators and those responsible for equipment acquisition can fairly gauge the conditions under which we have achieved the published results. To maintain our independence, none of our reviews are paid for or managed by the manufacturer of equipment we are testing.

The EMC VNX5200 review takes a different testing approach from how we usually evaluate equipment. In our standard review process, the vendor ships us the platform, which we then connect to our fixed testing platforms for performance benchmarks. With the VNX5200, the size and complexity of the system, as well as its initial setup, caused us to change this approach and fly our reviewer and equipment to EMC's lab. EMC prepared a VNX5200 with the equipment and options necessary for iSCSI and FC testing. EMC supplied their own dedicated 8Gb FC switch, while we brought one of our Mellanox 10/40Gb SX1024 switches for Ethernet connectivity. To stress the system we leveraged an EchoStreams OSS1A-1U server, which exceeds the hardware specifications of what we use in our traditional lab environment.

EchoStreams OSS1A-1U Specifications:

- 2x Intel Xeon E5-2697 v2 (2.7GHz, 30MB Cache, 12-cores)

- Intel C602A Chipset

- Memory – 16GB (2x 8GB) 1333MHz DDR3 Registered RDIMMs

- Windows Server 2012 R2 Standard

- Boot SSD: 100GB Micron RealSSD P400e

- 2x Mellanox ConnectX-3 dual-port 10GbE NIC

- 2x Emulex LightPulse LPe16002 Gen 5 Fibre Channel (8GFC, 16GFC) PCIe 3.0 Dual-Port HBA

Our evaluation of the VNX5200 will compare its performance in synthetic benchmarks across five configurations that reflect how EMC customers deploy VNX2 systems in a production setting. Each configuration leveraged 8 LUNs measuring 25GB in size.

- A RAID10 array composed of SSD media with Fibre Channel connectivity

- A RAID10 array composed of SSD media with iSCSI connectivity

- A RAID6 array composed of 7k HDD media with Fibre Channel connectivity

- A RAID5 array composed of 10k HDD media with Fibre Channel connectivity

- A RAID5 array composed of 15k HDD media with Fibre Channel connectivity

Each configuration incorporated 24 drives and used RAID configurations most common with their deployment in a production setting.

Enterprise Synthetic Workload Analysis

Prior to initiating each of the fio synthetic benchmarks, our lab preconditions the device into steady state under a heavy load of 16 threads with an outstanding queue of 16 per thread. Then the storage is tested in set intervals with multiple thread/queue depth profiles to show performance under light and heavy usage.

Preconditioning and Primary Steady-State Tests:

- Throughput (Read+Write IOPS Aggregated)

- Average Latency (Read+Write Latency Averaged Together)

- Max Latency (Peak Read or Write Latency)

- Latency Standard Deviation (Read+Write Standard Deviation Averaged Together)

This synthetic analysis incorporates four profiles which are widely used in manufacturer specifications and benchmarks:

- 4k random – 100% Read and 100% Write

- 8k sequential – 100% Read and 100% Write

- 8k random – 70% Read/30% Write

- 128k sequential – 100% Read and 100% Write
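The preconditioning load and the four profiles above map naturally onto fio parameters. The sketch below builds one command line per workload. It is not the lab's actual job definition: the `TARGET_DEVICE` path and the 60-second runtime are placeholders, and the I/O engine is left at fio's default. The flags themselves are standard fio options.

```python
import shlex

THREADS = 16      # threads used for preconditioning, per the text
QUEUE_DEPTH = 16  # outstanding I/Os per thread, per the text

# (job name, fio rw mode, block size, read percentage for mixed workloads)
PROFILES = [
    ("4k-random-read", "randread", "4k", None),
    ("4k-random-write", "randwrite", "4k", None),
    ("8k-seq-read", "read", "8k", None),
    ("8k-seq-write", "write", "8k", None),
    ("8k-random-70-30", "randrw", "8k", 70),
    ("128k-seq-read", "read", "128k", None),
    ("128k-seq-write", "write", "128k", None),
]


def fio_args(name, rw, bs, mix_read, target, runtime_s):
    """Build an fio argument list for one workload profile."""
    args = [
        "fio", f"--name={name}", f"--filename={target}",
        f"--rw={rw}", f"--bs={bs}",
        f"--numjobs={THREADS}", f"--iodepth={QUEUE_DEPTH}",
        "--direct=1", "--time_based", f"--runtime={runtime_s}",
        "--group_reporting",
    ]
    if mix_read is not None:
        args.append(f"--rwmixread={mix_read}")
    return args


# TARGET_DEVICE and runtime_s=60 are placeholders, not values from the review.
commands = [fio_args(*p, target="TARGET_DEVICE", runtime_s=60) for p in PROFILES]
for cmd in commands:
    print(shlex.join(cmd))
```

Lighter thread and queue combinations for the scaled 70/30 test would be produced the same way by varying `--numjobs` and `--iodepth`.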

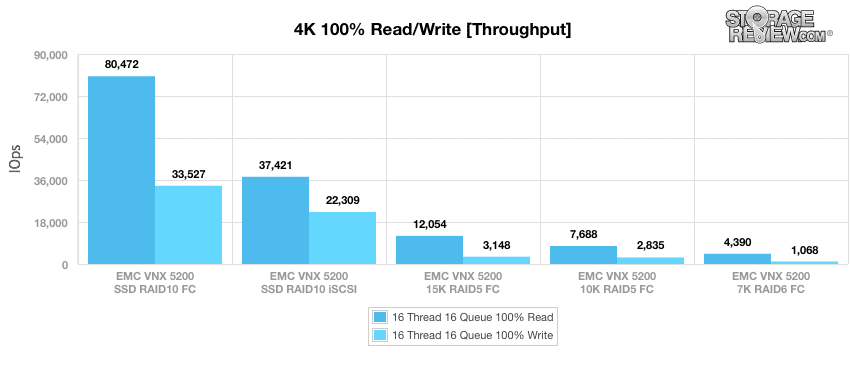

After preconditioning the VNX5200 for 4k workloads, we put it through our primary tests. When configured with SSDs and accessed via Fibre Channel, it posted 80,472 IOPS read and 33,527 IOPS write. Using the same SSDs in RAID10 (though this time using our iSCSI block-level test), the VNX5200 achieved 37,421 IOPS read and 22,309 IOPS write. Switching to 15K HDDs in RAID5 using Fibre Channel connectivity showed 12,054 IOPS read and 3,148 IOPS write, while the 10K HDD configuration hit 7,688 IOPS read and 2,835 IOPS write. When using 7K HDDs in a RAID6 configuration of the same connectivity type, the VNX5200 posted 4,390 IOPS read and 1,068 IOPS write.

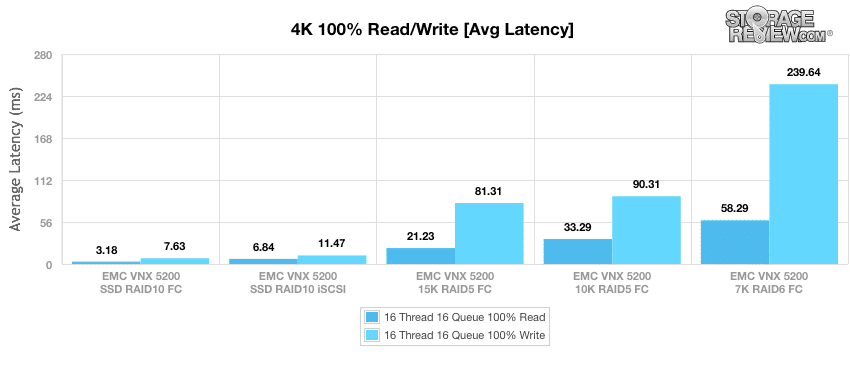

Results were much the same in average latency. When configured with SSDs in RAID10 and using Fibre Channel connectivity, the VNX5200 showed 3.18ms read and 7.63ms write. Using the same SSDs with our iSCSI block-level test, the VNX5200 posted 6.84ms read and 11.47ms write. Switching to 15K HDDs in RAID5 using Fibre Channel connectivity showed 21.23ms read and 81.31ms write in average latency, while the 10K HDD configuration posted 33.29ms read and 90.31ms write. When switching to 7K HDDs in a RAID6 configuration of the same connectivity type, the VNX5200 posted 58.29ms read and 239.64ms write.
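The 4k throughput and average latency figures are tied together by Little's Law: with a fixed number of outstanding I/Os, average latency is roughly outstanding I/Os divided by IOPS. The sketch below applies that relationship to the numbers reported above, assuming the tests ran at the 16-thread, 16-queue load (256 outstanding I/Os) described for preconditioning.

```python
OUTSTANDING_IOS = 16 * 16  # assumed load: 16 threads x 16 queue depth

# label -> (reported IOPS, reported average latency in ms), from the text
RESULTS = {
    "SSD RAID10 FC read": (80472, 3.18),
    "SSD RAID10 FC write": (33527, 7.63),
    "SSD RAID10 iSCSI read": (37421, 6.84),
    "SSD RAID10 iSCSI write": (22309, 11.47),
    "15K RAID5 FC read": (12054, 21.23),
    "15K RAID5 FC write": (3148, 81.31),
    "10K RAID5 FC read": (7688, 33.29),
    "10K RAID5 FC write": (2835, 90.31),
    "7K RAID6 FC read": (4390, 58.29),
    "7K RAID6 FC write": (1068, 239.64),
}


def expected_latency_ms(iops, outstanding=OUTSTANDING_IOS):
    """Little's Law: latency = outstanding I/Os / throughput."""
    return outstanding / iops * 1000


for label, (iops, reported_ms) in RESULTS.items():
    predicted = expected_latency_ms(iops)
    print(f"{label:24s} predicted {predicted:7.2f} ms, reported {reported_ms:7.2f} ms")
```

The predicted and reported values agree closely for every configuration, which is why the latency chart ranks the configurations in the same order as the throughput chart.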

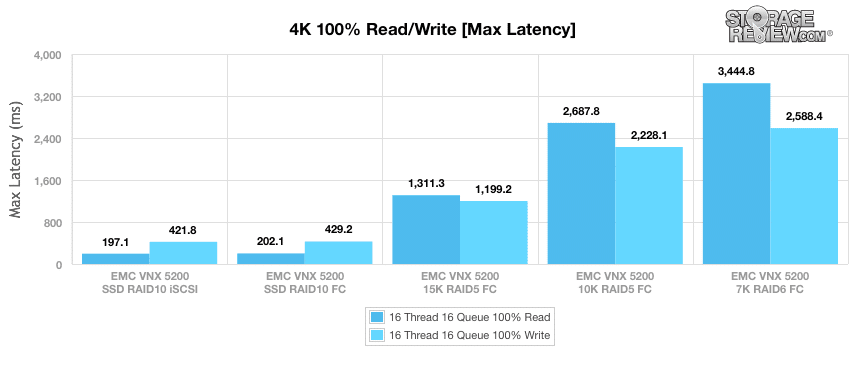

Next, we move to our maximum latency benchmark. When configured with SSDs in RAID10 and using our iSCSI block-level test, the VNX5200 showed 197ms read and 421.8ms write. Using the same SSDs, though this time with Fibre Channel connectivity, the VNX5200 posted 202.1ms read and 429.2ms write. Switching to 15K HDDs in RAID5 using Fibre Channel connectivity recorded 1,311.3ms read and 1,199.2ms write for maximum latency, while the 10K HDD configuration posted 2,687.8ms read and 2,228.1ms write. When switching to 7K HDDs in a RAID6 configuration of the same connectivity type, the VNX5200 posted 3,444.8ms read and 2,588.4ms write.

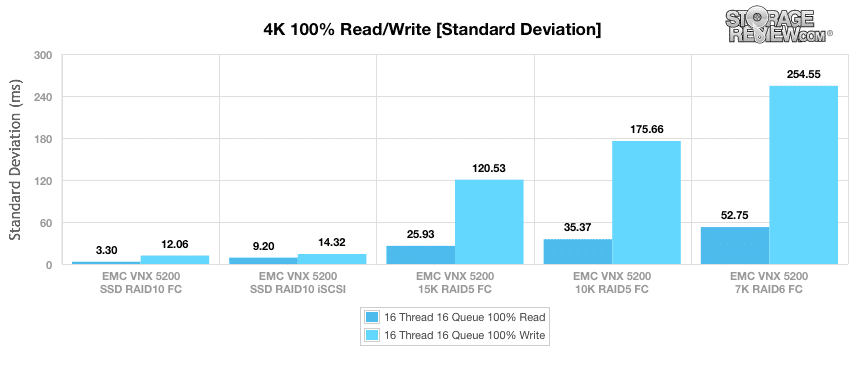

Our last 4K benchmark is standard deviation, which measures the consistency of the VNX5200's performance. When configured with SSDs in RAID10 using our iSCSI block-level test, the VNX5200 posted 3.30ms read and 12.06ms write. Using the same SSDs with Fibre Channel connectivity showed 9.20ms read and 14.32ms write. Switching to 15K HDDs in RAID5 with Fibre Channel connectivity recorded 25.93ms read and 120.53ms write, while the 10K HDD configuration posted 35.37ms read and 175.66ms write. When using 7K HDDs in a RAID6 configuration of the same connectivity type, the VNX5200 posted 52.75ms read and 254.55ms write.

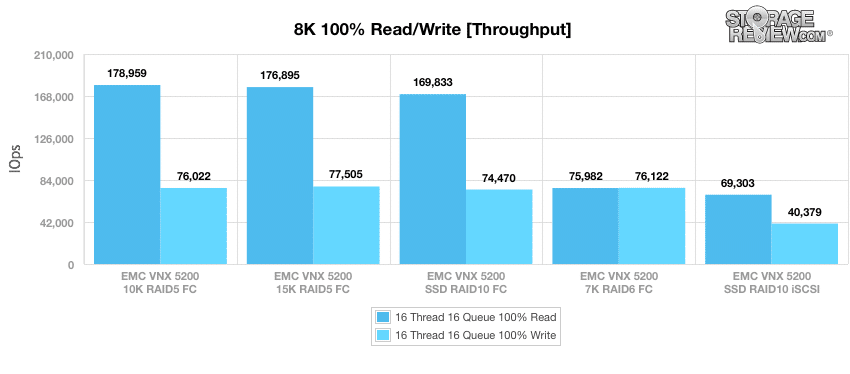

Our next benchmark uses a sequential workload composed of 100% read operations and then 100% write operations with an 8k transfer size. Here, the VNX5200 posted 178,959 IOPS read and 76,022 IOPS write when configured with 10K HDDs in RAID5 with Fibre Channel connectivity. Using 15K HDDs in RAID5 with Fibre Channel connectivity showed 176,895 IOPS read and 77,505 IOPS write. Switching to SSDs in RAID10 using Fibre Channel connectivity recorded 169,833 IOPS read and 74,470 IOPS write, while the SSD iSCSI block-level test showed 69,303 IOPS read and 40,379 IOPS write. When using 7K HDDs in a RAID6 configuration with Fibre Channel connectivity, the VNX5200 posted 75,982 IOPS read and 76,122 IOPS write.
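Because the 8k sequential results are reported in IOPS while large-block results are usually quoted in GB/s, a quick conversion helps put them on the same scale. The sketch assumes "8k" means 8,192 bytes and uses decimal megabytes. Both conventions are our assumptions.

```python
def iops_to_mb_per_s(iops, block_kib):
    """Convert IOPS at a given block size to decimal MB/s."""
    bytes_per_second = iops * block_kib * 1024  # assumes 1k = 1,024 bytes
    return bytes_per_second / 1_000_000


# Best 8k sequential read result above (10K HDD, RAID5, Fibre Channel).
print(round(iops_to_mb_per_s(178_959, 8)))  # 1466
```

At roughly 1.47GB/s, the best 8k sequential read is already moving a substantial amount of data despite the small transfer size.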

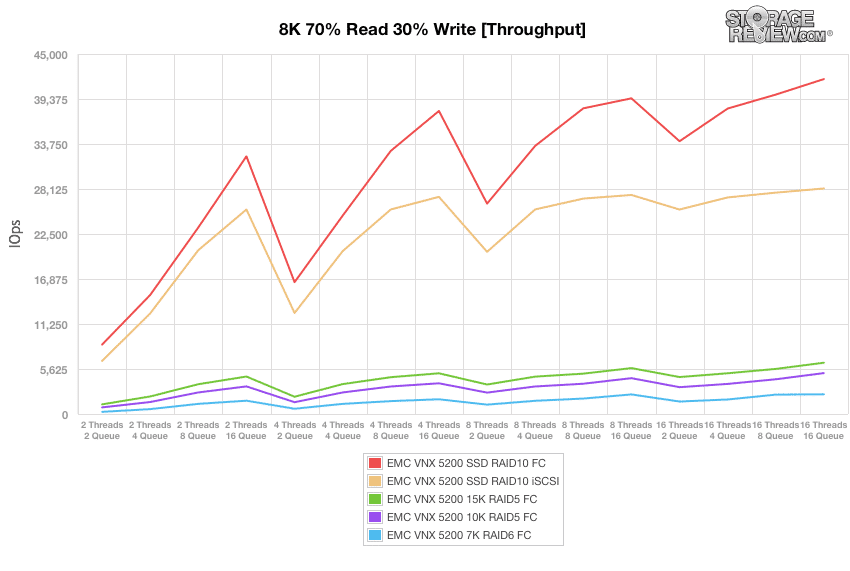

Our next series of workloads is composed of a mixture of 8k read (70%) and write (30%) operations, scaled up to 16 threads with a queue depth of 16 (16T/16Q), our first being throughput. When configured with SSDs in RAID10 using Fibre Channel connectivity, the VNX5200 posted a range of 8,673 IOPS to 41,866 IOPS by 16T/16Q. Using the same SSDs during our iSCSI block-level test showed a range of 6,631 IOPS to 28,193 IOPS. Switching to 15K HDDs in RAID5 with Fibre Channel connectivity recorded a range of 1,204 IOPS to 6,411 IOPS, while the 10K HDD configuration posted 826 IOPS initially and 5,113 IOPS by 16T/16Q. When using 7K HDDs in a RAID6 configuration of the same connectivity type, the VNX5200 posted a range of 267 IOPS to 2,467 IOPS.

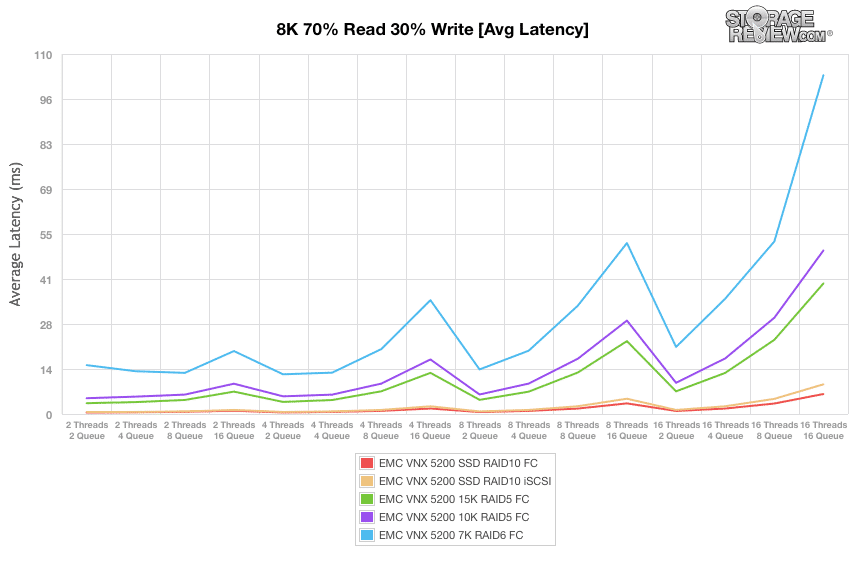

Next, we looked at average latency. When configured with SSDs in RAID10 using Fibre Channel connectivity, the VNX5200 posted a range of 0.45ms to 6.11ms by 16T/16Q. Using the same SSDs during our iSCSI block-level test showed a range of 0.59ms to 9.07ms. Switching to 15K HDDs in RAID5 with Fibre Channel connectivity recorded a range of 3.31ms to 39.89ms, while the 10K HDD configuration posted 4.83ms initially and 49.97ms by 16T/16Q. When using 7K HDDs in a RAID6 configuration of the same connectivity type, the VNX5200 posted a range of 14.93ms to 103.52ms.

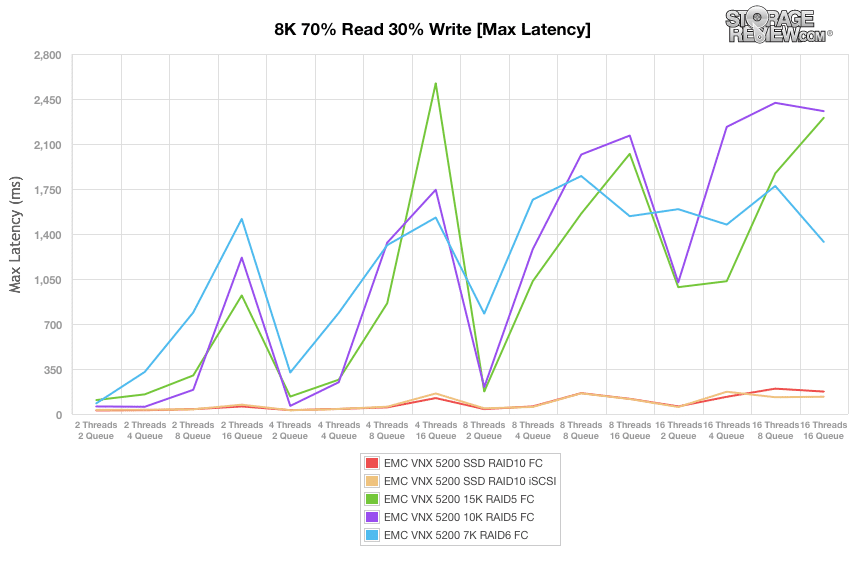

Taking a look at its maximum latency results, when configured with SSDs in RAID10 using Fibre Channel connectivity, the VNX5200 had a range of 27.85ms to 174.43ms by 16T/16Q. Using the same SSDs during our iSCSI block-level test showed a range of 31.78ms to 134.48ms in maximum latency. Switching to 15K HDDs in RAID5 with Fibre Channel connectivity recorded a range of 108.48ms to 2,303.72ms, while the 10K HDD configuration showed 58.83ms initially and 2,355.53ms by 16T/16Q. When using 7K HDDs in a RAID6 configuration of the same connectivity type, the VNX5200 posted a maximum latency range of 82.74ms to 1,338.61ms.

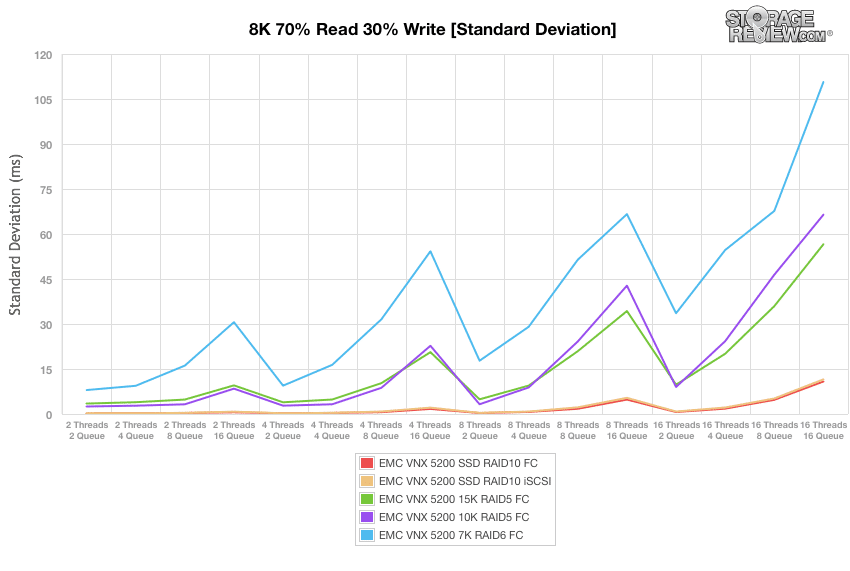

Our next workload analyzes the standard deviation for our 8k 70% read and 30% write operations. Using SSDs in RAID10 with Fibre Channel connectivity, the VNX5200 posted a range of just 0.18ms to 10.83ms by 16T/16Q. Using the same SSDs during our iSCSI block-level test showed a similar range of 0.21ms to 11.54ms in latency consistency. Switching to 15K HDDs in RAID5 with Fibre Channel connectivity recorded a range of 3.48ms to 56.58ms, while the 10K HDD configuration showed 2.5ms initially and 66.44ms by 16T/16Q. When using 7K HDDs in a RAID6 configuration of the same connectivity type, the VNX5200 posted a standard deviation range of 7.98ms to 110.68ms.

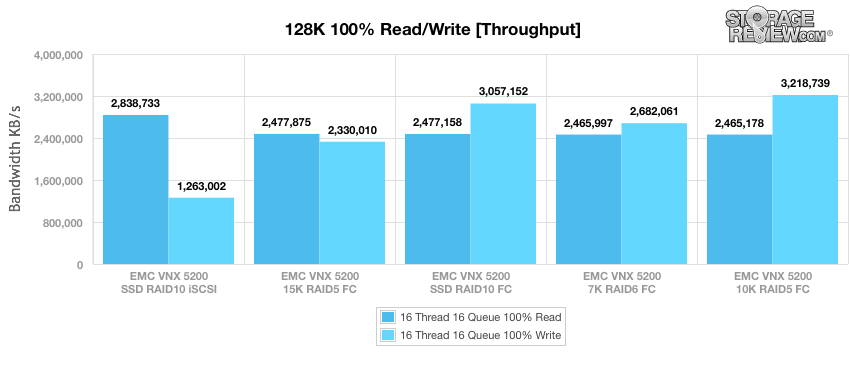

Our final synthetic benchmark made use of sequential 128k transfers and a workload of 100% read and 100% write operations. In this scenario, the VNX5200 posted 2.84GB/s read and 1.26GB/s write when configured with SSDs using our iSCSI block-level test. Using 15K HDDs in RAID5 with Fibre Channel connectivity showed 2.48GB/s read and 2.33GB/s write. Switching back to SSDs in RAID10 (this time using Fibre Channel connectivity), it recorded 2.48GB/s read and 3.06GB/s write. When using 7K HDDs in a RAID6 configuration with Fibre Channel connectivity, the VNX5200 posted 2.47GB/s read and 2.68GB/s write, while the 10K HDD configuration posted 2.47GB/s read and 3.22GB/s write.

Conclusion

EMC's VNX ecosystem is well established in the enterprise storage market, but VNX2 reflects the company's willingness to completely overhaul the VNX architecture in order to make the most of advances in processor, flash, and networking technologies. Much like the VNXe3200, which is oriented towards smaller deployments, the VNX5200 also demonstrates that EMC is paying attention to midmarket firms and remote/branch offices which may not be able to justify the expense of the larger systems in the VNX family but still want all of the enterprise benefits.

Used in conjunction with the FAST Suite, the VNX5200 is able to offer flash caching and tiering in tandem, a combination against which EMC's peers have trouble competing. In our testing we broke out the common tiers and configurations of storage to illustrate, with a limited test suite, just what users can expect from the 5200. Tests covered everything from 7K HDDs up to SSDs, illustrating the system's flexibility in delivering capacity, performance, or both over a variety of interface options.

Ultimately the VNX2 platform can handle just about anything thrown its way, and it's that flexibility that wins EMC deals on a daily basis. Given the blend of storage options, IO modules, and NAS support, the systems are able to address almost everything an organization could need. Of course there are use cases that go beyond the 5200 we tested here. The VNX family scales up quite a bit (and down some too with the VNXe3200) to address these needs, be it with more compute power, flash allotment, or disk shelves.

Pros

- A variety of connectivity options via UltraFlex I/O modules

- Configurations that support block, file, and unified storage scenarios

- Access to the robust VNX ecosystem of administrative tools and third-party integrations

Cons

- Maximum of 600GB of FAST Cache

Bottom Line

The EMC VNX5200 is a key entry in EMC's new wave of unified storage arrays that are architected to take advantage of flash storage, multi-core CPUs and modern datacenter network environments. Its affordability, flexibility in configuration, and access to VNX2 management software and third-party integrations make for a formidable overall package for the midmarket.
