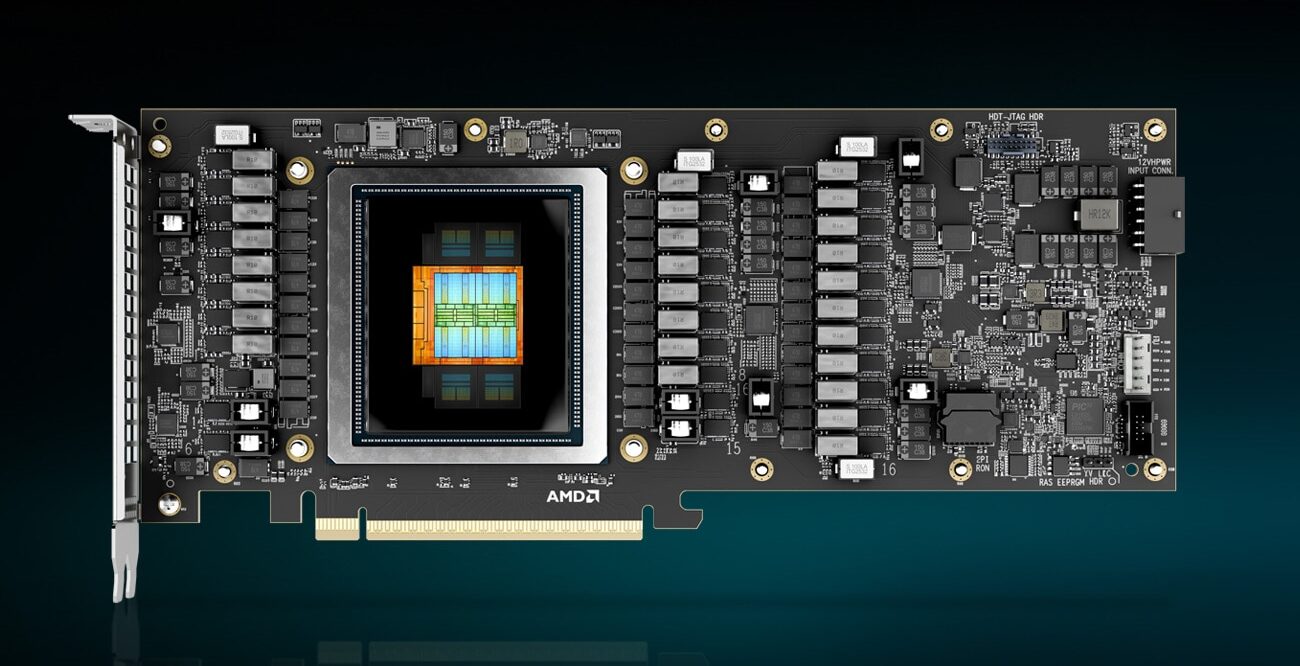

AMD has announced the Instinct MI350P, a PCIe accelerator aimed at enterprises that want on-premises AI inference without rebuilding their data center. The card is a dual-slot, full-height, full-length design built for standard air-cooled servers. It is also the first time in nearly four years that AMD has put a current-generation Instinct chip into a form factor that drops into a normal server.

The PCIe Instinct line had effectively gone quiet after the MI210 shipped in early 2022. Every generation since (MI300X, MI325X, and the OAM MI350X) has been an OAM socketed module on a Universal Baseboard, requiring a purpose-built chassis with the power delivery and airflow for eight 1,000W-class accelerators in a single tray. That works for hyperscalers buying GPUs by the rack. It does not work for an enterprise that wants on-prem inference but cannot or will not commit to a custom AI rack. The MI350P fits that gap, and at the moment, NVIDIA does not have a flagship-tier server PCIe card in the same class, so AMD has the segment to itself for now.

Hardware: MI350P vs. MI350X OAM

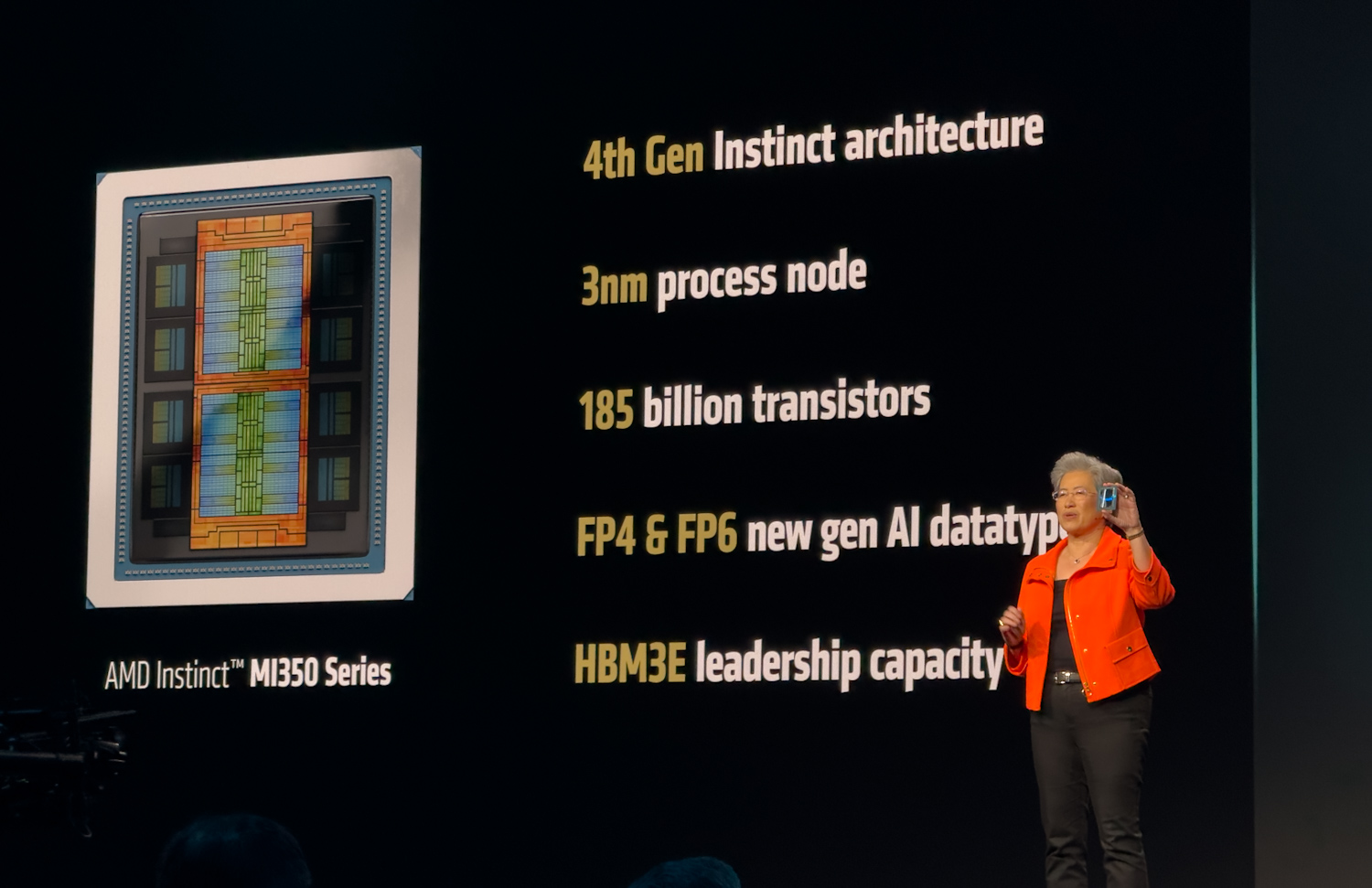

The MI350P is not a binned MI350X. AMD designed a smaller chip for it. The MI350X carries two I/O dies, each with four accelerator complex dies (XCDs), for a total of eight XCDs and 256 compute units. The MI350P has a single I/O die with four XCDs and 128 compute units, half the silicon, running at the same 2.2 GHz peak clock as its larger sibling. Memory follows the same pattern. Four HBM3E stacks instead of eight. A 4,096-bit bus instead of an 8,192-bit bus. 144GB at 4 TB/s instead of 288GB at 8 TB/s.

Peak compute also halves. The MI350P tops out at 4,600 TFLOPS at MXFP4 against the MI350X’s 9.2 PFLOPS, and 2,300 TFLOPS at FP8 against 4.6 PFLOPS. BF16, FP16, and the rest of the precision stack scale the same way. It is refreshing to see AMD publish delivered numbers alongside peak. The delivered figures are 2,299 TFLOPS on MXFP4, 1,529 TFLOPS on FP8, and 713 TFLOPS on BF16. Those numbers reflect what the card can actually do inside a 600W envelope, where electrical and memory bandwidth limits eat into the theoretical peaks.

We took a look at the MI350X platform through Supermicro’s Jumpstart program and were genuinely impressed by its performance across inference workloads. We cannot wait to get the MI350P in for testing and see how the PCIe variant holds up in the more conventional server chassis for which it was designed.

| AMD Instinct MI350P PCIe Card | ||

|---|---|---|

| Specification | Delivered (FLOPS) | Peak (TFLOPS) |

| Performance | ||

| BF16 | 713 | 1150 |

| FP16 | 672 | 1150 |

| FP8 | 1529 | 2300 |

| MXFP8 | 1327 | 2300 |

| MXFP6 | 1804 | 4600 |

| MXFP4 | 2299 | 4600 |

| Memory and Partitioning | ||

| Memory Capacity | 144 GB HBM3E | 144 GB HBM3E |

| Memory BW | 3.6 TB/s | 4.0 TB/s |

| GPU Instances | Up to 4 @ 36GB each | Up to 4 @ 36GB each |

| Platform | ||

| Video & JPEG Decode | ||

| GPU Scale-up Interconnect | Not supported | Not supported |

| Product FF | FHFL dual-slot Air-cooled | FHFL dual-slot Air-cooled |

| Max Total Board Power (TBP) | 600W (450W configurable) |

600W (450W configurable) |

| PCIe Host | x16 PCIe Gen 5 at 128GB/s | x16 PCIe Gen 5 at 128GB/s |

Power does not quite halve. The MI350P is rated at 600W TBP, about 60% of the MI350X’s 1,000W. 600W is the ceiling defined by the PCIe CEM specification, so the card is running as hot as the slot allows. A 450W mode is available for chassis that cannot deliver the full power or cooling envelope, with some performance trimmed off. The 600W rating also places the MI350P in the same bracket as NVIDIA’s H200 NVL and the RTX Pro 6000 Server, which it will be cross-shopped against in this segment.

Unlike NVIDIA’s NVL4 offering with the H200, AMD does not expose the GPU’s Infinity Fabric links on the MI350P; all collective communications go through the PCIe Gen5 x16 (128 GB/s) link.

The Eight-GPU Air-Cooled Story

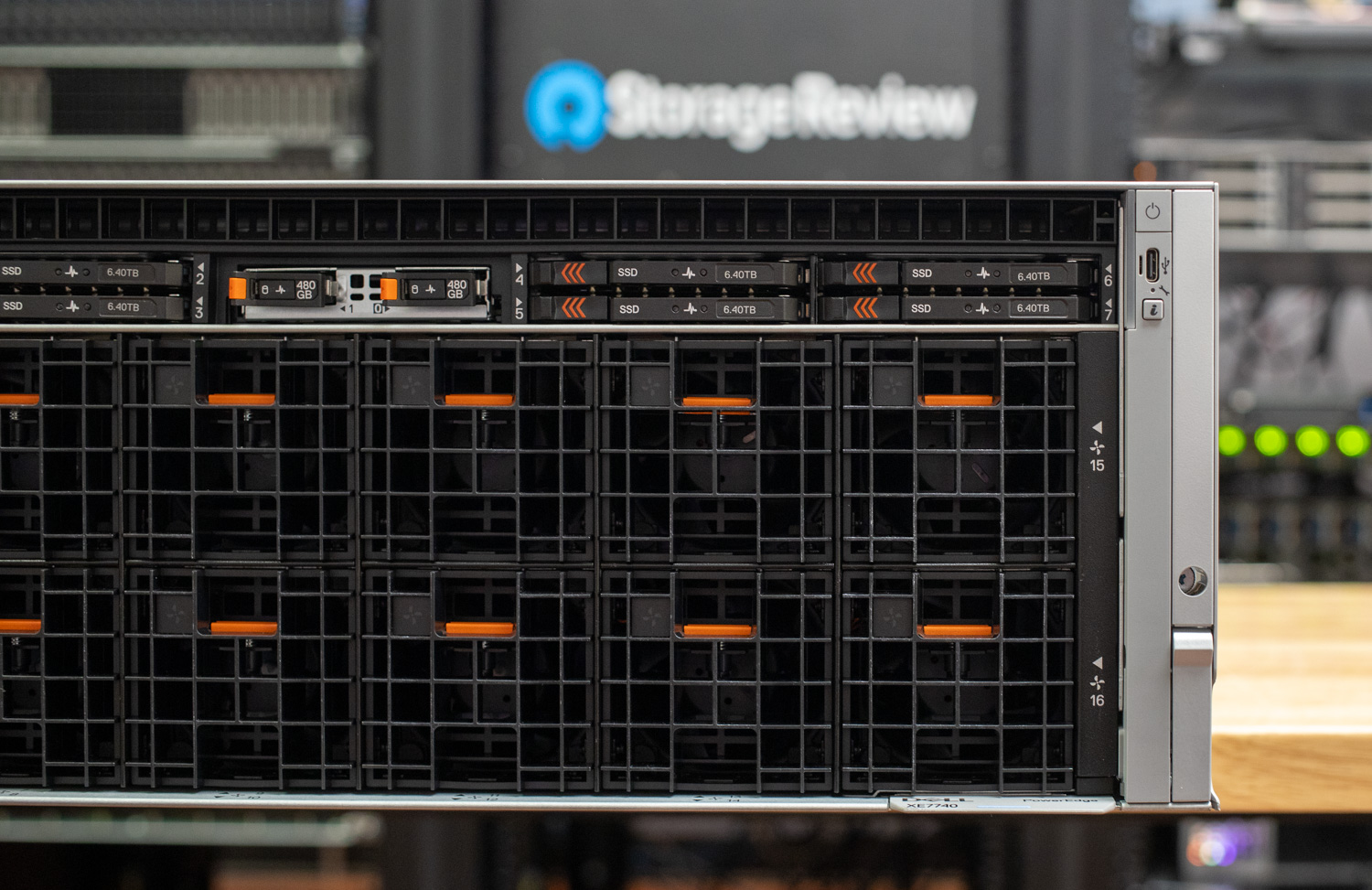

Because the MI350P is a standard dual-slot, full-height, full-length PCIe card, it fits into servers that enterprises already deploy and operate, including the dense eight-GPU air-cooled platforms now coming from the major OEMs. The Dell PowerEdge XE7740 and the HPE ProLiant DL380a Gen12, both of which we have reviewed previously, are the obvious targets. Each is built specifically to host eight dual-slot, FHFL accelerators in an air-cooled chassis with the power delivery and airflow already engineered for 600W-class cards. No custom rack, no liquid loop, no OAM baseboard.

An eight-card MI350P configuration in one of these systems puts 1,152GB of HBM3E and 32 TB/s of aggregate memory bandwidth into a single air-cooled box. For inference on large open-weight models, that is enough to host a trillion-parameter model on MXFP4 in a single chassis. But as we mentioned earlier, the trade-off is the absence of scale-up fabric. On the OAM MI350X, GPUs communicate over Infinity Fabric across the Universal Baseboard. On the MI350P, every GPU-to-GPU collective rides PCIe Gen5 x16 at 128 GB/s, the same path used to reach the host. For inference workloads, particularly with tensor-parallel sharding inside a node and pipeline or data parallelism across nodes, this is workable. For tightly coupled training where all-reduce bandwidth dominates step time, the OAM platform remains the right answer.

Precision Formats

Precision is worth covering, although none of the formats supported on the MI350P are new. The MI350X has the same set. The reason it still matters is that the OCP block-scaling data types (MXFP8, MXFP6, MXFP4) have become the standard for frontier model labs to train and ship models. These formats let labs train at lower precision with little to no loss in quality, and the inference benefits appear immediately afterward.

Lower precision is faster. MXFP4 runs more than twice as fast as FP8 and roughly four times as fast as BF16 at peak. That speedup shows up in real workloads. OpenAI’s gpt-oss release made the throughput uplift obvious, and frontier models like Kimi K2.6 are being natively quantization-aware-trained in INT4 from the start, rather than quantized after the fact. The other half of the story is memory. INT4 and MXFP4 weights take a quarter of the space of BF16. That math means trillion-parameter models can fit inside a single eight-GPU box. For an enterprise that wants to host a large open-weight model on-prem, the difference is one rack against a multi-node cluster with all the networking and orchestration that implies.

Bottom Line

Most enterprises evaluating on-prem AI run out of power, cooling, rack density, or budget before they run out of compute headroom. A PCIe Instinct that drops into a server estate they already operate sidesteps the worst of those constraints. NVIDIA does not currently have a flagship server PCIe card to compete with it, which gives AMD a clean run at the segment for as long as that holds.

Additional information is available on the AMD Instinct page.

Amazon

Amazon