Broadcom announced VMware Cloud Foundation 9.1, positioning the platform as a private cloud foundation optimized for production AI workloads. The release focuses on tighter integration of AI and Kubernetes, expanded hardware support across AMD, Intel, and NVIDIA, and embedded security capabilities designed for enterprise AI deployments. The update targets organizations running inference and emerging agentic AI applications, with an emphasis on cost control, infrastructure flexibility, and data governance.

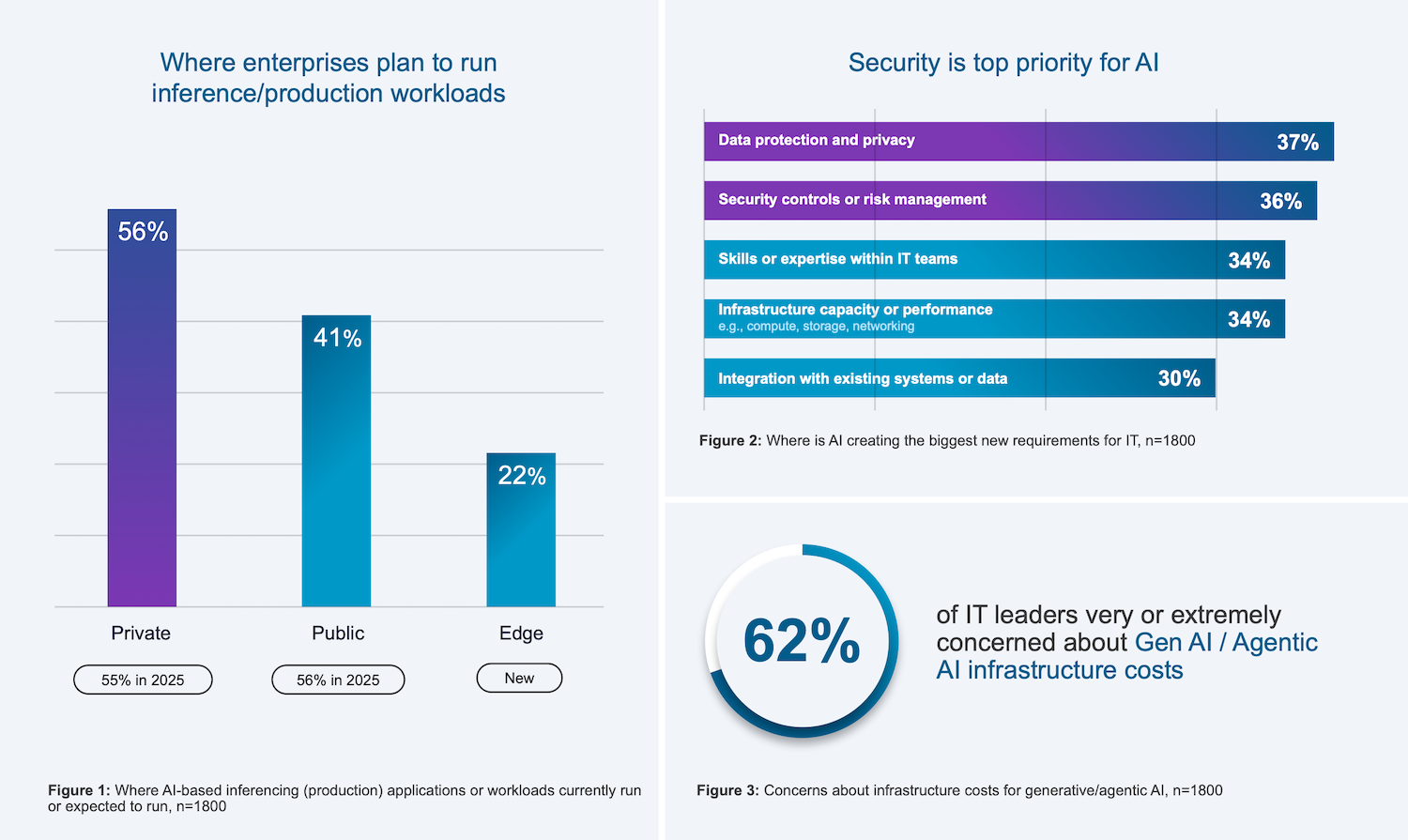

Radius Tech compiled the Private Cloud Outlook 2026 data in partnership with Broadcom. The global survey included 1,800 IT decision-makers in enterprise organizations.

The company also shared early findings from its upcoming Private Cloud Outlook 2026 report, indicating a continued shift toward private cloud for production AI. According to the preview data, 56% of organizations are running or planning to run inference workloads in private environments, compared to 41% in the public cloud, a decline year over year. Cost concerns remain a primary driver, with 62% of IT leaders citing generative AI infrastructure costs as a major issue. Additionally, 36% report new requirements around data protection, privacy, and risk management driven by AI adoption.

Krish Prasad, senior vice president and general manager of the VMware Cloud Foundation Division at Broadcom, highlighted three main challenges enterprises face when adopting AI: data and IP privacy concerns, rising infrastructure costs, and readiness for agentic AI. He said VCF 9.1 addresses these on a single platform by providing advanced infrastructure for Private AI, enabling zero-trust security, optimizing costs through intelligent infrastructure choices, and supporting both agentic workflows and accelerated inferencing.

Cost and Infrastructure Efficiency

VCF 9.1 introduces several infrastructure optimizations to improve resource efficiency for mixed AI and traditional workloads. Broadcom claims up to a 40% reduction in server costs through intelligent memory tiering, allowing higher workload density without additional hardware. Storage efficiency is also addressed through enhanced compression and deduplication techniques in AI data pipelines, reducing TCO by up to 39%.

Kubernetes operations are another focus area. The platform is designed to lower operational overhead by up to 38% while improving scalability and deployment speed. Broadcom also highlights operational improvements, including four-times-faster cluster upgrades and a doubling of fleet capacity, enabling enterprises to scale AI infrastructure more quickly across distributed environments.

Unified Platform for Mixed Workloads

VCF 9.1 continues VMware’s push toward a unified infrastructure model that supports virtual machines, containers, and AI workloads on the same platform. This includes support for both GPU-accelerated inference and CPU-heavy agentic workflows, reflecting the growing need to balance heterogeneous compute requirements.

The platform expands Kubernetes capabilities by offering greater cluster scale, faster deployment times, and shorter upgrade windows than earlier releases. These improvements are intended to support production-grade AI services that require continuous availability and minimal downtime.

Operational tooling has also been enhanced with new observability features that provide metrics such as time-to-first-token, token throughput, and GPU utilization across multiple accelerator types. This enables more granular performance tuning and capacity planning. Additionally, reusable application blueprints allow teams to standardize multi-VM and container-based deployments, reducing configuration drift across environments.
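For readers unfamiliar with the inference metrics named above, the following is a minimal sketch of how time-to-first-token and token throughput are commonly derived from per-token timestamps. It is not VCF's implementation or API: the `InferenceMetrics` class, the `summarize_request` function, and the choice to measure throughput over the decode window (first token to last token) are all illustrative assumptions, and other tools define throughput differently.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class InferenceMetrics:
    """Hypothetical container for two per-request inference metrics."""
    time_to_first_token_s: float
    tokens_per_second: float


def summarize_request(request_start_s: float,
                      token_times_s: Sequence[float]) -> InferenceMetrics:
    """Derive TTFT and decode throughput from token arrival timestamps.

    request_start_s: when the request was submitted (seconds).
    token_times_s:   arrival time of each generated token, in order (seconds).
    """
    if not token_times_s:
        raise ValueError("need at least one token timestamp")

    # Time-to-first-token: delay between submission and the first token.
    ttft = token_times_s[0] - request_start_s

    # Assumed definition: tokens generated after the first one, divided by
    # the time elapsed between the first and last token.
    decode_window = token_times_s[-1] - token_times_s[0]
    if len(token_times_s) > 1 and decode_window > 0:
        tokens_per_second = (len(token_times_s) - 1) / decode_window
    else:
        tokens_per_second = 0.0

    return InferenceMetrics(ttft, tokens_per_second)


if __name__ == "__main__":
    # Made-up timestamps for illustration only.
    metrics = summarize_request(10.0, [10.5, 10.75, 11.0, 11.25, 11.5])
    print(metrics)
```

Tracking the two numbers separately matters for tuning: time-to-first-token mostly reflects queueing and prompt processing, while throughput reflects steady-state generation speed on the accelerator.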

Hardware Flexibility and Ecosystem Integration

Broadcom emphasizes open ecosystem support in VCF 9.1, with multi-accelerator compatibility spanning AMD and NVIDIA GPUs, as well as support for AMD and Intel CPUs. The platform also integrates with standards-based networking technologies such as EVPN and VXLAN, including interoperability with Arista Universal Cloud Network.

This approach allows enterprises to mix and match hardware based on workload requirements and availability, an important consideration given ongoing supply constraints in the GPU market.

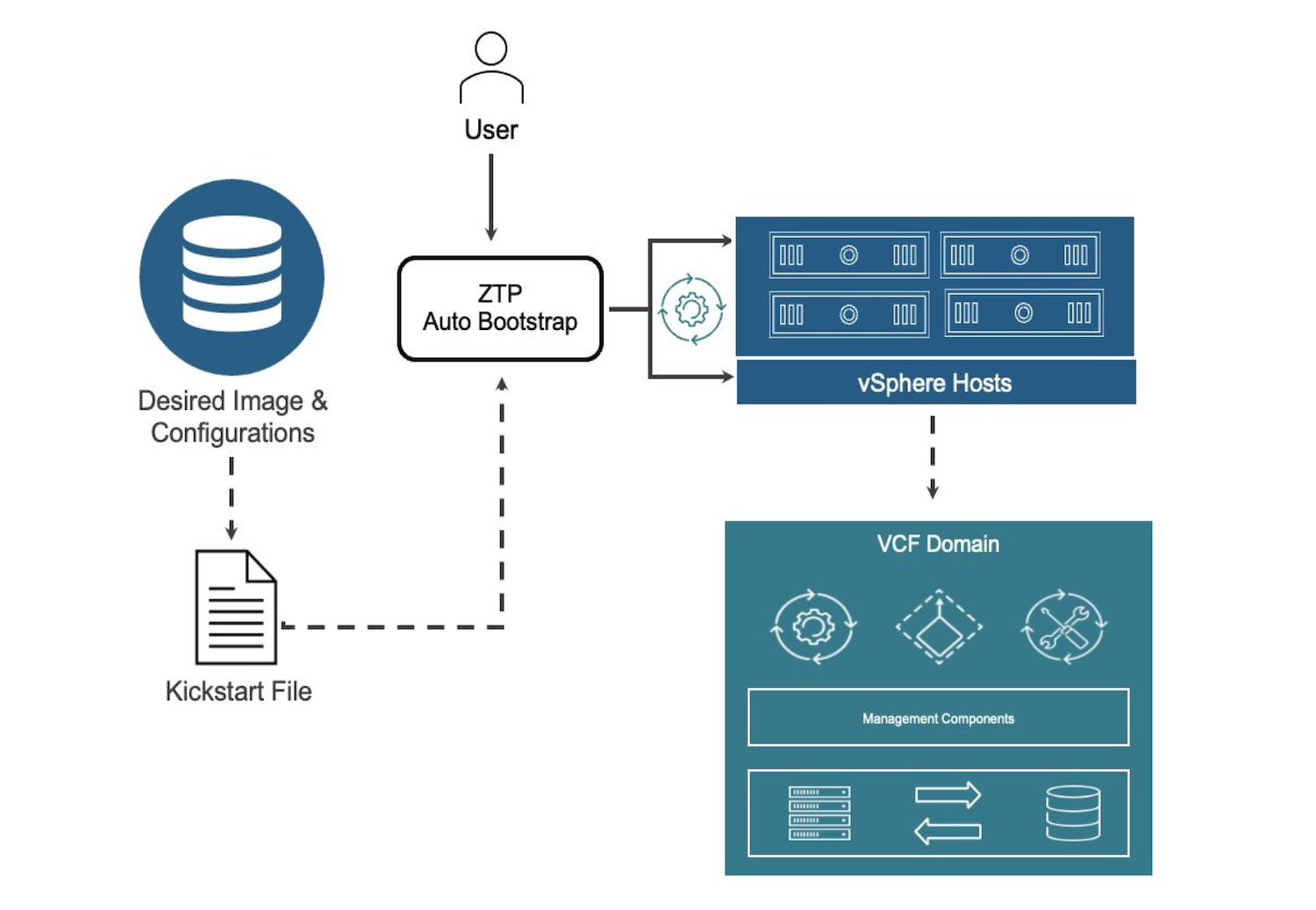

Automation and Scalable Operations

Automation is a central theme of the release. VCF 9.1 extends fleet management capabilities to support up to 5,000 hosts and includes automated lifecycle operations that reduce manual intervention. Cluster upgrades are significantly accelerated, with support for distributed and air-gapped environments.

Multi-tenancy is also enhanced, enabling isolation of AI workloads across teams or customers while maintaining high utilization of shared GPU and CPU resources. This is especially relevant for service providers and large enterprises consolidating AI infrastructure.

The platform further reduces reliance on external appliances by integrating virtualized load balancing and security services via VMware Avi Load Balancer and vDefend. This helps reduce capital expenses while maintaining application resilience and lifecycle automation.

Integrated Security and Zero-Trust Architecture

Security is built into the VCF 9.1 infrastructure, with a focus on protecting AI models, training data, and inference pipelines. The platform implements zero-trust segmentation across workloads, including Kubernetes environments, and introduces distributed intrusion detection and prevention with high-throughput inspection.

New ransomware recovery capabilities provide isolated recovery environments and validation tools, and integrate with CrowdStrike Falcon endpoint security. This approach is designed to protect high-value AI assets while keeping data localized to meet sovereignty requirements.

Continuous compliance features automate monitoring and remediation in line with defined policies, helping organizations maintain audit readiness without additional tooling. Live patching further reduces operational risk by enabling updates without downtime in most use cases, supporting always-on AI services.

Our Take: Strong Platform Evolution, But Not the Default AI Destination

VMware Cloud Foundation 9.1 is a logical step forward for Broadcom, especially as it tries to reposition VMware as a viable platform for enterprise AI. The emphasis on private cloud, integrated security, and unified operations across VMs, containers, and GPUs aligns with what many large organizations are asking for as they move inference workloads closer to their data.

Where the narrative gets more complicated is in how AI infrastructure is actually being deployed today. Modern distributed AI pipelines are being built around Kubernetes-first architectures, with increasingly modular infrastructure designs that prioritize flexibility and direct access to accelerated compute. In those environments, VMware is not typically the control plane, and in many cases becomes an additional layer rather than the foundation.

Cost is another factor that’s difficult to ignore. Broadcom is positioning VCF 9.1 as a way to reduce infrastructure spend through efficiency gains, but that argument runs into ongoing concerns from enterprise customers around VMware licensing and total cost of ownership. This tension is especially visible in smaller organizations, where teams that could benefit from a more integrated platform are often the most sensitive to licensing changes and platform lock-in. Larger enterprises, by contrast, are more likely to absorb those costs and invest in dedicated Kubernetes expertise to build and operate AI infrastructure at scale.

There is still a clear role for VCF 9.1. Enterprises that are already deeply invested in VMware, especially those prioritizing data governance, operational consistency, and private infrastructure, may find this approach compelling for inference and early-stage AI services. But for greenfield AI deployments, or teams moving at the pace of current AI frameworks, the center of gravity continues to sit with Kubernetes-native stacks and infrastructure designed specifically for distributed AI workloads.

The result is less about VMware becoming the destination for enterprise AI, and more about VMware ensuring it remains relevant as AI workloads begin to land inside the enterprise.
