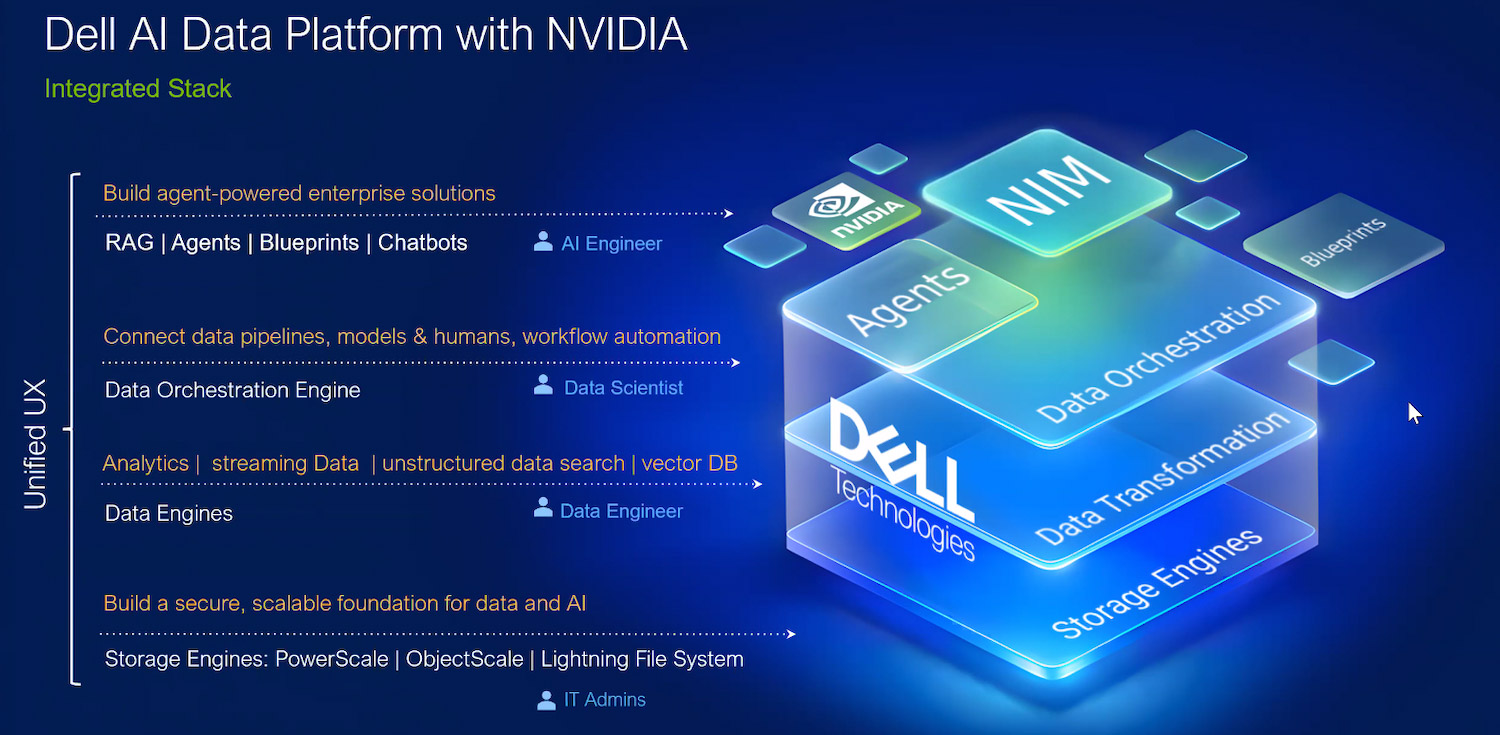

Dell Technologies has introduced the Dell AI Data Platform, a set of data and storage technologies aligned with NVIDIA’s AI ecosystem and intended to help enterprises move from AI pilots to production-scale, agentic systems. The platform targets a familiar constraint in enterprise AI: data that is too slow, siloed, or poorly governed to support high-value AI workloads.

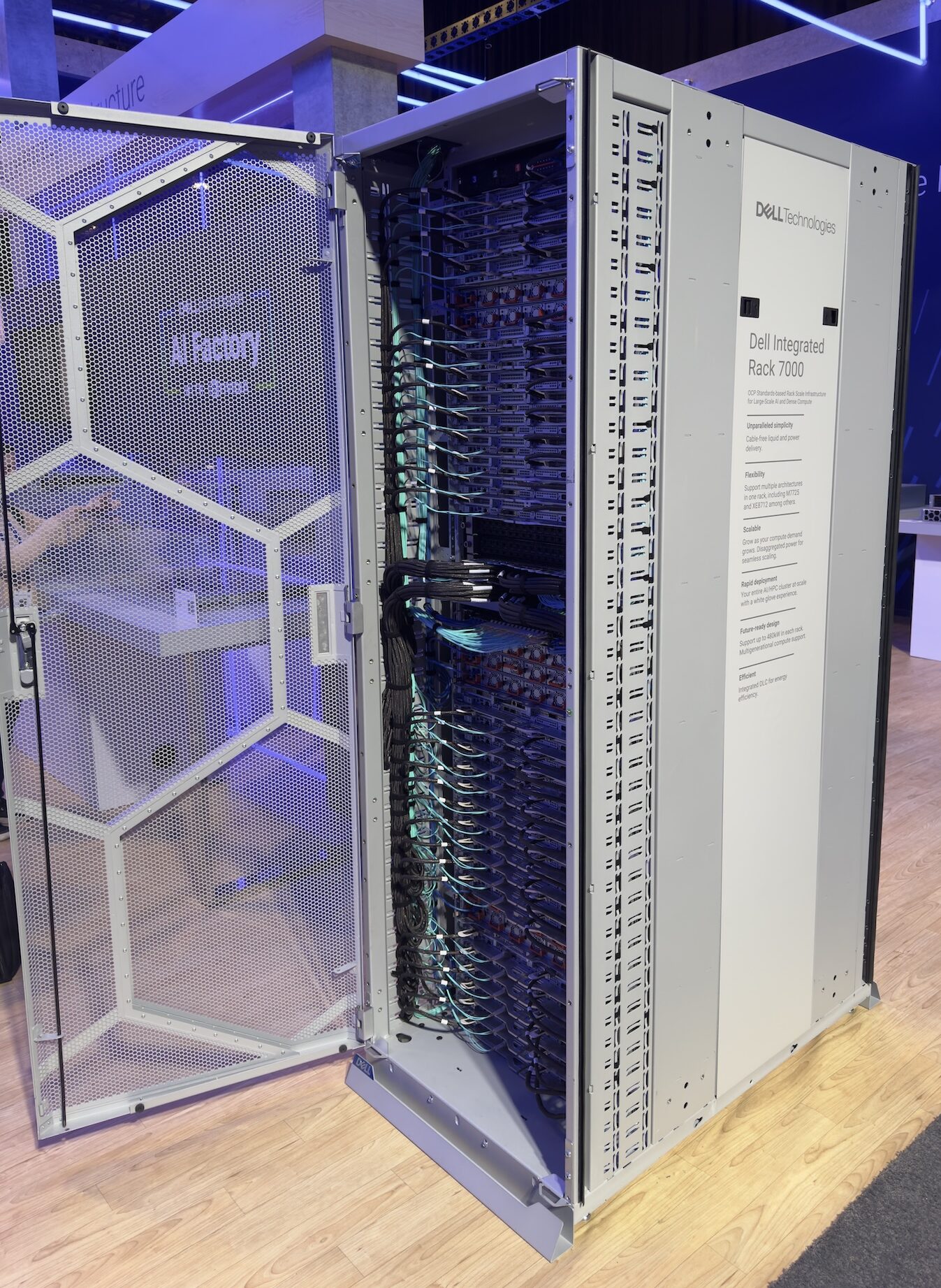

As organizations attempt to operationalize AI agents and long-context workflows, they often discover that the limiting factor is not model choice but the ability to access, structure, and govern data across fragmented systems. Dell positions the AI Data Platform as part of the Dell AI Factory with NVIDIA, providing a combined stack of data engines, GPU-accelerated processing, and high-throughput storage.

Dell cites internal benchmarks showing up to 12x faster vector indexing, 3x faster data processing, and 19x faster time-to-first-token compared with “traditional computing methods,” with acceleration driven by NVIDIA GPUs and CUDA-X libraries integrated into the data layer.

Automating the AI Data Lifecycle with Dell Data Engines

At the core of the Dell AI Data Platform is a set of Dell data engines, accelerated by NVIDIA AI infrastructure, that automate the AI data lifecycle and reduce data prep time while preserving enterprise controls.

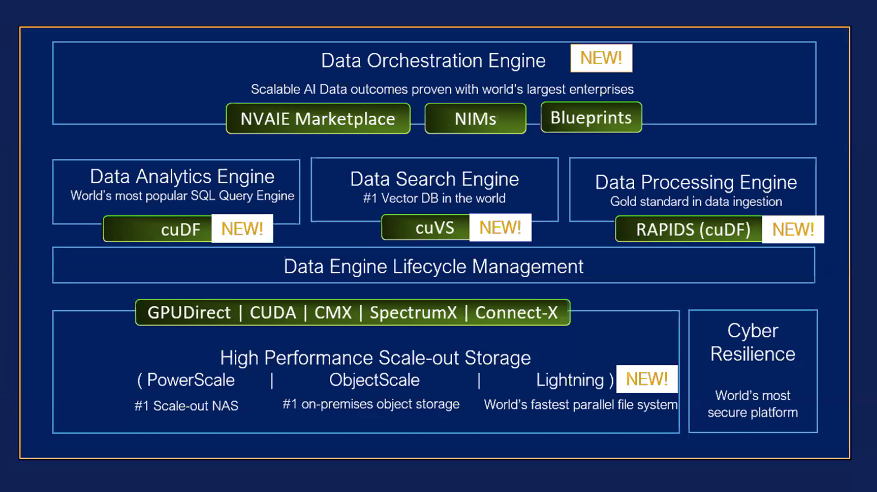

The Dell Data Orchestration Engine, based on technology from Dell’s Dataloop acquisition, is designed to manage data from ingestion through to AI-ready datasets. It automatically discovers structured, unstructured, and multimodal data sources, then labels, enriches, and transforms them into governed datasets suitable for training and inference. The platform combines automated pipelines with active learning and human-in-the-loop review to iteratively improve dataset quality and model accuracy without sacrificing governance.
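The active-learning loop described above can be illustrated with uncertainty sampling, a common way to decide which auto-labeled items go to a human reviewer. This is a minimal sketch and not Dell's or Dataloop's implementation; the function name, item ids, and confidence values are all hypothetical.

```python
def select_for_review(predictions, budget):
    """Return the ids of the `budget` least-confident predictions.

    predictions: dict mapping item id -> model confidence in [0, 1]
    """
    # Sort item ids from least to most confident.
    ranked = sorted(predictions, key=lambda item_id: predictions[item_id])
    return ranked[:budget]


# Hypothetical confidences produced by an auto-labeling model.
confidences = {
    "img_001": 0.98,
    "img_002": 0.51,
    "img_003": 0.87,
    "img_004": 0.62,
}

# The two items the model is least sure about go to human reviewers.
review_queue = select_for_review(confidences, budget=2)
print(review_queue)
```

The corrected labels would then be fed back into training, so each review round is spent on the items most likely to improve the model.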

To speed deployment, the Data Orchestration Engine includes a Marketplace with pre-built data workflows that incorporate NVIDIA NIM microservices, NVIDIA AI blueprints, and more than 200 additional models, applications, and templates. This marketplace-style approach is intended to shorten time-to-value by providing reusable components for common enterprise AI patterns.

Integration with NVIDIA AI Blueprints, NIM, and Nemotron

The Dell AI Data Platform aligns with NVIDIA’s AI-Q blueprint for building AI agents that deliver actionable insights across enterprise data. Dell integrates NVIDIA-accelerated data engines to support efficient data preparation, retrieval, and reasoning across structured and unstructured sources, with a focus on retrieval-augmented generation and agentic workflows.

Enterprises can access a library of NVIDIA content, including:

- Pre-built NVIDIA AI Blueprints for common agent and application patterns

- NVIDIA NIM microservices for model deployment and inference

- The NVIDIA Nemotron 3 Super model via the Dell Enterprise Hub on Hugging Face

This integration allows customers to pair Dell’s data layer and storage stack with NVIDIA’s model, inference, and microservice ecosystem in a supported configuration.

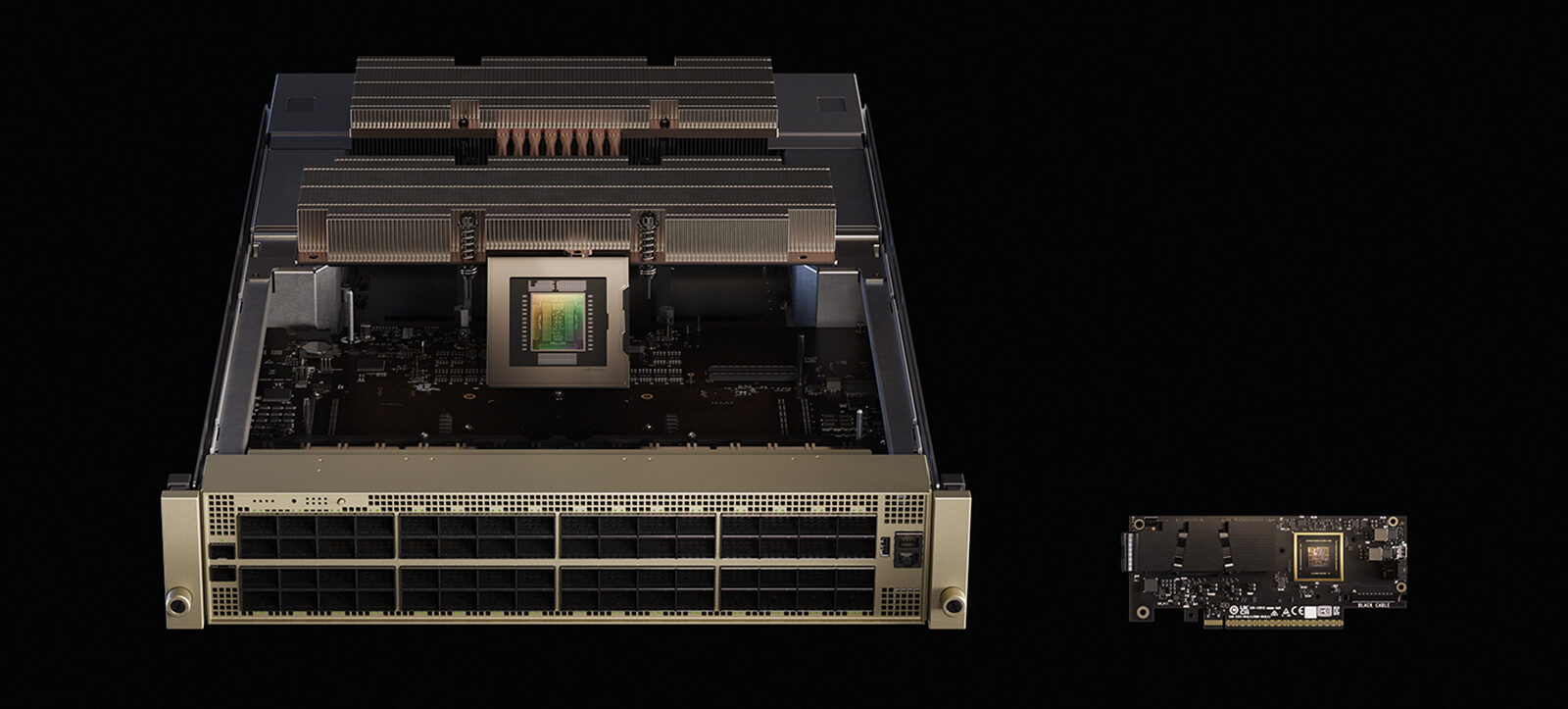

Support for NVIDIA STX, Vera Rubin NVL72, and Spectrum-X

Dell is also aligning its infrastructure portfolio with NVIDIA’s STX modular reference design. The company plans to support NVIDIA STX, powered by the NVIDIA Vera Rubin NVL72, NVIDIA BlueField-4 DPUs, and NVIDIA Spectrum-X Ethernet networking. The intent is to provide a modular, scalable platform for AI data management and processing that tightly couples GPU, DPU, and high-performance networking with Dell’s storage and data software.

This STX-based approach targets environments that need to manage, process, and retrieve large volumes of data for training, fine-tuning, and real-time inference, while maintaining performance isolation and predictable throughput.

AI Assistant for SQL Analytics

Within the Dell Data Analytics Engine, Dell is introducing an AI Assistant that provides a conversational interface to SQL analytics. The assistant allows business and technical users to:

- Query governed, structured data using natural language instead of raw SQL

- Visualize query results for analysis and collaboration

- Work within existing governance boundaries while expanding data access to non-SQL experts

For organizations deploying AI agents that need high-quality structured data, this SQL-focused assistant is intended to reduce reliance on specialized data engineering skills and accelerate decision cycles.
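One way to picture "working within existing governance boundaries" is to run assistant-generated SQL through a database-level allow-list. The sketch below uses Python's standard `sqlite3` authorizer hook as a stand-in; it says nothing about how the Dell Data Analytics Engine actually enforces governance, and the table names and the `run_generated_sql` helper are hypothetical.

```python
import sqlite3

ALLOWED_TABLES = {"sales"}  # hypothetical governed dataset


def authorizer(action, arg1, arg2, db_name, source):
    # Permit SELECT statements and SQL functions such as SUM().
    if action in (sqlite3.SQLITE_SELECT, sqlite3.SQLITE_FUNCTION):
        return sqlite3.SQLITE_OK
    # Permit column reads only from allow-listed tables (arg1 is the table).
    if action == sqlite3.SQLITE_READ and arg1 in ALLOWED_TABLES:
        return sqlite3.SQLITE_OK
    # Everything else (writes, DDL, other tables) is rejected.
    return sqlite3.SQLITE_DENY


conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE sales (region TEXT, amount REAL);
    CREATE TABLE salaries (employee TEXT, pay REAL);
    INSERT INTO sales VALUES ('EMEA', 120.0), ('EMEA', 80.0), ('APAC', 50.0);
    INSERT INTO salaries VALUES ('alice', 1000.0);
""")
conn.set_authorizer(authorizer)  # enforce the policy only after setup


def run_generated_sql(sql):
    """Run SQL produced by a (hypothetical) assistant; return None if denied."""
    try:
        return conn.execute(sql).fetchall()
    except sqlite3.DatabaseError:
        return None


allowed = run_generated_sql(
    "SELECT region, SUM(amount) FROM sales GROUP BY region ORDER BY region"
)
denied = run_generated_sql("SELECT * FROM salaries")
```

Here the aggregate over `sales` returns per-region totals, while the query against `salaries` is rejected by the database itself regardless of what the language model generated.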

NVIDIA RTX PRO Blackwell and CUDA-X in the Data Layer

A key design point of the Dell AI Data Platform is the integration of NVIDIA GPUs directly into the data layer. Dell is incorporating NVIDIA RTX PRO Blackwell Server Edition GPUs to accelerate data processing close to where data resides.

NVIDIA CUDA-X libraries, such as cuDF for columnar operations on structured data and cuVS for vector indexing, run alongside Dell’s data engines to offload and parallelize data workloads. Dell reports performance improvements of up to 3x for SQL queries and up to 12x for vector indexing compared to traditional, CPU-centric approaches.

By embedding GPU acceleration into the data path, Dell aims to keep downstream AI training and inference pipelines fed with prepared, indexed data at a rate that matches GPU consumption, which is a common bottleneck in production-scale AI environments.
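To make concrete what a vector index speeds up, the sketch below performs an exhaustive nearest-neighbor search by cosine similarity in plain Python. It does not use cuVS or any GPU; it only shows the baseline operation whose cost grows with every stored vector, which is what index structures and GPU parallelism are meant to reduce. The embeddings and document ids are hypothetical.

```python
import math


def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query, vectors, k):
    """Score every stored vector against the query and return the k best ids."""
    scored = sorted(
        vectors.items(),
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [doc_id for doc_id, _ in scored[:k]]


# Hypothetical 3-dimensional embeddings; real ones have hundreds of dimensions.
embeddings = {
    "doc_a": [1.0, 0.0, 0.0],
    "doc_b": [0.9, 0.1, 0.0],
    "doc_c": [0.0, 1.0, 0.0],
}

nearest = top_k([1.0, 0.05, 0.0], embeddings, k=2)
print(nearest)
```

Every query here touches every vector, so the work scales linearly with corpus size. Approximate indexes trade a little recall for far fewer comparisons per query.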

Storage: Keeping GPUs Utilized at Scale

As AI workloads move beyond experimentation, storage bandwidth and latency often become the limiting factor, leaving costly GPUs idle. Dell is responding with AI-optimized storage engines that are architected to sustain high performance as capacity and node counts grow.

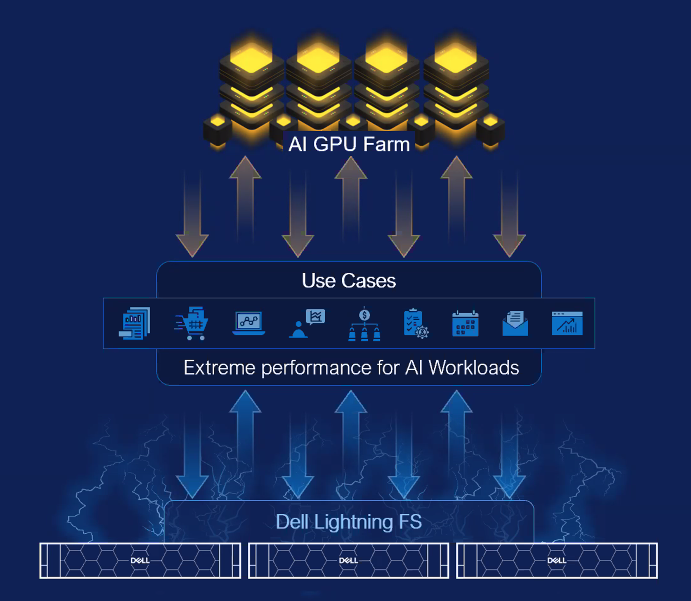

Dell Lightning File System

The Dell Lightning File System is a high-performance parallel file system tuned for AI training and inference workloads. Dell specifies up to 150 GB/s per rack unit, with performance advantages versus traditional flash-only and parallel file systems.

Lightning uses a fabric-based architecture that enables direct, high-bandwidth access from compute nodes to storage, aiming to maintain GPU utilization at scale. The system integrates with NVIDIA-based AI infrastructure and is well-suited to GPU-dense training clusters where sustained throughput is critical.

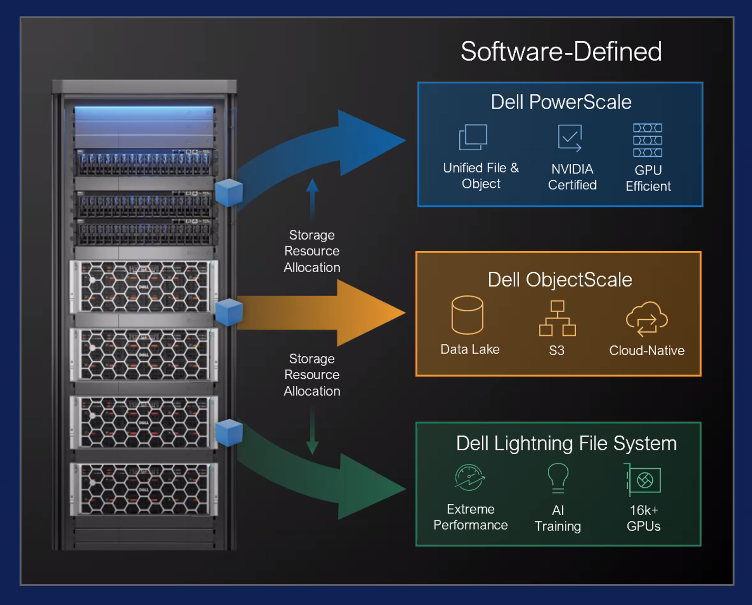

Dell Exascale Storage

Dell Exascale Storage is presented as a 3-in-1 storage system for AI and high-performance computing, supporting concurrent file, object, and parallel file storage on Dell PowerEdge servers. On a single hardware platform, organizations can deploy:

- Dell PowerScale for scale-out file storage

- Dell ObjectScale for S3-compatible object storage

- Dell Lightning File System for a high-throughput parallel file system

This converged approach targets demanding AI and HPC workloads, including high-frequency trading and “neoclouds,” where mixed protocols and extreme throughput requirements coexist. Exascale Storage supports NVIDIA CX-8 and CX-9 SuperNICs and up to 800 GbE network links, and Dell quotes performance of up to 6 TB/s per rack for AI workloads that must ingest very large datasets quickly.
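The quoted figures can be put in perspective with some back-of-the-envelope arithmetic. The sketch below treats 6 TB/s as a sustained peak, uses decimal units, and ignores protocol overhead; the 1 PB dataset size is a hypothetical example, not a number from Dell.

```python
import math

GB = 10**9
TB = 10**12
PB = 10**15

rack_throughput = 6 * TB          # bytes per second, Dell's quoted peak per rack
link_throughput = 800 * GB // 8   # an 800 Gb/s link moves 100 GB/s at line rate


def transfer_seconds(dataset_bytes, throughput_bytes_per_s):
    """Ideal time to stream a dataset at a given sustained throughput."""
    return dataset_bytes / throughput_bytes_per_s


# Hypothetical 1 PB dataset read once at the quoted peak rate.
seconds_at_peak = transfer_seconds(1 * PB, rack_throughput)

# Number of 800 GbE links needed to carry 6 TB/s at line rate.
links_needed = math.ceil(rack_throughput / link_throughput)

print(round(seconds_at_peak, 1), links_needed)
```

Under these idealized assumptions, a petabyte streams in under three minutes, and saturating a rack takes on the order of 60 such links, which is why the network interface generation matters as much as the storage media.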

KV Cache Offload with NVIDIA CMX and Dell Storage

To support long-context and agentic AI workloads, Dell and NVIDIA are enabling KV Cache offload from GPU memory to shared storage. Using NVIDIA CMX memory-storage technologies and inference-acceleration capabilities, KV Cache can be deployed on Dell CMX Storage and high-speed networks across PowerScale, ObjectScale, and Lightning File System.

By relocating the KV Cache from limited GPU memory to high-performance shared storage, organizations can:

- Increase effective context length for large language models

- Maintain continuity for long-running agent interactions

- Improve GPU utilization by freeing VRAM for compute rather than cache

This is particularly relevant for AI agents that need to reference extensive historical data or maintain long, stateful conversations without exhausting GPU memory.
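A rough size estimate shows why the KV cache competes with model weights for GPU memory. For a standard transformer decoder, the cache stores one key and one value vector per layer, per KV head, per token. The model dimensions below are hypothetical and chosen only for illustration; they do not describe any specific NVIDIA or Dell configuration.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, tokens, bytes_per_value=2):
    """Approximate KV cache size for one sequence.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_value=2 corresponds to 16-bit (FP16/BF16) storage.
    """
    return 2 * layers * kv_heads * head_dim * tokens * bytes_per_value


# Hypothetical model: 32 layers, 8 KV heads of dimension 128, 16-bit cache.
per_token = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, tokens=1)
long_context = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, tokens=128_000)

print(per_token)            # bytes of cache per token
print(long_context / 1e9)   # gigabytes for a single 128,000-token sequence
```

With these assumed dimensions, each token costs 128 KiB of cache and a single 128,000-token conversation needs roughly 16.8 GB. Multiply that by concurrent sessions and the case for moving inactive cache to shared storage becomes clear.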

PowerScale pNFS for Large File Performance

Dell PowerScale’s software-driven Parallel Network File System (pNFS) architecture is optimized for large-file throughput in AI environments. Dell reports up to 6x faster performance for large files compared with NFSv3.

pNFS distributes file access across nodes to maintain high bandwidth and reduce hot spots, helping keep data flowing to GPU workloads. The goal is to minimize I/O bottlenecks and reduce idle GPU time, especially in training scenarios with large checkpoint files and datasets.
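The idea of spreading one large file across nodes can be sketched as a simple round-robin stripe map. This is only an illustration of the general concept: the stripe size, node names, and round-robin placement are assumptions, not PowerScale's actual layout policy.

```python
def stripe_layout(file_size, stripe_size, nodes):
    """Assign consecutive stripes of a file to nodes in round-robin order.

    Returns a dict mapping node name -> list of (offset, length) extents.
    """
    layout = {node: [] for node in nodes}
    offset = 0
    index = 0
    while offset < file_size:
        length = min(stripe_size, file_size - offset)  # last stripe may be short
        layout[nodes[index % len(nodes)]].append((offset, length))
        offset += length
        index += 1
    return layout


MiB = 2**20

# Hypothetical 10 MiB checkpoint file striped in 4 MiB units over two nodes.
layout = stripe_layout(10 * MiB, 4 * MiB, ["node-1", "node-2"])
for node, extents in layout.items():
    print(node, extents)
```

Because a client that knows the layout can read from several nodes at once, aggregate bandwidth for a single large file is no longer capped by one server's network port.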

Dell AI Factory with NVIDIA: Scale and ROI

The Dell AI Data Platform and storage enhancements arrive as Dell marks the two-year milestone of the Dell AI Factory with NVIDIA. According to Dell, more than 4,000 customers are deploying the AI Factory stack, with early adopters reporting up to 2.6x ROI in the first year.

The broader Dell AI Factory portfolio spans infrastructure, software, solutions, and services designed to move AI from isolated pilots to production at scale. The AI Data Platform, data engines, and storage stack outlined here are intended to address the data access and performance aspects of that journey, which, in many environments, have proven more challenging than standing up GPU clusters or models.

The Dell AI Data Platform with NVIDIA offers an integrated, data-centric architecture that combines lifecycle automation, GPU-accelerated data processing, and high-throughput storage, all aimed at keeping AI agents and models supplied with data and the GPUs behind them fully utilized.
