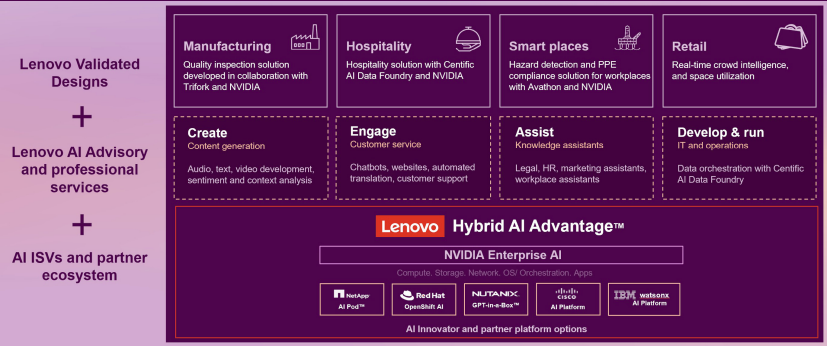

At NVIDIA GTC, Lenovo introduced an expanded phase of its Lenovo Hybrid AI Advantage with NVIDIA, positioning the portfolio as an end-to-end path for production AI inferencing across client devices, enterprise infrastructure, and large-scale AI cloud deployments. The announcement centers on accelerating AI adoption, cutting time-to-first-token (TTFT), and improving per-token economics as organizations move from model training toward real-time, decision-oriented AI.

Lenovo framed the update as a continuation of the inferencing acceleration initiatives it first discussed at Lenovo Tech World, now extending beyond enterprise deployments to include rack-scale and “gigawatt-scale” AI cloud buildouts. The company’s message is that inferencing is becoming both the operational bottleneck and the value driver for agentic AI, and that rising inference volume raises the importance of cost control, security, and predictable performance across edge, on-prem, and cloud environments.

Hybrid AI Demand

Lenovo cited the “CIO Playbook 2026” (commissioned by Lenovo and conducted by IDC), noting that 84% of organizations expect to run AI on-premises or at the edge alongside cloud resources. The implication is that hybrid architectures are becoming the default for production inference, driving demand for validated platforms that can be deployed consistently across sites while meeting enterprise requirements for governance, latency, and data locality.

Lenovo CEO Yuanqing Yang emphasized that the Lenovo-NVIDIA partnership is intended to push AI from pilot projects into enterprise production and cloud-scale deployments. His focus was on agentic AI, increasing inference workloads, and managing costs while optimizing per-token performance. Lenovo said it is combining NVIDIA AI Enterprise software with its hybrid AI platforms to streamline deployment and improve scaling efficiency.

NVIDIA CEO Jensen Huang similarly framed the moment as an “AI production era,” in which real-time intelligence generation and agentic behaviors require scalable, accelerated computing, software, and infrastructure. NVIDIA’s view is that full-stack platforms will be needed to keep pace as agents evolve to reason, plan, and act.

Device-Side AI

On the client side, Lenovo is extending AI development and inference capabilities to mobile and desktop workstations powered by NVIDIA RTX PRO Blackwell GPUs. Its next-generation mobile workstation lineup offers RTX PRO Blackwell laptop GPUs in systems such as the ThinkPad P14s Gen 7, ThinkPad P16s Gen 5, and ThinkPad P1 Gen 9, targeting professionals who need local acceleration for AI workflows and content pipelines.

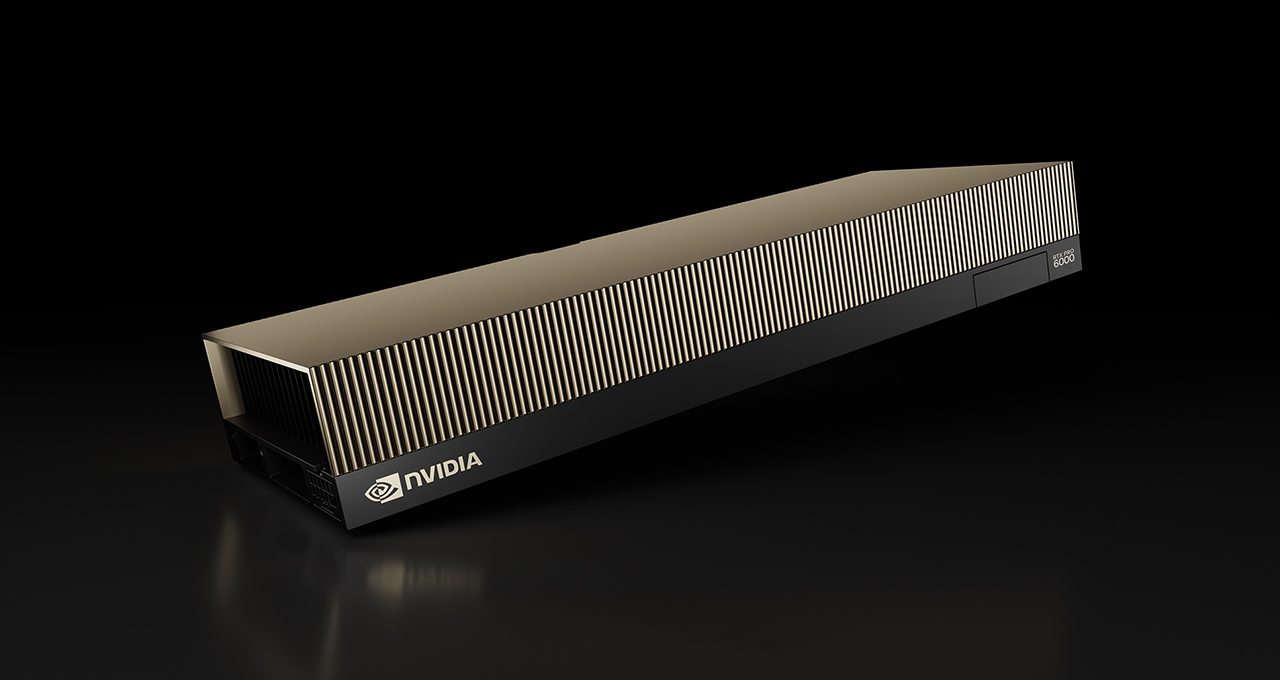

For fixed workstations, Lenovo highlighted the ThinkStation P5 Gen 2, configurable with up to two NVIDIA RTX PRO 6000 Blackwell Max-Q Workstation Edition GPUs and support for NVIDIA OpenShell, described as a safe, private runtime for autonomous AI agents. Lenovo also pointed to a “ThinkStation PGX” AI developer device designed for secure, private, on-prem development and inference, claiming up to 1 petaflop of AI compute and support for models with up to 200B parameters.

Lenovo also packaged the workstation story with deployment and manageability components. The company described “Lenovo AI Developer” as a full-stack development suite with pre-designed blueprints to help teams build and secure workflows, and referenced Lenovo Imaging Services for Devices to simplify PC fleet deployment and day-one readiness.

In parallel, Lenovo previewed a battery proof of concept for laptops and mobile workstations: a silicon-anode design with a cited energy density of 1,000 Wh/L and up to 99.9 Wh of capacity, intended to improve battery life and sustained performance without increasing device footprint.

Enterprise Inference

For data center and edge, Lenovo positioned its Hybrid AI Advantage with NVIDIA as a production inferencing platform, designed to bring AI on-premises with tighter operational control and improved economics compared to cloud-only approaches. Lenovo claimed ROI in under six months and up to 8x lower per-token cost than “comparable cloud IaaS,” aligning the message with TTFT, throughput, and per-token cost as decision criteria.
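To make the decision criteria concrete, the arithmetic behind per-token cost and payback time can be sketched as follows. This is a minimal illustration, not Lenovo's methodology: the function names and every input figure (hourly costs, throughput, capital outlay, monthly spend) are hypothetical placeholders chosen only to show the calculation, and they do not come from the announcement.

```python
def cost_per_million_tokens(hourly_cost_usd: float, tokens_per_second: float) -> float:
    """Cost in USD to generate one million tokens at a sustained throughput."""
    tokens_per_hour = tokens_per_second * 3600
    return hourly_cost_usd / tokens_per_hour * 1_000_000


def payback_months(capex_usd: float,
                   monthly_cloud_cost_usd: float,
                   monthly_onprem_opex_usd: float) -> float:
    """Months until on-prem savings cover the up-front hardware spend."""
    monthly_savings = monthly_cloud_cost_usd - monthly_onprem_opex_usd
    if monthly_savings <= 0:
        return float("inf")  # on-prem never pays back under these inputs
    return capex_usd / monthly_savings


# Hypothetical inputs (assumptions for illustration only).
cloud = cost_per_million_tokens(hourly_cost_usd=32.0, tokens_per_second=2000)
onprem = cost_per_million_tokens(hourly_cost_usd=8.0, tokens_per_second=2000)
ratio = cloud / onprem

months = payback_months(capex_usd=300_000,
                        monthly_cloud_cost_usd=80_000,
                        monthly_onprem_opex_usd=20_000)

print(f"cloud:   ${cloud:.2f} per 1M tokens")
print(f"on-prem: ${onprem:.2f} per 1M tokens")
print(f"cost ratio: {ratio:.1f}x, payback: {months:.1f} months")
```

A multiplier such as "up to 8x" depends heavily on the inputs assumed for utilization, throughput, and the cloud pricing baseline, so a buyer would want to rerun this kind of calculation with measured numbers from their own workloads.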

Lenovo introduced new inference-optimized ThinkSystem and ThinkEdge servers, along with enhanced hybrid AI platforms and partner integrations, designed for real-time inference across retail, manufacturing, healthcare, sports, and smart cities.

The expanded portfolio includes NVIDIA-Certified Systems integrated with NVIDIA AI Enterprise software, with several specific platform tiers:

- Two Lenovo Hybrid AI platforms: one built around NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs for scale-out enterprise AI and multimodal inferencing, and another based on NVIDIA Blackwell Ultra targeting training, fine-tuning, and large-scale inference.

- Hybrid AI inferencing starter platform: built on NVIDIA RTX PRO 4500 Blackwell Server Edition GPUs and positioned for single-node deployments. Lenovo claimed performance gains of up to 3x for vision AI and up to 4x for content generation compared to the NVIDIA L4.

- ThinkAgile HX650a: paired with Nutanix Enterprise AI and Nutanix Kubernetes Platform as a validated foundation for protected inferencing and agentic workloads.

- Data and protection integrations: Lenovo Hybrid AI platforms with Cloudian for scalable, sovereignty-aligned data pipelines, and Veeam Kasten for Kubernetes-native protection of AI models and services.

Lenovo also said the portfolio is backed by an expanded global collaboration with IBM Technology Lifecycle Services, positioning the relationship to accelerate deployment and operations of hybrid AI at scale.

Industry Solutions

Lenovo is expanding its Lenovo AI Library with vertical solutions built on Lenovo Hybrid AI Advantage with NVIDIA, emphasizing production-grade inferencing embedded into workflows rather than stand-alone demos.

In sports, Lenovo described use cases for real-time analytics, operational intelligence, and broadcast optimization, with the underlying theme of low-latency inference for live production and venue operations. In retail, Lenovo highlighted in-store and digital assistants delivered through the Lenovo xIQ Agent Platform with NVIDIA, aiming at personalization and operational efficiency.

For industrial and mobility environments, Lenovo described “physical AI” offerings that combine robotics, edge compute, and multimodal sensing to automate inspection, improve worker safety, optimize fleet operations, and reduce downtime. The company also referenced an “Auto AI Box” intended to extend edge inference into vehicle computing platforms for advanced driver assistance, predictive maintenance, and real-time fleet intelligence.

Lenovo tied these efforts to its Lenovo AI Innovators ecosystem, naming partners AiFi, RocketBoots, and Vaidio as part of a validated-solutions approach for the public sector, smart cities, retail, and other verticals.

AI Cloud

On the cloud infrastructure side, Lenovo positioned itself as a launch partner for NVIDIA’s Vera Rubin NVL72, offering liquid-cooled, rack-scale AI systems for hyperscale and sovereign AI cloud providers. Lenovo’s pitch here is faster deployment and improved token economics, citing up to 10x higher throughput and reduced cost per token.

Lenovo also introduced NVL8 systems and said it is collaborating with Nscale to support large-scale inference and agentic workloads. Lenovo Hybrid AI Factory Services wraps these deployments with lifecycle management, global rollout support, and operational optimization, with the stated goal of reducing risk and accelerating time-to-revenue for AI cloud providers.

Channel and Go-to-Market

Finally, Lenovo emphasized that its partnership with NVIDIA is structured for broad partner delivery through the Lenovo 360 framework. The company said partners can package devices, infrastructure, services, NVIDIA AI Enterprise software, accelerated computing, and networking into a guided engagement model designed to move customers from pilot to production and then scale AI capabilities across hybrid environments.
