At Think 2026, IBM made a broad set of announcements to show how it wants enterprises to operationalize AI across data, infrastructure, governance, and regulated environments. The company’s updates included a new enterprise AI operating model, the general availability of IBM Sovereign Core, and a separate quantum computing milestone with Cleveland Clinic and RIKEN, advancing biomolecular simulation to 12,635 atoms.

Taken together, the announcements show IBM treating AI as an infrastructure and operations problem as much as a model problem. The company’s message was that enterprise adoption now depends less on proving AI can work and more on building the control planes, data pipelines, and compliance frameworks needed to run it at scale.

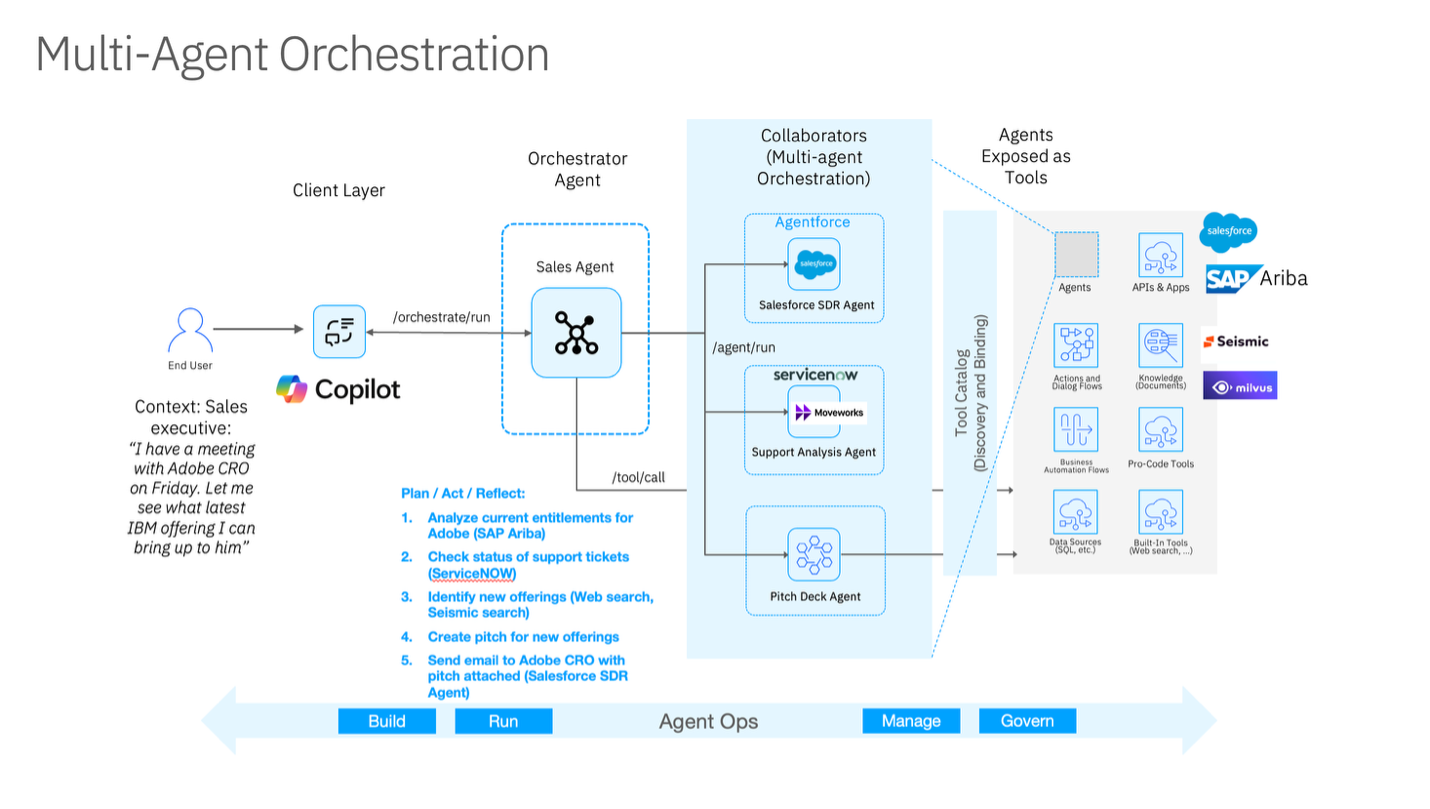

IBM framed this in terms of four layers: agents, data, automation, and hybrid operations. On the agent side, the company introduced the next-generation watsonx Orchestrate in private preview as a multi-agent control plane, enabling enterprises to deploy and govern agents from different sources under a common policy model. IBM also highlighted IBM Bob, now generally available, as an agentic development tool for enterprise developers, along with a Bob Premium Package for Z in private preview for mainframe environments.

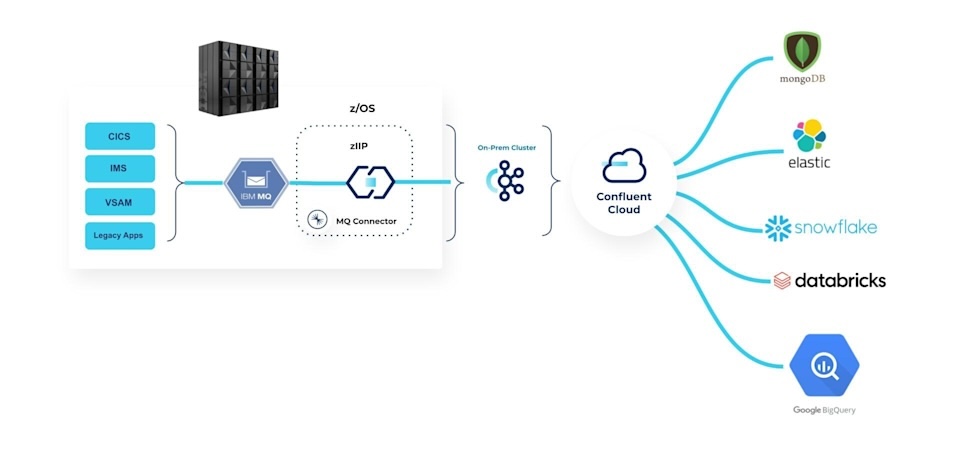

For the data layer, IBM emphasized the need for real-time, AI-ready context over static data silos. It tied that strategy to Confluent, which it described as part of its expanding real-time data foundation, and to new watsonx.data capabilities. Notable additions included a federated context layer in watsonx.data, new OpenRAG and OpenSearch capabilities, and integrations that connect real-time event streaming with batch analytics across hybrid environments. IBM also highlighted GPU-accelerated Presto in watsonx.data, which it positioned, based on internal and proof-of-concept work, as improving price-performance for large enterprise data workloads.

IBM’s automation story focused on reducing the operational friction of running AI across fragmented infrastructure stacks. The new IBM Concert platform, now in public preview, is designed to correlate telemetry and operational signals across applications, infrastructure, and networks, creating a more unified operational view. IBM also used the event to expand its security automation portfolio, including Concert Secure Coder, Vault 2.0, and zSecure Secret Manager, all aimed at tightening the connection among development, remediation, secrets management, and hybrid operations.

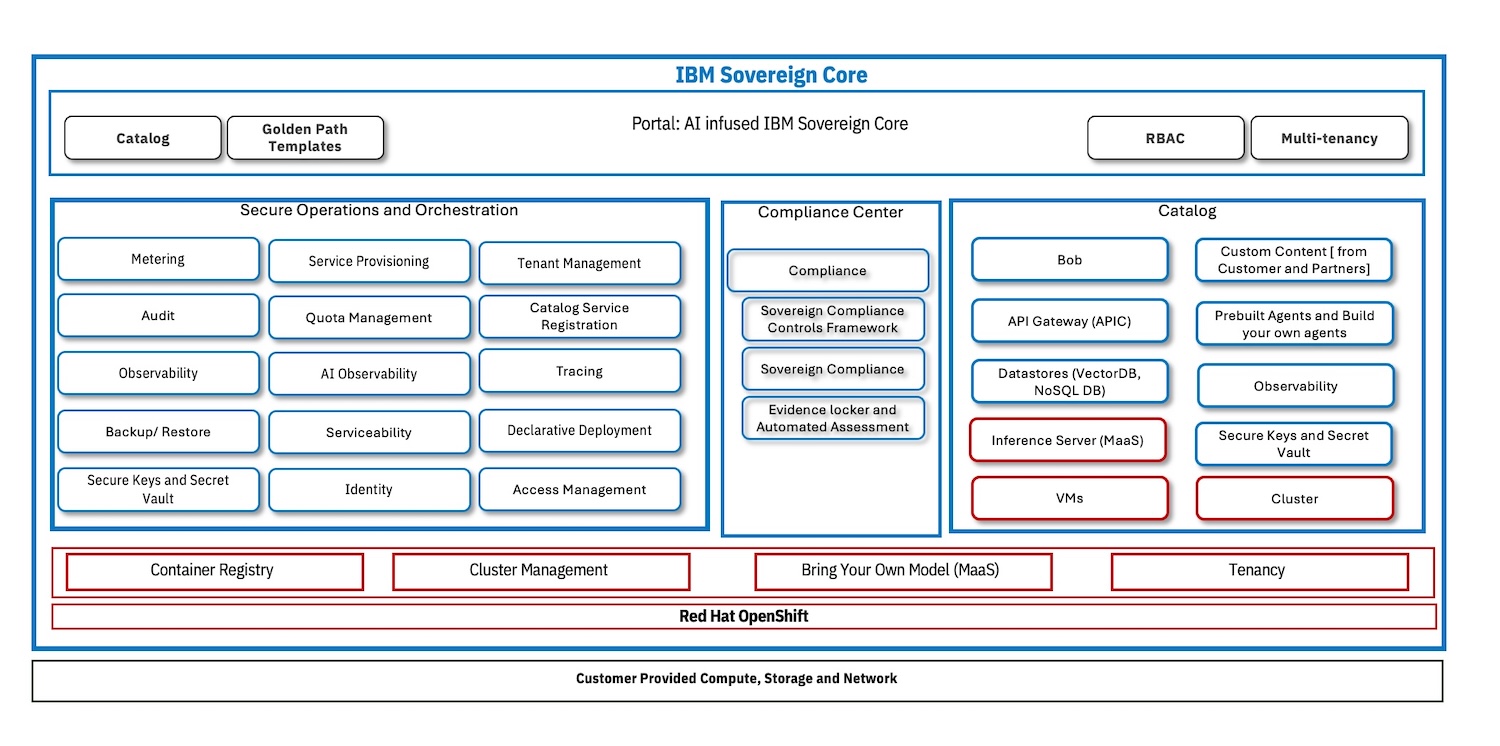

The sovereignty layer was among the more concrete product announcements. IBM Sovereign Core is now generally available as a software platform for building and operating AI-ready sovereign environments across hybrid infrastructure. IBM describes the platform as a way to move sovereignty from policy language to runtime enforcement, with controls spanning operations, data, technology architecture, and AI execution.

In practice, Sovereign Core packages a customer-operated control plane with in-boundary identity, encryption, logging, compliance monitoring, and AI execution. It also includes preloaded regulatory frameworks, automated evidence generation, and templates for CPU, GPU, and AI inference environments. The goal is to enable enterprises, governments, and service providers to demonstrate where workloads run, how they are governed, and whether they remain compliant over time. IBM also emphasized that the platform is built on open technologies such as Red Hat OpenShift and Red Hat AI, and that its ecosystem catalog includes partners such as AMD, Dell, Intel, MongoDB, Cloudera, Elastic, and Palo Alto Networks.
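To make the idea of "runtime enforcement plus automated evidence" concrete, here is a minimal Python sketch. It is not IBM Sovereign Core's API; the `Workload` type, the `evaluate` function, the region names, and the two rules are all hypothetical, chosen only to show how a boundary check can emit a timestamped record that an auditor could review later.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of a running workload."""
    name: str
    region: str
    encrypted_at_rest: bool


def evaluate(
    workload: Workload,
    allowed_regions: FrozenSet[str],
    checked_at: Optional[datetime] = None,
) -> dict:
    """Check a workload against two illustrative rules and return an evidence record."""
    findings: List[str] = []
    if workload.region not in allowed_regions:
        findings.append(f"region {workload.region} is outside the sovereign boundary")
    if not workload.encrypted_at_rest:
        findings.append("data is not encrypted at rest")

    # The record doubles as audit evidence: what was checked, when, and the result.
    return {
        "workload": workload.name,
        "checked_at": (checked_at or datetime.now(timezone.utc)).isoformat(),
        "compliant": not findings,
        "findings": findings,
    }


boundary = frozenset({"eu-de", "eu-fr"})  # assumed region identifiers
print(evaluate(Workload("inference-api", "eu-de", True), boundary))
print(evaluate(Workload("batch-etl", "us-east", False), boundary))
```

Running a check like this on a schedule and storing each record is one simple way to show compliance over time rather than at a single audit point.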

The Sovereign Core launch matters because AI governance increasingly clashes with regional regulations, data localization rules, and enterprise audit requirements. IBM’s position is that sovereignty must now include operational control over models, agents, and inference workflows, not just data residency. This is especially relevant for public-sector, regulated-industry, and service-provider deployments, where the compliance posture must be continuously demonstrated.

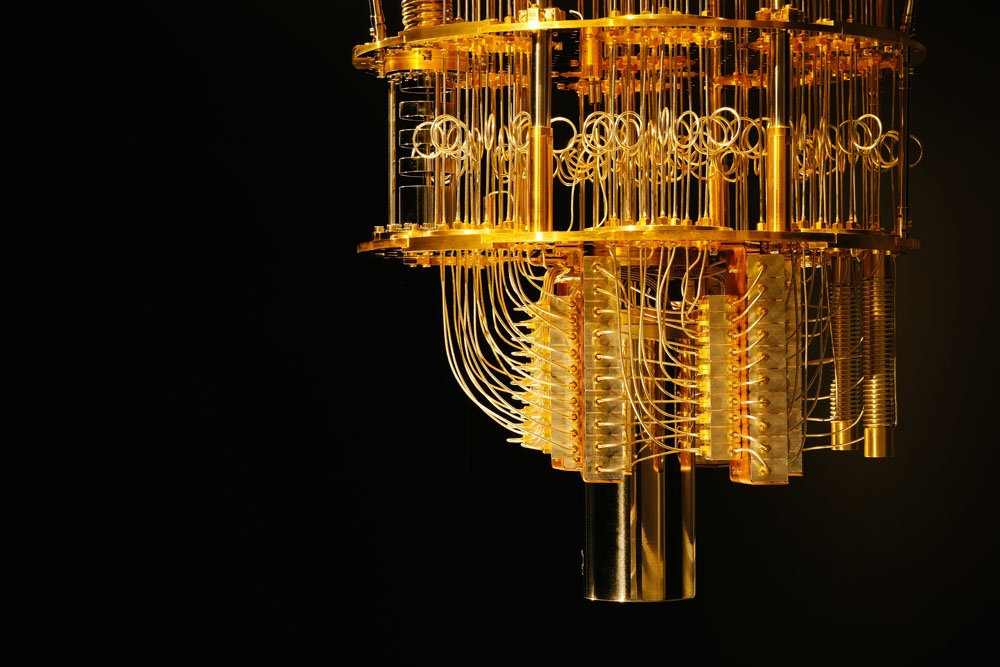

Alongside the Think infrastructure announcements, IBM published a quantum computing update focused on drug discovery and molecular simulation. In collaboration with the Cleveland Clinic and RIKEN, IBM used quantum hardware and two major supercomputers to simulate protein complexes with up to 12,635 atoms. According to the release, these are the largest known simulations of biologically meaningful molecules performed on quantum hardware to date.

The work used IBM’s 156-qubit Heron processors alongside the Fugaku and Miyabi-G supercomputers, with classical systems decomposing protein-ligand complexes into fragments and quantum systems calculating the quantum-mechanical behavior of those fragments. IBM said the workflow required up to 94 qubits and nearly 6,000 quantum operations at certain stages of the calculation. The team also introduced a hybrid algorithm called EWF-TrimSQD, which IBM said reduced computational overhead and broadened the range of molecules that could be modeled. Compared with results from six months earlier, the organizations reported roughly a 40x increase in the size of proteins addressed by the method and up to a 210x improvement in accuracy in a key workflow step.
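The division of labor described above, with classical systems fragmenting the complex and a quantum solver handling each fragment, can be illustrated with a toy Python sketch. This is not EWF-TrimSQD or any IBM code: the fixed-size chunking, the `toy_fragment_energy` stand-in, and the plain summation are simplifying assumptions. Real fragment and embedding methods cut along chemical boundaries and correct for interactions between fragments.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Fragment:
    """A group of atom indices cut from a larger complex."""
    atom_indices: Tuple[int, ...]


def decompose(n_atoms: int, max_fragment_size: int) -> List[Fragment]:
    """Classical step (toy): split atoms 0..n_atoms-1 into fixed-size chunks."""
    if max_fragment_size <= 0:
        raise ValueError("max_fragment_size must be positive")
    return [
        Fragment(tuple(range(start, min(start + max_fragment_size, n_atoms))))
        for start in range(0, n_atoms, max_fragment_size)
    ]


def toy_fragment_energy(fragment: Fragment) -> float:
    """Stand-in for the quantum step: returns a made-up value of -1.0 per atom."""
    return -1.0 * len(fragment.atom_indices)


def total_energy(
    fragments: List[Fragment], solver: Callable[[Fragment], float]
) -> float:
    """Combine per-fragment results; a plain sum ignores inter-fragment coupling."""
    return sum(solver(f) for f in fragments)


# 12,635 atoms is the figure from the article; the fragment size of 50 is arbitrary.
fragments = decompose(n_atoms=12_635, max_fragment_size=50)
print(len(fragments))
print(total_energy(fragments, toy_fragment_energy))
```

Passing the solver as a function reflects the hybrid design: the classical orchestration stays the same whether a fragment is sent to a quantum processor or to a conventional solver.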

IBM and its research partners cast the result as evidence that quantum-centric supercomputing is moving beyond benchmark science into practical scientific computing. The near-term implication is not that quantum systems replace classical HPC, but that they can begin contributing to energy calculations and molecular modeling workflows relevant to drug discovery, enzyme behavior, and protein interactions.

IBM is attempting to connect agent orchestration, real-time data, hybrid operations, and sovereignty controls into a single enterprise narrative. The quantum milestone is more forward-looking, but it reinforces the same theme: IBM wants advanced compute, AI, and governance to be seen as part of a single architectural continuum rather than as separate product silos.

The message from Think 2026 is that enterprises need more than models. They need governed data, observable infrastructure, enforceable sovereignty, and operational tooling that scales AI without compromising compliance or adding complexity beyond what teams can manage.
