HPE introduced the Alletra Storage MP X10000 platform in late November 2024 as the first disaggregated, all-flash, scale-out storage system designed to unify modern workloads under a single architecture. The design centers on a common hardware foundation managed entirely through the GreenLake (formerly HPE GreenLake) cloud platform, delivering high-performance object storage, flexible scaling, and integrated data intelligence services. In August 2025, HPE expanded the platform with the data protection accelerator node (DPAN). This addition created a dedicated path for high-speed backup and rapid restore operations by combining StoreOnce Catalyst with the X10000’s native S3 interface. Together, these components form the data protection solution we are evaluating in this report.

Key Takeaways

- Flash-First NVMe Architecture: X10000 delivers all-flash, scale-out object storage that brings primary-storage-class performance to backup and recovery workflows.

- Up to 300 TB/Hour Ingest Per DPAN: In testing, a single DPAN sustained up to 83.83 GB/s (300+ TB/hour), with throughput scaling predictably as additional nodes are added.

- Bottlenecks Shift Upstream: DPAN removes target-side constraints, exposing limits in backup software orchestration, networking, and client-write performance.

- Catalyst-Driven Data Reduction Pipeline: Source-side deduplication and compression using StoreOnce Catalyst reduce network load and extend effective storage capacity by up to 60:1.

- Built for Modern Workloads: The DPAN and X10000 architecture prepare backup infrastructure for high-concurrency environments, large databases, and AI-scale data growth.

The core job of the data protection accelerator node is straightforward. It performs deduplication, encryption, and data movement from backup software to the X10000 at speeds that traditional backup hardware cannot match. Catalyst plays the key role here. Catalyst is HPE’s data reduction and data movement protocol that integrates with several enterprise backup platforms. It identifies duplicate segments at the source and sends only clean, encrypted data to the DPAN. This reduces network load and allows the X10000 to ingest backup streams at very high speeds. HPE’s public claims include up to 1.2 PB of ingest performance per hour in a 4-node DPAN configuration, up to 22 times faster restores than older systems, and up to 60:1 data reduction. These numbers promise meaningful relief for organizations that face backup window constraints and slow recovery times.

Where this platform becomes interesting is in how dramatically it diverges from the legacy blueprint for data protection infrastructure. Traditional backup hardware has long been anchored in HDD-based systems with controller bottlenecks and rehydration cycles that limit performance. The DPAN and X10000 approach turns that model on its head. Instead of a slow, capacity-driven target, HPE delivers an all-flash, horizontally scaled, analytics-grade storage platform as the foundation for data protection. This elevates backup and recovery performance to the same level of responsiveness as primary storage architectures. In practical terms, this changes how backup windows look and, more importantly, what recovery timelines are possible with a modern backup infrastructure.

The X10000 runs a log-structured, flash-optimized key-value engine that maintains predictable latency even as concurrency increases. It uses NVMe SSDs throughout the cluster and links nodes via a disaggregated shared-everything model, delivering linear performance growth as hardware is added. The data protection accelerator node completes the picture by pairing the Catalyst engine with an encrypted, high-speed data path into the X10000 over 100 GbE. This design avoids the controller bottlenecks, rehydration penalties, and mechanical disk limitations that define traditional backup appliances. The result is a target that behaves more like a high-performance primary storage platform than a simple capacity tier.

There are clear business benefits when performance moves into this range. Shorter backup windows reduce operational risk and enable the protection of larger datasets without increasing the hardware footprint. Faster restore performance reduces downtime and improves resilience under tight RTO requirements. Data reduction lowers effective storage costs and extends the cluster’s useful life before expansion is necessary. Cloud-based management through GreenLake also reduces the operational burden on administrators, who would otherwise manage several independent platforms. Taken together, these strengths position the X10000 with the DPAN as a modern and scalable foundation for organizations that have outgrown traditional backup targets.

For this analysis, we partnered with Commvault, a major ISV supporting Catalyst-based workflows. Commvault already integrates source-side deduplication and high-speed data streaming, which closely aligns with HPE’s recommended configuration. HPE also supports other enterprise backup platforms, so the architectural and performance characteristics described here apply across a broader ecosystem of data protection partners.

Building a High-Speed Engine for Data Protection

With the high-level platform story established, we can examine in more detail how the X10000 and the data protection accelerator node are built and how they work together. The X10000 brings a flash-based, scale-out object layer designed for wide parallelism, while the accelerator node supplies the compute path for dedupe, encryption, and high-speed data movement. Understanding how these components are constructed, how data flows between them, and how performance increases with additional nodes provides the technical foundation for the performance results.

Data Protection Accelerator Node Capabilities in Practice

At scale, the Data Protection Accelerator Node behaves less like a traditional backup appliance and more like a high-throughput data movement engine. A single accelerator node can sustain approximately 300 TB/hour of backup ingest. As additional nodes are introduced, performance scales linearly. A four-node DPAN configuration can deliver up to 1.2 PB per hour, providing a clear and predictable path to higher throughput as backup environments grow.

Scalability in the data protection accelerator node is achieved through a modular design built on the HPE StoreOnce Gen5 platform. Each data protection accelerator node is a self-contained system that incorporates four dual-port 25 GbE NICs (eight 25 GbE ports total), aggregated via LACP into a 200 GbE logical network path, along with eight SSDs in RAID 6, providing 92 TB of usable cache for data management operations.

Backup ingest and processing are distributed across nodes, allowing performance and capacity to scale horizontally as additional accelerator nodes are added. With support for up to 10 active accelerator nodes (plus up to two optional high-availability nodes), the platform increases aggregate ingest bandwidth and effective backup capacity in a linear fashion, avoiding centralized bottlenecks and enabling predictable scaling as backup demands grow.
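The linear-scaling claim can be expressed as simple arithmetic. The sketch below is only an idealized projection built from the figures cited above (roughly 300 TB/hour per node, up to 10 active nodes); the function name and constants are illustrative, and the model deliberately ignores upstream limits such as backup software orchestration or network capacity.

```python
PER_NODE_TB_PER_HOUR = 300  # approximate single-node ingest cited above
MAX_ACTIVE_NODES = 10       # active-node limit cited above (HA nodes excluded)


def projected_ingest_pb_per_hour(active_nodes: int) -> float:
    """Idealized linear projection of aggregate ingest in PB/hour."""
    if not 0 <= active_nodes <= MAX_ACTIVE_NODES:
        raise ValueError(f"active_nodes must be between 0 and {MAX_ACTIVE_NODES}")
    return active_nodes * PER_NODE_TB_PER_HOUR / 1000


for nodes in (1, 4, 10):
    print(f"{nodes:>2} node(s): {projected_ingest_pb_per_hour(nodes):.1f} PB/hour")
```

Under these assumptions, four nodes project to 1.2 PB/hour, matching the four-node figure quoted above.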

| Specification | HPE Alletra Storage MP X10000 DPAN |
|---|---|
| Node Configuration | |
| Form Factor | 2U |
| Minimum Nodes | 0 |
| Maximum Nodes | 10 active (+2 optional HA) |
| Storage & Caching | |
| SSDs | 8 |
| Usable Cache (Data Management) | 92 TB |
| Max Usable Backup Storage per Node | 2 PB |
| Max Effective Backup Storage per Node | 120 PB |
| Networking – 25 GbE Ports | 8 |

Source-Side Deduplication and Data Reduction Pipeline

A defining characteristic of the architecture is its reliance on inline, source-side deduplication using HPE StoreOnce Catalyst. Deduplication is performed before data is transmitted to the accelerator node, using fine-grained 4 KB chunk sizes. This approach minimizes data movement across the network and maximizes space efficiency, particularly in environments with high levels of redundancy across backup sets.

Once duplicate segments are eliminated, the remaining data is compressed before transmission. This further reduces network utilization and ensures that only optimized data enters the accelerator pipeline. The accelerator node does not store backup payloads locally. Instead, it maintains metadata and catalog information required to track deduplicated objects and restore relationships. All backup data is streamed directly and continuously to the X10000 using its native S3 interface.

This design avoids the need for rehydration stages, staging disks, or secondary landing zones. The X10000 receives deduplicated, compressed, and encrypted data in a form that can be written efficiently to its flash-based object store.
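The general idea behind a source-side reduction pipeline can be sketched in a few lines. This is not HPE's Catalyst implementation: it assumes fixed-size 4 KB chunks, SHA-256 fingerprints, and zlib compression purely for illustration, and it omits encryption. The names `reduce_stream` and `restore_stream` are hypothetical.

```python
import hashlib
import zlib

CHUNK_SIZE = 4 * 1024  # 4 KB chunks, as described above (fixed-size here for simplicity)


def reduce_stream(data: bytes, known: set) -> tuple:
    """Split data into chunks and return (recipe, payload).

    recipe  : ordered list of chunk digests needed to rebuild the stream
    payload : {digest: compressed chunk} for chunks the target has not seen
    """
    recipe, payload = [], {}
    for offset in range(0, len(data), CHUNK_SIZE):
        chunk = data[offset:offset + CHUNK_SIZE]
        digest = hashlib.sha256(chunk).hexdigest()  # assumed fingerprint function
        recipe.append(digest)
        if digest not in known:  # duplicate chunks are never re-sent
            payload[digest] = zlib.compress(chunk)
            known.add(digest)
    return recipe, payload


def restore_stream(recipe: list, store: dict) -> bytes:
    """Rebuild the original stream from its recipe and the stored chunks."""
    return b"".join(zlib.decompress(store[d]) for d in recipe)


backup = b"A" * CHUNK_SIZE * 3 + b"B" * CHUNK_SIZE
known, store = set(), {}
recipe, payload = reduce_stream(backup, known)
store.update(payload)
print(len(recipe), "chunks referenced,", len(payload), "chunks sent")
sent_again = reduce_stream(backup, known)[1]
print(len(sent_again), "chunks sent on the second identical backup")
```

In this toy run, four chunks are referenced but only two unique chunks cross the network, and a repeat backup of the same data sends none. The recipe plays the role of the metadata the accelerator node keeps to track deduplicated objects.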

Secure, Native S3 Storage Target

Using native S3 as the storage target is central to the system’s flexibility. Because the accelerator node writes directly to the X10000 over S3, the platform integrates cleanly with modern backup software without proprietary storage protocols or translation layers. Data arrives encrypted and optimized, allowing the X10000 to focus on durable, parallel object placement across NVMe flash.

Real-world Catalyst data reduction ratios of up to 60:1 significantly extend storage capacity and reduce the frequency of expansion events. This level of reduction directly impacts the total cost of ownership, particularly for long-retention backup datasets that would otherwise require substantial raw capacity. For Commvault’s direct S3 backup to the X10000, the ratio is typically 6:1 to 7:1.
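To see what these ratios mean for capacity, the short calculation below applies them to the 2 PB of usable backup storage per node listed in the specification table. It is a back-of-the-envelope sketch; actual ratios depend on data type, change rate, and retention.

```python
USABLE_PB_PER_NODE = 2  # max usable backup storage per node, from the spec table


def effective_capacity_pb(usable_pb: float, reduction_ratio: float) -> float:
    """Logical capacity that fits in usable_pb at a given reduction ratio."""
    return usable_pb * reduction_ratio


scenarios = (
    ("Catalyst, up to 60:1", 60),
    ("Direct S3, 6:1", 6),
    ("Direct S3, 7:1", 7),
)
for label, ratio in scenarios:
    print(f"{label}: {effective_capacity_pb(USABLE_PB_PER_NODE, ratio)} PB effective")
```

At 60:1, the 2 PB usable figure works out to the 120 PB effective capacity shown in the specification table.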

Supporting Best-Practice Data Protection Models

Beyond raw performance, the architecture aligns well with established data protection best practices. The system supports a 3-2-1-1-0 copy model in which primary data resides on production storage, backup data is stored on the X10000, and an additional copy is replicated to public cloud storage using HPE Cloud Bank Storage. HPE’s 3-2-1-1-0 support is achieved by enabling fast backup to flash, a secure off-site copy, and an immutable copy for ransomware recovery. This ensures resilience against both local failures and site-wide outages.

The design naturally supports the “two different media types” principle by separating primary storage from the flash-based object storage used for backups, with the public cloud serving as an additional medium. Storing one copy off-site in the cloud provides geographic isolation and strengthens disaster recovery and ransomware protection strategies without introducing operational complexity.
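The 3-2-1-1-0 rule is commonly read as three copies, two media types, one off-site copy, one immutable (or offline) copy, and zero verification errors. A minimal checker under that reading is sketched below. The `Copy` class and the example layout are hypothetical; in particular, which copy is marked immutable here is an assumption for illustration, not something the source specifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Copy:
    name: str
    media: str
    offsite: bool = False
    immutable: bool = False
    verify_errors: int = 0


def meets_3_2_1_1_0(copies: list) -> bool:
    """Check a set of data copies against the 3-2-1-1-0 rule."""
    return (
        len(copies) >= 3                             # 3 copies of the data
        and len({c.media for c in copies}) >= 2      # 2 different media types
        and any(c.offsite for c in copies)           # 1 copy off-site
        and any(c.immutable for c in copies)         # 1 immutable copy
        and all(c.verify_errors == 0 for c in copies)  # 0 verification errors
    )


copies = [
    Copy("production", media="primary storage"),
    Copy("X10000 backup", media="flash object storage"),
    # Marking the cloud copy immutable is an illustrative assumption.
    Copy("cloud copy", media="public cloud", offsite=True, immutable=True),
]
print(meets_3_2_1_1_0(copies))
```

Dropping the cloud copy from the list makes the check fail on the copy count, the off-site requirement, and the immutability requirement at once.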

Backup Software Ecosystem Support

The Data Protection Accelerator Node integrates with multiple enterprise backup platforms that support Catalyst-based workflows. Commvault and Cohesity NetBackup are fully supported, and support for Veeam expands the platform’s applicability across a wide range of enterprise environments. Because deduplication and data movement are handled by the accelerator node rather than by the backup application alone, these integrations deliver consistent performance regardless of the software layer in use.

Scaling Backup Performance by Design

Taken together, these elements explain why adding data protection accelerator nodes directly boosts backup performance. Each node contributes additional compute capacity, network bandwidth, and deduplication throughput to a specific X10000 cluster. There is no shared global accelerator pool and no hidden contention between clusters. Scaling is explicit, local, and predictable.

Putting the DPAN to Work (3-Server Backup and Restore Validation)

The data protection accelerator node fundamentally changes the performance profile of backup and restore workflows. Rather than being constrained by the target storage subsystem, organizations implementing the accelerator node often discover that throughput is now limited by upstream components: the backup application’s orchestration overhead, source server capacity, or network infrastructure. This is similar to how the transition to NVMe flash in primary storage exposed bottlenecks in legacy network fabrics.

This validation effort focused on characterizing real-world DPAN behavior under enterprise workloads, with particular emphasis on understanding where performance boundaries shift as the backup target is no longer the constraint.

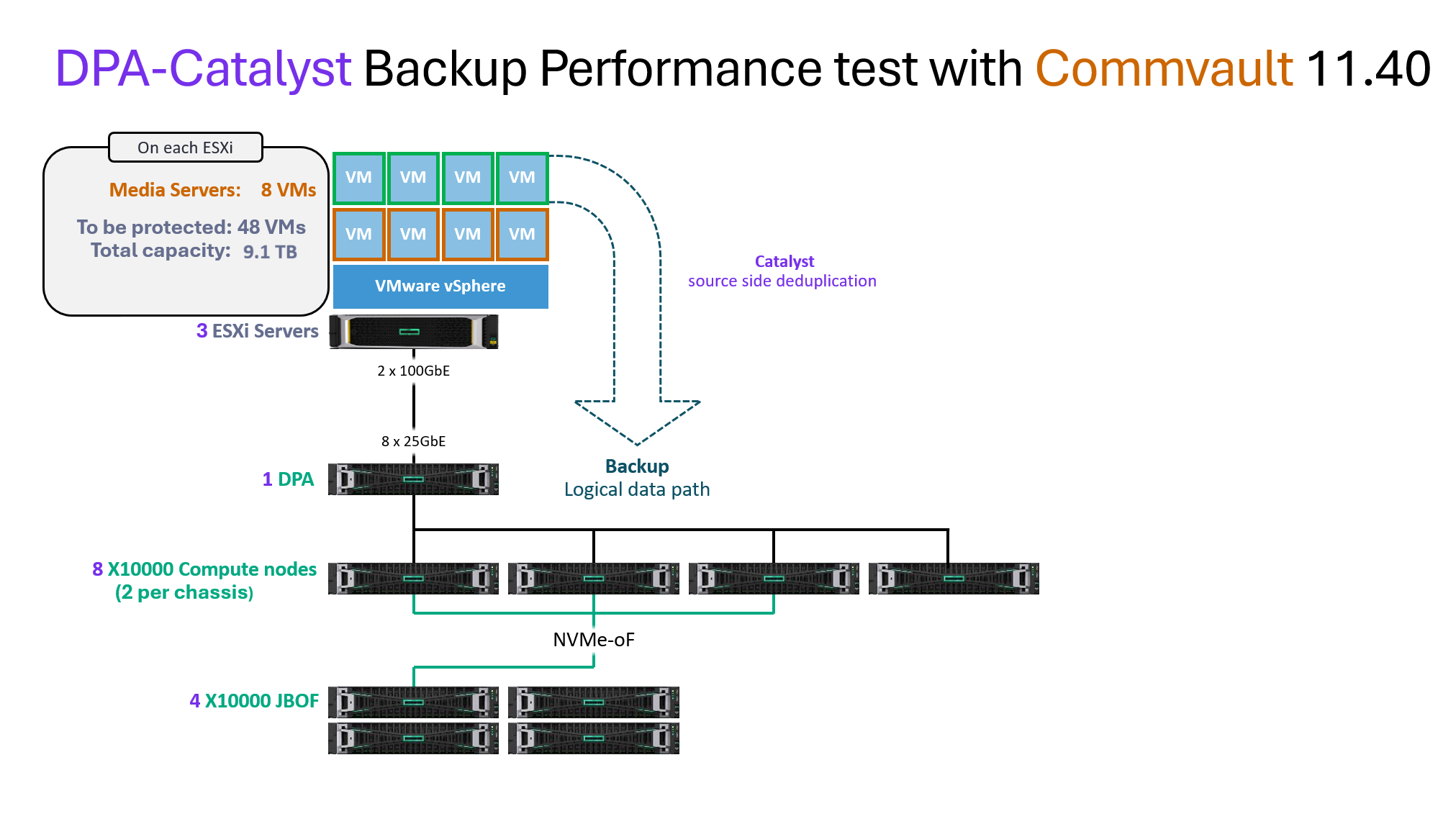

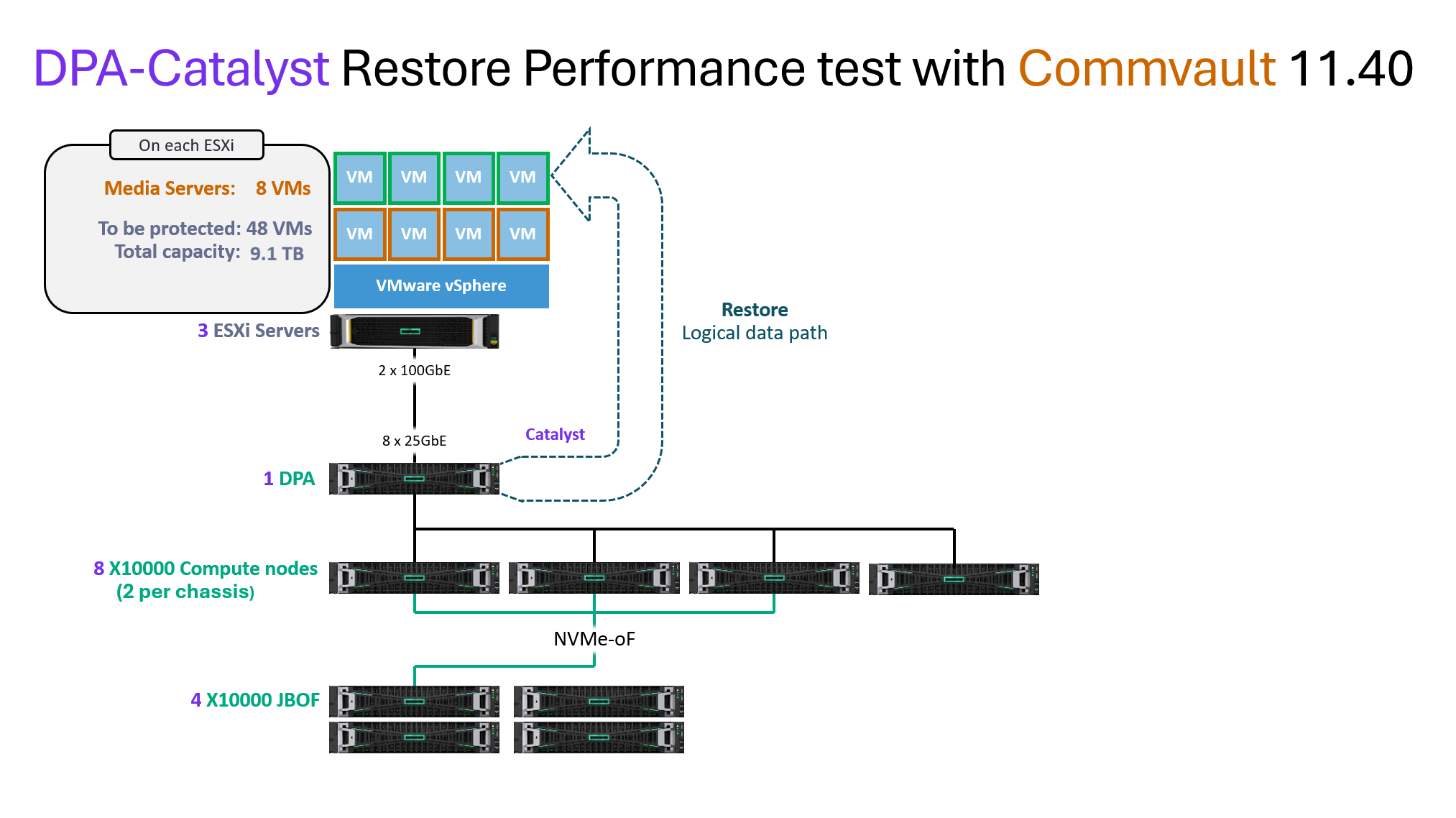

Testing Configuration

For the three-server configuration, we used a focused test design to isolate and validate the performance characteristics of a single DPAN under parallel load. In our backup and restore test, we ran eight Commvault Media Gateway VMs on each host. The additional gateways slightly reduced backup throughput because of the ESXi memory ceiling, but they noticeably improved restore performance.

| Component | Configuration |
|---|---|
| Backup Hosts | 3 (HPE DL380 Gen11, ESXi hosts with NVMe storage, each with 48 VMs and 8 Commvault Media Gateways) |
| Commvault Host | 1 (HPE DL380 Gen11) Dual Xeon Gold 6430 (64 cores total), 512GB RAM, 8TB storage (Commvault 11.40.26) |
| Data Protection Accelerator | 1 (HPE 7720 DPA) Dual Xeon Gold 6538Y+ (64 cores total), 1.5TB RAM, 92TB NVMe, bonded 200GbE |
| VMs | 144 VMs total across the 3 backup hosts (190GB each, 27.36 TB total dataset) |
| Storage | 4 server 8-node HPE Alletra Storage MP X10000 + (4x JBOF configuration), each with 2x disk controllers per JBOF |
| Networking Fabric | 200 GbE network fabric (2x NVIDIA SN4600c Mellanox, bonded 8x 25GbE per DPA) + 2x Backend Switches (NVMeoF) (Aruba CX 8325) |

This single-DPAN configuration enabled clear characterization of throughput and system behavior, demonstrating the contribution of each DPAN to the overall architecture.

Backup Performance

With three backup servers feeding a single DPAN, the system demonstrated how the accelerator node eliminates the target as a performance bottleneck.

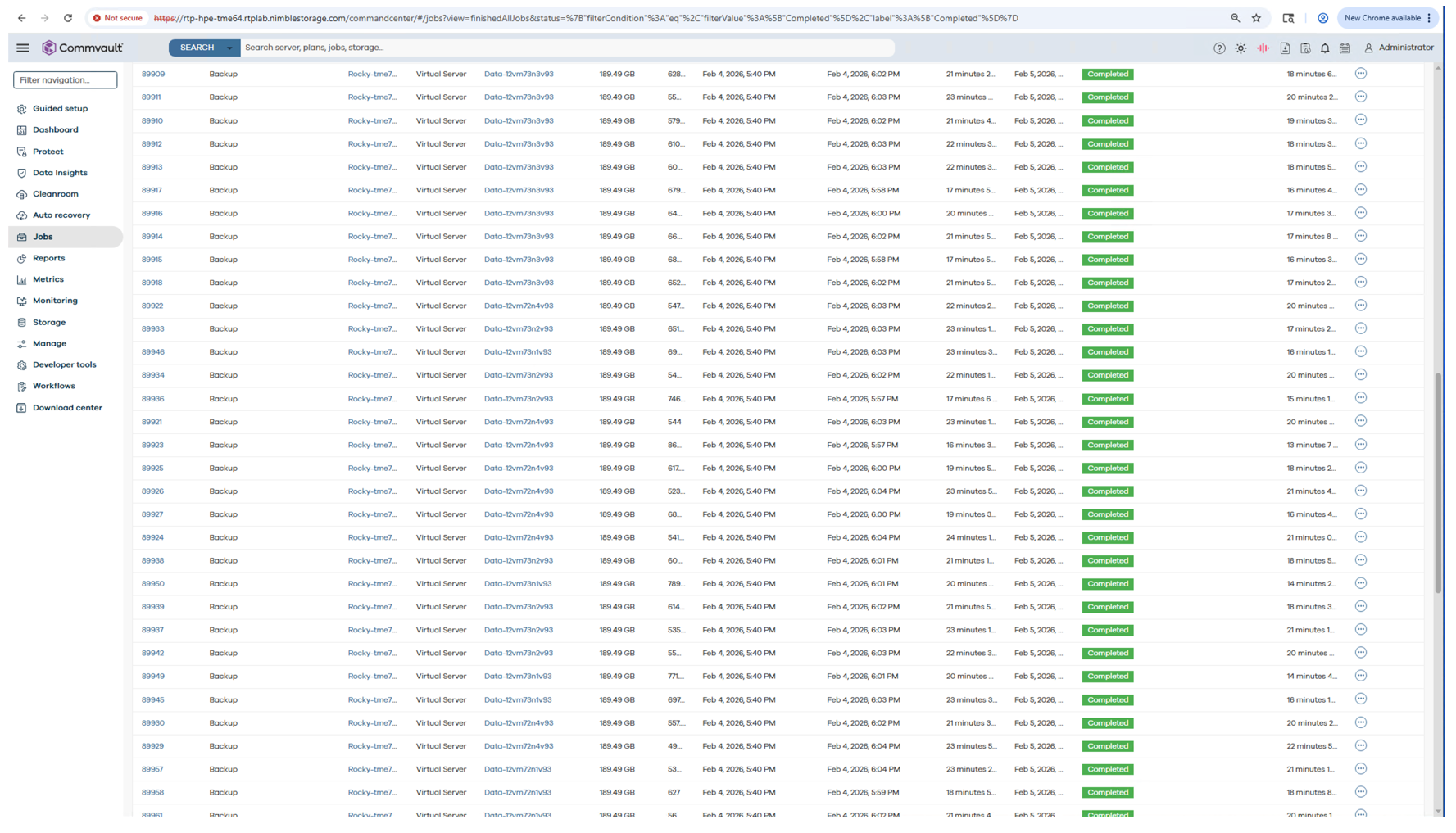

In this test, the environment sustained 17.54 GB/s of aggregate backup throughput while protecting 144 virtual machines concurrently. The full 27.36 TB dataset was completed in just 26 minutes, yielding an effective backup rate of approximately 63.1 TB per hour, or 0.063 PB/hr. At this point, throughput was no longer constrained by the HPE Alletra MP X10000 object storage layer but by the backup servers’ and network fabric’s ability to generate and sustain parallel streams.

Commvault Interface showing backup jobs for 144 VMs.

When extrapolated linearly to a larger configuration with 30 backup servers connected to a single DPAN, aggregate throughput reaches a projected 175.38 GB/s, corresponding to a theoretical maximum of roughly 0.63 PB per hour.

| Metric | Result |
|---|---|
| Sustained Throughput | 17.54 GB/s |
| Effective Throughput | 63.1 TB/hour |
| VMs Backed Up Concurrently | 144 |
| Total Dataset | 27.36 TB |
| Total Backup Window | 26 minutes |
| Extrapolated Capacity (30 servers, 1 DPA)* | |
| Aggregate Throughput | 175.38 GB/s |
| Theoretical Maximum | 0.63 PB/hour |

*Extrapolated figures are based on a 3 x 10 ESXi server, 1 DPAN configuration scaling estimate.
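The backup figures can be cross-checked with a few unit conversions. The sketch below uses decimal units (1 TB = 1,000 GB) and treats the 30-server case as a straight tenfold multiple of the three-server result, which is the scaling estimate described in the footnote; the helper names are illustrative.

```python
def tb_per_hour(gb_per_s: float) -> float:
    """Convert a sustained GB/s rate to TB/hour (decimal units)."""
    return gb_per_s * 3600 / 1000


def window_minutes(dataset_tb: float, gb_per_s: float) -> float:
    """Minutes needed to move dataset_tb at a sustained GB/s rate."""
    return dataset_tb * 1000 / gb_per_s / 60


dataset_tb = 144 * 190 / 1000          # 144 VMs at 190 GB each
measured_gbps = 17.54                  # three-server sustained throughput
extrapolated_gbps = 175.38             # 30-server projection from the table

print(f"Dataset: {dataset_tb:.2f} TB")
print(f"Effective rate: {tb_per_hour(measured_gbps):.1f} TB/hour")
print(f"Backup window: {window_minutes(dataset_tb, measured_gbps):.0f} minutes")
print(f"30-server projection: {tb_per_hour(extrapolated_gbps) / 1000:.2f} PB/hour")
```

The results line up with the table: a 27.36 TB dataset, about 63.1 TB/hour, a 26-minute window, and roughly 0.63 PB/hour for the projection.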

Restore Performance

Restore operations similarly demonstrated that the DPAN eliminates target-side constraints, with performance now bounded by client infrastructure.

During testing, the environment sustained 4.90 GB/s of aggregate restore throughput while restoring 144 virtual machines concurrently. A total of 27.36 TB was recovered in just 93 minutes, translating to an effective restore rate of approximately 17.64 TB per hour. This demonstrates the DPAN’s ability to parallelize read operations at scale while maintaining consistent throughput across a heavily concurrent workload.

It is also important to note that Commvault required approximately one hour of pre-restore preparation time before data movement began. This orchestration overhead materially extends total restore duration. It sits outside the 93-minute data-movement window reported here, which 27.36 TB at 4.90 GB/s accounts for on its own.
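To gauge how much the preparation phase matters, the sketch below compares the data-movement rate with an end-to-end rate that includes the extra hour. It assumes the 93-minute window covers data movement only (which is what 27.36 TB at 4.90 GB/s implies) and treats "approximately one hour" as exactly 60 minutes.

```python
DATASET_TB = 27.36
TRANSFER_MINUTES = 93   # reported restore window
PREP_MINUTES = 60       # assumption: "approximately one hour" taken as 60 minutes


def rate_tb_per_hour(dataset_tb: float, minutes: float) -> float:
    """Average TB/hour over a period of the given length."""
    return dataset_tb / (minutes / 60)


transfer_rate = rate_tb_per_hour(DATASET_TB, TRANSFER_MINUTES)
end_to_end_rate = rate_tb_per_hour(DATASET_TB, TRANSFER_MINUTES + PREP_MINUTES)

print(f"Data movement only: {transfer_rate:.2f} TB/hour")
print(f"Including preparation: {end_to_end_rate:.2f} TB/hour")
```

Under these assumptions, the effective end-to-end rate drops from about 17.65 TB/hour to about 10.73 TB/hour, which illustrates why orchestration time belongs in any RTO estimate.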

In a larger deployment of 30 servers protected by 1 DPAN, the projected aggregate throughput is approximately 49.03 GB/s, equating to roughly 0.18 PB/hr of sustained read capability per accelerator node.

| Metric | Result |
|---|---|
| Sustained Throughput | 4.90 GB/s |
| Effective Throughput | 17.64 TB/hour |
| VMs Restored Concurrently | 144 |
| Total Dataset | 27.36 TB |
| Total Restore Window | 93 minutes |
| Extrapolated Capacity (30 servers, 1 DPA)* | |
| Aggregate Throughput | 49.03 GB/s |
| Per DPAN Sustained Read Capability | 0.18 PB/hour |

*Extrapolated figures are based on a 3 x 10 ESXi server, 1 DPAN configuration scaling estimate.

The restore results show that once the DPAN is deployed, restore speed becomes heavily constrained by client-side factors: network capacity, storage write speed, and the restore targets’ ability to absorb data. The DPAN can deliver significantly more throughput than most client environments currently consume.

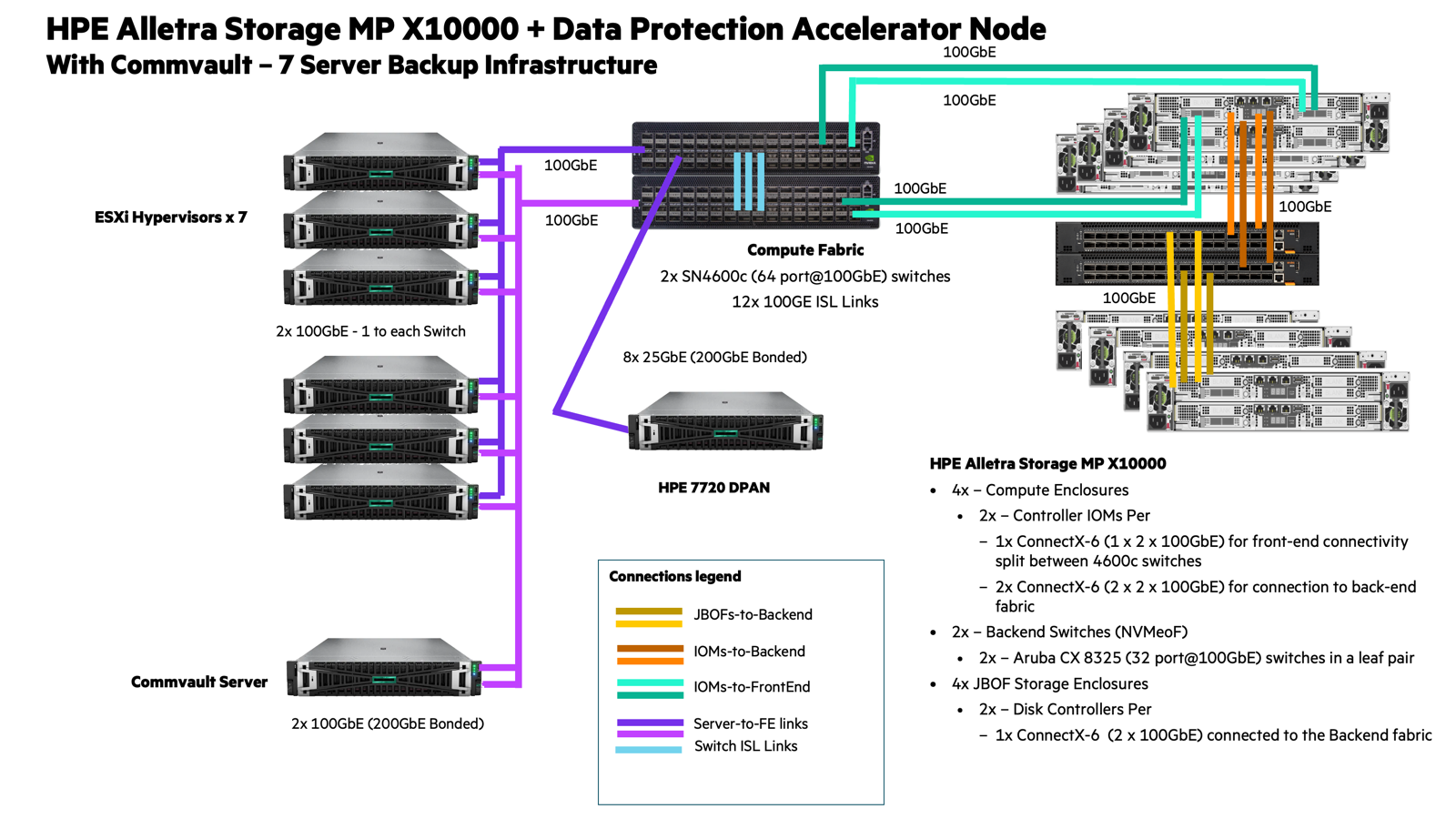

Pushing Single-DPAN Limits: The “Hero Configuration”

To identify the upper performance boundary of a single DPA node, HPE scaled beyond the three-server baseline configuration to what they termed the “hero configuration”: seven backup servers protecting 336 VMs totaling approximately 65 TB.

Seven-Server Hero Configuration Results:

Across seven backup servers, the system delivered 83.83 GB/s of aggregate throughput while protecting 336 virtual machines concurrently. The total 65.38 TB dataset was completed in just 13 minutes, translating to an effective backup rate of approximately 301.7 TB/hr, or 0.301 PB/hr. At this level of concurrency, the environment operated as a highly parallelized ingest pipeline, with performance driven primarily by available client compute, media processing capacity, and fabric bandwidth rather than by target-side constraints.

| Metric | Result |
|---|---|
| Sustained Throughput | 83.83 GB/s |
| Effective Throughput | 0.301 PB/hour |
| VMs Backed Up Concurrently | 336 |
| Total Dataset | 65.38 TB |
| Total Backup Window | 13 minutes |

This configuration showed that, even with more than twice as many backup servers, the single DPAN had not reached its performance ceiling. HPE was unable to find the maximum throughput limit of a single DPA because the backup application reached its orchestration capacity first. The constraint was no longer the target storage system or even the DPA itself, but rather the backup application’s ability to manage job scheduling, data movement coordination, and metadata operations at this scale.
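One way to read the 83.83 GB/s figure alongside the node's bonded 200 GbE path is sketched below. It rests on two assumptions that the results do not state explicitly: that 83.83 GB/s is logical (pre-reduction) throughput as seen by the backup application, and that all reduced traffic to the DPAN crosses the eight bonded 25 GbE ports. Protocol overhead is ignored.

```python
PORTS = 8
GBIT_PER_PORT = 25
LOGICAL_GBYTE_PER_S = 83.83  # hero-configuration sustained throughput

link_gbit_per_s = PORTS * GBIT_PER_PORT   # bonded line rate in Gb/s
link_gbyte_per_s = link_gbit_per_s / 8    # same rate in GB/s

# If logical throughput exceeds the wire rate, the difference must come
# from data reduction performed before the data reaches the link.
min_wire_reduction = LOGICAL_GBYTE_PER_S / link_gbyte_per_s

print(f"Bonded line rate: {link_gbyte_per_s:.1f} GB/s")
print(f"Implied minimum on-the-wire reduction: {min_wire_reduction:.2f}:1")
```

If those assumptions hold, the logical rate is more than three times the line rate, which is consistent with source-side deduplication and compression doing much of the work before data reaches the network.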

Validated four-node testing sustained approximately 1.2 PB/hour of aggregate ingest throughput while confirming linear scaling characteristics across active nodes. With support for up to 10 accelerator nodes per cluster, the architecture operates in the multi-petabyte-per-hour class and is designed to scale beyond 2.5 PB/hour as configurations expand. In our testing, upstream orchestration and network infrastructure became limiting factors before the accelerator nodes reached saturation, underscoring the architectural headroom available within the design.

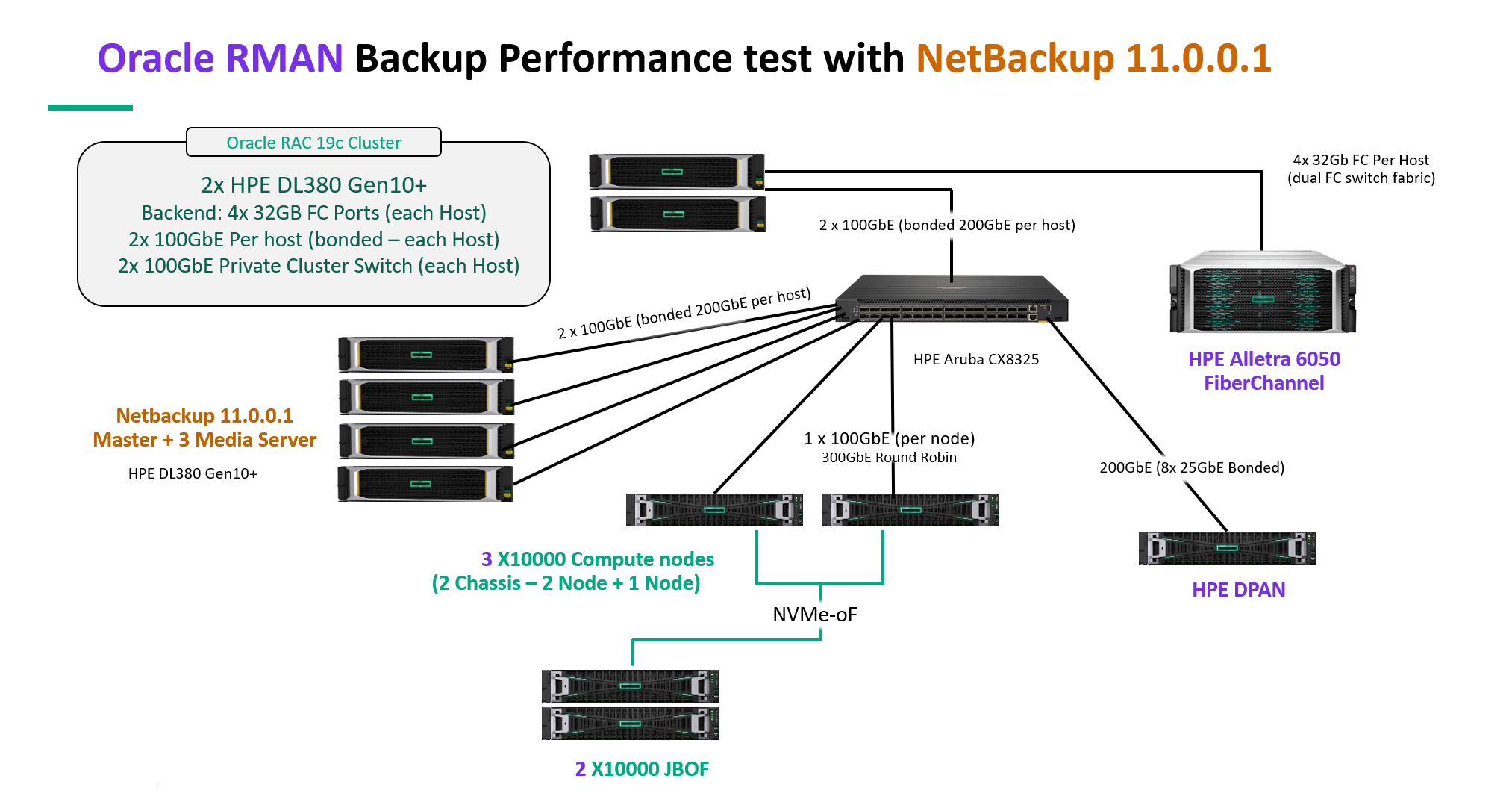

Oracle RMAN Database Backup: Direct-to-Storage vs. DPAN

To demonstrate the DPAN’s impact on large-scale database backup workflows, HPE also conducted a comparative test using a 100TB Oracle database workload. This test directly compared backup performance when writing to the X10000 storage array alone versus leveraging the DPAN architecture.

Test Configuration:

The table below outlines the detailed testing configuration of the Oracle backup validation environment. This setup was designed to evaluate large-scale Oracle RAC protection using NetBackup 11 with HPE Alletra storage platforms and a DPAN.

| Component | Configuration |
|---|---|
| NetBackup Media Servers | 3x HPE DL380 Gen10+ NetBackup (Version 11.0.0.1) |
| Oracle RAC 19c Cluster | 2x HPE DL380 Gen10+ (4x 32 Gb FC per host) |
| Source Array | Single Alletra 6050 (hosting Oracle database) (4x 32 Gb FC per host) |
| Backup Target | 2x Alletra Storage MP X10000 (3-node configuration 2+1 node config) + (2x JBOF configuration) each with 2x disk controllers per JBOF |
| Data Protection Accelerator | Single node (DPAN for configuration only) |
| Network | Single 100GbE (HPE Aruba CX8325) |
| Workload | 100TB Oracle database |

Test Results:

The table below shows the results of the same 100TB full Oracle database backup executed under two conditions:

- Direct-to-X10000 (S3 target without DPAN)

- Accelerated backup with a single DPAN in the data path

In both scenarios, the identical Oracle workload and dataset were protected. The only architectural change was the introduction of the Data Protection Accelerator Node (DPAN), attached to the networking fabric over bonded 200GbE, allowing for a direct performance comparison.

| Metric | Direct-to-X10K (without DPAN) | Accelerated With Single DPAN |
|---|---|---|
| Sustained Throughput | 6.46 GB/s | 11.57 GB/s |
| Effective Throughput | 23.3 TB/hour | 41.7 TB/hour |
| Total Backup Window | 4.3 hours | 2.4 hours |
| Database Backed Up | 100TB | 100TB |

Performance Impact:

- 1.79x throughput improvement (11.57 GB/s vs. 6.46 GB/s)

- 44% reduction in backup window (2.4 hours vs. 4.3 hours)

This test validates that the DPAN delivers measurable acceleration even for structured database workloads, nearly doubling throughput and cutting the backup window by roughly 44%. The improvement demonstrates how the accelerator node offloads deduplication and compression processing from both the source environment and the target storage array, enabling faster data protection cycles for mission-critical database systems.
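The two headline ratios follow directly from the table values, as the short calculation below shows. It uses only the reported throughput and backup-window figures; the variable names are illustrative.

```python
direct_gbps, dpan_gbps = 6.46, 11.57      # sustained throughput, GB/s
direct_hours, dpan_hours = 4.3, 2.4       # total backup window, hours

speedup = dpan_gbps / direct_gbps
window_reduction_pct = (1 - dpan_hours / direct_hours) * 100

print(f"Throughput improvement: {speedup:.2f}x")
print(f"Backup window reduction: {window_reduction_pct:.0f}%")
```

This reproduces the 1.79x throughput improvement and the 44% shorter backup window listed above.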

Turning Performance Into Real-World Outcomes

The most important takeaway from this testing is not just the throughput numbers; it is where the bottlenecks moved. Across every validation scenario, the X10000 and data protection accelerator node removed the traditional target-side ceiling that has defined backup infrastructure for decades. In the three-server validation, ingest performance quickly shifted upstream into media server orchestration and client data generation limits. In the seven-server configuration, even at more than 0.30 PB per hour, the DPAN had not reached its maximum. The constraint became the backup application’s ability to coordinate jobs at scale.

Restore testing followed the same pattern. Once the DPAN eliminated target-side friction, restore performance was governed by client-side write speeds, network bandwidth, and orchestration overhead. In practice, the backup appliance was no longer the bottleneck. The surrounding infrastructure was, and this shift is significant.

Traditional HDD-based backup systems were architected around landing zones, controller chokepoints, and rehydration workflows. Performance was tuned to match those limitations. In contrast, the DPAN and X10000 combination introduces primary-storage-class ingest and restore behavior into backup workflows. That changes where design pressure is applied within the data center.

Network fabrics must sustain higher concurrency. Media servers must coordinate more streams. Client systems must absorb restore traffic at speeds they may not have been designed for. Even SSD write performance on protected servers becomes a consideration during large-scale restores.

Perhaps most importantly, backup applications themselves were not originally engineered for this level of throughput. As demonstrated in testing, orchestration overhead can become the limiting factor before the DPAN or X10000 reaches saturation. That reality highlights a broader industry challenge. Backup software stacks must evolve to fully exploit modern storage performance.

HPE, however, has positioned the DPAN as a purpose-built acceleration layer that bridges that gap today. By offloading deduplication, encryption, and high-speed data movement into a dedicated compute node and pairing it with a flash-native object backend, HPE has created a platform that pushes backup and recovery performance into territory historically reserved for primary storage systems.

The result is not incremental improvement. It is a redistribution of stress across the data protection infrastructure stack. And that redistribution is exactly what modern backup environments require as datasets grow and recovery expectations tighten.

A New Standard for Backup Infrastructure

The HPE Data Protection Accelerator Node represents a structural shift in how backup infrastructure is designed. By pairing StoreOnce Catalyst’s source-side deduplication with a high-speed encrypted data path into the flash-native X10000 object platform, DPAN creates a dedicated acceleration layer that separates data movement and reduction from storage durability. That architectural clarity is what enables its performance characteristics.

Catalyst ensures that only optimized data traverses the network and enters the object layer. The X10000 focuses on durable, parallel object placement across NVMe flash. DPAN focuses on compute-intensive tasks, such as deduplication and encryption. Each layer performs its role without compromise. The result is a backup architecture built around throughput and concurrency rather than containment. What makes this particularly relevant is not just how it performs today but what it enables tomorrow.

Modern infrastructure is evolving toward higher concurrency, denser virtualization, AI-driven analytics pipelines, and increasingly aggressive recovery objectives. These environments generate larger datasets at higher velocity and require faster recovery when issues arise. Backup systems can no longer operate as slow secondary tiers that quietly trail production performance. They must keep pace.

HPE’s Alletra Storage MP X10000 with DPAN prepares backup and recovery for that future. It provides a scalable acceleration model that aligns with flash-native infrastructure trends and object-based storage strategies. As enterprises modernize their primary storage and compute stacks, DPAN ensures the data protection layer does not become the weak link.

HPE has taken a decisive position in this space. With the X10000 and DPAN, the company has delivered a platform that elevates backup and recovery into the same performance conversation as primary storage. For organizations facing shrinking backup windows, expanding data footprints, and more demanding recovery objectives, this architecture offers both immediate gains and long-term alignment with enterprise infrastructure trends.

This report is sponsored by HPE. All views and opinions expressed in this report are based on our unbiased view of the product(s) under consideration.
