Earlier this year we posted Intel Optane DC Persistent Memory data in our review of the Supermicro SuperServer 1029U-TN10RT platform. Supermicro was one of the first out of the gate with Intel persistent memory support, and the dual-processor 1U system has done great work as a persistent memory test bed. Looking at Optane DC persistent memory speed in the traditional block storage way is instructive, but the real value of persistent memory is revealed by applications that can natively take advantage of this new medium, intelligently placing data in DRAM, persistent memory or onboard storage as the application needs. To better understand the performance profile of Optane DC persistent memory, we put the Supermicro server to work using a leading NoSQL platform, Aerospike.

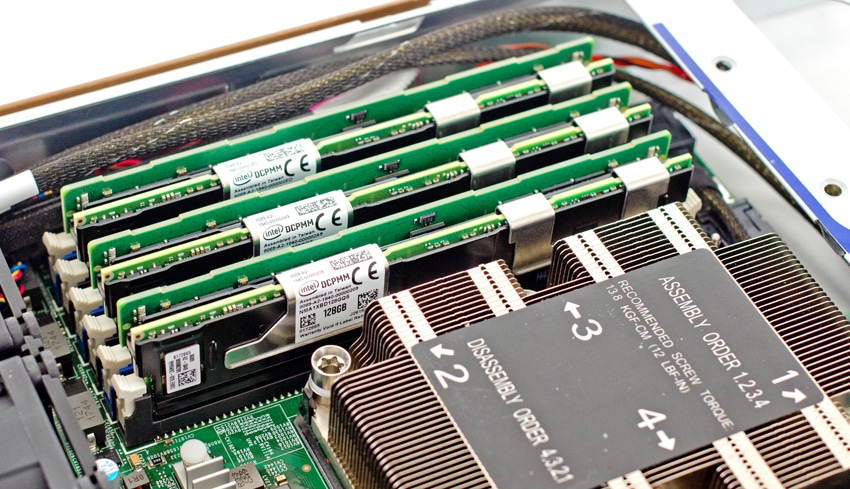

Intel Optane DC Persistent Memory Modules in the Supermicro Server

What is Aerospike?

Aerospike provides a distributed, highly scalable, non-relational database management system for demanding read/write workloads involving operational data. It was designed to deliver extremely fast and predictable response times for accessing data sets that span billions of records in databases of tens to hundreds of terabytes. Aerospike powers a wide variety of strategic applications, including fraud prevention, digital payments, recommendation engines, real-time bidding and more. Aerospike customers include very big names like Adobe, Airtel, FlipKart, Kayak, Nielsen, PayPal, and Wayfair.

Depending on the use case and data set, Aerospike can be deployed in various configurations that optimize system resources. It can be run with data in memory, with the index in memory and data on SSD, or with both index and data on SSD. Recently Aerospike released a new configuration that takes advantage of Intel’s Optane DC persistent memory in App Direct mode: the index is stored in PMEM with the data on SSD. This mode expands the capacity of Aerospike while keeping performance very near that of index in memory with data on SSD. It provides sub-millisecond latencies and also allows fast full restarts of Aerospike without primary index rebuilds.

By applying different types of workloads across different Aerospike configurations, it is possible to evaluate the benefits and performance of Intel Optane DC persistent memory on a Supermicro SuperServer. Comparing benchmarks of an index in memory/data on SSD configuration against an index in PMEM/data on SSD configuration provides the information needed to make an informed choice between persistent memory and DRAM. One additional configuration offers further insight. Although the index in PMEM and data in PMEM feature has not been released, the server's PMEM can be configured so that part of it holds the index and the rest is exposed as a block device for the data, which gives an idea of the performance possible with index in PMEM and data in PMEM.

Aerospike NoSQL Configuration

Three different workloads were applied to each of the three different configurations. The Aerospike Java Benchmark generated a 50/50 read/write workload, a read only workload, and a write only workload from 4 client servers. Each test consisted of multiple phases:

- Ingest phase – Loading data into the database.

- Warm up phase – Run a write load for two hours to create a steady state for the database.

- Test phase – Run the actual workload for the test for an hour.

Before running any tests, an appropriate key set and object size for the data were selected. Although Aerospike supports a large range of object sizes, from a couple of bytes to a million bytes, the key set and object size were selected to exercise the server hardware and demonstrate the performance of PMEM configurations. Larger object sizes may create a network bottleneck and not fully demonstrate the power of the Optane DC persistent memory. Therefore an object size of 440 bytes was used for all of the tests.

The key set size was constrained by the amount of memory used for the index in memory/data on SSD configuration. The index in memory configuration was limited to a data set of 4 billion objects. Even though the index in PMEM could handle the capacity of 15.5 billion keys, only 4 billion keys were used for a better comparison to the index in memory test. The final sets of tests were run with index in PMEM and data in PMEM. Because the server had a total of 1.5TB of PMEM, only a billion keys were used for these tests.
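
A rough sizing sketch helps show how these key counts relate to the installed memory. It assumes 64 bytes per primary index entry, which is Aerospike's documented index entry size but is not stated in this review, and it ignores replication, per-record overhead and free-space headroom. The function and constant names are illustrative only.

```python
# Back-of-the-envelope sizing for the key sets used in the tests.
# ASSUMPTION: 64 bytes per primary index entry (not stated in the review).
INDEX_ENTRY_BYTES = 64
OBJECT_BYTES = 440   # object size used for all tests
GB = 10**9           # decimal gigabytes


def index_gb(keys: int) -> float:
    """Approximate primary index footprint in GB."""
    return keys * INDEX_ENTRY_BYTES / GB


def data_gb(keys: int) -> float:
    """Approximate raw object footprint in GB (no overhead)."""
    return keys * OBJECT_BYTES / GB


dram_gb = 12 * 32    # installed DRAM in the database server
pmem_gb = 12 * 128   # installed Optane DC persistent memory

print(f"DRAM installed: {dram_gb} GB, PMEM installed: {pmem_gb} GB")
print(f"Index, 4 billion keys:    {index_gb(4_000_000_000):.0f} GB")
print(f"Index, 15.5 billion keys: {index_gb(15_500_000_000):.0f} GB")
one_billion = 1_000_000_000
print(f"Index + data, 1 billion keys: "
      f"{index_gb(one_billion) + data_gb(one_billion):.0f} GB")
```

Under these assumptions the 4 billion key index fits in DRAM, while a 15.5 billion key index only fits in the larger PMEM pool.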

Hardware Configuration

The hardware configuration includes two key components. The single database server houses the Intel Optane DC persistent memory. The four client servers generate the load against the database server.

Database server

- Chassis – Supermicro Ultra 1U SYS-1029U-TN10RT

- CPU – two configurations tested

  - 2 x Intel Xeon Scalable 8268 (2.9GHz, 24C)

  - 2 x Intel Xeon Scalable 8280 (2.7GHz, 28C)

- Storage – 10 x Intel DC P4510 2TB NVMe SSD, 1DWPD

- DRAM – 12 x 32GB DDR4-2933

- Persistent Memory – 12 x 128GB DDR4-2666 Intel Optane DC PMMs

- Network – 100 GbE

- OS – Fedora 29

Client servers

- Chassis – Dell R740xd

- CPU – 2 x Intel Xeon Scalable 6130

- DRAM – 256GB

- Network – 2 x 25 GbE

- OS – Ubuntu 18.04

- Software – Aerospike Enterprise 4.5.1

- Load Generator – Aerospike Java Benchmark (Aerospike Java Client 4.4.0)

Aerospike Performance Results

As noted, we ran each workload against several placements of the index and data. We also used two sets of second-generation Intel Xeon Scalable CPUs in the database server, the 8268s and the 8280s; the 8280 is the highest-bin Intel CPU that supports Optane DC persistent memory. In aggregate clock speed (sockets × cores × base frequency), the 8280s offer a 12GHz bump over the 8268s (151.2GHz versus 139.2GHz), an 8.6% increase. While not shown in the tables below, in terms of quality of service, all of the latency results for the tests were at or near 100% sub-millisecond at the server.
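
One reading that reproduces both the 12GHz and 8.6% figures is aggregate clock, meaning sockets times cores times base frequency, using the core counts and clocks from the hardware list. The short sketch below works through that arithmetic; the helper name is illustrative, and this interpretation is an assumption rather than something the test data confirms.

```python
# Aggregate clock = sockets x cores per socket x base frequency (GHz).
# ASSUMPTION: this is how the 12GHz / 8.6% figures were derived.
def aggregate_ghz(sockets: int, cores: int, base_ghz: float) -> float:
    return sockets * cores * base_ghz


xeon_8268 = aggregate_ghz(2, 24, 2.9)
xeon_8280 = aggregate_ghz(2, 28, 2.7)

bump = xeon_8280 - xeon_8268
pct = 100 * bump / xeon_8268

print(f"8268: {xeon_8268:.1f} GHz, 8280: {xeon_8280:.1f} GHz")
print(f"Difference: +{bump:.1f} GHz ({pct:.1f}%)")
```

Note that the 8280 has the lower per-core base clock of the two parts; its advantage here comes from the four extra cores per socket.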

Index in Memory, Data on NVMe SSDs

| Activity | Throughput (ops/sec), Intel 8268 | Throughput (ops/sec), Intel 8280 |
|---|---|---|
| Read/Write 50/50 | 2,100,000 | 2,298,000 |
| Read 100% | 2,240,000 | 2,720,000 |
| Write 100% | 1,760,000 | 2,020,000 |

While we know the 8280's offer an 8.6% improvement in raw clockspeed over the 8268's, seeing how that converts into application improvement is a core objective. With Aerospike, with the index sitting in memory and the data on NVMe SSDs, we saw the following line item changes. Mixed Read/Write 50/50 performance scaled up 9.4%, 100% read up 21.4% and in 100% write the performance bumped up 14.8%.
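
The scaling figures can be reproduced from the table and set against the 8.6% clock advantage. This is a minimal sketch using only the published numbers; the variable and function names are illustrative.

```python
# Index in DRAM, data on NVMe SSD: (8268 ops/sec, 8280 ops/sec).
CLOCK_GAIN_PCT = 8.6  # clock advantage of the 8280s quoted in the text

results = {
    "Read/Write 50/50": (2_100_000, 2_298_000),
    "Read 100%": (2_240_000, 2_720_000),
    "Write 100%": (1_760_000, 2_020_000),
}


def pct_gain(base: int, new: int) -> float:
    """Percentage increase of new over base."""
    return 100 * (new - base) / base


for activity, (ops_8268, ops_8280) in results.items():
    gain = pct_gain(ops_8268, ops_8280)
    ratio = gain / CLOCK_GAIN_PCT
    print(f"{activity}: +{gain:.1f}% ({ratio:.1f}x the clock gain)")
```

In every workload the application gain exceeds the clock gain, most clearly for pure reads.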

Index in Optane DC Persistent Memory, Data on NVMe SSDs

| Activity | Throughput (ops/sec), Intel 8268 | Throughput (ops/sec), Intel 8280 |
|---|---|---|
| Read/Write 50/50 | 2,000,000 | 2,252,000 |
| Read 100% | 2,200,000 | 2,630,000 |
| Write 100% | 1,740,000 | 1,980,000 |

As we can see in this data, moving the index from DRAM to persistent memory had little impact on transactional performance; throughput fell by less than 5% in every test. In production, this means a database node restarts much faster after a reboot, because the index persists in PMEM and does not have to be rebuilt in DRAM from the data on SSD. There's also a cost saving in high-density boxes, as the 128GB Optane DC persistent memory modules under test are considerably less expensive than 128GB DRAM DIMMs.

Index and Data on Optane DC Persistent Memory

| Activity | Throughput (ops/sec), Intel 8268 | Throughput (ops/sec), Intel 8280 |
|---|---|---|
| Read/Write 50/50 | 2,600,000 | 2,866,000 |
| Read 100% | 2,810,000 | 3,100,000 |
| Write 100% | 2,120,000 | 2,210,000 |

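As noted, Aerospike has announced, but not yet made generally available, the ability to run both the index and the data on Intel Optane DC persistent memory. That said, we did test the current code build that allows for it. With the 8268s, the mixed workload gained 600,000 operations per second, or roughly 30%, over the index in PMEM, data on SSD configuration. Keep in mind that these tests used 1 billion keys rather than 4 billion, so the comparison is indicative rather than like-for-like.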
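
The mixed-workload comparison across all three configurations can be checked from the tables. The sketch below uses only the published 50/50 figures; the names are illustrative, and because the all-PMEM runs used 1 billion keys instead of 4 billion, the percentages should be read as rough indicators.

```python
# Mixed 50/50 throughput (ops/sec) from the three tables: (8268, 8280).
mixed = {
    "index DRAM, data SSD": (2_100_000, 2_298_000),
    "index PMEM, data SSD": (2_000_000, 2_252_000),
    "index PMEM, data PMEM": (2_600_000, 2_866_000),
}


def pct_change(base: int, new: int) -> float:
    """Percentage change of new relative to base."""
    return 100 * (new - base) / base


all_pmem = mixed["index PMEM, data PMEM"]
for baseline in ("index DRAM, data SSD", "index PMEM, data SSD"):
    # Pair each CPU with its baseline and all-PMEM throughput.
    for cpu, base, new in zip(("8268", "8280"), mixed[baseline], all_pmem):
        print(f"{cpu}, all-PMEM vs {baseline}: "
              f"+{new - base:,} ops/sec ({pct_change(base, new):.1f}%)")
```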

Conclusion

Intel Optane DC persistent memory is an incredibly powerful part of the data hierarchy. Slotting in between RAM and storage, Intel's incarnation of persistent memory finally brings the technology mainstream. But just having access to a new storage technology isn't good enough; applications that can take advantage of persistent memory natively will have a huge competitive advantage. The flexibility we see with Aerospike shows they were ready out of the gate with App Direct mode support (block storage) for Optane DC persistent memory. Further, they're a leader in the NoSQL world when it comes to keeping both indexes and data on the persistent memory modules. While the latter is still an emerging vision, the early results look very promising.
