AMD has released its MLPerf Inference v6.0 results, positioning the Instinct MI355X GPU as a scalable inference platform across single-node, multinode, and heterogeneous deployments. The submission extends beyond incremental gains by adding new workloads, demonstrating cluster-scale throughput exceeding 1 million tokens per second, and validating reproducibility across a growing partner ecosystem.

CDNA 4 Architecture Targets High-Capacity Inference

The Instinct MI355X GPU is based on AMD’s CDNA 4 architecture built on a TSMC 3nm | 6nm FinFET process (it uses a dual-process chiplet design: the compute dies (XCDs) use TSMC’s 3nm node while the I/O dies use 6nm), integrating 185 billion transistors and supporting FP4 and FP6 data formats. It’s worth noting that this is across the entire multi-chiplet package, not a monolithic die. Each GPU includes up to 288GB of HBM3E memory, enabling support for models up to 520 billion parameters on a single device. AMD positions this combination of compute density and memory capacity as critical to large-model inference without excessive model partitioning.

The platform is available in UBB8 configurations with both air-cooled and direct liquid-cooled options, aligning with data center deployment requirements.

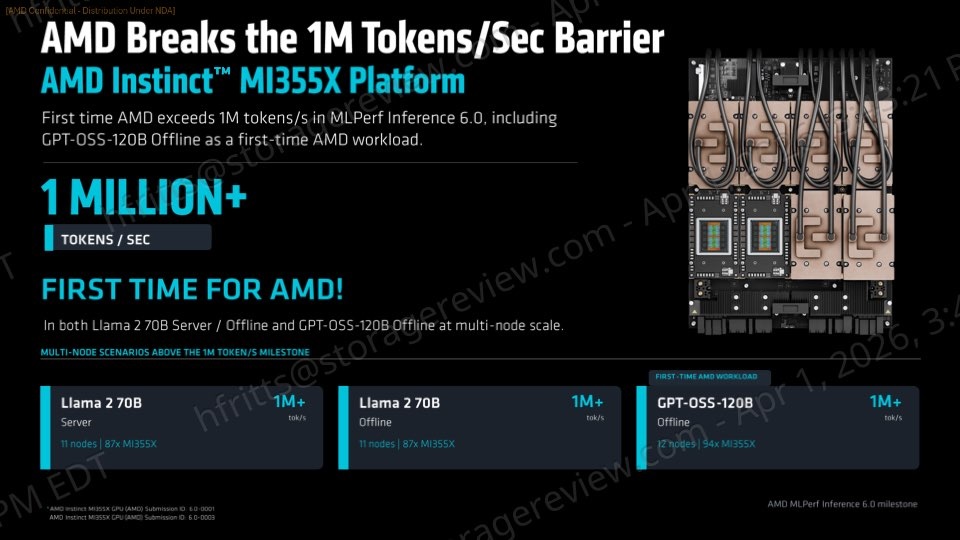

Multinode Throughput Surpasses 1 Million Tokens per Second

A key result from this round is AMD surpassing 1 million tokens per second at the cluster scale. Using Instinct MI355X GPUs, AMD achieved this threshold on Llama 2 70B in both Server and Offline scenarios, and on GPT-OSS-120B in Offline.

These results reflect a shift toward evaluating inference performance at the cluster level rather than per accelerator. Aggregate throughput and time-to-serve are increasingly used to determine production readiness for large-scale AI deployments.

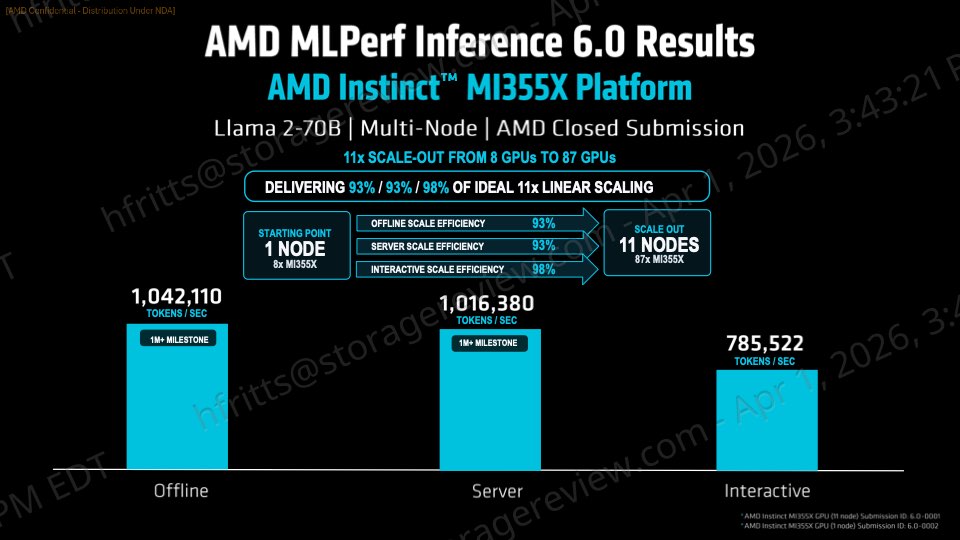

AMD also demonstrated efficient scaling. On Llama 2 70B, a configuration of 11 nodes and 87 GPUs reached over 1 million tokens per second across Offline, Server, and Interactive scenarios, with scale-out efficiency ranging from 93% to 98%. On GPT-OSS-120B, a 12-node, 94-GPU cluster achieved similar throughput with over 90% scaling efficiency. These results indicate that performance gains translate effectively as deployments expand beyond a single system.

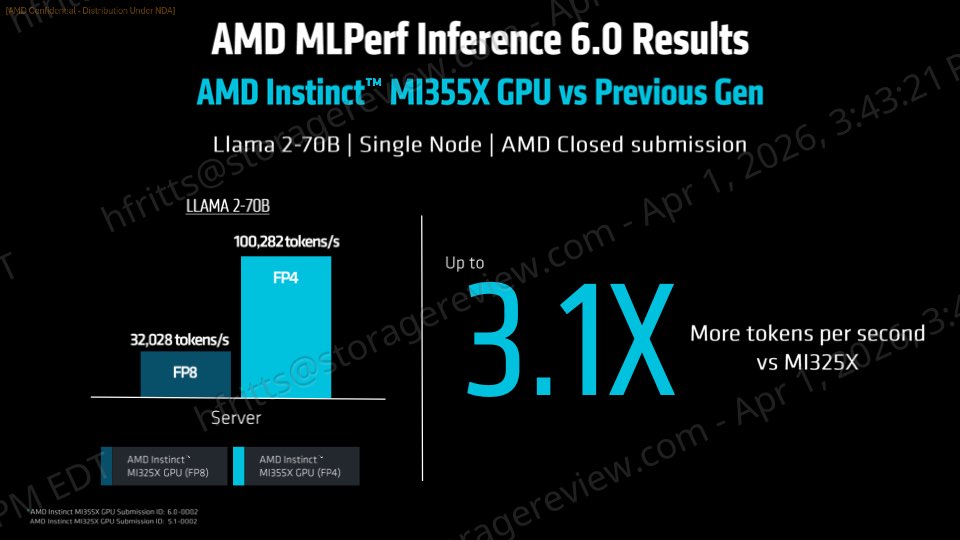

Generational Gains and Competitive Single-Node Performance

AMD reported a 3.1x performance increase on Llama 2 70B Server compared to the prior Instinct MI325X generation, reaching 100,282 tokens per second. The improvement reflects both architectural changes and ROCm software optimizations. Offline scores improved by 4.4x and Server scores improved by 4.8x compared to prior rounds. These gains are primarily attributed to FP4 quantization.

In single-node comparisons, MI355X demonstrated competitive positioning against NVIDIA platforms. On Llama 2 70B, AMD matched NVIDIA B200 in Offline throughput, reached near parity in Server performance, and exceeded Interactive performance. Against Nvidia’s B300, AMD’s GPU delivers 92% in offline mode, 93% in server mode, and exceeds it with 104% in interactive mode.

First-Time Model Enablement Expands Coverage

MLPerf Inference v6.0 includes several new workloads, and AMD used this round to demonstrate rapid model enablement. GPT-OSS-120B, a mixture-of-experts model, was introduced for the first time and achieved competitive results compared to NVIDIA systems across both Offline and Server scenarios.

AMD also submitted results for Wan-2.2 text-to-video generation, marking its entry into multimodal and generative video inference. While the official submission focused on Single Stream latency, the results were competitive with those of existing platforms. Post-submission tuning further improved performance, indicating headroom for optimization as software matures.

These additions highlight AMD’s focus on expanding beyond traditional LLM benchmarks to support emerging AI workloads.

ROCm Software Enables Scaling and Heterogeneous Inference

AMD attributes much of the performance and scalability to its ROCm software stack. Enhancements include optimized FP4 execution, improved GPU-to-GPU communication for distributed inference, and support for dynamic workload distribution across heterogeneous environments.

The initial MLPerf heterogeneous submission was developed using three AMD Instinct GPU models: MI300X, MI325X, and MI355X. Submitted by Dell and MangoBoost, the configuration achieved 141,521 tokens per second on Llama 2 70B Server and 151,843 tokens per second on Llama 2 70B Offline.

Worth noting, the AMD Instinct MI355X platform was located in Dell’s lab in the United States, while the Instinct MI300X and MI325X platforms were in Korea. This demonstrates the capability to coordinate systems across different geographic locations.

Ecosystem Growth and Reproducibility

AMD’s partner ecosystem expanded in this MLPerf round, with nine companies submitting results across multiple Instinct GPU generations. Participating vendors included Cisco, Dell, Giga Computing, HPE, MangoBoost, MiTAC, Oracle, Supermicro, and Red Hat.

Partner submissions closely matched AMD’s internal results, typically within 4% and, in some cases, within 1%. This consistency indicates that performance is reproducible across OEM and cloud platforms, reducing deployment risk and improving confidence in real-world outcomes.

Amazon

Amazon