At NVIDIA GTC 2026, Intel announced that its Intel Xeon 6 processors are being used as the host CPUs for NVIDIA DGX Rubin NVL8 systems. This design win extends the established use of Xeon within NVIDIA’s GPU platforms and underscores the processor’s role in orchestrating large-scale, GPU-accelerated AI infrastructure. As AI workloads transition toward massive, real-time inference, the host CPU remains a critical component for system-level scalability and architectural continuity.

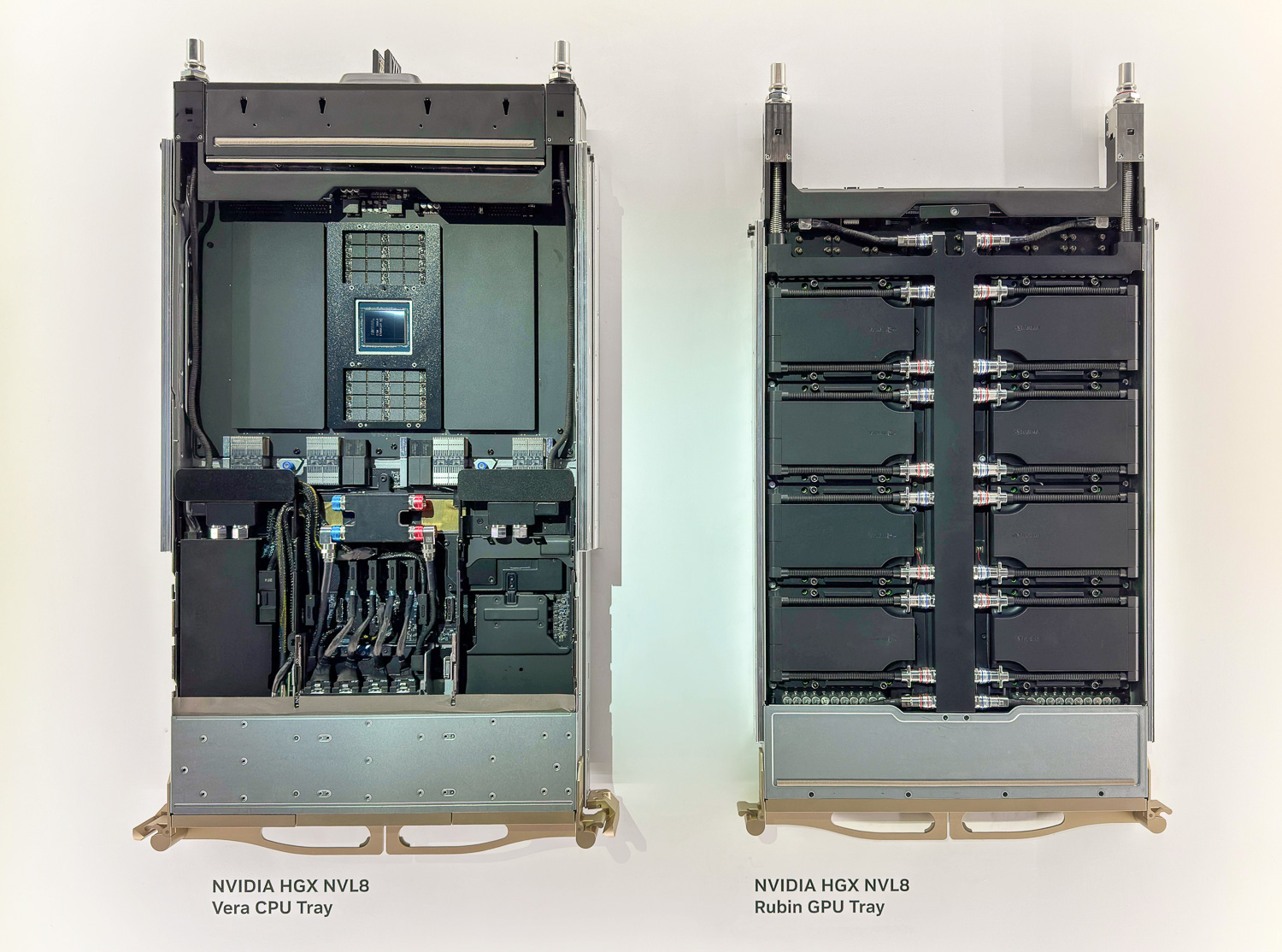

NVIDIA HGX NVL8 with Vera; Xeon 6 is available with DGX NVL8

The Evolving Role of the Host CPU in AI Inference

The shift from large-scale model training to real-time, agentic AI and reasoning systems has elevated the importance of the host CPU. In these environments, the CPU is no longer a secondary component but a mission-critical engine that governs orchestration, memory access, and model security. Intel states that Xeon 6 is engineered to deliver the throughput and efficiency required to manage these complex GPU-accelerated systems, while maintaining compatibility with the extensive x86 software ecosystem that enterprise customers rely on to scale inference.

Inference performance is increasingly defined by CPU-led system orchestration rather than by raw GPU throughput alone. The host CPU handles critical functions such as memory management and workload scheduling, so it directly shapes overall cluster efficiency and total cost of ownership. Intel positions Xeon 6, with its emphasis on operational continuity and reliability, as the foundation for modern AI infrastructure across data centers, the cloud, and edge environments.

Technical Advantages of Xeon 6 in DGX Rubin Systems

The integration of Intel Xeon 6 into DGX Rubin NVL8 systems builds on the architectural foundation established by the Intel Xeon 6776P in current NVIDIA Blackwell-based platforms, including DGX B300 systems. This continuity lets organizations carry existing performance optimizations and system-level expertise forward into the next generation of AI hardware. According to Intel, Xeon 6 is engineered to maximize GPU utilization through features such as Priority Core Turbo. This feature runs a subset of high-priority cores at higher turbo frequencies to maintain a consistent flow of data to the accelerators.
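The goal of keeping a consistent data flow to the accelerators can be pictured as a bounded producer/consumer pipeline. In this pipeline, host-side CPU work overlaps with accelerator work instead of alternating with it. The Python sketch below is a generic illustration, not Intel's or NVIDIA's implementation, and it does not model Priority Core Turbo itself. `prepare_batch` and `accelerate` are hypothetical stand-ins for CPU preprocessing and a GPU step, and the default queue depth of 2 is an arbitrary assumption.

```python
import queue
import threading
import time

# Hypothetical stand-ins: prepare_batch models host-side CPU work (e.g.
# tokenization, batching, staging); accelerate models a GPU step. Neither
# corresponds to a real Intel or NVIDIA API.


def prepare_batch(i: int) -> list[int]:
    time.sleep(0.001)  # simulated CPU preprocessing time
    return [i * 10 + k for k in range(4)]


def accelerate(batch: list[int]) -> int:
    time.sleep(0.001)  # simulated accelerator compute time
    return sum(batch)


def run_pipeline(num_batches: int, depth: int = 2) -> list[int]:
    """Overlap CPU preparation with accelerator work via a bounded queue.

    The producer thread keeps up to `depth` prepared batches waiting, so the
    consumer (the stand-in "accelerator") rarely sits idle waiting for input.
    """
    ready: queue.Queue = queue.Queue(maxsize=depth)
    done = object()  # sentinel marking the end of the stream

    def producer() -> None:
        for i in range(num_batches):
            ready.put(prepare_batch(i))  # blocks while the queue is full
        ready.put(done)

    worker = threading.Thread(target=producer)
    worker.start()
    results = []
    while (batch := ready.get()) is not done:
        results.append(accelerate(batch))
    worker.join()
    return results


if __name__ == "__main__":
    print(run_pipeline(3))  # [6, 46, 86]
```

A deeper queue absorbs more jitter in CPU preparation at the cost of holding more staged data in memory. The point of the sketch is only that the host keeps work queued ahead of the consumer.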

Memory capacity and bandwidth are central to the Xeon 6 value proposition for AI. The platform supports up to 8 TB of system memory to accommodate large models and growing KV caches. Support for MRDIMM (Multiplexed Rank DIMM) memory delivers up to 2.3 times the memory bandwidth of the previous generation, increasing the rate at which data can be fed to the GPUs. A high count of PCIe 5.0 lanes complements this, providing the high-bandwidth, low-latency I/O needed to support multiple AI accelerators and high-speed networking.
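A rough size estimate shows why system memory capacity matters for KV caches. The sketch below uses the usual accounting for a transformer KV cache: one key vector and one value vector per layer, per KV head, per token. The model shape (80 layers, 8 KV heads, head dimension 128, 2-byte FP16 elements) and the workload (32 sequences of 131,072 tokens) are hypothetical values chosen for illustration. They are not figures from Intel or NVIDIA.

```python
def kv_cache_bytes(
    num_layers: int,
    num_kv_heads: int,
    head_dim: int,
    seq_len: int,
    batch_size: int,
    bytes_per_elem: int = 2,  # assumes FP16/BF16 storage
) -> int:
    """Approximate KV cache size for a decoder-only transformer.

    The leading factor of 2 accounts for storing both a key and a value
    vector for every layer, KV head, and token.
    """
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


if __name__ == "__main__":
    # Hypothetical model shape and workload, for illustration only.
    per_token = kv_cache_bytes(80, 8, 128, seq_len=1, batch_size=1)
    total = kv_cache_bytes(80, 8, 128, seq_len=131_072, batch_size=32)
    print(f"per token: {per_token / 2**10:.1f} KiB")  # per token: 320.0 KiB
    print(f"total:     {total / 2**40:.2f} TiB")      # total:     1.25 TiB
```

Under these assumed settings, the cache alone reaches about 1.25 TiB. At that scale, a multi-terabyte host memory pool becomes a plausible place to hold KV cache data.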

Security and Confidential Computing

As AI inference scales, end-to-end confidential computing has become essential for protecting sensitive data and proprietary models. Intel Xeon 6 addresses these requirements through Intel Trust Domain Extensions (TDX), which provide hardware-based isolation and attestation. This creates a secure foundation for modern AI clusters by ensuring that data remains protected as it moves through the system.

Security also extends to the CPU-to-GPU data path. Features such as the Encrypted Bounce Buffer, in which data is encrypted before it passes through shared staging memory on its way to the GPU, extend confidential computing across the entire processing pipeline and safeguard AI data and models while in use. This hardware-rooted isolation is critical for maintaining the integrity of mission-critical environments and protecting intellectual property in heterogeneous inference workloads.
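The Encrypted Bounce Buffer idea can be illustrated as a data flow. Data leaving the CPU's protected memory is encrypted and authenticated before it is written to a shared staging buffer that the GPU can read. Only a party holding the session keys can then verify and decrypt it. The Python sketch below is a toy model built on stated assumptions:

- The cipher is a standard-library stand-in (a SHAKE-256 keystream plus HMAC-SHA256), not the authenticated cipher (such as AES-GCM) that a real implementation would use.
- The keys are simply shared random bytes rather than being negotiated after attestation.
- `BounceBuffer`, `cpu_tee_send`, and `gpu_receive` are hypothetical names.

```python
import hashlib
import hmac
import os

# Toy authenticated encryption built only from the standard library.
# It stands in for a real AEAD cipher (e.g. AES-GCM) purely to show the
# data flow; do not use it to protect real data.
NONCE_LEN = 12


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    # Derive a per-message keystream from the key and a fresh nonce.
    return hashlib.shake_256(key + nonce).digest(length)


def seal(enc_key: bytes, mac_key: bytes, plaintext: bytes) -> tuple[bytes, bytes, bytes]:
    """Encrypt-then-MAC: returns (nonce, ciphertext, tag)."""
    nonce = os.urandom(NONCE_LEN)
    stream = _keystream(enc_key, nonce, len(plaintext))
    ciphertext = bytes(p ^ s for p, s in zip(plaintext, stream))
    tag = hmac.new(mac_key, nonce + ciphertext, hashlib.sha256).digest()
    return nonce, ciphertext, tag


def unseal(enc_key: bytes, mac_key: bytes, nonce: bytes, ciphertext: bytes, tag: bytes) -> bytes:
    """Verify the tag first, then decrypt; raises ValueError on tampering."""
    expected = hmac.new(mac_key, nonce + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, tag):
        raise ValueError("bounce buffer contents failed authentication")
    stream = _keystream(enc_key, nonce, len(ciphertext))
    return bytes(c ^ s for c, s in zip(ciphertext, stream))


class BounceBuffer:
    """Hypothetical shared staging memory: assume the host, hypervisor,
    and device can all read whatever is stored here."""

    def __init__(self) -> None:
        self.slot = None  # holds (nonce, ciphertext, tag) once written


def cpu_tee_send(buf: BounceBuffer, enc_key: bytes, mac_key: bytes, data: bytes) -> None:
    # Inside the CPU's trusted domain: only ciphertext reaches shared memory.
    buf.slot = seal(enc_key, mac_key, data)


def gpu_receive(buf: BounceBuffer, enc_key: bytes, mac_key: bytes) -> bytes:
    # On the GPU side: authenticate and decrypt what was staged in the buffer.
    if buf.slot is None:
        raise ValueError("bounce buffer is empty")
    return unseal(enc_key, mac_key, *buf.slot)


if __name__ == "__main__":
    # Assumption: both sides already share session keys (negotiated after
    # attestation in a real system; random bytes here).
    enc_key, mac_key = os.urandom(32), os.urandom(32)
    buf = BounceBuffer()
    cpu_tee_send(buf, enc_key, mac_key, b"prompt tokens for batch 0")
    print(gpu_receive(buf, enc_key, mac_key))  # b'prompt tokens for batch 0'
```

The design choice the sketch highlights is that the shared buffer only ever holds ciphertext and an authentication tag. Anything outside the trusted endpoints that reads or alters the staged data learns nothing useful, and any tampering is detected.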

Ecosystem Integration and Efficiency

Intel Xeon 6 offers optimized support throughout the AI software stack, including new compatibility with NVIDIA Dynamo, NVIDIA's distributed inference-serving framework. This enables more efficient heterogeneous inference across CPUs and GPUs and better resource allocation between them. The platform's focus on performance per watt helps organizations manage the power density of modern AI clusters, reducing long-term TCO without sacrificing the single-thread performance needed for effective orchestration and scheduling.

By orchestrating GPU-accelerated systems, Intel Xeon 6 helps keep data movement fluid and efficient even as inference workloads grow in complexity. Its combination of proven reliability, mature software support, and advanced I/O capabilities reinforces Xeon's position as a cornerstone of modern AI infrastructure, enabling the deployment of secure, scalable AI solutions at global scale.
