Rackspace Technology and AMD have signed a memorandum of understanding establishing a framework for a multi-year strategic partnership focused on enterprise AI infrastructure for regulated organizations and sovereign workloads.

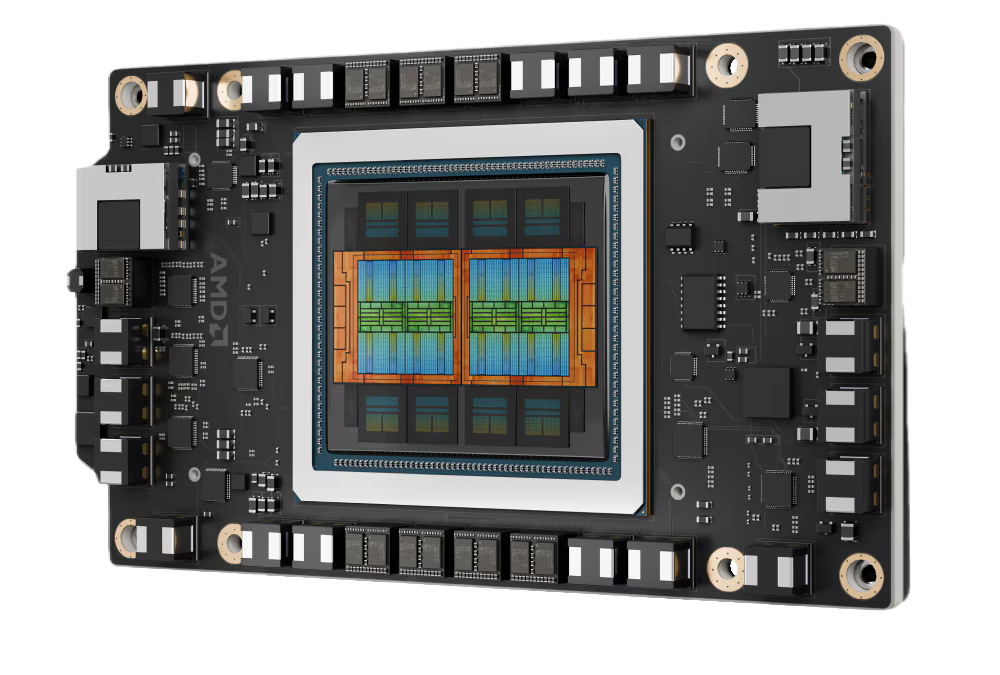

The agreement centers on a proposed Enterprise AI Cloud designed for mission-critical AI deployments in which security, governance, compliance, and operational accountability are core requirements. The platform would combine AMD Instinct GPUs and AMD EPYC CPUs with Rackspace Technology’s managed operating model to create a dedicated AI infrastructure stack for organizations that cannot rely on generic GPU rental models or unmanaged public infrastructure for production AI workloads.

Rackspace Technology and AMD stressed that many enterprises are moving AI from experimentation into production but face significant operational demands in doing so, including infrastructure integration, security, data governance, performance management, and accountability. The prevailing GPU rental model often requires enterprises to rent capacity by the hour while shouldering much of the supporting infrastructure and operational responsibility themselves.

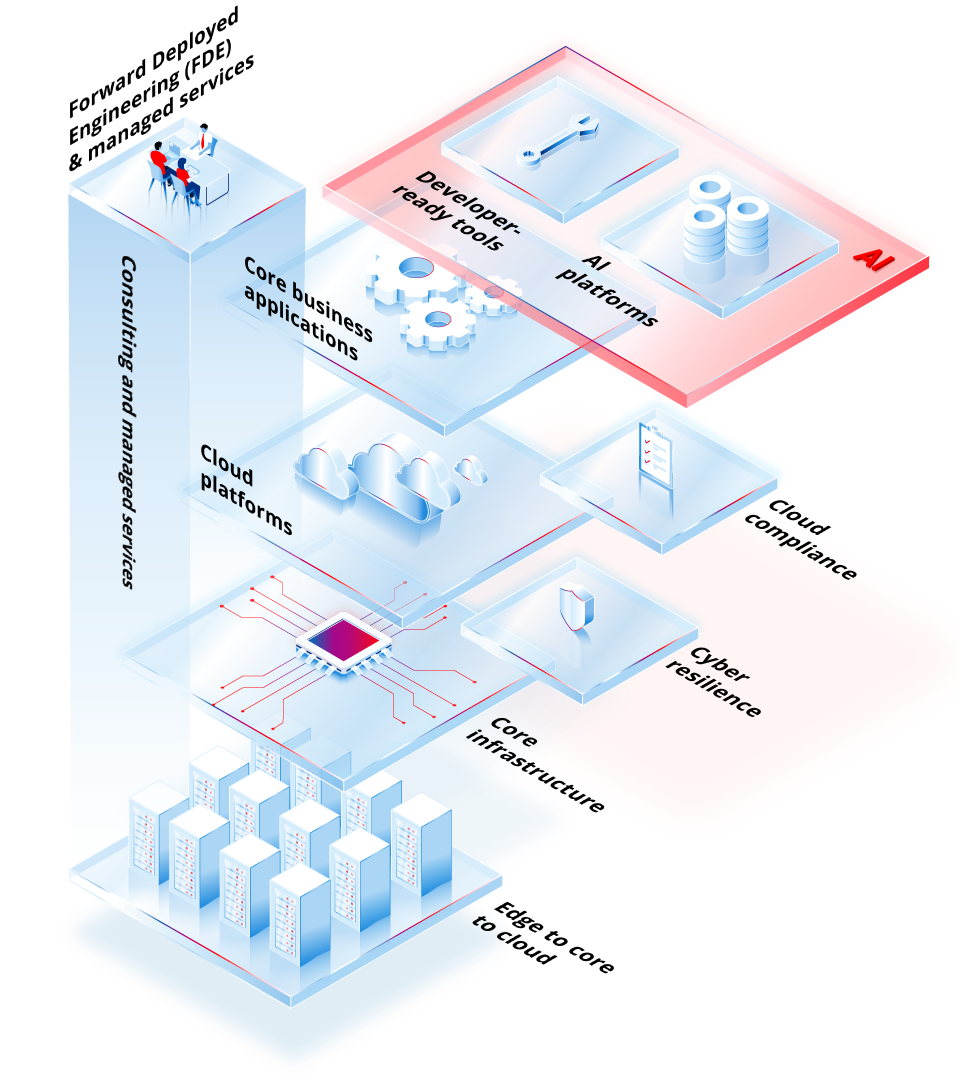

The two companies are proposing a managed approach in which dedicated AMD compute is embedded within a governed enterprise operating model. Under the framework, Rackspace would operate the full stack, from silicon and accelerated compute to inference, agents, and production support.

As enterprises move AI into production environments, Rackspace Technology and AMD believe trust and accountability become central concerns. Governance in regulated environments must be built into the infrastructure from the start, rather than added later. AMD’s role would focus on providing the compute foundation through its Instinct GPU accelerators and EPYC processors, supporting AI workloads that require performance, efficiency, and scale in private and governed environments.

Four Capabilities Planned for the Stack

The collaboration is intended to help Rackspace complete a curated enterprise AI stack built around four integrated capabilities: Enterprise AI Cloud, Enterprise Inference Engine, Inference as a Service, and Bare Metal AMD Instinct.

The proposed Enterprise AI Cloud would be a fully managed private and hybrid AI environment built on AMD Instinct GPUs, AMD EPYC CPUs, and Rackspace’s governed operating model. Rackspace would assemble, integrate, and operate the stack for enterprises requiring sovereignty, compliance, and operational accountability.

The Enterprise Inference Engine would provide organizations with a context-aware runtime that can carry domain knowledge, session history, and company-specific context across queries. The goal is to help AI agents and large language models deliver more consistent results in production settings. Rackspace would manage the service-level commitments, including availability, scaling, and performance, while allowing organizations to use their own proprietary data with auditability and cost accountability.

Inference as a Service would offer dedicated, managed AMD Instinct GPU capacity, along with developer-ready tools for inference and fine-tuning. Customers would bring their own models and engineering teams, while Rackspace would handle the bare-metal AMD Instinct capacity, hardware-level support, operational management, and performance service objectives.

Bare Metal AMD Instinct would provide dedicated, high-performance compute for customers needing physical isolation, predictable performance, and direct hardware access for demanding training and inference workloads.

Single Operator for Governed AI Infrastructure

Together, the planned capabilities are intended to give enterprises a single accountable operator across the AI infrastructure lifecycle. Rackspace would manage the stack, from bare-metal compute and developer tools to the inference runtime and governed cloud infrastructure.

The goal is to match each workload to the appropriate level of sovereignty, compliance, performance, and oversight. The MOU provides Rackspace and AMD with a framework to collaborate on managed enterprise AI infrastructure for regulated enterprises, sovereign workloads, and production AI environments that require accountability at every layer.
