The Acer Veriton GN100 AI Mini Workstation is one of several Spark-based systems we are evaluating, all built around NVIDIA’s GB10 Grace Blackwell Superchip. Like the others, the GN100 is designed to bring datacenter-class AI compute into a compact desktop form factor, enabling developers and researchers to run and refine models locally rather than relying entirely on cloud infrastructure.

In this configuration, the GN100 pairs the 20-core Arm-based GB10 processor with integrated Blackwell graphics, delivering up to 1 petaFLOP of FP4 AI performance. It is equipped with 128GB of LPDDR5x unified memory and a 4TB PCIe Gen5 NVMe SSD, providing the memory bandwidth and storage throughput needed for large language models, generative AI workflows, and data-heavy experimentation.

As with the other Spark systems in this group, the GN100 supports local AI execution while allowing system-to-system expansion via the integrated NVIDIA ConnectX-7 SmartNIC. Two units can be linked to support larger model workloads beyond a single appliance.

The system ships with NVIDIA DGX OS and the full AI software stack preinstalled, making it ready for immediate deployment in development and research environments.

Acer Veriton GN100 AI Specifications

| Specification | Acer Veriton GN100 AI (GB10) |

|---|---|

| Dimensions & Weight | |

| Height | 2 in |

| Width | 5.9 in |

| Depth | 5.9 in |

| Weight | 2.65 lb |

| Processor | |

| Processor Type | NVIDIA GB10 (Grace Blackwell Superchip) (20 Cores) |

| Integrated Graphics | NVIDIA Blackwell GPU (integrated) |

| Memory | |

| Memory Type | LPDDR5x (Unified System Memory) |

| Memory Configuration | 128 GB LPDDR5x, unified system memory |

| Memory Bandwidth | 273 GB/s (8533 MT/s) |

| Operating System | |

| Supported OS | NVIDIA DGX OS |

| External Ports & Slots | |

| Network Ports | One RJ45 (10GbE) NVIDIA ConnectX-7 NIC (200G × 2 QSFP) |

| USB Ports | Three USB 3.2 Gen 2×2 Type-C (20Gbps) One USB 3.2 Gen 2×2 Type-C with PD in |

| Video Port(s) | One HDMI 2.1a |

| Power Adapter Port | USB Type-C (PD IN) |

| Security Slot | One Kensington Lock |

| Wireless | |

| WiFi | WiFi 7 (AW-EM637, 2×2) |

| Bluetooth | Bluetooth 5.4 |

| Storage | |

| Storage Options | Up to 4 TB NVMe SSD (PCIe Gen5) |

| Power Adapter | |

| Type | 240 W external adapter (USB Type-C) |

Acer Veriton GN100 AI Build And Design

The front of the Veriton GN100 is dominated by a wall of vertical slats that span the width of the chassis. A horizontal accent line cuts across the grille, giving it a unique look compared to the other Spark-based systems we’ve looked at.

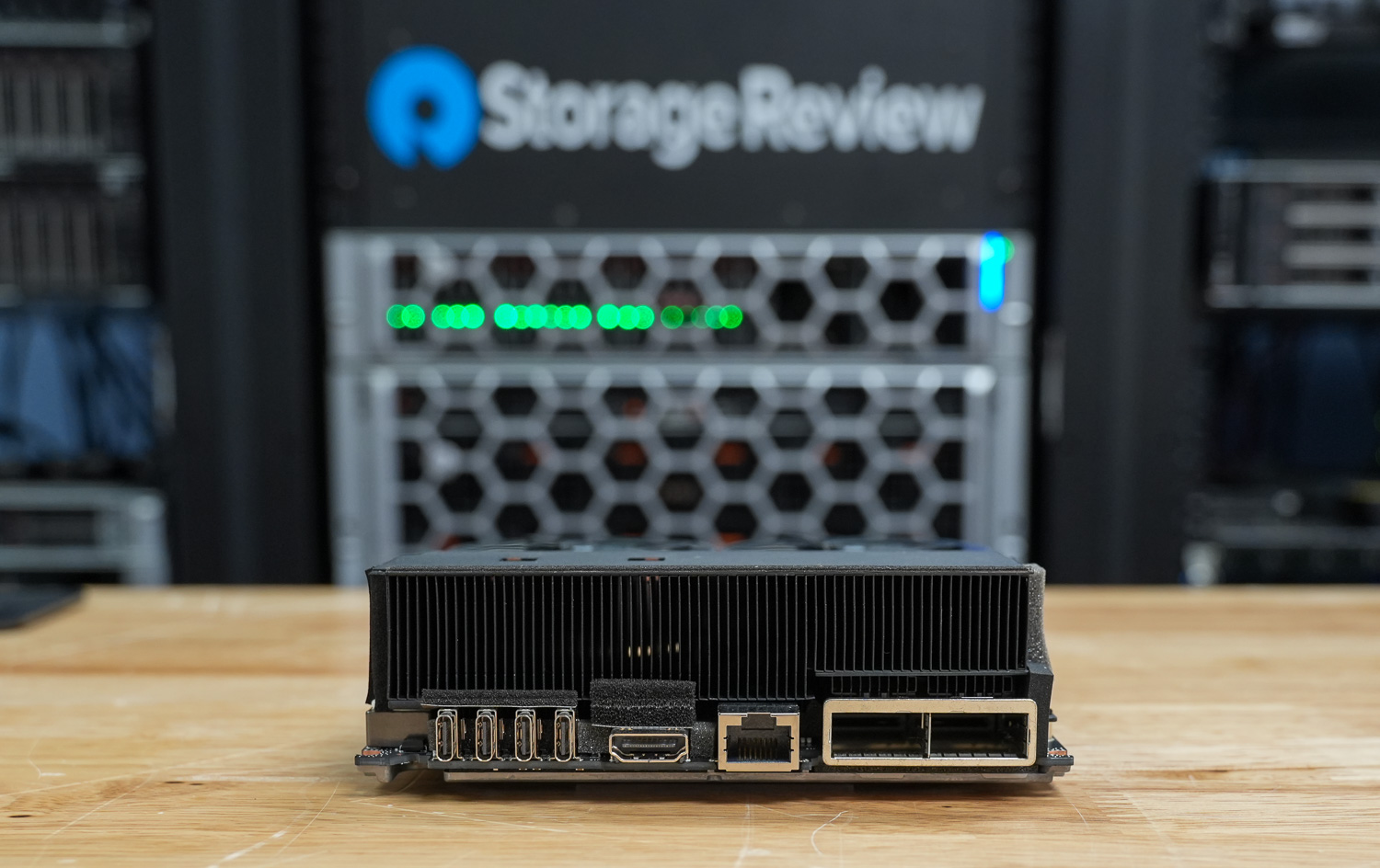

All primary I/O is located at the rear of the system. Acer includes four USB 3.2 Type-C ports, one of which supports power delivery, along with an HDMI 2.1b port for display output. Networking features an RJ-45 Ethernet port and an NVIDIA ConnectX-7 Smart NIC, allowing high-bandwidth connectivity and system linking. Wireless support includes Wi-Fi 7 and Bluetooth 5.1 or later. A Kensington lock slot is available for physical security.

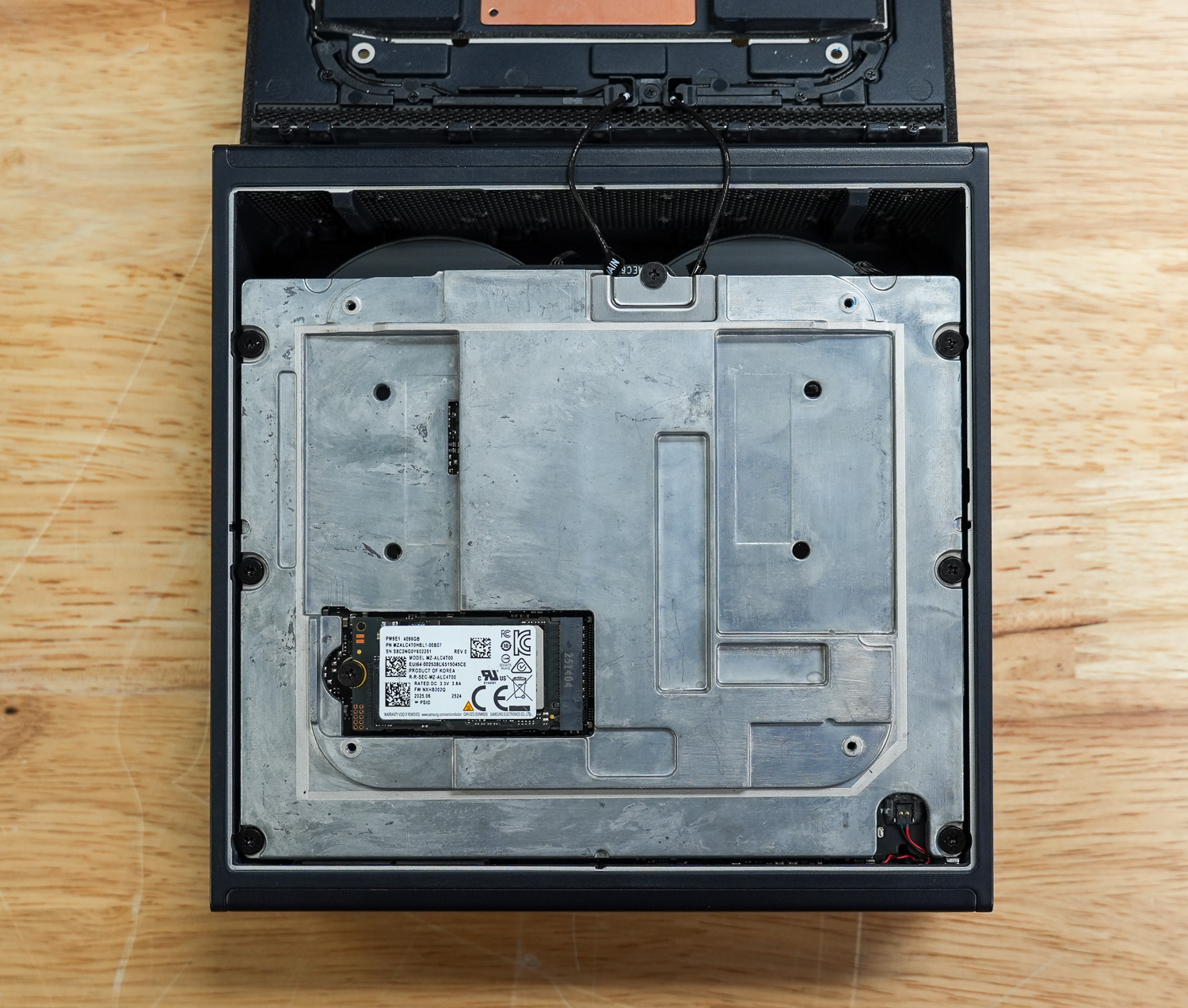

Here, you can see the metal shielding and structural plate that spans the entire chassis, acting as both a reinforcement frame and a heat spreader. In the lower left is an easily accessible M.2 2242 NVMe SSD slot, secured with a single screw and partially tucked beneath the metal plate.

To access the bottom section of the Acer unit, remove the perimeter screws to lift the lower panel away cleanly. Once inside, the layout is straightforward and well organized, providing direct visibility into the cooling assembly, storage area, and mainboard components. The bottom plate itself feels substantial and contributes to the overall rigidity of the chassis.

Compared to the other Spark systems we have evaluated, Acer takes a slightly different approach here. Instead of the more refined painted-metal bottom plate we have seen on the Founders Edition and several OEM implementations, Acer uses a raw, unfinished cast-metal bottom plate. The finish is less polished, with visible casting texture, but it remains thick and structurally sound. Functionally, it still serves the same purpose as a reinforcement frame and secondary heat spreader, though the manufacturing choice clearly reflects a different design and cost philosophy.

Acer Veriton GN100 AI Thermals Testing

To test the Acer Veriton GN100 AI thermals, we compared them against the Founders Edition and OEMs such as Dell, ASUS, and GIBABYTE. We did a deeper dive on this in our Spark Thermal Testing paper.

Across the stack, we monitored components over a given timeframe with three stages to the workload, ramping up utilization over roughly an hour. This allowed us to see the device in extended use and various workload stages. We monitored CPU, GPU, network, NVMe temps, and total power consumption.

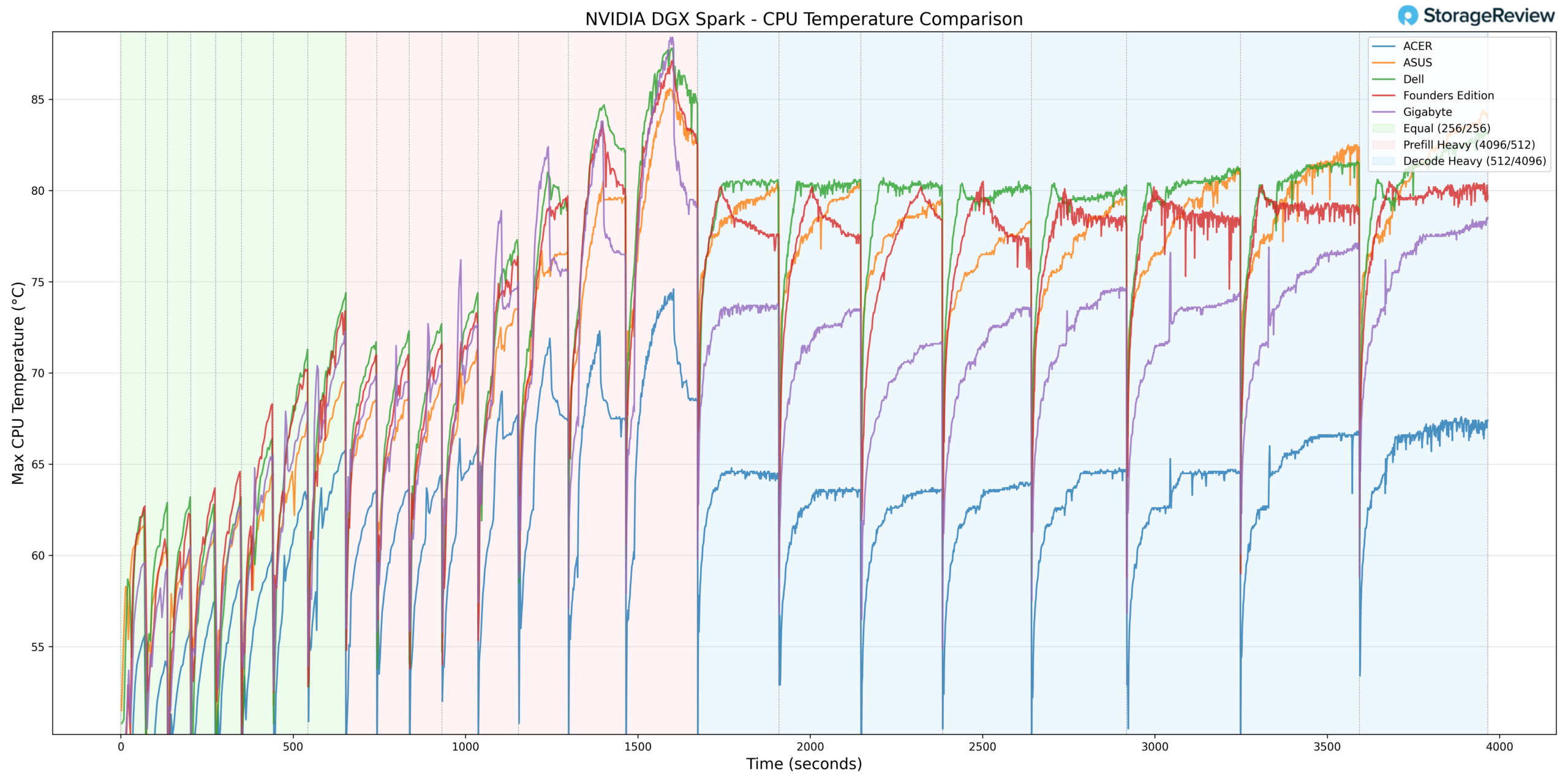

CPU Temperature

During CPU thermal testing, the Acer system reached a peak temperature of 74.7°C during burst-heavy Prefill activity. This represents one of the lowest maximum CPU temperatures observed in the comparison group, indicating a notably conservative or efficient thermal implementation.

As the workload transitioned to Equal ISL/OSL and sustained Decode Heavy phases, CPU temperatures remained well-controlled without aggressive ramping. Rather than climbing into the upper 80°C range seen in some competing systems, Acer maintained a lower sustained operating band, reflecting strong cooling headroom under extended compute load.

At the low end, the CPU recorded a minimum temperature of 37.8°C during idle or light-load conditions. This baseline aligns with the broader stack and reinforces that Acer’s cooling solution remains effective both at rest and under load.

Overall, Acer delivered one of the coolest CPU thermal profiles in the group across both burst and sustained phases.

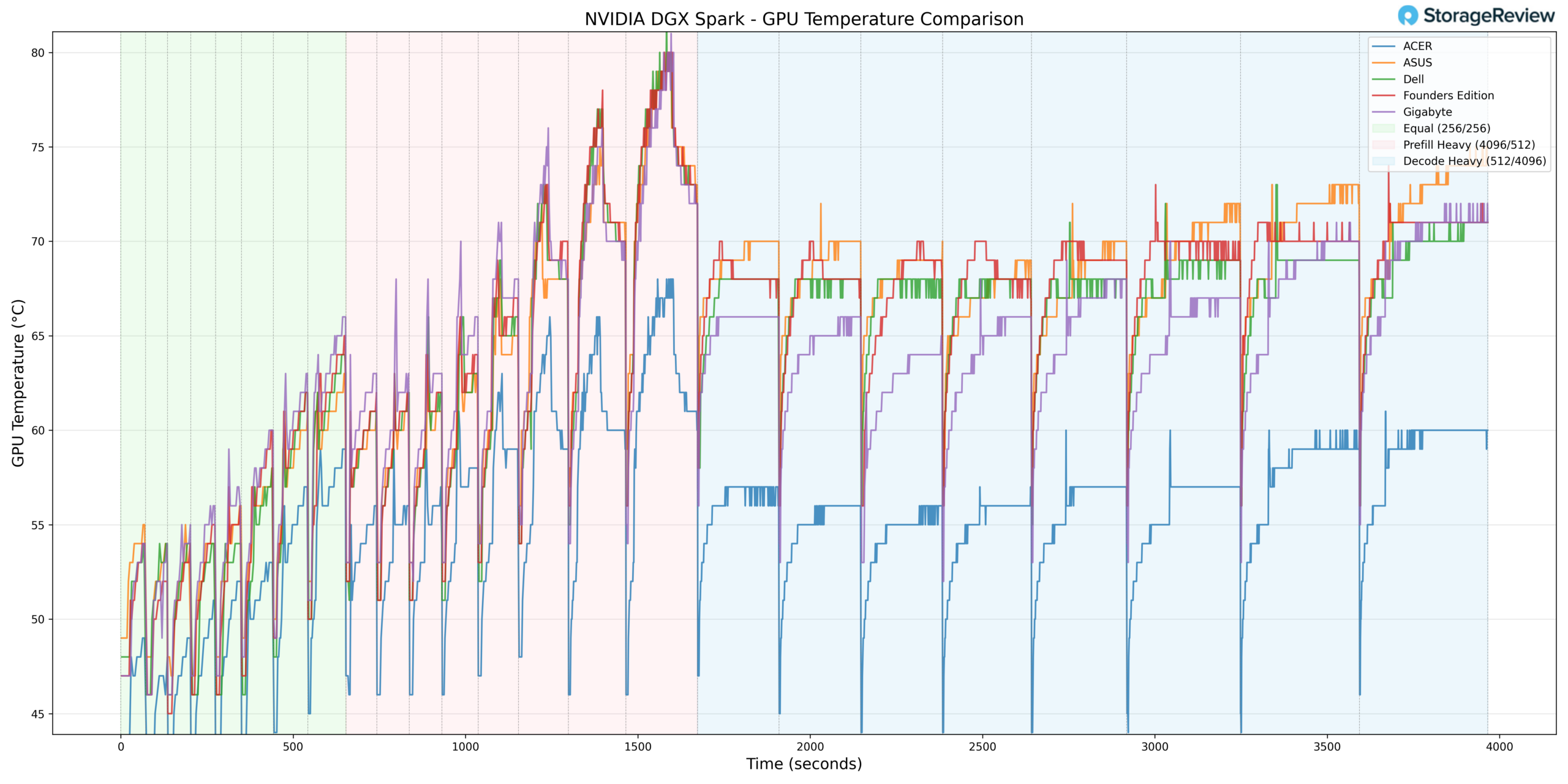

GPU Temperature

GPU thermals followed a similar pattern of moderation. During Prefill Heavy acceleration, the GPU reached a maximum temperature of 69°C, which is significantly lower than that of several competing implementations during burst activity.

As workloads progressed into Equal ISL/OSL and Decode Heavy phases, the GPU stabilized in a controlled range without notable thermal spikes. The system demonstrated consistent behavior under sustained decoding, maintaining a wide margin below upper operating limits.

The minimum GPU temperature recorded was 35°C during lighter phases, representing one of the lowest idle baselines in the stack.

Taken together, Acer exhibited one of the coolest overall GPU implementations under both burst and sustained workloads.

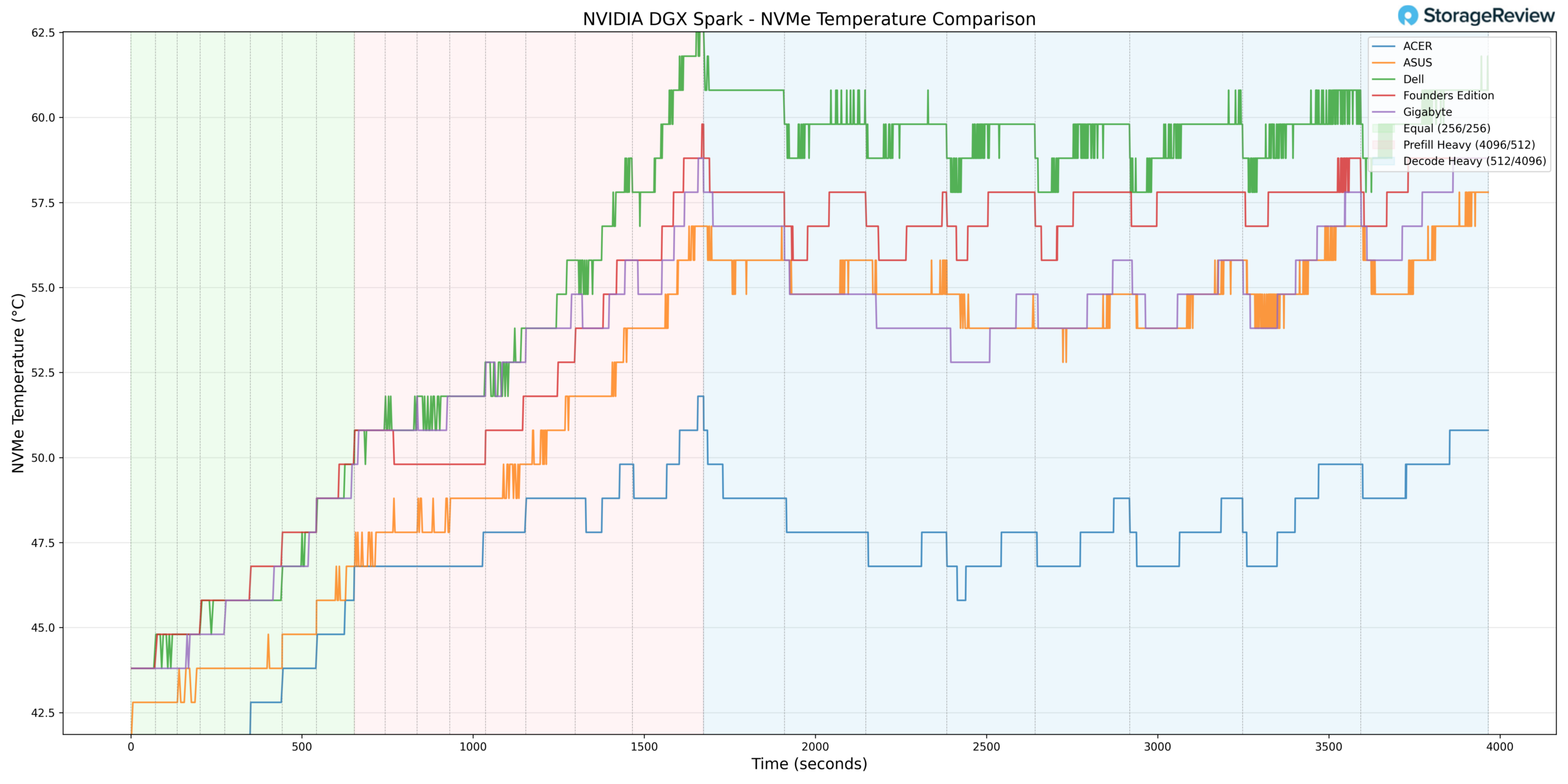

NVMe Temperature

Storage thermals remained well within specification throughout testing. The NVMe drive peaked at 56.8°C during heavier-workload phases, staying comfortably below common throttling thresholds and aligning with the group’s more moderate storage results.

At idle or light utilization, the NVMe temperature dropped to 36.8°C, indicating that the storage subsystem is not thermally constrained under low load.

Overall, Acer maintained competitive NVMe thermals, pairing low sustained temperatures with stable baseline behavior.

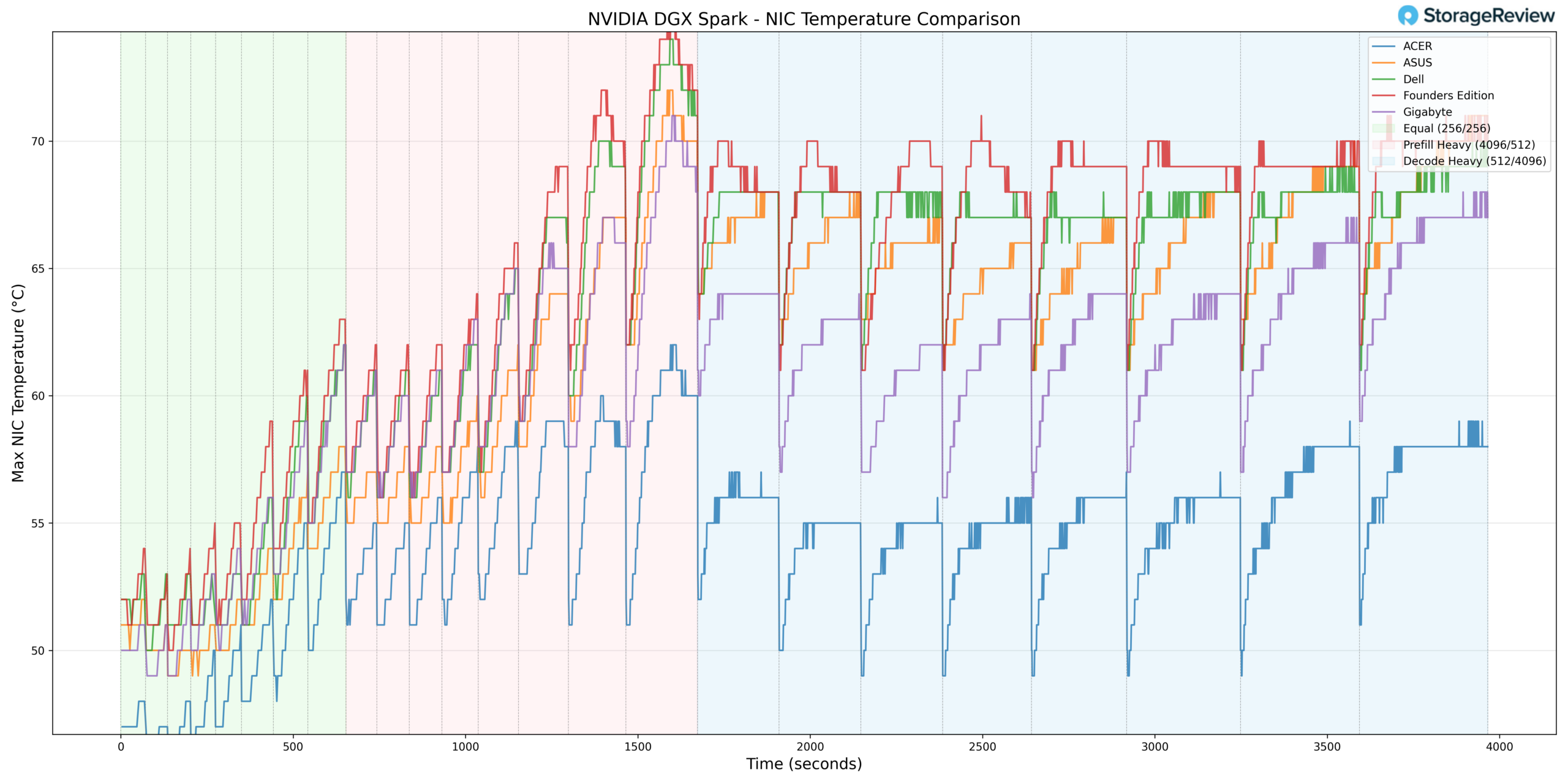

NIC Temperature

NIC thermals peaked at 61°C during heavier workload stages. This represents one of the lower maximum NIC temperatures observed in the comparison group, suggesting effective airflow or component placement within the chassis.

The minimum NIC temperature recorded was 39°C during lighter phases, again reflecting strong baseline thermal behavior.

Throughout testing, the network controller tracked proportionally with workload demand without excessive scaling.

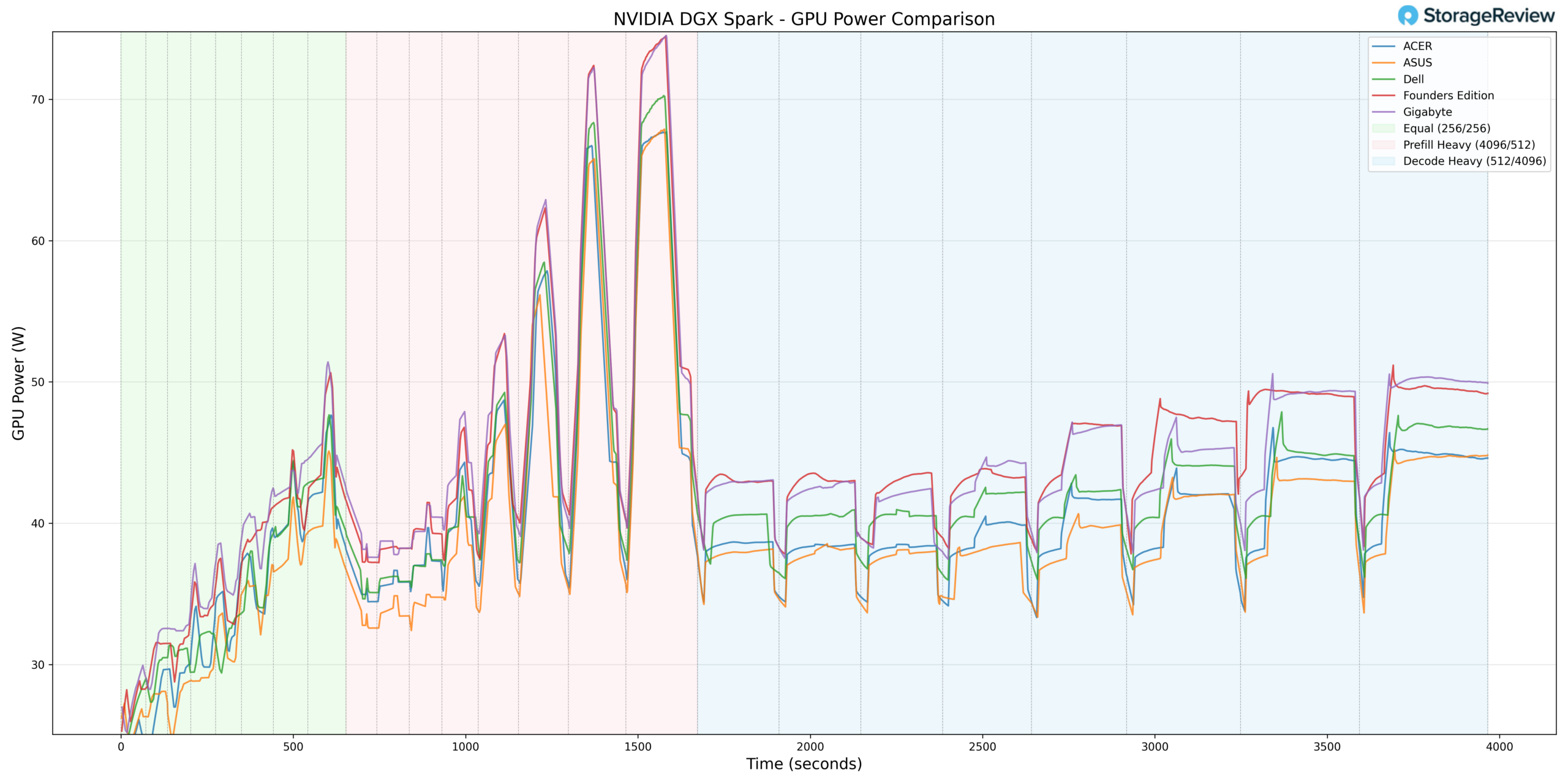

GPU Power Consumption

GPU power draw peaked at 69.18W during Prefill Heavy transitions. This places Acer slightly below the highest power ceilings observed within the GB10 stack.

The lower peak power allocation correlates directly with Acer’s cooler GPU thermal profile. Rather than aggressively pushing toward the upper limit of the power envelope, Acer appears to balance performance and thermal efficiency, resulting in consistently lower component temperatures during burst phases.

During sustained Decode workloads, power consumption stabilized in line with workload demand and remained predictable.

Thermal Summary

Across CPU, GPU, NVMe, and NIC monitoring, the Acer GB10 demonstrated the coolest overall thermal profile in the comparison group. CPU peaked at 74.7°C and GPU at 69°C during burst-heavy transitions, while NVMe remained under 57°C and NIC peaked at 61°C. GPU power draw topped out at 69.18W, slightly below the highest observed values in the stack.

Overall, Acer’s implementation prioritizes thermal efficiency and sustained stability, maintaining substantial headroom during aggressive workload transitions while delivering consistent performance under extended load.

Acer Veriton GN100 Performance Testing

To evaluate the Acer Veriton GN100, we tested Spark units using the vLLM Online Serving benchmark, the most widely adopted high-throughput inference and serving engine for large language models. The vLLM online serving benchmark simulates real-world production workloads by sending concurrent requests to a running vLLM server, measuring key metrics, including total token throughput (tokens per second), time to first token, and time per output token, across varying load conditions.

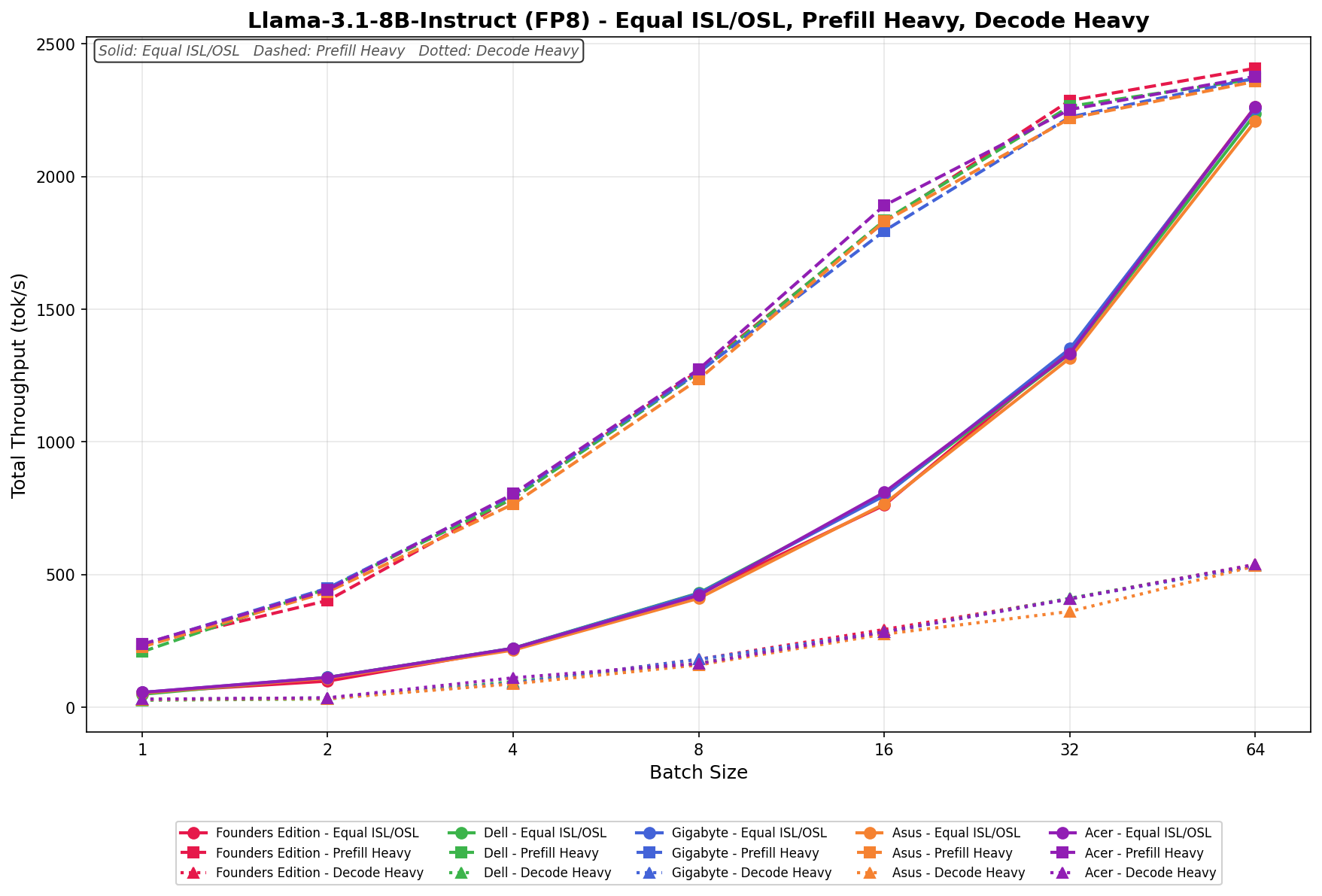

Our testing spanned a range of models, from dense architectures to micro-scaling data types. The tests evaluated performance across three workload scenarios: Equal ISL/OSL, Prefill Heavy, and Decode Heavy. These scenarios represent distinct real-world serving patterns, from balanced input and output loads to compute-intensive prompt processing and memory-bandwidth-bound token generation.

In addition to the Acer Veriton GN100, we benchmarked the NVIDIA Founders Edition Spark as a reference point, alongside OEM systems from ASUS, Dell, and GIGABYTE. This allowed us to place Acer’s results within the broader competitive landscape and understand where it leads, keeps pace with the pack, or trails across different models and workload types.

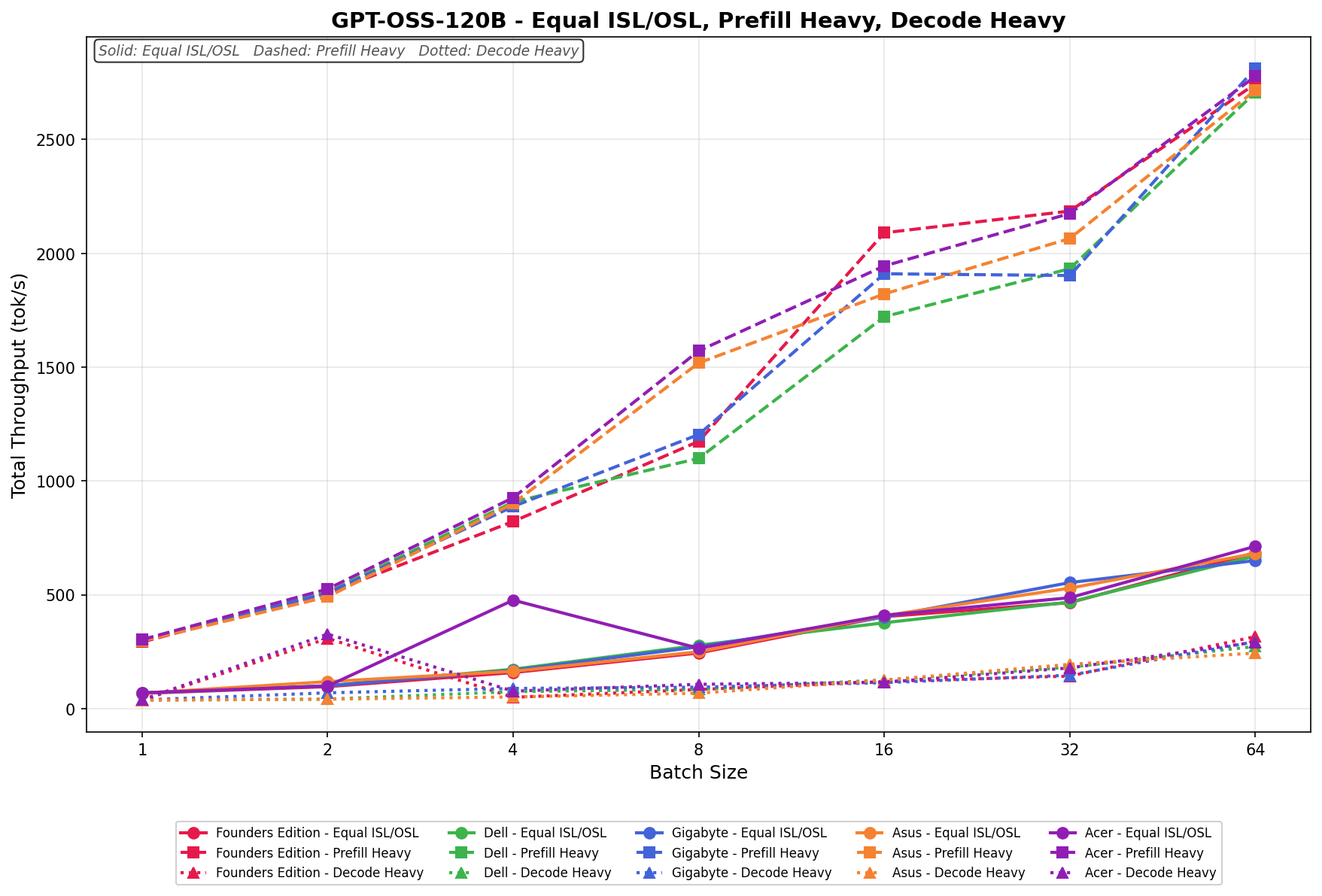

GPT-OSS-120B

In Equal ISL/OSL, the Acer scales from 69.65 to 713.18 tok/s across the batch sweep. Throughput shows some variability at lower batch sizes before stabilizing and growing consistently from batch 8 onward through batch 64.

Prefill Heavy begins at 303.61 tok/s and climbs to 2,777.56 tok/s by batch size 64. Scaling is strong and progressive throughout, with particularly aggressive growth through batch 8 and continued gains at larger batch sizes.

Decode Heavy ranges from 38.38 to 292.41 tok/s, with gradual and consistent scaling across the batch sweep. Lower batch sizes show some variability before throughput stabilizes and grows steadily from batch 16 onward.

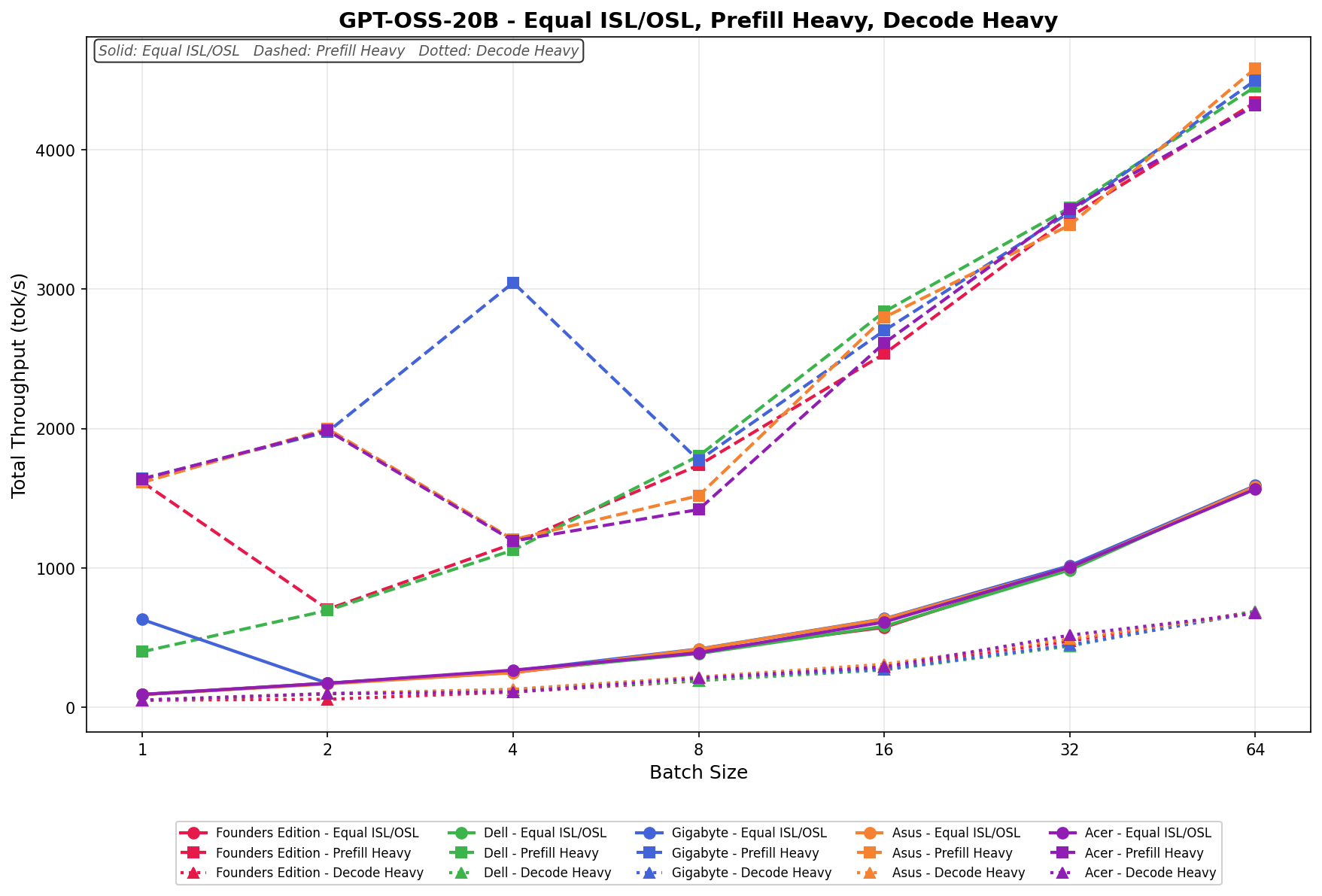

GPT-OSS-20B

In Equal ISL/OSL, the Acer scales from 91.91 to 1,565.62 tok/s with strong and consistent gains across the full batch sweep. Throughput approximately doubles from batch 1 through batch 4, then continues climbing through batches 32 and 64.

Prefill Heavy begins at 1,637.72 tok/s and climbs to 4,317.73 tok/s as the batch size increases to 64. Growth is strong through batch 2, moderates through the mid-range, then accelerates again at batch 32 and 64.

Decode Heavy ranges from 50.55 to 674.26 tok/s, with gradual and consistent scaling across the batch sweep.

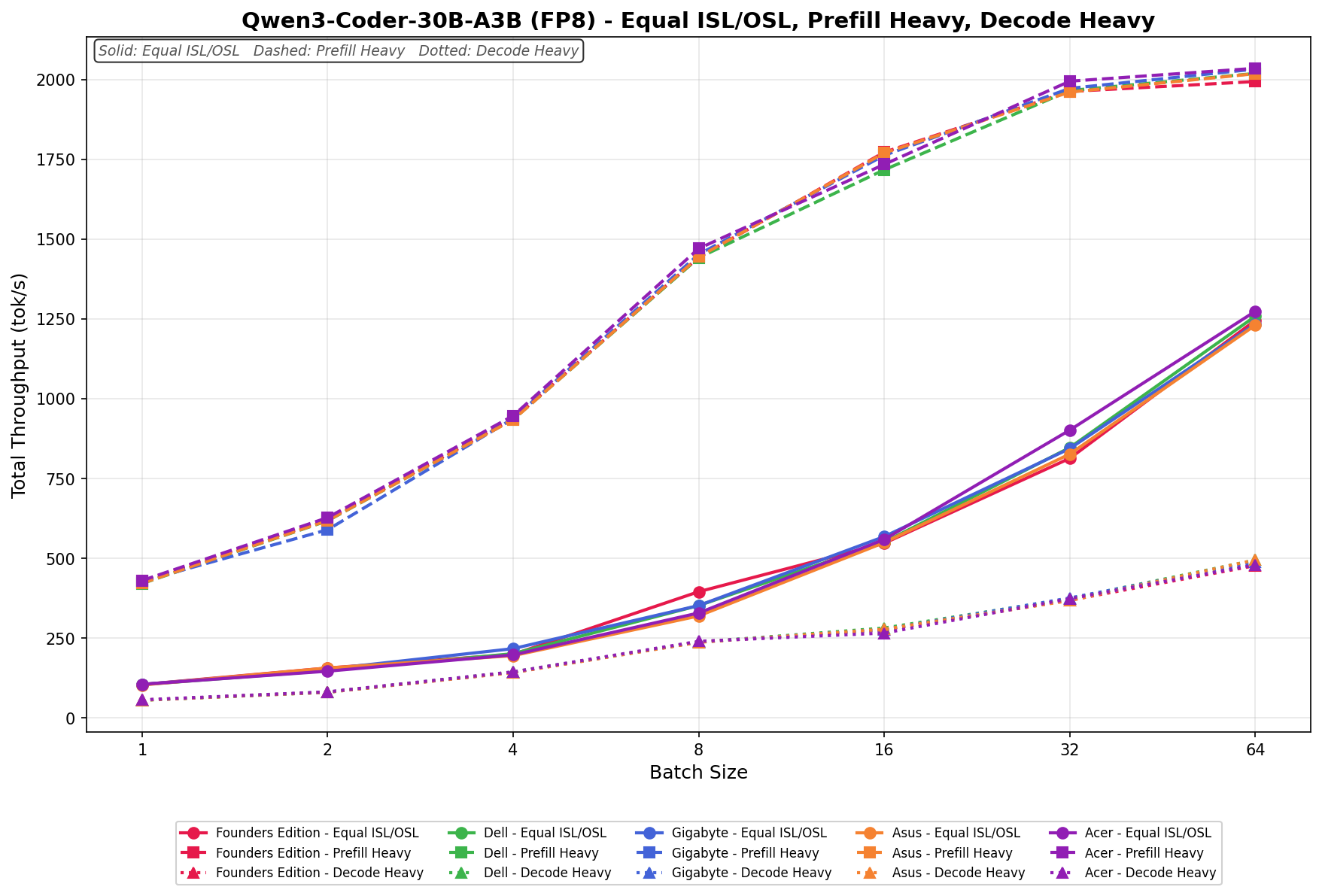

Qwen3 coder 30B A3B FB8

In Equal ISL/OSL, the Acer scales from 104.64 to 1,273.47 tok/s, with steady, consistent growth across the full batch sweep. Throughput approximately doubles at each step through batch 16, then continues to climb through batches 32 and 64.

Prefill Heavy begins at 429.86 tok/s and grows to 2,034.76 tok/s by batch size 64. Scaling is strong through batch 8, then moderates as the workload approaches a plateau at batch 32 and 64.

Decode Heavy ranges from 55.94 to 478.59 tok/s, with gradual and consistent scaling across the batch sweep.

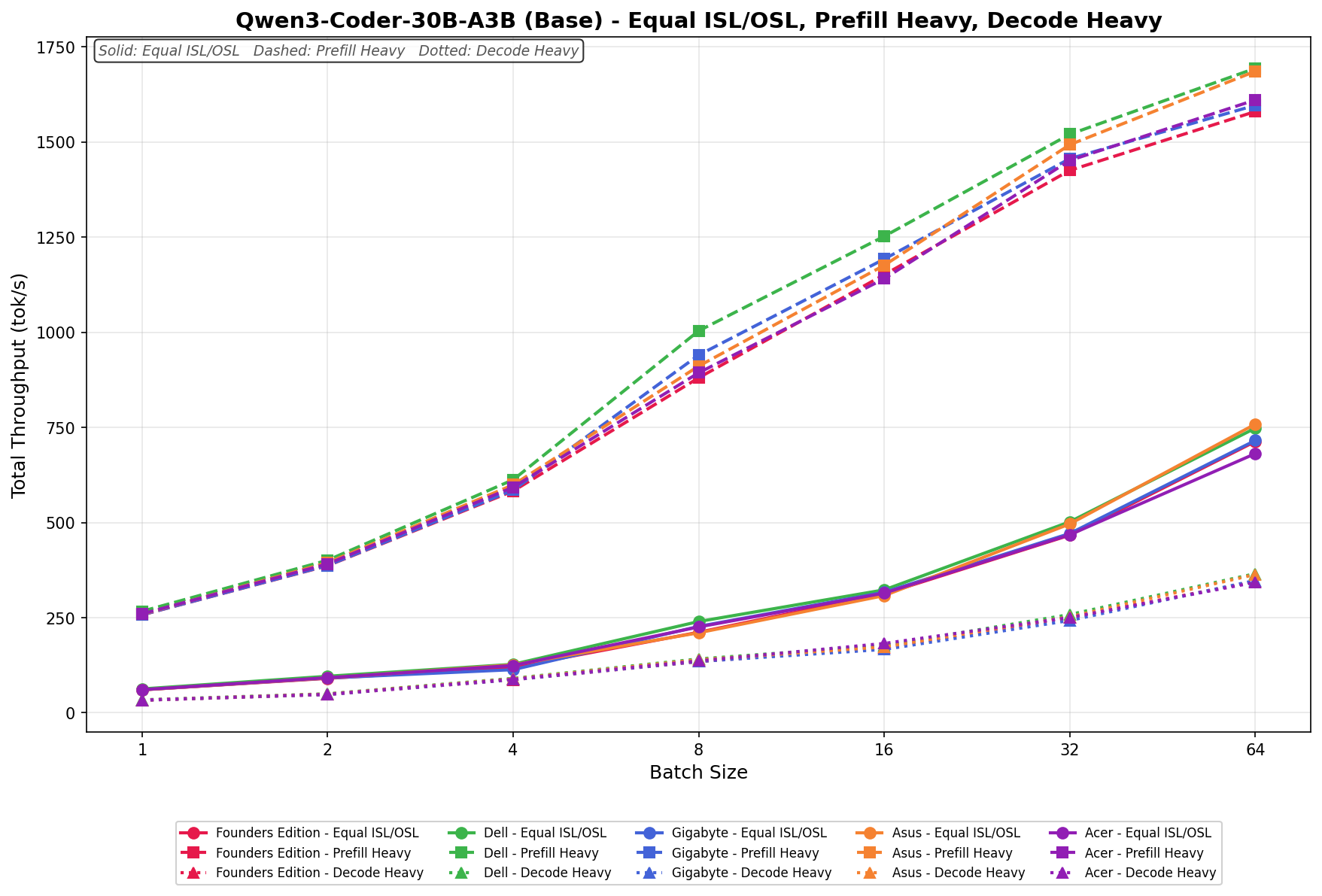

Qwen3 coder 30B A3B Base

In Equal ISL/OSL, the Acer scales from 60.71 to 681.18 tok/s, delivering a meaningfully lower throughput than the FP8 variant across all batch sizes. Growth is steady through the sweep, though the gap to FP8 widens at higher batch sizes.

Prefill Heavy begins at 260.11 tok/s and climbs to 1,610.56 tok/s by batch size 64. Scaling is consistent across the sweep, though throughput sits well below the FP8 counterpart at every batch size.

Decode Heavy ranges from 33.31 to 342.79 tok/s, with lower output than the FP8 variant throughout the sweep. Growth is gradual and consistent across all batch sizes.

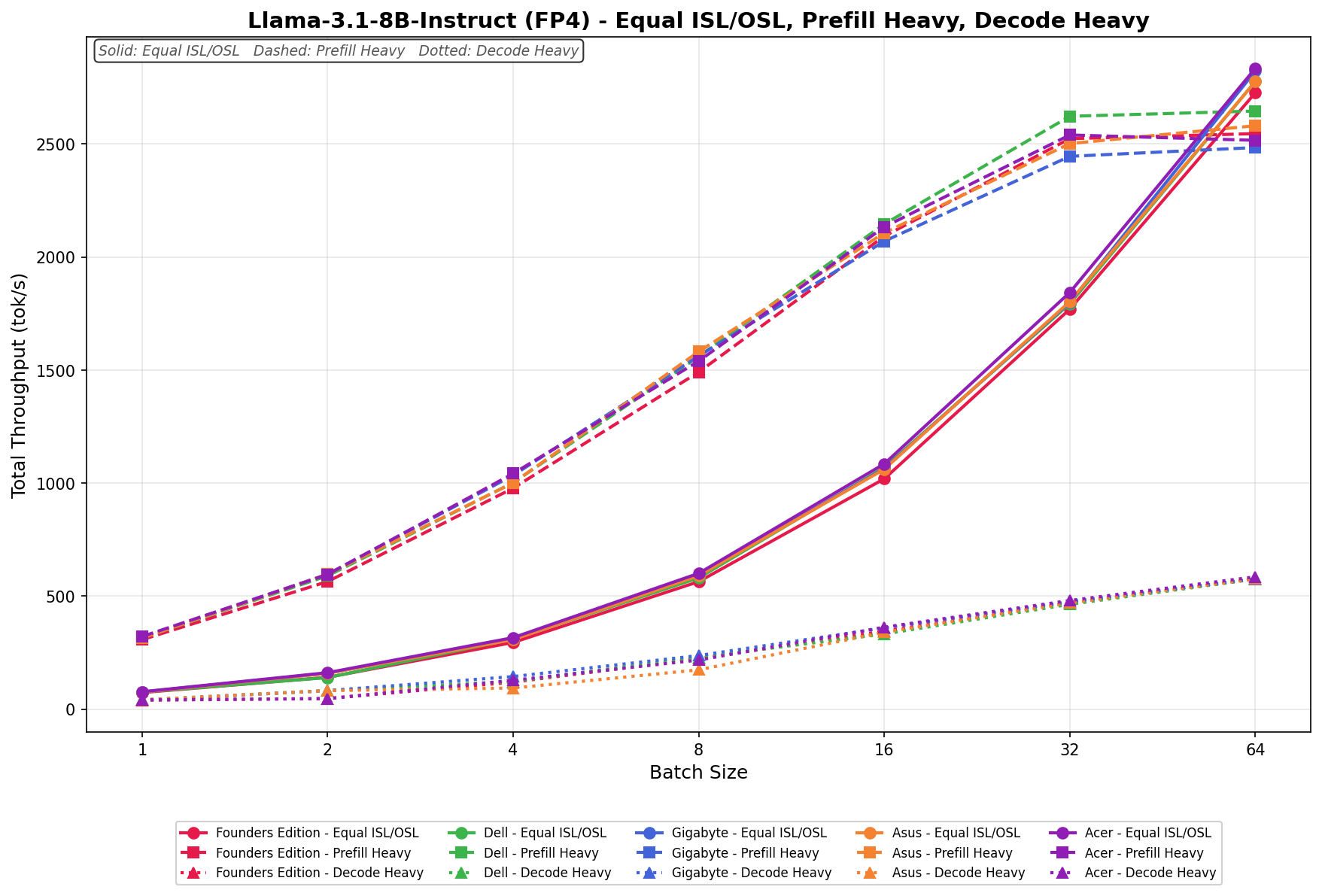

Llama 3.1 8B Instruct FP4

In Equal ISL/OSL, the Acer scales from 77.15 to 2,834.70 tok/s, delivering a clear throughput advantage over the FP8 variant across all batch sizes. Scaling is smooth and linear through batch 32, with continued strong gains at batch 64.

Prefill Heavy begins at 321.54 tok/s and climbs to 2,539.85 tok/s at batch 32 before plateauing slightly at 2,516.13 tok/s at batch 64. The FP4 model maintains higher throughput than its FP8 counterpart across mid- and upper-batch sizes.

Decode Heavy ranges from 41.21 to 585.63 tok/s, with notably higher output than the FP8 variant across all batch sizes. Growth is consistent across the full sweep.

Llama 3.1 8B Instruct FP8

In Equal ISL/OSL, the Acer scales from 55.93 to 2,262.73 tok/s across the batch sweep, with steady and consistent gains at every step. Throughput nearly doubles from batch 1 through batch 8 and continues climbing through batch 32 and 64 with no signs of saturation.

Prefill Heavy begins at 237.62 tok/s and grows to 2,376.88 tok/s by batch size 64. Scaling is strong and progressive throughout, with throughput growing consistently across the full batch sweep.

Decode Heavy ranges from 30.39 to 538.18 tok/s, with consistent growth across the batch sweep.

GPU Direct Storage

One of the tests we conducted on the Spark was the MagnumIO GPU Direct Storage (GDS) test. GDS is a feature developed by NVIDIA that allows GPUs to bypass the CPU when accessing data stored on NVMe drives or other high-speed storage devices. Instead of routing data through the CPU and system memory, GDS enables direct communication between the GPU and the storage device, significantly reducing latency and improving data throughput.

Acer uses the 4TB Samsung PM9E1 Gen5 SSD inside the Veriton GN100 AI, which to date is the fastest 2242 M.2 drive we’ve seen on the market.

How GPU Direct Storage Works

Traditionally, when a GPU processes data stored on an NVMe drive, the data must first travel through the CPU and system memory before reaching the GPU. This process introduces bottlenecks because the CPU acts as a middleman, adding latency and consuming valuable system resources. GPU Direct Storage eliminates this inefficiency by enabling the GPU to access data directly from the storage device via the PCIe bus. This direct path reduces data movement overhead, enabling faster and more efficient data transfers.

AI workloads, especially those involving deep learning, are highly data-intensive. Training large neural networks requires processing terabytes of data, and any delay in data transfer can lead to underutilized GPUs and longer training times. GPU Direct Storage addresses this challenge by ensuring that data is delivered to the GPU as quickly as possible, minimizing idle time and maximizing computational efficiency.

In addition, GDS is particularly beneficial for workloads that involve streaming large datasets, such as video processing, natural language processing, or real-time inference. By reducing the reliance on the CPU, GDS accelerates data movement and frees up CPU resources for other tasks, further enhancing overall system performance.

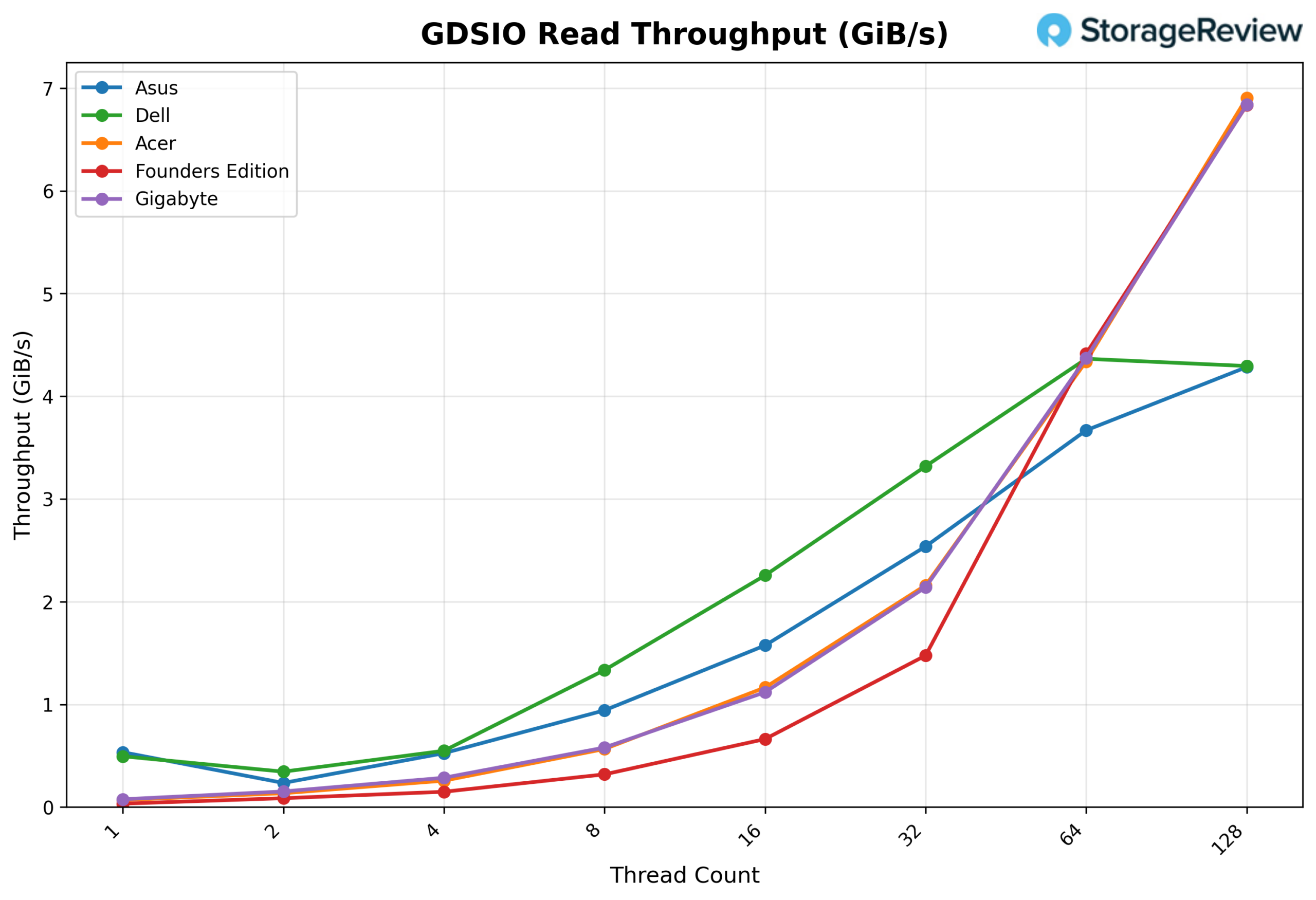

GDSIO Read Throughput 16k

Looking at GDSIO Read Throughput 16K, the Acer begins at 0.07 GiB/s at 1 thread and scales linearly through 2 threads (0.13 GiB/s), 4 threads (0.26 GiB/s), and 8 threads (0.57 GiB/s). Throughput continues to climb at 16 threads (1.16 GiB/s), 32 threads (2.16 GiB/s), and 64 threads (4.34 GiB/s). At 128 threads, throughput reaches 6.91 GiB/s, the highest observed result in the sweep, with no clear saturation point indicating the platform continues to scale at small I/O sizes.

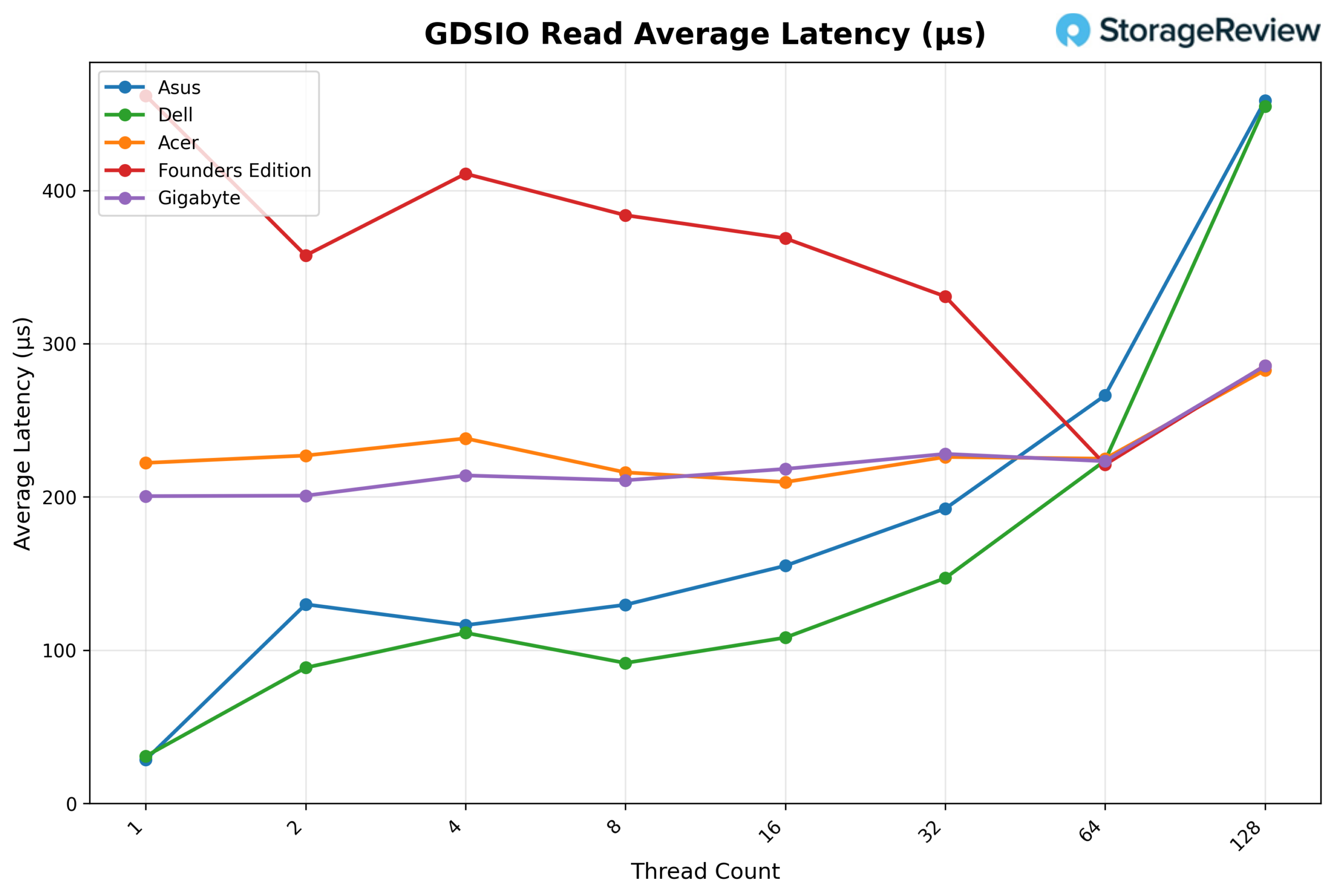

GDSIO Read Average Latency 16K

Looking at GDSIO Read Average Latency (16K), the Acer starts at approximately 0.22ms at 1 thread and remains remarkably stable across the sweep: 0.23ms at 2 threads, 0.24ms at 4 threads, and 0.22ms at 8 threads. Latency stays similarly flat at 16 threads (0.21ms), 32 threads (0.23ms), and 64 threads (0.22ms), before rising slightly to 0.28ms at 128 threads. This near-flat latency profile across all thread counts reflects the continued scaling behavior seen in throughput.

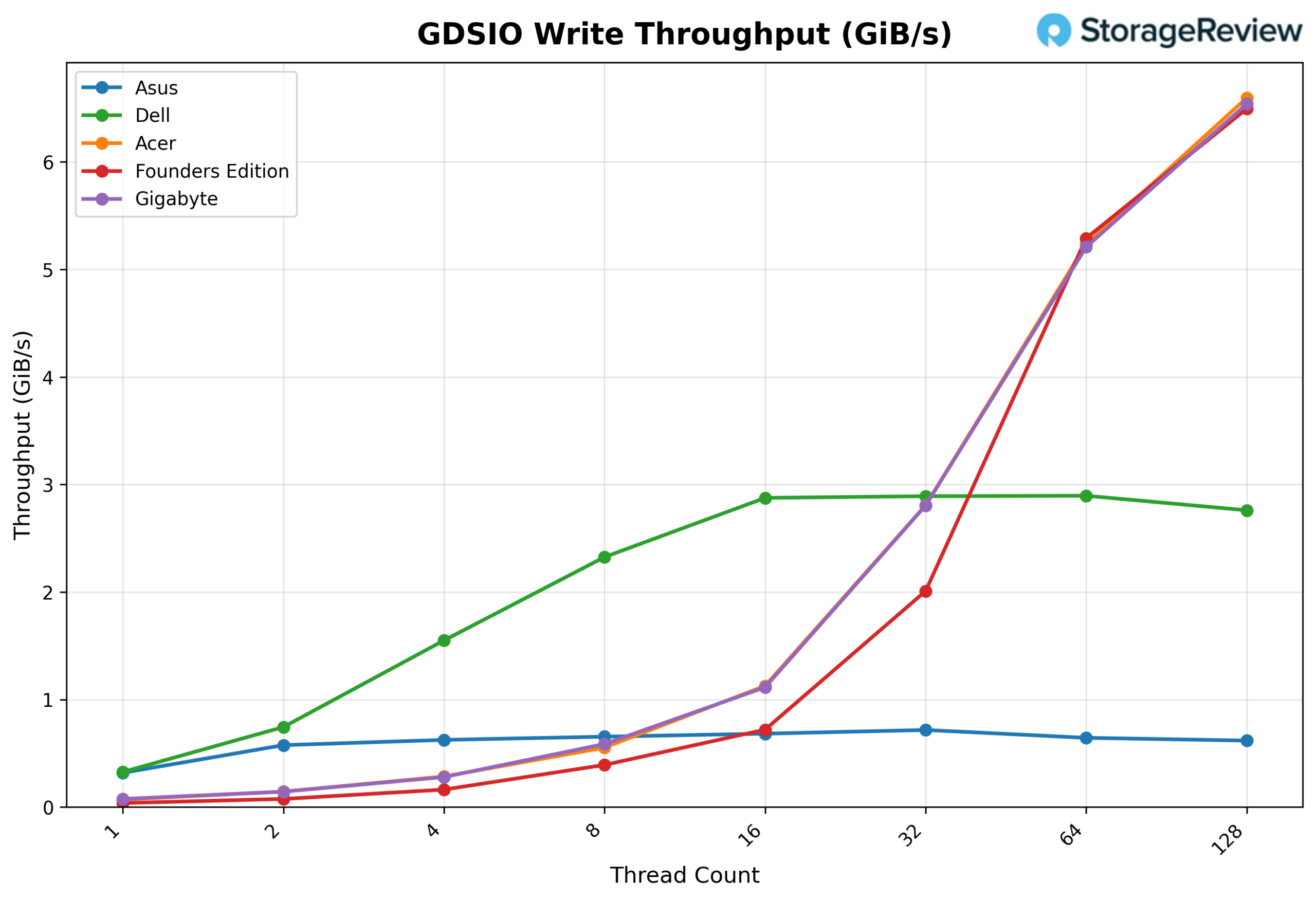

GSDIO Write Throughput 16K

Looking at GDSIO Write Throughput 16K, the Acer begins at 0.07 GiB/s at 1 thread and scales through 2 threads (0.14 GiB/s), 4 threads (0.28 GiB/s), and 8 threads (0.55 GiB/s). Growth accelerates at 16 threads (1.13 GiB/s), 32 threads (2.81 GiB/s), and 64 threads (5.23 GiB/s). At 128 threads, throughput reaches 6.60 GiB/s, the peak of the sweep, again showing no clear saturation and continued scaling at small block sizes.

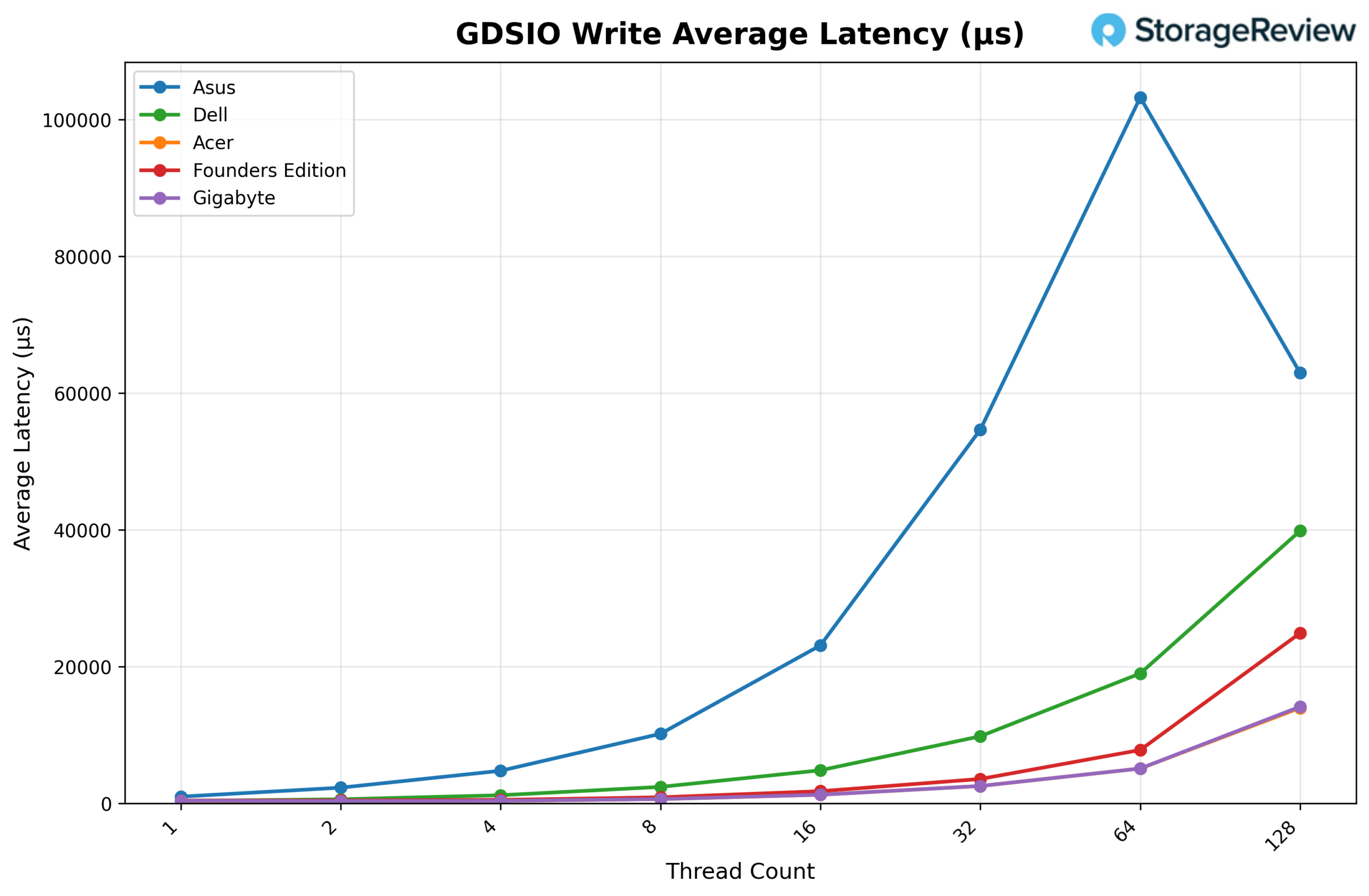

GDSIO Write Average Latency 16K

Looking at GDSIO Write Average Latency (16K), the Acer starts at approximately 0.22ms with 1 thread and remains stable at 2 threads (0.21ms), 4 threads (0.22ms), and 8 threads (0.22ms). Latency dips slightly to 0.17ms at 32 threads before rising modestly to 0.19ms at 64 threads. At 128 threads, latency climbs to 0.30ms, still relatively low compared to the 1M results, consistent with the continued scaling behavior at this smaller I/O size.

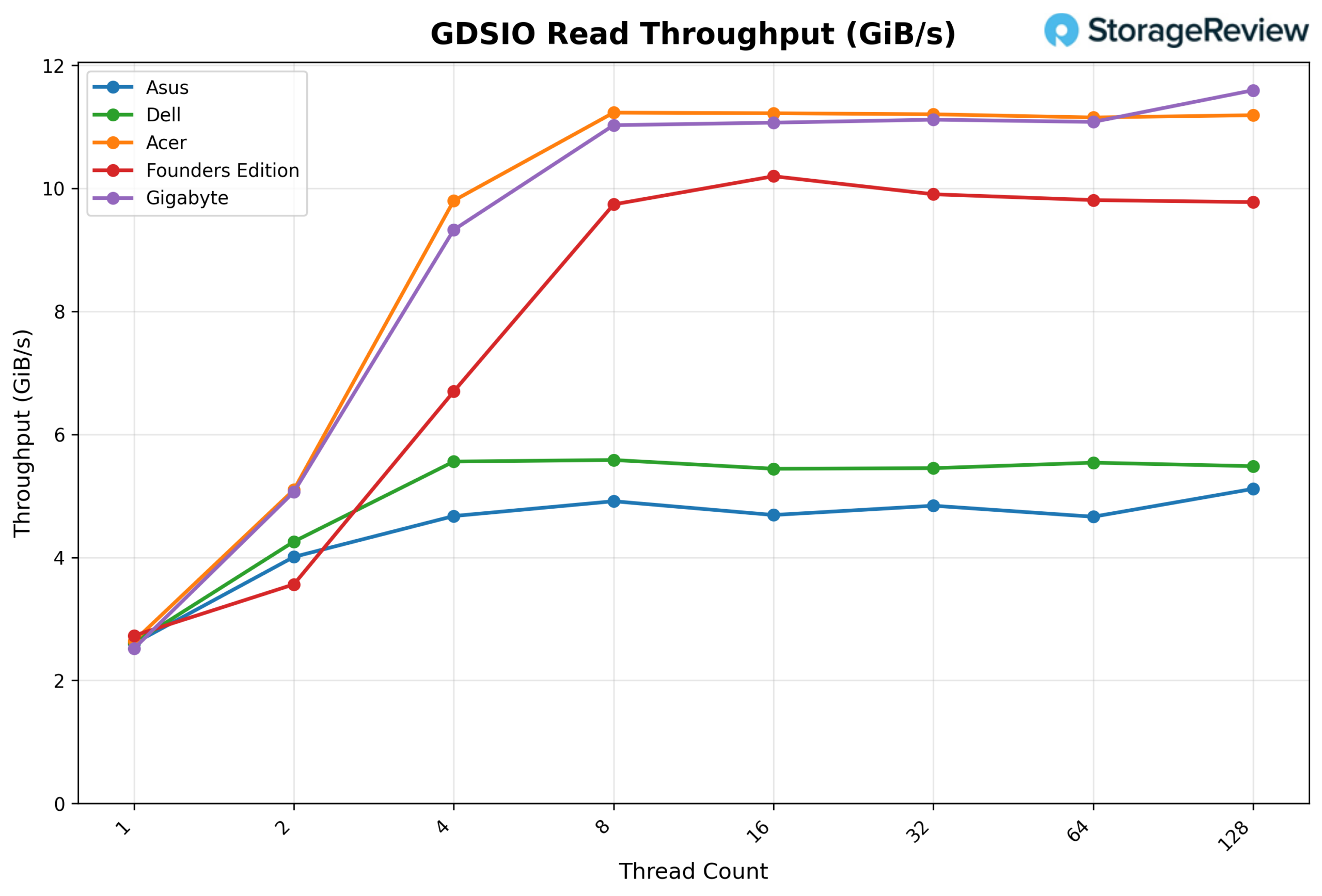

GDSIO Read Throughput 1M

Looking at GDSIO Read Throughput 1M, the Acer begins at 2.64 GiB/s on 1 thread, scales to 5.10 GiB/s on 2 threads, and 9.80 GiB/s on 4 threads. By 8 threads, throughput reaches 11.23 GiB/s, after which the platform effectively saturates. Performance remains consistent at 16 threads (11.22 GiB/s), 32 threads (11.21 GiB/s), and 64 threads (11.15 GiB/s), showing a stable plateau. At 128 threads, throughput holds steady at 11.19 GiB/s, confirming the saturation ceiling across the full sweep.

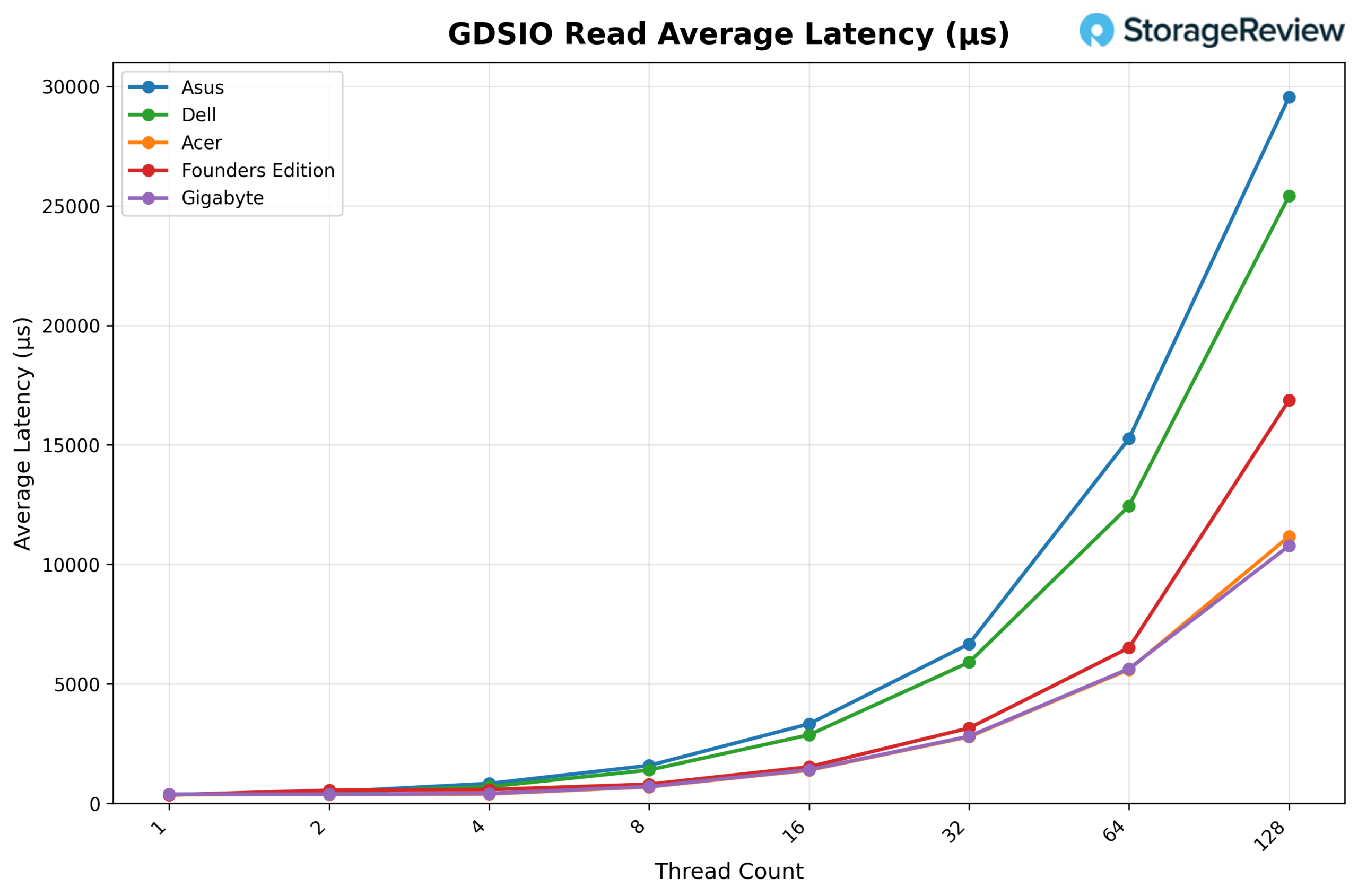

GDSIO Read Average Latency 1M

Looking at GDSIO Read Average Latency (1M), the Acer starts at approximately 0.37ms at 1 thread and remains similar at 2 threads (0.38ms) and 4 threads (0.40ms). Latency increases with concurrency, rising to 0.70ms at 8 threads, 1.39ms at 16 threads, and 2.79ms at 32 threads. The upward trend continues at 64 threads (5.60ms) and reaches 11.17ms at 128 threads, corresponding with peak concurrency levels, while throughput remains largely sustained.

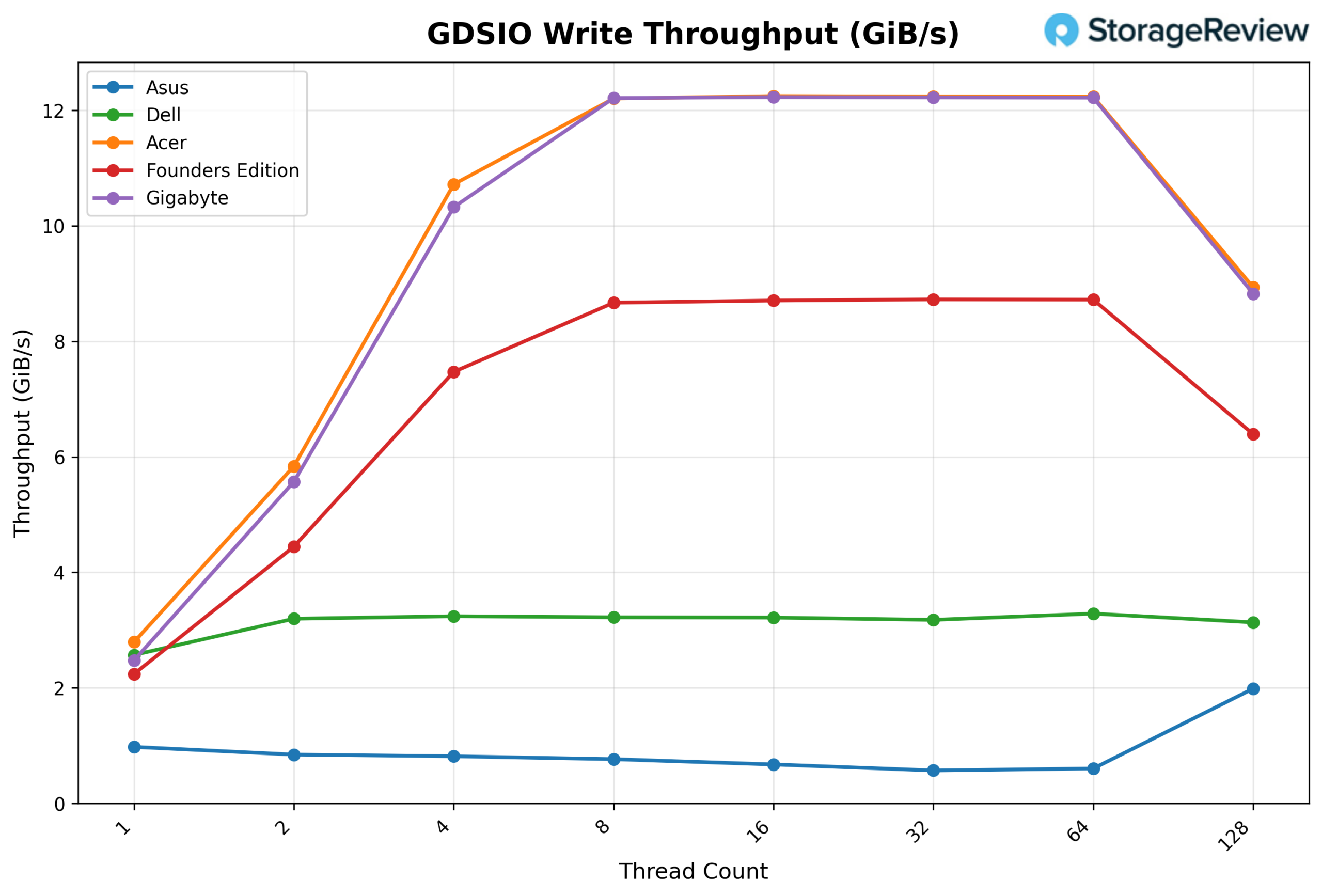

GDSIO Write Throughput 1M

Looking at GDSIO Write Throughput 1M, the Acer begins at 2.79 GiB/s on 1 thread and scales strongly to 5.84 GiB/s on 2 threads and 10.72 GiB/s on 4 threads. By 8 threads, throughput reaches 12.20 GiB/s, and the platform effectively saturates. Performance remains consistent at 16 threads (12.25 GiB/s), 32 threads (12.24 GiB/s), and 64 threads (12.23 GiB/s), showing a stable plateau. At 128 threads, throughput drops notably to 8.94 GiB/s, suggesting contention or resource exhaustion at the highest concurrency level.

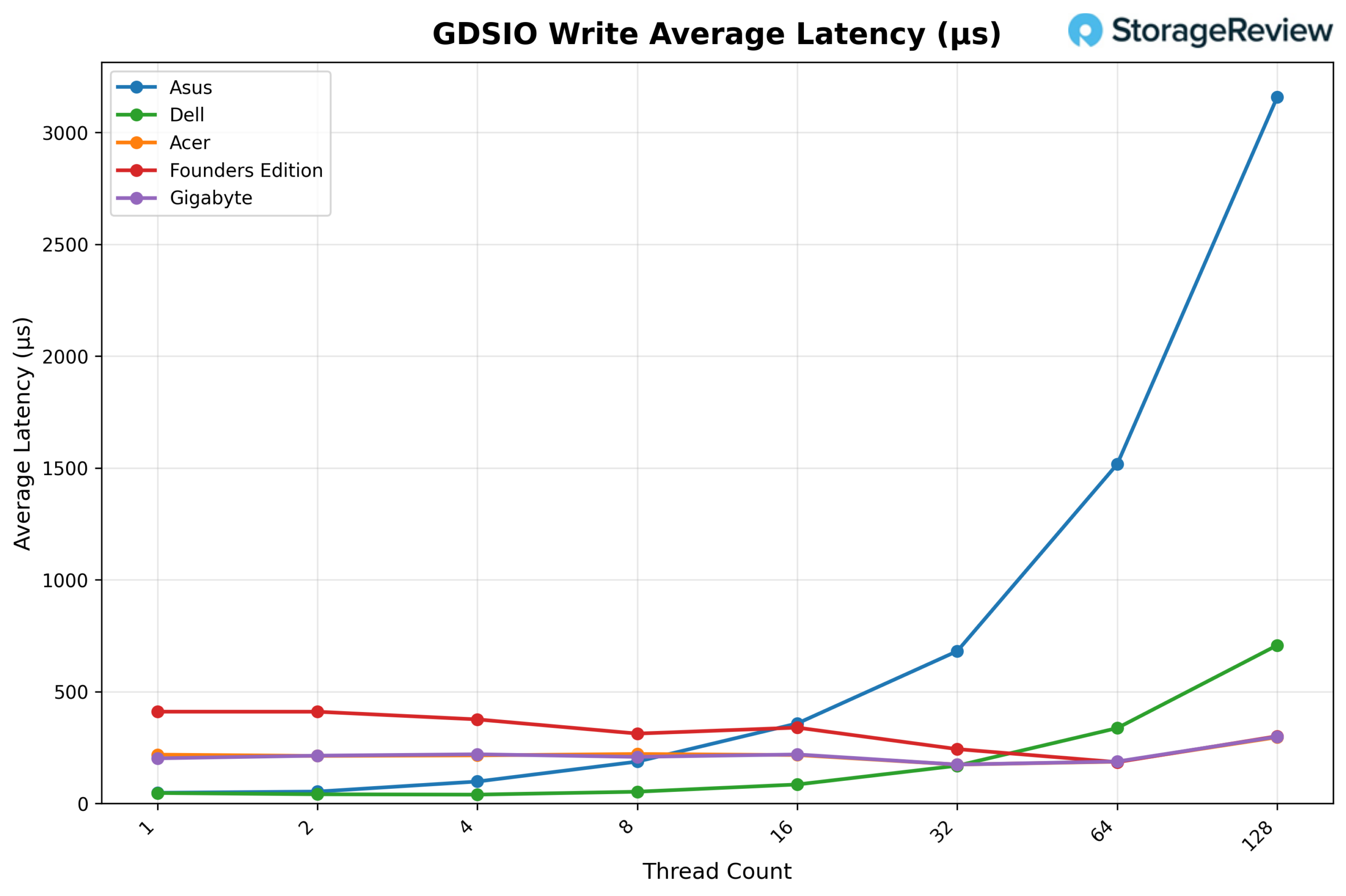

GDSIO Write Average Latency 1M

Looking at GDSIO Write Average Latency (1M), the Acer starts at approximately 0.35ms with 1 thread and remains low at 2 threads (0.33ms) and 4 threads (0.36ms). Latency rises with higher concurrency, reaching 0.64ms at 8 threads, 1.28ms at 16 threads, and 2.55ms at 32 threads. The upward trend continues at 64 threads (5.11ms) and climbs sharply to 13.98ms at 128 threads, consistent with the throughput degradation observed at maximum concurrency.

Conclusion

The Acer Veriton GN100 posted the coolest overall thermal profile in our Spark comparison. During burst-heavy Prefill transitions and sustained Decode workloads, it consistently maintained lower peak CPU and GPU temperatures than the rest of the group. Rather than operating in the upper 80°C range under load, it remained within a more controlled operating band throughout extended runs, indicating effective airflow design and balanced power tuning.

Storage performance was another clear strength. Equipped with the 4TB Samsung PM9E1 Gen5 SSD, the GN100 delivered the strongest small-block GPU Direct Storage scaling in the group. At 16K block sizes, read and write latency remained remarkably flat across thread counts while throughput scaled cleanly to the top of the chart. At 1M transfers, the platform reached saturation quickly and sustained competitive ceilings through mid-level concurrency before tapering at the highest thread counts.

In vLLM inference testing, performance remained tightly grouped with the broader Spark ecosystem, as expected given the shared GB10 foundation. The separation emerged not in raw compute output, but in thermals and storage behavior under load.

Across the Spark lineup, architectural consistency keeps inference performance closely aligned. What distinguishes the GN100 in this round is its cooler sustained operating profile paired with strong Gen5 NVMe performance, making it one of the more thermally efficient and storage-forward implementations we have tested so far.

Amazon

Amazon