On December 15, 2025, Microsoft announced that Windows Server 2025 would finally adopt the NVMe standard natively in its storage architecture. However, NVMe storage has been a popular storage format on servers, enterprise workstations, and consumer PCs for many years, with OS compatibility built in since Windows Server 2012 R2 and Windows 8.1. With this in mind, an announcement about “native” NVMe support might not seem important or even newsworthy, but we promise there’s more to this than meets the eye.

What Does “Native NVMe” Even Mean?

In previous versions of the Windows consumer and server edition storage stacks, commands used to read and write data, regardless of the underlying hardware’s protocols, were always translated into SCSI commands. The Small Computer System Interface (SCSI) standard dates back to the early 1980s and was designed to connect peripherals and storage drives to computers (Storage Networking Industry Association, n.d.). It is the foundation of several modern storage protocols used for various workloads, including network-connected protocols such as iSCSI (Internet Small Computer System Interface) and FCP (Fibre Channel Protocol), and local storage interfaces such as SAS (Serial Attached SCSI) and UASP (USB Attached SCSI).

The Old Way

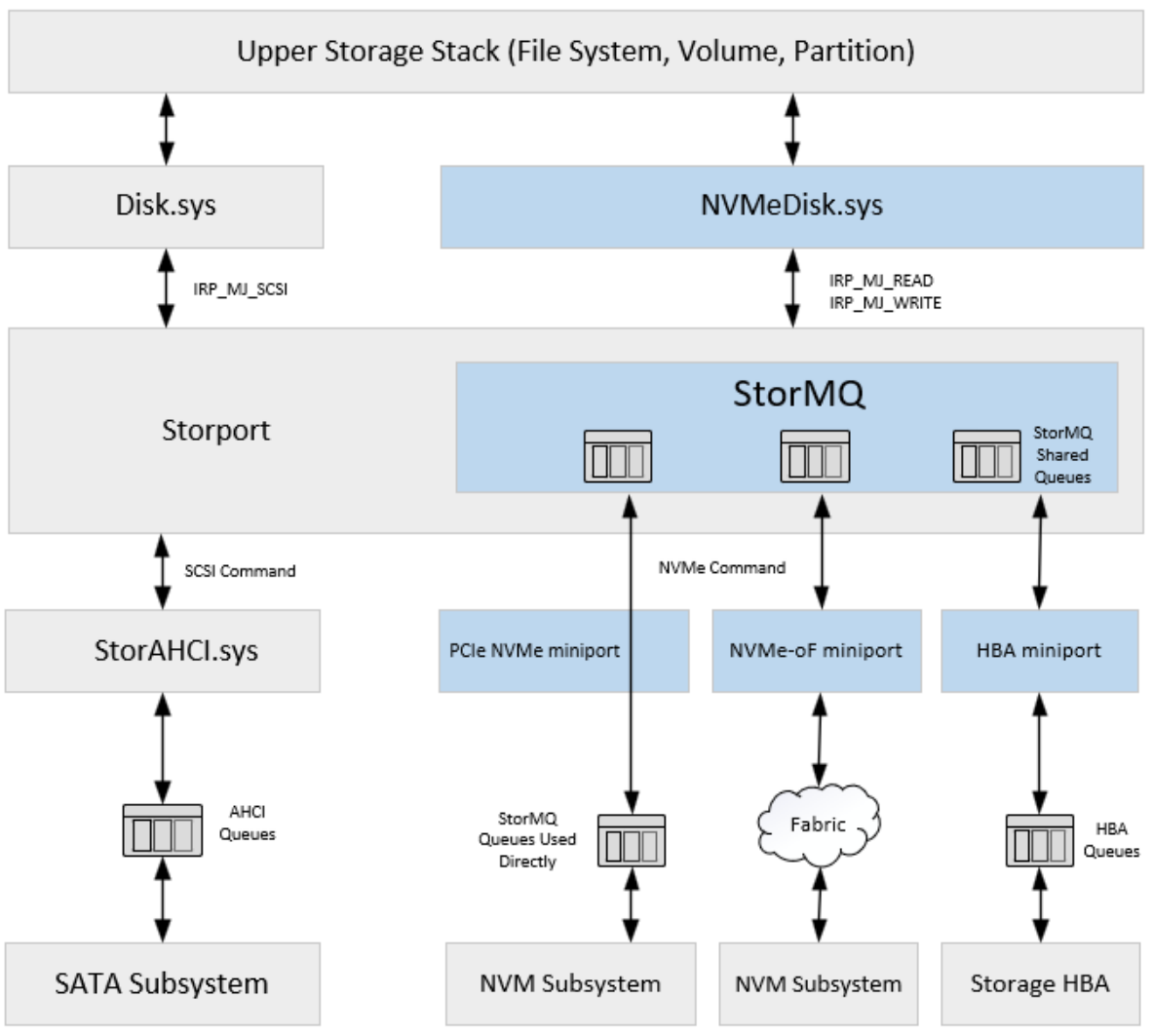

By converting different protocols into SCSI commands, Microsoft unified storage commands at higher levels of the operating system, but in doing so it sacrificed many of the scalability and performance improvements of modern storage architectures. The old path for I/O operations went like this:

- Read and write operations take place in the upper storage stack at the filesystem level.

- Commands are passed down to the Disk.sys driver.

- Disk.sys translates generic storage commands into SCSI commands.

- Storport receives the SCSI commands and sends them to the appropriate Miniport driver (e.g., StorAHCI.sys for SATA drives).

- The corresponding Miniport driver communicates directly with the storage device, translating the SCSI commands again into the device’s own command format.

Other SCSI conventions, such as LUNs (Logical Unit Numbers) used to identify logical units of storage on a device, were carried forward into the Windows storage stack, even though newer concepts like NVMe namespaces have existed for quite some time (Hands, Worley, & Lakhveer Kaur, n.d.).

The New Standard

Microsoft’s newest storage architecture for Windows Server 2025 enables new features in Storport and replaces Disk.sys with NVMeDisk.sys, providing a scalable, future-ready, high-performance framework.

- Read and write operations take place in the upper storage stack at the filesystem level.

- Commands are passed directly from NVMeDisk.sys to the new StorMQ code within Storport.

- StorMQ generates the appropriate NVMe (or other storage type) commands for each read and write operation and sends them directly to the hardware itself.

(Image from Scott Lee’s SNIA Developer Conference presentation on September 16, 2025)

This new standard for drive operations on Windows Server 2025 eliminates a layer of translation and fully integrates itself with the storage command queues on NVMe, RAID, and HBA devices. Streamlining the Windows storage system also offers additional benefits, such as reduced CPU resource usage from eliminating unnecessary storage command translation and improved utilization of logical processors. The new architecture adopts other NVMe specifications, such as NVMe namespaces and plug-and-play support. It allows vendor or device-type-specific storage Miniport drivers to be created and “plugged in” to Windows for greater compatibility and performance with new classes of storage devices (Lee, SNIA SDC 2025 – Storage Multi-Queue on Windows, 2025).
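As a rough illustration of the two I/O paths described above, the following Python sketch models each stack as an ordered list of stages and counts how many of them convert commands from one format to another. The `Stage` type, its `converts` flag, and the helper names are hypothetical and exist only for this example; the stage descriptions paraphrase the bullet lists, not Microsoft's actual driver code.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    component: str
    action: str
    converts: bool  # True when this stage emits commands in a new format


# Legacy path, as described in "The Old Way".
LEGACY_PATH = [
    Stage("Filesystem", "issues read/write requests", False),
    Stage("Disk.sys", "translates generic storage commands into SCSI", True),
    Stage("Storport", "routes SCSI commands to the matching Miniport", False),
    Stage("Miniport (e.g., StorAHCI.sys)", "translates SCSI into the device format", True),
]

# Native path, as described in "The New Standard".
NATIVE_PATH = [
    Stage("Filesystem", "issues read/write requests", False),
    Stage("NVMeDisk.sys", "passes commands to StorMQ", False),
    Stage("StorMQ (in Storport)", "generates NVMe commands and submits them", True),
]


def conversion_count(path):
    """Number of stages that convert commands between formats."""
    return sum(1 for stage in path if stage.converts)


def describe(name, path):
    """Return a readable summary of a path, one line per stage."""
    lines = [f"{name}: {conversion_count(path)} conversion step(s)"]
    lines += [f"  {s.component}: {s.action}" for s in path]
    return "\n".join(lines)


if __name__ == "__main__":
    print(describe("Legacy", LEGACY_PATH))
    print(describe("Native", NATIVE_PATH))
```

In this simplified model, the legacy path converts commands twice and the native path once, which matches the article's description of one translation layer being removed.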

Ready to Test?

In his presentation at the SNIA Developer Conference on September 16, 2025, Scott Lee revealed that Microsoft was already working closely with vendors to develop new drivers for devices such as RAID cards and HBAs. This suggests that the improvements to StorMQ are coming soon or may already be enabled on many storage devices. The feature was announced as generally available in December 2025, but the new storage stack is opt-in only and must be enabled by adding a registry key. You can read the steps for enabling it in Microsoft’s native NVMe announcement article.

Warning: Incorrectly modifying the registry can cause serious problems, so be sure to test this on a non-critical server first. Several users who enabled this feature reported issues with NVMe drives with deduplication enabled. Although an official fix from Microsoft is coming soon, you proceed at your own risk!

Testing Native NVMe on Windows Server 2025

Our testing platform for evaluating native NVMe on Windows Server 2025 (OS Build 26100.32370) was a dual-socket SP5 server equipped with two 128-core AMD EPYC 9754 CPUs. Alongside the multi-core processors was an equally impressive 768 GB of DDR5 memory running at 4800 MT/s.

Note: According to Microsoft’s Yash Shekar, an interim improvement unrelated to native NVMe has already been released for Windows Server 2025, which may have provided additional uplift for the non-native storage stack, decreasing the potential delta between results.

To evaluate the potential of the new storage stack, we used fifteen 30.72 TB Solidigm P5316 NVMe SSDs with PCIe 4.0 in a JBOD configuration. An important note is that the Solidigm P5316 has an indirection unit size of 64 kilobytes, which means that write results for smaller sizes (such as 4K tests) are often worse than expected. Considering this larger indirection unit, we ran FIO benchmarks with read and write tests for random 4K, random and sequential 64K, and sequential 128K to compare overall speed across different block sizes. We also monitored CPU usage during testing to evaluate Microsoft’s claims of higher efficiency.
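For readers who want to run a similar test matrix, here is a minimal Python sketch that builds one fio command line per workload named above. Only the access patterns and block sizes come from this article. The queue depth, job count, runtime, and target device are assumptions chosen for illustration, not the settings we used, and writing to a raw device destroys its contents.

```python
from itertools import product

# Access patterns and block sizes named in the article.
WORKLOADS = [
    ("random", "4k"),
    ("random", "64k"),
    ("sequential", "64k"),
    ("sequential", "128k"),
]
DIRECTIONS = ["read", "write"]

# ASSUMPTIONS for illustration only; the article does not state these values.
ASSUMED_OPTIONS = {
    "ioengine": "windowsaio",  # fio's asynchronous I/O engine on Windows
    "direct": 1,               # bypass the OS cache
    "iodepth": 32,
    "numjobs": 8,
    "runtime": 60,             # seconds
}
ASSUMED_TARGET = r"\\.\PhysicalDrive1"  # hypothetical device; writes are destructive


def fio_rw_mode(pattern, direction):
    """fio uses randread/randwrite for random I/O and read/write for sequential."""
    return f"rand{direction}" if pattern == "random" else direction


def build_command(pattern, block_size, direction, target=ASSUMED_TARGET):
    """Return the fio argument list for one workload."""
    args = [
        "fio",
        f"--name={pattern}-{block_size}-{direction}",
        f"--filename={target}",
        f"--rw={fio_rw_mode(pattern, direction)}",
        f"--bs={block_size}",
        "--time_based",
        "--group_reporting",
    ]
    args += [f"--{key}={value}" for key, value in ASSUMED_OPTIONS.items()]
    return args


def build_matrix():
    """One command per (workload, direction) pair: eight in total."""
    return [
        build_command(pattern, bs, direction)
        for (pattern, bs), direction in product(WORKLOADS, DIRECTIONS)
    ]


if __name__ == "__main__":
    for command in build_matrix():
        print(" ".join(command))
```

The script only prints the commands so they can be reviewed before anything touches a drive.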

Highlights

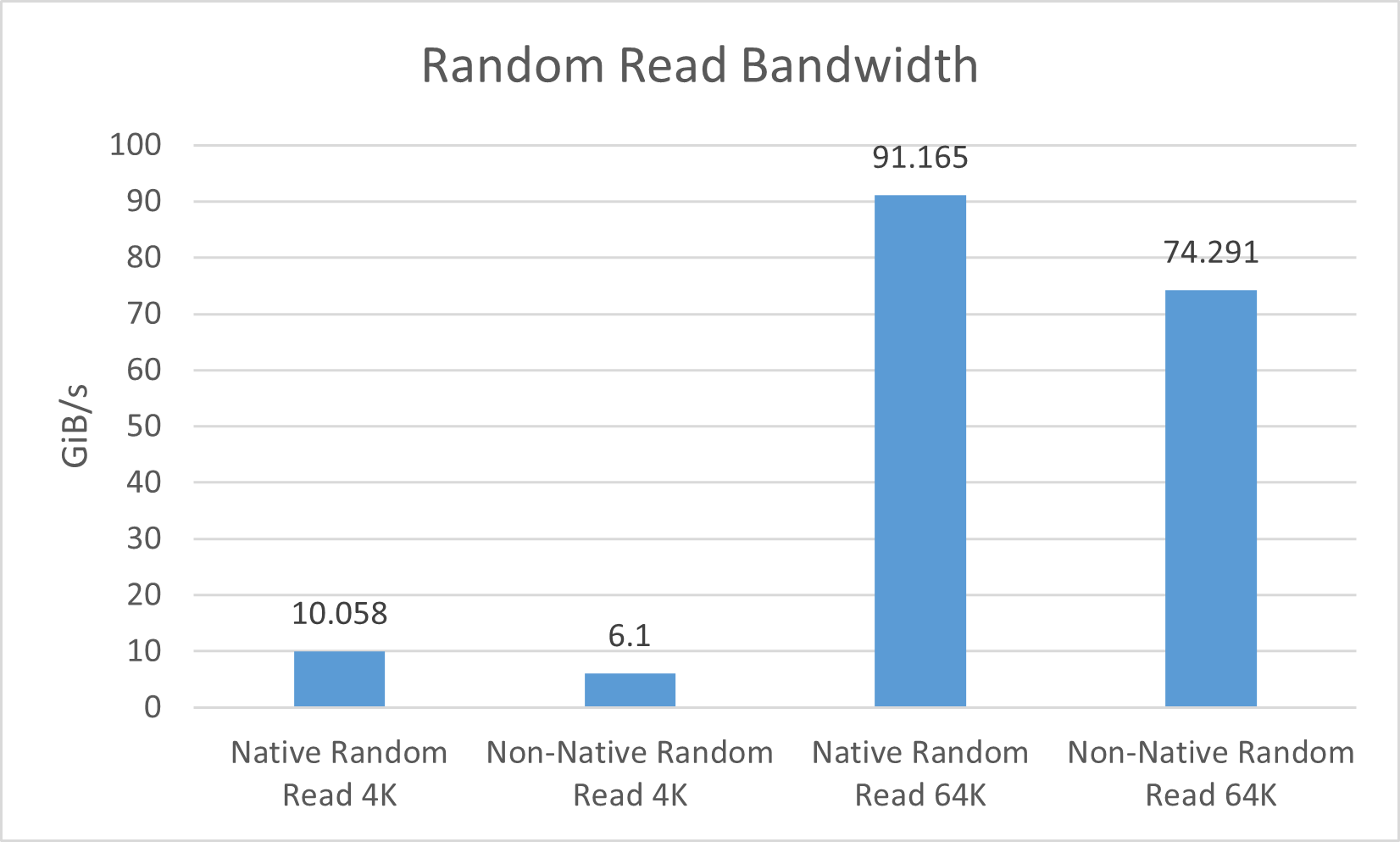

- Massively increased 4K and 64K random read bandwidth and IOPS

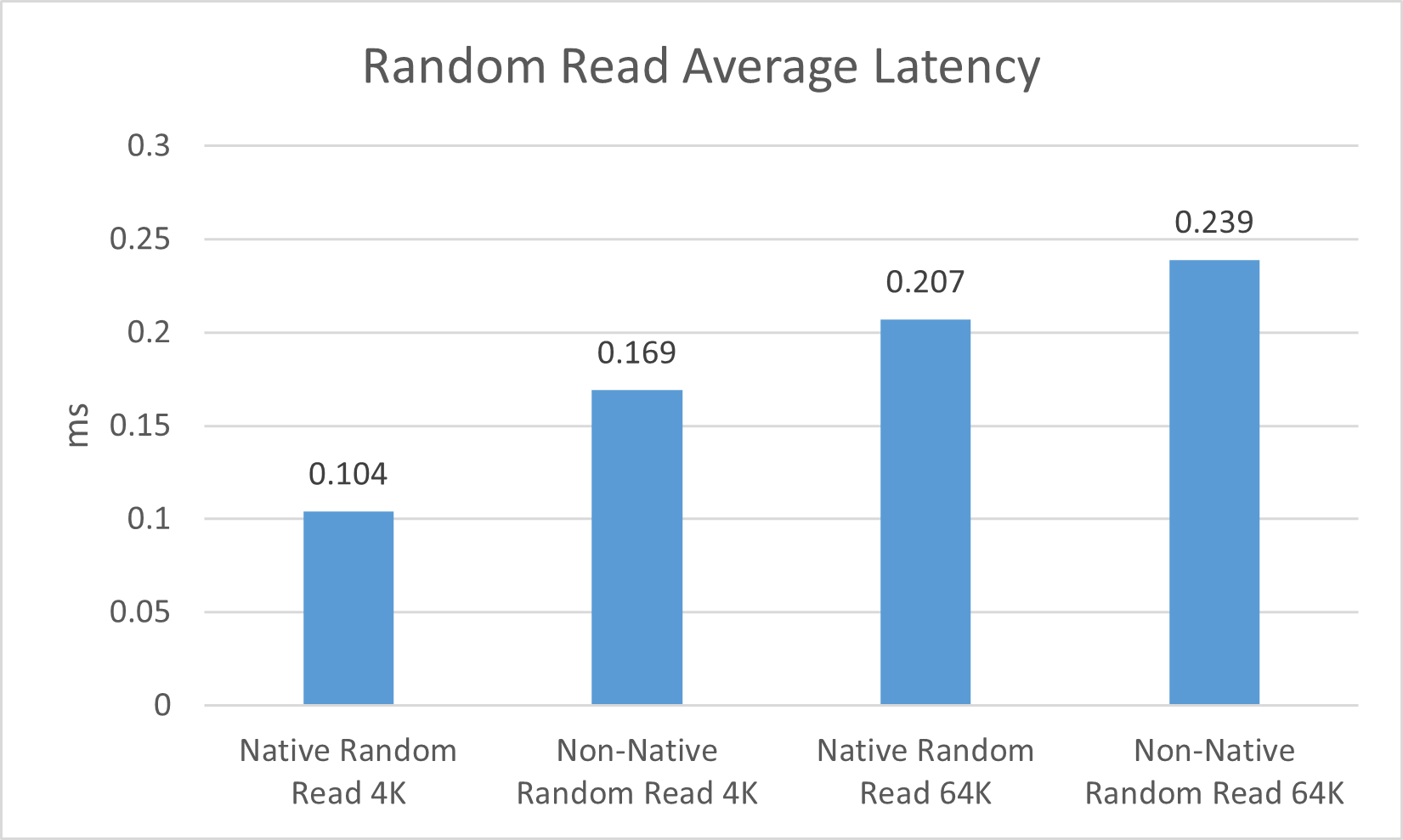

- Lower 4K and 64K random read latency

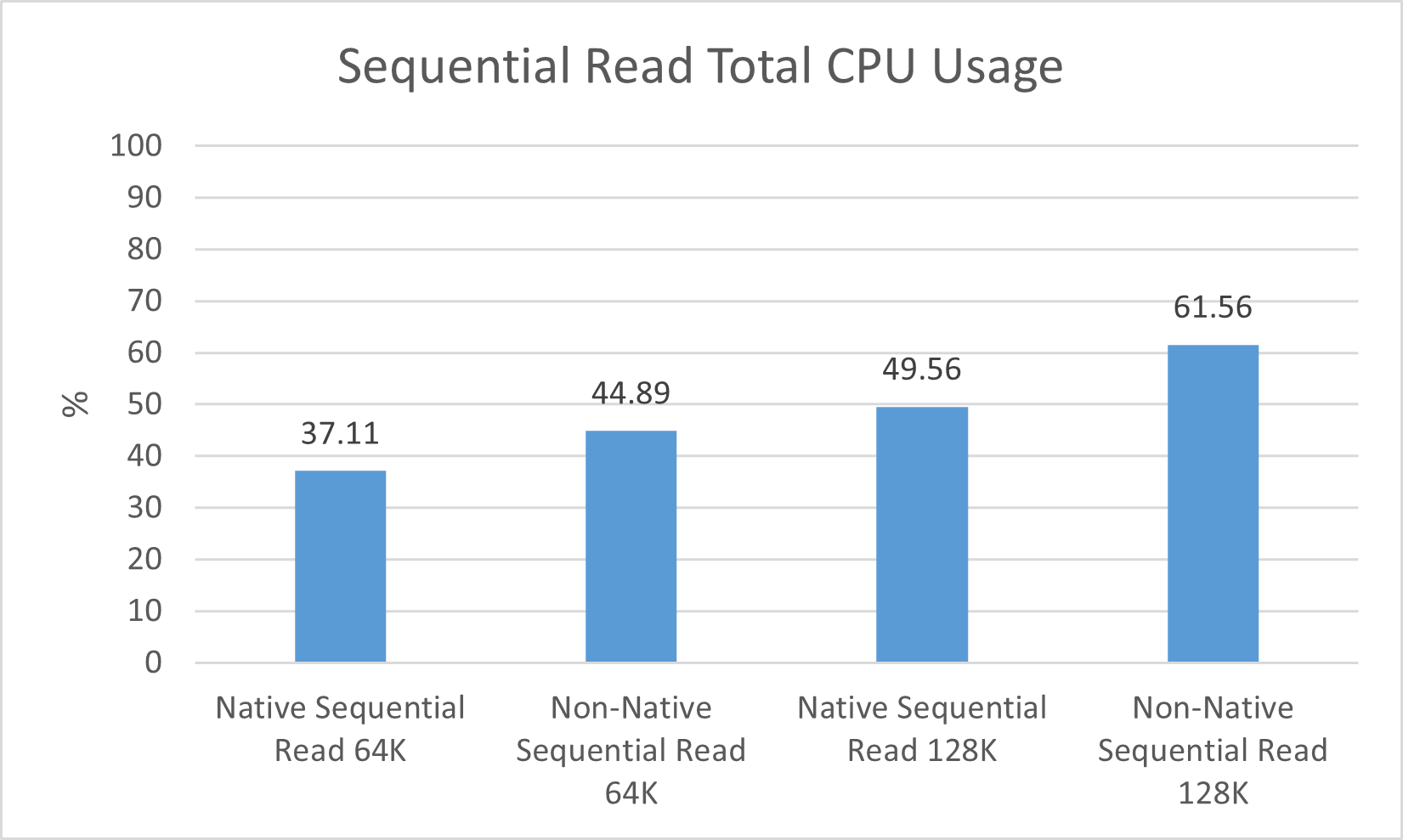

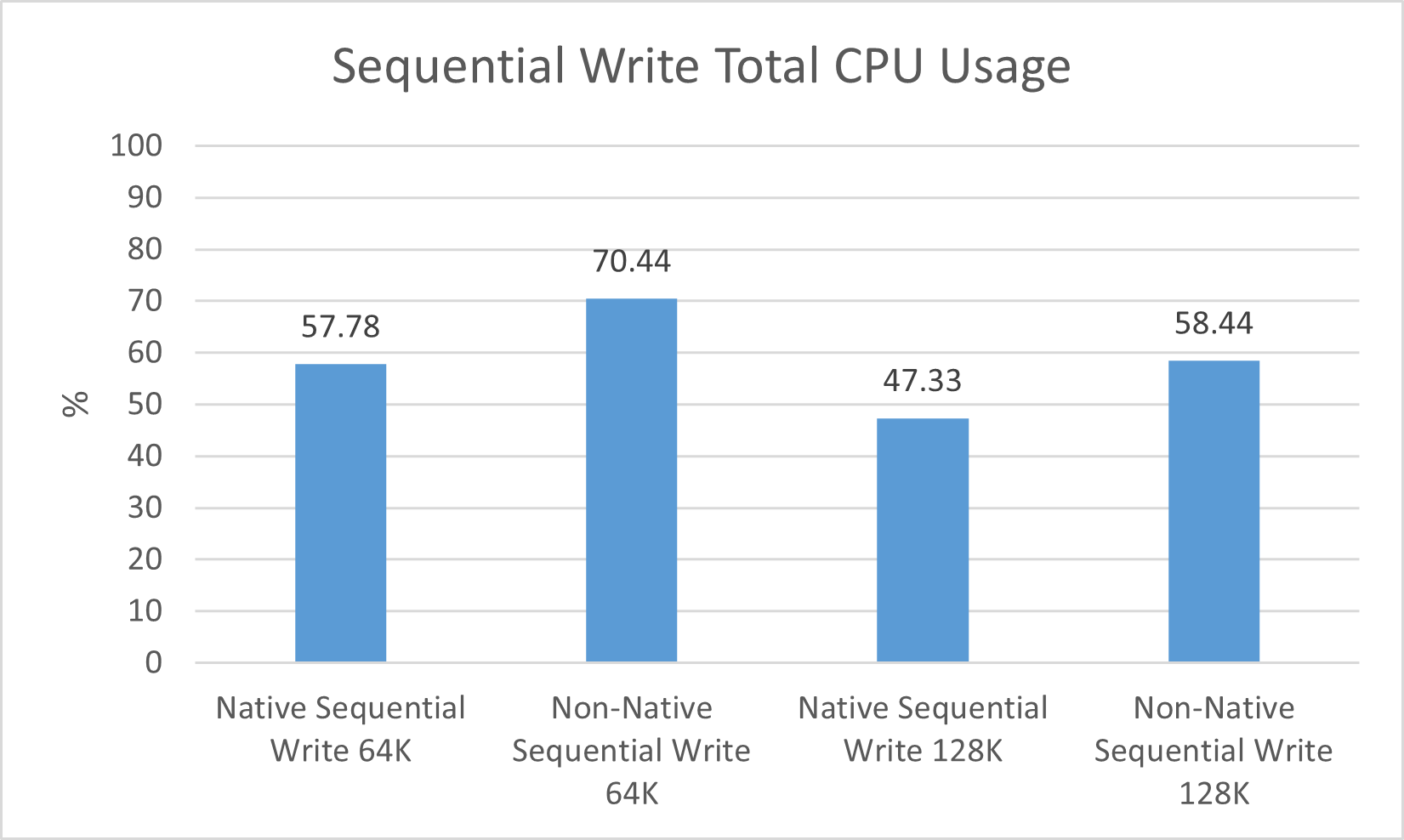

- Significant decreases in CPU usage for sequential reads and writes across varying block sizes

| Metric | Random 4K | Random 64K | Sequential 64K | Sequential 128K | ||||

|---|---|---|---|---|---|---|---|---|

| Non-Native | Native | Non-Native | Native | Non-Native | Native | Non-Native | Native | |

| Read | ||||||||

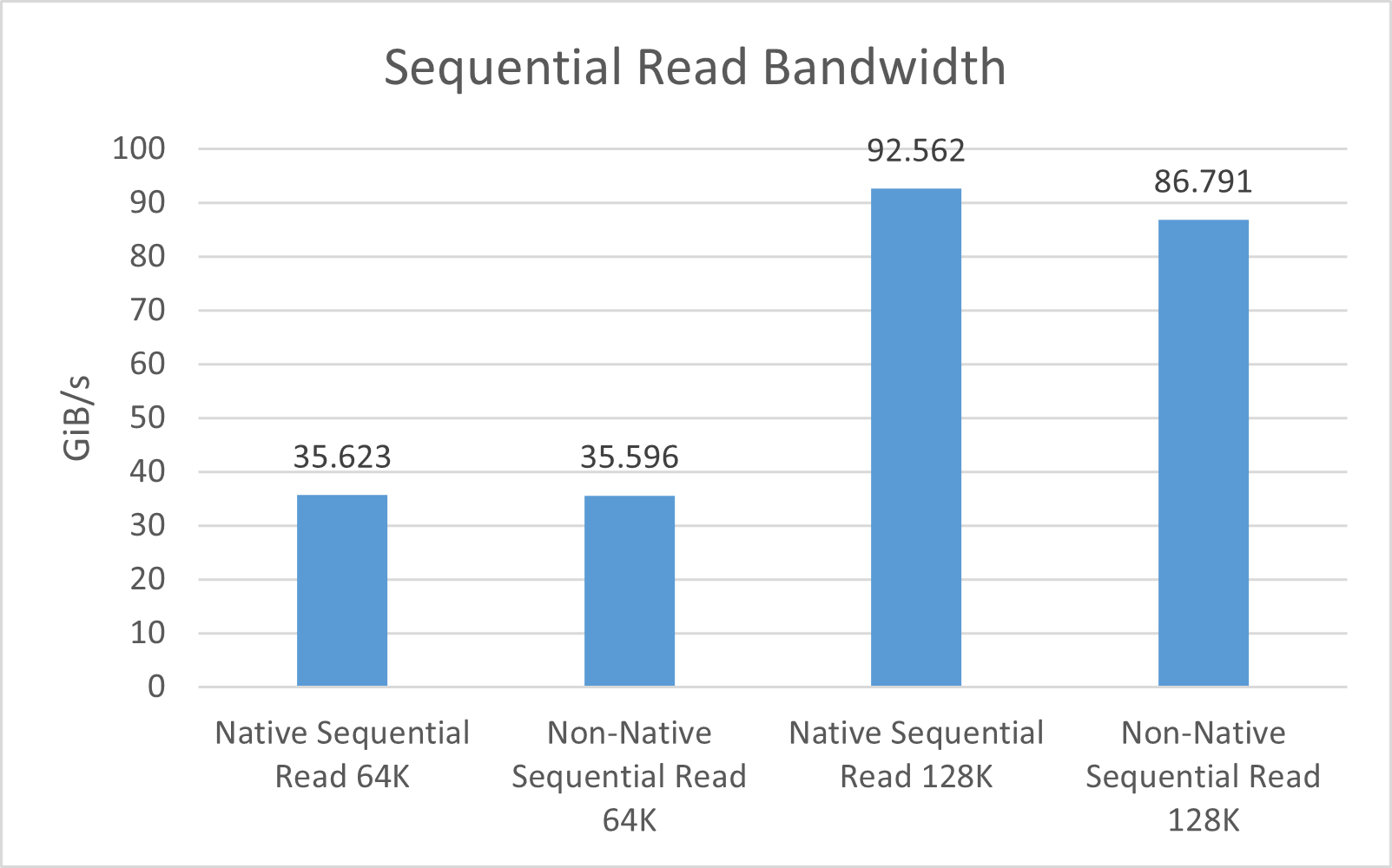

| Bandwidth (GiB/s) | 6.1 | 10.058 | 74.291 | 91.165 | 35.596 | 35.623 | 86.791 | 92.562 |

| IOPS | 1,598,959 | 2,636,516 | 1,217,176 | 1,493,637 | 583,192 | 583,638 | 710,978 | 758,252 |

| Average Latency (ms) | 0.169 | 0.104 | 0.239 | 0.207 | 0.809 | 0.812 | 0.613 | 0.608 |

| Total CPU Usage (%) | 72.67 | 74.22 | 68.44 | 65.11 | 44.89 | 37.11 | 61.56 | 49.56 |

| Metric | Random 4K | Random 64K | Sequential 64K | Sequential 128K | ||||

|---|---|---|---|---|---|---|---|---|

| Non-Native | Native | Non-Native | Native | Non-Native | Native | Non-Native | Native | |

| Write | ||||||||

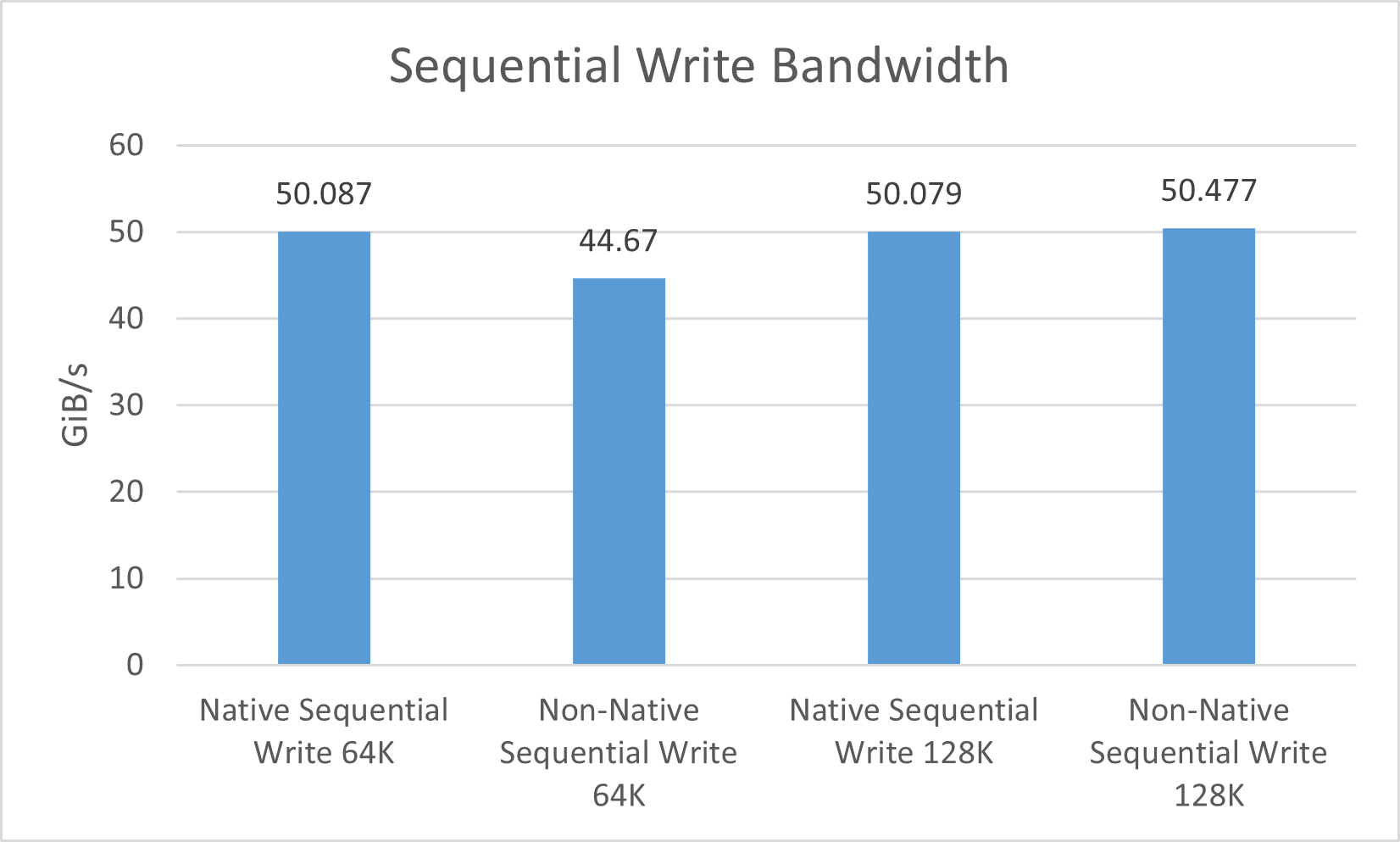

| Bandwidth (GiB/s) | 1.803 | 1.756 | 7.654 | 7.655 | 44.67 | 50.087 | 50.477 | 50.079 |

| IOPS | 472,725 | 460,383 | 125,391 | 125,406 | 731,859 | 820,603 | 413,495 | 410,232 |

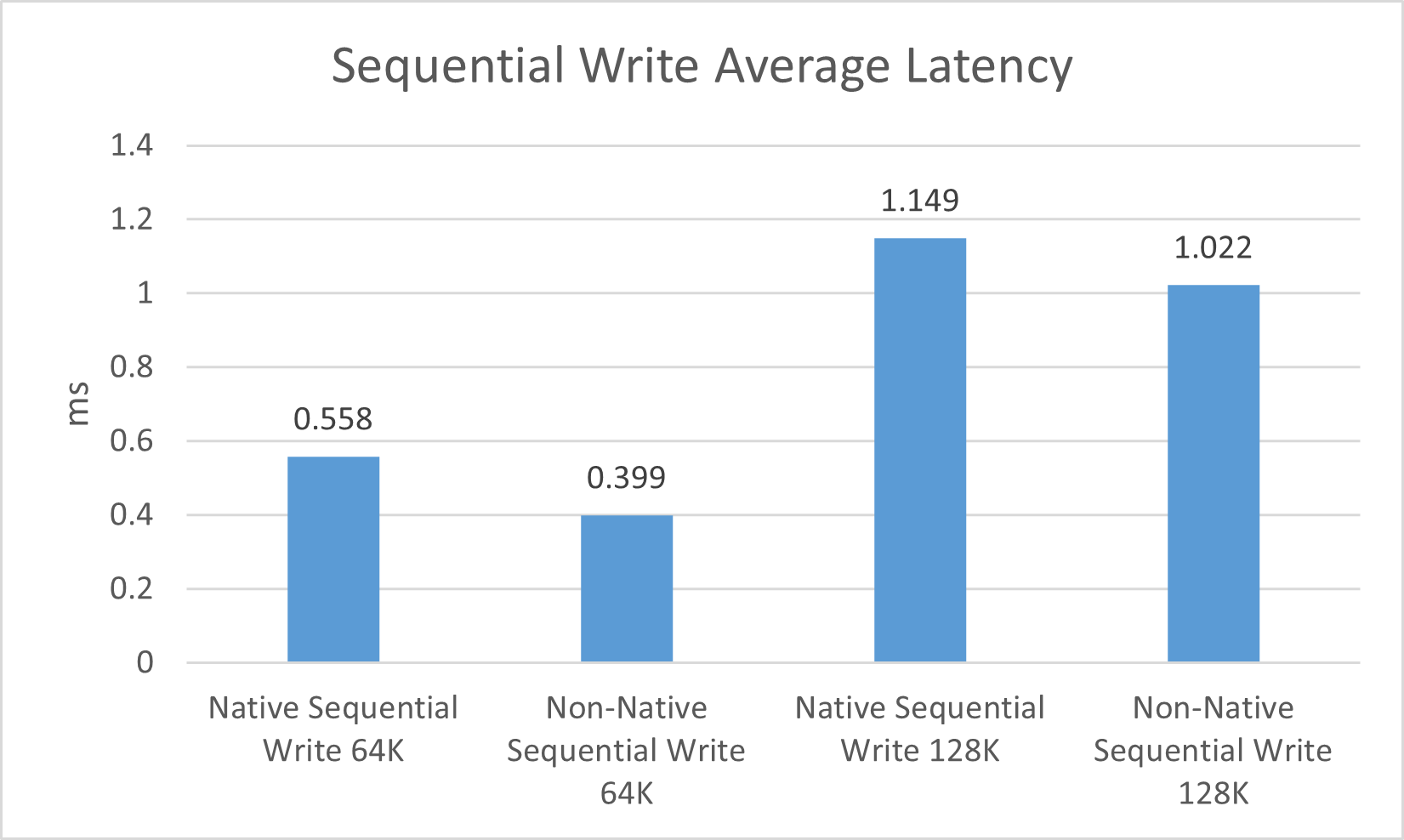

| Average Latency (ms) | 0.992 | 1.028 | 3.814 | 3.816 | 0.399 | 0.558 | 1.022 | 1.149 |

| Total CPU Usage (%) | 26.00 | 20.67 | 12.22 | 9.33 | 70.44 | 57.78 | 58.44 | 47.33 |
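The bandwidth and IOPS rows in the tables above are two views of the same measurement, linked by the block size. The short Python sketch below recomputes bandwidth from IOPS for a handful of results and compares it against the reported figure. The function name and the sample selection are ours, and the small differences are rounding in the reported values.

```python
KIB = 1024
GIB = 1024 ** 3


def bandwidth_gib_s(iops, block_kib):
    """Bandwidth in GiB/s implied by an IOPS figure at a given block size."""
    return iops * block_kib * KIB / GIB


# (test name, block size in KiB, IOPS, reported bandwidth in GiB/s)
SAMPLES = [
    ("Random 4K read, non-native", 4, 1_598_959, 6.1),
    ("Random 4K read, native", 4, 2_636_516, 10.058),
    ("Random 64K read, native", 64, 1_493_637, 91.165),
    ("Sequential 128K read, native", 128, 758_252, 92.562),
    ("Sequential 64K write, native", 64, 820_603, 50.087),
    ("Sequential 128K write, non-native", 128, 413_495, 50.477),
]

if __name__ == "__main__":
    for name, block_kib, iops, reported in SAMPLES:
        computed = bandwidth_gib_s(iops, block_kib)
        print(f"{name}: computed {computed:.3f} GiB/s, reported {reported} GiB/s")
```

Running the same check on any other cell is a quick way to confirm that a copied number was not mistyped.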

Analysis of Results

Beginning with random 4K and 64K read benchmarks, we observed significantly higher read speeds on the native stack: almost 4 GiB/s more than the non-native stack on the random 4K read test, and nearly 16.9 GiB/s more on random 64K read. We also saw a decent increase in sequential 128K read operations, with our tests showing about a 5.8 GiB/s increase in bandwidth.

Curiously, we did not see significant increases across most of our random or sequential write bandwidth tests; the only notable difference was an approximate 5.4 GiB/s increase in 64K sequential writes. The remaining write results were within about 0.4 GiB/s of each other (and the random write tests within 50 MiB/s), suggesting that the new storage stack’s performance is at least consistent with the old stack’s in cases where it did not improve.

Since higher throughput typically goes hand in hand with lower latency, we also observed large drops in average random read latency for both 4K and 64K tests. Random 4K read latency fell 38.46% on the native stack, from 0.169 milliseconds to 0.104. Random 64K read tests had a smaller decrease, at around 13.39% (0.239 to 0.207 milliseconds). Latency did not change drastically with sequential read operations, but random and sequential write operations showed latency increases across the board, despite similar or higher throughput.
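The latency percentages can be reproduced directly from the tables, and Little's law (average I/Os in flight = IOPS × average latency) offers one possible way to read the write results. The Python sketch below does both. It assumes the reported average latency covers the full life of each I/O, which we have not verified, so the in-flight figures are rough estimates rather than measured queue depths.

```python
def percent_change(before, after):
    """Relative change from before to after, in percent."""
    return (after - before) / before * 100


def ios_in_flight(iops, avg_latency_ms):
    """Little's law estimate of the average number of outstanding I/Os."""
    return iops * avg_latency_ms / 1000


# (test name, (non-native IOPS, latency ms), (native IOPS, latency ms))
CASES = [
    ("Random 4K read", (1_598_959, 0.169), (2_636_516, 0.104)),
    ("Random 64K read", (1_217_176, 0.239), (1_493_637, 0.207)),
    ("Sequential 64K write", (731_859, 0.399), (820_603, 0.558)),
]

if __name__ == "__main__":
    for name, (old_iops, old_lat), (new_iops, new_lat) in CASES:
        change = percent_change(old_lat, new_lat)
        print(
            f"{name}: latency {change:+.2f}%, "
            f"in flight {ios_in_flight(old_iops, old_lat):.0f} -> "
            f"{ios_in_flight(new_iops, new_lat):.0f}"
        )
```

Under that assumption, the random read tests keep a similar number of I/Os in flight on both stacks, so lower latency shows up as higher IOPS. The sequential 64K write test appears to carry more outstanding I/O on the native stack, which would be consistent with throughput and latency rising together.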

In addition to the increase in random read speeds, another interesting trend revealed by our FIO tests was a substantial decrease in total CPU usage for 64K and 128K sequential read and write operations. Sequential write tests showed the most dramatic differences, with a drop of 12.66 percentage points in CPU usage for 64K, and a nearly matching 11.11-point drop for 128K. The sequential 128K read test also saw a comparable 12-point decrease, while sequential 64K read fell by only 7.78 points. One factor to consider is that with a fast enough CPU, there may be instances where both stacks can reach a storage device’s full potential; therefore, throughput might not increase, but CPU resource usage would decrease.
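One way to express that last point as a number is to divide IOPS by total CPU usage. The Python sketch below computes the CPU drop in percentage points and an "IOPS per CPU percent" figure for the four sequential tests. That efficiency metric is our own illustrative construct, not something fio or Microsoft reports.

```python
# Sequential results from the tables: (non-native, native) pairs.
SEQUENTIAL = {
    "64K read": {"iops": (583_192, 583_638), "cpu": (44.89, 37.11)},
    "128K read": {"iops": (710_978, 758_252), "cpu": (61.56, 49.56)},
    "64K write": {"iops": (731_859, 820_603), "cpu": (70.44, 57.78)},
    "128K write": {"iops": (413_495, 410_232), "cpu": (58.44, 47.33)},
}


def cpu_point_drop(non_native_cpu, native_cpu):
    """CPU usage reduction in percentage points."""
    return round(non_native_cpu - native_cpu, 2)


def iops_per_cpu_percent(iops, cpu_percent):
    """Illustrative efficiency metric: IOPS delivered per 1% of total CPU."""
    return iops / cpu_percent


if __name__ == "__main__":
    for name, result in SEQUENTIAL.items():
        (old_iops, new_iops), (old_cpu, new_cpu) = result["iops"], result["cpu"]
        old_eff = iops_per_cpu_percent(old_iops, old_cpu)
        new_eff = iops_per_cpu_percent(new_iops, new_cpu)
        print(
            f"Sequential {name}: CPU down {cpu_point_drop(old_cpu, new_cpu)} points, "
            f"efficiency {old_eff:,.0f} -> {new_eff:,.0f} IOPS per CPU %"
        )
```

Even where IOPS barely moved, such as sequential 64K read and 128K write, this metric rises on the native stack because the same work is done with less CPU.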

Takeaways

While many of our results were within run-to-run variance after enabling the new storage stack, we were able to corroborate many of Microsoft’s claims, including higher read bandwidth with lower latency, and decreased CPU usage in all but one test (random 4K read). Since this is quite a radical change to its decades-old Windows Server storage stack, Microsoft will first enable native NVMe by default in Windows Server vNext. Fortunately, the feature can be enabled on Windows Server 2025 with a quick registry edit or a group policy, allowing brave server administrators to take advantage of the new stack as early as today (after acknowledging the risks of deploying it).

We look forward to seeing native NVMe enabled by default on the Windows Server platform, and hope to see buy-in from NVMe SSD, RAID card, and HBA manufacturers, who should be able to take Microsoft’s improvements to the next level!

References

Hands, J., Worley, D., & Lakhveer Kaur. (n.d.). NVMe Namespaces. Retrieved December 30, 2025, from NVM Express: https://nvmexpress.org/resource/nvme-namespaces/

Lee, S. (2025, September 15). SNIA SDC 2025 – Storage Multi-Queue on Windows. San Tomas, CA, United States of America: Storage Networking Industry Association. Retrieved December 29, 2025, from https://www.youtube.com/watch?v=dR-DWrmCba0&t

Lee, S. (2025, September 16). Storage Multi-Queue on Windows: A New Stack for High Performance Storage Hardware. Retrieved December 29, 2025, from SNIA Developer Conference: https://www.snia.org/sites/default/files/2025-10/SNIA-SDC25-Lee-Storage-Multi-Queue-On-Windows.pdf

Shekar, Y. (2025, December 15). Announcing Native NVMe in Windows Server 2025: Ushering in a New Era of Storage Performance. (Microsoft) Retrieved December 29, 2025, from Windows Server News and Best Practices: https://techcommunity.microsoft.com/blog/windowsservernewsandbestpractices/announcing-native-nvme-in-windows-server-2025-ushering-in-a-new-era-of-storage-p/4477353

Storage Networking Industry Association. (n.d.). What is SCSI? Retrieved December 30, 2025, from Storage Networking Industry Association: https://www.snia.org/education/what-is-scsi
