HPE has unveiled updates to the NVIDIA AI Computing by HPE portfolio to support large-scale AI factories and next-generation supercomputers. The offerings combine compute, GPUs, networking, liquid cooling, software, and services into full‑stack solutions designed for at‑scale and sovereign environments.

NVIDIA AI Integrated into HPE Exascale Supercomputing Platform

Argonne National Laboratory, HLRS, Hudson River Trading, and Korea Institute of Science and Technology Information have adopted HPE AI infrastructure integrated with NVIDIA technologies to accelerate scientific and industrial innovation.

HPE is extending NVIDIA technologies into its second-generation exascale-class platform, the HPE Cray Supercomputing GX5000, which unifies AI and HPC in a single architecture. Research labs, sovereign entities, and enterprises are increasingly blending AI models with traditional HPC simulations, and HPE is positioning the platform to meet these converging requirements.

A new option in the lineup is an HPE compute blade based on NVIDIA Vera CPUs. Each liquid‑cooled HPE Cray Supercomputing GX240 Compute blade includes up to 16 NVIDIA Vera CPUs. A full rack supports up to 40 blades, totaling 640 CPUs and 56,320 Arm-compatible cores. The GX240 targets high‑density, high‑performance AI compute installations that require sustained throughput.
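The rack-level figures quoted for the GX240 can be checked with a few lines of arithmetic. The sketch below is illustrative only: the `RackConfig` class and its field names are hypothetical, and the per-CPU core count is not stated in the text but inferred by dividing the quoted 56,320 cores by the quoted 640 CPUs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RackConfig:
    """Hypothetical container for the density figures quoted in the article."""
    cpus_per_blade: int
    blades_per_rack: int
    cores_per_cpu: int

    @property
    def cpus_per_rack(self) -> int:
        # Maximum CPUs in a fully populated rack.
        return self.cpus_per_blade * self.blades_per_rack

    @property
    def cores_per_rack(self) -> int:
        # Maximum cores in a fully populated rack.
        return self.cpus_per_rack * self.cores_per_cpu


# Quoted figures: up to 16 CPUs per blade, up to 40 blades per rack.
# Assumption: cores per CPU is derived from the quoted totals (56,320 / 640).
INFERRED_CORES_PER_CPU = 56_320 // (16 * 40)

gx240_rack = RackConfig(
    cpus_per_blade=16,
    blades_per_rack=40,
    cores_per_cpu=INFERRED_CORES_PER_CPU,
)

print(gx240_rack.cpus_per_rack)   # CPUs in a full rack
print(gx240_rack.cores_per_rack)  # cores in a full rack
```

These are maximum configurations ("up to"), so a partially populated rack would scale down linearly with the blade count.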

HPE is also expanding networking options for large-scale systems, adding support for NVIDIA Quantum‑X800 InfiniBand. These switches provide 144 ports operating at 800 Gb/s and incorporate power-efficiency features, which are increasingly important at exascale.

Trish Damkroger, Senior Vice President and General Manager of HPC and AI Infrastructure Solutions at HPE, said the company’s experience in exascale supercomputing is shaping how it integrates advanced AI workloads with traditional HPC. She noted that the ongoing collaboration with NVIDIA helps customers reach higher performance density and drive progress across fields such as medicine, life sciences, engineering, and manufacturing.

Enhancements to HPE AI Factory for At‑Scale and Sovereign Deployments

Beyond its supercomputing portfolio, HPE is expanding the HPE AI Factory to support service providers, sovereign governments, and large enterprises that are adopting the NVIDIA Vera Rubin and NVIDIA Blackwell platforms.

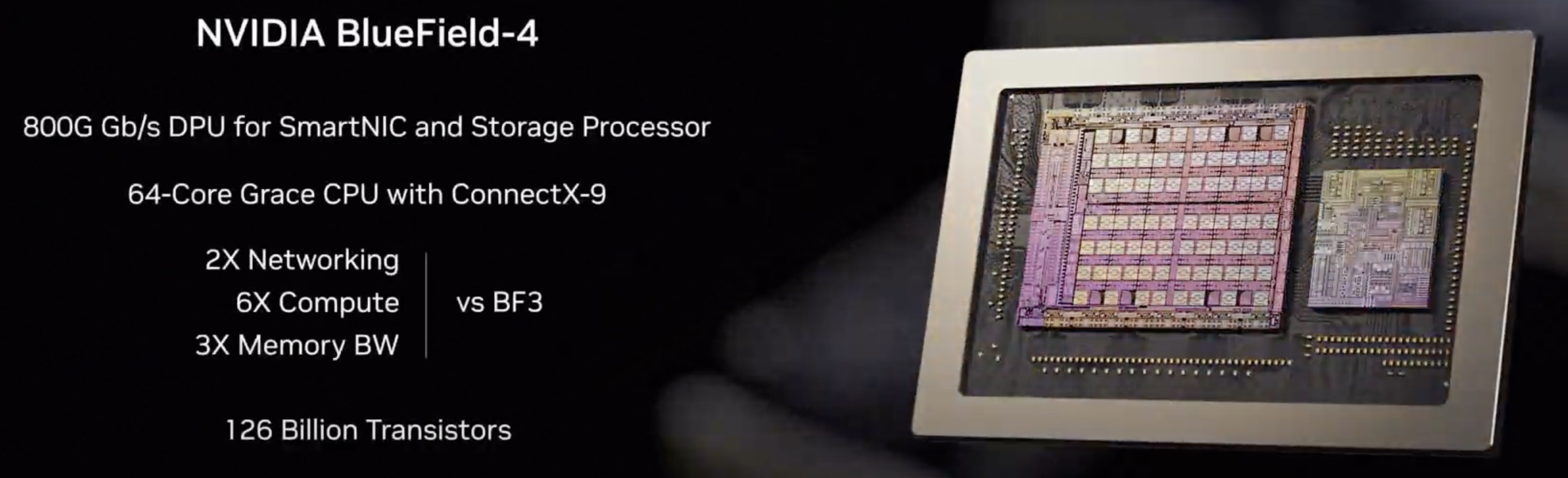

The next-generation NVIDIA Vera Rubin NVL72 by HPE is the headline addition. Built for frontier‑scale models exceeding one trillion parameters, the rack-scale system is engineered for high-efficiency deployments in neo-cloud environments. It includes 36 NVIDIA Vera CPUs, 72 NVIDIA Rubin GPUs, sixth‑generation NVIDIA NVLink scale-up networking, NVIDIA ConnectX‑9 SuperNICs, and NVIDIA BlueField‑4 DPUs. HPE integrates the platform with its liquid cooling technologies and data center design services to streamline large-scale rollouts.

HPE is also introducing the HPE Compute XD700, an OCP‑inspired system built on NVIDIA HGX Rubin NVL8. The XD700 is intended for dense AI training and inference, supporting up to 128 Rubin GPUs per rack. The system doubles the GPU density of the previous generation while targeting reductions in space, power, and cooling.
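The XD700 density claims imply a couple of figures the text does not state outright. The sketch below works them out under two labeled assumptions: that each HGX Rubin NVL8 system carries 8 GPUs (as the "NVL8" name suggests), and that "doubles the GPU density" refers to GPUs per rack. The function names are hypothetical and the derived values are not HPE specifications.

```python
# Quoted figure: up to 128 Rubin GPUs per rack.
MAX_GPUS_PER_RACK = 128

# Assumption: one HGX Rubin NVL8 system holds 8 GPUs.
GPUS_PER_NVL8_SYSTEM = 8


def systems_per_rack(max_gpus: int, gpus_per_system: int) -> int:
    """Number of systems needed to reach the quoted per-rack GPU maximum."""
    if max_gpus % gpus_per_system != 0:
        raise ValueError("GPU count is not a whole number of systems")
    return max_gpus // gpus_per_system


def implied_previous_density(current_gpus_per_rack: int, factor: int = 2) -> int:
    """Per-rack GPU count implied for the prior generation by a density multiple."""
    return current_gpus_per_rack // factor


print(systems_per_rack(MAX_GPUS_PER_RACK, GPUS_PER_NVL8_SYSTEM))  # systems in a full rack
print(implied_previous_density(MAX_GPUS_PER_RACK))                # implied prior-generation GPUs per rack
```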

In addition, HPE is broadening access to NVIDIA Blackwell by making the NVIDIA RTX PRO 6000 Blackwell Server Edition GPU available across all HPE AI Factory systems.

Software and Services Updates for Faster AI Deployment

HPE's updates also extend to the software and services stack that supports large-scale AI factories. The HPE AI Factory portfolio is now endorsed under the NVIDIA Cloud Partner program, which simplifies NVIDIA Cloud Provider certification for cloud operators. The endorsement is intended to shorten validation cycles and ease integration for service providers building multi-tenant AI environments.

HPE is also expanding multi-tenancy options by supporting virtual machine GPU passthrough and secure Kubernetes namespaces using NVIDIA Multi‑Instance GPU (MIG). These capabilities are enabled by integration with SUSE Virtualization and SUSE Rancher Prime, giving providers the choice between strict and flexible tenancy models.

Red Hat Enterprise Linux and Red Hat OpenShift remain supported as part of Red Hat AI Enterprise, integrating cleanly with NVIDIA AI Enterprise software for customers standardizing on enterprise Linux.

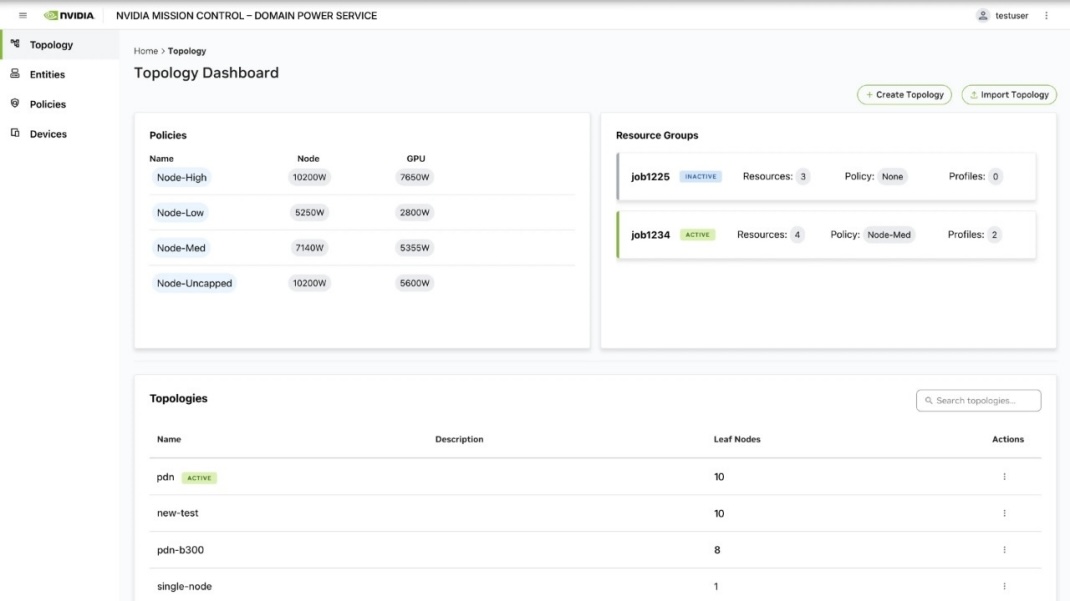

HPE AI Factory at scale and HPE AI Factory sovereign will also integrate NVIDIA Mission Control software. Mission Control provides a unified operational layer for AI factories, streamlining orchestration via NVIDIA Run:ai and adding monitoring and autonomous recovery features from NVIDIA Dynamo. The intent is to simplify management and improve operational consistency for platform teams running large AI clusters.

Chris Marriott, Vice President of Enterprise Platforms at NVIDIA, emphasized that AI development depends on robust infrastructure. He highlighted the joint engineering work between HPE and NVIDIA in accelerated computing, advanced networking, and liquid cooling to support faster insights in large-scale and sovereign deployments.

Availability

NVIDIA RTX PRO 6000 Blackwell Server Edition GPUs are available today in the HPE AI Factory portfolio. Integration of Red Hat Enterprise Linux and Red Hat OpenShift with NVIDIA AI Enterprise software is also available today.

HPE AI Factory multi‑tenancy and GPU passthrough capabilities will be available in Spring 2026. NVIDIA Mission Control support for HPE AI Factory at scale and sovereign is planned for later in 2026. The NVIDIA Vera Rubin NVL72 by HPE rack‑scale system will be available in December 2026.

The HPE Compute XD700 will be available in early 2027. The HPE Cray Supercomputing GX240 Compute blade with up to 16 NVIDIA Vera CPUs and NVIDIA Quantum‑X800 InfiniBand networking for the HPE Cray Supercomputing GX5000 will also be available in 2027.
