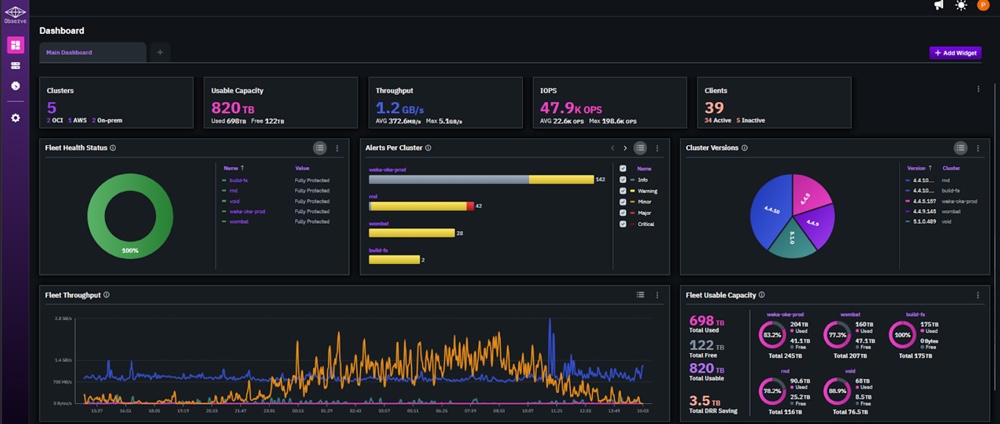

WEKA has announced the general availability of its NeuralMesh AI Data Platform (NeuralMesh AIDP), an enterprise-focused, composable infrastructure designed for AI factory deployments. Built on the NVIDIA AI Data Platform reference architecture, NeuralMesh AIDP provides an integrated stack that delivers AI-ready data to production environments, with a focus on shortening time to deployment for large-scale AI applications.

The platform targets a persistent challenge in enterprise AI adoption. While many organizations complete proof-of-concept projects, scaling those implementations into production often exposes limitations in data infrastructure, performance consistency, and operational complexity. NeuralMesh is designed to address this transition by providing a unified data platform optimized for both early-stage experimentation and production-scale workloads.

Architecture and Design

NeuralMesh is built on more than a decade of AI-native storage development and incorporates over 170 patents. The platform is designed to scale efficiently into exabyte-class environments while maintaining performance and resilience. WEKA positions NeuralMesh as an adaptive system that improves as deployments grow, particularly in distributed AI environments where data access patterns become more varied and concurrency increases over time.

At the core of the platform is WEKA’s Augmented Memory Grid, which extends GPU memory by externalizing and persisting inference context. This architecture enables higher utilization of GPU resources by reducing redundant computation and maintaining context across sessions. In inference workloads, the company reports up to 6.5 times more tokens per GPU compared to traditional storage approaches, reflecting improved efficiency in handling large context windows and concurrent workloads.
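The idea of externalizing and persisting inference context can be illustrated with a small sketch. This is not WEKA's API or implementation. `ContextStore`, `expensive_prefill`, and `build_context` are hypothetical names, the store is an in-memory dictionary standing in for an external persistent tier, and the "state" is a toy list rather than a real key-value cache. The sketch only shows the general principle: when a new request shares a prefix with earlier context, the cached state is reused and only the new tokens are computed.

```python
import hashlib
from typing import Dict, List, Optional, Tuple


class ContextStore:
    """Hypothetical stand-in for an external, persistent context tier."""

    def __init__(self) -> None:
        self._blobs: Dict[str, List[int]] = {}

    @staticmethod
    def _key(tokens: List[int]) -> str:
        # Content-addressed key derived from the token prefix.
        return hashlib.sha256(repr(tokens).encode()).hexdigest()

    def get(self, tokens: List[int]) -> Optional[List[int]]:
        return self._blobs.get(self._key(tokens))

    def put(self, tokens: List[int], state: List[int]) -> None:
        self._blobs[self._key(tokens)] = list(state)


def expensive_prefill(tokens: List[int]) -> List[int]:
    """Placeholder for GPU prefill work: one toy state entry per token."""
    return [t * 2 for t in tokens]


def build_context(tokens: List[int], store: ContextStore) -> Tuple[List[int], int]:
    """Return (state, tokens_computed), reusing the longest cached prefix."""
    for cut in range(len(tokens), 0, -1):
        cached = store.get(tokens[:cut])
        if cached is not None:
            # Only the suffix beyond the cached prefix needs computing.
            state = cached + expensive_prefill(tokens[cut:])
            break
    else:
        # No cached prefix found: compute everything from scratch.
        cut = 0
        state = expensive_prefill(tokens)
    store.put(tokens, state)
    return state, len(tokens) - cut


if __name__ == "__main__":
    store = ContextStore()
    _, first = build_context([1, 2, 3, 4], store)
    _, second = build_context([1, 2, 3, 4, 5, 6], store)
    print(first, second)  # prints "4 2"
```

In this toy run the second request computes two tokens instead of six because the first four are served from the store. The gain in a real system depends on how much context is shared between requests, and the linear prefix search here is chosen for clarity rather than efficiency.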

Integration with NVIDIA AI Infrastructure

NeuralMesh AIDP is aligned with NVIDIA’s AI Data Platform, enabling tight integration with GPU, networking, and data processing technologies. The platform is designed to support continuous data pipelines and persistent inference context, which are critical for production agentic AI systems. NVIDIA highlights the importance of a persistent context layer to maintain stability and performance as inference workloads scale.

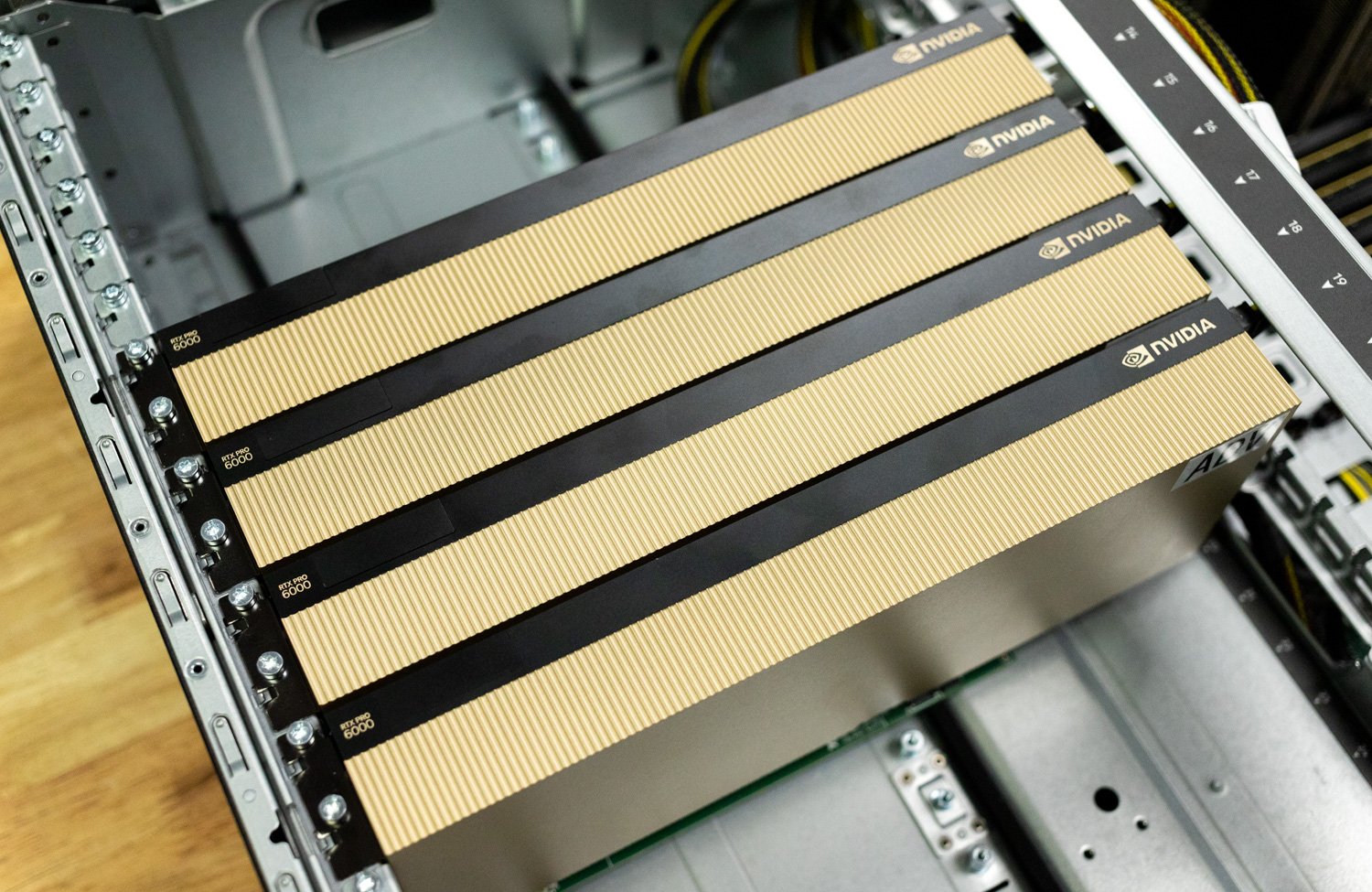

The solution is available as a pre-integrated platform that includes validated configurations with NVIDIA RTX PRO 6000 and RTX PRO 4500 Server Edition GPUs, along with ecosystem integrations from vendors such as Red Hat, Spectro Cloud, and Supermicro. This appliance-style delivery model is intended to reduce deployment complexity and shorten time to production.

AI Factory Enablement

WEKA positions NeuralMesh AIDP as the infrastructure for AI factories, in which data ingestion, processing, and inference operate as continuous, interconnected workflows. These environments require more than raw storage capacity. They depend on sustained data movement, context persistence, and predictable performance under load.

NeuralMesh is designed to support these requirements by enabling a continuous data loop between storage and compute resources. This allows organizations to maintain high GPU utilization while supporting dynamic, multi-stage AI pipelines that include training, fine-tuning, and inference.

Pre-Built AI Workloads

The platform includes preconfigured pipelines for a range of AI applications, allowing organizations to deploy common workloads without extensive integration. These include semantic search, video search and summarization, AlphaFold workflows for drug discovery, and agentic retrieval-augmented generation use cases.

In production environments, NeuralMesh is being applied across multiple sectors. In healthcare and life sciences, it supports large-scale data analysis workflows such as identifying patient cohorts and processing cryo-electron microscopy datasets. In financial services, it enables analysis of market signals and secure access to institutional knowledge. Public sector deployments focus on contextual threat detection and automated evidence synthesis. In robotics and physical AI, the platform reduces the time between data collection and model retraining, improving system responsiveness and deployment cycles.

Availability

NeuralMesh AI Data Platform is available immediately as an appliance-style solution.
