NVIDIA and Google Cloud used Google Cloud Next in Las Vegas to outline a new phase of their long-standing engineering partnership, introducing updates to the Google Cloud AI Hypercomputer platform to scale agentic and physical AI for production environments. The companies continue to co-design infrastructure spanning silicon, systems, networking, and software to support increasingly complex AI workloads, including autonomous agents, robotics, and digital twins.

Vera Rubin-Based A5X Infrastructure Targets Large-Scale AI Factories

Google Cloud introduced A5X bare-metal instances built on NVIDIA Vera Rubin NVL72 rack-scale systems. These systems are designed to significantly improve inference economics and efficiency, delivering up to 10x lower cost per token and 10x higher token throughput per megawatt than the prior generation.

The A5X platform integrates NVIDIA ConnectX-9 SuperNICs with Google’s next-generation Virgo networking stack. This architecture enables cluster scaling to 80,000 Rubin GPUs within a single site and to 960,000 GPUs across multi-site deployments. The design targets hyperscale AI training and inference environments where network performance and system-level optimization are critical.

Google Cloud emphasized that tightly integrated infrastructure and managed AI services are required to support the next wave of AI workloads. The combined stack enables customers to train, fine-tune, and deploy models with an emphasis on performance, efficiency, and operational scalability.

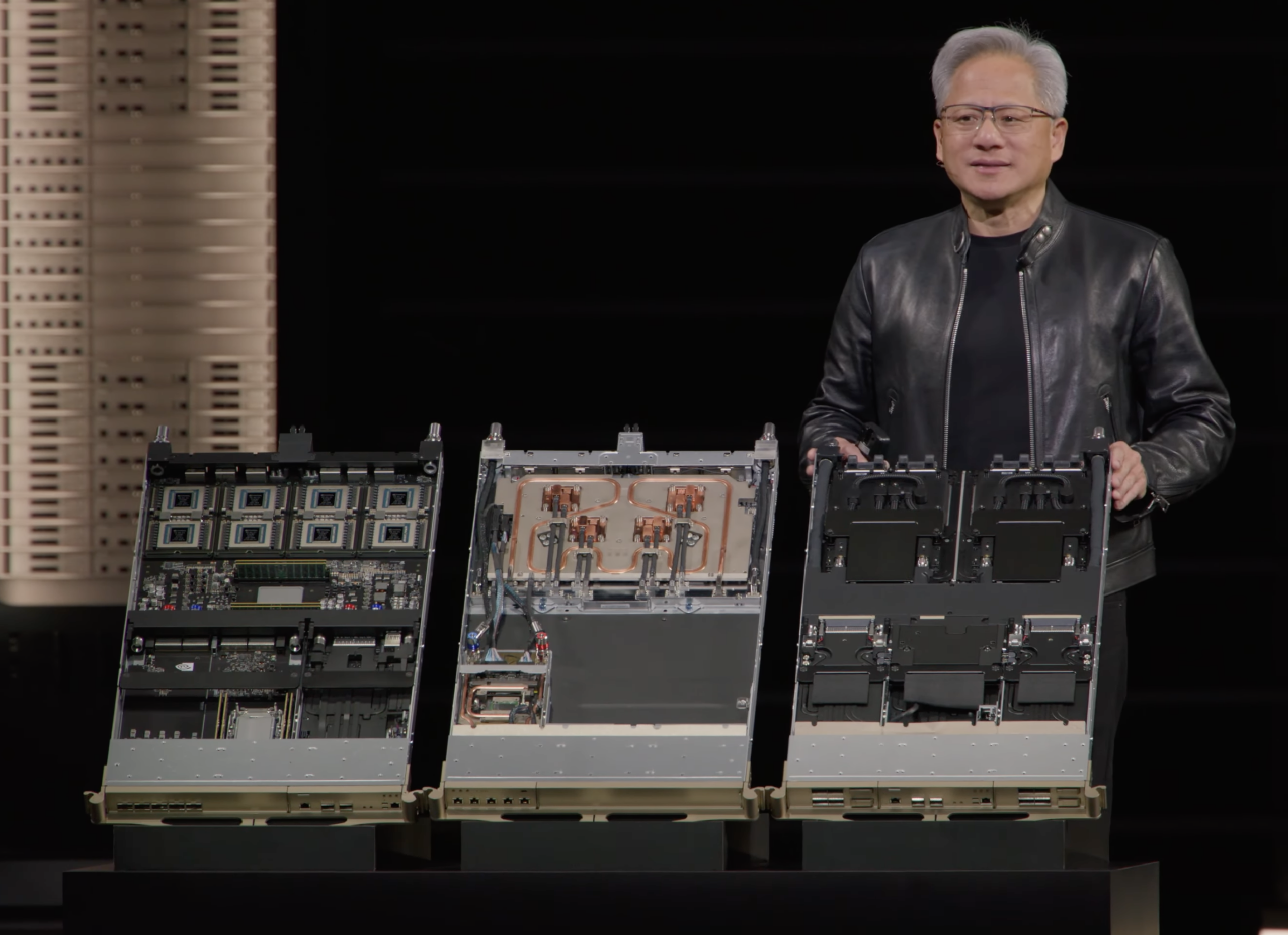

Broad Blackwell Portfolio Enables Right-Sized Acceleration

Google Cloud also outlined its portfolio of NVIDIA Blackwell-based instances, spanning a wide range of deployment sizes and performance profiles. Offerings include A4 VMs based on NVIDIA HGX B200 systems, A4X and A4X Max configurations built on the GB200 and GB300 NVL72 platforms, and fractional GPU access via G4 instances with RTX PRO 6000 Blackwell Server Edition GPUs.

This range allows organizations to align infrastructure with workload requirements. Configurations range from fractional GPUs for lighter inference tasks to full NVL72 racks with 72 GPUs interconnected via fifth-generation NVLink and NVLink Switch technology. At the high end, deployments can scale to tens of thousands of GPUs for large-model training and distributed inference.

These systems are designed to support a range of AI workloads, including mixture-of-experts (MoE) models, multimodal inference, large-scale data processing, and simulation workloads for robotics and physical AI.

Early adopters are already leveraging the platform. Thinking Machines Lab is using GB300 NVL72-based A4X Max instances to scale training for its Tinker API, while OpenAI is running large-scale inference workloads, including ChatGPT, on GB200 and GB300-based instances on Google Cloud.

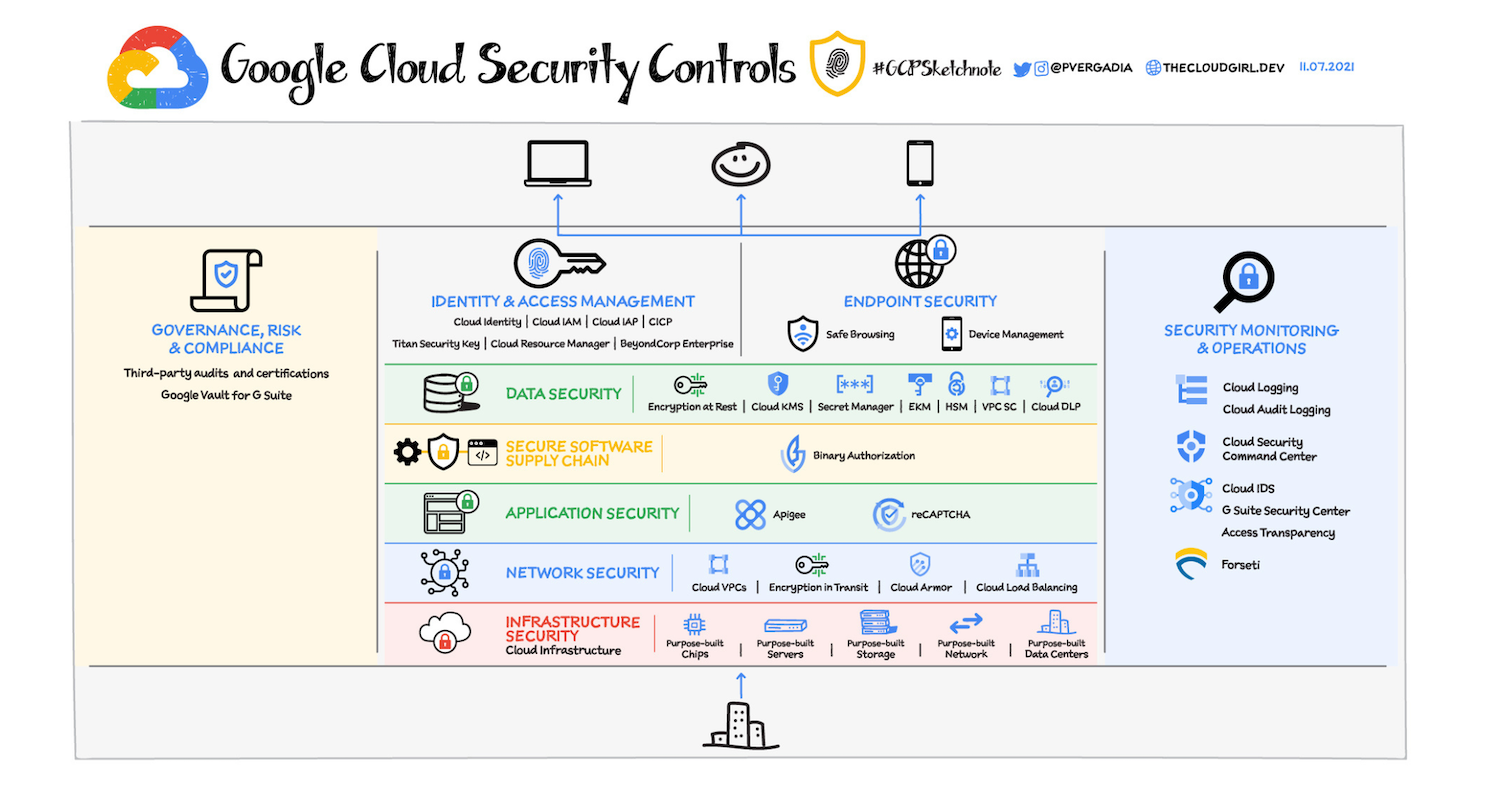

Confidential AI Extends to Blackwell GPUs

Google Cloud is extending confidential computing capabilities to its AI infrastructure. Gemini models running on NVIDIA Blackwell and Blackwell Ultra GPUs are now available in preview on Google Distributed Cloud, enabling organizations to deploy models closer to sensitive data sources.

NVIDIA Confidential Computing enables encrypted execution environments in which prompts and fine-tuning data remain protected from unauthorized access, including by cloud operators. This capability is also coming to multi-tenant environments through Confidential G4 VMs with RTX PRO 6000 Blackwell GPUs.

This marks the first confidential computing implementation for Blackwell GPUs in the public cloud, targeting regulated industries that require strict data protection while maintaining access to high-performance AI infrastructure.

Open Models and Managed RL Pipelines for Agentic AI

The platform supports a broad model ecosystem, including Google’s Gemini and Gemma models and NVIDIA’s Nemotron open models. NVIDIA Nemotron 3 Super is now integrated with the Gemini Enterprise Agent Platform, enabling developers to build and deploy reasoning-driven agentic workflows.

Google Cloud is also introducing Managed Training Clusters with a reinforcement learning API built on NVIDIA NeMo. This service automates cluster provisioning, job orchestration, and fault handling, enabling large-scale RL training. The goal is to reduce operational complexity and allow teams to focus on model behavior and optimization.

CrowdStrike uses NVIDIA NeMo tools, including Data Designer, Automodel, and Megatron Bridge, to generate synthetic data and fine-tune domain-specific cybersecurity models. These workflows run on Blackwell-based infrastructure, accelerating threat detection and response pipelines.

Expanding Industrial and Physical AI Workloads

The joint platform also targets industrial and physical AI use cases. Applications from Cadence and Siemens Digital Industries Software are now available on Google Cloud with NVIDIA acceleration, supporting design, simulation, and manufacturing workflows across industries such as semiconductors, automotive, aerospace, and heavy equipment.

NVIDIA Omniverse libraries and Isaac Sim are available on Google Cloud Marketplace, enabling the development of physically accurate digital twins and robotics simulation pipelines. These tools allow organizations to simulate and validate systems before deployment.

In addition, NVIDIA NIM microservices can be deployed on Vertex AI and Google Kubernetes Engine to support vision AI and robotics workloads. These services enable capabilities such as real-time video analytics, robotic planning, and automated data processing.

Platform Focus: From Experimentation to Production

The updates position Google Cloud AI Hypercomputer as a full-stack platform for moving AI workloads from research to production. With tightly integrated compute, networking, software, and security capabilities, the platform is designed to support large-scale agentic systems, industrial automation, and real-time AI applications.

Amazon

Amazon