The DGX Spark platform is familiar territory for us at this point. We’ve reviewed the Dell, ASUS, Acer, and Gigabyte takes on NVIDIA’s GB10 Grace Blackwell reference design, and the core ingredients are consistent across all of them: 1,000 TOPS of FP4 compute, 128GB of unified LPDDR5x memory, and dual 200GbE networking in a 150mm chassis. HP’s ZGX Nano G1n AI Station builds on that foundation, but the way HP has built around it sets this unit apart from the rest of the Spark field.

The most visible differences are in materials and construction. HP wraps the ZGX Nano in a chassis built from up to 75% recycled aluminum and 20% recycled steel, with packaging that carries up to 93% recycled content. The internal layout splits the chassis into upper and lower halves, making it easier to access components like the SSD and coin-cell battery than on several of the Spark units we’ve tested. Thermally, HP rates the system at 22 dBA idle and 27.6 dBA under intensive workloads, quiet for a system dissipating approximately 780 BTU/hr at peak.

Security is where HP pushes furthest past the reference platform. The ZGX Nano ships with TPM 2.0 operating in FIPS 140-2 certified mode, meets Common Criteria EAL4+, and includes BIOS-level secure boot and PXE controls. Storage is factory-installed as a self-encrypting OPAL NVMe drive. Taken together, HP is positioning this unit not only as a developer desk-side AI node but also as a system that can operate within regulated environments where supply chain certifications, encryption at rest, and tamper resistance matter for procurement.

| Specification | HP ZGX Nano G1n AI Station |
|---|---|
| Overview | |
| Product Name | HP ZGX Nano G1n AI Station |
| Form Factor | Mini |
| Operating System | NVIDIA DGX OS 7 / Ubuntu 24.04. Note: this product does not support Microsoft Windows. |
| Hardware | |
| Processor | NVIDIA GB10 Grace Blackwell Superchip; Blackwell Architecture GPU; 20-core Arm CPU (10x Cortex-X925 + 10x Cortex-A725); Blackwell CUDA Cores; 5th Gen Tensor Cores; 4th Gen RT Cores; 1x NVENC; 1x NVDEC |
| Memory | 128GB LPDDR5x, unified, 16 channels, soldered |
| Memory Bandwidth | 273 GB/s |
| Storage (Internal I/O) | 1x M.2 PCIe Gen5 x4; options: 2TB or 4TB PCIe Gen4 x4 NVMe (2242, SED OPAL TLC) |
| Networking & I/O | |
| Rear I/O Ports | 1x USB-C power (240W); 3x USB-C 20Gbps (DisplayPort 1.4a, 30W total); 1x HDMI 2.1a; 1x 10GbE RJ-45; 2x QSFP 200GbE (ConnectX-7) |
| Network Controllers | Realtek RTL8127-CG 10GbE; NVIDIA ConnectX-7 200GbE |
| WLAN & Bluetooth | AzureWave AW-EM637 Wi-Fi 7 + Bluetooth 5.4 |
| Performance | |
| AI Compute | Up to 1,000 TOPS (FP4) |
| Model Capacity | Up to 200B parameters |
| Physical & Power | |
| Dimensions (H x W x D) | 2.01″ (no feet) / 2.1″ (with feet) x 5.9″ x 5.9″ |
| Weight | Starting at 1.25kg (2.76 lbs) |
| Power Supply | 240W USB-C external adapter, 89% efficiency, active PFC |

Build and Design

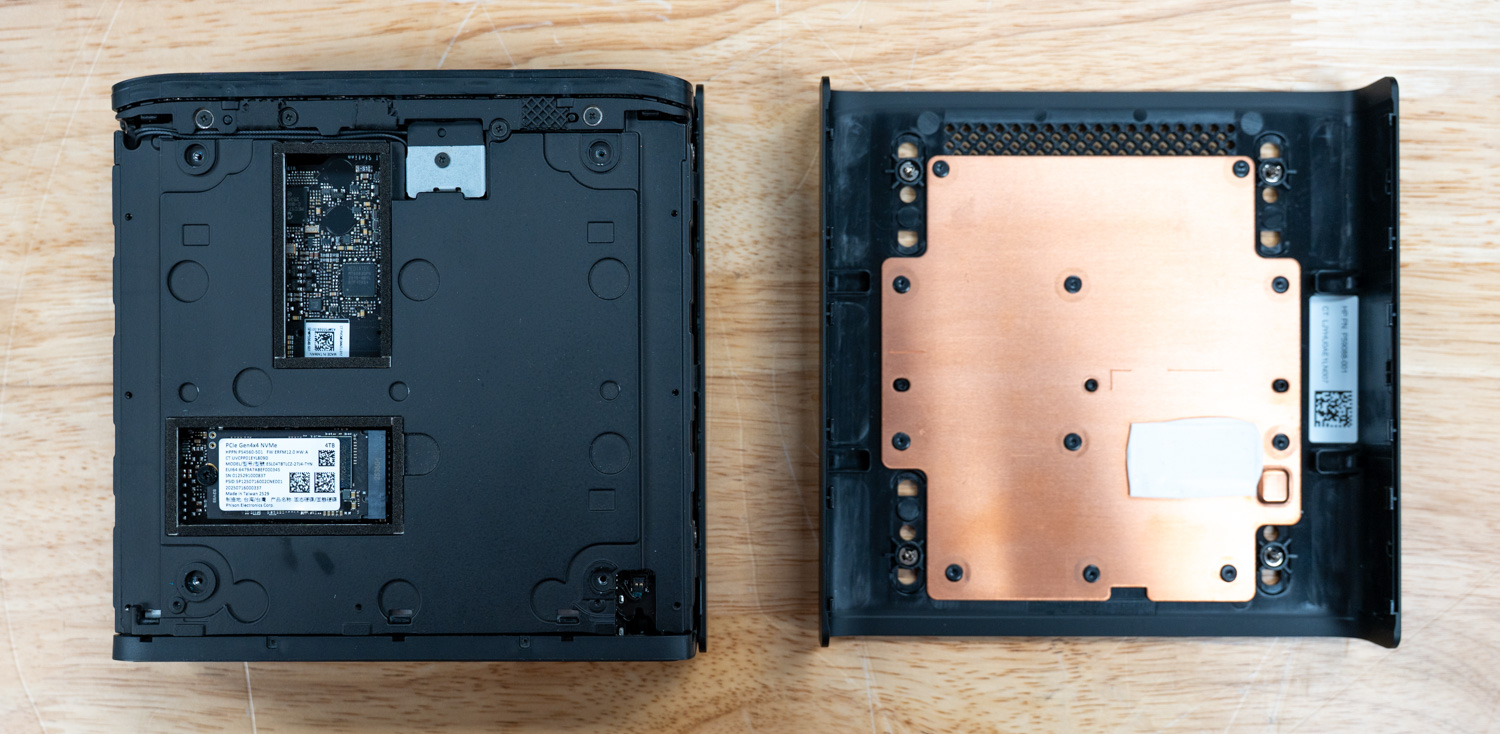

The HP ZGX Nano G1n takes a noticeably different approach to the DGX Spark design compared with the other systems we have looked at so far (see our Dell/ASUS/Acer/Gigabyte reviews). Instead of the more common build, where the internals feel tucked into a top cover, HP splits the chassis into upper and lower halves, making the internal layout easier to understand once inside. What first appears more complicated turns out to be fairly practical, with straightforward access to parts like the coin-cell battery and SSD after removing just a handful of screws. That more considered internal structure also carries over to the outer build, where HP places greater emphasis on how the system is constructed and the materials used throughout.

On the outside, HP wraps the system in a sleek black case with a 150mm-square footprint and relies heavily on recycled materials. Specifically, the build uses up to 75% recycled aluminum, 20% recycled steel, and significant amounts of post-consumer recycled plastics. The packaging follows the same approach: corrugated materials contain up to 93% recycled content, and plastic packaging incorporates at least 30% recycled content.

Thermally, the system relies on forced-air cooling, which has to cope with the density of the NVIDIA GB10 Grace Blackwell Superchip in a compact footprint. HP publishes the full thermal envelope: under maximum load, the system dissipates up to approximately 780 BTU/hr, depending on configuration, and peak system power draw reaches approximately 228W. HP also advertises relatively low noise levels, rated at 22 dBA at idle and 27.6 dBA under intensive workloads.
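The two thermal figures are consistent with each other, since nearly all electrical power drawn by a system like this ends up as heat. As a quick sanity check, the sketch below converts the quoted peak draw to BTU/hr using the standard factor of about 3.412 BTU/hr per watt. The function and constant names are illustrative only.

```python
# Standard conversion: 1 watt is about 3.412142 BTU/hr.
BTU_PER_HR_PER_WATT = 3.412142


def watts_to_btu_per_hr(watts: float) -> float:
    """Convert electrical power in watts to heat output in BTU/hr."""
    return watts * BTU_PER_HR_PER_WATT


peak_watts = 228.0  # approximate peak system draw quoted in the review
print(f"{peak_watts:.0f} W is about {watts_to_btu_per_hr(peak_watts):.0f} BTU/hr")
```

The result lands within a couple of BTU/hr of HP's approximately 780 BTU/hr rating, which is what you would expect from rounded figures.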

Physically, the unit measures 5.9 x 5.9 x 2.01 inches without feet, firmly placing it in ultra-compact territory. HP explicitly states that the unit is not rack-mountable, reinforcing its role as a desk-side AI node rather than traditional data center infrastructure. Serviceability is minimal by design. Users need a #1 Phillips screwdriver to access internal components, and most components, including memory, are non-user-replaceable.

Internally, the ZGX Nano uses NVIDIA’s reference board design, as do many other OEMs building on the DGX Spark platform. The LPDDR5x memory is soldered directly to the board and runs at a data rate of up to 8533 MT/s. Overall, the platform prioritizes efficiency and density over modularity.
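The 273 GB/s memory bandwidth in the spec table can be roughly reproduced from the memory configuration. The sketch below treats the 8533 figure as a per-pin data rate in mega-transfers per second and assumes each of the 16 channels is 16 bits wide, which is the usual LPDDR5x channel width but is not stated in HP's spec sheet. It gives a theoretical peak, not a measured number.

```python
def peak_bandwidth_gb_s(transfers_per_s: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s (decimal) for a given bus."""
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_s * bytes_per_transfer / 1e9


channels = 16            # from the spec table
bits_per_channel = 16    # assumption: typical LPDDR5x channel width
data_rate = 8533e6       # transfers per second per pin

bus_width = channels * bits_per_channel  # 256-bit aggregate bus
print(f"{peak_bandwidth_gb_s(data_rate, bus_width):.0f} GB/s")
```

Under those assumptions the 256-bit bus works out to the same 273 GB/s that HP lists.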

Security and Upgradability

HP locks down the ZGX Nano G1n by design. It features an integrated TPM 2.0 module that operates in FIPS 140-2-certified mode, meets Trusted Computing Group specifications, and is Common Criteria EAL4+ certified. BIOS-level protections include secure boot controls, PXE-based remote boot capabilities, and the ability to disable boot from removable media entirely.

From a hardware standpoint, HP is explicit: this system is not upgradeable. The 128GB of LPDDR5x unified memory sits soldered directly to the board. Additionally, buyers must select storage at the time of purchase. While the single M.2 slot supports PCIe Gen5 x4 electrically, factory configurations ship with PCIe Gen4 x4 NVMe SSDs. These come in 2TB or 4TB capacities and are all self-encrypting OPAL drives.

HP notes that spare parts will remain available for up to five years after production ends. Nevertheless, this is fundamentally an appliance-style system rather than a modular workstation.

I/O and Expansion

The front of the unit is minimalist, featuring only a power button and a status LED. On the back, the system offers a dense array of high-performance connectivity options. HP delivers power via a standard NVIDIA-recommended 240W USB-C adapter and warns that third-party adapters may cause degraded performance or instability.

Three USB 3.2 Type-C ports provide USB connectivity, each operating at 20 Gbps and supporting DisplayPort 1.4a Alt Mode. A dedicated HDMI 2.1a port provides additional display output. For networking, the system includes both a Realtek RTL8127-CG 10GbE controller and an NVIDIA ConnectX-7 controller, providing dual 200GbE QSFP112 ports, each with 200 Gbps throughput.

The networking stack supports a wide range of enterprise features. These include PXE boot, Wake-on-LAN, VLAN tagging (802.1Q), time synchronization (802.1AS/1588), and full-duplex operation across all supported speeds. Additionally, a Wi-Fi 7 (802.11be) 2×2 module with Bluetooth 5.4 provides wireless connectivity and supports MU-MIMO, WPA3 security, and operation across the 2.4GHz, 5GHz, and 6GHz bands.

Graphics and Audio

The integrated NVIDIA Blackwell GPU in the GB10 Superchip handles all graphics tasks. The system supports up to 8K output at 60Hz via USB-C DisplayPort 1.4a and 8K at 30Hz via HDMI 2.1a. HP recommends using direct cable connections for 8K output, as adapters or docks may cause instability or degrade signal quality.

Audio runs over HDMI, with no dedicated analog audio outputs. This aligns with the system’s positioning as a compute node rather than a traditional multimedia workstation.

Thermal Testing

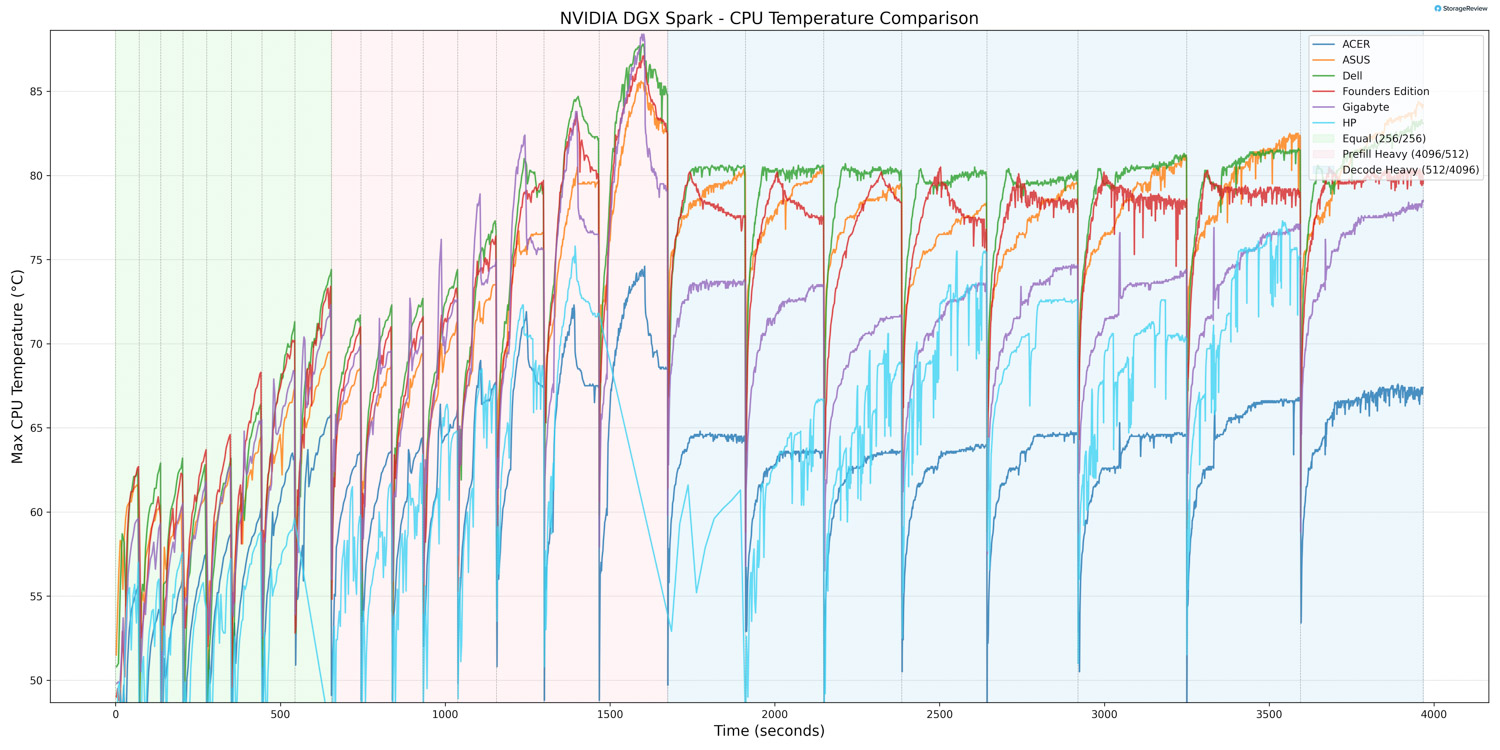

CPU Temperature

During CPU thermal testing, the HP ZGX Nano G1n reached a peak temperature of 77.3°C during the workload’s more intense bursts. This places HP below the hottest systems in the comparison stack during peak transitions, as other units climbed into the 90°C range. As the workload transitioned into Equal ISL/OSL and then Decode Heavy, CPU temperatures stabilized rather than continuing to rise sharply.

At the lower end, the CPU recorded a minimum temperature of 36.4°C during light-load conditions, which suggests the HP dissipates heat effectively when the system is not under heavier computational stress. Overall, the ZGX kept CPU temperatures controlled during bursts and stable under sustained load.

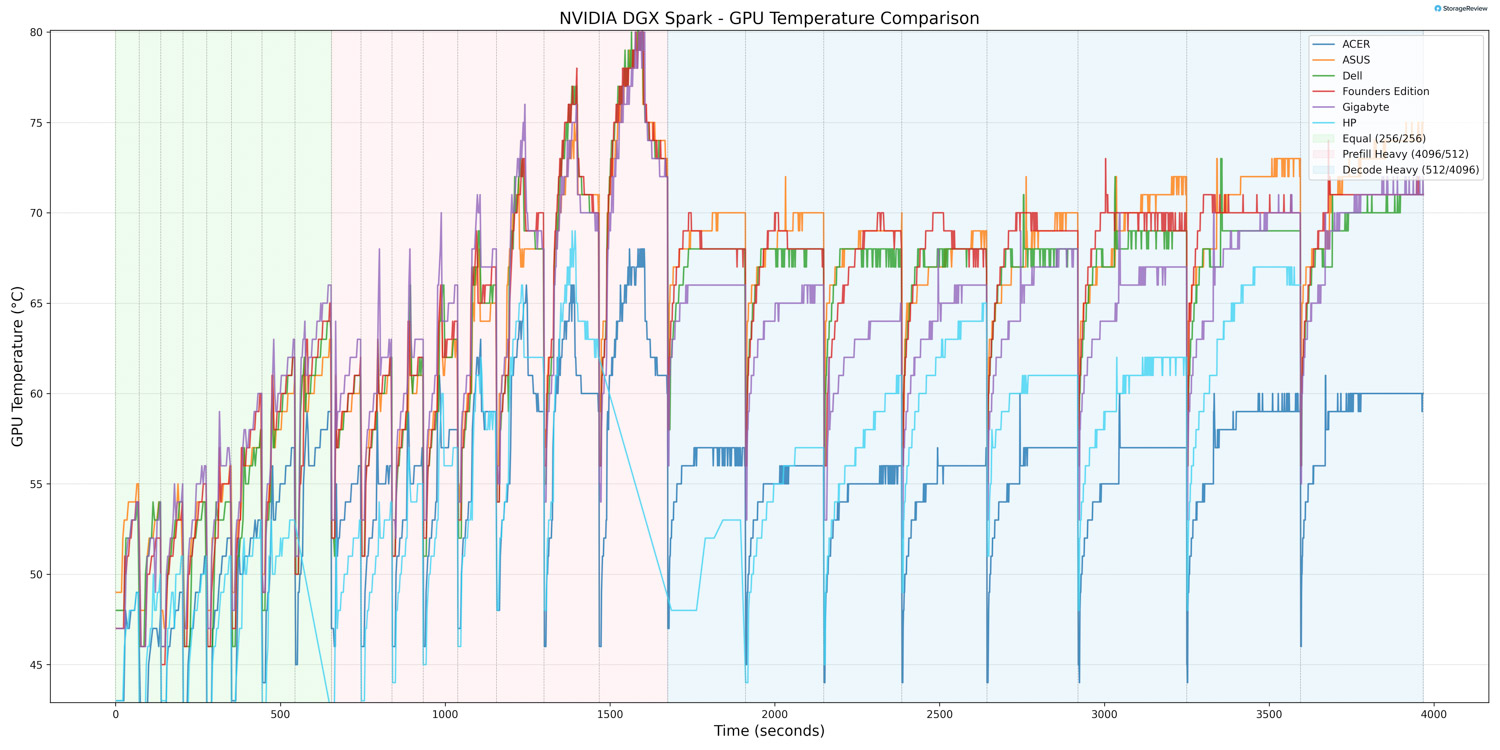

GPU Temperature

GPU thermals followed a similar pattern. During periods of heavy acceleration, the GPU reached a maximum temperature of 69°C. This positions HP on the cooler side of the comparables during peak burst conditions, with several other systems (like the Dell, ASUS, and Founders Edition) running noticeably warmer at the top end. As activity shifted into Equal ISL/OSL and Decode Heavy phases, GPU temperatures leveled off and remained stable.

The GPU recorded a minimum temperature of 34°C during lighter phases, indicating solid idle thermal capabilities.

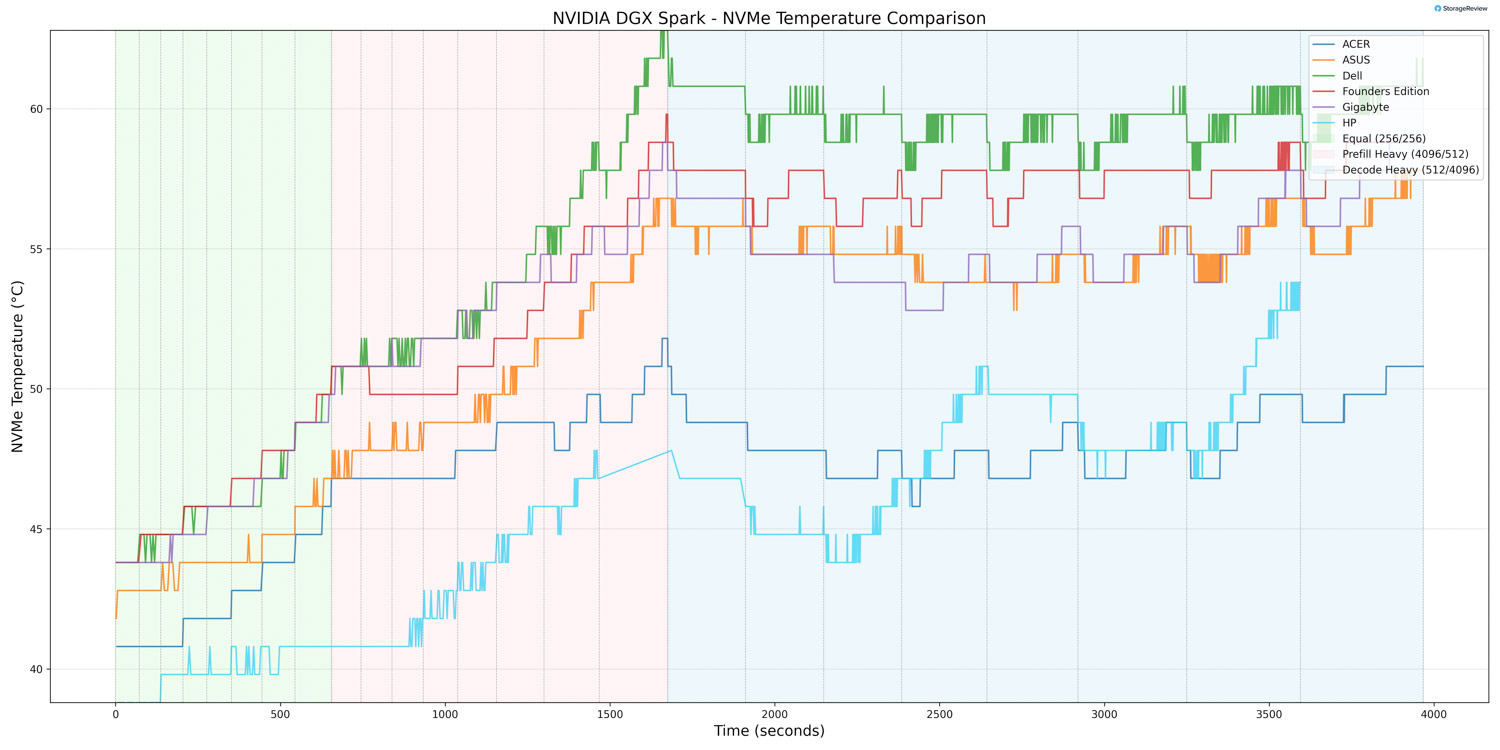

NVMe Temperature

During the Equal phase, the NVMe drive reached roughly 42°C, showing only a gradual rise from its resting baseline. As the workload shifted to Prefill Heavy, the storage temperature rose noticeably, ranging from 42°C to 47°C. In Decode Heavy, the drive operated in its warmest range, 47°C to 54°C, where it peaked, yet remained noticeably below most other Spark systems.

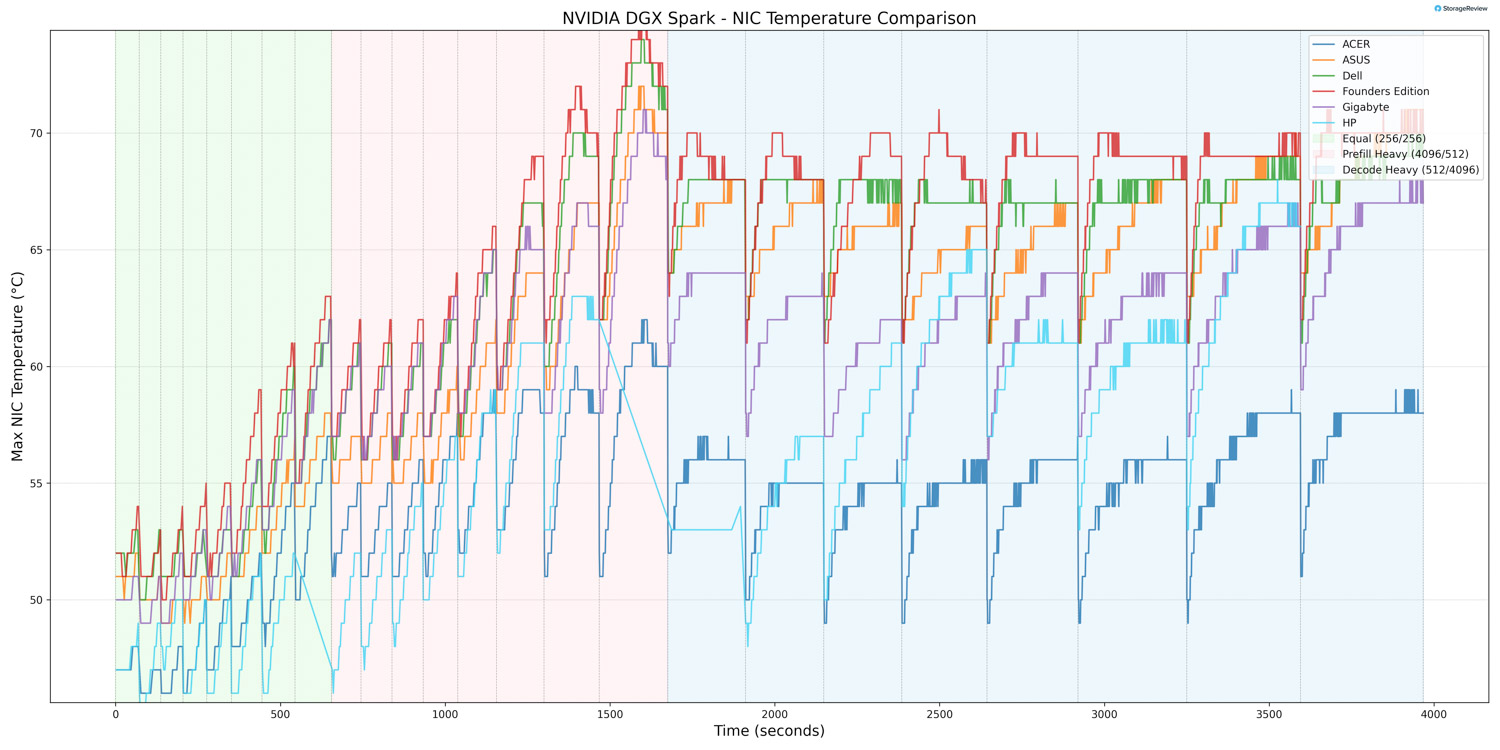

NIC Temperature

During the Equal phase, NIC temperature ranged from 39°C to 52°C, showing a steady climb, indicating moderate thermal buildup as network activity ramps up early in the run.

In Prefill Heavy, NIC thermals increased, ranging from 48°C to 64°C, because this phase places much more sustained pressure on the networking subsystem. During Decode Heavy, NIC temperature was in its warmest range, 52°C to 68°C, where the peak was reached. Nonetheless, thermal behavior remained stable throughout the test.

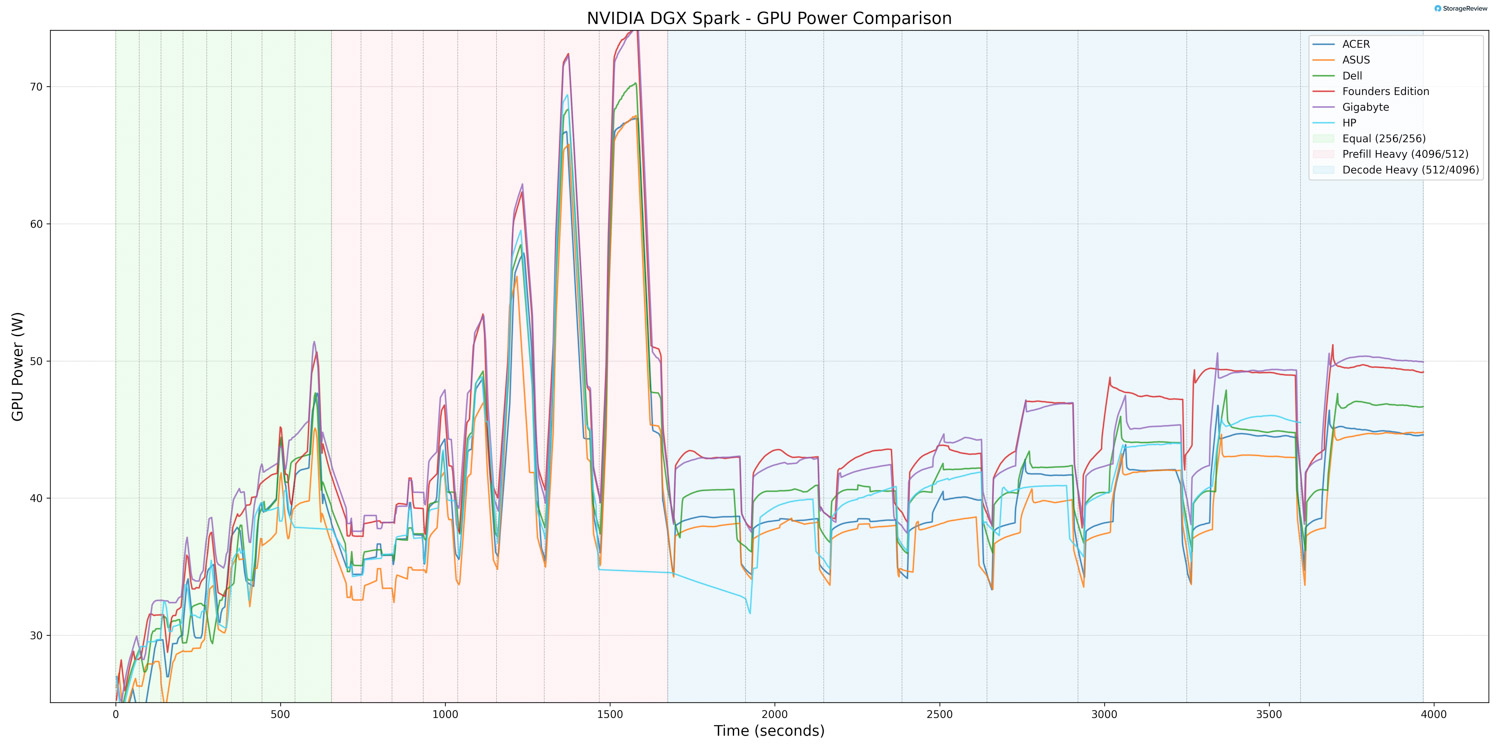

GPU Power Consumption

During the Equal phase, GPU power consumption ranged from 2.86W to just over 40W, placing the HP ZGX Nano G1n in the middle of the pack.

In Prefill Heavy, GPU power started at roughly 37W, dipped to as low as 35W, and spiked to as high as 69W, making this the most power-intensive phase of the run.

During Decode Heavy, GPU power consumption settled into a lower, more stable range of 35W to 46W, indicating that power demand eased as the workload shifted away from the more aggressive burst behavior.

Thermal Summary

Under load, the ZGX Nano G1n operates within a tightly controlled thermal envelope. Maximum system power consumption is approximately 228W, and heat dissipation is approximately 780 BTU/hr. By contrast, idle power draw remains low at approximately 36–38W, which indicates efficient power scaling when the system is not active. The forced-air cooling solution maintains stable operation within HP’s specified range of 5°C to 30°C.

HP ZGX Nano AI Performance Testing

To evaluate the HP ZGX Nano with GB10, we tested Spark units using the online serving benchmark from vLLM, one of the most widely adopted high-throughput inference and serving engines for large language models. The benchmark simulates real-world production workloads by sending concurrent requests to a running vLLM server and measuring key metrics, including total token throughput (tokens per second), time to first token, and time per output token, across varying load conditions.
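To make those three metrics concrete, here is a minimal sketch of how they can be derived from per-request timestamps. The `RequestTrace` record and its field names are hypothetical and are not vLLM's own data structures. The definitions follow the common convention where time per output token excludes the first token and total throughput counts both input and output tokens.

```python
from dataclasses import dataclass


@dataclass
class RequestTrace:
    """Hypothetical per-request timing record; all times in seconds."""
    sent_at: float
    first_token_at: float
    finished_at: float
    input_tokens: int
    output_tokens: int


def ttft(r: RequestTrace) -> float:
    """Time to first token: delay between sending and the first output token."""
    return r.first_token_at - r.sent_at


def tpot(r: RequestTrace) -> float:
    """Time per output token, averaged over tokens after the first."""
    if r.output_tokens <= 1:
        return 0.0
    return (r.finished_at - r.first_token_at) / (r.output_tokens - 1)


def total_token_throughput(traces: list[RequestTrace]) -> float:
    """Input plus output tokens divided by the wall-clock span of the run."""
    start = min(r.sent_at for r in traces)
    end = max(r.finished_at for r in traces)
    tokens = sum(r.input_tokens + r.output_tokens for r in traces)
    return tokens / (end - start)


# Two made-up concurrent requests, purely for illustration.
traces = [
    RequestTrace(0.0, 0.5, 2.5, input_tokens=100, output_tokens=21),
    RequestTrace(0.0, 0.75, 3.0, input_tokens=200, output_tokens=10),
]
for r in traces:
    print(f"TTFT {ttft(r):.2f}s  TPOT {tpot(r) * 1000:.0f} ms/token")
print(f"Total throughput {total_token_throughput(traces):.1f} tok/s")
```

The throughput charts in this review report the last of these, total tokens per second, at each batch size.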

Our testing spanned a range of models, including dense architectures and micro-scaling data types, and evaluated performance across three workload scenarios: Equal ISL/OSL, Prefill Heavy, and Decode Heavy. These scenarios represent distinct real-world serving patterns, from balanced input and output loads to compute-intensive prompt processing and memory-bandwidth-bound token generation.

In addition to the HP ZGX Nano with GB10, we benchmarked other OEM systems from Dell, ASUS, Acer, and Gigabyte. This allowed us to place HP’s results within the broader competitive landscape and understand where it leads, keeps pace with the pack, or trails across different models and workloads.

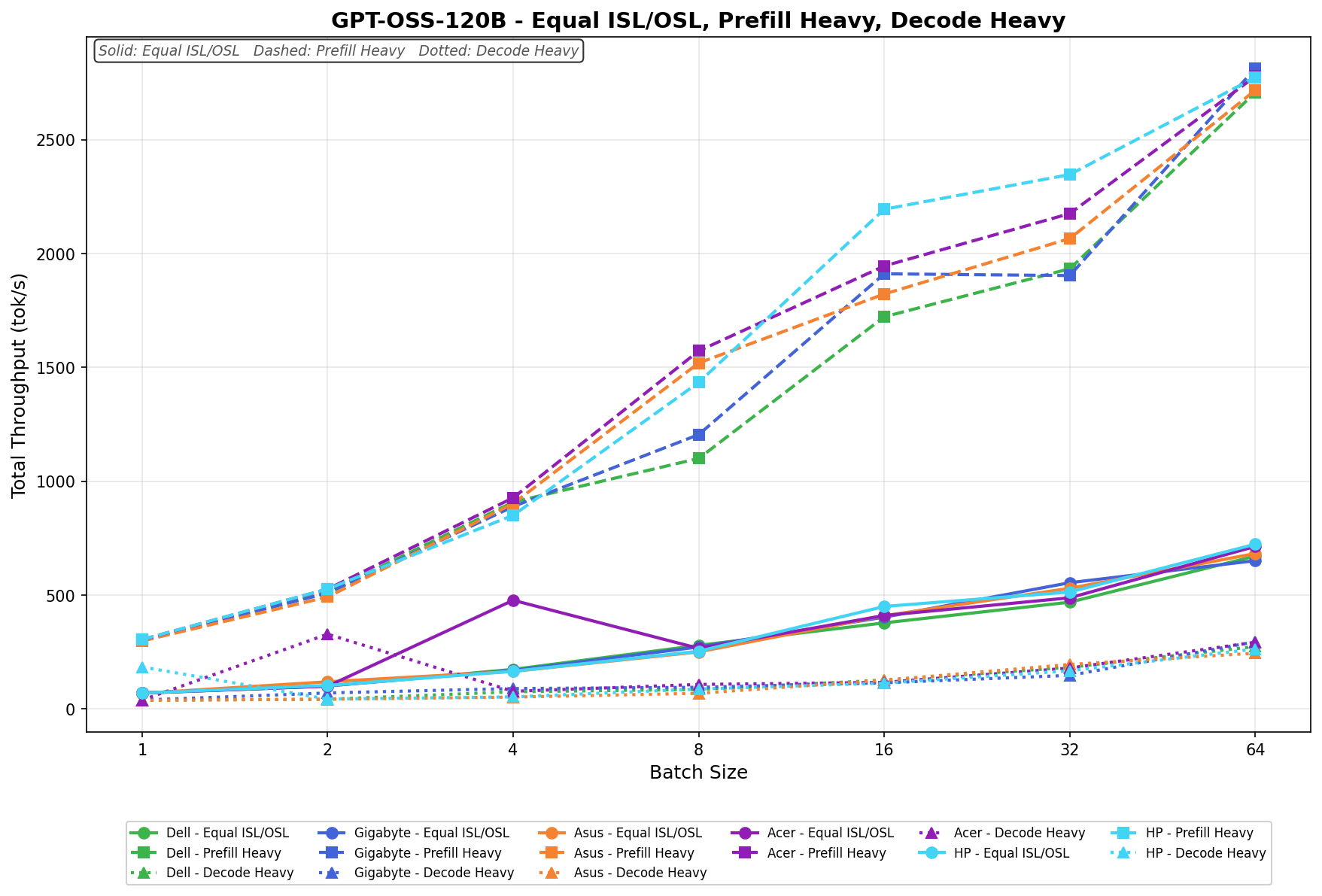

GPT-OSS-120B

With GPT-OSS-120B, the HP ZGX Nano G1n posts its strongest results in Prefill Heavy, where throughput climbs from 304.5 tok/s at batch 1 to 2773.3 tok/s at batch 64. Equal ISL/OSL also scales steadily, rising from 69.6 tok/s to 722.9 tok/s across the sweep. Decode Heavy is much lighter by comparison, starting at 183.7 tok/s in batch 1, dipping slightly in batch 2, then recovering to 262.9 tok/s by batch 64.

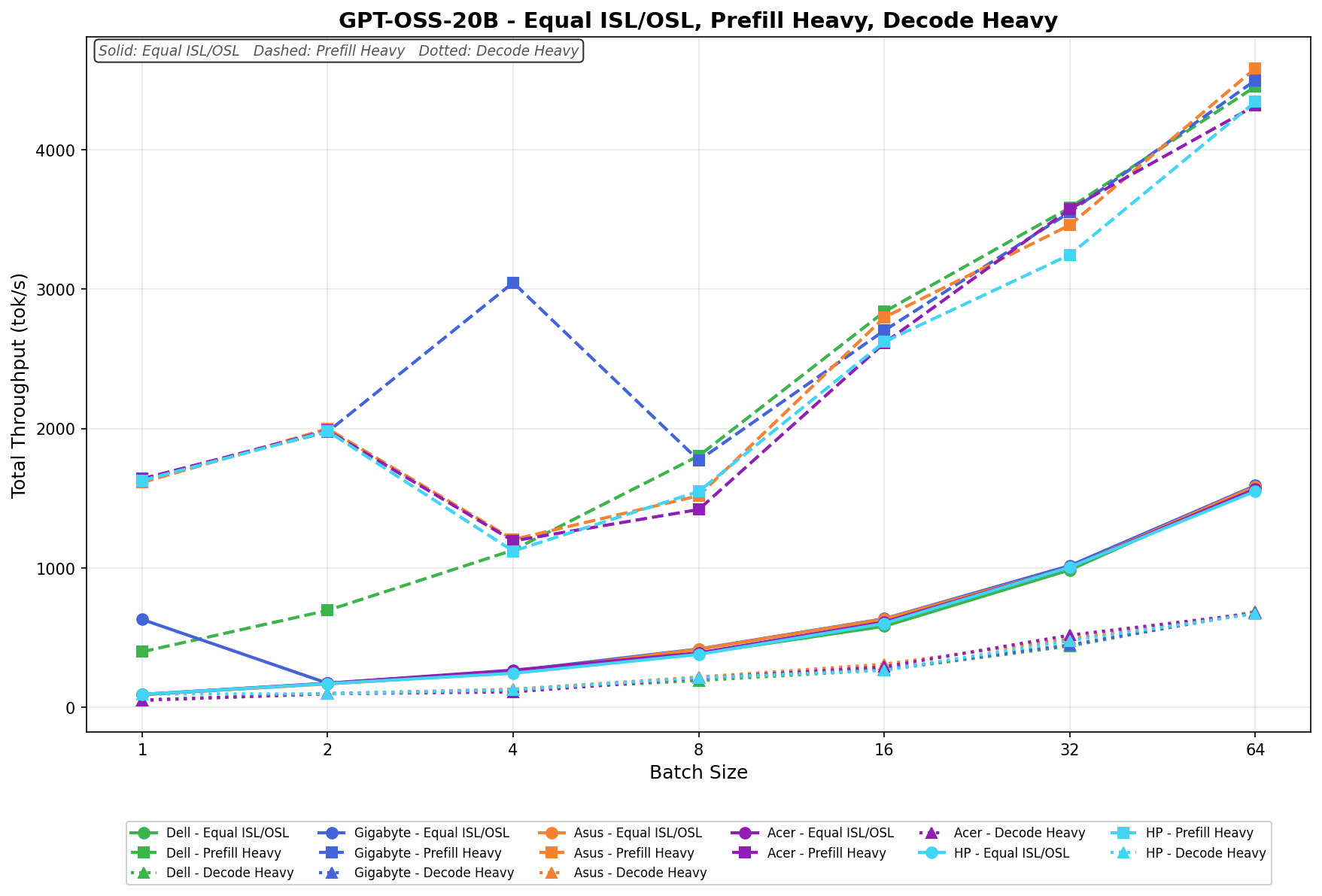

GPT-OSS-20B

With GPT-OSS-20B, HP’s highest numbers come from Prefill Heavy, but the scaling is less linear than with the other models. Prefill starts at 1626.6 tok/s at batch 1, climbs to 1980.3 tok/s at batch 2, drops sharply to 1120.3 tok/s at batch 4, then recovers to 4345.1 tok/s by batch 64. Equal ISL/OSL scales more smoothly from 92.6 tok/s to 1550.6 tok/s, and Decode Heavy rises from 94.4 tok/s to 670.4 tok/s.

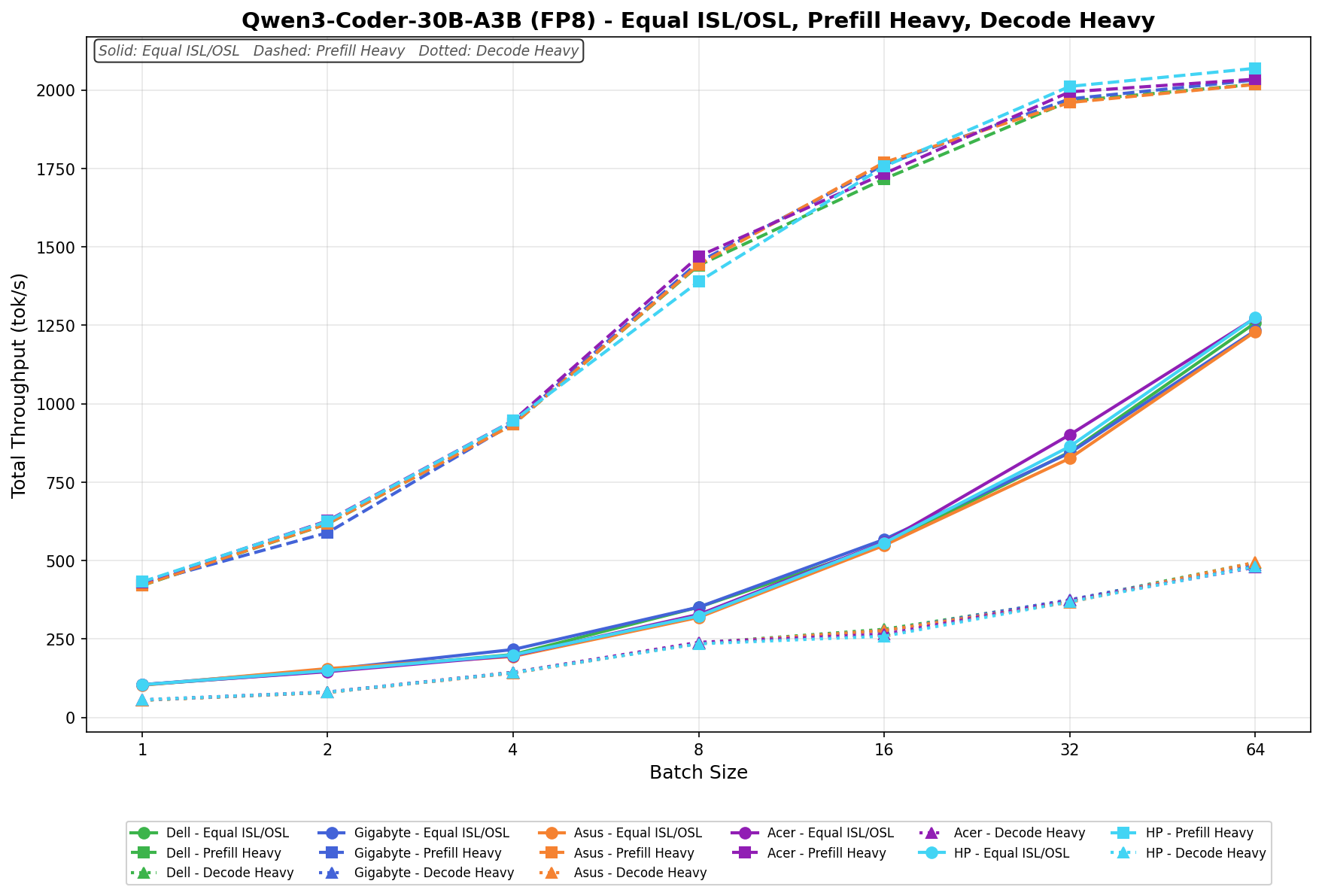

Qwen3 Coder 30B A3B FP8

For Qwen3 Coder 30B A3B (FP8), HP again excels in Prefill Heavy, with throughput increasing from 432.2 tok/s at batch size 1 to 2069.4 tok/s at batch size 64. Equal ISL/OSL rises from 104.2 tok/s to 1274.4 tok/s, while Decode Heavy improves from 55.9 tok/s to 480.4 tok/s. This is among HP’s stronger overall results.

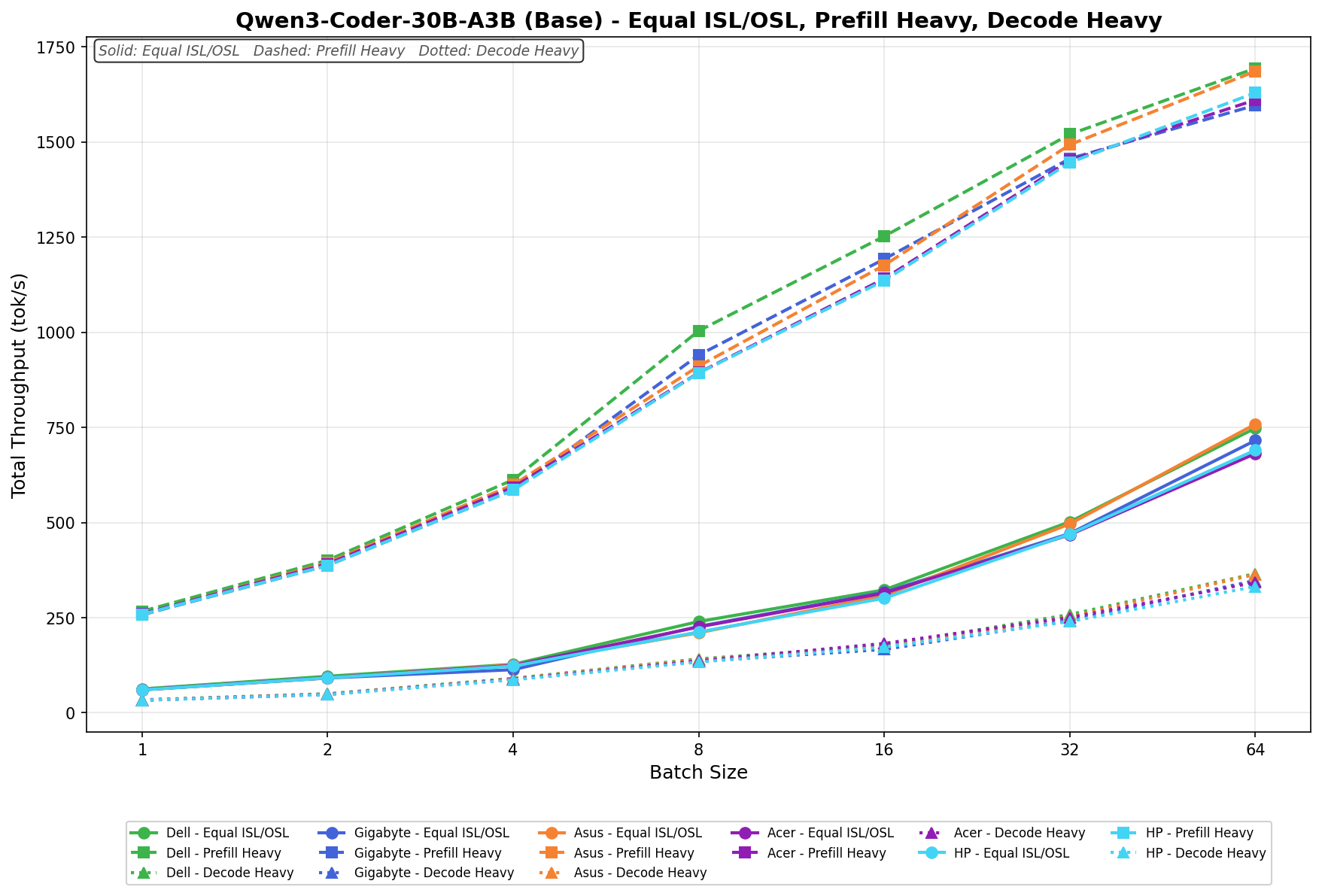

Qwen3 Coder 30B A3B Base

On Qwen3 Coder 30B A3B (Base), HP delivers steady growth across all three phases, although the topline remains in the Prefill Heavy phase. That phase increases from 258.6 tok/s at batch 1 to 1629.4 tok/s at batch 64. Equal ISL/OSL scales from 60.3 tok/s to 690.3 tok/s, while Decode Heavy rises from 33.0 tok/s to 331.8 tok/s.

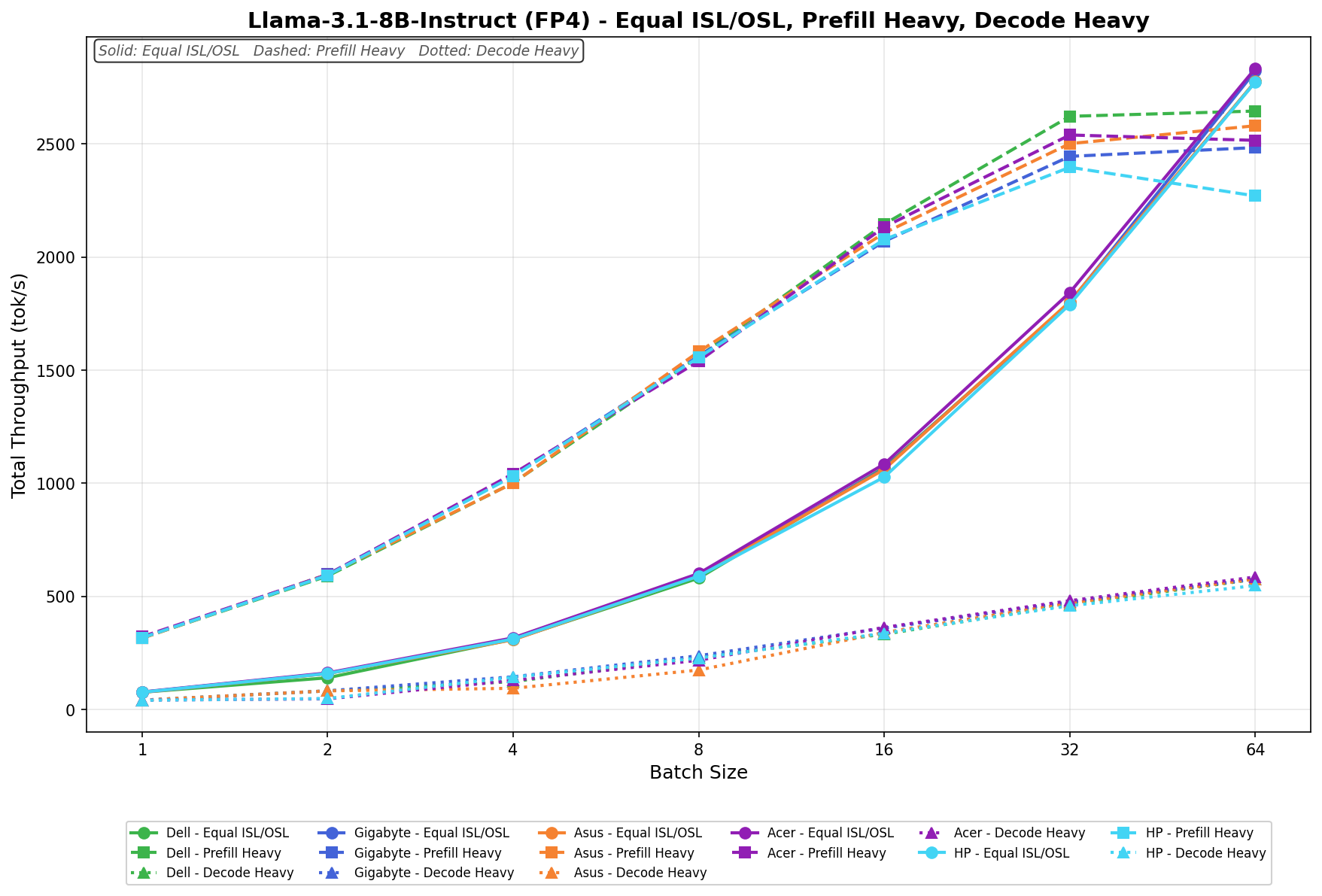

Llama 3.1 8B Instruct FP4

With Llama-3.1-8B-Instruct (FP4), HP shows a clear step up in throughput. Equal ISL/OSL climbs from 76.4 tok/s at batch 1 to 2774.1 tok/s at batch 64, making it the strongest of HP’s three phases on this model. Prefill Heavy also scales aggressively, rising from 316.8 tok/s to 2397.1 tok/s at batch 32 before slipping to 2270.4 tok/s at batch 64. Decode Heavy increases from 40.7 tok/s to 547.6 tok/s across the sweep.

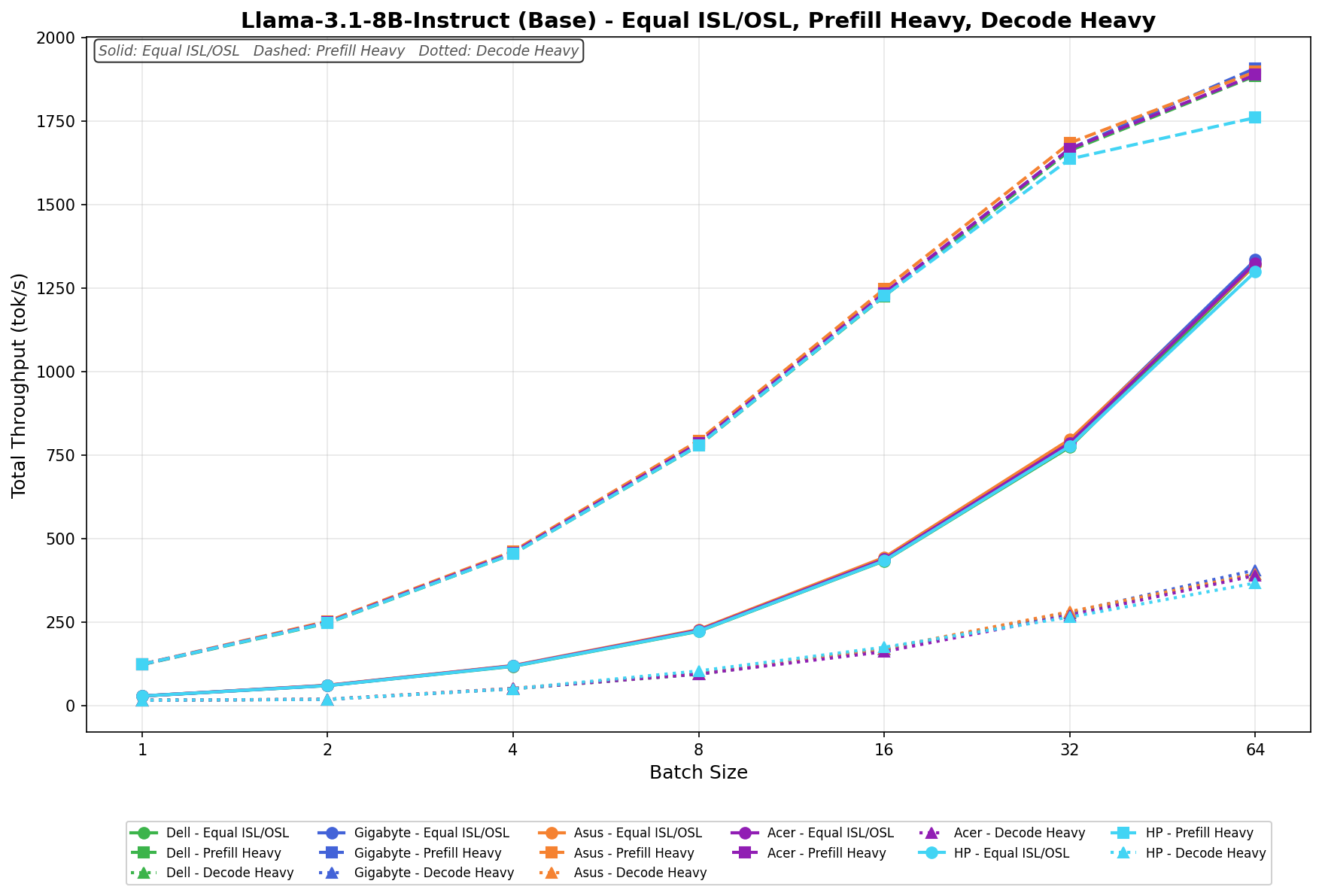

Llama 3.1 8B Instruct (Base)

On Llama-3.1-8B-Instruct (Base), the HP ZGX Nano G1n scales cleanly across all three phases. In Equal ISL/OSL, throughput rises from 28.2 tok/s at batch 1 to 1298.6 tok/s at batch 64. In Prefill Heavy, HP increases from 123.2 tok/s to 1759.5 tok/s, with gains remaining strong throughout the sweep before tapering slightly at the top end. Decode Heavy is much lighter by comparison, rising from 15.5 tok/s at batch 1 to 366.4 tok/s at batch 64.
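One way to compare how the models scale is to divide batch 64 throughput by batch 1 throughput. The sketch below does this for the Prefill Heavy figures quoted in the per-model results above. The dictionary and function names are illustrative, and the ratio is only a rough indicator because it ignores what happens at the batch sizes in between.

```python
# Prefill Heavy throughput (tok/s) at batch 1 and batch 64,
# copied from the per-model results in this review.
PREFILL_HEAVY = {
    "GPT-OSS-120B": (304.5, 2773.3),
    "GPT-OSS-20B": (1626.6, 4345.1),
    "Qwen3 Coder 30B A3B FP8": (432.2, 2069.4),
    "Qwen3 Coder 30B A3B Base": (258.6, 1629.4),
    "Llama 3.1 8B Instruct FP4": (316.8, 2270.4),
    "Llama 3.1 8B Instruct Base": (123.2, 1759.5),
}


def scaling_factor(batch1: float, batch64: float) -> float:
    """How many times higher throughput is at batch 64 than at batch 1."""
    return batch64 / batch1


for model, (b1, b64) in PREFILL_HEAVY.items():
    print(f"{model:<28} {scaling_factor(b1, b64):5.1f}x")
```

The ratios range from roughly 2.7x on GPT-OSS-20B to roughly 14.3x on Llama 3.1 8B Instruct (Base), all well short of the 64x increase in batch size.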

GPU Direct Storage

How GPU Direct Storage Works

Traditionally, when a GPU processes data from an NVMe drive, the data must first pass through the CPU and system memory before reaching the GPU. This process creates bottlenecks because the CPU acts as a middleman, adding latency and consuming system resources. GPU Direct Storage eliminates this inefficiency by allowing the GPU to access data directly from the storage device over the PCIe bus. This direct path reduces data movement overhead, enabling faster, more efficient transfers.

AI workloads, especially those involving deep learning, are highly data-intensive. Training large neural networks requires processing terabytes of data, and any delay in data transfer leads to underutilized GPUs and longer training times. GPU Direct Storage addresses this challenge by delivering data to the GPU as quickly as possible, minimizing idle time and maximizing computational efficiency.

In addition, GDS benefits workloads that stream large datasets, such as video processing, natural language processing, and real-time inference. By reducing CPU reliance, GDS accelerates data movement and frees CPU resources for other tasks, further enhancing overall system performance.

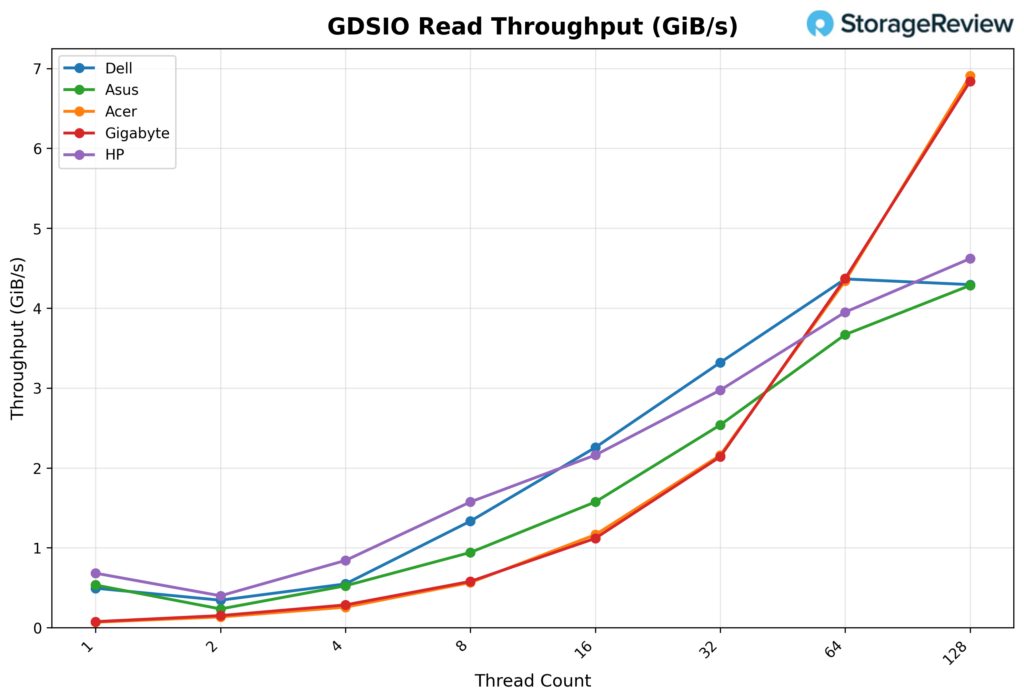

GDSIO Read Throughput 16K

Looking at GDSIO Read Throughput 16K, the HP ZGX Nano G1n starts at 0.70GiB/s with 1 thread, placing it among the stronger low-thread performers in the group. It dips to 0.41GiB/s at 2 threads, then climbs back to 0.86GiB/s at 4 threads, showing the same small early-thread inconsistency seen in a few of these systems. From there, scaling becomes much more consistent. Throughput rises to 1.6GiB/s at 8 threads and 2.2GiB/s at 16 threads, then continues upward to 3.0GiB/s at 32 threads. At the higher thread counts, the HP keeps gaining ground, reaching 3.9GiB/s at 64 threads and peaking at 4.6GiB/s at 128 threads.

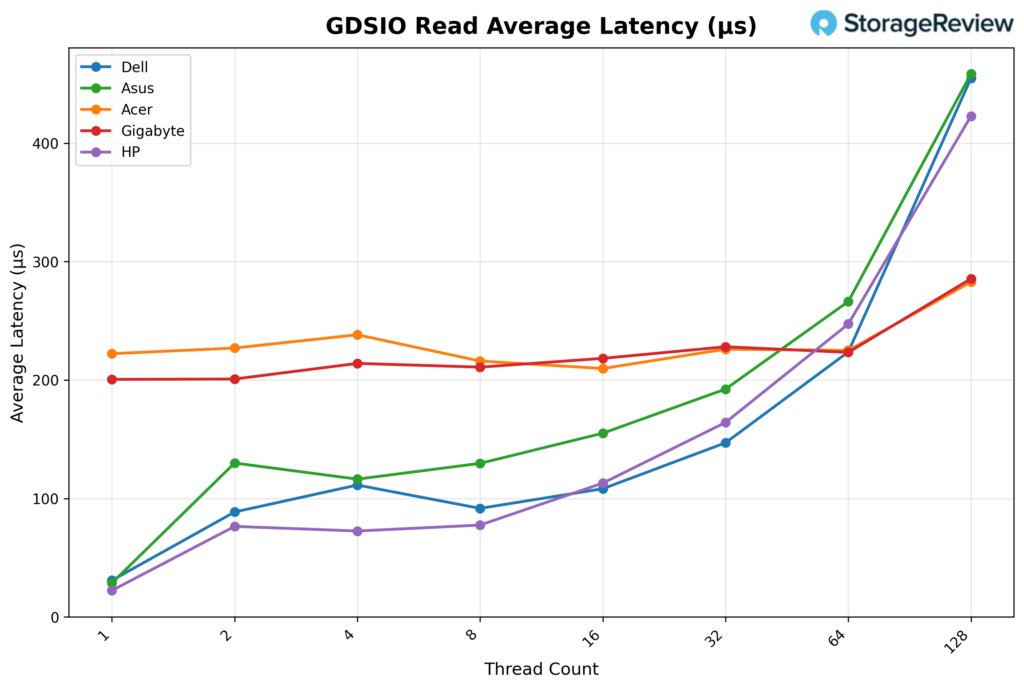

GDSIO Read Average Latency 16K

Looking at GDSIO Read Average Latency (16K), the HP ZGX Nano G1n starts at approximately 0.02ms with 1 thread and remains low through 2 threads (0.08ms) and 4 threads (0.07ms). Latency edges up slightly at 8 threads (0.08ms) and 16 threads (0.11ms), then increases more noticeably at 32 threads (0.16ms) and 64 threads (0.25ms). At 128 threads, latency reaches 0.42ms, still a bit below the highest results in the group while tracking the system’s steady throughput scaling across the test.
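The latency and throughput figures for 16K reads line up with Little's law, which relates outstanding requests, request rate, and latency. The sketch below assumes each thread keeps exactly one I/O in flight, which may not match the actual test configuration, and estimates average latency from the reported throughput. Function and constant names are illustrative.

```python
KIB = 1024
GIB = 1024 ** 3


def expected_latency_ms(threads: int, block_bytes: int, throughput_gib_s: float) -> float:
    """Little's law: latency = outstanding I/Os / I/Os per second.

    Assumes one outstanding I/O per thread.
    """
    iops = throughput_gib_s * GIB / block_bytes
    return threads / iops * 1000


# (threads, reported 16K read throughput in GiB/s) from the review
for threads, gib_s in [(1, 0.70), (2, 0.41), (128, 4.6)]:
    est = expected_latency_ms(threads, 16 * KIB, gib_s)
    print(f"{threads:>3} threads: about {est:.2f} ms")
```

At 1 and 128 threads the estimates round to the measured 0.02ms and 0.42ms, and the 2-thread estimate lands close to the measured 0.08ms, so the latency jump there is simply the other side of the throughput dip.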

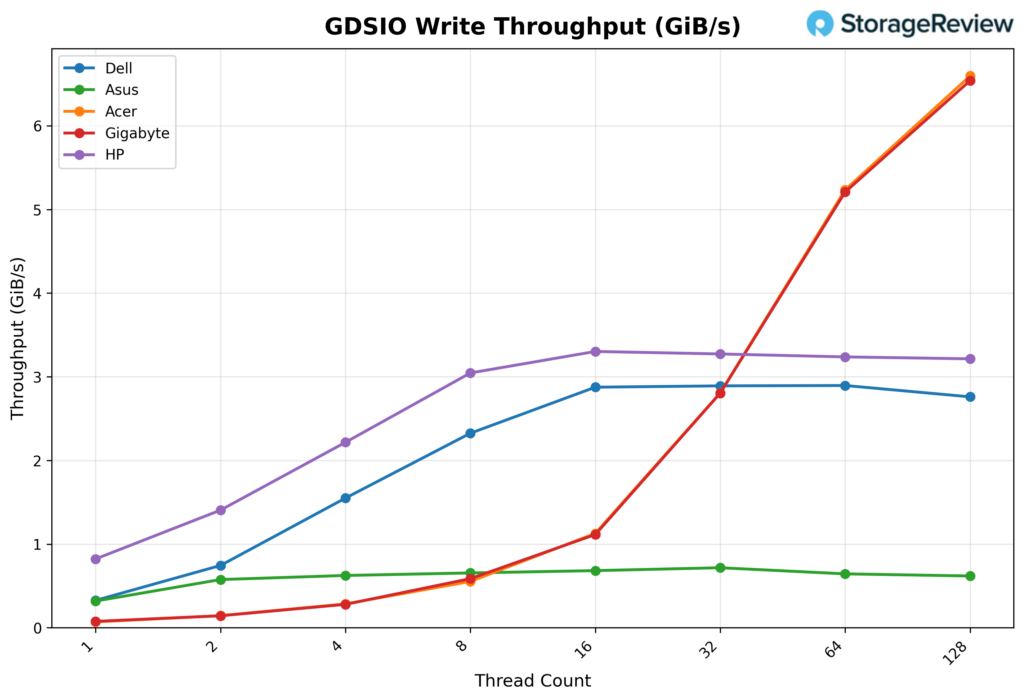

GDSIO Write Throughput 16K

Looking at GDSIO Write Throughput 16K, the HP ZGX Nano G1n starts at 0.84GiB/s on 1 thread, rises to 1.4GiB/s on 2 threads, and reaches 2.2GiB/s on 4 threads. Performance continues to scale strongly at 8 threads (3.0 GiB/s) and reaches 3.3GiB/s at 16 threads, where it effectively levels off. From there, throughput remains nearly flat at 3.3GiB/s with 32 and 64 threads, then eases slightly to 3.2GiB/s with 128 threads, indicating the platform reaches its write ceiling relatively early and sustains that level consistently through the rest of the sweep.

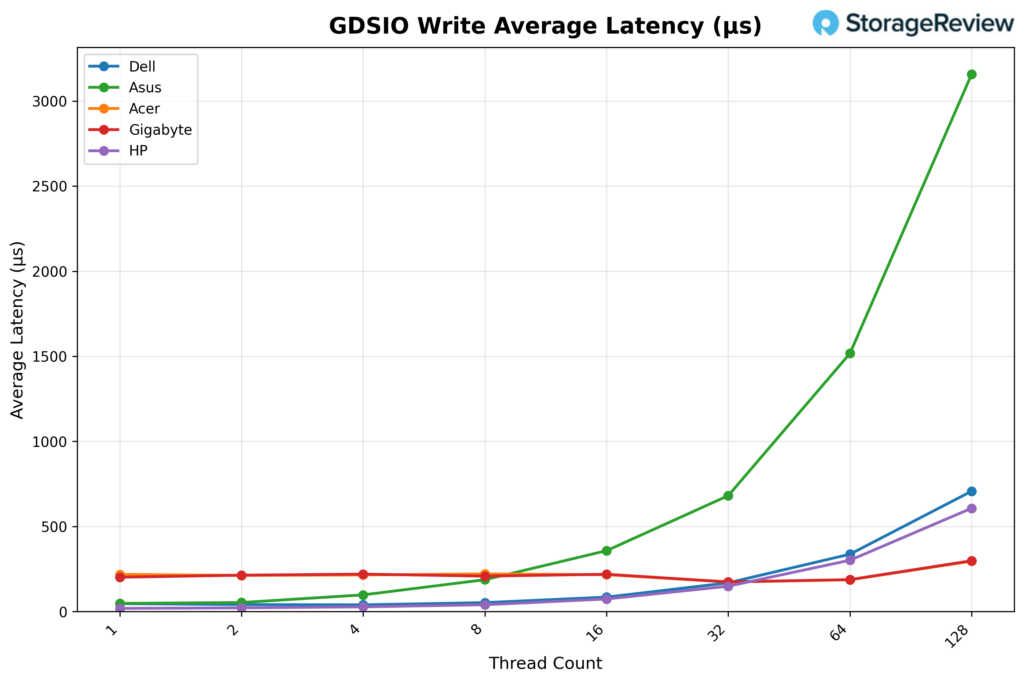

GDSIO Write Average Latency 16K

Looking at GDSIO Write Average Latency (16K), the HP ZGX Nano G1n starts at approximately 0.02ms with 1 thread and remains very low through 2 threads (0.02ms) and 4 threads (0.03ms). Latency rises modestly at 8 threads (0.04ms) and 16 threads (0.07ms), then jumps at 32 threads (0.15ms) and 64 threads (0.30ms). At 128 threads, latency reaches 0.61ms, still fairly well controlled overall, though the upward trend aligns with the point where write throughput has already flattened at higher thread counts.

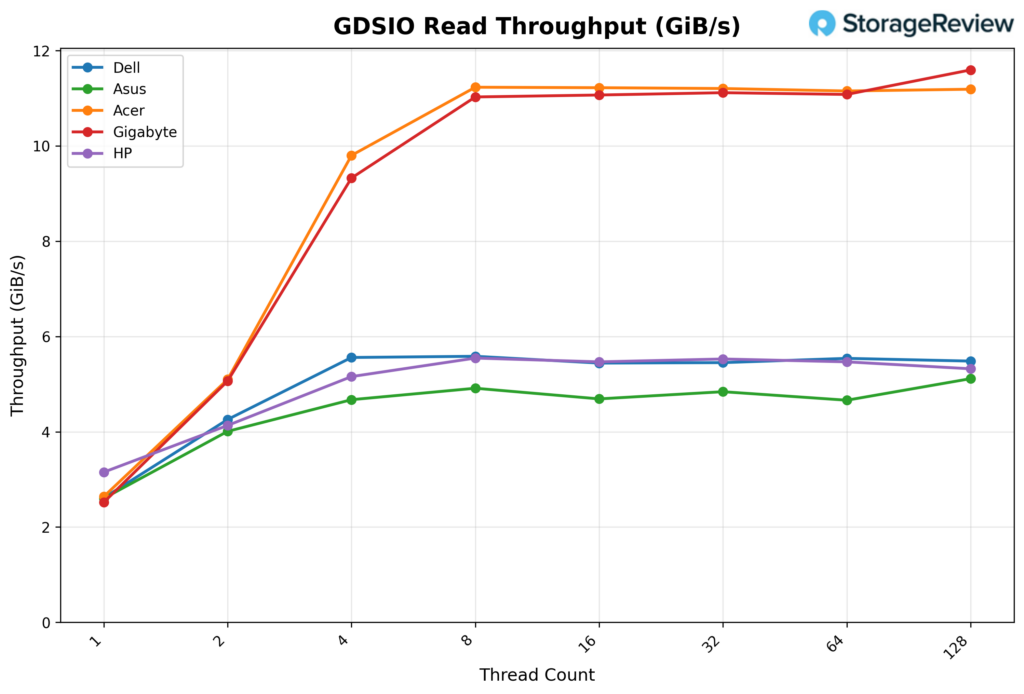

GDSIO Read Throughput 1M

Looking at GDSIO Read Throughput 1M, the HP ZGX Nano G1n starts at 3.2GiB/s on 1 thread and rises to 4.1GiB/s on 2 threads. Performance continues to climb at 4 threads (5.2GiB/s) and 8 threads (5.5GiB/s), after which the platform effectively reaches its ceiling. Throughput then holds essentially flat at 5.5GiB/s for 16, 32, and 64 threads, before easing slightly to 5.3 GiB/s at 128 threads, indicating a strong early ramp followed by a very stable high-thread plateau.
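For context on that plateau, it helps to compare it against the SSD's link. The sketch below converts the 5.5GiB/s ceiling to decimal GB/s and compares it with the raw bandwidth of a PCIe Gen4 x4 link (16 GT/s per lane with 128b/130b encoding). This is a back-of-the-envelope figure that ignores protocol overhead, and it assumes the factory Gen4 x4 drive is the limiting link, which the review did not test directly.

```python
GIB = 1024 ** 3


def gib_to_gb(gib_per_s: float) -> float:
    """Convert binary GiB/s to decimal GB/s."""
    return gib_per_s * GIB / 1e9


def pcie_link_gb_s(gt_per_s: float, lanes: int) -> float:
    """Raw link bandwidth in GB/s for PCIe Gen3 and later (128b/130b encoding)."""
    return gt_per_s * 1e9 * lanes * (128 / 130) / 8 / 1e9


read_ceiling = gib_to_gb(5.5)        # plateau reported for 1M reads
gen4_x4 = pcie_link_gb_s(16, 4)      # PCIe Gen4: 16 GT/s per lane
print(f"Read ceiling: {read_ceiling:.2f} GB/s")
print(f"Gen4 x4 raw:  {gen4_x4:.2f} GB/s")
print(f"Utilization:  {read_ceiling / gen4_x4:.0%}")
```

By this estimate the read plateau sits at roughly three quarters of the raw Gen4 x4 link rate.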

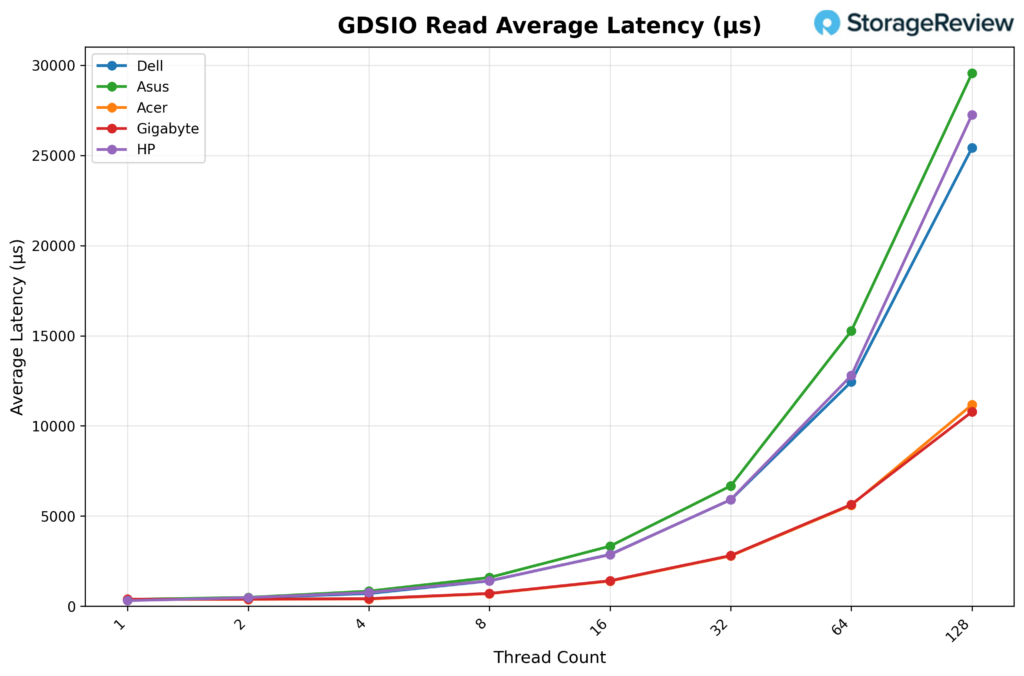

GDSIO Read Average Latency 1M

Looking at GDSIO Read Average Latency (1M), the HP ZGX Nano G1n starts at approximately 0.31ms with 1 thread and remains relatively low at 2 threads (0.47ms) and 4 threads (0.76ms). Latency increases with concurrency, rising to 1.4ms at 8 threads, 2.9ms at 16 threads, and 5.9ms at 32 threads. The trend continues at 64 threads (12.8ms) and reaches 27.2ms at 128 threads, tracking the higher queue depths even though throughput had already flattened much earlier in the sweep.

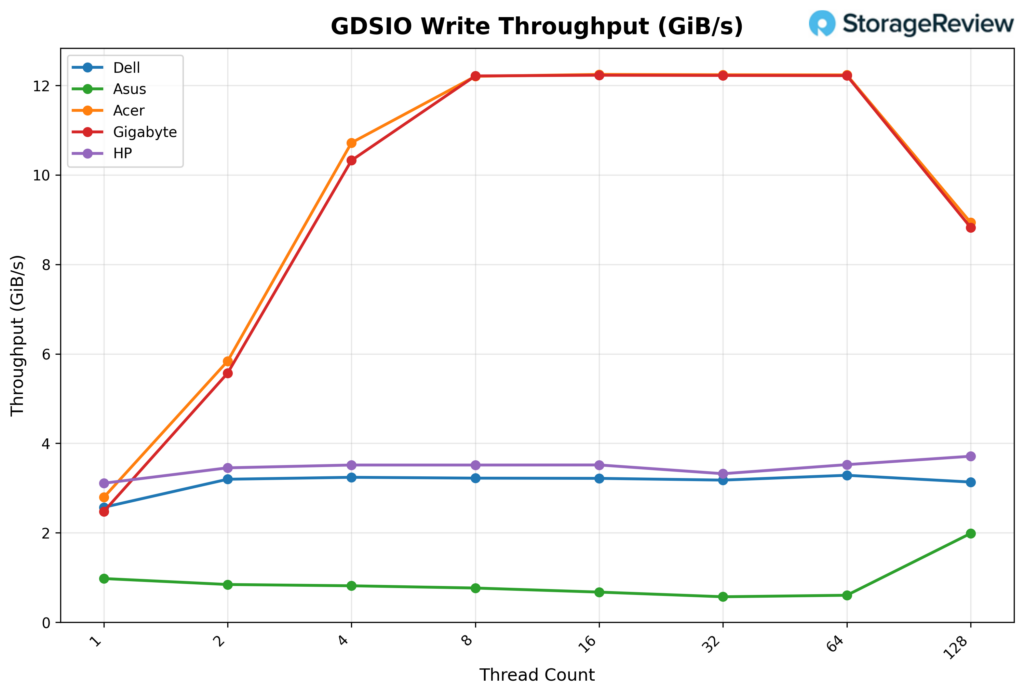

GDSIO Write Throughput 1M

Looking at GDSIO Write Throughput 1M, the HP ZGX Nano G1n starts at 3.1GiB/s with 1 thread and rises to 3.5GiB/s with 2 threads, then holds that level at 4, 8, and 16 threads. Performance dips slightly to 3.3GiB/s at 32 threads before returning to 3.5GiB/s at 64 threads. At 128 threads, throughput increases to 3.7GiB/s, indicating a mostly flat write profile across the sweep with only minor variation and a small uptick at the highest thread count.

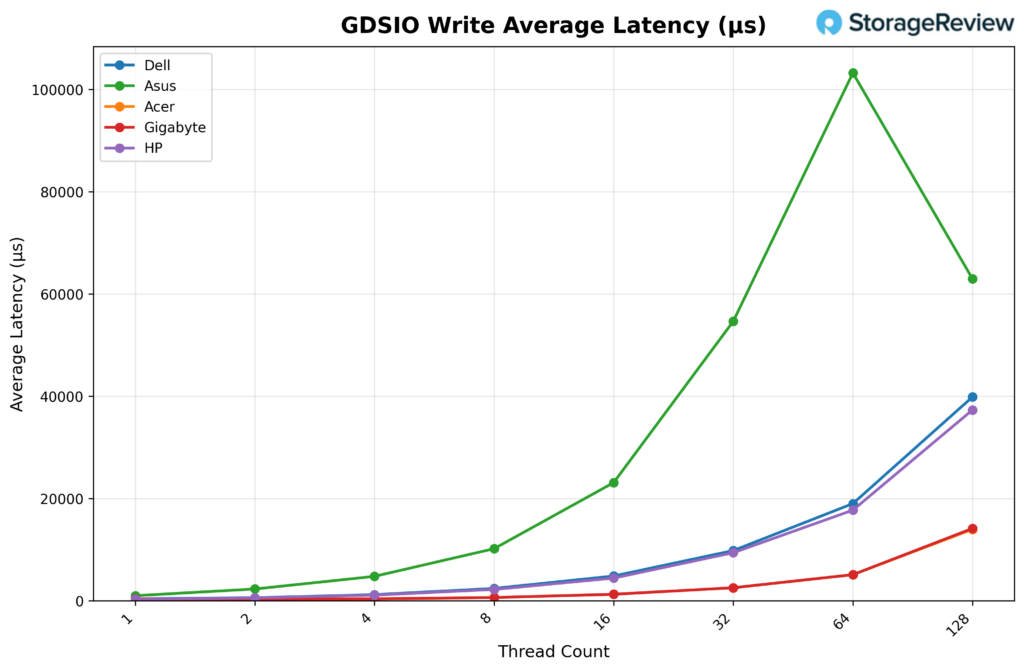

GDSIO Write Average Latency 1M

Looking at GDSIO Write Average Latency (1M), the HP ZGX Nano G1n starts at approximately 0.31ms with 1 thread, rising to 0.57ms with 2 threads and 1.1ms with 4 threads. Latency continues to climb as concurrency increases, reaching 2.2ms with 8 threads, 4.4ms with 16 threads, and 9.4ms with 32 threads. The upward trend continues at 64 threads (17.7ms) and reaches 37.3ms at 128 threads, reflecting steadily increasing queue pressure even though write throughput itself remains fairly flat through most of the sweep.

Conclusion

HP’s ZGX Nano G1n carries the DGX Spark platform’s expected performance profile and adds engineering choices that set it apart from the other Spark systems in the field. In our testing, CPU temperatures peaked at 77.3°C and GPU temperatures at 69°C, both on the cooler side of the Spark units we’ve benchmarked. vLLM performance was strongest in Prefill Heavy workloads on five of the six models we tested (Llama 3.1 8B Instruct FP4 peaked in Equal ISL/OSL), with scaling that generally held through higher batch sizes apart from a dip at batch 4 on GPT-OSS-20B. GPU Direct Storage read throughput reached 4.6 GiB/s at 16K and 5.5 GiB/s at 1M block sizes, and write throughput plateaued early but held that level consistently across the remaining thread counts.

Where the ZGX Nano G1n separates itself from the rest of the Spark field is in the work HP did around the reference design. The recycled-materials content, the upper/lower-chassis split that improves internal serviceability, and the acoustic envelope that holds at 27.6 dBA under load all reflect deliberate engineering choices beyond what the GB10 platform itself requires. The security stack follows the same pattern. TPM 2.0 in FIPS 140-2 mode, Common Criteria EAL4+, and SED OPAL storage push this unit past a developer appliance and toward a system that can clear procurement in regulated environments.

Like other Sparks, this is not a general-purpose workstation, and HP does not position it as one. For developers, small teams, and organizations that need local AI compute with credible sustainability and security stories behind the purchase, the ZGX Nano G1n is a clearly differentiated option within the Spark lineup. For shops where those criteria do not apply, the underlying platform is the constant across all five OEM systems we’ve reviewed, and the decision comes down to ecosystem, support, and price.
